- The paper uses hyperbolic geometry to model hierarchical relationships in RL, allowing more efficient representations in fewer dimensions.

- It presents spectrally-regularized hyperbolic mappings and spectral normalization to stabilize training in non-stationary, high-dimensional settings.

- Empirical results on Procgen and Atari 100K benchmarks show that hyperbolic models outperform traditional Euclidean approaches with better generalization and efficiency.

Hyperbolic Deep Reinforcement Learning: An Overview

This essay discusses how hyperbolic geometry can be used in deep reinforcement learning (RL) to improve the training and efficiency of agents, and what this implies. Hyperbolic space offers a natural way to model the hierarchical relationships typical of many RL tasks, making it a potentially superior alternative to traditional Euclidean embeddings.

Hyperbolic Representations in RL

Motivation and Benefits

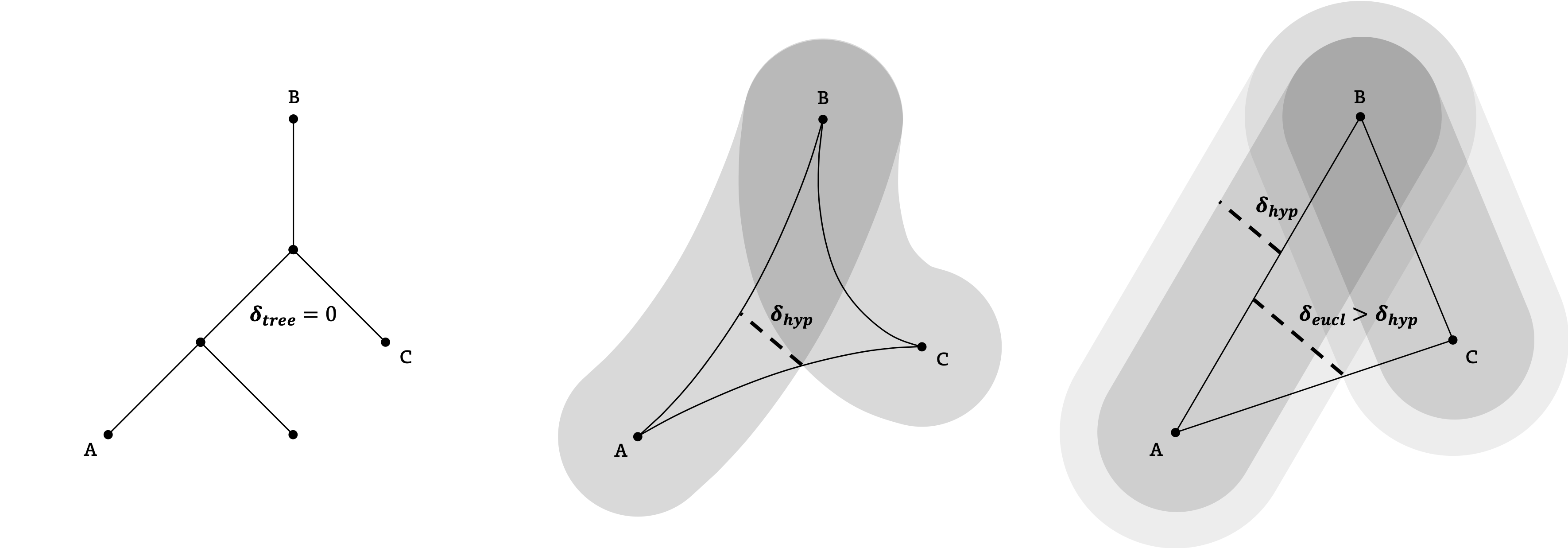

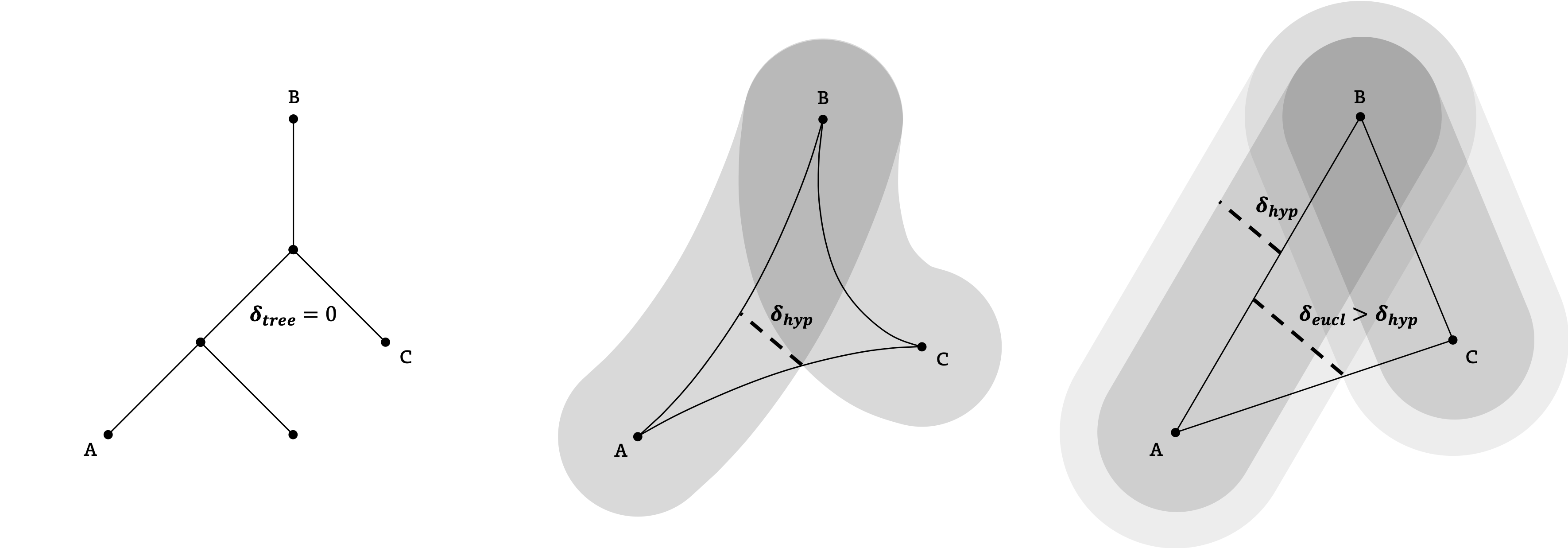

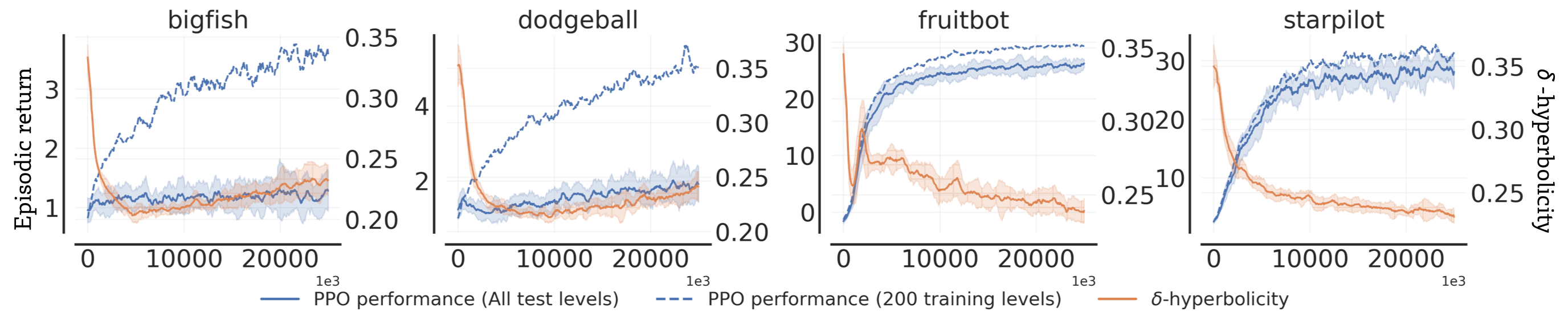

Hyperbolic space can embed data with an underlying tree-like structure at low distortion. This characteristic is particularly advantageous in RL, where agents must understand hierarchical relationships between states and actions to make effective decisions. Unlike Euclidean spaces, hyperbolic spaces can represent hierarchies efficiently in few dimensions, which can reduce model complexity and the risk of overfitting.
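To make the tree-like behaviour concrete, here is a minimal sketch of the geodesic distance in the unit Poincaré ball with curvature -1. The choice of the Poincaré ball model and the function name `poincare_distance` are assumptions made for illustration; the text above does not say which model of hyperbolic space is used.

```python
import math


def poincare_distance(x, y):
    """Geodesic distance between two points of the unit Poincare ball (curvature -1)."""
    sq_norm_x = sum(a * a for a in x)
    sq_norm_y = sum(b * b for b in y)
    sq_diff = sum((a - b) ** 2 for a, b in zip(x, y))
    # Both points must lie strictly inside the unit ball.
    if sq_norm_x >= 1.0 or sq_norm_y >= 1.0:
        raise ValueError("points must have norm < 1")
    arg = 1.0 + 2.0 * sq_diff / ((1.0 - sq_norm_x) * (1.0 - sq_norm_y))
    return math.acosh(arg)


# Same Euclidean gap (0.1) in both cases, but a larger hyperbolic distance near the boundary.
near_origin = poincare_distance([0.0, 0.0], [0.1, 0.0])
near_boundary = poincare_distance([0.85, 0.0], [0.95, 0.0])
```

Distances grow rapidly toward the boundary of the ball, so the available "room" expands with radius much like the number of nodes in a tree expands with depth.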

Implementation Strategy

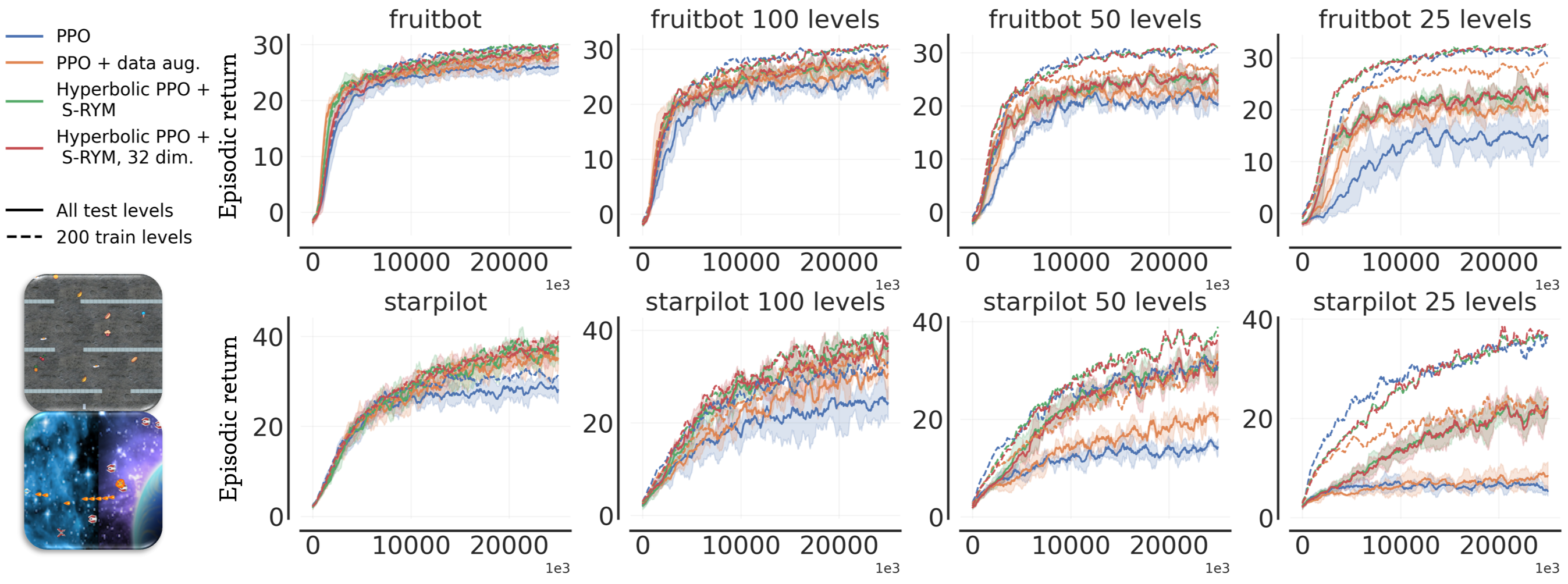

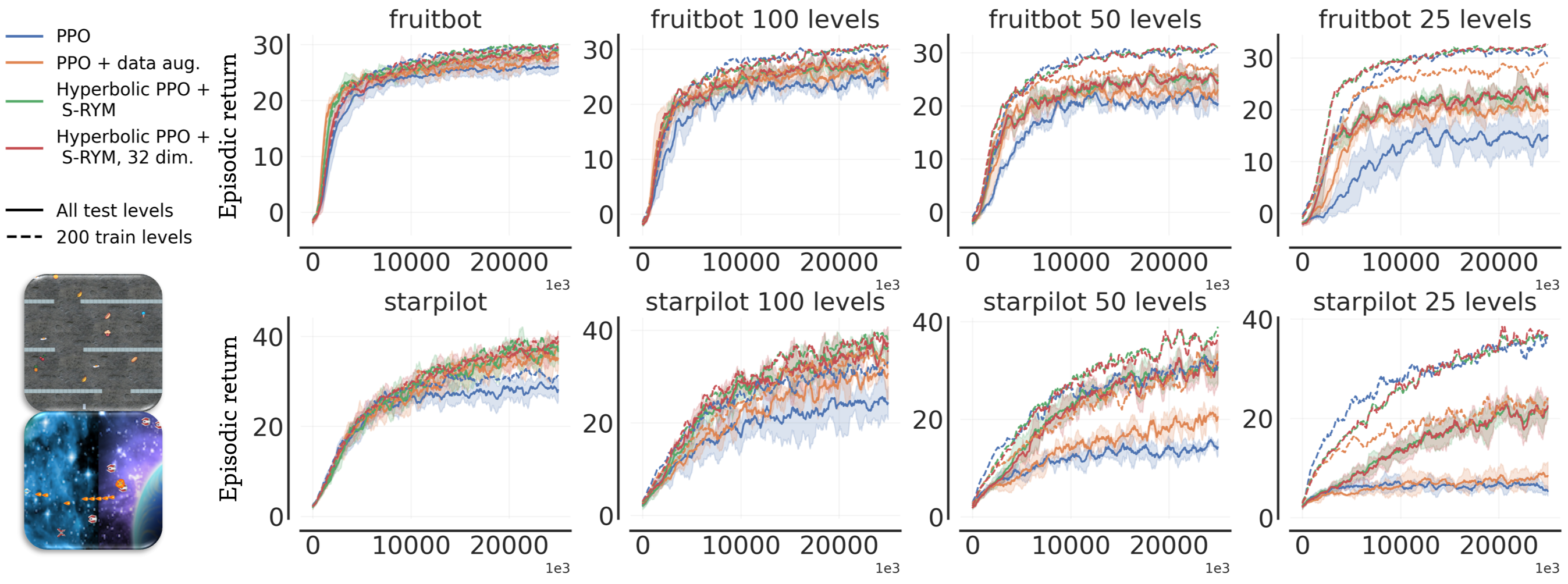

The proposed framework for incorporating hyperbolic geometry into RL involves transforming the latent spaces of RL models into hyperbolic spaces. The work introduces spectrally-regularized hyperbolic mappings (S-RYM) to stabilize training in hyperbolic spaces, addressing the optimization challenges posed by high-dimensional, non-stationary RL environments.
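One common way to turn a Euclidean latent vector into a hyperbolic one is the exponential map at the origin of the Poincaré ball. The sketch below assumes that construction; the function name `exp_map_origin`, the curvature parameter `c`, and the sample vector are illustrative choices, not details given in the text above.

```python
import math


def exp_map_origin(v, c=1.0, eps=1e-12):
    """Map a Euclidean vector v into the Poincare ball of curvature -c (exp map at 0)."""
    norm = math.sqrt(sum(a * a for a in v))
    if norm < eps:
        # exp_0(0) = 0; this branch also avoids a division by zero.
        return [0.0 for _ in v]
    sqrt_c = math.sqrt(c)
    scale = math.tanh(sqrt_c * norm) / (sqrt_c * norm)
    return [scale * a for a in v]


latent = [3.0, -4.0]        # hypothetical Euclidean encoder output with norm 5
z = exp_map_origin(latent)  # point of the unit ball with norm tanh(5)
```

Because `tanh` saturates, large Euclidean norms push points numerically onto the boundary of the ball, which is one reason the magnitude of the encoder output needs to be kept under control.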

Figure 1: A geodesic space is δ-hyperbolic if every geodesic triangle is δ-slim, that is, each side lies within distance δ of the union of the other two sides. Depictions of tree, hyperbolic, and Euclidean triangles show how they differ in this respect.
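For a finite set of points, δ is usually estimated with Gromov's four-point condition, which is equivalent to the slim-triangle definition up to a constant factor. The sketch below is a brute-force version under that assumption; `gromov_delta` and the two toy distance matrices are hypothetical and chosen only for illustration.

```python
from itertools import combinations


def gromov_delta(dist):
    """Four-point delta of a finite metric space given as a symmetric distance matrix."""
    delta = 0.0
    for x, y, z, w in combinations(range(len(dist)), 4):
        sums = sorted(
            [
                dist[x][y] + dist[z][w],
                dist[x][z] + dist[y][w],
                dist[x][w] + dist[y][z],
            ]
        )
        # Four-point condition: the two largest pair sums differ by at most 2 * delta.
        delta = max(delta, (sums[2] - sums[1]) / 2.0)
    return delta


# Star tree: centre 0 joined to leaves 1, 2, 3 by unit edges.
star = [
    [0, 1, 1, 1],
    [1, 0, 2, 2],
    [1, 2, 0, 2],
    [1, 2, 2, 0],
]
# Corners of a Euclidean unit square, listed in order around the square.
r2 = 2 ** 0.5
square = [
    [0, 1, r2, 1],
    [1, 0, 1, r2],
    [r2, 1, 0, 1],
    [1, r2, 1, 0],
]
```

The tree metric gives δ = 0, while the Euclidean square gives a strictly positive value. A relative version divides δ by the diameter of the point set so that values are comparable across scales; the exact normalization is not stated here.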

Addressing Optimization Challenges

Spectral Normalization

Training in hyperbolic spaces can become unstable because RL data are non-stationary and the gradient estimators are noisy. Applying spectral normalization (SN) to the network's layers helps stabilize training by bounding their Lipschitz constant, which mitigates exploding and vanishing gradients.
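Spectral normalization divides a weight matrix by an estimate of its largest singular value, typically obtained by power iteration. The following is a minimal pure-Python sketch of that idea, not the implementation used in the work; `spectral_normalize`, the fixed start vector, and the toy matrix are assumptions made for illustration.

```python
import math


def _normalize(vec):
    norm = math.sqrt(sum(a * a for a in vec)) or 1.0
    return [a / norm for a in vec]


def spectral_normalize(weight, n_iters=50):
    """Return (weight / sigma, sigma), with sigma the estimated largest singular value."""
    if n_iters < 1:
        raise ValueError("n_iters must be at least 1")
    rows, cols = len(weight), len(weight[0])
    # Deterministic start vector for this sketch; implementations usually draw it at random.
    u = _normalize([1.0] * rows)
    for _ in range(n_iters):
        # Power iteration: v <- W^T u, then u <- W v, each renormalized.
        v = _normalize([sum(weight[i][j] * u[i] for i in range(rows)) for j in range(cols)])
        u = _normalize([sum(weight[i][j] * v[j] for j in range(cols)) for i in range(rows)])
    # sigma = u^T W v
    sigma = sum(u[i] * weight[i][j] * v[j] for i in range(rows) for j in range(cols))
    if sigma == 0.0:
        # Zero matrix (or degenerate start vector): nothing to rescale.
        return [row[:] for row in weight], 0.0
    return [[w / sigma for w in row] for row in weight], sigma


W = [[3.0, 0.0], [0.0, 1.0]]  # toy weight matrix with singular values 3 and 1
W_sn, sigma = spectral_normalize(W)
```

After normalization the largest singular value of the layer is approximately 1, so the linear map cannot stretch any input by more than that factor.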

Dimensionality Rescaling

To keep representations dimensionality-invariant, the outputs of the Euclidean layers are rescaled before being mapped to hyperbolic space, so that training remains stable across hyperbolic spaces of different dimensionality.
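A simple way to see why rescaling helps: if each coordinate of a feature vector has roughly unit magnitude, its norm grows as the square root of the dimension n. The sketch below assumes a rescaling factor of 1/sqrt(n), which the text above does not specify; `rescale_by_dim` and `l2_norm` are hypothetical helper names.

```python
import math


def rescale_by_dim(features):
    """Divide a feature vector by sqrt(n), where n is its dimensionality."""
    n = len(features)
    return [a / math.sqrt(n) for a in features]


def l2_norm(vec):
    return math.sqrt(sum(a * a for a in vec))


# With unit-magnitude entries the raw norm grows as sqrt(n); the rescaled norm stays at 1.
raw_norms = {n: l2_norm([1.0] * n) for n in (2, 32, 512)}
rescaled_norms = {n: l2_norm(rescale_by_dim([1.0] * n)) for n in (2, 32, 512)}
```

Keeping the norm independent of n means that a subsequent map into hyperbolic space places points at comparable distances from the origin, whatever the latent dimension.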

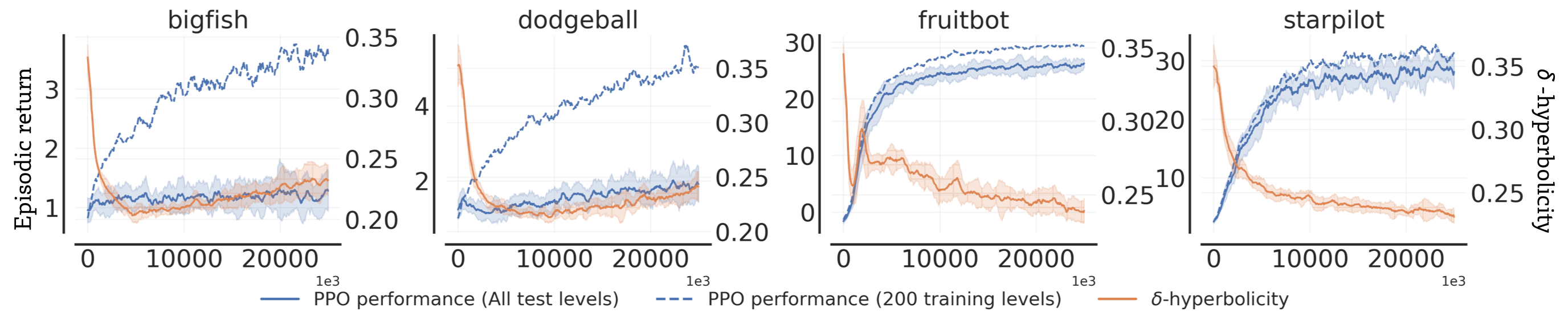

Figure 2: Performance and relative δ-hyperbolicity of the final latent representations, illustrating the impact of hyperbolic geometry.

Empirical Validation

Benchmarks and Comparisons

The implementation was validated on the Procgen and Atari 100K benchmarks, showing substantial performance improvements over Euclidean baselines and several state-of-the-art RL algorithms. The hyperbolic models generalized better to previously unseen environments and were more efficient, even with reduced representation dimensionality.

Figure 3: Performance comparison for varying dimensions of PPO agents, indicating the robustness of hyperbolic models at lower dimensions.

Analysis of Representations

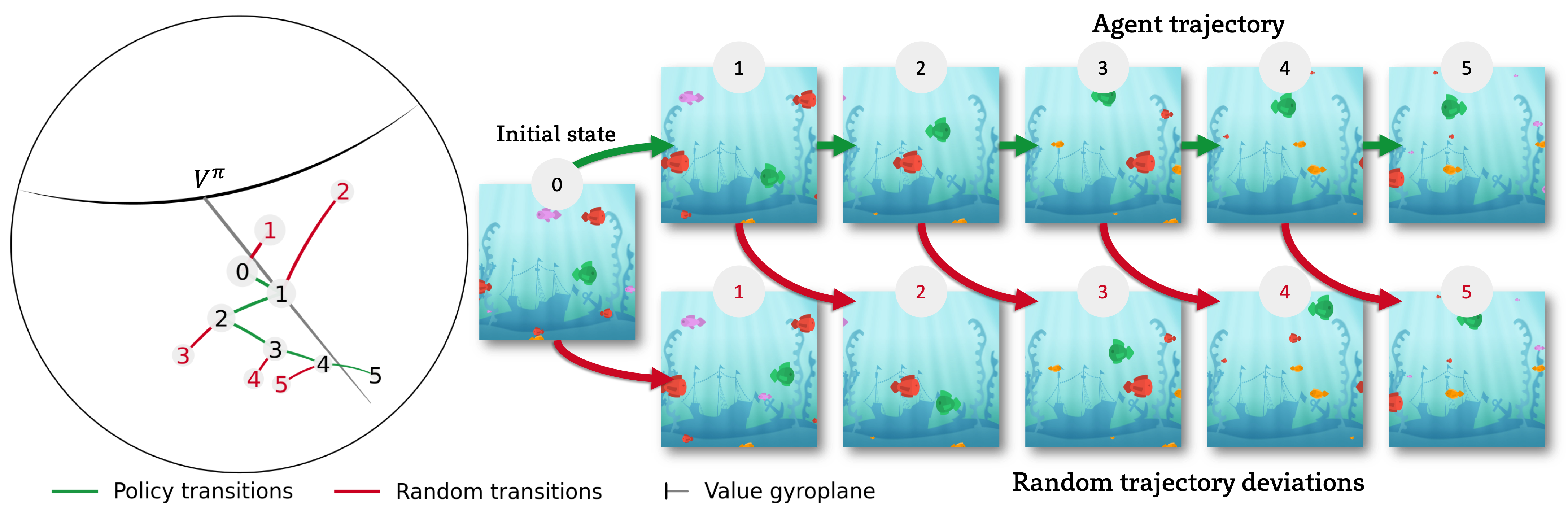

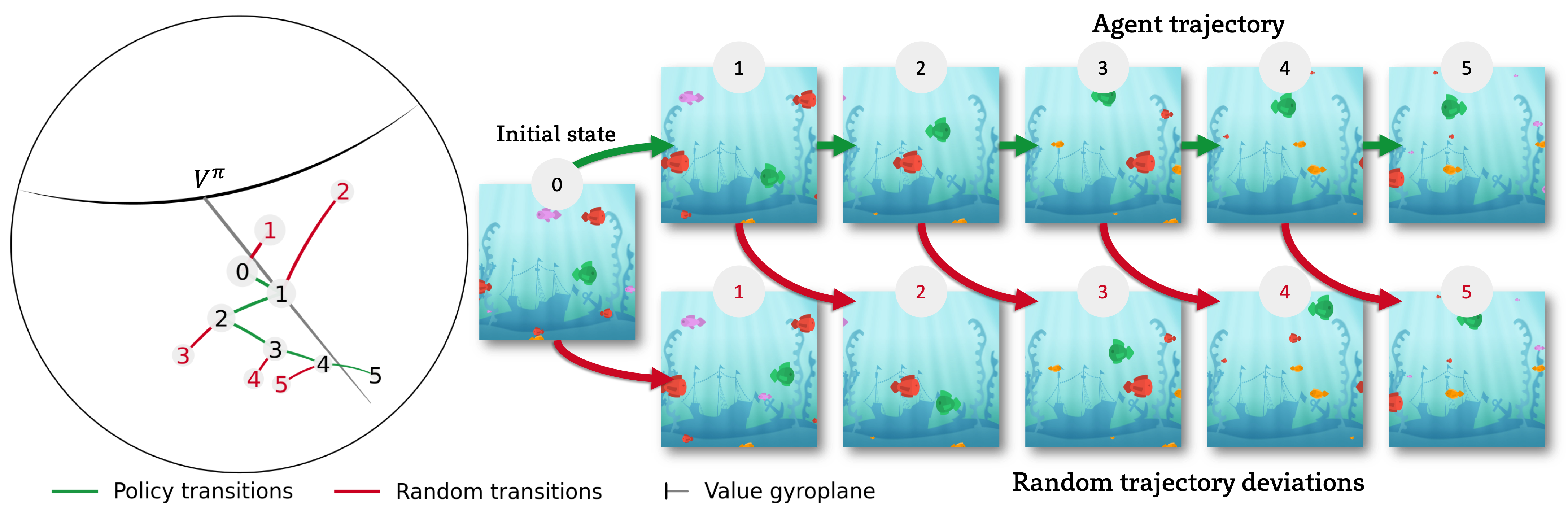

Examinations of 2D hyperbolic embeddings revealed that these models efficiently captured hierarchical task structures, leading to more stable and interpretable decision policies.

Figure 4: Visualization of 2D hyperbolic embeddings in a Procgen environment, revealing structured hierarchical representations of state transitions.

Future Work and Implications

The incorporation of hyperbolic geometry into RL opens new avenues for improving model efficiency and performance. The approach promises not only better test performance but also stronger generalization. Future research could explore similar geometric perspectives in unsupervised and semi-supervised learning, potentially broadening the scope and applicability of RL solutions.

Conclusion

The integration of hyperbolic representations into deep RL, as proposed, provides significant performance gains and a robust framework for modeling complex hierarchical relationships within environments. This work underscores the potential of geometric approaches to supply inductive biases that enhance learning in high-dimensional, dynamic contexts. As such, hyperbolic geometry holds promise as a foundation for future advances in AI and machine learning.