- The paper introduces RoomFormer, a novel transformer-based model that directly reconstructs 2D floorplans from 3D scans using a two-level query mechanism.

- It employs a single-stage, end-to-end architecture that eliminates the need for hand-crafted rules by predicting room polygons and vertices in parallel.

- Evaluations on Structured3D and SceneCAD datasets demonstrate that RoomFormer outperforms traditional multi-stage methods in terms of precision, recall, and overall efficiency.

Connecting the Dots: Floorplan Reconstruction Using Two-Level Queries

Introduction

"Connecting the Dots: Floorplan Reconstruction Using Two-Level Queries" addresses the task of generating 2D floorplans from 3D scans with a single-stage approach. The paper presents RoomFormer, a Transformer architecture that directly outputs a floorplan as a set of polygons. This avoids the multi-stage pipelines common in traditional methods, which chain sequential processing steps and rely on hand-crafted optimization.

Methodology

Floorplan Representation

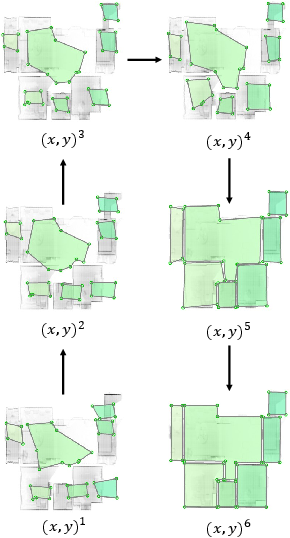

The paper formulates floorplan reconstruction as a structured prediction task: the goal is to predict a set of polygons, each represented as a variable-length sequence of ordered vertices. With this representation there is no need to first detect intermediate geometric primitives such as corners and walls. That simplifies the pipeline and reduces dependence on hand-crafted rules.
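A minimal sketch of this representation in Python follows. It assumes NumPy and a DETR-style setup in which a variable number of polygons is packed into a fixed-size array plus a validity mask. The array holds at most `max_rooms` polygons with at most `max_vertices` vertices each. The helper name `pad_floorplan` and both size limits are hypothetical, chosen for illustration.

```python
import numpy as np


def pad_floorplan(polygons, max_rooms, max_vertices):
    """Pack a floorplan into fixed-size arrays (hypothetical helper).

    polygons: list of (K_i, 2) sequences of ordered (x, y) vertices, one per room.
    Returns coords (max_rooms, max_vertices, 2) and a boolean mask of real vertices.
    Rooms or vertices beyond the limits are simply truncated in this sketch.
    """
    coords = np.zeros((max_rooms, max_vertices, 2), dtype=np.float32)
    valid = np.zeros((max_rooms, max_vertices), dtype=bool)
    for i, poly in enumerate(polygons[:max_rooms]):
        poly = np.asarray(poly, dtype=np.float32).reshape(-1, 2)[:max_vertices]
        coords[i, : len(poly)] = poly  # vertex order is preserved
        valid[i, : len(poly)] = True   # remaining slots stay as padding
    return coords, valid


# An L-shaped room (6 vertices) and a rectangular room (4 vertices).
floorplan = [
    [(0, 0), (4, 0), (4, 2), (2, 2), (2, 4), (0, 4)],
    [(4, 0), (7, 0), (7, 3), (4, 3)],
]
coords, valid = pad_floorplan(floorplan, max_rooms=3, max_vertices=8)
print(valid.sum(axis=1))  # vertices per room slot: [6 4 0]
```

The mask keeps the variable-length structure recoverable while the arrays themselves have a fixed shape that a network can consume or produce.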

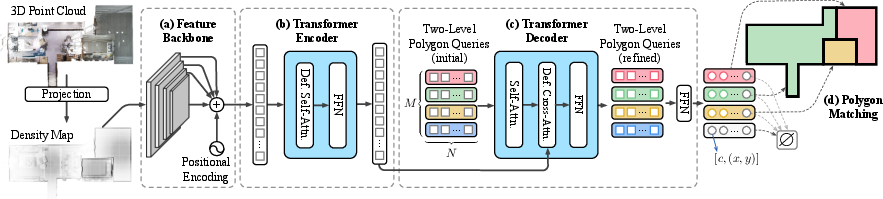

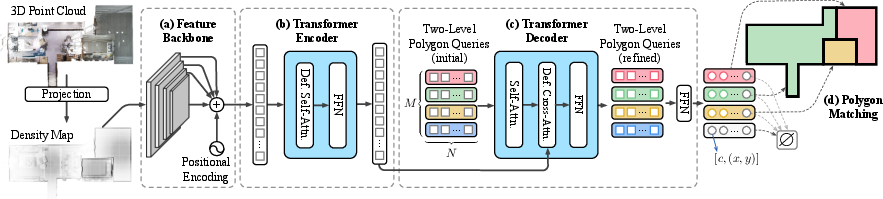

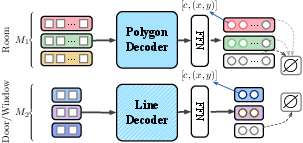

RoomFormer first projects the 3D scan along the vertical axis into a top-down density image. A CNN backbone extracts image features from this density image, and a Transformer encoder-decoder then operates on those features. Both the encoder and the decoder use multi-scale deformable attention. In this scheme each query attends to a small set of sampled locations rather than to every position in the feature map, which greatly reduces computational cost compared with standard attention. The architecture supports hierarchical structured output: a variable number of rooms, each with a variable number of vertices.
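The cost saving comes from each query reading only a few sampled locations. Below is a minimal single-scale, single-head sketch of deformable attention in NumPy, in the spirit of Deformable DETR. It is not RoomFormer's implementation. The following are all simplifying assumptions:

* dropping the multi-scale and multi-head parts;
* using pixel coordinates for the reference point;
* the names `deformable_attention`, `w_offset` and `w_attn`.

```python
import numpy as np


def bilinear_sample(feat, x, y):
    """Bilinearly sample feat (H, W, D) at continuous pixel coords (x, y); zero outside the map."""
    h, w, d = feat.shape
    x0, y0 = int(np.floor(x)), int(np.floor(y))
    out = np.zeros(d)
    for yy in (y0, y0 + 1):
        for xx in (x0, x0 + 1):
            if 0 <= xx < w and 0 <= yy < h:
                weight = (1 - abs(x - xx)) * (1 - abs(y - yy))
                out += weight * feat[yy, xx]
    return out


def deformable_attention(query, ref_point, feat, w_offset, w_attn):
    """Single-scale, single-head deformable attention for one query (simplified sketch).

    query: (D,) query embedding; ref_point: (2,) reference point (x, y) in pixels
    feat: (H, W, D) value feature map
    w_offset: (D, 2K) projects the query to K sampling offsets
    w_attn: (D, K) projects the query to K attention logits
    """
    k = w_attn.shape[1]
    offsets = (query @ w_offset).reshape(k, 2)  # query-dependent sampling offsets
    logits = query @ w_attn
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()                    # softmax over the K sampling points
    samples = np.stack([bilinear_sample(feat, *(ref_point + o)) for o in offsets])  # (K, D)
    return weights @ samples                    # reads K locations, not all H*W


feat = np.arange(4 * 5 * 3, dtype=float).reshape(4, 5, 3)  # toy 4x5 feature map, D=3
query = np.ones(3)
# Zero projections give zero offsets and uniform weights, so the output is the feature at (x=2, y=1).
out = deformable_attention(query, np.array([2.0, 1.0]), feat, np.zeros((3, 8)), np.zeros((3, 4)))
print(out)  # [21. 22. 23.]
```

With K sampling points per query, each query here costs O(K·D) instead of the O(H·W·D) of dense attention over the whole map.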

Figure 1: Illustration of the RoomFormer model demonstrating its feature extraction and prediction capabilities through an encoder-decoder architecture.

Two-Level Queries

The key innovation of RoomFormer is its two-level queries: one level for room polygons and one for their vertices. All queries are processed and refined in parallel, and during training, polygon matching aligns the predictions with the ground truth. Because all rooms are decoded jointly, the model can reason about the floorplan as a structured whole. This contributes to both higher accuracy and efficiency.
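The following decoding sketch shows how a fixed grid of queries can still yield a variable number of rooms and vertices. It assumes the decoder outputs two things for each of `M` room queries and `N` vertex queries:

* a coordinate;
* a probability that the vertex query corresponds to a real corner.

The 0.5 threshold, the three-vertex minimum and the name `decode_polygons` are illustrative assumptions.

```python
import numpy as np


def decode_polygons(coords, vertex_scores, score_thresh=0.5, min_vertices=3):
    """Turn fixed-size decoder outputs into a variable-size set of polygons.

    coords: (M, N, 2) vertex coordinates; row m comes from room query m,
            column n from its n-th vertex query.
    vertex_scores: (M, N) assumed probability that each vertex query is a real corner.
    """
    polygons = []
    for room_coords, room_scores in zip(coords, vertex_scores):
        keep = room_scores > score_thresh  # discard vertex queries predicted as padding
        poly = room_coords[keep]           # surviving vertices keep their query order
        if len(poly) >= min_vertices:      # too few corners to form a room
            polygons.append(poly)
    return polygons


coords = np.arange(3 * 4 * 2, dtype=float).reshape(3, 4, 2)  # M=3 room queries, N=4 vertex queries
scores = np.array([
    [0.9, 0.8, 0.7, 0.1],   # room 0: three confident vertices
    [0.2, 0.1, 0.3, 0.05],  # room 1: no confident vertices, so no room
    [0.9, 0.9, 0.9, 0.9],   # room 2: four confident vertices
])
rooms = decode_polygons(coords, scores)
print([len(p) for p in rooms])  # [3, 4]
```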

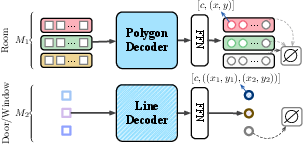

Figure 2: Structure of the decoder illustrating the two-level query mechanism for room and vertex predictions.

Polygon Matching

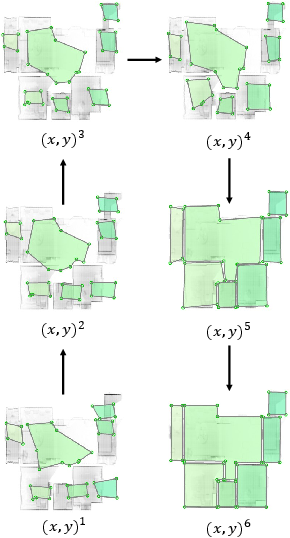

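A network produces fixed-size outputs, whereas a floorplan contains a variable number of rooms with a variable number of vertices. To bridge this gap, the paper introduces a polygon matching strategy that computes matching costs at two levels:

- At the set level, predicted polygons are assigned to ground-truth polygons with the Hungarian algorithm.
- At the sequence level, the cost compares the ordered vertices of each polygon pair.

This matching is what makes end-to-end training possible.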
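Below is a minimal sketch of two-level matching, assuming NumPy and SciPy. `scipy.optimize.linear_sum_assignment` solves the same assignment problem as the Hungarian algorithm. The sequence-level cost is a simplified stand-in, not the paper's exact formulation:

* It compares the first K predicted vertices against the K ground-truth vertices.
* A closed polygon has no canonical starting vertex, so it takes the minimum L1 distance over all cyclic shifts.
* Also allowing reversed orientation is an additional assumption.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def sequence_cost(pred, gt):
    """Sequence-level cost between one predicted and one ground-truth polygon (simplified).

    pred: (N, 2) predicted vertices; gt: (K, 2) ground-truth vertices with K <= N.
    Only the first K predicted vertices are compared in this sketch.
    """
    k = len(gt)
    p = pred[:k]
    best = np.inf
    for seq in (gt, gt[::-1]):      # both orientations (assumption)
        for shift in range(k):      # every possible starting vertex
            best = min(best, np.abs(p - np.roll(seq, shift, axis=0)).mean())
    return float(best)


def match_polygons(preds, gts):
    """Set-level matching: optimal one-to-one assignment on the (num_pred x num_gt) cost matrix."""
    cost = np.array([[sequence_cost(p, g) for g in gts] for p in preds])
    rows, cols = linear_sum_assignment(cost)  # with more predictions than targets, some stay unmatched
    return list(zip(rows.tolist(), cols.tolist()))


gt_a = np.array([[0, 0], [1, 0], [1, 1], [0, 1]], dtype=float)
gt_b = gt_a + 5.0
preds = [
    np.array([[6, 5], [6, 6], [5, 6], [5, 5], [0, 0]], dtype=float),  # gt_b, different start vertex
    np.full((5, 2), 20.0),                                            # spurious prediction
    np.array([[0, 0], [0, 1], [1, 1], [1, 0], [9, 9]], dtype=float),  # gt_a, reversed order
]
print(match_polygons(preds, [gt_a, gt_b]))  # [(0, 1), (2, 0)]
```

Making the cost invariant to the starting vertex matters because the same room can be traced from any corner. Without this invariance, a geometrically perfect prediction could still receive a large cost.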

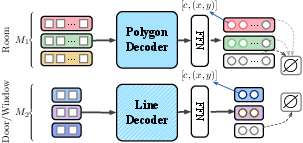

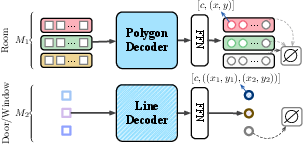

Figure 3: SD-TQ setup, illustrating a single decoder with two-level queries for prediction.

Results and Discussion

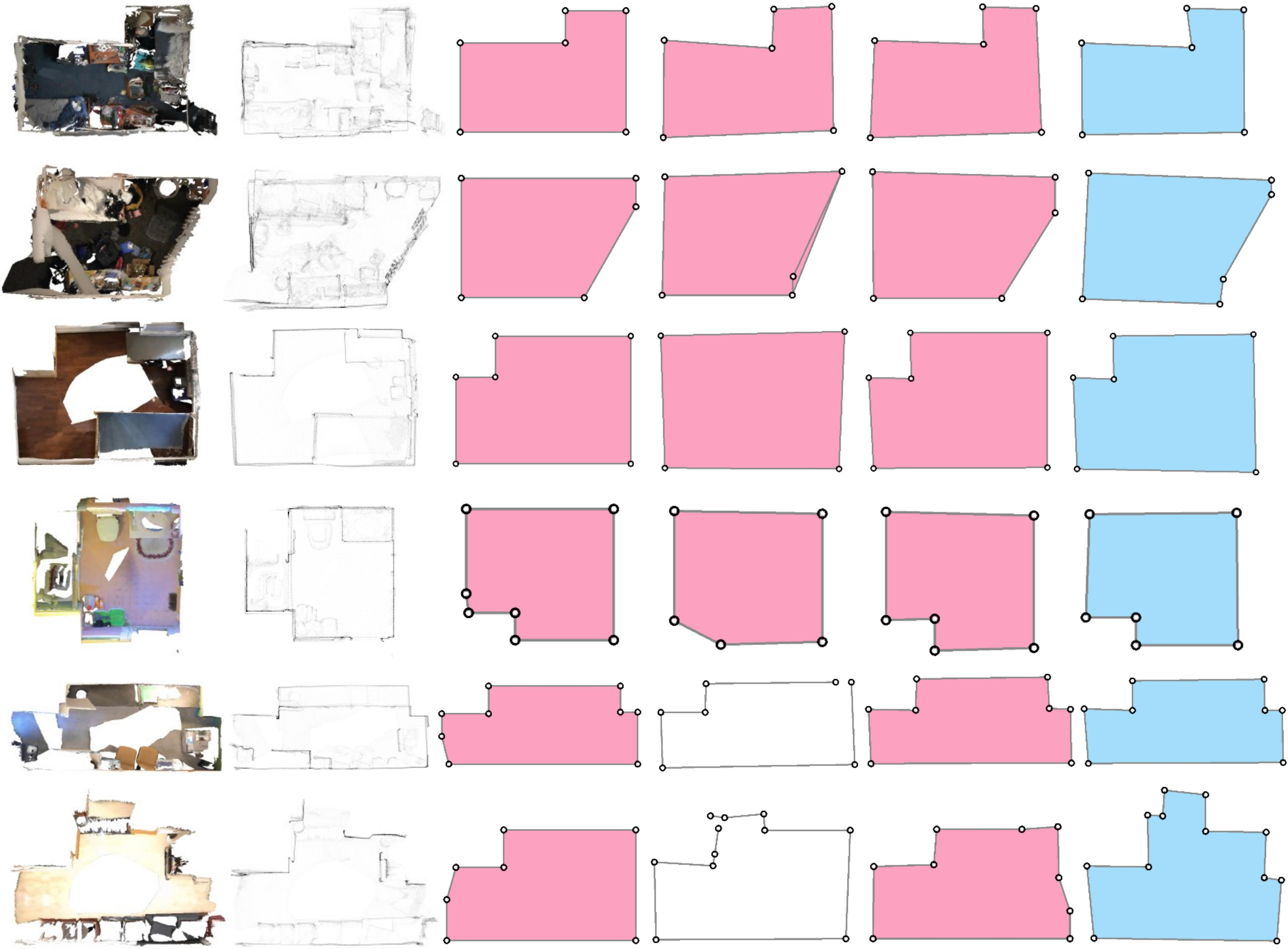

RoomFormer sets a new state of the art on Structured3D and SceneCAD. It surpasses traditional methods in precision, recall, and F1 score at the room, corner, and angle levels, and reconstructs a wide variety of room configurations accurately.
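To make the corner-level numbers concrete, here is a sketch of how precision, recall and F1 can be computed for predicted corners. The greedy one-to-one matching and the 10-pixel distance threshold are assumptions for illustration. They may differ from the official evaluation protocol.

```python
import numpy as np


def corner_prf(pred, gt, thresh=10.0):
    """Precision, recall and F1 for predicted corners (illustrative protocol).

    A prediction is a true positive if it lies within `thresh` pixels of a
    ground-truth corner not already claimed by another prediction (greedy, one-to-one).
    """
    pred = np.asarray(pred, dtype=float).reshape(-1, 2)
    gt = np.asarray(gt, dtype=float).reshape(-1, 2)
    used = np.zeros(len(gt), dtype=bool)
    tp = 0
    for p in pred:
        if len(gt) == 0:
            break
        d = np.linalg.norm(gt - p, axis=1)
        d[used] = np.inf  # each ground-truth corner can be matched only once
        j = int(np.argmin(d))
        if d[j] <= thresh:
            used[j] = True
            tp += 1
    precision = tp / len(pred) if len(pred) else 0.0
    recall = tp / len(gt) if len(gt) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


pred_corners = [(0, 0), (103, 0), (50, 50)]
gt_corners = [(2, 1), (100, 0), (100, 100), (0, 100)]
p, r, f1 = corner_prf(pred_corners, gt_corners)
print(tuple(round(v, 3) for v in (p, r, f1)))  # (0.667, 0.5, 0.571)
```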

Generalization and Flexibility

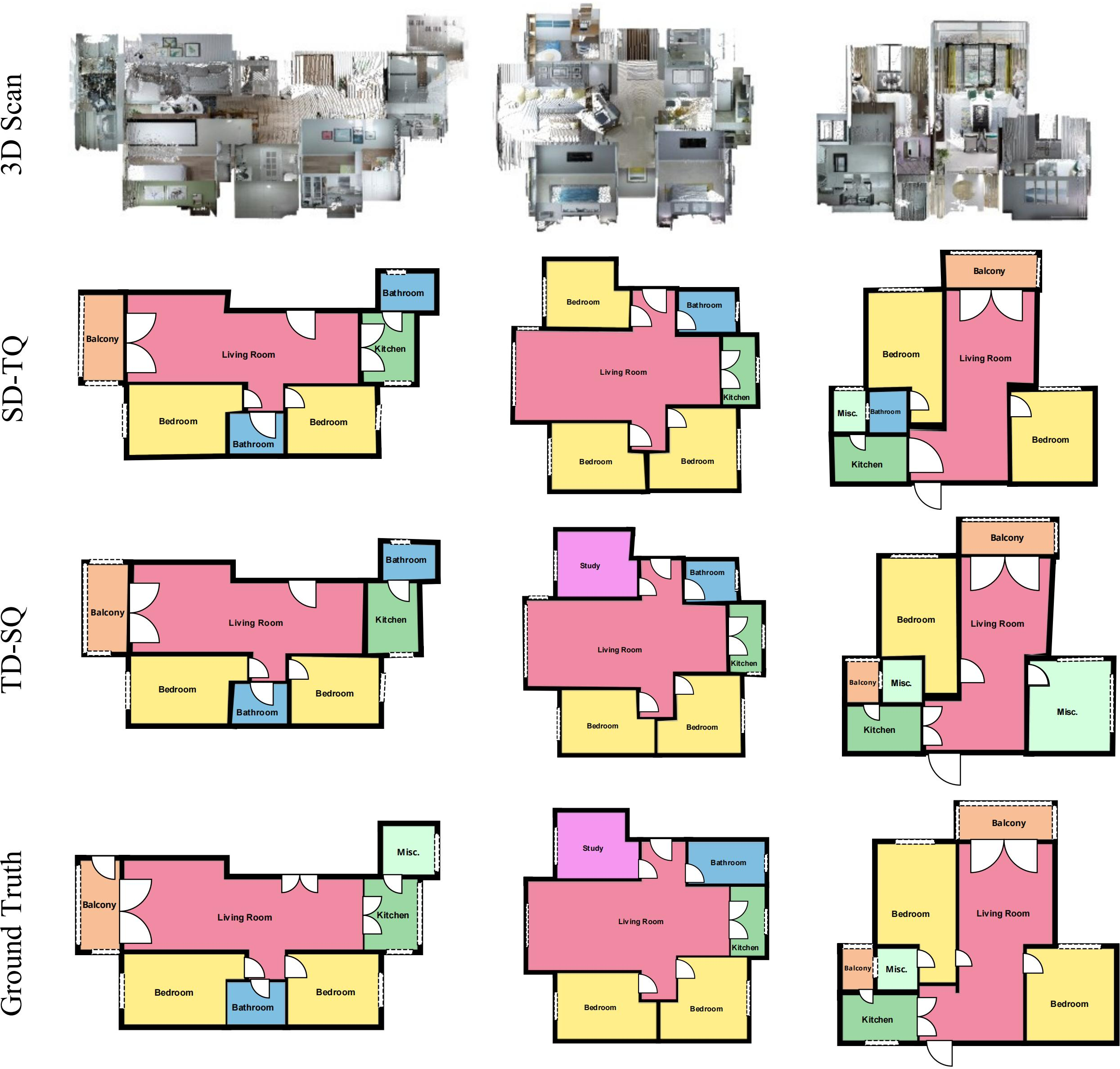

The model generalizes well across datasets. It performs robustly even when trained on one dataset and tested on another with different characteristics. It can also be extended to predict semantic room types, doors, and windows, which broadens its practical applicability in intelligent design systems.

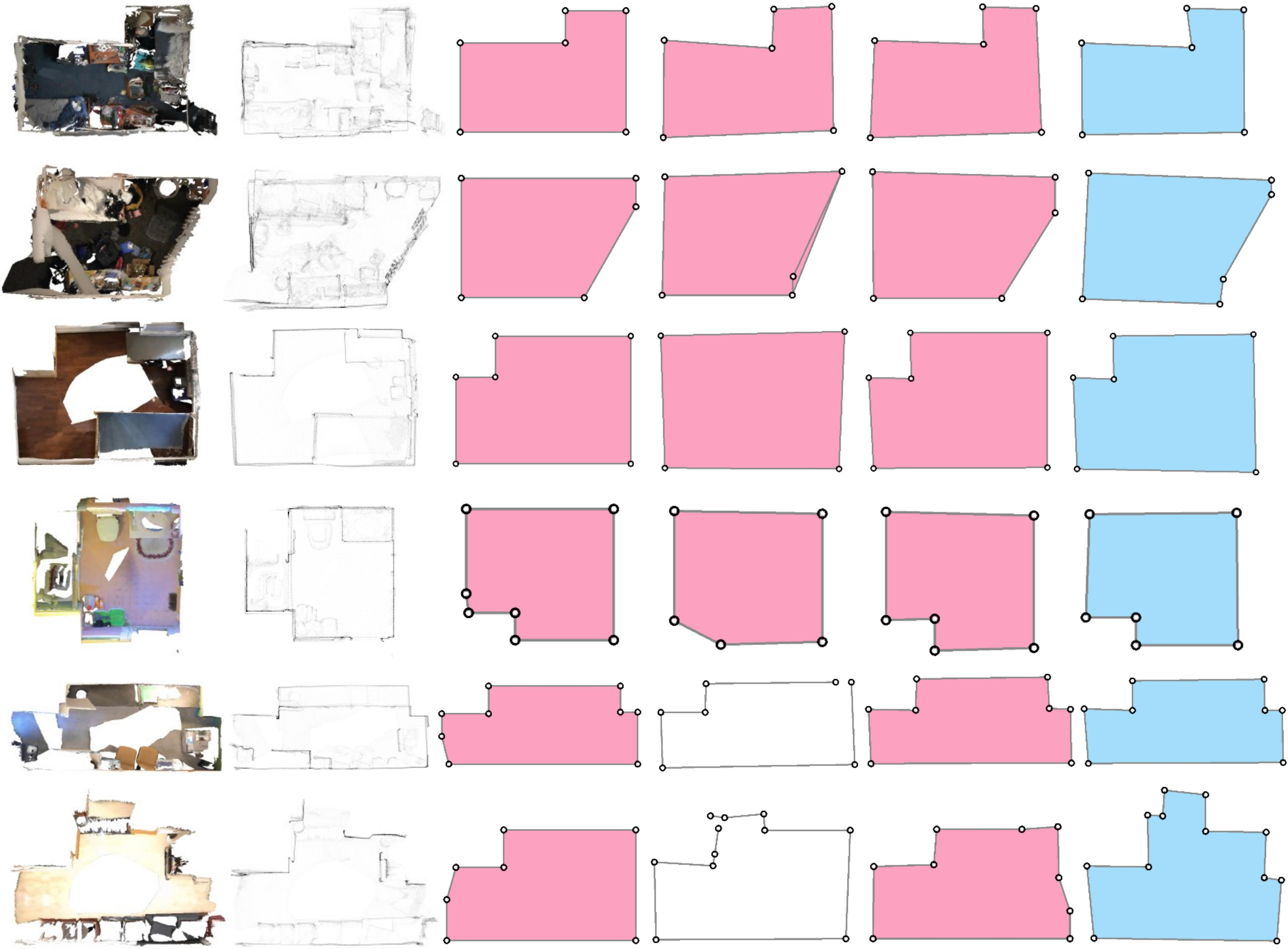

Figure 4: Qualitative evaluations on SceneCAD, showcasing RoomFormer's detailed reconstruction capabilities.

Semantically-Rich Floorplans

RoomFormer can also predict semantically-rich floorplans. Its model variants integrate semantic information, such as room types and architectural elements like doors and windows, without compromising reconstruction performance.
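One plausible way to add semantic predictions is a classification head on top of the decoder outputs. The sketch below assumes each room query's vertex-query features are mean-pooled into a polygon feature. That feature then passes through a single linear layer with a softmax. The pooling choice, the label list `ROOM_TYPES` and the random weights are hypothetical, chosen only to show the data flow.

```python
import numpy as np

ROOM_TYPES = ["living room", "kitchen", "bedroom", "bathroom", "door", "window"]  # hypothetical labels


def classify_polygons(vertex_features, weight, bias):
    """Predict a semantic label distribution for each polygon (room) query.

    vertex_features: (M, N, D) decoder outputs for M room queries x N vertex queries.
    weight: (D, C) and bias: (C,) parameters of a linear classification head.
    Returns (M, C) class probabilities.
    """
    polygon_features = vertex_features.mean(axis=1)  # (M, D): pool each room's vertex queries
    logits = polygon_features @ weight + bias        # (M, C)
    logits -= logits.max(axis=1, keepdims=True)      # shift for a numerically stable softmax
    probs = np.exp(logits)
    return probs / probs.sum(axis=1, keepdims=True)


rng = np.random.default_rng(0)
M, N, D, C = 3, 8, 16, len(ROOM_TYPES)
probs = classify_polygons(rng.normal(size=(M, N, D)), rng.normal(size=(D, C)), np.zeros(C))
labels = [ROOM_TYPES[i] for i in probs.argmax(axis=1)]  # one predicted type per room query
```

Because the head reads the same decoder outputs that produce the geometry, adding it leaves the polygon predictions themselves unchanged.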

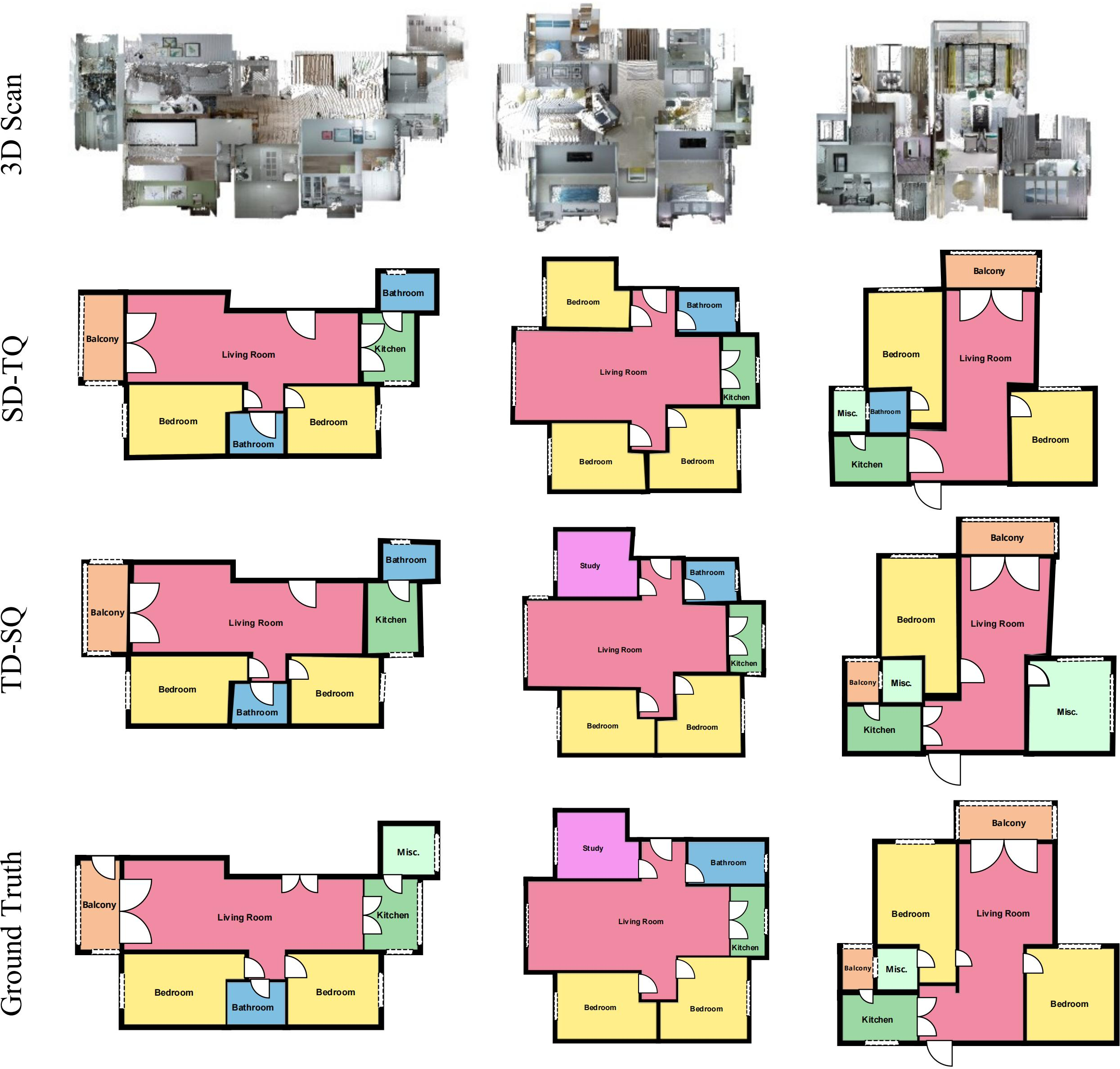

Figure 5: Semantically-rich floorplan results, highlighting the model's ability to identify structural elements.

Conclusion

RoomFormer introduces an efficient, flexible approach to floorplan reconstruction that eliminates cumbersome multi-stage processing and domain-specific design constraints. It promises improvements in real-world applications such as architectural modeling, interior design, and AR/VR systems.

By proposing a holistic model that efficiently handles complex inputs and outputs structured predictions, RoomFormer paves the way for future research in AI-driven structural reconstruction tasks. The code and models are publicly accessible, enabling further exploration and application across potentially broader domains.