- The paper presents a novel benchmark and taxonomy to evaluate image classification methods on small datasets.

- It shows that hyper-parameter optimization is critical, with well-tuned cross-entropy baselines outperforming most specialized methods.

- It categorizes techniques into architecture, cost function, data augmentation, latent augmentation, and warm-starting, guiding future research.

Image Classification with Small Datasets: Overview and Benchmark

This essay synthesizes the key contributions and findings of "Image Classification with Small Datasets: Overview and Benchmark" (2212.12478), focusing on its implications for practitioners in the field of data-efficient deep learning. The paper addresses the pressing need for a systematic analysis of existing methods and a standardized benchmark for image classification under data scarcity.

Core Contributions

The paper's primary contributions are twofold: a comprehensive review of existing literature and the introduction of a novel benchmark for image classification with small datasets. The literature review categorizes existing methods into five main families: architecture, cost function, data augmentation, latent augmentation, and warm-starting. This taxonomy provides a structured overview of the research landscape. The benchmark comprises five datasets spanning diverse domains and data types, including RGB, grayscale, and multispectral imagery, thereby addressing the limitations of existing benchmarks that primarily focus on natural images.

Benchmark and Evaluation

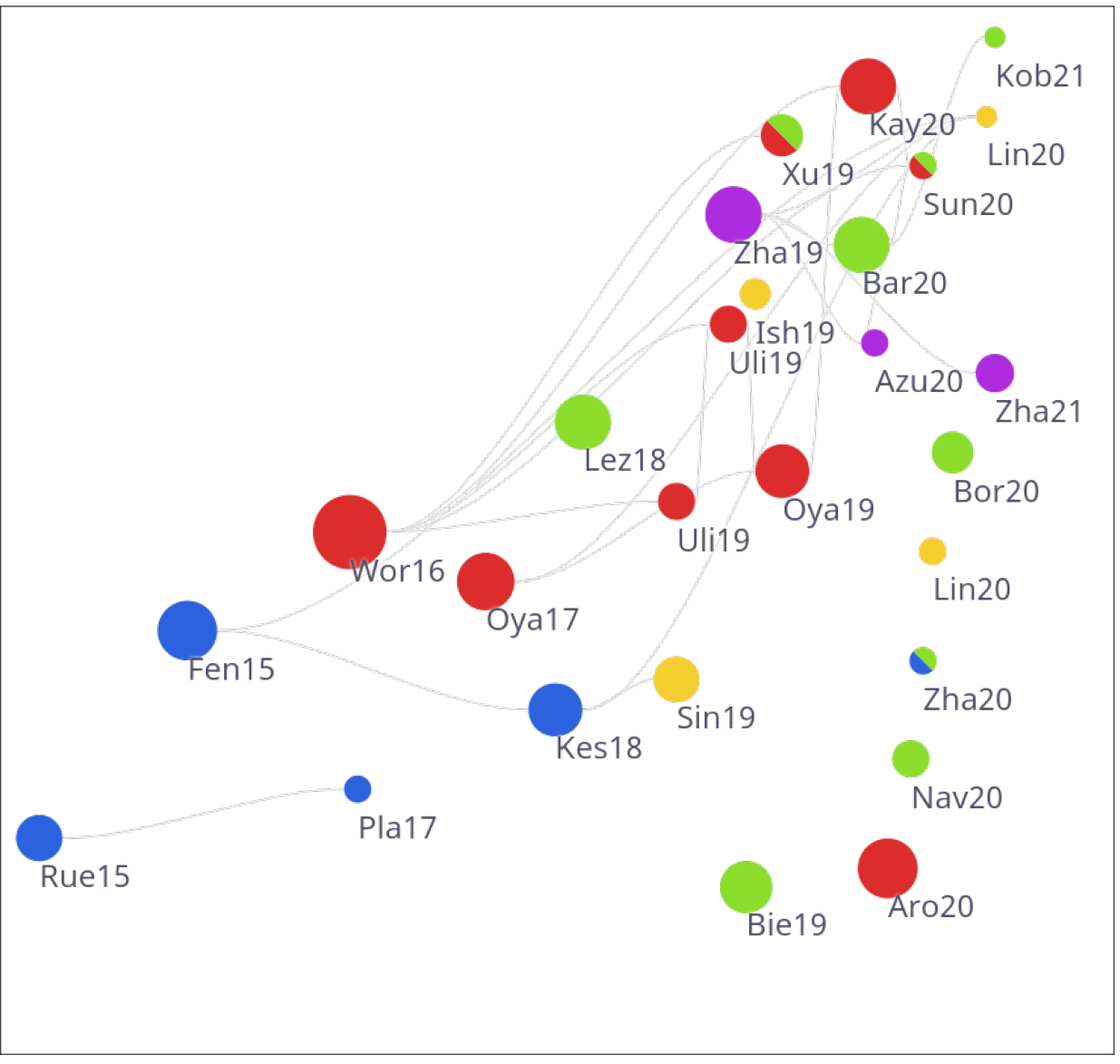

The authors conduct a thorough empirical evaluation of ten existing methods, alongside a standard cross-entropy baseline, across the proposed benchmark. A key aspect of their evaluation is the careful hyper-parameter optimization (HPO) performed for each method and dataset individually. This rigorous approach reveals a somewhat disillusioning truth: many specialized methods fail to outperform a well-tuned cross-entropy baseline. Only one method, dating back to 2019, consistently surpasses the baseline. (Figure 1)

(Figure 1)

Figure 1: Accuracy of state-of-the-art methods and baselines on the proposed benchmark, highlighting the limited performance progress over the years.

The authors also find that an untuned baseline, trained with default hyper-parameters, significantly underperforms the HPO-tuned baseline, underscoring the critical importance of proper hyper-parameter optimization in data-deficient scenarios. This suggests that the perceived progress in the field may be, in part, an illusion caused by comparisons against insufficiently tuned baselines.

Taxonomy of Methods

The paper categorizes existing methods into five families, depending on how the model is regularized:

- Architecture: Modifications to network architectures such as fixed filters based on wavelet transformations or discrete cosine transform

- Cost function: Regularization or penalty terms such as cosine similarity

- Data augmentation: Increases the size of the training dataset

- Latent augmentation: Stochastic or adversarial transformations applied to features inside networks

- Warm-starting: Algorithmic schemes to initialize the classifier with weights that favor better learning on small datasets.

(Figure 2)

Figure 2: Distribution of publication venues concerning the reviewed body of literature, revealing the dominance of computer vision conferences.

\subsection{Practical Implications}

The findings of this paper have significant practical implications for researchers and practitioners working on image classification with small datasets.

Future Directions

The paper identifies several promising directions for future research:

- Development of new regularization techniques: The limited success of existing specialized methods suggests a need for more effective regularization strategies specifically designed for data-deficient scenarios.

- Exploration of novel architectures: Although the benchmark focuses on a common base architecture, exploring alternative architectures tailored for small datasets could lead to further performance gains.

- Development of automated HPO strategies: Given the importance of HPO, developing automated techniques that can efficiently find optimal hyper-parameter configurations would be highly valuable.

Conclusion

"Image Classification with Small Datasets: Overview and Benchmark" (2212.12478) provides a valuable contribution to the field of data-efficient deep learning. Its rigorous evaluation methodology and the establishment of a challenging benchmark provide a solid foundation for future research. The paper's findings underscore the importance of careful hyper-parameter optimization and highlight the need for new approaches that can effectively address the challenges of image classification with limited data.