- The paper uses the I-MMSE relations to link probability density estimation to the global optimum of a mean square error (MSE) denoising objective.

- The method enhances density estimation by fine-tuning and ensembling discrete diffusion models, achieving competitive negative log-likelihoods.

- Log-likelihoods are estimated by thermodynamic integration over signal-to-noise ratio (SNR) values, where the Gaussian noise channel makes the intermediate distributions easy to sample.

Introduction

The paper "Information-Theoretic Diffusion" (arXiv:2302.03792) introduces an information-theoretic foundation for denoising diffusion models. It uses the I-MMSE relations from information theory to establish a direct relationship between probability densities and optimal denoising regression. This perspective aims to unify current diffusion methodologies, offering both theoretical justification and practical improvements in probability density estimation.

Core Contributions

The paper presents several significant contributions to the field of diffusion models:

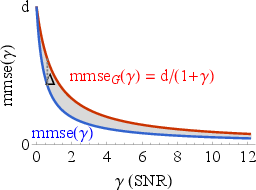

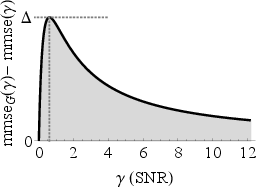

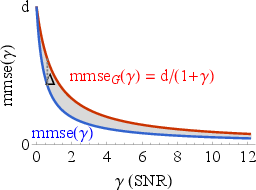

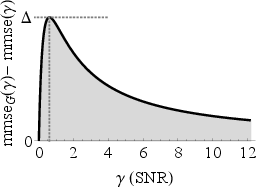

- Unified Denoising Objective: The authors present an exact relationship between probability density and the global optimum of a mean square error (MSE) denoising objective. This relationship, expressed as $-\log p(x) = \frac{1}{2}\int_0^\infty \mathrm{mmse}(x, \gamma)\, d\gamma + \text{constant terms}$, where $\gamma$ is the signal-to-noise ratio (SNR), provides a unified framework for continuous and discrete probabilities.

- Enhanced Density Estimation: By using the I-MMSE relations, the paper improves existing diffusion bounds and introduces a method to ensemble diffusion models for better negative log-likelihood (NLL) estimates. This approach leverages the analytic tractability of Gaussian noise channels to bridge discrete and continuous distributions.

- Numerical Results: The experiments demonstrate that the proposed framework can reinterpret pre-trained discrete diffusion models as continuous density models, achieving competitive log-likelihoods. The framework allows fine-tuning and ensembling, which leads to improvements in NLL metrics.

Fundamental Denoising Relation

The key theoretical development in this paper is the derivation of a pointwise denoising relation, grounded in the I-MMSE relations. This is formalized as:

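$$\frac{d}{d\gamma} D_{KL}\big(p(z_\gamma \mid x)\,\|\,p(z_\gamma)\big) = \frac{1}{2}\,\mathrm{mmse}(x, \gamma)$$

Here $z_\gamma$ is the observation of a data point $x$ through a Gaussian noise channel at signal-to-noise ratio $\gamma$. The relation links the rate of change of the Kullback-Leibler divergence with respect to $\gamma$ to the minimum mean square error (MMSE) of denoising in that channel. The derivation relies on the properties of the Gaussian channel and integration by parts, and it leads to an interpretation of diffusion models in terms of thermodynamic integration.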
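The pointwise relation can be sanity-checked in a case where everything is available in closed form. The sketch below is not from the paper: it assumes one-dimensional data x ~ N(0, 1) and the channel parameterization z = sqrt(snr) * x + eps with eps ~ N(0, 1), and the function names are hypothetical. Under those assumptions both sides of the relation are simple expressions, and a finite difference of the KL divergence should match half the pointwise MMSE.

```python
import math

def kl_gaussian_channel(x: float, snr: float) -> float:
    """KL( p(z|x) || p(z) ) for z = sqrt(snr)*x + eps with x, eps ~ N(0, 1).

    Assumed toy setting: p(z|x) = N(sqrt(snr)*x, 1) and p(z) = N(0, 1 + snr),
    so the KL divergence between two Gaussians applies directly.
    """
    var_marginal = 1.0 + snr
    return 0.5 * (math.log(var_marginal) + (1.0 + snr * x * x) / var_marginal - 1.0)

def pointwise_mmse(x: float, snr: float) -> float:
    """E_{z|x}[(x - E[x|z])^2] for the same toy setting.

    The optimal denoiser is E[x|z] = sqrt(snr) * z / (1 + snr); averaging the
    squared error over the channel noise gives (x^2 + snr) / (1 + snr)^2.
    """
    return (x * x + snr) / (1.0 + snr) ** 2

def kl_derivative(x: float, snr: float, h: float = 1e-5) -> float:
    """Central finite-difference estimate of d/d(snr) of the KL divergence."""
    return (kl_gaussian_channel(x, snr + h) - kl_gaussian_channel(x, snr - h)) / (2.0 * h)

if __name__ == "__main__":
    for x in (0.0, 0.7, -2.0):
        for snr in (0.1, 1.0, 10.0):
            lhs = kl_derivative(x, snr)
            rhs = 0.5 * pointwise_mmse(x, snr)
            print(f"x={x:+.1f} snr={snr:5.1f}  dKL/dsnr={lhs:.6f}  mmse/2={rhs:.6f}")
```

The Gaussian case is chosen only because it is analytically tractable; for real data the optimal denoiser is unknown and is approximated by a trained network.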

Figure 1: The integral of the gap between MMSE curves for data from the target distribution versus data from a Gaussian distribution is used in the paper's entropy equation to get an exact expression for the entropy, or expected negative log-likelihood (NLL), of the data.

Diffusion as Thermodynamic Integration

Thermodynamic integration is utilized to estimate log-likelihoods, a critical component of the framework. This method circumvents the need for variational approximations by evaluating the difference in free energy or log partition functions through integration. The Gaussian noise channel simplifies intermediate distribution sampling, enhancing computational efficiency.

By integrating over SNR values, the authors effectively exploit the I-MMSE relations to obtain a concise expression for data density, expressed solely in terms of the optimal regression solution, which significantly simplifies the estimation process.
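As a rough illustration of integrating over SNR values, the sketch below recovers a negative log-likelihood from an MMSE curve by numerical quadrature. It is a toy check under stated assumptions, not the authors' implementation: the constant terms are written with a standard Gaussian baseline, $-\log p(x) = \frac{d}{2}\log(2\pi e) - \frac{1}{2}\int_0^\infty \big(\frac{d}{1+\gamma} - \mathrm{mmse}(x,\gamma)\big)\, d\gamma$, which matches the "gap between MMSE curves" described for Figure 1; the data are assumed to be one-dimensional standard Gaussian so that the optimal denoiser is known exactly; and `nll_from_mmse` is a hypothetical helper name.

```python
import math

def pointwise_mmse(x: float, snr: float) -> float:
    """Pointwise MMSE for x ~ N(0, 1) observed as z = sqrt(snr)*x + eps (toy assumption)."""
    return (x * x + snr) / (1.0 + snr) ** 2

def nll_from_mmse(x, mmse_fn, dim=1, log_snr_min=-20.0, log_snr_max=20.0, num_points=4001):
    """Estimate -log p(x) by integrating the MMSE gap to a standard Gaussian.

    The integral over snr in (0, inf) is rewritten over log(snr), using
    d(snr) = snr * d(log snr), and evaluated with the trapezoid rule.
    """
    step = (log_snr_max - log_snr_min) / (num_points - 1)
    total = 0.0
    for i in range(num_points):
        snr = math.exp(log_snr_min + i * step)
        # Gap between the Gaussian baseline MMSE and the data MMSE at this SNR.
        gap = dim / (1.0 + snr) - mmse_fn(x, snr)
        weight = 0.5 if i in (0, num_points - 1) else 1.0
        total += weight * gap * snr * step
    return 0.5 * dim * math.log(2.0 * math.pi * math.e) - 0.5 * total

if __name__ == "__main__":
    for x in (0.0, 0.7, -2.0):
        exact = 0.5 * math.log(2.0 * math.pi) + 0.5 * x * x  # -log N(x; 0, 1)
        print(f"x={x:+.1f}  integrated={nll_from_mmse(x, pointwise_mmse):.6f}  exact={exact:.6f}")
```

Integrating in log-SNR is a convenience for this sketch, since the gap is concentrated over a few orders of magnitude of SNR. With a learned denoiser, `mmse_fn` would be replaced by the measured squared error of the network, and the result would be an estimate rather than an exact value.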

Practical Implications and Future Work

The theoretical results in this paper have practical implications for applying diffusion models to high-dimensional data such as images. The unified treatment of continuous and discrete variables also opens the way to more versatile generative models that are less tied to domain-specific architectures.

Looking forward, this framework suggests several avenues for future research:

- Improved Sampling: By refining noise schedules based on MMSE importance, it is possible to enhance model efficiency further, particularly during the sampling phase.

- Model Ensembling: The theory supports creating ensembles of specialized denoisers that perform optimally in distinct SNR regimes, likely increasing robustness across diverse datasets.

- Broader Applicability: Extending this approach to other types of generative models, including those applied to non-Gaussian distributions, remains an exciting possibility.
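The ensembling idea in the list above can be illustrated with a toy sketch. Because the density estimate depends on the denoising error integrated over SNR, and a suboptimal denoiser can only have a larger error than the MMSE when averaged over the data, one simple strategy is to keep, at each SNR, whichever denoiser has the lowest measured MSE. The selection rule, the SNR grid, and the MSE values below are all made up for illustration and are not results from the paper.

```python
def trapezoid(xs, ys):
    """Trapezoid-rule integral of the curve ys sampled on the grid xs."""
    return sum(0.5 * (ys[i] + ys[i + 1]) * (xs[i + 1] - xs[i]) for i in range(len(xs) - 1))

def ensemble_curve(mse_curves):
    """At each SNR grid point, keep the lowest MSE among the candidate denoisers."""
    return [min(values) for values in zip(*mse_curves)]

# Hypothetical SNR grid and made-up average MSE curves for two denoisers.
snr_grid = [0.01, 0.1, 1.0, 10.0, 100.0]
mse_low_snr_expert = [0.98, 0.85, 0.50, 0.12, 0.030]   # better at low SNR
mse_high_snr_expert = [0.99, 0.90, 0.48, 0.09, 0.010]  # better at high SNR

ensemble = ensemble_curve([mse_low_snr_expert, mse_high_snr_expert])

if __name__ == "__main__":
    for name, curve in [("low-SNR expert", mse_low_snr_expert),
                        ("high-SNR expert", mse_high_snr_expert),
                        ("ensemble", ensemble)]:
        print(f"{name:16s} integrated MSE = {trapezoid(snr_grid, curve):.4f}")
```

Since the ensemble curve is pointwise no larger than either input curve, its integral, and hence the resulting NLL bound, is no worse than that of any single model.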

Conclusion

The information-theoretic approach to diffusion modeling introduced in this paper offers a conceptually simple framework for understanding and improving generative models. It connects the I-MMSE relations from information theory to practical density estimation with denoising models, and it points to fine-tuning, ensembling, and noise-schedule design as directions for further work.