- The paper introduces DQMQ, a hybrid reinforcement learning framework that dynamically adjusts quantization bit-widths based on data quality.

- It employs a Precision Decision Agent and a Quantization Auxiliary Computer to integrate bit-width decision-making into end-to-end quantization training.

- Experiments on CIFAR-10 and SVHN report higher top-1 accuracy than methods such as HAWQ and AutoQ, supporting the framework's robustness under varying data qualities.

Data Quality-aware Mixed-precision Quantization via Hybrid Reinforcement Learning

Introduction

This paper presents a novel framework, DQMQ (Data Quality-aware Mixed-precision Quantization), which addresses the limitations of conventional fixed/mixed-precision quantization by incorporating data quality awareness into the quantization process using hybrid reinforcement learning. Traditional quantization approaches often predefine bit-width settings based on static assumptions, leading to suboptimal performance when data quality varies across training and inference stages. The proposed DQMQ framework dynamically adapts quantization bit-widths in response to input data qualities, thereby enhancing the model's robustness and efficiency.

Methodology

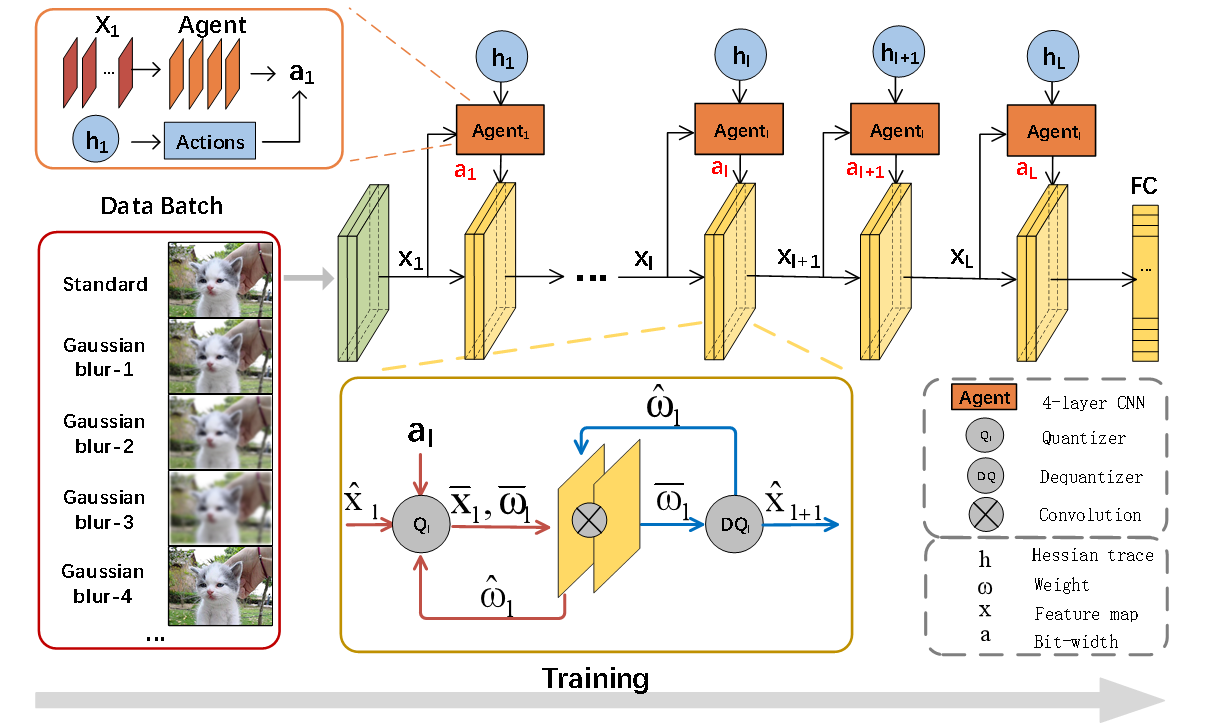

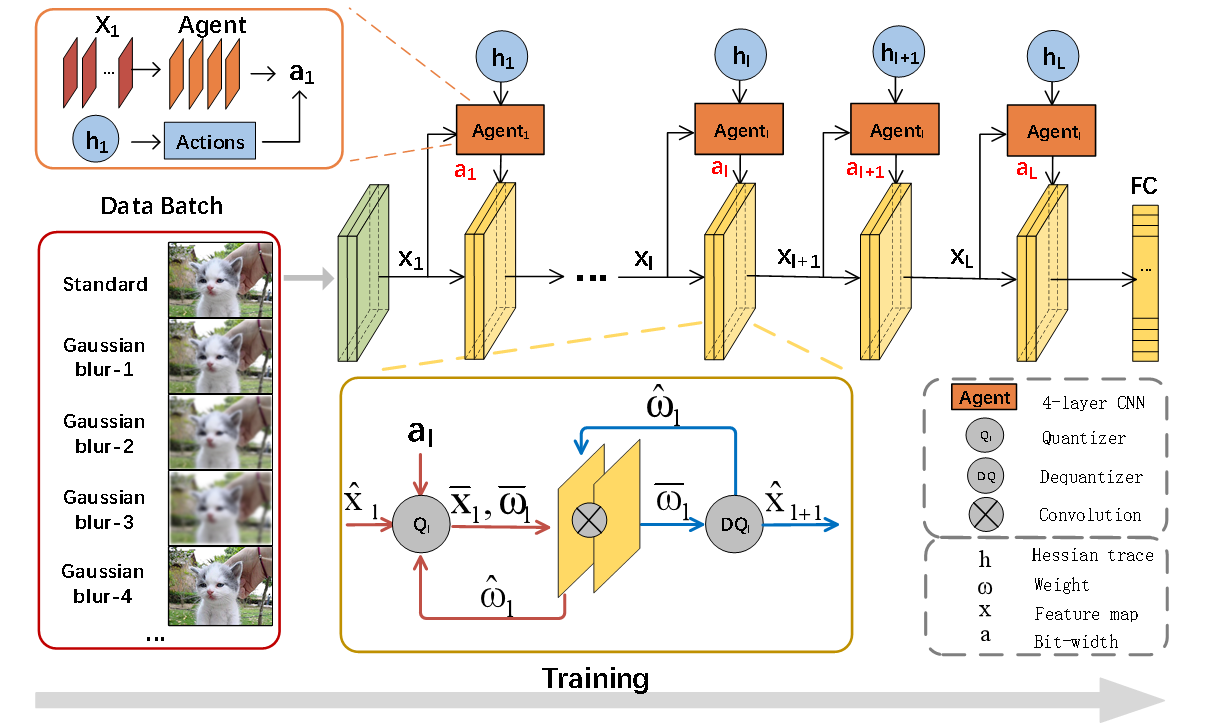

DQMQ is a hybrid reinforcement learning framework that combines model-based policy optimization with supervised quantization training. By sampling bit-widths from a continuous probability distribution, DQMQ keeps the bit-width decision differentiable, which allows end-to-end optimization. This distinguishes it from prior work that decouples bit-width decision-making from quantization training. The core components of DQMQ include:

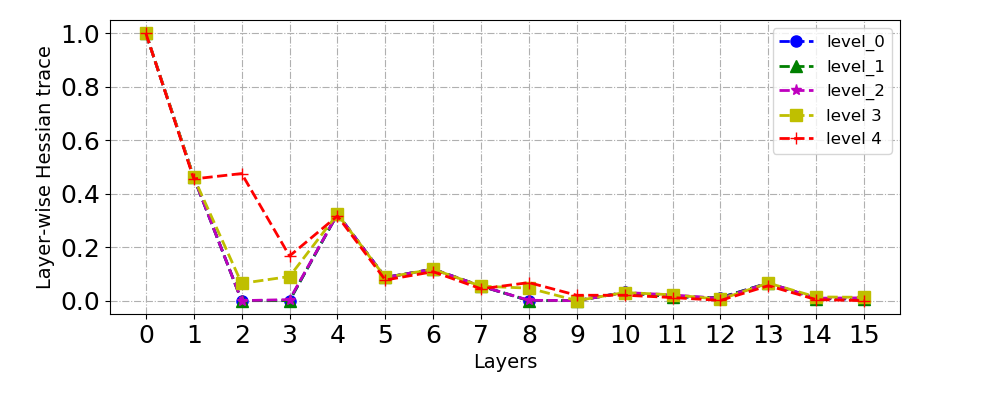

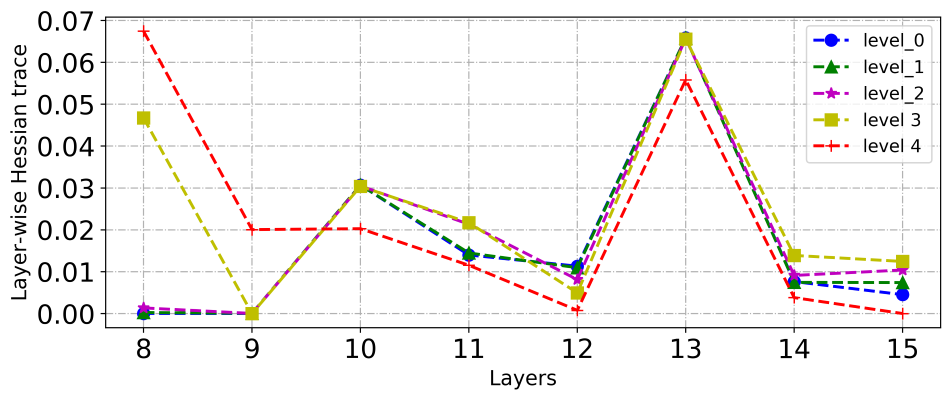

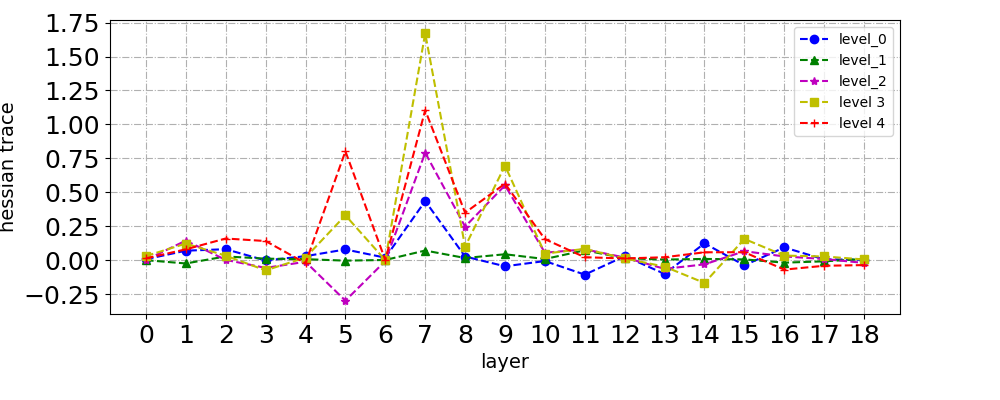

- Precision Decision Agent (PDA): A lightweight module that makes layer-wise bit-width decisions based on current data quality and quantization sensitivity derived from Hessian trace information.

- Quantization Auxiliary Computer (QAC): Ensures that quantization operations do not accumulate errors over multiple iterations and allows the model to maintain high accuracy across varying data qualities.

Figure 1: Overall architecture design of DQMQ.
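The idea of keeping the bit-width choice differentiable can be illustrated with a small sketch. It is not the paper's implementation: the candidate bit-widths `(2, 4, 8)`, the symmetric uniform quantizer, and the function names `uniform_quantize` and `soft_mixed_precision` are all assumptions made for illustration. The sketch mixes the outputs of several quantizers using softmax probabilities, so the result varies smoothly with the per-layer logits that an agent such as the PDA might produce.

```python
import math

def uniform_quantize(x, bits, x_max=1.0):
    """Symmetric uniform quantization of a scalar to `bits` bits on [-x_max, x_max]."""
    levels = 2 ** (bits - 1) - 1           # e.g. 127 for 8 bits, 1 for 2 bits
    x = max(-x_max, min(x_max, x))         # clip to the representable range
    return round(x / x_max * levels) / levels * x_max

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def soft_mixed_precision(x, logits, candidate_bits=(2, 4, 8)):
    """Probability-weighted mixture of quantizers (hypothetical helper).

    Returns the mixed value and the expected bit-width. Both are smooth
    functions of `logits`, which is what makes the bit-width choice trainable.
    """
    probs = softmax(logits)
    value = sum(p * uniform_quantize(x, b) for p, b in zip(probs, candidate_bits))
    expected_bits = sum(p * b for p, b in zip(probs, candidate_bits))
    return value, expected_bits

# Logits that strongly favor 8 bits push the expected bit-width toward 8.
value, expected_bits = soft_mixed_precision(0.3, [0.0, 0.0, 5.0])
```

In a real training loop the rounding step would also need a gradient with respect to `x`, typically via a straight-through estimator. That part is omitted here.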

Experimental Evaluation

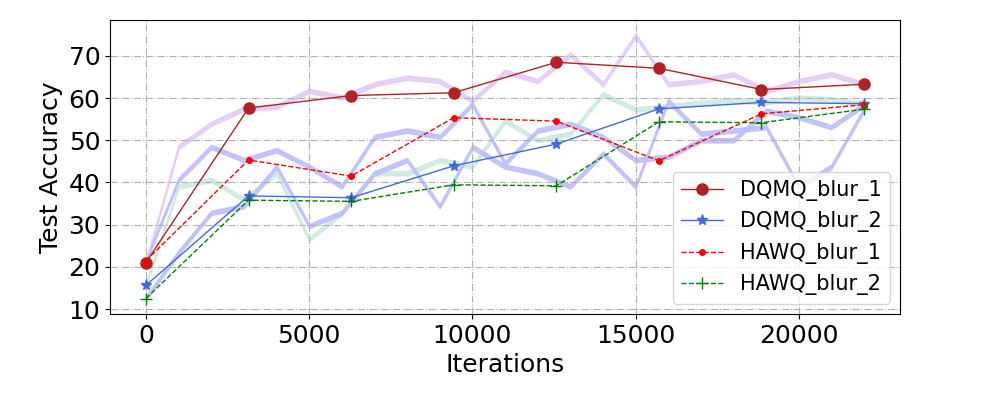

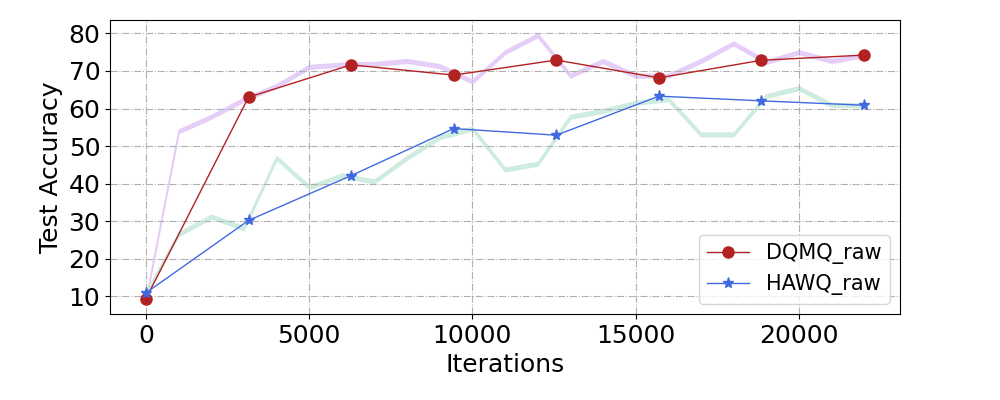

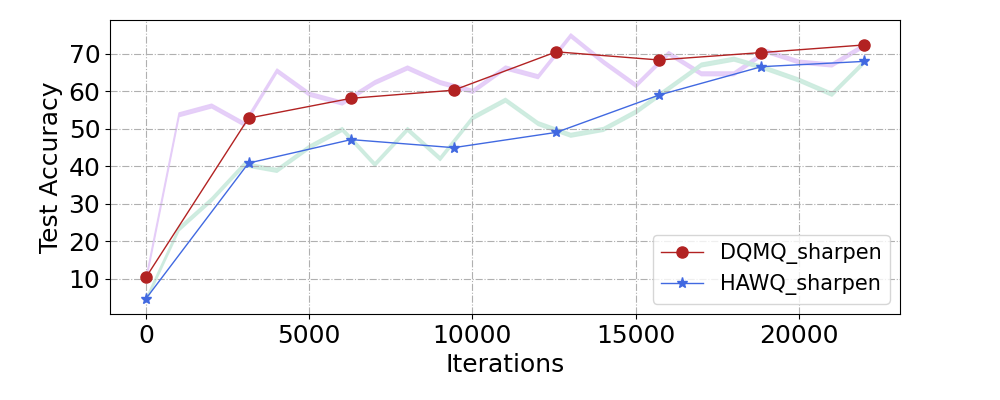

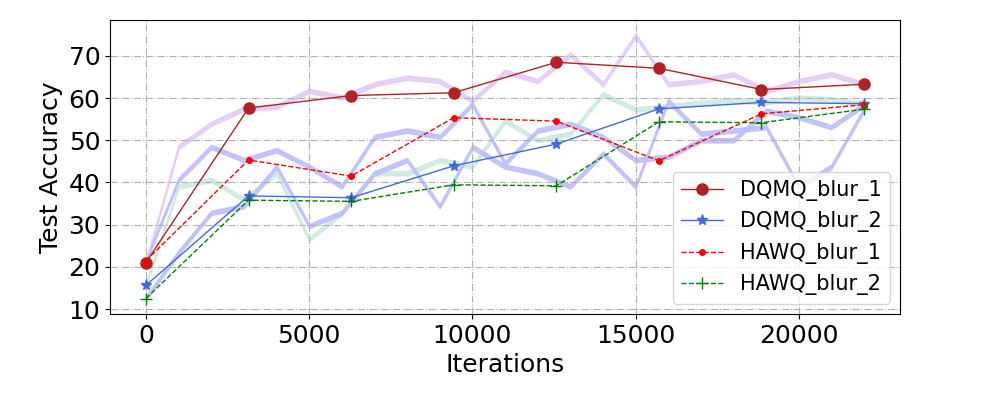

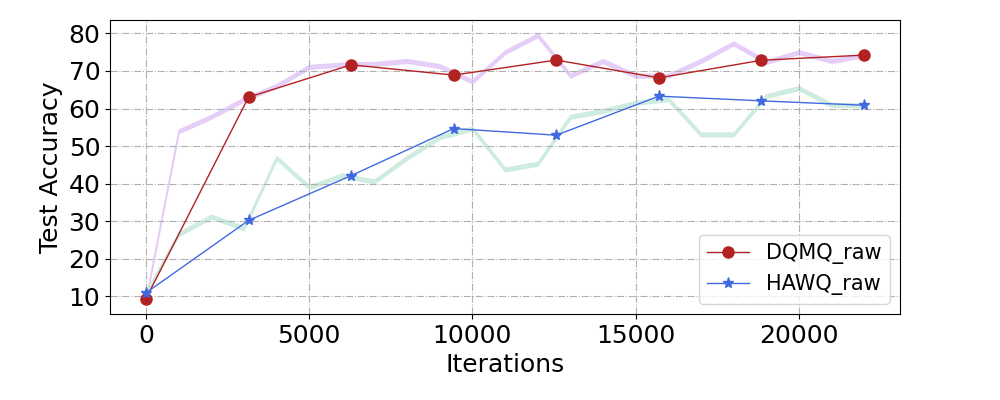

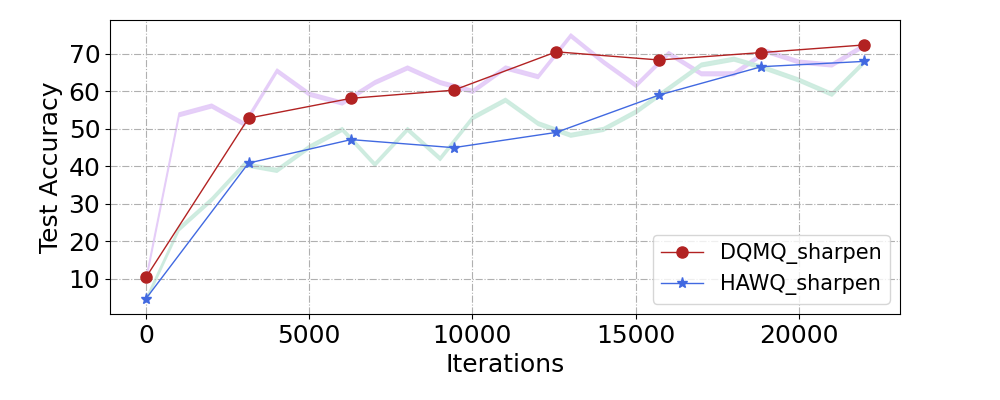

DQMQ was evaluated on multiple datasets, including CIFAR-10 and SVHN, at varying data quality levels. Across these quality levels it maintained higher top-1 accuracy than existing methods such as HAWQ and AutoQ.

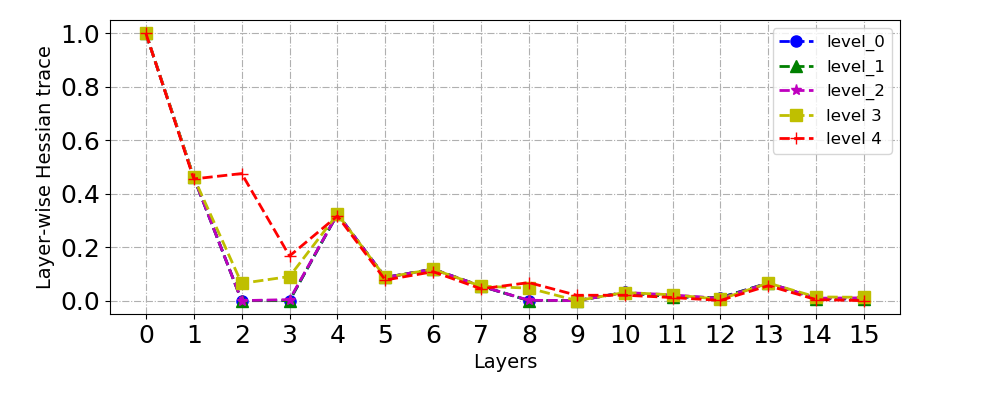

Figure 2: Comparison of top-1 accuracy (%) of DQMQ and HAWQ for ResNet-18 on CIFAR-10 with different image qualities.

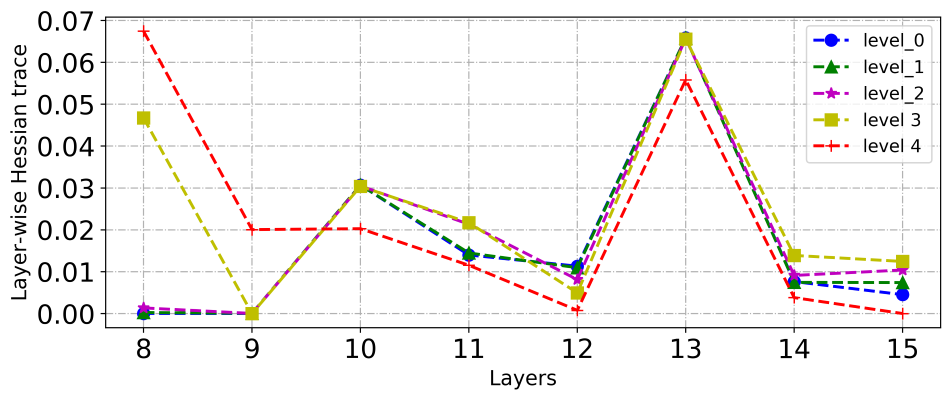

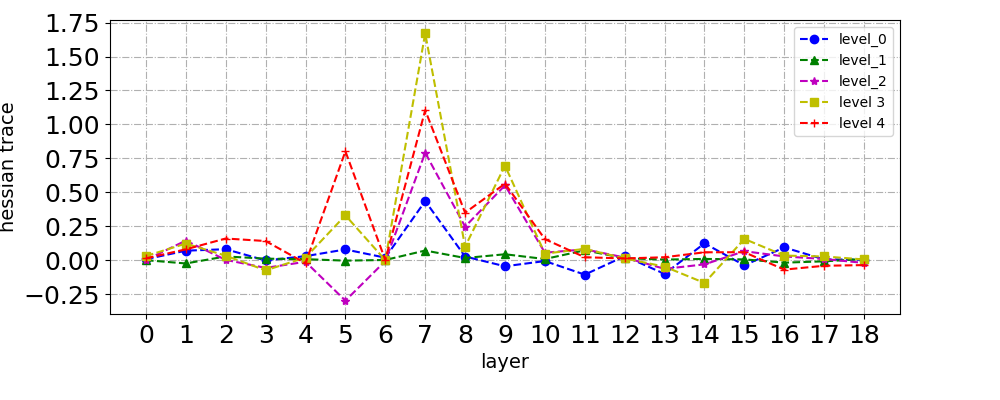

Figure 3: Comparison of the layer-wise quantization sensitivities of DQMQ on ResNet-18 under CIFAR-10 with five different data qualities.
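The methodology describes quantization sensitivity as derived from Hessian trace information. A standard way to estimate a Hessian trace without forming the full matrix is Hutchinson's estimator, which averages `v^T H v` over random ±1 vectors `v`. Whether DQMQ uses exactly this estimator is an assumption. The sketch below uses a small explicit matrix in place of a real layer's Hessian, and the names `hessian_vector_product` and `hutchinson_trace` are illustrative.

```python
import random

def hessian_vector_product(hessian, v):
    """Explicit H @ v for a small dense matrix (a stand-in for autodiff)."""
    return [sum(h_ij * v_j for h_ij, v_j in zip(row, v)) for row in hessian]

def hutchinson_trace(hvp, dim, num_samples=200, seed=0):
    """Estimate trace(H) as the mean of v^T (H v) over Rademacher vectors v.

    `hvp` is any callable mapping a vector to the Hessian-vector product.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(num_samples):
        v = [rng.choice((-1.0, 1.0)) for _ in range(dim)]
        hv = hvp(v)
        total += sum(a * b for a, b in zip(v, hv))
    return total / num_samples

# Toy symmetric "Hessian" whose true trace is 3.0 (illustrative values).
H = [[2.0, 0.5],
     [0.5, 1.0]]
estimate = hutchinson_trace(lambda v: hessian_vector_product(H, v), dim=2)
```

In practice the Hessian-vector product would come from automatic differentiation over a layer's parameters. A layer with a larger estimated trace is usually treated as more sensitive and assigned more bits.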

Analysis

The main advantage of DQMQ over traditional methods is its ability to dynamically adjust bit-widths considering real-world data quality variations. This adaptability is crucial for edge deployment scenarios where data quality can significantly fluctuate due to environmental factors. The PDA component effectively learns a policy that balances quantization precision and task performance, resulting in more efficient model compression without sacrificing accuracy.

DQMQ integrates the previously decoupled steps of bit-width decision-making and quantization training into a one-shot process, which improves training efficiency and accuracy and supports its claims of improved robustness and adaptability.
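The compression side of this trade-off is simple arithmetic: a layer with `n` weights stored at `b` bits occupies `n * b / 8` bytes. The sketch below computes the size of a mixed-precision model and its compression ratio against a 32-bit baseline. The layer sizes and bit-widths are made-up illustrative values, not results from the paper, and the function names are hypothetical.

```python
def model_size_bytes(param_counts, bit_widths):
    """Total weight storage in bytes for per-layer parameter counts and bit-widths."""
    if len(param_counts) != len(bit_widths):
        raise ValueError("need one bit-width per layer")
    total_bits = sum(n * b for n, b in zip(param_counts, bit_widths))
    return total_bits / 8

def compression_ratio(param_counts, bit_widths, baseline_bits=32):
    """Size of the full-precision baseline divided by the mixed-precision size."""
    baseline = model_size_bytes(param_counts, [baseline_bits] * len(param_counts))
    return baseline / model_size_bytes(param_counts, bit_widths)

# Hypothetical three-layer network with an assumed bit-width assignment.
params = [1_000, 2_000, 4_000]
bits = [8, 4, 2]
ratio = compression_ratio(params, bits)
```

Lowering the bit-width of the largest layers gives the biggest size reduction, which is why per-layer decisions matter more than a single global setting.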

Conclusion

DQMQ presents a significant advancement in mixed-precision quantization by introducing data quality awareness into the quantization process through hybrid reinforcement learning. The framework ensures that deep neural networks can operate efficiently on edge devices while maintaining robustness and adaptability to dynamic input data qualities. Future research could explore further optimization of the PDA and QAC components to handle a broader range of neural network architectures and data complexities. This work establishes a foundational approach for achieving data-aware quantization in real-world AI applications.