- The paper reports that a text-based AI assistant elicited more questions and was perceived as more efficient than a voice-based one.

- The methodology is a Wizard-of-Oz study with 20 participants; the questions that UX evaluators asked were grouped into five categories.

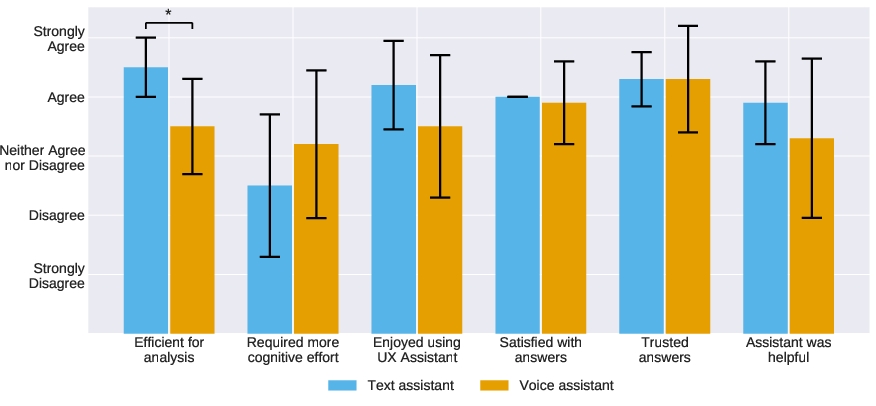

- Results indicate that both modalities earned similar trust, while the text interface was associated with lower cognitive effort and higher user satisfaction.

Collaboration with Conversational AI Assistants for UX Evaluation: Voice vs Text

This paper explores the interaction between UX evaluators and conversational AI assistants, comparing two input modalities: voice and text. It aims to identify the types of questions users are likely to ask and the implications of different modes of interaction on the efficiency and satisfaction of UX evaluators.

Interactive AI Assistants in UX Evaluation

Conversational AI assistants offer a more dynamic, interactive way for UX evaluators to analyze usability test recordings. Traditional interfaces often present data through static visualizations, which limits direct interaction. The paper posits that interactive assistants could enhance evaluators' autonomy and efficiency.

User Interfaces

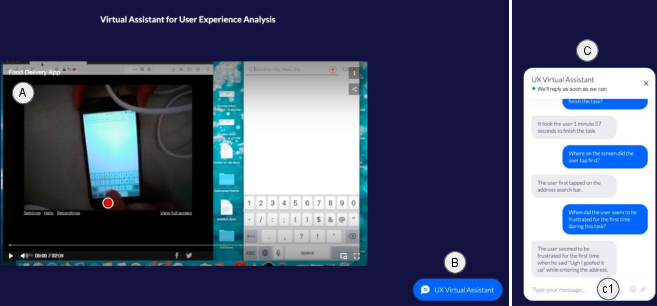

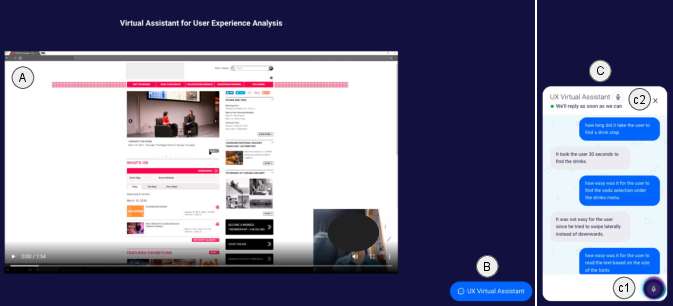

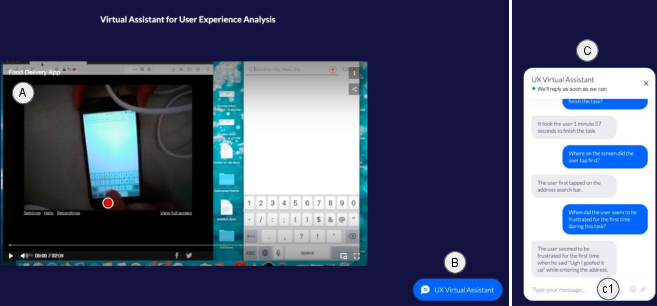

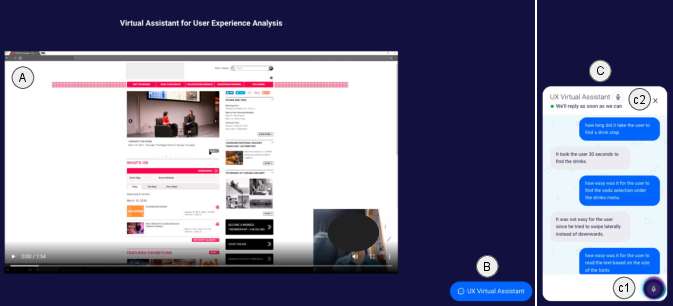

The study used simulated AI assistants in two modalities: text and voice. The text assistant lets users interact through a chatbox adjacent to the video player (Figure 1). The voice assistant provides a microphone icon that enables speech-to-text transcription and spoken responses (Figure 2).

Figure 1: User interface of the text assistant, which uses a chat thread for interaction between UX evaluators and the AI assistant.

Figure 2: User interface of the voice assistant, in which a microphone icon activates voice-based queries.

Methodology

A Wizard-of-Oz study design was employed with 20 participants, each tasked with evaluating usability tests using either the text or the voice interface. The simulated AI assistant was operated by a researcher who generated real-time responses to participant queries. The study's primary goals were to categorize the questions posed by users, compare usage between the two modalities, and collect qualitative feedback on session experiences.
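
To make the Wizard-of-Oz setup concrete, the following is a minimal sketch of how such a session could relay questions to a hidden human operator and log each exchange for later analysis. The class `WizardOfOzSession`, the `scripted_wizard` stand-in, and the sample question are hypothetical names chosen for illustration; the paper's actual tooling is not described in this summary.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class WizardOfOzSession:
    """Relays participant questions to a hidden human operator (the 'wizard')."""

    modality: str                 # "text" or "voice"
    wizard: Callable[[str], str]  # the researcher who composes each reply
    log: List[Tuple[str, str, str]] = field(default_factory=list)

    def ask(self, question: str) -> str:
        # The participant addresses the "AI"; the wizard actually answers.
        answer = self.wizard(question)
        # Keep every exchange so questions can be categorized afterwards.
        self.log.append((self.modality, question, answer))
        return answer


def scripted_wizard(question: str) -> str:
    # Hypothetical stand-in; in a live study the researcher would type or speak here.
    return f"(researcher reply to: {question})"


session = WizardOfOzSession(modality="text", wizard=scripted_wizard)
reply = session.ask("Where did the user hesitate?")
```

Keeping the log alongside the relay means the same records can feed both the question categorization and the modality comparison.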

Question Categories

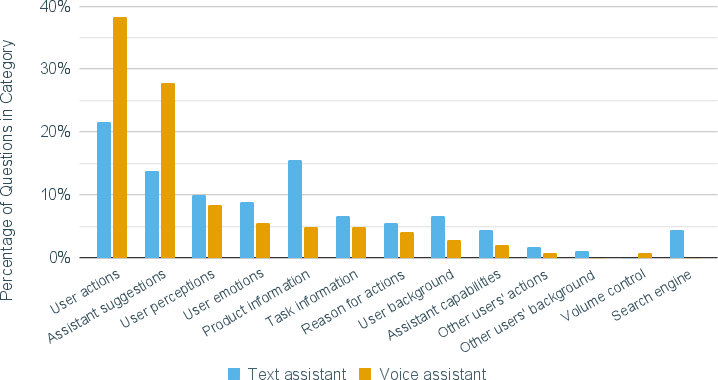

The study identified five primary categories of questions:

- User Actions: Queries about specific interactions or actions taken by users in the test (30.2% of all questions).

- User Mental Model: Questions reflecting on users' perceptions or emotional states (21.5%).

- Product & Task Information: Inquiries about the usability test context or product (16.6%).

- Help from AI Assistant: Assistance-related requests and suggestions (26.2%).

- User Demographics: Questions regarding the background of test users (5.5%).
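
As a rough illustration of how such shares can be derived, the sketch below tallies coded question labels into percentages and checks that the shares reported above add up to 100%. The function `category_shares` and the `example_labels` list are hypothetical and are not study data; only the values in `REPORTED_SHARES` come from the list above.

```python
from collections import Counter

# Shares reported in the list above (percent of all questions).
REPORTED_SHARES = {
    "User Actions": 30.2,
    "User Mental Model": 21.5,
    "Product & Task Information": 16.6,
    "Help from AI Assistant": 26.2,
    "User Demographics": 5.5,
}


def category_shares(labels):
    """Return each category's percentage share of the coded questions."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {cat: round(100 * n / total, 1) for cat, n in counts.items()}


# Hypothetical coded questions, for illustration only (not study data).
example_labels = (
    ["User Actions"] * 3
    + ["Help from AI Assistant"] * 2
    + ["User Mental Model"] * 2
    + ["Product & Task Information"] * 2
    + ["User Demographics"] * 1
)

shares = category_shares(example_labels)
reported_total = round(sum(REPORTED_SHARES.values()), 1)
```

The reported percentages sum to 100.0, which is consistent with each question being assigned to exactly one category.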

Comparison of Modalities

The study found notable differences between the two modalities in question volume and user satisfaction.

Results and Implications

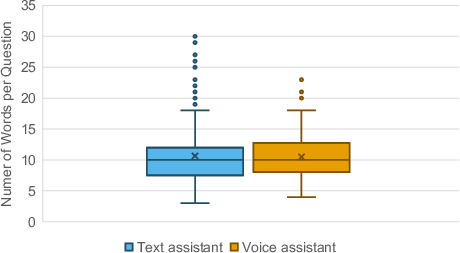

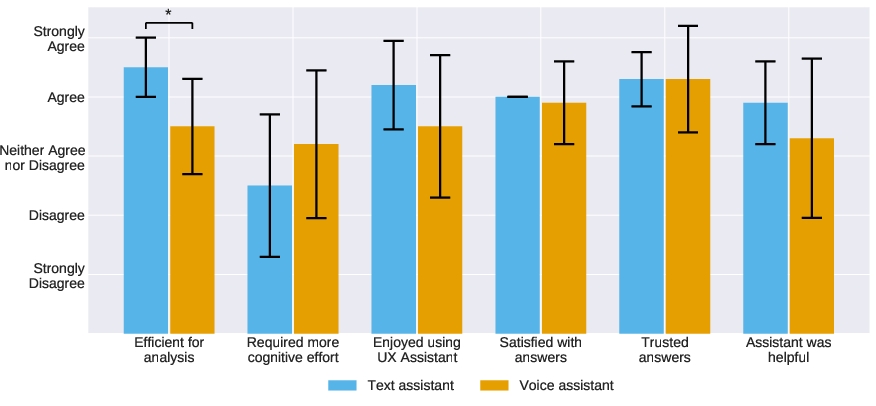

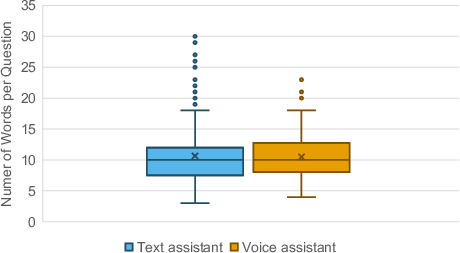

The text interface generally encouraged more interaction, with participants asking more questions on average. The length of questions, however, did not differ significantly between modalities (Figure 4). Participants found speaking convenient but noted that it could disrupt their progress and raised concerns about content accuracy.

Figure 4: Box plot of the number of words per question for the text and voice assistants.
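
The quantity plotted in Figure 4 can be sketched as follows: count the words in each question, then compute the five-number summary that a box plot draws. The helper names and the sample questions are hypothetical and are not study data; the quartile method (`inclusive`) is an assumption, since the summary does not say how the paper computed its quartiles.

```python
import statistics


def words_per_question(questions):
    """Count whitespace-separated words in each question."""
    return [len(q.split()) for q in questions]


def box_summary(values):
    """Five-number summary (min, Q1, median, Q3, max) shown by a box plot."""
    q1, median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return min(values), q1, median, q3, max(values)


# Hypothetical questions per modality, for illustration only (not study data).
questions_by_modality = {
    "text": [
        "What did the user click first?",
        "Why did she pause here?",
        "Summarize the checkout problems.",
        "How old is this participant?",
        "Which task is shown in this video segment?",
    ],
    "voice": [
        "What happened here?",
        "Did the user seem confused by the form?",
        "Show me the next problem.",
    ],
}

summaries = {
    modality: box_summary(words_per_question(questions))
    for modality, questions in questions_by_modality.items()
}
```

Comparing the two summaries side by side mirrors what the box plot conveys visually; a significance test would still be needed before claiming a difference.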

User Satisfaction

User satisfaction favored text assistants, attributed to perceived efficiency and lower cognitive effort. Both voice and text modalities, however, garnered a similar trust level for the answers provided, due in part to the AI assistant's provision of straightforward and factual responses.

Figure 5: Bar chart showing the survey responses for both the text and voice assistants, highlighting efficiency disparities.

Conclusion

The paper draws design implications for future conversational AI in UX evaluation, advocating support for both text and voice modalities, chosen according to context and user preference. The study underscores the potential of conversational assistants to enhance UX evaluations by balancing interactive, query-driven insights with efficient, focused feedback.

Overall, these findings contribute to a nuanced understanding of how conversational AI can be used in usability testing, potentially paving the way for more context-aware, sophisticated evaluation tools in the UX domain.