- The paper presents a thorough historical survey of AIGC, detailing its evolution from early probabilistic models to modern transformer architectures.

- It examines key methodologies and results, including reinforcement learning enhancements and the integration of multimodal generative models.

- Practical applications in chatbots, art, and code generation highlight the transformative impact and real-world relevance of AIGC.

A Comprehensive Survey of AI-Generated Content (AIGC): A History of Generative AI from GAN to ChatGPT

Introduction to AIGC

The paper "A Comprehensive Survey of AI-Generated Content (AIGC): A History of Generative AI from GAN to ChatGPT" (arXiv:2303.04226) investigates the evolution of AI-Generated Content (AIGC). AIGC refers to the use of AI models to produce digital content, which makes content creation more efficient and more broadly accessible. It spans modalities such as text, image, and audio, and is driven by advances in Generative AI (GAI) techniques. The paper traces the historical progression from early generative models, such as autoregressive (AR) models and variational autoencoders (VAEs), to contemporary state-of-the-art models, including DALL-E-2 and ChatGPT, whose capabilities have captured wide public interest.

Historical Context and Evolution of Generative AI

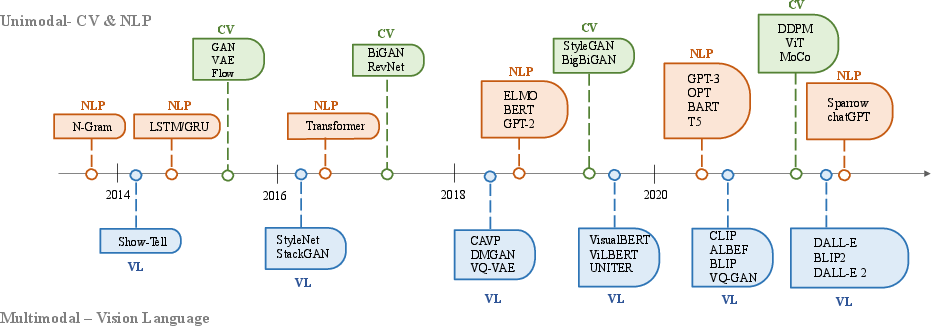

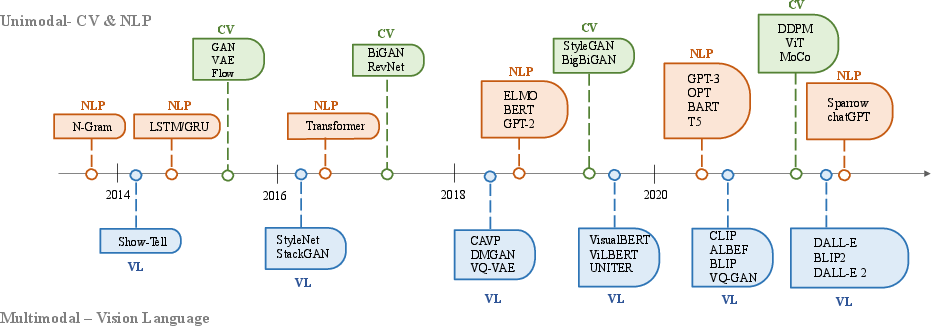

Generative AI has its roots in probabilistic models such as Hidden Markov Models (HMMs) and Gaussian Mixture Models (GMMs), which were designed to generate sequential data. The advent of deep learning brought substantial improvements, enabling models such as GANs for image generation and RNNs and LSTMs for language generation in NLP. These models made more complex data generation possible and contributed to advances in both unimodal and multimodal settings.

Figure 1: The history of Generative AI in CV, NLP, and VL.

A major turning point was the introduction of the Transformer architecture, which made models more efficient to train and easier to scale. It forms the backbone of many leading pre-trained models, such as GPT, BERT, and T5 in NLP, as well as ViT and Swin Transformer in vision tasks. Because the same architecture could be applied across modalities, it also enabled models like CLIP that bridge the vision and language domains.

Foundation Models and Techniques

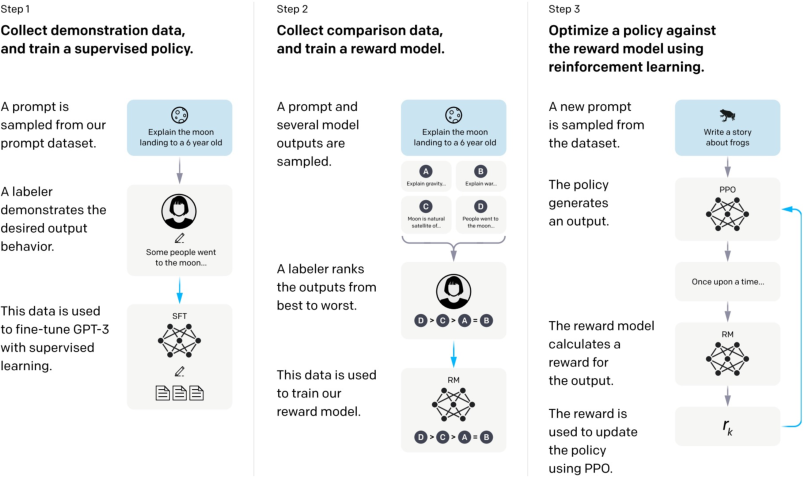

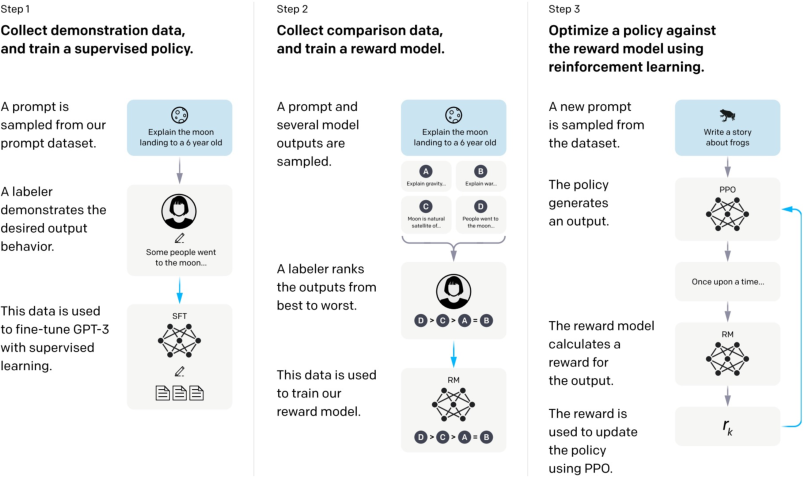

The survey explores the foundational techniques behind contemporary AIGC, focusing on large-scale transformer-based models. These models use the self-attention mechanism to process variable-length sequences efficiently, which benefits both language and image generation. Training techniques such as reinforcement learning from human feedback (RLHF) have been integrated into models like InstructGPT to better align their outputs with human preferences, improving the reliability and accuracy of generated content.
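As a rough illustration of how self-attention handles variable-length sequences, the sketch below implements single-head scaled dot-product attention in plain Python. The function names, the toy inputs, and the identity projection matrices are assumptions made for illustration; they are not taken from the survey, and real models use learned projections, multiple heads, and tensor libraries.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    """Swap rows and columns of a matrix."""
    return [list(col) for col in zip(*m)]


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x is seq_len x d_model; each projection is d_model x d_k.
    Returns (outputs, attention_weights).
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # seq_len x seq_len
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Hypothetical toy inputs: identity projections, two sequence lengths.
identity = [[1.0, 0.0], [0.0, 1.0]]
short_seq = [[1.0, 0.0], [0.0, 1.0]]
long_seq = short_seq + [[1.0, 1.0]]

out_short, w_short = self_attention(short_seq, identity, identity, identity)
out_long, w_long = self_attention(long_seq, identity, identity, identity)
```

The same function accepts both the two-token and the three-token input without any change, because the attention weights are computed per pair of positions rather than against a fixed-size window.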

Figure 2: The architecture of InstructGPT (Ouyang et al., 2022).
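One step in RLHF-style pipelines is training a reward model on human preference comparisons. The sketch below shows a commonly used pairwise ranking loss, the negative log-sigmoid of the reward difference between the preferred and the rejected response. The summary above does not spell out the loss, so treat this as an assumed formulation; the function name and the scalar rewards are hypothetical.

```python
import math


def pairwise_preference_loss(reward_chosen, reward_rejected):
    """Return -log(sigmoid(reward_chosen - reward_rejected)).

    The loss is small when the reward model scores the human-preferred
    response higher than the rejected one, and large otherwise.
    """
    diff = reward_chosen - reward_rejected
    # -log(sigmoid(d)) == log(1 + exp(-d)); branch to avoid overflow.
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


# Hypothetical scalar rewards for two responses to the same prompt.
loss_agrees = pairwise_preference_loss(2.0, 0.0)     # model agrees with the label
loss_disagrees = pairwise_preference_loss(0.0, 2.0)  # model disagrees
loss_tie = pairwise_preference_loss(1.0, 1.0)        # model is indifferent
```

In a full pipeline the rewards would come from a learned model, and the trained reward model would then guide a reinforcement learning step on the language model; both parts are omitted here.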

Moreover, advances in hardware and distributed training have significantly eased computational constraints, making it feasible to train larger models on larger datasets. Cloud computing services and training frameworks further simplify large-scale model training and deployment.

Recent Advances in AIGC

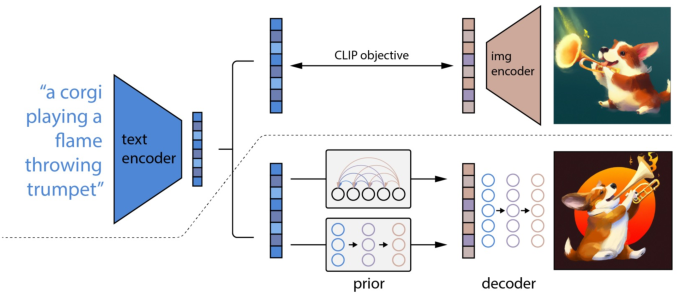

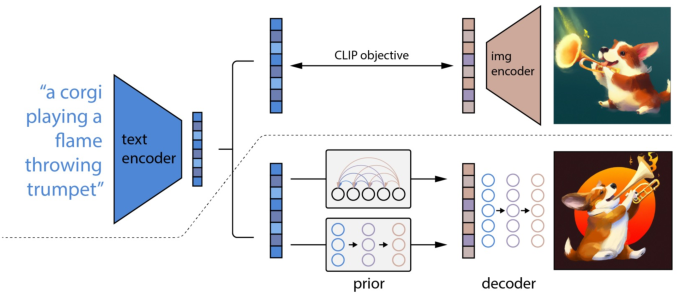

The paper divides recent advances in AIGC into unimodal and multimodal generative models and details each approach. Unimodal models such as GPT-3 and StyleGAN exemplify success in single-modality settings (text and image, respectively). Multimodal models like DALL-E-2 and CLIP, by contrast, support cross-modal interaction, allowing for richer and more context-aware content generation.

Figure 3: The model structure of DALL-E-2.

Key innovations in multimodal models have facilitated the synthesis of diverse content types, employing encoder-decoder architectures that align different modalities through sophisticated pre-training tasks and strategies. The emergence of models capable of generating both text and images or music and audio reflects the breadth and potential of multimodal AIGC applications.
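One pre-training task used to align modalities is contrastive image-text matching in the style of CLIP. The sketch below is a minimal version under stated assumptions: the embeddings are hand-written toy vectors rather than encoder outputs, the temperature value of 0.07 is a fixed illustrative choice, and all function names are hypothetical.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def log_softmax(row):
    """Numerically stable log-softmax over a list of floats."""
    peak = max(row)
    lse = peak + math.log(sum(math.exp(v - peak) for v in row))
    return [v - lse for v in row]


def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric cross-entropy over an image-text similarity matrix.

    Row i of image_embs and row i of text_embs are assumed to be a
    matching pair; every other combination is treated as a negative.
    """
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in txts]
        for img in imgs
    ]
    n = len(logits)
    # Image-to-text direction: each row should peak on its diagonal entry.
    loss_i2t = -sum(log_softmax(logits[k])[k] for k in range(n)) / n
    # Text-to-image direction: same check on the columns.
    columns = [list(col) for col in zip(*logits)]
    loss_t2i = -sum(log_softmax(columns[k])[k] for k in range(n)) / n
    return (loss_i2t + loss_t2i) / 2


# Hypothetical toy embeddings: matched pairs versus swapped pairs.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned = contrastive_loss(images, [[1.0, 0.0], [0.0, 1.0]])
shuffled = contrastive_loss(images, [[0.0, 1.0], [1.0, 0.0]])
```

The loss is lower when matching pairs point in the same direction, which is the signal that pulls the two encoders toward a shared embedding space during pre-training.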

Applications

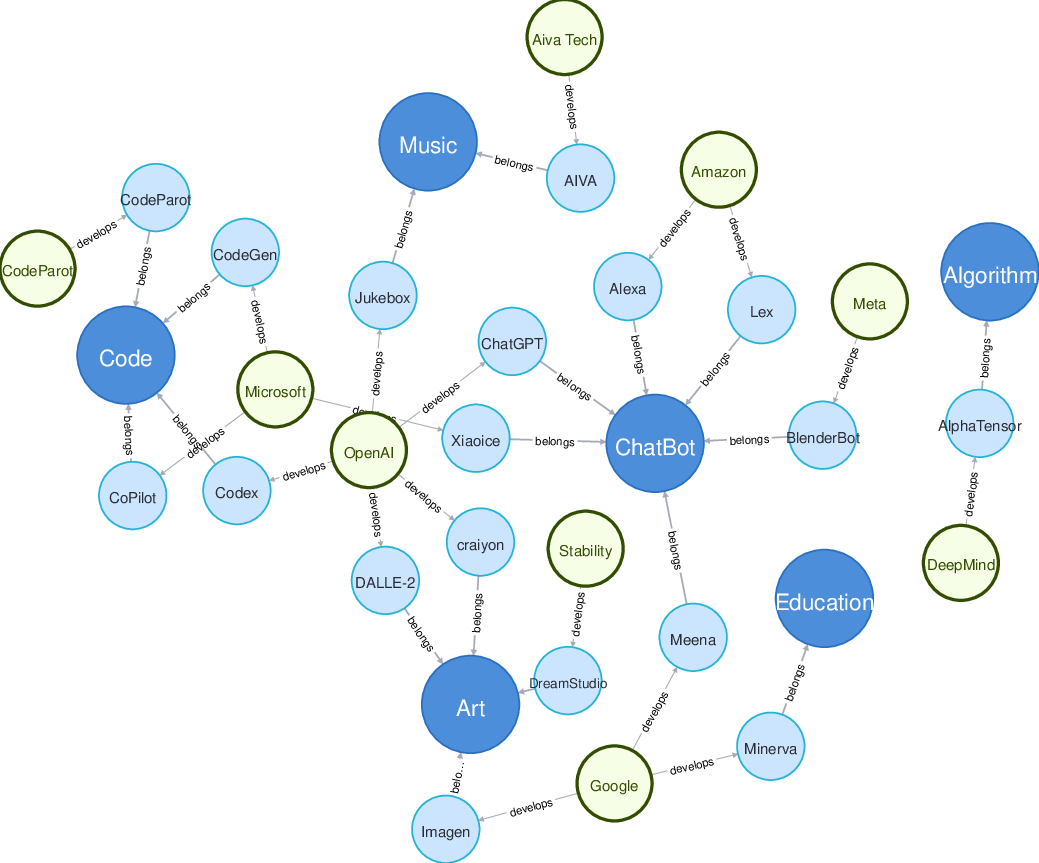

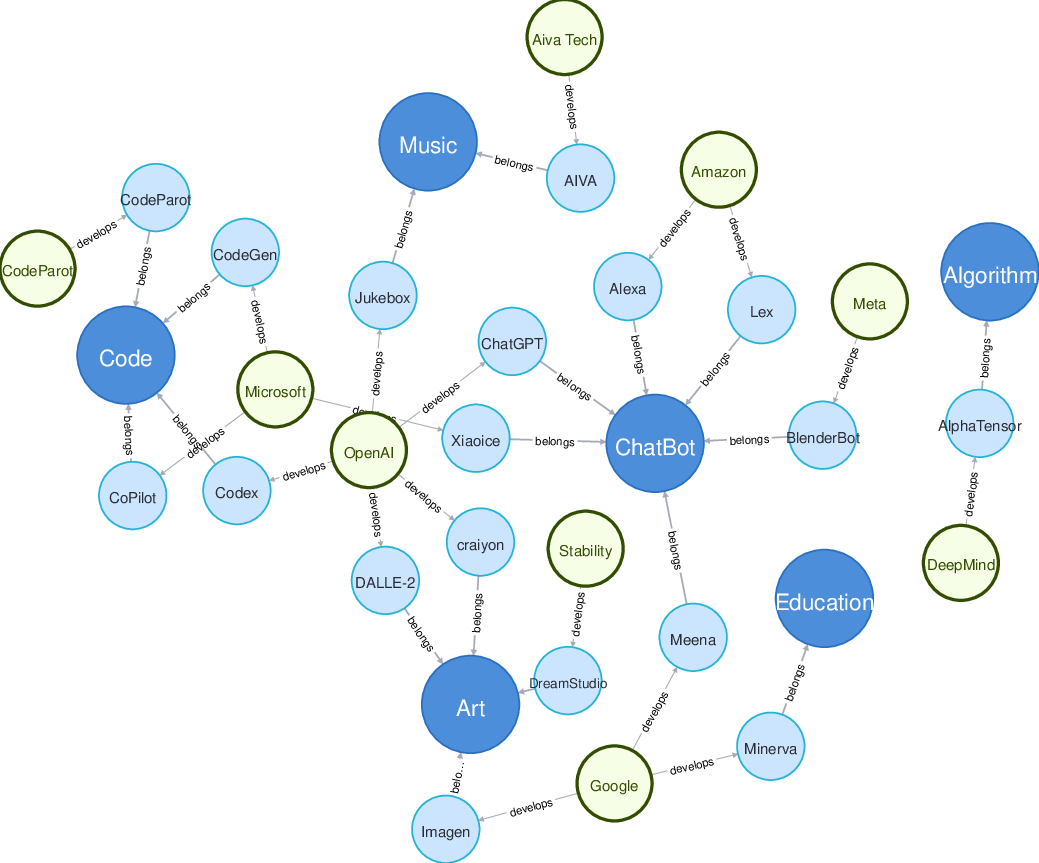

The survey highlights applications where AIGC has been transformative, from chatbot systems like ChatGPT and Xiaoice to art generation with tools like DALL-E-2 and DreamStudio. Text-to-code models such as Codex have made programming more efficient, while music models like Jukebox have changed how music can be created. These applications underscore the wide-ranging implications and societal impact of AIGC technologies.

Figure 4: A relation graph of current research areas, applications, and related companies.

Conclusion

The paper gives a comprehensive overview of AIGC technologies, covering their evolution, foundational models, recent advances, applications, challenges, and future directions. It serves as both a primer and a roadmap for further research in this rapidly developing area. As AIGC integrates more complex modalities and takes on higher-stakes applications, its potential for societal transformation is profound, and it must be handled carefully to ensure responsible use and alignment with ethical standards.