- The paper introduces an AR interface that enables remote control of dual-arm robotic manipulators in hazardous hot box environments.

- The paper details a system architecture integrating HoloLens 2, ROS, and Kinova Gen3 arms for intuitive gesture-based manipulation.

- The interface has been demonstrated on physical dual-arm manipulators, with a live video stream and 3D point cloud visualization providing real-time feedback to support precise teleoperation.

Augmented Reality Interface for Remote Hot Box Manipulation

This paper introduces an augmented reality (AR) interface designed to enable remote operation of dual-arm manipulators within hot box environments, addressing the challenges of conducting experiments in hazardous or isolated settings. The system leverages a Microsoft HoloLens 2 headset to provide users with an intuitive and natural control interface, enhancing situational awareness through real-time visualization and interactive elements.

System Architecture and Components

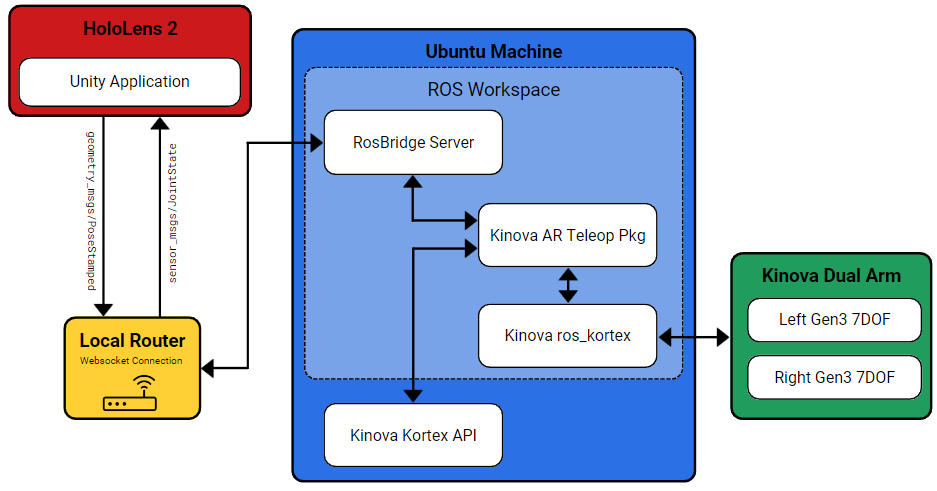

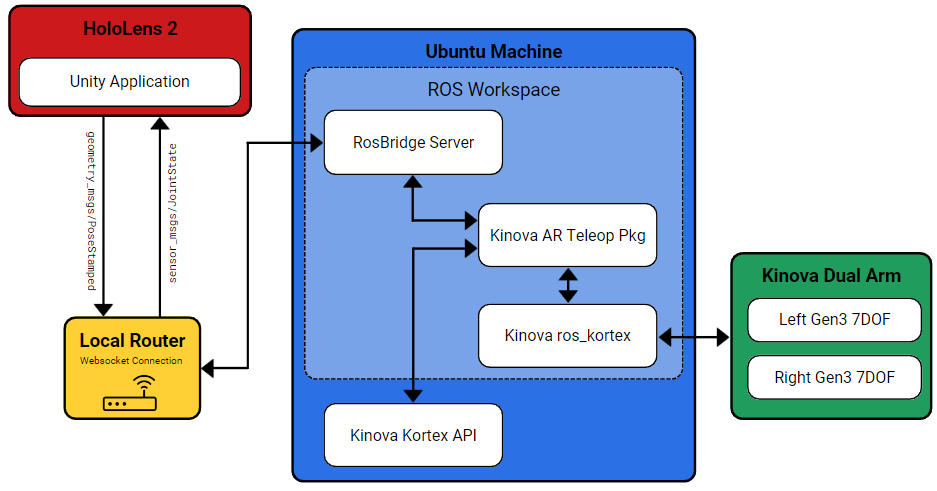

The system integrates a HoloLens 2, ROS packages, and Kinova Gen3 robotic arms to facilitate remote operation through natural controls. The Unity game engine, together with a set of ROS packages, establishes a communication pipeline between the headset and the robotic arms. Users move the robotic arms by pinching the virtual arm grippers with their thumb and index finger in the AR environment. The hand poses tracked by the HoloLens 2 are converted into serialized messages and transmitted to an Ubuntu machine that acts as the control station server. This server deserializes the messages and broadcasts them to the scripts that interpret them and convert them into twist commands for the robotic arms. Positional updates from the robotic arms are relayed back to the HoloLens 2, updating the virtual arm representations in real time.
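The summary does not say how a tracked hand pose is turned into a twist command. One common approach is proportional control on the pose error, sketched below as an illustration rather than as the authors' method. The function name `pose_error_to_twist`, the gains, and the velocity limits are all hypothetical, and quaternions are assumed to be unit length in `(x, y, z, w)` order.

```python
import math


def _quat_multiply(q1, q2):
    """Hamilton product of two quaternions given as (x, y, z, w)."""
    x1, y1, z1, w1 = q1
    x2, y2, z2, w2 = q2
    return (
        w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
        w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
        w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2,
        w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
    )


def _clamp_norm(vec, limit):
    """Scale vec down so its Euclidean norm does not exceed limit."""
    norm = math.sqrt(sum(c * c for c in vec))
    if norm > limit and norm > 0.0:
        return tuple(c * limit / norm for c in vec)
    return tuple(float(c) for c in vec)


def pose_error_to_twist(current_pos, current_quat, target_pos, target_quat,
                        k_lin=1.0, k_ang=1.0, max_lin=0.1, max_ang=0.5):
    """Return (linear, angular) velocities that drive current toward target.

    Gains and limits are illustrative assumptions, not values from the paper.
    """
    # Linear part: proportional to the position error, clamped for safety.
    linear = _clamp_norm(
        tuple(k_lin * (t - c) for t, c in zip(target_pos, current_pos)),
        max_lin,
    )

    # Angular part: rotation taking the current orientation to the target,
    # expressed in the fixed frame: q_err = q_target * conj(q_current).
    cx, cy, cz, cw = current_quat
    ex, ey, ez, ew = _quat_multiply(target_quat, (-cx, -cy, -cz, cw))
    if ew < 0.0:  # pick the shorter of the two equivalent rotations
        ex, ey, ez, ew = -ex, -ey, -ez, -ew
    v_norm = math.sqrt(ex * ex + ey * ey + ez * ez)
    if v_norm < 1e-9:
        angular = (0.0, 0.0, 0.0)
    else:
        angle = 2.0 * math.atan2(v_norm, ew)
        angular = _clamp_norm(
            tuple(k_ang * angle * c / v_norm for c in (ex, ey, ez)),
            max_ang,
        )
    return linear, angular
```

Clamping the velocity norms is a design choice for this sketch: it keeps a sudden jump in the tracked hand pose from producing a large commanded velocity.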

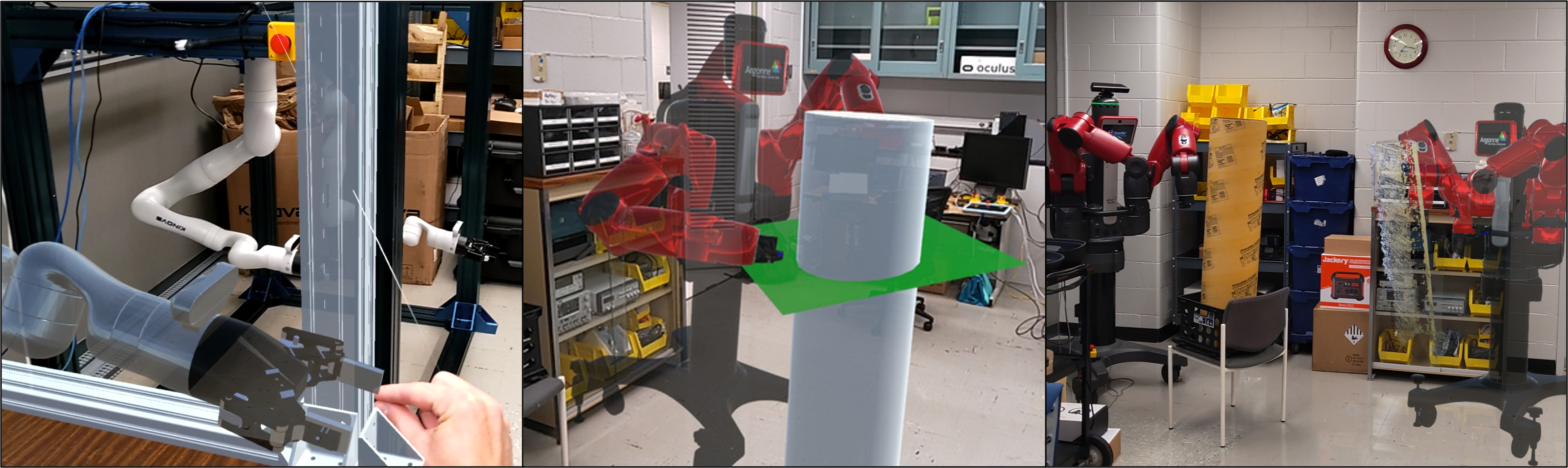

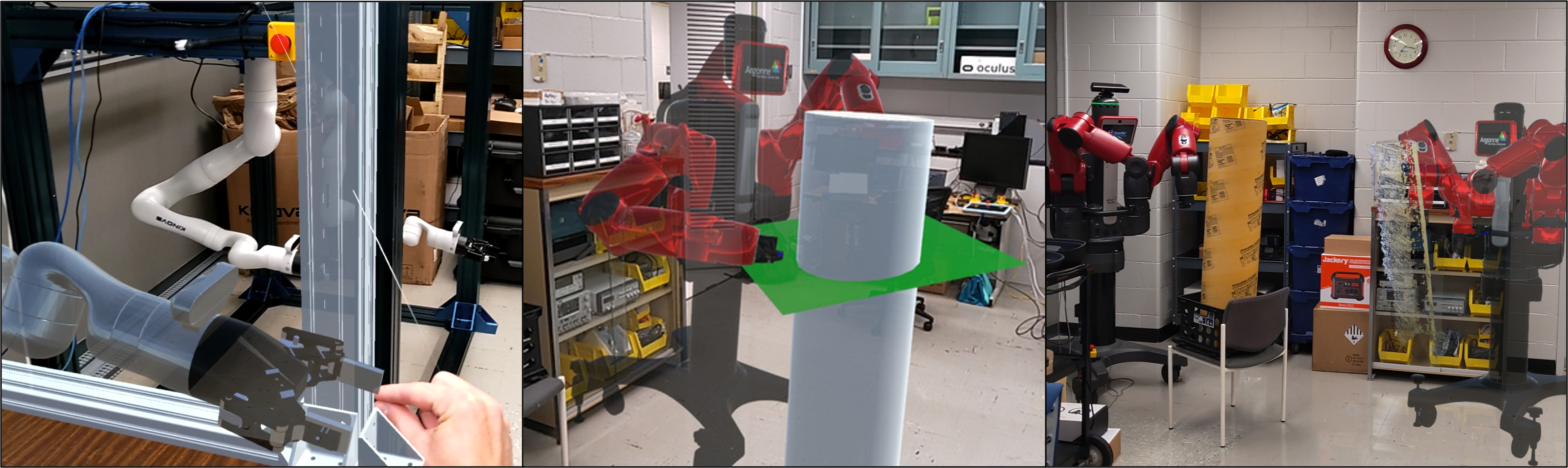

Figure 1: [Left] New hot box design with two robotic arms mounted to the top manipulating chemistry tools. [Right] First-person view of a HoloLens 2 user seeing both a virtual replica of the hot box atop the table and the real physical hot box on the right.

Software Implementation

The AR application is built within the Unity game engine, which provides HoloLens 2 users with virtual representations of the hot box and processes hand tracking data. The Unity application publishes hand positions and subscribes to the robotic arms' joint positions via ROS topics on a RosBridge server. Standard PoseStamped and JointState ROS messages are used for hand and robot joint positions, respectively. The messages are serialized into JSON before being sent over the wireless network, so that data can be transferred quickly across the local router. RosBridge, a ROS package running on a Linux server, receives and deserializes these messages and broadcasts them locally to ROS nodes on the same machine. The Kinova AR Teleop Package, a custom ROS package, converts the PoseStamped hand positions into linear and angular velocities (Twist messages) that the robots can execute. These Twist messages are then published to the Kinova ROS Kortex package, which sends the move commands to the arms using its internal inverse kinematics solver. The ROS Kortex package continuously publishes JointState information back to the Kinova AR Teleop Package, which packages, serializes, and sends the data back to the HoloLens 2 to update the virtual arm positions.
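To make the JSON exchange concrete, the sketch below builds messages in the shape used by the rosbridge protocol: a `publish` operation carrying a PoseStamped, a `subscribe` request for JointState, and a parser for an incoming JointState. It is an illustration only. The topic names and frame ID are hypothetical, and the `secs`/`nsecs` stamp fields assume ROS 1 message conventions, which the summary does not confirm.

```python
import json


def pose_stamped_publish(topic, frame_id, position, orientation, secs=0, nsecs=0):
    """Build a rosbridge 'publish' message carrying a geometry_msgs/PoseStamped."""
    x, y, z = position
    qx, qy, qz, qw = orientation
    msg = {
        "header": {
            "frame_id": frame_id,
            # ROS 1 style time fields (an assumption about the setup).
            "stamp": {"secs": secs, "nsecs": nsecs},
        },
        "pose": {
            "position": {"x": x, "y": y, "z": z},
            "orientation": {"x": qx, "y": qy, "z": qz, "w": qw},
        },
    }
    return json.dumps({"op": "publish", "topic": topic, "msg": msg})


def joint_state_subscribe(topic):
    """Build a rosbridge 'subscribe' request for sensor_msgs/JointState."""
    return json.dumps(
        {"op": "subscribe", "topic": topic, "type": "sensor_msgs/JointState"}
    )


def parse_joint_state(raw):
    """Map joint names to positions from an incoming rosbridge message.

    Returns None if the message is not a 'publish' operation.
    """
    data = json.loads(raw)
    if data.get("op") != "publish":
        return None
    msg = data["msg"]
    return dict(zip(msg["name"], msg["position"]))


# Hypothetical topic and frame names, for illustration only.
outgoing = pose_stamped_publish(
    "/left_hand/pose", "hot_box_base", (0.1, 0.2, 0.3), (0.0, 0.0, 0.0, 1.0)
)
incoming = json.dumps({
    "op": "publish",
    "topic": "/joint_states",
    "msg": {"name": ["joint_1", "joint_2"], "position": [0.5, -1.2]},
})
joints = parse_joint_state(incoming)
```

In a real deployment these strings would travel over the WebSocket connection that the RosBridge server exposes. The sketch leaves out the transport so that it stays self-contained.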

Figure 2: Images captured from the perspective of a HoloLens 2 user. [Left] User pinching the virtual end effector commanding the physical arm to move. [Middle] Robotic arm interacting with a virtual plane in AR used to assist in teleoperation. [Right] Virtual point cloud visualization of the physical tube.

Practical Applications and Testing

The AR application has been successfully used to control both Kinova dual-arm and Rethink Robotics Baxter dual-arm manipulators. Users can manipulate the robots remotely using pinch and grab gestures. The interface includes togglable features such as a live video stream, virtual fixtures, and point cloud visualizations. The virtual robots can also be scaled within the scene to facilitate precise manipulation or to fit confined spaces.
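The summary does not describe how scaling the virtual robot affects the commands sent to the physical arm. One plausible mapping, given here purely as an assumption, divides a hand position measured relative to the virtual robot's origin by the scale factor, so that an enlarged virtual robot turns large hand motions into small physical ones. The function name `virtual_to_physical` and its parameters are hypothetical.

```python
def virtual_to_physical(p_virtual, origin_virtual, scale):
    """Map a point in the scaled virtual scene to robot-frame coordinates.

    Assumes a uniform scale about origin_virtual and no rotation between
    the virtual scene and the robot frame (both are assumptions).
    """
    if scale <= 0:
        raise ValueError("scale must be positive")
    return tuple((p - o) / scale for p, o in zip(p_virtual, origin_virtual))


# With the virtual robot shown at 2x size, a 0.5 m hand offset from the
# virtual origin corresponds to a 0.25 m offset for the physical arm.
target = virtual_to_physical((1.0, 0.5, 0.0), (0.5, 0.5, 0.0), 2.0)
```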

Figure 3: System overview of how the HoloLens 2 integrates with the robotic manipulators inside of the hot box.

Conclusion and Future Directions

The AR application enables scientists to remotely operate robotic manipulators within a hot box environment using hand gestures. The system incorporates a live video stream, 3D point cloud visualization, and virtual fixtures to enhance the user experience. Future research will focus on optimizing the hand gestures used to control the robotic arms' position and orientation. The HoloLens 2 also captures extensive sensor data, offering opportunities to explore voice, eye gaze, and head position as further ways to improve the remote operation experience.