- The paper introduces a CNN-Transformer hybrid architecture (CTTS) that forecasts intraday stock price movements more accurately than the baselines it is compared against.

- The approach leverages CNNs for local feature extraction and Transformers for modeling long-term dependencies, efficiently capturing micro and macro trends.

- Experimental results on S&P 500 data demonstrate enhanced sign prediction accuracy, especially with high-confidence thresholding.

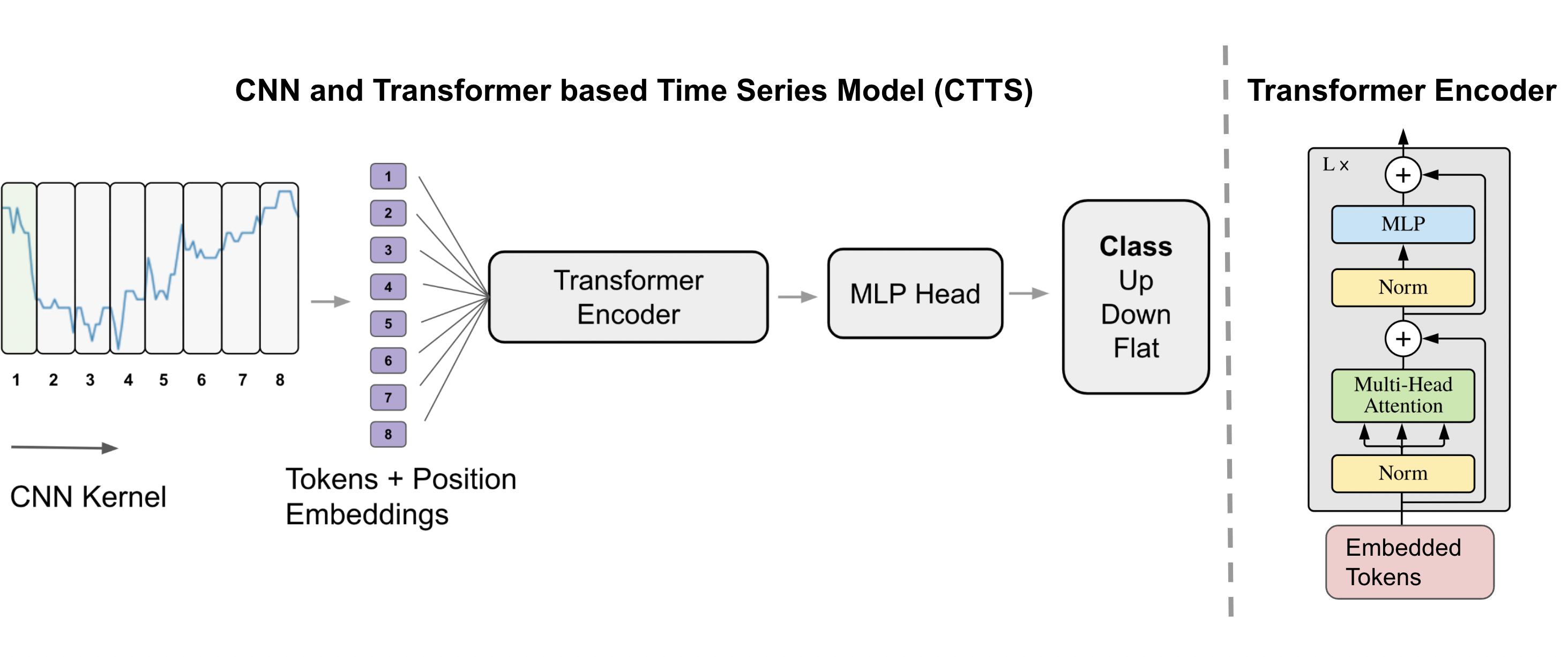

The paper "Financial Time Series Forecasting using CNN and Transformer" presents a methodology that combines Convolutional Neural Networks (CNNs) and Transformers to predict the direction of financial time series changes, specifically forecasting intraday stock price movements for S&P 500 constituents. This approach models both short-term and long-term dependencies within time series, integrating CNNs for local pattern recognition and Transformers for global context modeling.

Introduction

Time series forecasting in the financial industry is a complex task due to the intricate linear and non-linear interactions present in the data. Traditional statistical models such as ARIMA and various smoothing techniques have been used extensively for these tasks but often fall short in capturing complex dependencies over different scales. With the advancement of deep learning, methods like RNNs and LSTMs have offered improvements but still face challenges with long-term dependencies and training time inefficiencies.

The authors address these limitations by leveraging CNNs for capturing local temporal dependencies and Transformers for global patterns. Such integration allows for the modeling of both micro and macro trends in stock data, aiming to classify stock price movements into three categories: up, down, or flat.
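The three-way classification target can be illustrated with a small labeling function. This is a minimal sketch, not the authors' procedure: the function name `label_movement`, the use of relative change, and the tolerance `flat_tol` are assumptions made here for illustration, since this summary does not state how "flat" is defined.

```python
def label_movement(prev_price: float, next_price: float, flat_tol: float = 0.0005) -> str:
    """Classify the relative change between two prices as 'up', 'down', or 'flat'.

    flat_tol is a hypothetical tolerance: changes whose magnitude does not
    exceed it are treated as 'flat'.
    """
    if prev_price <= 0:
        raise ValueError("prev_price must be positive")
    change = (next_price - prev_price) / prev_price
    if change > flat_tol:
        return "up"
    if change < -flat_tol:
        return "down"
    return "flat"


# Made-up intraday prices, labeled pairwise.
prices = [100.0, 100.2, 100.21, 99.9]
labels = [label_movement(a, b) for a, b in zip(prices, prices[1:])]
print(labels)  # ['up', 'flat', 'down']
```

Dropping the "flat" class (or setting `flat_tol` to zero and discarding unchanged prices) gives the 2-class variant of the same target.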

Methodology

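The proposed model, CNN and Transformer based time series modeling (CTTS), processes financial time series data through a hybrid architecture. Convolutional layers first extract local patterns from the price series, a Transformer then models dependencies across the whole sequence, and the model outputs a probability for each movement class.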
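The data flow of such a hybrid can be sketched in plain Python. This is an illustrative toy under stated assumptions, not the CTTS implementation: the weights are random rather than trained, attention uses a single head with identity projections, positional information is omitted, and all names (`conv1d_tokens`, `self_attention`, `classify`) and sizes are hypothetical.

```python
import math
import random


def conv1d_tokens(series, kernels, stride=1):
    """Slide every kernel over the series. Each window becomes one token
    with one ReLU-activated feature per kernel (local pattern extraction)."""
    width = len(kernels[0])
    tokens = []
    for start in range(0, len(series) - width + 1, stride):
        window = series[start:start + width]
        tokens.append([max(0.0, sum(w * x for w, x in zip(k, window))) for k in kernels])
    return tokens


def softmax(values):
    peak = max(values)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product attention with identity projections:
    each token is replaced by a weighted mix of all tokens (global context)."""
    dim = len(tokens[0])
    mixed = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim) for key in tokens]
        weights = softmax(scores)
        mixed.append([sum(w * tok[j] for w, tok in zip(weights, tokens)) for j in range(dim)])
    return mixed


def classify(series, kernels, head):
    """CNN tokens -> self-attention -> mean pooling -> linear head -> softmax."""
    tokens = conv1d_tokens(series, kernels)
    context = self_attention(tokens)
    pooled = [sum(column) / len(context) for column in zip(*context)]
    logits = [sum(w * x for w, x in zip(row, pooled)) for row in head]
    return softmax(logits)


rng = random.Random(0)  # fixed seed so the untrained weights are reproducible
num_kernels, kernel_width, num_classes = 4, 3, 3
kernels = [[rng.uniform(-1, 1) for _ in range(kernel_width)] for _ in range(num_kernels)]
head = [[rng.uniform(-1, 1) for _ in range(num_kernels)] for _ in range(num_classes)]

# Toy window of normalized prices (made-up values).
series = [0.10, 0.15, 0.12, 0.30, 0.45, 0.40, 0.60, 0.55]
probs = classify(series, kernels, head)
print(dict(zip(["up", "down", "flat"], probs)))
```

Because the weights are untrained, the printed probabilities carry no predictive meaning. The point is the order of operations: the convolution sees only a few adjacent prices at a time, while the attention step lets every token draw on the entire window.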

Experiments and Evaluation

CTTS was evaluated on intraday stock prices of S&P 500 companies, with data from the first three quarters of 2019 used for training and the last quarter for testing. The experimental setup compared CTTS against statistical and deep learning baselines:

- Baselines: Compared with DeepAR, ARIMA, and EMA models. A naive constant class predictor was also used for reference.

- Metrics: The models were evaluated using sign prediction accuracy in both 2-class and 3-class settings. A measure termed thresholded accuracy was additionally used to focus on high-confidence predictions.
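To make the simpler baselines and the sign accuracy metric concrete, here is a minimal sketch. It rests on assumptions not confirmed by this summary: the EMA forecast for the next price is taken to be the current EMA value, so the predicted direction is the sign of the EMA minus the current price, the smoothing factor `alpha` is arbitrary, and the constant predictor always outputs the most frequent training class. All prices are made up.

```python
from collections import Counter


def sign(x):
    """Return 1, -1, or 0 for positive, negative, or zero x."""
    return (x > 0) - (x < 0)


def ema(values, alpha=0.3):
    """Exponential moving average; alpha is a hypothetical smoothing factor."""
    smoothed = [values[0]]
    for value in values[1:]:
        smoothed.append(alpha * value + (1 - alpha) * smoothed[-1])
    return smoothed


def ema_sign_forecasts(prices, alpha=0.3):
    """Forecast the next price as the current EMA, so the predicted
    direction at step t is sign(EMA_t - price_t)."""
    smoothed = ema(prices, alpha)
    return [sign(s - p) for s, p in zip(smoothed[:-1], prices[:-1])]


def sign_accuracy(predicted, actual):
    """Fraction of steps where the predicted direction matches the realized one."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


prices = [100.0, 101.0, 102.0, 101.0, 103.0, 104.0]
actual = [sign(b - a) for a, b in zip(prices, prices[1:])]  # realized directions

# Naive constant-class predictor: always output the majority training class.
train_signs = [1, 1, -1, 1]  # made-up training labels
majority = Counter(train_signs).most_common(1)[0][0]
constant_acc = sign_accuracy([majority] * len(actual), actual)

ema_acc = sign_accuracy(ema_sign_forecasts(prices), actual)
print(constant_acc, ema_acc)  # 0.8 0.2
```

On this rising toy series the constant predictor looks strong and the EMA predictor weak, which is why a naive reference is useful: a model has to beat the majority class before its accuracy means anything.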

In the experiments, CTTS outperformed the competing methods in both the 2-class and 3-class tasks. Its accuracy rose further when predictions below a certain confidence threshold were excluded (thresholded accuracy).
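Thresholded accuracy can be sketched as follows. This is one plausible reading of the metric, assuming that confidence means the highest predicted class probability and that samples below the threshold are simply left out of the evaluation. The function name, the returned coverage value, and the example data are illustrative.

```python
def thresholded_accuracy(probabilities, labels, threshold):
    """Accuracy over samples whose top class probability is >= threshold.

    Returns (accuracy, coverage). Coverage is the fraction of samples kept;
    accuracy is None when no sample passes the threshold.
    """
    kept = correct = 0
    for probs, label in zip(probabilities, labels):
        best = max(range(len(probs)), key=probs.__getitem__)  # predicted class index
        if probs[best] >= threshold:
            kept += 1
            correct += best == label
    coverage = kept / len(labels)
    return (correct / kept if kept else None), coverage


# Made-up predictions over classes 0 = up, 1 = down, 2 = flat.
probabilities = [
    [0.80, 0.10, 0.10],
    [0.40, 0.35, 0.25],
    [0.20, 0.70, 0.10],
    [0.50, 0.30, 0.20],
    [0.10, 0.20, 0.70],
]
labels = [0, 1, 1, 2, 2]

print(thresholded_accuracy(probabilities, labels, 0.0))  # (0.6, 1.0)
print(thresholded_accuracy(probabilities, labels, 0.6))  # (1.0, 0.6)
```

Reporting coverage next to accuracy shows the trade-off: a higher threshold keeps fewer predictions, so an accuracy gain comes at the cost of abstaining more often.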

Results and Discussion

The results indicate that CTTS is well suited to financial time series forecasting, particularly where dependencies span several time scales. Combining CNNs and Transformers lets the model handle both short-term fluctuations and long-term market trends.

Key findings include:

- Superior performance over baseline models in standard and thresholded accuracy metrics.

- Reliable class probabilities: restricting evaluation to high-confidence predictions produced a notable increase in accuracy.

These results indicate a promising direction for AI-driven financial predictions that could enhance trading strategies by offering probabilistic insights into price movements.

Conclusion

The paper presents a deep learning approach to financial time series forecasting that addresses the difficulty of modeling time-dependent data at multiple scales. The combination of CNNs and Transformers captures both short-term patterns and long-term dependencies in price movements. Future work could explore refinements to the model architecture or the inclusion of additional contextual data, both to improve prediction accuracy and to generalize performance across other financial settings.