- The paper demonstrates a novel latent diffusion framework with classifier guidance that improves compositional image generation by integrating diffusion in latent spaces.

- The methodology leverages pretrained models like StyleGAN2 and Diffusion Autoencoder, achieving competitive FID, ID, and ACC performance metrics.

- The approach simplifies non-linear manipulations into linear arithmetic, offering robust and flexible control for both synthetic and real image editing.

Exploring Compositional Visual Generation with Latent Classifier Guidance

Introduction

The paper "Exploring Compositional Visual Generation with Latent Classifier Guidance" (2304.12536) presents a novel approach for visual generation through the integration of diffusion models in latent spaces. This technique employs classifier guidance to enhance the compositional generation capabilities of pre-trained generative models such as StyleGAN2 and Diffusion Autoencoder.

Methodology

The research utilizes diffusion probabilistic models to facilitate non-linear generation processes within the latent semantic spaces of existing generative models. By training latent diffusion models coupled with auxiliary latent classifiers, the study aims to maximize conditional log probabilities, thus enhancing compentionality in generated images.

Further, the paper introduces a guidance term to preserve original semantics during manipulation. This approach allows a reduction to simpler linear arithmetic for non-linear manipulations under specific conditions. Unlike existing methods restricted to image space, leveraging latent spaces offers both model and latent agnosticism, facilitating manipulation irrespective of the underlying structure.

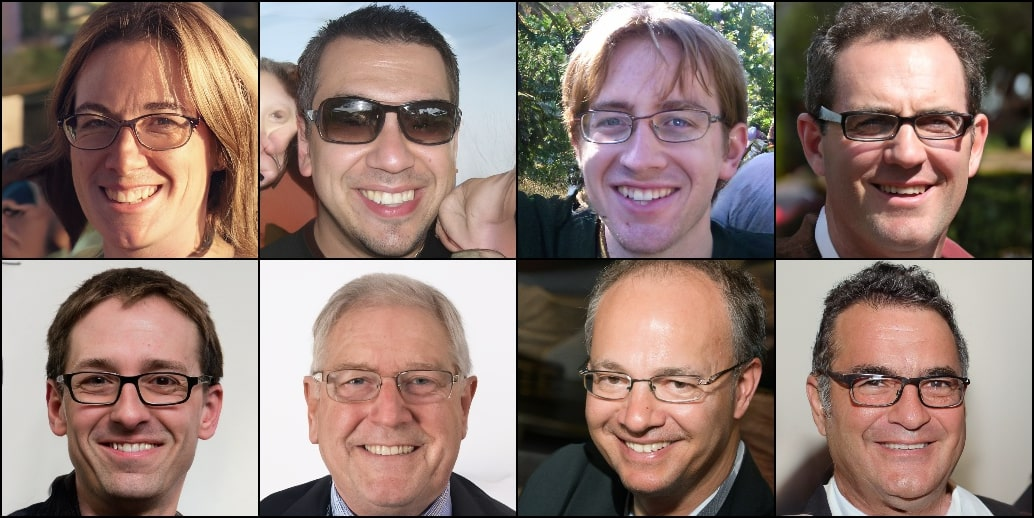

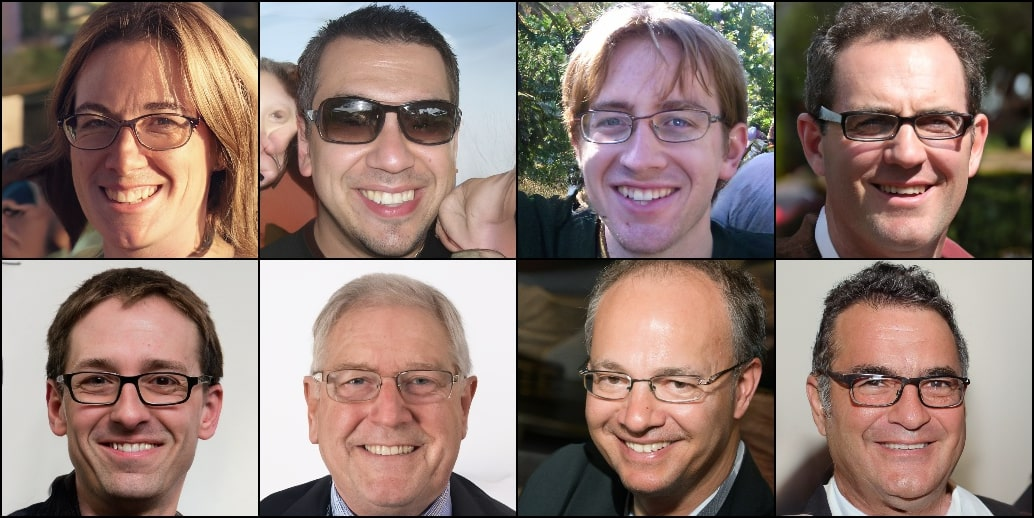

Figure 1: Qualitative comparison among different methods for compositional generation based on the latenet space of the pre-trained StyleGAN2 generator.

Experimentation and Results

Experiments were conducted on compositional generation and manipulation tasks using the latent spaces of StyleGAN2 and Diffusion Autoencoder. Quantitative evaluations based on FID, ID, and ACC metrics highlighted the competitive performance of the proposed Latent Classifier Guidance (LCG) methodologies against existing models such as StyleFlow and LACE.

In particular, the LCG-Linear and LCG-Diffusion models showcased substantial improvements over previous state-of-the-art methods, achieving high accuracy and fidelity in generating and manipulating real and synthetic images.

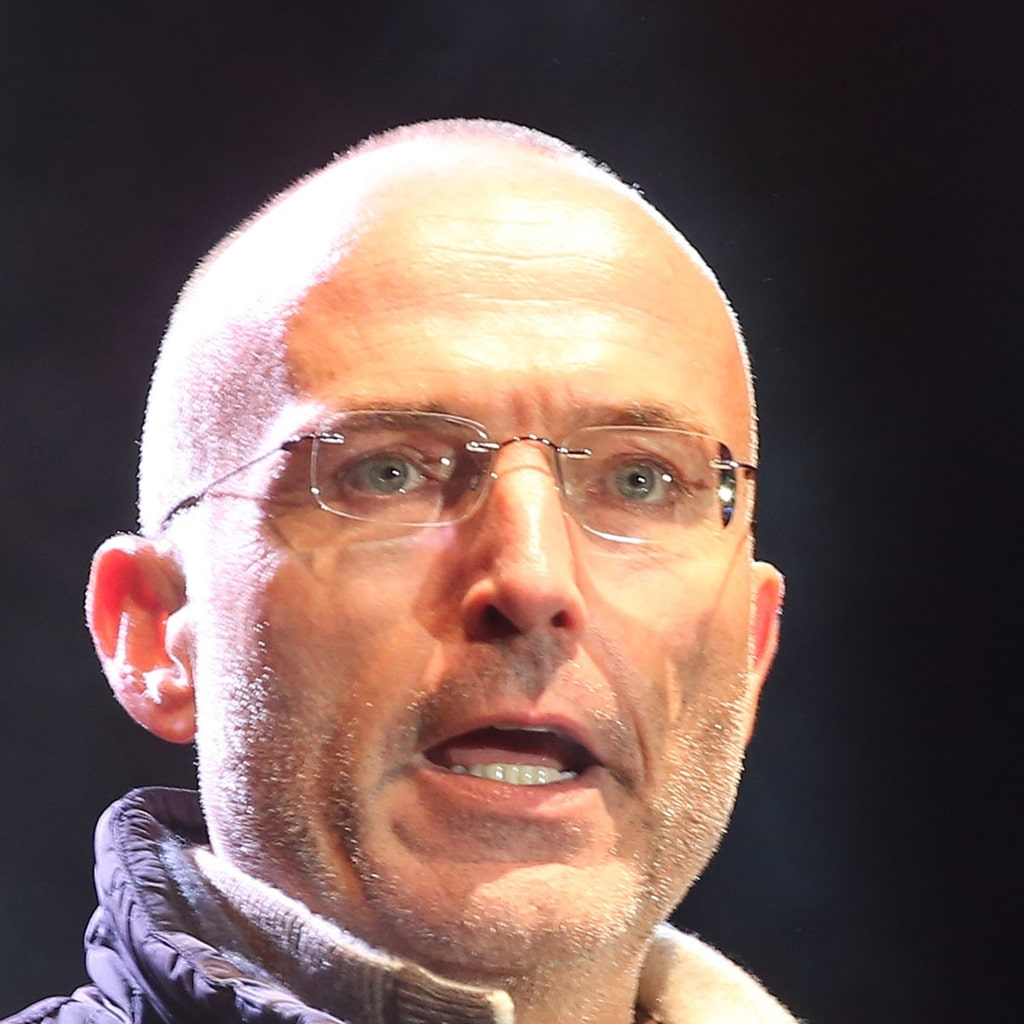

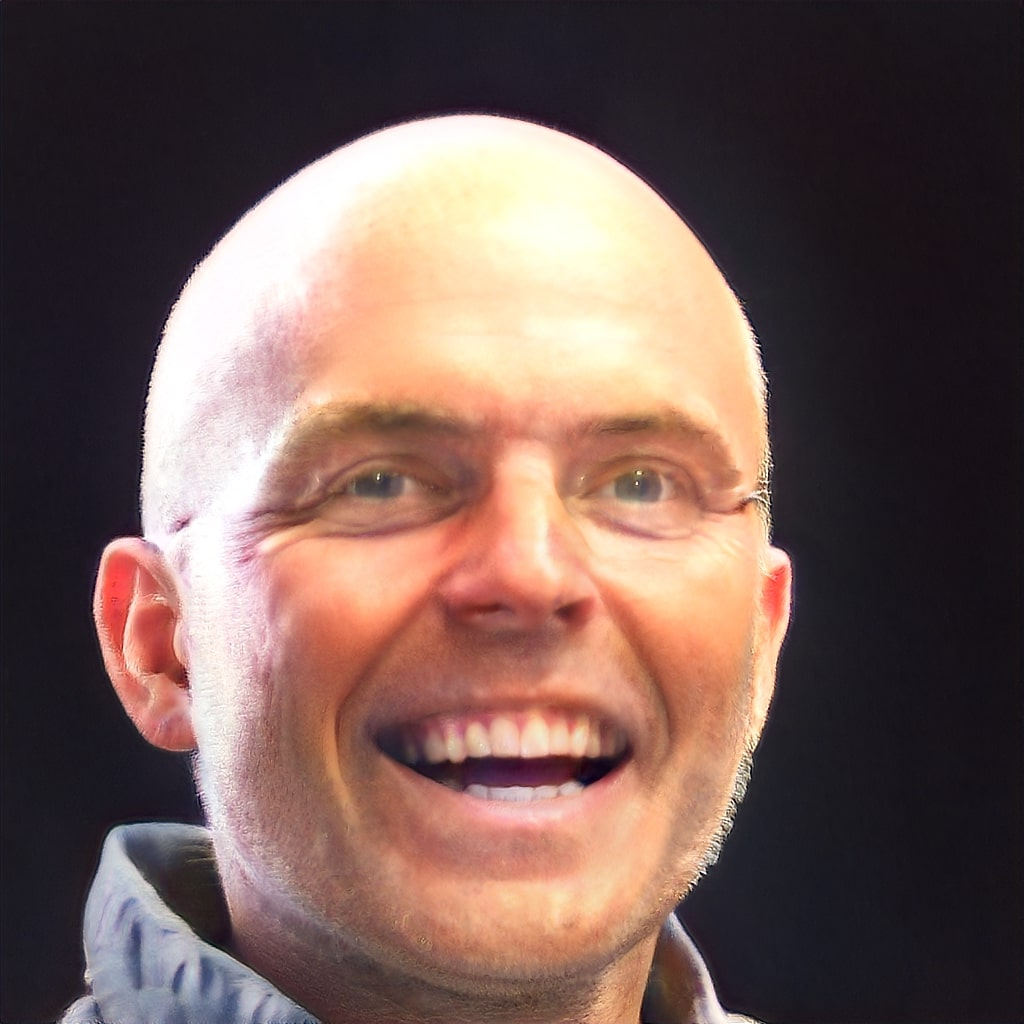

Figure 2: Qualitative comparison of our methods for compositional generation based on the latent space of Diffusion Autoencoder generator.

Discussion

Linear vs. Non-Linear Methods: The study elaborates on the benefits of linear arithmetic in maintaining non-target attributes while manipulating multiple attributes. The competitive performance of the linear approach in disentangled latent spaces underscores its simplicity and efficacy. However, non-linear approaches are preferred in scenarios where latent distributions deviate from normality, as seen in outlier-heavy regions during sequential editing tasks.

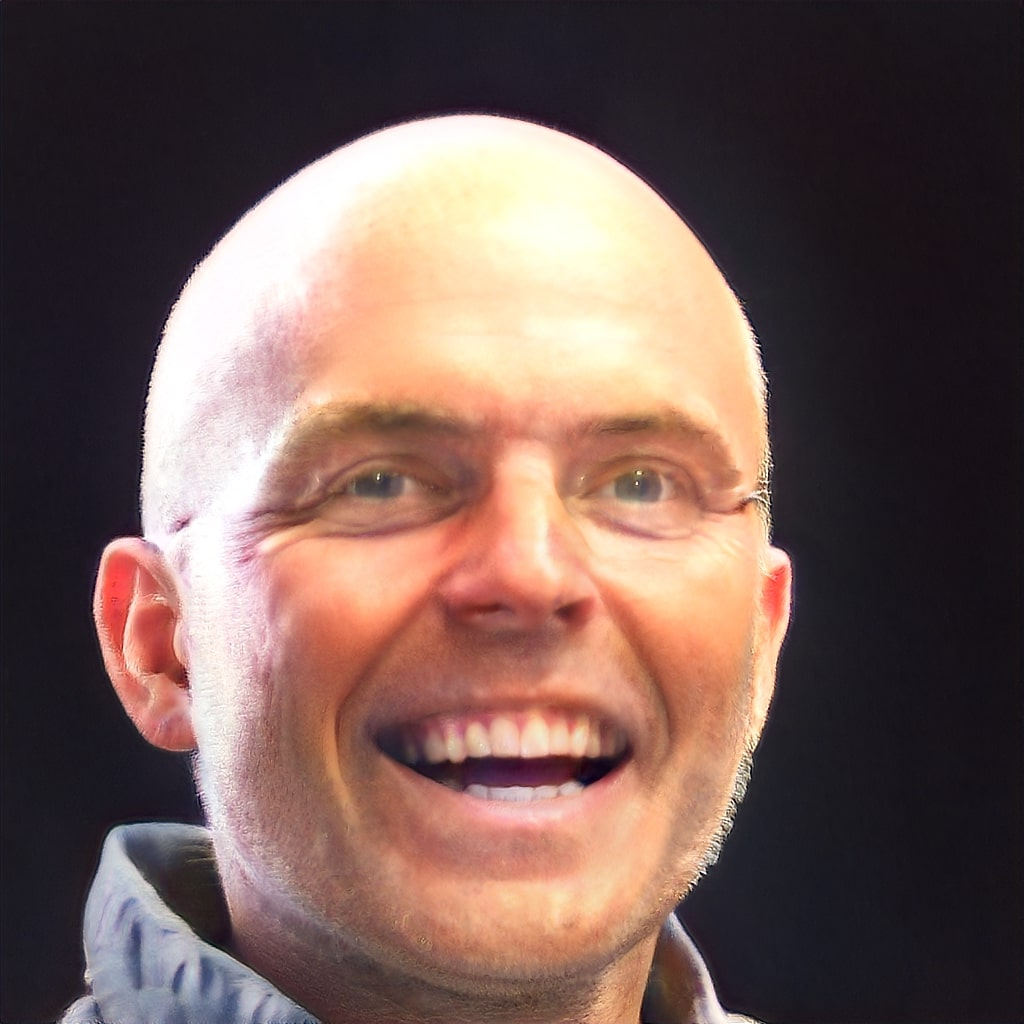

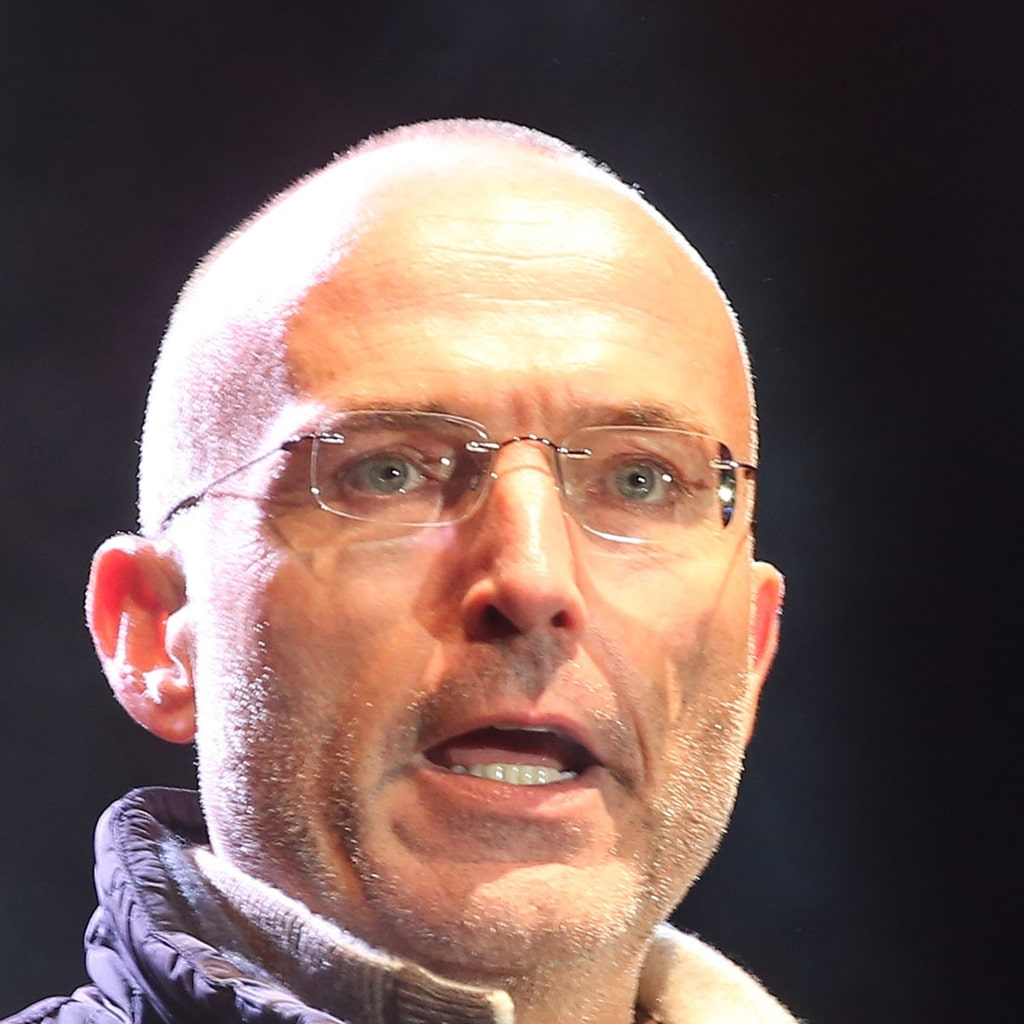

Figure 3: Qualitative comparison among different compositional manipulation methods on real image inputs.

Implications for Real Images: For real image editing, the latent classifier guidance mechanism outperformed existing methods by offering robustness in space manipulation beyond intermediate spaces, thus enhancing image quality and attribute control.

Conclusion

The integration of latent diffusion models with classifier guidance represents a promising direction for visual generation and manipulation. While the paper provides a robust framework for enhancing compositionality, future research could focus on expanding these models to more complex attribute relationships and challenging datasets. Exploring out-of-distribution performances and reorganizing latent spaces to construct semantic spaces post-pretraining are potential advances suggested for subsequent studies.

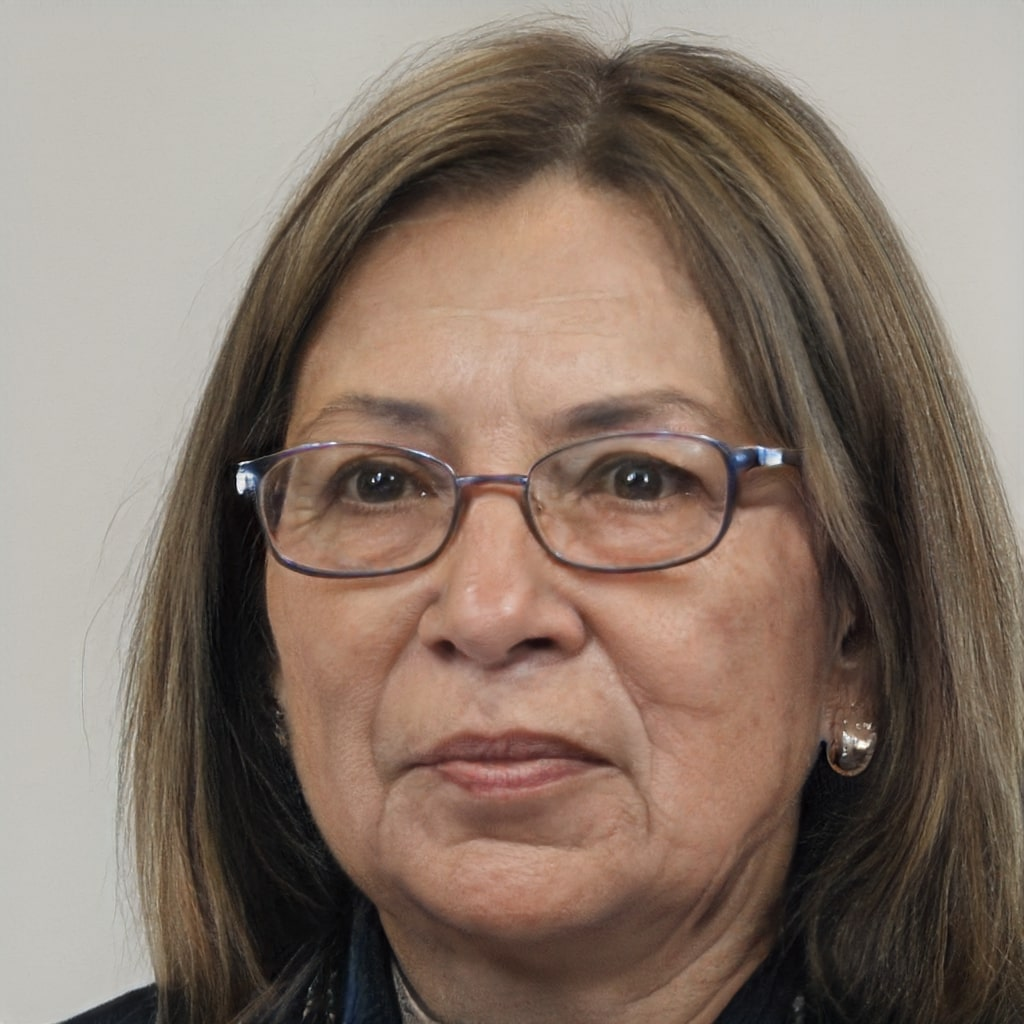

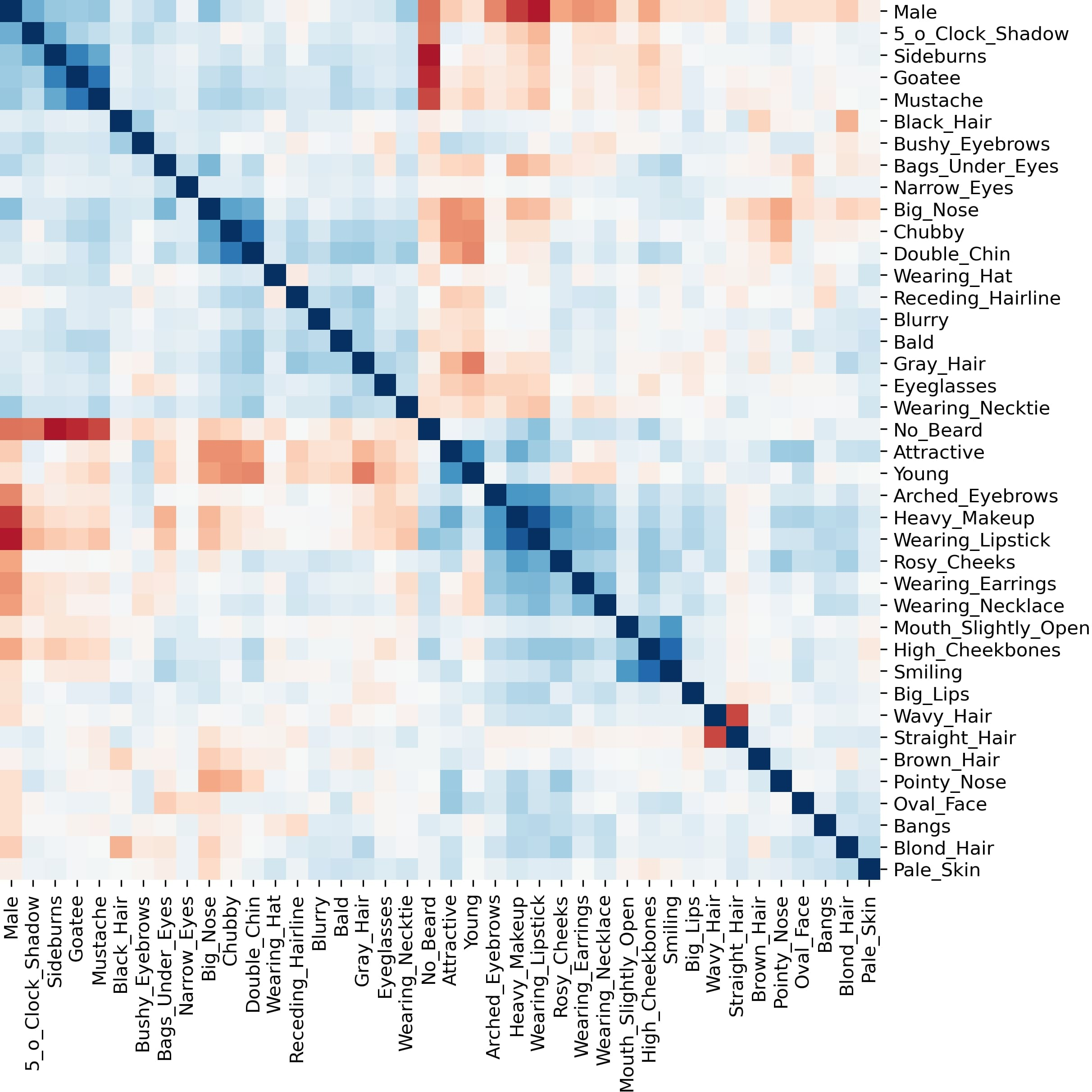

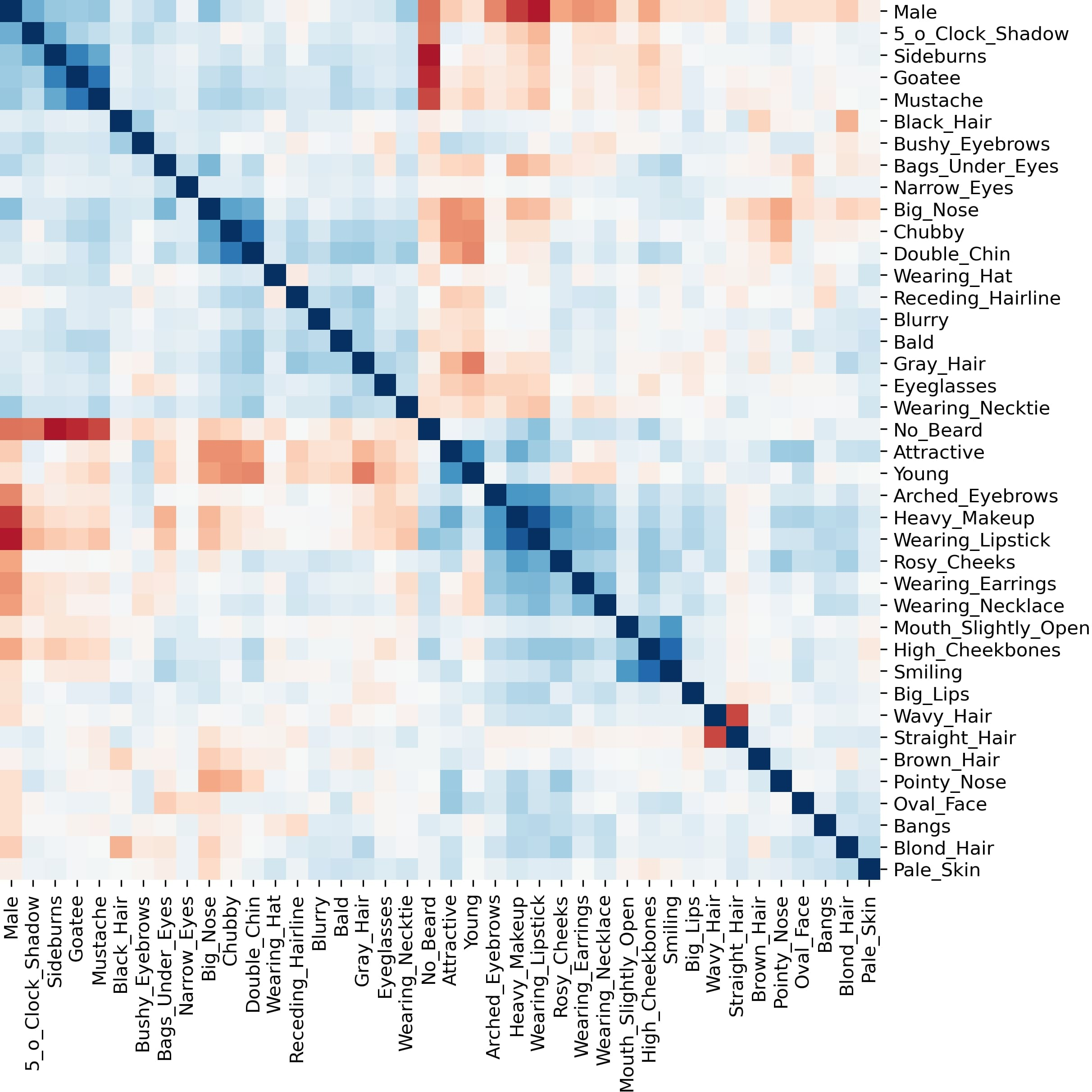

Figure 4: Visualization of Latent semantic correlations of Diffusion Autoencoder.