Interpretable Machine Learning for Science with PySR and SymbolicRegression.jl

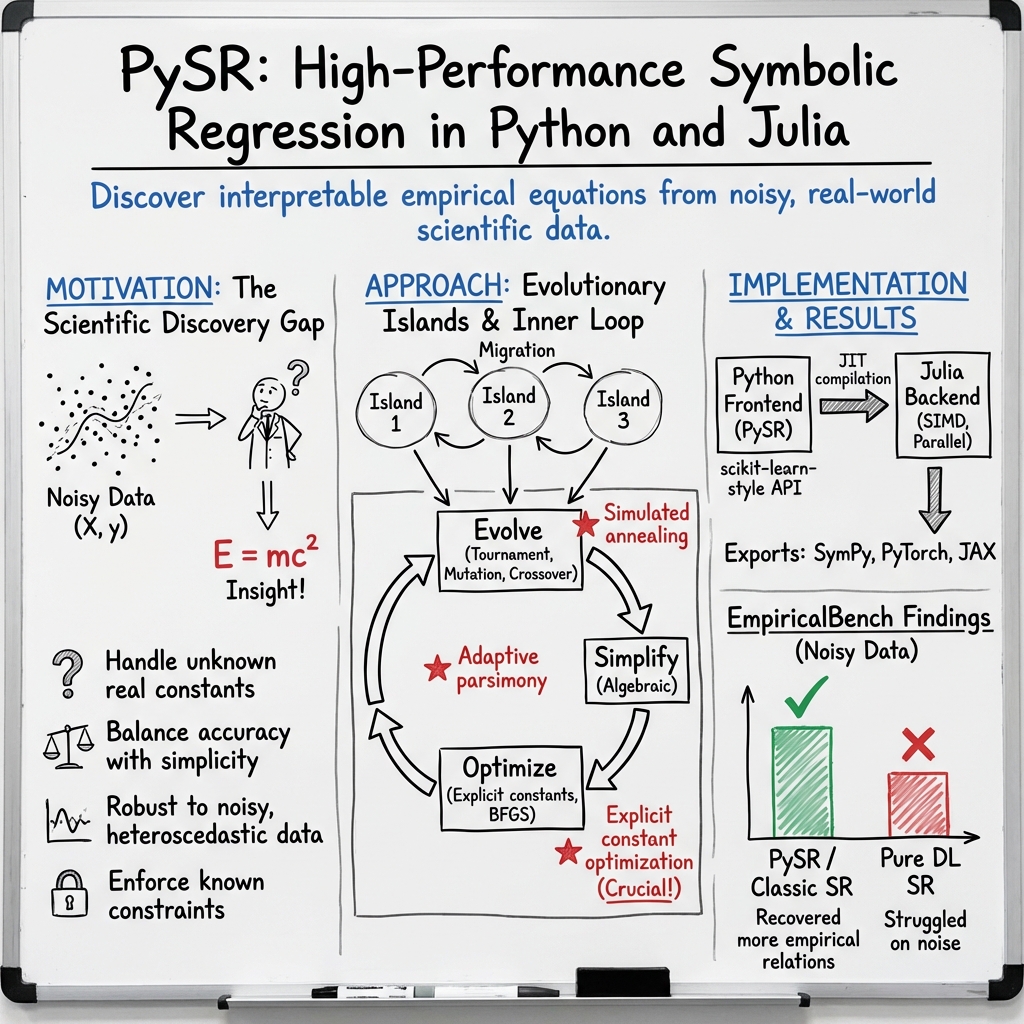

Abstract: PySR is an open-source library for practical symbolic regression, a type of machine learning which aims to discover human-interpretable symbolic models. PySR was developed to democratize and popularize symbolic regression for the sciences, and is built on a high-performance distributed back-end, a flexible search algorithm, and interfaces with several deep learning packages. PySR's internal search algorithm is a multi-population evolutionary algorithm, which consists of a unique evolve-simplify-optimize loop, designed for optimization of unknown scalar constants in newly-discovered empirical expressions. PySR's backend is the extremely optimized Julia library SymbolicRegression.jl, which can be used directly from Julia. It is capable of fusing user-defined operators into SIMD kernels at runtime, performing automatic differentiation, and distributing populations of expressions to thousands of cores across a cluster. In describing this software, we also introduce a new benchmark, "EmpiricalBench," to quantify the applicability of symbolic regression algorithms in science. This benchmark measures recovery of historical empirical equations from original and synthetic datasets.


Explain it Like I'm 14

Overview

This paper introduces PySR, a free and open-source tool that helps scientists find simple, understandable equations from data. Instead of using black-box models (which can be hard to explain), PySR searches for clear mathematical formulas—like those you see in physics or biology—that describe how things relate. It works fast, runs on many computers at once, and connects easily with popular Python and Julia tools.

Key Objectives

The paper focuses on solving a problem called symbolic regression, which means:

- Finding a mathematical formula (like y = a·x + b or y = sin(x) + x²) that fits data well.

- Keeping the formula simple enough that humans can understand it.

- Handling real scientific data, which can be noisy, high-dimensional, and sometimes needs special math functions unique to a field.

In simple terms: the goal is to help scientists discover equations that explain their data, without sacrificing clarity.

Methods and Approach

Think of PySR as a smart, organized treasure hunt for the best equation. Here’s how it works, using everyday ideas:

What is symbolic regression?

- Imagine you have building blocks (numbers, variables, and math operations like +, −, ×, ÷, sin, log).

- Symbolic regression tries different combinations of these blocks to build an equation that matches your data.

- The trick is to find an equation that is both accurate and easy to understand.
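To make the building-block picture concrete, here is a minimal Python sketch. It uses a hypothetical toy representation that is not PySR's internal format: nested tuples for operators, strings for variables, and floats for constants. Each candidate gets two scores, mean squared error and complexity (a node count). The hypothetical `pareto_front` helper then keeps only candidates that are more accurate than every simpler one. This is one common way to formalize "both accurate and easy to understand."

```python
import math

# Hypothetical toy representation (not PySR's internal format):
# a node is a float constant, a variable name (str), or a tuple
# (operator, child, ...), e.g. ("+", "x", 1.0) means x + 1.0.
BINARY = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "/": lambda a, b: a / b,
}
UNARY = {"sin": math.sin, "cos": math.cos, "log": math.log}


def evaluate(node, env):
    """Recursively evaluate an expression tree at one data point."""
    if isinstance(node, float):
        return node
    if isinstance(node, str):
        return env[node]
    op, *children = node
    args = [evaluate(child, env) for child in children]
    table = BINARY if len(args) == 2 else UNARY
    return table[op](*args)


def complexity(node):
    """Count nodes: every operator, variable, and constant adds 1."""
    if isinstance(node, tuple):
        return 1 + sum(complexity(child) for child in node[1:])
    return 1


def mse(node, xs, ys):
    """Mean squared error of the expression over a 1-D dataset."""
    errors = [(evaluate(node, {"x": x}) - y) ** 2 for x, y in zip(xs, ys)]
    return sum(errors) / len(errors)


def pareto_front(candidates, xs, ys):
    """Keep a candidate only if it beats the loss of every simpler candidate."""
    scored = sorted(
        ((complexity(c), mse(c, xs, ys), c) for c in candidates),
        key=lambda item: (item[0], item[1]),
    )
    front, best_loss = [], math.inf
    for comp, loss, expr in scored:
        if loss < best_loss:
            front.append((comp, loss, expr))
            best_loss = loss
    return front


# Usage: data generated from y = sin(x) + x^2.
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [math.sin(x) + x * x for x in xs]
candidates = [
    "x",
    ("*", "x", "x"),
    ("-", "x", "x"),  # same size as x*x but far less accurate, so it is dropped
    ("+", "x", ("*", "x", "x")),
    ("+", ("sin", "x"), ("*", "x", "x")),
]
for comp, loss, expr in pareto_front(candidates, xs, ys):
    print(comp, round(loss, 4), expr)
```

Reading the printed front from top to bottom shows the trade-off. Each step adds a little complexity and buys a lower error. The exact formula sits at the end with zero loss.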

Evolutionary search (like tournaments and mutations)

- PySR uses an “evolutionary algorithm,” inspired by natural selection:

  - It keeps a “population” of candidate equations.

  - It runs mini “tournaments” to pick strong candidates.

  - It creates new candidates by:

    - Mutation: making small changes (e.g., swap + for −, tweak a number).

    - Crossover: mixing parts of two good equations (like combining parents to make a child).

- This process improves equations over time, like breeding faster racehorses.
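The tournament, mutation, and crossover steps above can be sketched in a few dozen lines. This is a toy illustration of the general technique under stated assumptions, not PySR's implementation. All function names are hypothetical, and so are the mutation rules (swap an operator or nudge a constant by up to 10%) and the crossover rule (swap the right subtree at the root). The loop also assumes that each new child replaces the oldest member of the population.

```python
import random

# Hypothetical toy trees: a float constant, the variable "x", or a tuple
# (op, left, right). This sketches the general technique, not PySR's code.
OPS = ("+", "-", "*")


def evaluate(tree, x):
    if isinstance(tree, float):
        return tree
    if isinstance(tree, str):
        return x
    op, left, right = tree
    a, b = evaluate(left, x), evaluate(right, x)
    return a + b if op == "+" else a - b if op == "-" else a * b


def sse(tree, xs, ys):
    """Sum of squared errors: lower is fitter."""
    return sum((evaluate(tree, x) - y) ** 2 for x, y in zip(xs, ys))


def tournament(population, fitness, k, rng):
    """Sample k members at random and return the one with the lowest loss."""
    return min(rng.sample(population, k), key=fitness)


def mutate(tree, rng):
    """Make one small random change: swap an operator or nudge a constant."""
    if isinstance(tree, float):
        return tree * (1.0 + rng.uniform(-0.1, 0.1))  # tweak by up to 10%
    if isinstance(tree, str):
        return tree
    op, left, right = tree
    r = rng.random()
    if r < 1 / 3:
        return (rng.choice([o for o in OPS if o != op]), left, right)
    if r < 2 / 3:
        return (op, mutate(left, rng), right)
    return (op, left, mutate(right, rng))


def crossover(mom, dad):
    """Child keeps mom's operator and left subtree and takes dad's right subtree."""
    if isinstance(mom, tuple) and isinstance(dad, tuple):
        return (mom[0], mom[1], dad[2])
    return mom


def evolve(population, xs, ys, generations=300, k=3, seed=0):
    """Run tournaments and breed children; return the best tree ever seen."""
    rng = random.Random(seed)
    pop = list(population)

    def fitness(tree):
        return sse(tree, xs, ys)

    best = min(pop, key=fitness)
    for _ in range(generations):
        parent = tournament(pop, fitness, k, rng)
        if rng.random() < 0.5:
            child = mutate(parent, rng)
        else:
            child = crossover(parent, tournament(pop, fitness, k, rng))
        pop.pop(0)  # assumption: the oldest member is replaced
        pop.append(child)
        best = min(best, child, key=fitness)
    return best


# Usage: data from y = x^2 + 1 and a small starting population.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [x * x + 1.0 for x in xs]
start = [("+", "x", 1.0), ("*", "x", 2.0), ("-", "x", 0.5), ("*", "x", "x"), ("+", 0.5, "x")]
best = evolve(start, xs, ys)
print(best, sse(best, xs, ys))
```

These toy operators never make a tree deeper, so the search only explores shapes that are already present. Fuller systems typically also add or delete nodes.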

Evolve–Simplify–Optimize loop

- Evolve: Try many mutations and crossovers to explore new equations.

- Simplify: Clean up equations (for example, turning x + x into 2x).

- Optimize: Fine-tune the numbers in the equation (like turning “2.0” into “2.13” to fit the data better). This is done with a numerical optimizer (BFGS by default) that adjusts the constants to reduce the error.
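Here is a toy sketch of the simplify and optimize steps, using the same kind of hypothetical nested-tuple trees. The simplifier knows only two rules: fold constant-only subtrees, and rewrite x + x as 2.0 * x. The optimizer is plain gradient descent with finite-difference gradients. That is a deliberately crude stand-in for a quasi-Newton method such as BFGS. The function names and the step size are assumptions.

```python
# Hypothetical toy trees: float constant, variable name (str), or (op, left, right).
def evaluate(tree, x):
    if isinstance(tree, float):
        return tree
    if isinstance(tree, str):
        return x
    op, left, right = tree
    a, b = evaluate(left, x), evaluate(right, x)
    return a + b if op == "+" else a - b if op == "-" else a * b


def simplify(tree):
    """Apply two rewrite rules bottom-up: constant folding and x + x -> 2.0 * x."""
    if not isinstance(tree, tuple):
        return tree
    op, left, right = tree[0], simplify(tree[1]), simplify(tree[2])
    if isinstance(left, float) and isinstance(right, float):
        return evaluate((op, left, right), 0.0)  # e.g. (1.0 + 1.0) -> 2.0
    if op == "+" and left == right:
        return ("*", 2.0, left)
    return (op, left, right)


def get_constants(tree):
    """List the tree's constants in left-to-right order."""
    if isinstance(tree, float):
        return [tree]
    if isinstance(tree, str):
        return []
    return get_constants(tree[1]) + get_constants(tree[2])


def set_constants(tree, values):
    """Return a copy of the tree with its constants replaced, in order."""
    it = iter(values)

    def rebuild(node):
        if isinstance(node, float):
            return next(it)
        if isinstance(node, str):
            return node
        return (node[0], rebuild(node[1]), rebuild(node[2]))

    return rebuild(tree)


def optimize_constants(tree, xs, ys, lr=0.01, steps=500, eps=1e-6):
    """Tune constants by gradient descent on mean squared error."""

    def loss(values):
        t = set_constants(tree, values)
        return sum((evaluate(t, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

    consts = get_constants(tree)
    for _ in range(steps):
        grad = []
        for i in range(len(consts)):
            up, down = list(consts), list(consts)
            up[i] += eps
            down[i] -= eps
            grad.append((loss(up) - loss(down)) / (2 * eps))  # central difference
        consts = [c - lr * g for c, g in zip(consts, grad)]
    return set_constants(tree, consts)


# Usage: simplify, then fit the constant to data from y = 2.13 x.
expr = simplify(("*", ("+", 1.0, 1.0), "x"))  # -> ("*", 2.0, "x")
xs = [1.0, 2.0, 3.0, 4.0]
fitted = optimize_constants(expr, xs, [2.13 * x for x in xs])
print(expr, fitted)
```

Simplifying before optimizing means the optimizer tunes one constant (2.0) instead of two (1.0 and 1.0) that play the same role.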

Avoiding getting stuck (simulated annealing)

- PySR sometimes accepts worse changes early on to explore broadly, and later becomes more strict.

- The name comes from annealing in metalworking: at a high “temperature” the search takes more risks; as the temperature drops, it focuses on refining the best ideas.
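A common way to express "accept worse changes more often when hot" is a Metropolis-style rule. Improvements are always kept. A change that raises the loss by Δ is kept with probability exp(−Δ / (α·T)). This sketch assumes that rule, a linear cooling schedule, and hypothetical names (`accept`, `linear_temperature`, `alpha`). PySR's exact formula may differ.

```python
import math
import random


def accept(old_loss, new_loss, temperature, rng, alpha=1.0):
    """Metropolis-style rule: always keep improvements; keep a worse candidate
    with probability exp(-(new_loss - old_loss) / (alpha * temperature))."""
    if new_loss <= old_loss:
        return True
    if temperature <= 0.0:
        return False
    p = math.exp(-(new_loss - old_loss) / (alpha * temperature))
    return rng.random() < p


def linear_temperature(step, total_steps):
    """Assumed linear cooling schedule from 1.0 down to 0.0."""
    return 1.0 - step / total_steps


# Usage: how often is a change that worsens the loss by 0.5 accepted?
rng = random.Random(0)
trials = 10_000
hot = sum(accept(1.0, 1.5, linear_temperature(0, 100), rng) for _ in range(trials)) / trials
cold = sum(accept(1.0, 1.5, linear_temperature(95, 100), rng) for _ in range(trials)) / trials
print(f"hot: {hot:.3f}, cold: {cold:.5f}")
```

Early in the schedule, about 60% of these worse moves are accepted (exp(−0.5) ≈ 0.61). Near the end, almost none are (exp(−10) ≈ 0.00005).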

Keeping models simple (adaptive parsimony)

- Parsimony means preferring simpler equations.

- PySR tracks how many equations of each size are in the population and penalizes sizes that are already common, so the search explores both simple and complex formulas fairly.

- This helps avoid getting stuck in complicated formulas that aren’t insightful.
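Here is a minimal sketch of the idea. It assumes a simple rule that multiplies each candidate's loss by exp(scaling × fraction of the population sharing its complexity). The helper names and the `scaling` value are hypothetical. SymbolicRegression.jl's actual bookkeeping (for example, how frequencies are tracked over time) may differ.

```python
import math
from collections import Counter


def complexity_frequencies(complexities):
    """Fraction of the population at each complexity level."""
    counts = Counter(complexities)
    total = len(complexities)
    return {c: n / total for c, n in counts.items()}


def adjusted_loss(loss, complexity, freqs, scaling=5.0):
    """Inflate a candidate's loss when its complexity is already crowded.

    Unseen complexities get factor exp(0) = 1, i.e. no penalty.
    """
    return loss * math.exp(scaling * freqs.get(complexity, 0.0))


# Usage: a population where complexity 3 dominates.
population_complexities = [3, 3, 3, 3, 3, 3, 7, 9]
freqs = complexity_frequencies(population_complexities)
crowded = adjusted_loss(1.0, 3, freqs)  # 6/8 of the population
rare = adjusted_loss(1.0, 7, freqs)     # 1/8 of the population
print(round(crowded, 2), round(rare, 2))
```

Two candidates with the same raw loss now rank differently. The one at an under-explored complexity wins, which pushes the search toward sizes it has not yet covered.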

Speed and software engineering

- The engine under PySR (SymbolicRegression.jl) is written in Julia for speed.

- It compiles user-defined math functions at runtime and fuses operations into fast vectorized (SIMD) kernels, so candidate equations are evaluated quickly.

- It can spread work across many CPU cores or computers.

- PySR has a friendly Python interface and can export equations to libraries like NumPy, SymPy, PyTorch, and JAX.
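The Julia back end does its compilation natively. As a rough Python analogy only, an expression tree can be turned into source code once and compiled into an ordinary function. That avoids walking the tree node by node on every evaluation, and it resembles exporting an equation as a callable. The tree format and the `to_source` / `compile_tree` names are hypothetical.

```python
import math

# Hypothetical toy trees: float constant, variable name (str),
# ("+" | "-" | "*" | "/", left, right), or (unary math function name, child).


def to_source(tree):
    """Render a tree as a Python expression string."""
    if isinstance(tree, float):
        return repr(tree)
    if isinstance(tree, str):
        return tree
    op, *args = tree
    if op in ("+", "-", "*", "/"):
        return f"({to_source(args[0])} {op} {to_source(args[1])})"
    return f"math.{op}({to_source(args[0])})"  # e.g. sin -> math.sin


def compile_tree(tree, variables=("x",)):
    """Generate source once and compile it into a plain Python function.

    Only use this on trusted trees: eval executes arbitrary code.
    """
    source = f"lambda {', '.join(variables)}: {to_source(tree)}"
    return eval(source, {"math": math})


tree = ("+", ("sin", "x"), ("*", "x", "x"))
f = compile_tree(tree)
print(to_source(tree))  # -> (math.sin(x) + (x * x))
print([f(x) for x in (0.0, 1.0, 2.0)])
```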

Handling real-world scientific needs

- Custom operators: You can add field-specific functions (for example, Bessel functions in physics or special conditional logic).

- Custom losses: You can define how “error” is measured, not just the usual mean squared error. This matters when data has special noise or distributions.

- Noisy data: PySR can denoise the data (by smoothing the target values with a Gaussian process before the search) or use data-point weights when some measurements are more uncertain than others.

- Feature selection: It can reduce the number of input variables by picking the most important ones first.

- Constraints: You can set rules (like limiting equation size or nesting) so results remain realistic and interpretable.

- Interfaces: It plays well with deep learning frameworks and can discover patterns in more than just tables (like sequences or grids).
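Custom operators, custom losses, and data-point weights fit together as shown in the sketch below. It is not PySR's API. The operator registry, the `cond` operator, and the L1 loss are all hypothetical choices made for illustration. It also assumes weights of 1/σ², a common convention when each measurement has a known uncertainty σ.

```python
import math

# Hypothetical operator registry (not PySR's API): name -> Python function.
OPERATORS = {
    "+": lambda a, b: a + b,
    "*": lambda a, b: a * b,
    "sin": math.sin,
}


def register_operator(name, fn):
    """Add a field-specific operator that candidate equations may use."""
    OPERATORS[name] = fn


# Assumed example of "special conditional logic": pass b through only when a > 0.
register_operator("cond", lambda a, b: b if a > 0 else 0.0)


def evaluate(tree, row):
    """Evaluate a tree of floats, variable names, and (op, *children) tuples."""
    if isinstance(tree, float):
        return tree
    if isinstance(tree, str):
        return row[tree]
    op, *children = tree
    return OPERATORS[op](*(evaluate(child, row) for child in children))


def absolute_error(pred, target):
    """A custom elementwise loss: L1 instead of squared error."""
    return abs(pred - target)


def weighted_loss(tree, rows, targets, weights, elementwise=absolute_error):
    """Weighted average of an elementwise loss over the dataset."""
    total = sum(
        w * elementwise(evaluate(tree, row), y)
        for row, y, w in zip(rows, targets, weights)
    )
    return total / sum(weights)


# Usage: the third measurement is ten times noisier, so it gets weight 1/sigma^2.
sigmas = [0.1, 0.1, 1.0]
weights = [1.0 / s ** 2 for s in sigmas]
rows = [{"x": 1.0}, {"x": -1.0}, {"x": 2.0}]
targets = [2.0, 0.0, 5.0]
tree = ("cond", "x", ("*", 2.0, "x"))  # 2x when x > 0, else 0
print(weighted_loss(tree, rows, targets, weights))
```

The only miss is on the noisy third point. Because that point carries a small weight, the total loss stays near 1/201.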

Main Findings and Why They Matter

- PySR makes symbolic regression practical for science:

  - It can discover equations with real (non-integer) constants and tune them accurately.

  - It supports special operators and custom error measures, which scientists often need.

  - It balances accuracy and simplicity, aiming for equations that teach you something—not just ones that fit well.

  - It runs fast thanks to Julia’s just-in-time compilation and smart parallelization.

- The paper also introduces EmpiricalBench, a new benchmark to test if symbolic regression tools can rediscover well-known scientific equations from real and synthetic data. This helps measure whether these tools are useful beyond toy problems.
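This summary does not spell out how EmpiricalBench decides that an equation was "rediscovered." The sketch below assumes a simple numeric check: a candidate counts as recovered if it matches the known law within a relative tolerance at every test point. It uses Kepler's third law (P = a^(3/2), with a in AU and P in years) as the known law. The `recovered` helper, the tolerance, and the sample points are hypothetical. Numeric agreement on a finite set of points is also weaker than proving two formulas are symbolically equivalent.

```python
import math


def recovered(candidate, truth, points, rtol=1e-3):
    """Hypothetical criterion: the candidate matches the known law to within
    a relative tolerance at every test point."""
    return all(abs(candidate(p) - truth(p)) <= rtol * abs(truth(p)) for p in points)


def kepler_period(a):
    """Kepler's third law with a in AU and the period in years: P = a^(3/2)."""
    return a ** 1.5


# Sample semi-major axes in AU (illustrative values, not a benchmark dataset).
points = [0.4, 0.7, 1.0, 1.5, 5.2, 9.5]
candidates = {
    "a * sqrt(a), fitted constant 1.0002": lambda a: 1.0002 * a * math.sqrt(a),
    "1.2 * a": lambda a: 1.2 * a,
    "a ** 2": lambda a: a ** 2,
}
results = {name: recovered(f, kepler_period, points) for name, f in candidates.items()}
print(results)
```

The first candidate passes even though its fitted constant is slightly off and it is written differently from a ** 1.5. The other two fail because their shapes are wrong.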

These findings matter because they bring equation discovery closer to everyday scientific practice: not just proving an idea works in theory, but making it usable on real data and workflows.

Implications and Impact

- Better science communication: Clear equations are easier to understand, share, and build upon than opaque models.

- Faster discovery: Automating the search for formulas saves time and lets scientists test more ideas.

- Wider adoption: Because PySR is open-source, easy to use, and connects to popular tools, more researchers can use symbolic regression in their fields.

- Deeper insights: Simple, interpretable equations can reveal underlying rules, guide new experiments, and inspire new theories—much like how historical discoveries (Kepler’s and Planck’s laws) started from fitting data.

In short, PySR helps turn complex data into understandable equations, making machine learning more interpretable and more useful for scientific discovery.
