Compositional Text-to-Image Synthesis with Attention Map Control of Diffusion Models

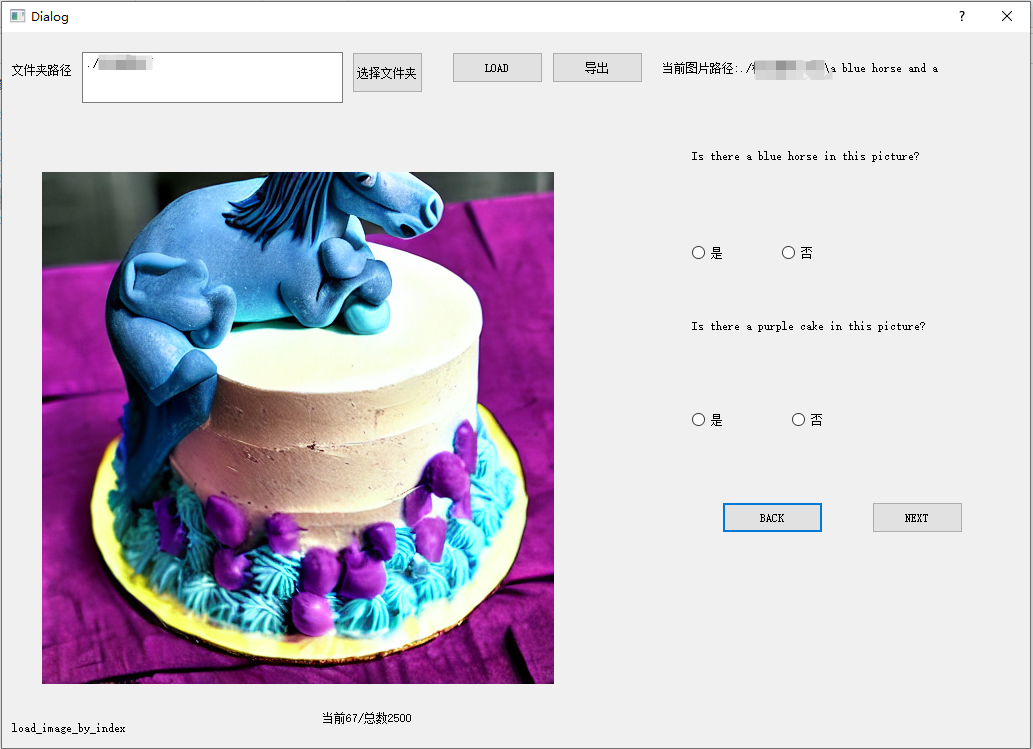

Abstract: Recent text-to-image (T2I) diffusion models show outstanding performance in generating high-quality images conditioned on textual prompts. However, due to their limited compositional capabilities, they often fail to align the generated images semantically with the prompts, which leads to attribute leakage, entity leakage, and missing entities. In this paper, we propose a novel attention mask control strategy based on predicted object boxes to address these issues. Specifically, we first train a BoxNet to predict a box for each entity that possesses the attribute specified in the prompt. Then, based on the predicted boxes, a unique mask control is applied to the cross- and self-attention maps. By constraining where each token in the prompt attends in the image, our approach produces more semantically accurate syntheses. In addition, the proposed method is simple and effective, and it can be readily integrated into existing cross-attention-based T2I generators. We compare our approach with competing methods and demonstrate that it faithfully conveys the semantics of the original text to the generated content and is readily usable as a plug-in. For more details, see https://github.com/OPPOMente-Lab/attention-mask-control.
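The abstract describes restricting each prompt token's cross-attention to the region of its predicted entity box, plus a box-based mask on self-attention. The plain-Python sketch below illustrates that general idea under assumptions that are ours, not the paper's: boxes are normalized (x0, y0, x1, y1) coordinates, out-of-box cross-attention logits are set to -inf before the softmax, tokens without a box (such as a start token) stay unrestricted, and the self-attention rule (a pixel inside boxes attends only within those boxes) is a hypothetical stand-in for the paper's exact mask. The helper names (`box_to_mask`, `masked_cross_attention`, `self_attention_mask`) and the toy prompt tokens are illustrative and are not the API of the released code.

```python
import math


def box_to_mask(box, h, w):
    """Rasterize a normalized box (x0, y0, x1, y1) onto an h x w latent grid.

    Returns a row-major flat list of booleans that is True where the cell centre
    falls inside the box (assumed convention: coordinates in [0, 1]).
    """
    x0, y0, x1, y1 = box
    mask = []
    for r in range(h):
        cy = (r + 0.5) / h
        for c in range(w):
            cx = (c + 0.5) / w
            mask.append(x0 <= cx <= x1 and y0 <= cy <= y1)
    return mask


def softmax(logits):
    """Numerically stable softmax that gives -inf logits zero probability."""
    m = max(x for x in logits if x != -math.inf)  # requires at least one finite logit
    exps = [0.0 if x == -math.inf else math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def masked_cross_attention(scores, token_masks):
    """Apply box masks to cross-attention logits, then normalize per pixel.

    scores[p][t] is the pre-softmax logit of latent pixel p for prompt token t.
    token_masks maps a token index to the flat pixel mask of its entity box;
    tokens not in the dict (e.g. a start token) stay unrestricted, which keeps
    every row with at least one finite logit.
    """
    probs = []
    for p, row in enumerate(scores):
        masked = [
            -math.inf if t in token_masks and not token_masks[t][p] else s
            for t, s in enumerate(row)
        ]
        probs.append(softmax(masked))
    return probs


def self_attention_mask(box_masks, n_pixels):
    """Boolean mask allowed[q][k]: may query pixel q attend to key pixel k?

    Hypothetical rule: a pixel inside one or more boxes attends only to pixels
    in those boxes; a pixel outside every box may attend everywhere.
    """
    allowed = []
    for q in range(n_pixels):
        owners = [m for m in box_masks if m[q]]
        if not owners:
            allowed.append([True] * n_pixels)
        else:
            allowed.append([any(m[k] for m in owners) for k in range(n_pixels)])
    return allowed


# Usage on a toy 4x4 latent grid with hypothetical prompt tokens
# [<start>, "red", "cat", "blue", "dog"]; each attribute shares its entity's box.
H = W = 4
cat_box = box_to_mask((0.0, 0.0, 0.5, 1.0), H, W)  # left half
dog_box = box_to_mask((0.5, 0.0, 1.0, 1.0), H, W)  # right half
token_masks = {1: cat_box, 2: cat_box, 3: dog_box, 4: dog_box}
uniform_scores = [[0.0] * 5 for _ in range(H * W)]  # placeholder logits
cross_attn = masked_cross_attention(uniform_scores, token_masks)
self_mask = self_attention_mask([cat_box, dog_box], H * W)
```

Masking logits with -inf before the softmax, rather than zeroing probabilities afterwards, keeps each pixel's attention weights summing to one while sending no weight to "blue" or "dog" from pixels in the cat's box. A practical version would operate on batched tensors inside the generator's attention layers rather than on Python lists.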
