Ties Matter: Meta-Evaluating Modern Metrics with Pairwise Accuracy and Tie Calibration

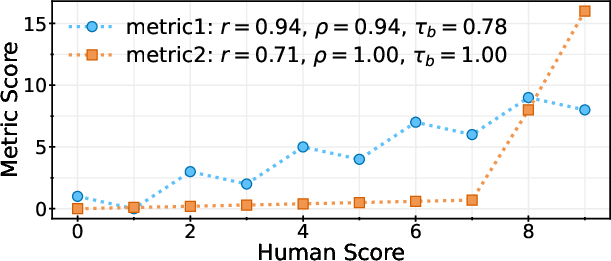

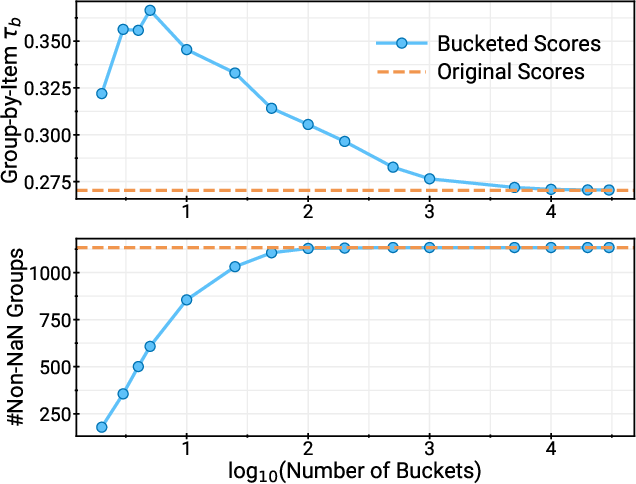

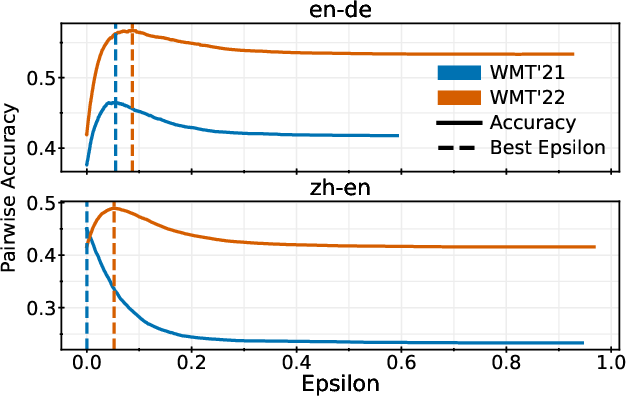

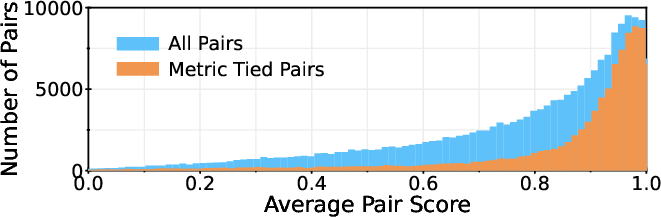

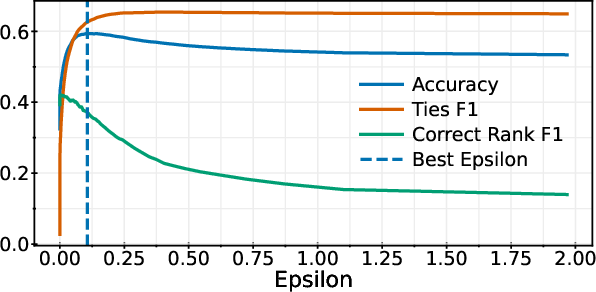

Abstract: Kendall's tau is frequently used to meta-evaluate how well machine translation (MT) evaluation metrics score individual translations. Its focus on pairwise score comparisons is intuitive but raises the question of how ties should be handled, a gray area that has motivated different variants in the literature. We demonstrate that, in settings like modern MT meta-evaluation, existing variants have weaknesses arising from their handling of ties, and in some situations can even be gamed. We propose instead to meta-evaluate metrics with a version of pairwise accuracy that gives metrics credit for correctly predicting ties, in combination with a tie calibration procedure that automatically introduces ties into metric scores, enabling fair comparison between metrics that do and do not predict ties. We argue and provide experimental evidence that these modifications lead to fairer ranking-based assessments of metric performance.
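The two ideas named in the abstract can be illustrated with a minimal sketch. The abstract gives no formulas, so the following are assumptions made for illustration: pairwise accuracy is taken to be the fraction of translation pairs for which the metric's relation (better, worse, or tied) matches the human relation, and tie calibration is taken to be a search for a threshold `epsilon` such that metric score differences of at most `epsilon` count as ties, chosen to maximize that accuracy. The function names `pairwise_accuracy` and `calibrate_ties` and the toy scores are hypothetical and are not taken from the paper.

```python
from itertools import combinations


def pairwise_accuracy(human, metric, epsilon=0.0):
    """Fraction of pairs whose metric relation (<, =, >) matches the human one.

    Assumption: a metric pair is a tie when its absolute score difference
    is at most `epsilon`; human ties are exact score equality.
    """
    correct = 0
    total = 0
    for i, j in combinations(range(len(human)), 2):
        h_diff = human[i] - human[j]
        m_diff = metric[i] - metric[j]
        # Encode each relation as -1 (worse), 0 (tie), or 1 (better).
        h_rel = (h_diff > 0) - (h_diff < 0)
        m_rel = 0 if abs(m_diff) <= epsilon else (1 if m_diff > 0 else -1)
        correct += int(h_rel == m_rel)
        total += 1
    return correct / total if total else 0.0


def calibrate_ties(human, metric):
    """Return (epsilon, accuracy) for the threshold with the highest accuracy.

    Candidates are 0 and every observed absolute pairwise metric difference;
    when several thresholds reach the same accuracy, the smallest is kept.
    """
    candidates = {0.0}
    for i, j in combinations(range(len(metric)), 2):
        candidates.add(abs(metric[i] - metric[j]))
    best_eps, best_acc = 0.0, -1.0
    for eps in sorted(candidates):
        acc = pairwise_accuracy(human, metric, eps)
        if acc > best_acc:
            best_eps, best_acc = eps, acc
    return best_eps, best_acc


# Toy data (invented): the human scores tie on the first two translations,
# while the metric scores never tie exactly.
human_scores = [0, 0, -5, -1]
metric_scores = [90, 88, 40, 70]

print(pairwise_accuracy(human_scores, metric_scores))
print(calibrate_ties(human_scores, metric_scores))
```

In this toy case the uncalibrated metric is penalized on the one human-tied pair because it never outputs an exact tie; after calibration, the small score gap on that pair is treated as a tie and the pair is counted as correct. In practice the threshold would presumably be selected on data separate from the evaluation set, which this sketch does not model.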
