- The paper shows that open-source LLMs can raise their success rate on tool manipulation tasks by up to 90% through programmatic data generation, system prompts, and in-context demonstration retrievers.

- It details an action generation framework that uses API documentation and in-context demonstrations to improve the accuracy of tool invocation.

- Empirical results on the ToolBench benchmark indicate that minimal human supervision yields performance comparable to closed LLM APIs.

Introduction

The ability of LLMs to manipulate software tools has attracted significant attention. Most work in this area, however, relies on closed LLM APIs, which raises security and robustness concerns in industrial settings. This paper examines whether open-source LLMs, augmented with programmatic data generation, system prompts, and in-context demonstration retrievers, can match the capabilities of closed LLM APIs with minimal human supervision.

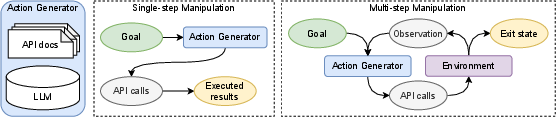

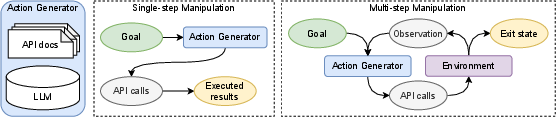

The tool manipulation framework treats the LLM as an action generator: the model is given access to API documentation and interfaces with software by emitting API calls. The study covers both single-step and multi-step scenarios. In the multi-step case, the LLM iteratively generates API calls based on environmental feedback until the goal is achieved.
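The iterative loop described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: `run_episode`, `fake_llm`, `fake_env`, the prompt layout, and the `open_door()` / `walk_through()` APIs are all hypothetical names chosen for the example, and the model is replaced by a hard-coded stub so the code runs on its own.

```python
from typing import Callable, List, Tuple


def run_episode(
    goal: str,
    api_docs: str,
    generate_action: Callable[[str], str],
    execute: Callable[[str], Tuple[str, bool]],
    max_steps: int = 5,
) -> List[Tuple[str, str]]:
    """Generate API calls until the environment reports success or steps run out."""
    history: List[Tuple[str, str]] = []
    for _ in range(max_steps):
        # Prompt = documentation + goal + every (action, feedback) pair so far.
        lines = [api_docs, f"Goal: {goal}"]
        for action, feedback in history:
            lines.append(f"Action: {action}")
            lines.append(f"Feedback: {feedback}")
        lines.append("Action:")
        action = generate_action("\n".join(lines)).strip()
        feedback, done = execute(action)
        history.append((action, feedback))
        if done:
            break
    return history


# Hypothetical stand-ins so the sketch runs without a model or a real tool.
def fake_llm(prompt: str) -> str:
    # Open the door first; once feedback shows it is open, walk through.
    return "walk_through()" if "door is open" in prompt else "open_door()"


def fake_env(action: str) -> Tuple[str, bool]:
    if action == "open_door()":
        return "door is open", False
    if action == "walk_through()":
        return "goal reached", True
    return "unknown action", False


DOCS = "APIs: open_door(), walk_through()"
episode = run_episode("leave the room", DOCS, fake_llm, fake_env)
for action, feedback in episode:
    print(action, "->", feedback)
```

Under these assumptions, the single-step scenario is the same loop with `max_steps=1`: the model sees only the documentation and the goal, and its first API call is the final answer.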

Figure 1: Tool manipulation setup with API documentation access for LLMs to function as action generators.

Challenges in Open-source LLMs

Open-source LLMs encounter several hurdles in tool manipulation, primarily in API selection, argument populating, and the generation of executable code. While closed LLMs like GPT-4 exhibit innate knowledge of APIs, open-source models struggle to select and invoke the correct APIs without explicit examples. Even when they select the right API, they often fail to populate its arguments with correct values.

Enhancement Techniques

To mitigate these issues, the paper revisits classical LLM techniques and adapts them to tool manipulation tasks:

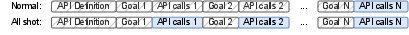

- Model Alignment with Programmatic Data Generation: This involves instruction tuning with synthetic data created from templates, enabling models to learn API usage effectively.

- In-context Demonstration Retrievers: Drawing from retrieval-augmented generation, this module selects contextually similar demonstration examples to inform the model during inference.

- System Prompts: Enhanced system prompts give the model clear guidelines that constrain it to output only executable API calls.
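To make the inference-time pieces concrete, here is a minimal sketch of how a demonstration retriever and a system prompt might be combined into one prompt. Everything in it is an assumption made for illustration: the wording of `SYSTEM_PROMPT`, the demonstrations, the API names, and the use of token-overlap (Jaccard) similarity as a simple stand-in for whatever retriever a real system would use.

```python
from typing import List, Tuple

Demo = Tuple[str, str]  # (goal text, API call that achieves it)

# Hypothetical system prompt restricting output to a single executable call.
SYSTEM_PROMPT = (
    "You are an API caller. Respond with exactly one executable API call "
    "and no explanation."
)


def _tokens(text: str) -> set:
    return set(text.lower().split())


def retrieve(query: str, demos: List[Demo], k: int = 2) -> List[Demo]:
    """Return the k demos whose goals are most similar to the query (Jaccard)."""
    q = _tokens(query)

    def score(demo: Demo) -> float:
        d = _tokens(demo[0])
        union = q | d
        return len(q & d) / len(union) if union else 0.0

    return sorted(demos, key=score, reverse=True)[:k]


def build_prompt(query: str, api_docs: str, demos: List[Demo], k: int = 2) -> str:
    """Assemble system prompt, API docs, retrieved demos, and the new goal."""
    parts = [SYSTEM_PROMPT, api_docs]
    for goal, call in retrieve(query, demos, k):
        parts.append(f"Goal: {goal}\nAction: {call}")
    parts.append(f"Goal: {query}\nAction:")
    return "\n\n".join(parts)


# Hypothetical demonstration pool for illustration only.
DEMOS = [
    ("get the weather in Paris", 'get_weather(city="Paris")'),
    ("book a table for two", "book_table(party_size=2)"),
    ("get the forecast for Tokyo tomorrow", 'get_forecast(city="Tokyo", days=1)'),
]

prompt = build_prompt(
    "get the weather in Berlin",
    "APIs: get_weather, get_forecast, book_table",
    DEMOS,
    k=1,
)
print(prompt)
```

Keeping `k` small limits prompt length; the intent is that the model sees a worked example of the same API before it has to populate the arguments itself.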

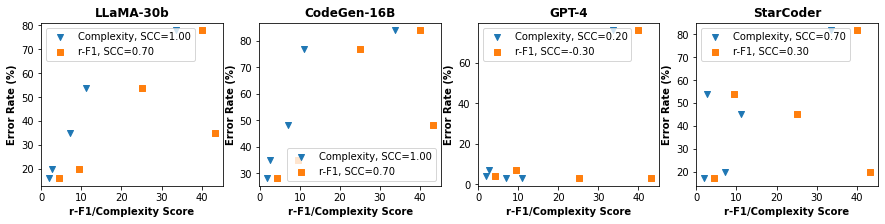

Figure 2: Use of all-shot loss for model alignment, highlighting the concatenation of examples and backpropagation through blue actions.
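The training data for model alignment is described as synthetic data created from templates. A minimal sketch of that idea, assuming a template is a pair of format strings (one for the natural-language goal, one for the API call) filled from lists of slot values, might look like the following. The function `generate_examples`, the `get_weather` API, and the slot values are hypothetical and are not taken from the paper.

```python
import itertools
from typing import Dict, List


def generate_examples(
    goal_template: str,
    call_template: str,
    slot_values: Dict[str, List[str]],
) -> List[Dict[str, str]]:
    """Fill both templates with every combination of slot values."""
    names = list(slot_values)
    examples = []
    for combo in itertools.product(*(slot_values[n] for n in names)):
        slots = dict(zip(names, combo))
        examples.append(
            {
                "goal": goal_template.format(**slots),
                "action": call_template.format(**slots),
            }
        )
    return examples


# Hypothetical template for a weather API; values are illustrative only.
examples = generate_examples(
    goal_template="What is the weather in {city} in {unit}?",
    call_template='get_weather(city="{city}", unit="{unit}")',
    slot_values={"city": ["Paris", "Tokyo"], "unit": ["celsius", "fahrenheit"]},
)
for ex in examples:
    print(ex["goal"], "=>", ex["action"])
```

Because the goal and the call are filled from the same slot assignment, each generated pair is consistent by construction, which is what makes this kind of data cheap to produce for instruction tuning.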

Evaluation and Results

ToolBench, a benchmark suite of diverse software tools introduced in the paper, serves as the testing ground for these enhancements. In the empirical evaluation, the enhancements raised the success rate of open-source LLMs by up to 90%, making them competitive with GPT-4 on half of the benchmarked tools. The supervision required is modest: roughly one developer day per tool for data curation and example crafting, which underscores the practical viability of the approach.

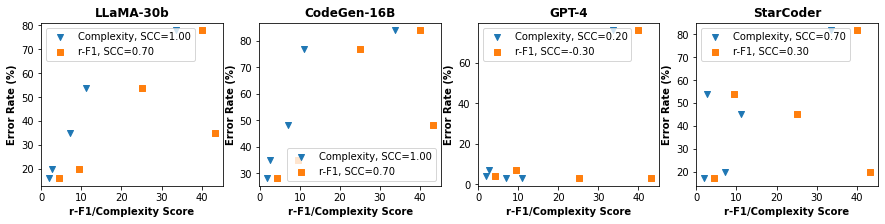

Figure 3: Spearman's correlation coefficient comparisons for complexity score and error rate, showcasing improvements in tasks.

Conclusion

This research shows that open-source LLMs can attain tool manipulation capabilities comparable to those of closed LLM APIs. By addressing the key challenges with programmatic alignment and in-context demonstrations, these models achieve substantial improvements with a practical amount of human supervision. Future work could build on these insights by exploring richer integration of feedback mechanisms and hybrid learning across tasks.