- The paper introduces S2ADet, a novel detector that unifies spectral and spatial feature aggregation for enhanced hyperspectral object detection.

- It employs a dual-stream design with HID and SSA modules to reduce redundancy and improve the fusion of complementary cues.

- Empirical results on the large-scale HOD3K dataset show significant mAP improvements over established baselines, demonstrating robustness.

Unified Spectral-Spatial Feature Aggregation for Hyperspectral Object Detection: The S2ADet Framework

Introduction

Hyperspectral imaging (HSI) provides detailed spectral information beyond RGB by capturing scenes across numerous contiguous spectral bands, yielding enhanced material and class discriminability crucial for fine-grained object detection. However, prevailing object detection methods for HSI focus predominantly on either spectral or spatial cues and typically neglect the complementary relationship between them. Moreover, redundancy among closely spaced bands degrades detection performance, and progress in the field is constrained by the lack of large, diverse HSI datasets for object detection.

The paper "Object Detection in Hyperspectral Image via Unified Spectral-Spatial Feature Aggregation" (2306.08370) addresses these challenges through:

- The design of S2ADet, an object detection architecture leveraging spectral-spatial aggregation via dedicated module design,

- Introduction of a comprehensive HSI object detection dataset (HOD3K) far larger and more diverse than prior benchmarks,

- Extensive quantitative and qualitative analyses establishing S2ADet's empirical superiority and robustness on multiple benchmarks.

Problem Definition and Dataset Limitations

Most existing hyperspectral detection approaches are tuned for either target detection or classification, leveraging spectral features on a per-pixel basis or naively integrating spatial structure. These techniques do not fully exploit high-level semantic spatial patterns and cannot effectively disentangle redundant spectral information, especially given the highly correlated nature of adjacent bands in HSI.

Conventional datasets, such as HYDICE, San Diego, and HOD-1, offer limited scene diversity, insufficient annotation quality, or too few samples for training and benchmarking modern detectors. The paper addresses this by introducing HOD3K, a systematic, large-scale dataset curated for HSI object detection.

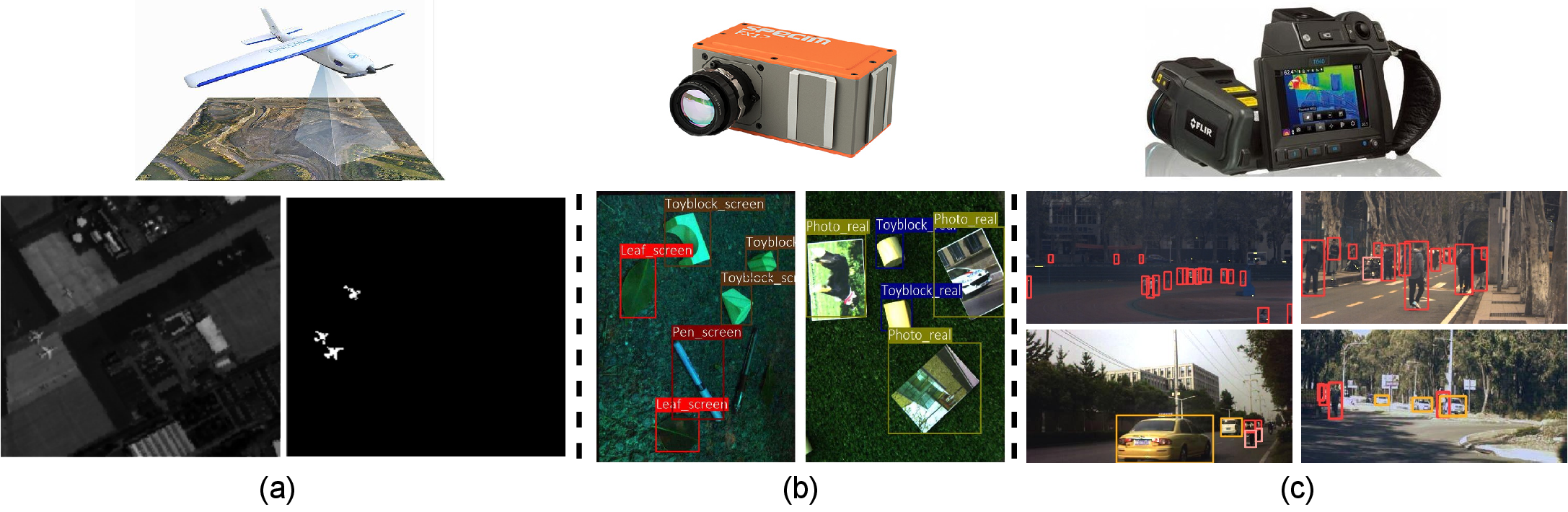

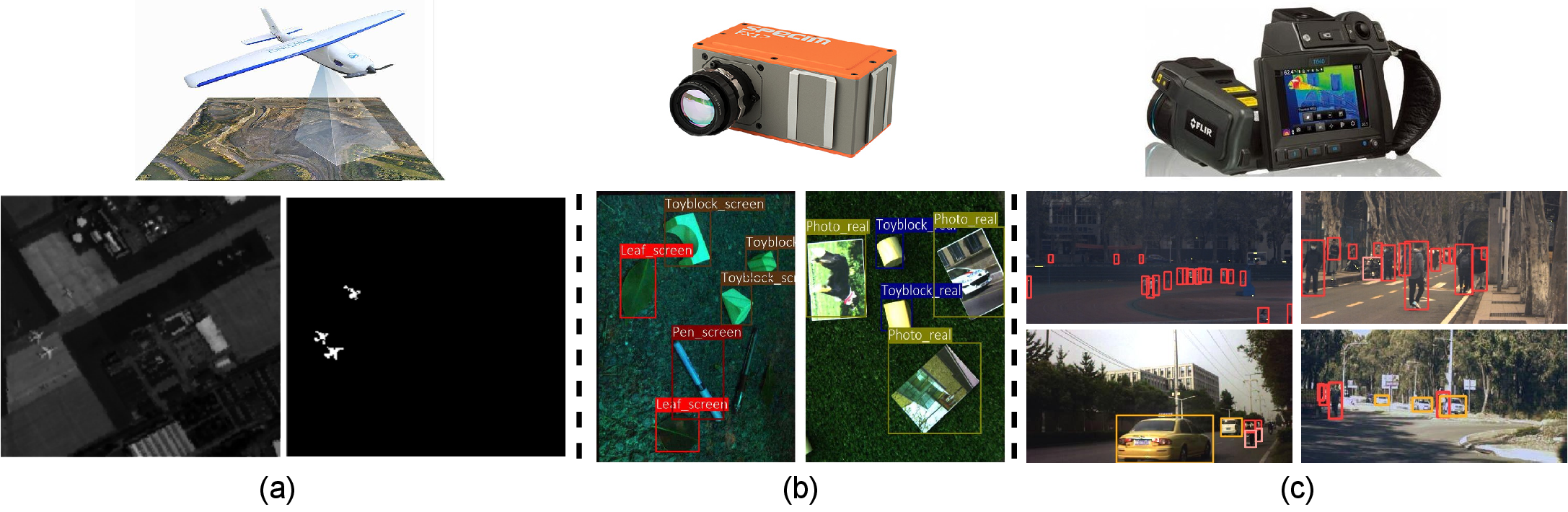

Figure 1: Comparison of existing benchmarks: (a) pixel-level annotation example from San Diego, (b) example from HOD-1 with posed objects, and (c) the diverse HOD3K dataset displaying real-world scenarios with bounding box annotations.

S2ADet Architecture

Core Architectural Design

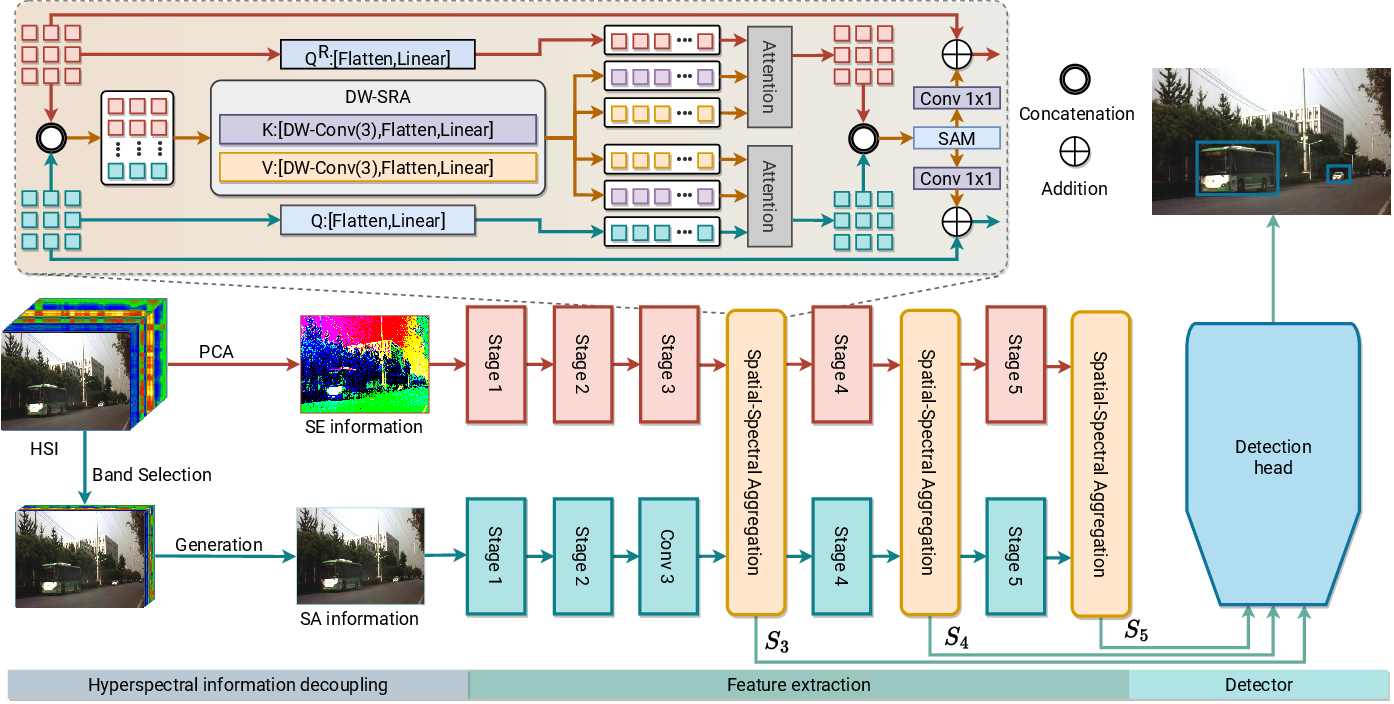

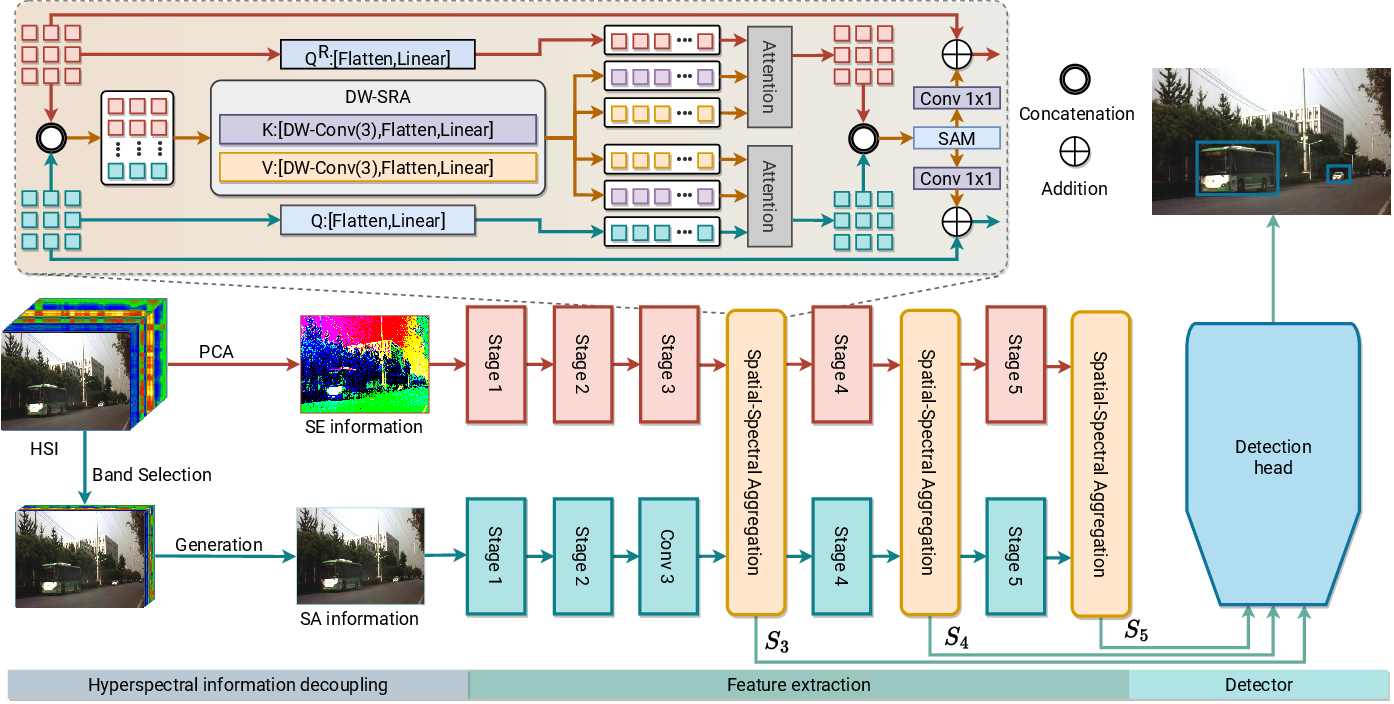

S2ADet is structured around a pipeline that explicitly decouples and then re-aggregates spectral and spatial information for object detection in HSI. The architecture’s pivotal components include:

- Hyperspectral Information Decoupling (HID) Module: This reduces band redundancy using band selection (spatial aggregation, SA) and principal component analysis (spectral aggregation, SE), generating two representative inputs.

- Two-Stream Network: Independently extracts deep features from spatially and spectrally aggregated inputs.

- Spectral-Spatial Aggregation (SSA) Module: Embedded in multiple network stages, this module fuses spectral and spatial features via context-aware operations, including attention mechanisms and spatial reduction.

- One-Stage Detection Head: Adapts feature pyramid conventions to produce per-class bounding-box predictions robustly.

Figure 2: S2ADet consists of HID, a two-stream backbone, SSA modules, and a detection head. The HID module removes redundant spectral information, while SSA enables spectral-spatial feature interaction.
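The decoupling step performed by HID can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the variance-based band ranking is a stand-in for the paper's parameter-free band selection, the output width `k=3` is an assumption, and the function names `select_bands` and `pca_project` are hypothetical.

```python
import numpy as np

def select_bands(cube, k=3):
    """Stand-in band selection: keep the k highest-variance bands.

    cube has shape (H, W, B). Returns an (H, W, k) array and the
    chosen band indices in ascending order.
    """
    variances = cube.reshape(-1, cube.shape[-1]).var(axis=0)
    idx = np.sort(np.argsort(variances)[-k:])
    return cube[..., idx], idx

def pca_project(cube, k=3):
    """Project each pixel's spectrum onto the top-k principal components."""
    h, w, b = cube.shape
    pixels = cube.reshape(-1, b).astype(np.float64)
    centered = pixels - pixels.mean(axis=0)
    # Rows of vt are principal directions, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return (centered @ vt[:k].T).reshape(h, w, k)

rng = np.random.default_rng(0)
cube = rng.random((32, 64, 16))         # toy cube: 16 bands, reduced spatial size
sa, band_idx = select_bands(cube, k=3)  # input built by band selection
se = pca_project(cube, k=3)             # input built by PCA
print(sa.shape, se.shape, band_idx)
```

Both outputs have the same spatial size as the input cube but far fewer channels, which is what lets each stream of a two-stream backbone consume a compact input.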

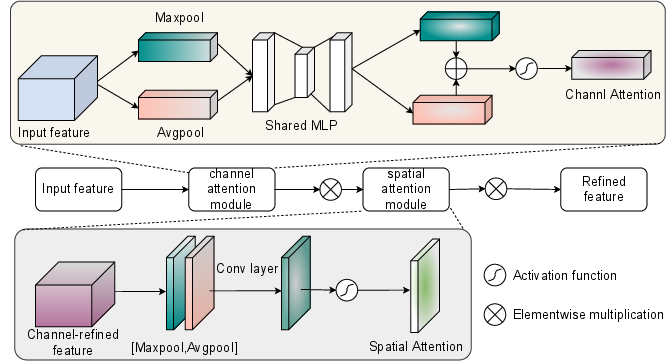

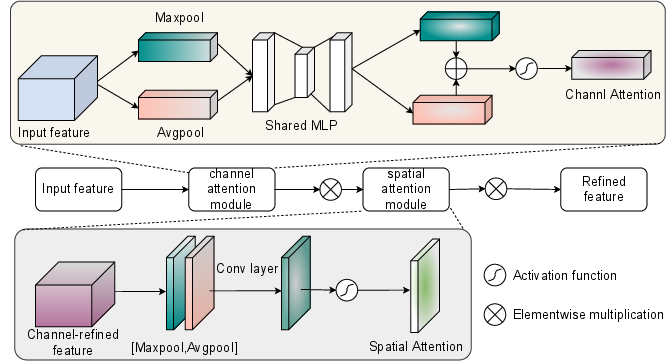

The HID module employs parameter-free band selection to retain informative spatial bands and PCA for compact spectral encoding. The SSA module then fuses the resulting deep features using both channel and spatial attention, as shown in Figure 3.

Figure 3: The SAM attention module integrates channel and spatial attention for refined aggregation of hyperspectral features.
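As a rough illustration of combining channel and spatial attention when fusing two feature streams, the sketch below gates a merged feature map first per channel and then per location. It is a simplified, parameter-free stand-in: the learned layers an actual attention module would contain are omitted, the element-wise sum used to merge the streams is an assumption, and all function names are hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_attention(feat):
    """Scale each channel by a gate computed from its global average. feat: (C, H, W)."""
    gate = sigmoid(feat.mean(axis=(1, 2)))         # shape (C,)
    return feat * gate[:, None, None]

def spatial_attention(feat):
    """Scale each location by a gate computed from channel-wise mean and max maps."""
    pooled = feat.mean(axis=0) + feat.max(axis=0)  # shape (H, W)
    return feat * sigmoid(pooled)[None, :, :]

def fuse(spectral_feat, spatial_feat):
    """Sum the two streams, then apply channel and spatial gating in sequence."""
    merged = spectral_feat + spatial_feat
    return spatial_attention(channel_attention(merged))

rng = np.random.default_rng(0)
f_se = rng.standard_normal((8, 16, 16))  # toy features from the spectral stream
f_sa = rng.standard_normal((8, 16, 16))  # toy features from the spatial stream
fused = fuse(f_se, f_sa)
print(fused.shape)
```

Because every gate lies strictly between 0 and 1, the fused map can only attenuate the merged features; a trained module would instead learn which channels and locations to emphasize.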

This unified spectral-spatial pipeline is tailored for the unique characteristics of hyperspectral data, facilitating more discriminative and less redundant representations for detection tasks.

HOD3K: A Large-Scale Hyperspectral Detection Benchmark

To narrow the gap in scale between hyperspectral and RGB datasets, the authors built HOD3K, which comprises 3,242 hyperspectral images (16 bands, 512×256 pixels each) across multiple environments and covers 15,000+ annotated objects in three classes (people, cars, bikes). This dataset eclipses previous efforts in scale, diversity, and annotation fidelity, providing a pivotal resource for future development and fair benchmarking.

Empirical Results and Analysis

S2ADet achieves new state-of-the-art results on both HOD3K and the HOD-1 dataset, outperforming established baselines across mean average precision (mAP), per-class precision, and recall metrics:

- On HOD3K: S2ADet outperforms Faster RCNN and YOLOv5 by margins of +2.9% and +3.5% mAP, respectively. Across all object types, S2ADet delivers the highest detection accuracy (AP50 of 87.2% for people, 97.7% for bikes, and 95.3% for cars).

- On HOD-1: S2ADet surpasses the previous best result on this dataset by +3.1% mAP, despite operating on a more compact, lower-dimensional input.
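For readers unfamiliar with the metric, the "50" in AP50 and mAP50 refers to an intersection-over-union (IoU) threshold of 0.5: a predicted box counts as a correct detection only if it overlaps a ground-truth box of the same class by at least that much. The sketch below shows just this matching criterion, assuming boxes in `(x1, y1, x2, y2)` format; the full AP computation over a precision-recall curve is omitted, and the helper names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def is_true_positive(pred, truth, threshold=0.5):
    """A prediction matches a ground-truth box when IoU reaches the threshold."""
    return iou(pred, truth) >= threshold

truth = (0, 0, 10, 10)
good = (0, 0, 10, 8)    # intersection 80, union 100
poor = (5, 5, 15, 15)   # intersection 25, union 175
print(iou(good, truth), is_true_positive(good, truth))  # 0.8 True
print(is_true_positive(poor, truth))                    # False
```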

Ablation Studies

Ablation experiments demonstrate:

- Joint spectral-spatial inputs from HID, together with their interaction in SSA, improve detection substantially over single-stream or naive dual-input variants;

- The SSA module provides significant gains over spatial attention in isolation (+1.8% mAP);

- The synergy between HID and SSA is particularly pronounced, yielding combined improvements exceeding 4% mAP on HOD3K.

Qualitative Analysis

Qualitative inspection shows S2ADet’s capacity to:

- Disambiguate occluded or visually similar objects via context aggregation,

- Reduce background confusion and mitigate missed detections, especially for small and overlapping targets.

Figure 4: S2ADet substantially outperforms the baseline, suppressing spurious detections on background clutter and accurately localizing occluded or small objects.

Figure 5: S2ADet detects people, bikes, and cars (blue, yellow, red) in diverse scenes after hyperspectral-to-pseudo-color conversion for visualization.
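A hyperspectral-to-pseudo-color conversion of the kind mentioned in the Figure 5 caption can be sketched as follows. The source does not describe the exact procedure, so this is only one plausible approach: three bands are picked and min-max scaled to 8-bit RGB. The band indices `(12, 7, 2)` and the function name `to_pseudo_color` are hypothetical.

```python
import numpy as np

def to_pseudo_color(cube, bands=(12, 7, 2)):
    """Map three bands of an (H, W, B) cube to an 8-bit RGB image.

    Each selected band is min-max scaled independently to the range 0..255.
    """
    rgb = cube[..., list(bands)].astype(np.float64)
    lo = rgb.min(axis=(0, 1), keepdims=True)
    hi = rgb.max(axis=(0, 1), keepdims=True)
    scaled = (rgb - lo) / np.maximum(hi - lo, 1e-12)  # guard against flat bands
    return (scaled * 255).round().astype(np.uint8)

rng = np.random.default_rng(0)
cube = rng.random((32, 64, 16))  # toy 16-band cube
image = to_pseudo_color(cube)
print(image.shape, image.dtype)
```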

Limitations are apparent in particularly dense or highly-overlapped scenarios, where small objects or close-proximity instances may be missed.

Implications and Future Directions

Practical Impact

S2ADet demonstrates clear value for domains in which spectral material signatures and fine-grained localization are crucial (e.g., remote sensing, environmental monitoring, surveillance). The methodology bridges a core gap by explicitly and efficiently fusing spectral and spatial signals, which prototype-level detectors or RGB-adapted baselines systematically neglect.

The introduction of HOD3K also provides a foundation for the broader community to develop generalizable hyperspectral detection models, catalyzing research that can translate advances from RGB domains into the HSI context reliably.

Theoretical Extensions

S2ADet’s modular approach, which separates decoupling, attention-driven aggregation, and detection, can be extended by:

- Incorporating transformer architectures for long-range spectral-spatial modeling,

- Exploring self-supervised or semi-supervised pipelines (given HSI data annotation cost),

- Adapting the dual-stream strategy to accommodate multispectral, multimodal, or temporal (video) hyperspectral data.

Addressing small object detection and severe occlusions through hierarchical context modeling or cross-scale attention remains an open research topic identified by this study’s failure analyses.

Conclusion

This paper introduces S2ADet, a dedicated hyperspectral object detector leveraging explicit spectral-spatial feature aggregation. By combining a purpose-built network architecture with the largest and most diverse HSI object detection dataset to date, the framework achieves consistent and significant improvements over state-of-the-art baselines in both accuracy and robustness. These contributions establish new foundations for hyperspectral object detection and open avenues for methodological and application-driven developments in AI-based remote sensing and beyond.