Mutually Guided Few-shot Learning for Relational Triple Extraction

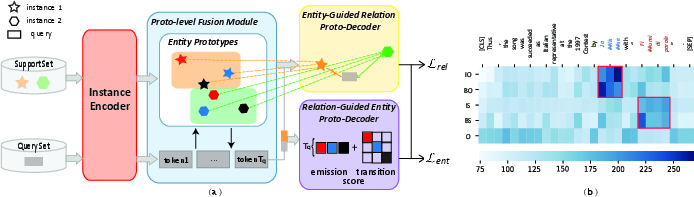

Abstract: Knowledge graphs (KGs), which contain large numbers of entity-relation-entity triples, provide rich information for downstream applications. Although extracting triples from unstructured text has been widely explored, most existing methods require a large number of labeled instances, and their performance drops dramatically when only a few labeled examples are available. To tackle this problem, we propose the Mutually Guided Few-shot learning framework for Relational Triple Extraction (MG-FTE). Specifically, our method consists of two decoders. An entity-guided relation proto-decoder first classifies the relations, and a relation-guided entity proto-decoder then extracts entities based on the classified relations. To connect entities and relations, we design a proto-level fusion module that boosts the performance of both entity extraction and relation classification. Moreover, we introduce a new cross-domain few-shot triple extraction task. Extensive experiments show that our method outperforms many state-of-the-art methods by 12.6 F1 points on FewRel 1.0 (single-domain) and 20.5 F1 points on FewRel 2.0 (cross-domain).
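The abstract names the two decoders but does not define them, so what follows is only a minimal sketch, not MG-FTE itself. It assumes that each "proto-decoder" works like a Prototypical Network (Snell et al., 2017). In that setup, support embeddings are averaged into one prototype per class, and each query is assigned to the nearest prototype by squared Euclidean distance. The sketch shows only the ordering the abstract describes: the relation is classified first, and entities are then tagged conditioned on that relation. Several details are hypothetical. The embeddings are toy vectors standing in for encoder outputs. The relation labels (`founded_by`, `born_in`), the BIO-style tags (`B-HEAD`, `B-TAIL`, `O`), and the helper names (`prototypes`, `nearest`, `extract_triple`) are all invented for illustration. The proto-level fusion module is not modeled.

```python
# Hypothetical sketch: generic prototype-based classification (Prototypical
# Networks style), NOT the authors' MG-FTE implementation.
from collections import defaultdict
from math import fsum

Vector = list[float]


def mean(vectors: list[Vector]) -> Vector:
    """Element-wise mean of equal-length vectors, i.e. a class prototype."""
    return [fsum(column) / len(vectors) for column in zip(*vectors)]


def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two vectors."""
    return fsum((x - y) ** 2 for x, y in zip(a, b))


def prototypes(support: list[tuple[Vector, str]]) -> dict[str, Vector]:
    """Group support embeddings by label and average each group."""
    groups: dict[str, list[Vector]] = defaultdict(list)
    for vec, label in support:
        groups[label].append(vec)
    return {label: mean(vecs) for label, vecs in groups.items()}


def nearest(query: Vector, protos: dict[str, Vector]) -> str:
    """Return the label whose prototype is closest to the query."""
    return min(protos, key=lambda label: sq_dist(query, protos[label]))


def extract_triple(sent_vec, token_vecs, rel_support, tag_support):
    """Two-stage decoding: relation first, then tokens guided by that relation."""
    # Stage 1: classify the sentence's relation against sentence-level prototypes.
    relation = nearest(sent_vec, prototypes(rel_support))
    # Stage 2 (assumed conditioning): tag tokens using prototypes built only
    # from the support tokens annotated for the predicted relation.
    tag_protos = prototypes(tag_support[relation])
    return relation, [nearest(tok, tag_protos) for tok in token_vecs]


# Toy 2-d embeddings standing in for encoder outputs (made-up values).
rel_support = [([1.0, 0.0], "founded_by"), ([0.9, 0.1], "founded_by"),
               ([0.0, 1.0], "born_in"), ([0.1, 0.9], "born_in")]
tag_support = {
    "founded_by": [([1.0, 1.0], "B-HEAD"), ([0.0, 0.0], "O"), ([-1.0, 1.0], "B-TAIL")],
    "born_in": [([1.0, -1.0], "B-HEAD"), ([0.0, 0.0], "O"), ([-1.0, -1.0], "B-TAIL")],
}
result = extract_triple([0.8, 0.2], [[0.9, 0.8], [0.1, 0.0], [-0.9, 1.1]],
                        rel_support, tag_support)
print(result)  # -> ('founded_by', ['B-HEAD', 'O', 'B-TAIL'])
```

This sketch limits stage 2 to the predicted relation's support set. That is one simple way to make entity extraction depend on the classified relation. The abstract does not say how MG-FTE actually conditions its entity decoder, and it does not say how the fusion module feeds entity information back into relation classification.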

