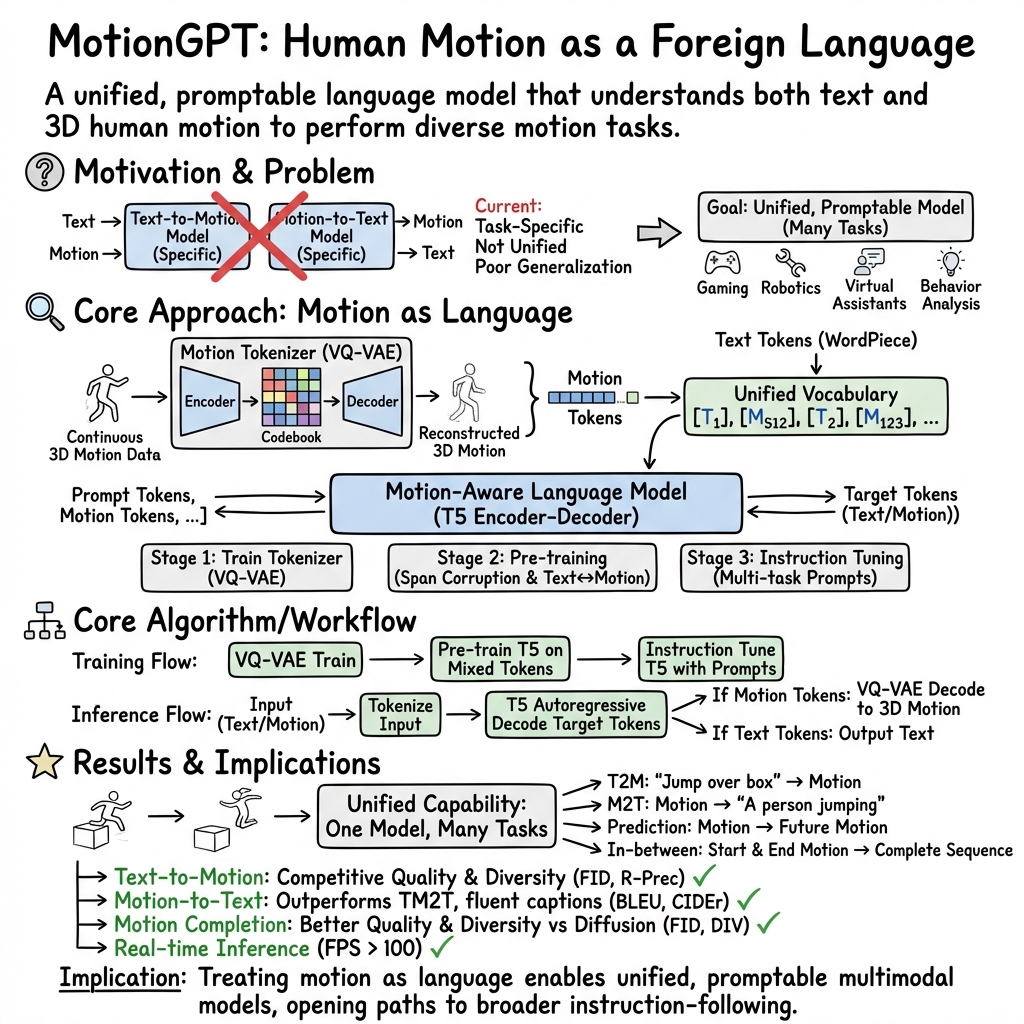

MotionGPT: Human Motion as a Foreign Language

Abstract: Though the advancement of pre-trained LLMs unfolds, the exploration of building a unified model for language and other multi-modal data, such as motion, remains challenging and untouched so far. Fortunately, human motion displays a semantic coupling akin to human language, often perceived as a form of body language. By fusing language data with large-scale motion models, motion-language pre-training that can enhance the performance of motion-related tasks becomes feasible. Driven by this insight, we propose MotionGPT, a unified, versatile, and user-friendly motion-LLM to handle multiple motion-relevant tasks. Specifically, we employ the discrete vector quantization for human motion and transfer 3D motion into motion tokens, similar to the generation process of word tokens. Building upon this "motion vocabulary", we perform language modeling on both motion and text in a unified manner, treating human motion as a specific language. Moreover, inspired by prompt learning, we pre-train MotionGPT with a mixture of motion-language data and fine-tune it on prompt-based question-and-answer tasks. Extensive experiments demonstrate that MotionGPT achieves state-of-the-art performances on multiple motion tasks including text-driven motion generation, motion captioning, motion prediction, and motion in-between.

Explain it Like I'm 14

What is this paper about?

This paper introduces MotionGPT, a single computer model that treats human movement (like walking, jumping, or kicking) as if it were a language. Just like sentences are made of words with grammar, human motions are broken into small “motion words” and combined using rules. MotionGPT learns to understand and generate both text and movement, so it can do many tasks: write a caption for a motion, create a motion from a sentence, fill in missing parts of a motion, and predict what comes next in a movement.

What questions did the researchers ask?

They focused on two big questions:

- How can we connect language (text) and human motion in a way that one model can understand both?

- Can one unified model, guided by prompts (simple instructions), handle lots of different motion tasks instead of needing a different model for each task?

How did they do it?

Think of this like translating between two languages: English and “Body Language.”

Turning motion into “words”

- Imagine recording a person moving in 3D. That’s a long, detailed sequence.

- The team built a “motion tokenizer” (like a translator) that turns these movements into short, reusable pieces—motion tokens. You can think of tokens as Lego bricks: each brick represents a small, typical motion snippet (like “step forward” or “lift leg”).

- To do this, they use a technique called VQ-VAE. In everyday terms: it builds a dictionary of common mini-moves and then represents any motion as a sequence of dictionary entries. This makes movement easier for an LLM to read and write.
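
The dictionary lookup at the heart of this step can be sketched in a few lines. This is a minimal illustration of vector quantization only, not the authors' tokenizer: the 2-D `CODEBOOK`, the `latents` values, and the function names are all made up for the example, and a real VQ-VAE learns both the encoder and the codebook.

```python
from math import dist

# Hypothetical 2-D codebook: each entry stands for one "motion word".
CODEBOOK = [
    (0.0, 0.0),  # token 0
    (1.0, 0.0),  # token 1
    (0.0, 1.0),  # token 2
    (1.0, 1.0),  # token 3
]

def quantize(latents, codebook=CODEBOOK):
    """Map each latent vector to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)), key=lambda k: dist(z, codebook[k]))
        for z in latents
    ]

def dequantize(tokens, codebook=CODEBOOK):
    """Look the tokens back up in the codebook (what a decoder would consume)."""
    return [codebook[k] for k in tokens]

# Assumed encoder outputs for a short motion clip (illustrative values).
latents = [(0.1, -0.2), (0.9, 0.1), (0.8, 0.95), (0.2, 0.7)]
tokens = quantize(latents)
print(tokens)  # [0, 1, 3, 2]
```

Each latent vector is replaced by a single integer, which is what lets a language model treat motion as a sequence of discrete symbols.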

Teaching the model the “language”

- They take an LLM (T5/FLAN-T5), which is good at reading/writing text, and expand its vocabulary to include these new motion tokens.

- Now the model sees one big “alphabet” that includes both word pieces (for text) and motion tokens (for movement). That way, it can learn how text and motion line up, like how the sentence “a person waves twice” matches a sequence of motion tokens that perform two waves.
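
One way to picture the shared "alphabet" is as a single lookup table that holds both kinds of tokens. The sketch below is a toy under stated assumptions: the five-word text vocabulary, the codebook size of 4, and the `<motion_k>` naming are invented for illustration; only the `</som>` and `</eom>` boundary markers are taken from the paper's wording.

```python
# Toy text vocabulary; a real T5 vocabulary is far larger.
text_vocab = ["<pad>", "a", "person", "waves", "twice"]

NUM_MOTION_CODES = 4  # assumed codebook size for this sketch

# Hypothetical names for motion codes, plus start/end-of-motion markers.
motion_vocab = [f"<motion_{k}>" for k in range(NUM_MOTION_CODES)]
boundary_tokens = ["</som>", "</eom>"]

# One vocabulary covering text pieces and motion tokens.
unified_vocab = text_vocab + motion_vocab + boundary_tokens
token_to_id = {tok: i for i, tok in enumerate(unified_vocab)}

def encode_text(words):
    """Map words to ids in the unified vocabulary."""
    return [token_to_id[w] for w in words]

def encode_motion(code_indices):
    """Wrap codebook indices in boundary tokens and map them to vocabulary ids."""
    names = ["</som>"] + [f"<motion_{k}>" for k in code_indices] + ["</eom>"]
    return [token_to_id[n] for n in names]

text_ids = encode_text(["a", "person", "waves", "twice"])
motion_ids = encode_motion([0, 1, 3, 2])
print(text_ids)    # [1, 2, 3, 4]
print(motion_ids)  # [9, 5, 6, 8, 7, 10]
```

Because both sequences draw ids from the same table, the same model can read or write either one.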

Training in three steps

- Motion tokenizer training: Learn the motion “dictionary” so movements can be turned into tokens and back.

- Motion-language pre-training: Mix text and motion so the model learns the “grammar” of both and how they relate.

- Instruction tuning: Give the model lots of prompt-and-answer examples (like “Generate a motion of someone kneeling and standing up” or “Describe this motion”), so it can follow natural instructions.
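
The prompt-and-answer pairs used in the third step can be sketched as simple string templates. The two templates, the `<motion_k>` token names, and the helper functions below are hypothetical; the paper's actual template set is not reproduced here.

```python
# Hypothetical instruction templates, filled in per training example.
TEMPLATES = {
    "text_to_motion": "Generate a motion of {caption}.",
    "motion_to_text": "Describe this motion: {motion}",
}

def motion_string(code_indices):
    """Render motion codes as a token string with boundary markers."""
    body = "".join(f"<motion_{k}>" for k in code_indices)
    return f"</som>{body}</eom>"

def build_example(task, caption, code_indices):
    """Turn one (caption, motion) pair into an input/target pair for a task."""
    motion = motion_string(code_indices)
    prompt = TEMPLATES[task].format(caption=caption, motion=motion)
    target = motion if task == "text_to_motion" else caption
    return {"input": prompt, "target": target}

pair = ("someone kneeling and standing up", [0, 1, 3, 2])
t2m = build_example("text_to_motion", *pair)
m2t = build_example("motion_to_text", *pair)
print(t2m["input"])  # Generate a motion of someone kneeling and standing up.
print(m2t["target"])  # someone kneeling and standing up
```

The same paired data yields examples for both directions, which is one reason a single model can be tuned on several tasks at once.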

What can MotionGPT do?

- Text-to-motion: Turn a sentence into a 3D motion.

- Motion-to-text (captioning): Describe a given motion in words.

- Motion prediction: Given the start of a motion, guess what comes next.

- Motion in-between (completion): Fill in missing parts between the start and end of a motion.

- It can also answer simple motion-related questions using prompts.
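
Prediction and in-between differ only in which part of the token sequence the model gets to see. The following sketch shows one way to build those inputs; the `<mask>` placeholder, the 20% and 25% ratios, and the function names are assumptions chosen for illustration, not values confirmed by this summary.

```python
MASK = "<mask>"  # hypothetical placeholder, not a token name from the paper

def prediction_split(tokens, keep_ratio=0.2):
    """Condition on the start of the motion; the remainder is the target."""
    if len(tokens) < 2:
        raise ValueError("need at least 2 tokens")
    n_keep = max(1, int(len(tokens) * keep_ratio))
    return tokens[:n_keep], tokens[n_keep:]

def in_between_split(tokens, edge_ratio=0.25):
    """Keep both ends visible and hide the middle; the middle is the target."""
    if len(tokens) < 3:
        raise ValueError("need at least 3 tokens")
    n_edge = max(1, int(len(tokens) * edge_ratio))
    end_start = len(tokens) - n_edge
    hidden = tokens[n_edge:end_start]
    visible = tokens[:n_edge] + [MASK] * len(hidden) + tokens[end_start:]
    return visible, hidden

motion_tokens = list(range(10))
seen, future = prediction_split(motion_tokens)
visible, hidden = in_between_split(motion_tokens)
print(seen, future)  # [0, 1] [2, 3, 4, 5, 6, 7, 8, 9]
print(hidden)        # [2, 3, 4, 5, 6, 7]
```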

What did they find?

- One model for many tasks: MotionGPT handled all four motion tasks with the same unified system, instead of needing different models for each job.

- Strong performance: It matched or beat previous top methods on several benchmarks, especially in creating motions from text and writing captions for motions. It also did very well in predicting and completing motions.

- Instruction tuning helps: Training the model to follow prompts made it more flexible and better at multi-task performance.

- Right size matters: A medium-sized version (about 220 million parameters) worked best given the limited amount of motion data. Bigger wasn’t always better here, likely because there isn’t as much motion data as there is text on the internet.

Why this is important:

- Quality: Motions looked realistic and matched the text descriptions well.

- Diversity: It could create varied motions for the same prompt, not just one bland version.

- Generalization: It worked across different tasks without retraining a totally new model.

Why does this matter?

- Easier control: You can ask for motions using natural sentences (“make the character kick twice”) instead of complex settings. This is helpful for games, animation, and virtual reality.

- Fewer separate tools: One model can handle many motion tasks, making development simpler and faster.

- Bridges two worlds: Treating movement like language opens the door to using powerful LLMs for robots, digital characters, and virtual assistants.

Final thoughts and future impact

MotionGPT shows a promising way to use LLMs to understand and create human movement. In the future, this could:

- Help animators and game designers quickly prototype character moves from plain instructions.

- Make robots easier to command (“pick up the box and step back”).

- Improve virtual assistants that understand and demonstrate actions.

Limitations the authors note:

- It focuses on single-person, full-body motion and doesn’t yet handle detailed hand or face motion, multi-person interactions, or interactions with objects and environments.

- There isn’t as much motion data as text data, which can limit how big and powerful the model can get.

Overall, MotionGPT is an exciting step toward natural, prompt-based control of human-like motion, using the same ideas that made LLMs so successful.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of concrete gaps and open questions that remain unresolved in the paper and can guide future research:

- Motion data scale and diversity: The model is trained on ~15k sequences (HumanML3D/KIT), leaving open how performance scales with orders-of-magnitude larger, more diverse motion corpora (e.g., AMASS full, MoCap libraries, in-the-wild reconstructions).

- Model scaling behavior: Larger backbones (e.g., 770M) did not consistently improve results; it remains unclear how model size interacts with motion data availability and what scaling laws govern motion-LLMs.

- Multilingual instruction following: The approach uses English T5; cross-lingual motion generation and captioning (and multilingual tokenizers) are untested.

- Physical realism and constraints: No explicit physics or contact modeling is used; physical validity (foot skate, joint limits, self-collision, balance/contact consistency) is not evaluated or enforced.

- Human–object and scene interactions: The model does not handle objects or environment geometry; how to represent objects, affordances, and contact intents in the unified vocabulary is unresolved.

- Multi-person interactions: Joint modeling of interacting agents (coordination, proxemics, collisions) and suitable datasets/objectives for multi-person motion remain open.

- Body-part specialties and non-human motion: Faces, hands, and animals are excluded; extending the tokenizer and LLM framework to fine-grained facial/hand motion or non-human kinematics is unexplored.

- Variable skeletons and retargeting: Generalization across different skeletons, body shapes, and rigs—and a principled retargeting pipeline integrated with MotionGPT—are not addressed.

- Long-horizon and compositional instructions: The ability to follow multi-step, temporally structured instructions (e.g., “pick up, turn, walk, place”) and to compose unseen action combinations is not evaluated.

- Robustness to free-form prompts: Instruction tuning relies on templates; sensitivity to paraphrases, negation, quantifiers, temporal/style modifiers, and noisy/ambiguous prompts is unknown.

- Spatial/temporal control: Conditioning on path constraints, global trajectories, speed, duration, style, or specific contact events is not supported; mechanisms for honoring hard constraints are absent.

- Motion length control and termination: How to precisely control output duration and prevent long-term drift is unspecified; length-conditioning or end-of-sequence criteria need study.

- Tokenization design trade-offs: The VQ-VAE codebook size, downsampling rate, and discretization granularity may lose fine kinematic detail; comparisons with continuous or hybrid tokenizers and variable-rate tokenization are missing.

- Codebook interpretability and utilization: Whether discrete “motion words” align with meaningful kinematic primitives, how to avoid codebook collapse, and how to improve semantic disentanglement are open questions.

- Training objectives for mixed tokens: The choice of masked-span objective and supervised translation is not compared to alternatives (e.g., contrastive, autoregressive next-token, denoising, alignment losses); optimal objectives/curricula remain unknown.

- Language capability degradation: Instruction tuning degraded pure text generation; techniques (adapters, multi-task mixing, EWC, regularizers) to preserve language ability while adding motion skills are not explored.

- Diversity vs. faithfulness: Mechanisms to promote diverse yet instruction-consistent generations (e.g., conditional temperature, nucleus sampling, guidance) and to avoid mode collapse are not studied in depth.

- Evaluation metric adequacy: Reliance on feature-based FID/retrieval metrics may not reflect instruction adherence or physical realism; standardized metrics for contact/balance, action-count accuracy, left/right correctness, and human ratings are needed.

- Error and failure analysis: The paper lacks a taxonomy of common failure modes (e.g., incorrect repetition counts, left/right confusion, style misinterpretation, contact artifacts) and their causes.

- Uncertainty and ambiguity handling: There is no mechanism to detect underspecified prompts or provide calibrated confidence/ambiguity estimates in generated motions or captions.

- Noisy or partial motion inputs: Robustness to noise, missing joints, domain shifts (e.g., video/IMU-derived motions) beyond simple in-betweening has not been assessed.

- Modality extensions: Integration with images/videos/audio (vision-language-motion) and cross-modal pretraining (e.g., CLIP-like) for richer supervision is unaddressed.

- Motion–language Q&A evaluation: Although QA-style prompts are shown, there is no benchmark for motion-grounded question answering; dataset and metrics for QA fidelity are absent.

- Real-time/interactive use: Inference latency and throughput for interactive systems are not reported; non-autoregressive decoding or caching strategies for real-time control remain open.

- Hybrid modeling with diffusion: Whether combining diffusion priors (for realism) with LLMs (for instruction following) yields superior quality/control is unexplored.

- Data biases and safety: Potential biases in motion/text datasets and policies for rejecting harmful/unsafe motion requests are not discussed.

- Benchmark standardization: The “general motion benchmark” is not fully specified; standardized multi-task protocols (lengths, conditions, train/test splits, metrics) across datasets are needed.

- Supervision efficiency: Dependence on paired text-motion data for instruction tuning limits scale; leveraging weak/implicit supervision and unpaired motion data more effectively is an open direction.
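
As a concrete reference for the "diversity vs. faithfulness" item above, nucleus (top-p) sampling is one of the decoding strategies it names. The sketch below is a generic, minimal version over a toy probability list; it is not taken from the paper, and `nucleus_sample` and its parameters are names chosen for this example.

```python
import random

def nucleus_sample(probs, top_p=0.9, rng=random):
    """Sample an index from the smallest set of tokens whose total mass reaches top_p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in ranked:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    # random.choices renormalizes the weights of the kept tokens.
    weights = [probs[i] for i in kept]
    return rng.choices(kept, weights=weights, k=1)[0]

# Toy next-token distribution over four motion tokens.
probs = [0.5, 0.3, 0.15, 0.05]
rng = random.Random(0)
samples = [nucleus_sample(probs, top_p=0.75, rng=rng) for _ in range(200)]
print(sorted(set(samples)))  # [0, 1]
```

Lowering `top_p` trims unlikely tokens and favors faithfulness; raising it admits more of the tail and favors diversity.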

Practical Applications

Immediate Applications

- Text-to-motion asset generation for content creation (gaming, animation, VR/AR)

  - Description: Rapidly author prototype or production-ready 3D character motions (“walk, turn, wave,” “do three jumping jacks”) via natural-language prompts; retarget outputs to studio rigs for NPC behaviors, cutscenes, previsualization, and choreography.

  - Tools/products/workflows: MotionGPT API; Unity/Unreal/Blender plugins; motion retargeting pipelines (BVH/FBX export); prompt libraries for common actions; batch generation for style variants.

  - Assumptions/dependencies: High-quality retargeting between MotionGPT’s skeleton and production rigs; GPU inference capacity; motion fidelity sufficient for non-contact scenes; coverage limited to single-person, full-body motions without detailed hands/face or object interactions.

- Motion captioning for mocap management and search (VFX, game studios, academia)

  - Description: Auto-generate consistent textual descriptions and tags for existing motion-capture libraries to enable semantic search, retrieval, and dataset cleaning.

  - Tools/products/workflows: Indexing service using MotionGPT tokenization + embeddings; search UI integrated with asset libraries; caption QA workflows.

  - Assumptions/dependencies: Motion data in supported 3D skeleton format; optionally, video-to-motion preprocessing (pose estimation) for non-mocap sources; domain-specific vocabulary tuning.

- Motion completion and in-between for animation pipelines

  - Description: Fill gaps in mocap recordings, smooth transitions between clips, or predict future frames for timeline editing and blending.

  - Tools/products/workflows: DCC tool plugins that offer “auto-complete” for motion sequences; batch repair for noisy segments; timeline-aware blending.

  - Assumptions/dependencies: Acceptable FID/diversity for production thresholds; relies on current single-person body motions; no guarantees of contact stability or precise foot locking.

- Instruction-driven motion QA and examples in education (education, e-learning)

  - Description: Generate demonstrative motions from lesson text (e.g., physical education, dance steps, basic biomechanics), or caption student motion recordings for feedback and rubric alignment.

  - Tools/products/workflows: Classroom/learning app integration; templated prompts for curricula; visualization widgets for 3D playback.

  - Assumptions/dependencies: Pedagogical validation; alignment with age/ability levels; minimal detailed hand/face nuance; motion capture or pose-estimation setup for student recordings.

- Embodied agents in social platforms and virtual assistants (software, XR)

  - Description: Power full-body gestures and movements for avatars based on dialog intent (e.g., “nod, step aside, point to the screen”), improving presence and engagement in social VR and virtual assistants.

  - Tools/products/workflows: Middleware that maps text/intent to MotionGPT prompts; avatar motion retargeting; safety filters for inappropriate motions.

  - Assumptions/dependencies: Real-time inference constraints; collision avoidance and scene awareness not included; single-person focus limits multi-character interactions.

- Dataset augmentation and rapid prototyping for research (academia)

  - Description: Generate motion variants for low-resource tasks; unify evaluation across text-to-motion, motion-to-text, prediction, and in-between using a single backbone.

  - Tools/products/workflows: Open-source MotionGPT repo; prompt libraries; benchmarking scripts; ablation-ready tokenizer configurations.

  - Assumptions/dependencies: Domain shift if applied outside HumanML3D/KIT style distributions; limited dataset size constrains very large model benefits.

- Semantic indexing and retrieval across motion repositories (software)

  - Description: Use MotionGPT’s unified vocabulary to build motion similarity search, clustering, and cross-modal retrieval (text↔motion).

  - Tools/products/workflows: Motion-language embedding service; retrieval APIs; curator dashboards.

  - Assumptions/dependencies: Tokenization fidelity; consistent skeleton formats; potential need for fine-tuning on organization-specific motion styles.

Long-Term Applications

- Language-to-action for robots and embodied systems (robotics)

  - Description: Move from simulated motion generation to physically executable robot behaviors from natural language (“pick up, kneel, stand, sidestep”), enabling instruction-following in human-robot interaction (HRI).

  - Tools/products/workflows: Policy learning with MotionGPT-generated priors; sim-to-real pipelines; contact/dynamics controllers; safety verification modules.

  - Assumptions/dependencies: Robust modeling of contacts, forces, human-object/environment interactions; real-time control; extensive safety and compliance testing.

- Clinically validated rehab and healthcare motion tools (healthcare)

  - Description: Personalized exercise generation and motion prediction for monitoring recovery (e.g., gait progression forecasts); automated captions for clinical notes.

  - Tools/products/workflows: EHR-integrated analysis; sensor fusion (IMU, video, mocap); clinician-in-the-loop editing; compliance logging.

  - Assumptions/dependencies: Clinical validation and regulatory approval; expanded coverage of fine-grained joints, hands, and balance; bias and demographic representation auditing.

- Predictive human motion for safety-critical domains (autonomous systems, surveillance)

  - Description: Anticipate pedestrian trajectories and activities from textual rules or scene context to improve planning and risk assessment.

  - Tools/products/workflows: Vision-to-motion pipelines; scenario generators; uncertainty quantification and fail-safe mechanisms.

  - Assumptions/dependencies: Integration with perception stacks; domain adaptation to real-world scenes; rigorous reliability, calibration, and liability frameworks.

- Advanced sports analytics and coaching (sports)

  - Description: Generate practice drills and motion exemplars from coach instructions; predict motion outcomes to guide technique refinement and workload planning.

  - Tools/products/workflows: Athlete data integration; motion comparison and captioning dashboards; simulation-based tactic design.

  - Assumptions/dependencies: High-fidelity, athlete-specific modeling with multi-modal sensors; longitudinal validation; privacy-preserving pipelines.

- Multi-agent, human-object, and fine-grained motion modeling (software, robotics, XR)

  - Description: Extend MotionGPT to hands, faces, interactions, and multiple actors to cover realistic collaboration, manipulation, and social scenes.

  - Tools/products/workflows: Expanded tokenizers/codebooks; richer datasets (HOI, multi-human); contact-aware decoders.

  - Assumptions/dependencies: Large, diverse datasets; better physical priors; scene understanding and collision handling.

- Motion-language standards and governance (policy)

  - Description: Establish standards for motion tokenization, captioning schemas, benchmarking, and watermarking; policies for privacy, consent, and IP around synthetic motion assets.

  - Tools/products/workflows: Standards bodies collaboration; dataset documentation templates; provenance and watermarking services.

  - Assumptions/dependencies: Cross-industry alignment; legal clarity on motion-capture rights; mechanisms for bias auditing and redress.

- General-purpose multimodal foundation models with motion (AI platforms)

  - Description: Integrate MotionGPT into broader LLMs that handle text, image, audio, video, and motion for unified reasoning and generation.

  - Tools/products/workflows: Multimodal training infrastructure; shared embedding spaces; cross-domain instruction tuning.

  - Assumptions/dependencies: Scale of high-quality motion datasets; efficient training across modalities; robust evaluation metrics.

- Motion asset marketplaces and rights management (media, platforms)

  - Description: Create marketplaces for generated motion clips, with licensing, provenance tracking, and automated retargeting services.

  - Tools/products/workflows: Asset cataloging via motion captions; IP protection via watermarking; smart contracts.

  - Assumptions/dependencies: Clear legal frameworks; creator remuneration models; quality control.

- Adaptive physical education at scale (education, public sector)

  - Description: Personalized motion examples and practice plans generated from curricular goals and learner profiles; automated feedback and captions for progress tracking.

  - Tools/products/workflows: Learning platforms integrating MotionGPT; accessibility features; teacher dashboards.

  - Assumptions/dependencies: Large-scale validation; inclusivity and accessibility considerations; safe deployment in schools.

- Ergonomics and workplace safety simulation (enterprise, manufacturing)

  - Description: Simulate and evaluate task motions from textual SOPs to detect risky postures and propose safer alternatives.

  - Tools/products/workflows: SOP-to-motion converters; risk scoring; integration with digital twins.

  - Assumptions/dependencies: Accurate modeling of loads, tools, and constraints; domain-specific fine-tuning; collaboration with safety experts.

Glossary

- AdamW optimizer: A variant of the Adam optimizer with decoupled weight decay for better regularization in training deep models. "Moreover, all our models employ the AdamW optimizer for training."

- ADE (Average Displacement Error): A trajectory error metric measuring average distance between predicted and ground-truth positions over time. "Average Displacement Error (ADE)"

- AMASS: A large collection of motion capture sequences aggregated into a unified format for human motion research. "a subset of the larger AMASS dataset."

- autoregressive: A generation process where each token is produced conditioned on previously generated tokens. "in an autoregressive manner."

- BEiT-3: A vision-language pre-training framework treating images as a “foreign language,” inspiring analogous treatment for motion. "we follow vision-language pre-training from BEiT-3 [52]"

- BertScore: A text generation evaluation metric using contextual embeddings from BERT to assess similarity. "including BLUE [29], Rouge [24], Cider [51], and BertScore [62]"

- boundary indicators: Special tokens marking the start and end boundaries of a motion token sequence. "boundary indicators, for example, </som> and </eom> as the start and end of the motion."

- CIDEr: A consensus-based metric for evaluating the quality of generated descriptions relative to human references. "Cider [51]"

- CLIP: A multimodal model aligning images and text in a shared embedding space, often used for conditioning. "MDM [48] learns a motion diffusion model with conditional text tokens from CLIP [35]"

- codebook: A learned set of discrete embedding vectors used to quantize continuous latent representations. "Motion codebook is used to represent human motion as discrete tokens."

- codebook reset: A training technique to refresh underutilized codebook entries and improve utilization. "codebook reset techniques [39]"

- commitment loss: A VQ-VAE objective term encouraging encoder outputs to commit to specific codebook entries. "and the commitment loss $\mathcal{L}_c$."

- diffusion-based generative model: A probabilistic generative approach that iteratively denoises data from noise to samples. "diffusion-based generative model [15]"

- discrete vector quantization: Converting continuous latent vectors into discrete indices via nearest codebook entries. "we employ the discrete vector quantization for human motion"

- EMA (exponential moving average): A smoothing technique tracking running averages of parameters to stabilize training. "exponential moving average (EMA) and codebook reset techniques [39]"

- FDE (Final Displacement Error): A trajectory error metric measuring the endpoint distance between predicted and ground-truth positions. "Final Displacement Error (FDE)"

- FID (Fréchet Inception Distance): A distribution-level metric assessing similarity between generated and real data features. "Frechet Inception Distance (FID) is our primary metric"

- Flan-T5-Base: An instruction-tuned T5 LLM variant used as the backbone for MotionGPT. "we employ a 220M pre-trained Flan-T5-Base[38, 5] model as our backbone"

- Generative Pre-trained Transformer (GPT): A transformer-based autoregressive model pre-trained for text generation, adapted here for motion. "Generative Pre-trained Transformer (GPT) for motion generation."

- HumanML3D: A dataset pairing 3D human motions with textual descriptions for text-to-motion research. "HumanML3D dataset [11]"

- InstructGPT: An LLM fine-tuned with human feedback to follow instructions, inspiring prompt-based control. "like InstructGPT [28]"

- instruction tuning: Fine-tuning with instruction–response pairs to improve generalization to task-like prompts. "instruction tuning enhances the versatility of MotionGPT,"

- latent diffusion model: A diffusion model operating in a learned latent space for efficient generation. "advances the latent diffusion model [45, 40]"

- motion auto-encoder: An encoder–decoder model that learns compact latent representations of motion sequences. "align its latent space with a motion auto-encoder."

- motion in-between: A motion completion task generating plausible motion between fixed start and end segments. "motion in-between [48]"

- motion tokenizer: A module that converts continuous motion sequences into discrete token indices. "MotionGPT consists of a motion tokenizer"

- Multi-modal Distance (MM Dist): A metric measuring distance between motion and text in a joint feature space. "Multi-modal Distance (MM Dist) measures the distance between motions and texts."

- MultiModality (MM): A measure of diversity among multiple motions generated from the same text prompt. "MultiModality (MM) measures the diversity of generated motions within the same text description of motion."

- R Precision: A retrieval metric assessing how well generated motions match their corresponding text (Top-k precision). "the motion-retrieval precision (R Precision) evaluates the accuracy of matching between texts and motions"

- Rouge: A set of metrics for evaluating generated text via n-gram overlap with references. "Rouge [24]"

- SentencePiece: A subword tokenizer that learns language-independent units for robust tokenization. "trained the SentencePiece [19] model"

- sentinel token: A special placeholder token used during span-masking objectives in T5-style pre-training. "replaced with a special sentinel token."

- temporal downsampling rate: The factor by which the motion sequence length is reduced when forming tokens. "where l denotes the temporal downsampling rate on motion length."

- transformer encoder/decoder: The two-part transformer architecture mapping input sequences to outputs via attention. "the source tokens are fed into the transformer encoder, and the subsequent decoder predicts the probability distribution"

- VQ-VAE (Vector Quantized Variational Autoencoder): An autoencoder that discretizes latents via a codebook for token-like representations. "Vector Quantized Variational Autoencoders (VQ-VAE)"

- WordPiece: A subword tokenization method that splits text into frequently occurring pieces. "encode text as WordPiece tokens."

- zero-shot transfer: The ability to generalize to new tasks or prompts without task-specific training. "zero-shot transfer abilities"
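
The two displacement metrics defined in the glossary, ADE and FDE, can be sketched as follows. This is a simplified illustration over a single 3D trajectory with made-up coordinates; the function names are chosen for this example, and an evaluation on full skeletons would also average over joints.

```python
from math import dist

def ade(pred, truth):
    """Average Displacement Error: mean distance over all time steps."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("trajectories must be non-empty and equally long")
    return sum(dist(p, t) for p, t in zip(pred, truth)) / len(pred)

def fde(pred, truth):
    """Final Displacement Error: distance at the last time step only."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("trajectories must be non-empty and equally long")
    return dist(pred[-1], truth[-1])

# Illustrative trajectories: the prediction drifts upward by 1 unit per step.
truth = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
pred = [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (2.0, 2.0, 0.0)]

print(ade(pred, truth))  # 1.0
print(fde(pred, truth))  # 2.0
```

FDE exceeds ADE here because the error grows over time, which is the typical pattern when a prediction drifts.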
