- The paper demonstrates that Meta-Reasoning improves LLM reasoning by deconstructing natural language semantics into symbolic components.

- It employs semantic resolution rules that map entities and operations to simplified symbolic forms, achieving an average +20% boost over Chain-of-Thought methods.

- The approach enhances out-of-domain generalization and stabilizes outputs, reducing unpredictable responses in complex reasoning tasks.

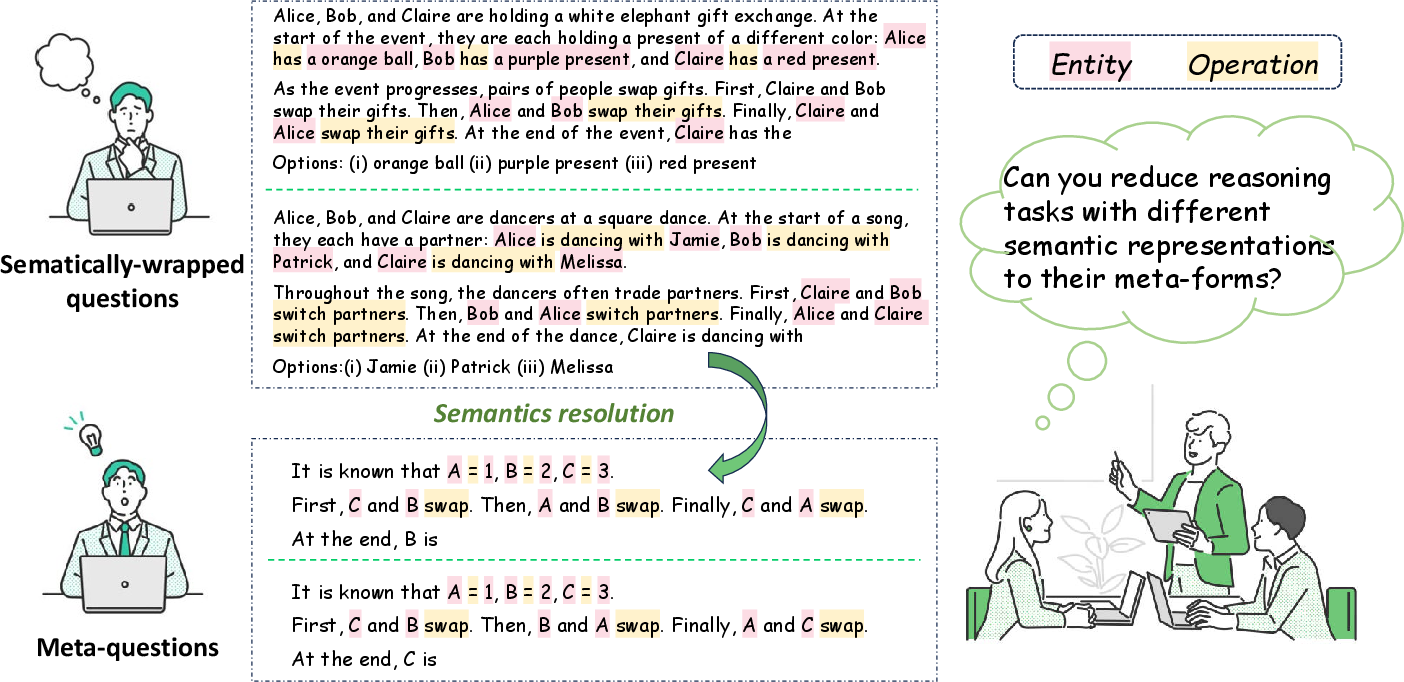

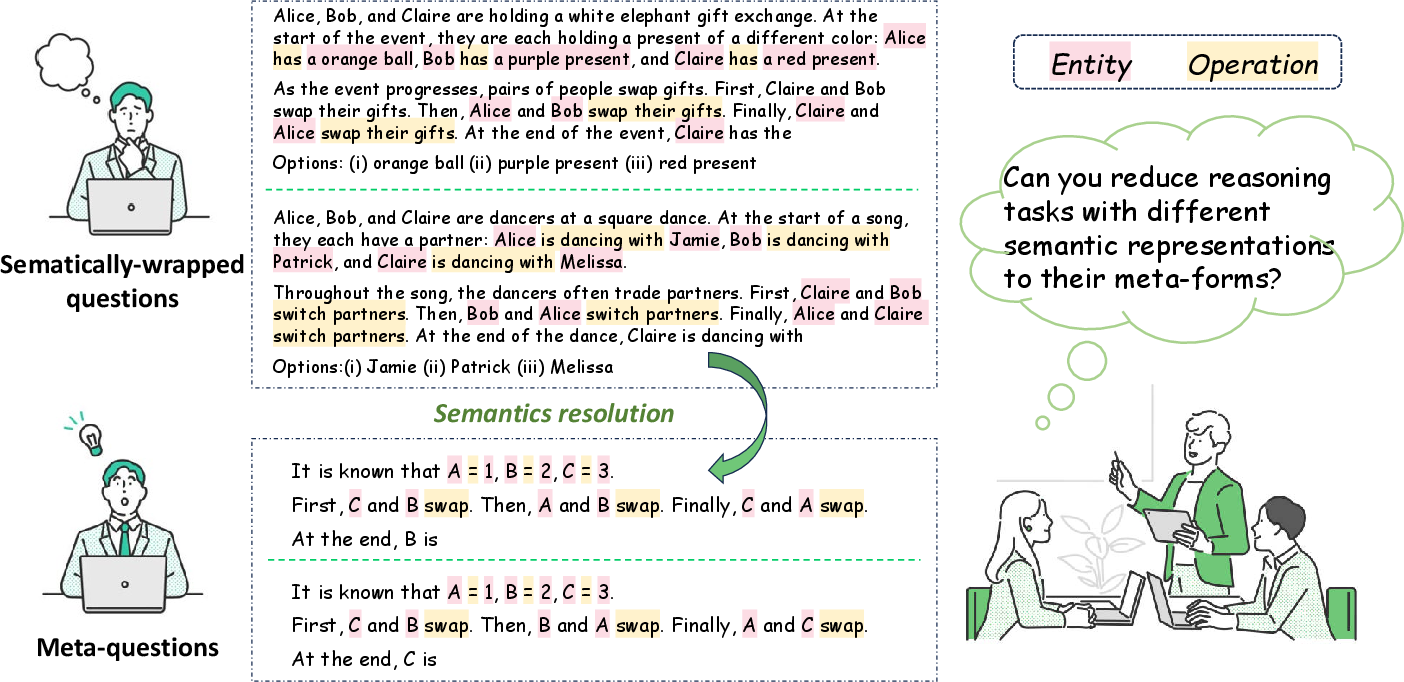

Meta-Reasoning is a paradigm proposed to enhance the in-context reasoning abilities of LLMs. Its principal objective is to transform the unbounded semantic variation of natural language into a finite symbolic system, enabling LLMs to generalize across reasoning tasks more effectively. According to the authors, this deconstruction mirrors human cognition, which relies on symbolic representations to distill complex reasoning tasks into manageable components.

The authors highlight the limitations of existing neural-symbolic methods, which typically translate natural language into code in programming languages such as Python and SQL. These methods force reasoning tasks into predefined programmatic structures, which do not align well with human reasoning processes and limit the adaptability of LLMs across diverse scenarios.

Meta-Reasoning introduces the concept of semantic-symbolic deconstruction, or semantic resolution, as a core mechanism. This technique allows LLMs to map natural language semantics onto symbolic representations, independent of specific semantic content, while retaining the inherent meaning of reasoning operations.

Meta-Reasoning builds reasoning skeletons from two kinds of components: entities, the subjects of a reasoning task, and operations, which define how entities change. Mapping these components into symbolic forms reduces complex reasoning problems to solvable symbolic equations, free of interference from surface semantics.

Figure 1: Semantic Resolution of Meta-Reasoning. We set resolution rules for Entity and Operation.
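The entity and operation mapping described above can be illustrated with a toy sketch. In the paper the resolution is performed by the LLM itself through in-context demonstrations; the string-substitution function below is only an illustration of the idea. The function name `resolve`, the symbol scheme (`A`, `B`, ...) and the operation table are assumptions made for this example and are not taken from the paper.

```python
import re


def resolve(text, entities, operations):
    """Toy semantic resolution: swap entity names and operation phrases for symbols.

    `entities` is a list of entity names; each gets a letter A, B, C, ...
    `operations` maps an operation phrase to a symbolic operator.
    Both the symbol scheme and the operator table are hypothetical.
    """
    entity_symbols = {name: chr(ord("A") + i) for i, name in enumerate(entities)}
    table = {**entity_symbols, **operations}
    resolved = text
    # Replace longer phrases first so a short phrase never clobbers part of a longer one.
    for phrase in sorted(table, key=len, reverse=True):
        pattern = r"\b" + re.escape(phrase) + r"\b"
        # A function replacement avoids any escape processing of the symbol.
        resolved = re.sub(pattern, lambda _m, s=table[phrase]: s, resolved)
    return resolved, entity_symbols


ops = {"has": "=", "gets": "+="}  # hypothetical operation rules
first, _ = resolve("Tom has 3 apples. Tom gets 2 apples.", ["Tom", "apples"], ops)
second, _ = resolve("Ann has 3 pens. Ann gets 2 pens.", ["Ann", "pens"], ops)
print(first)            # A = 3 B. A += 2 B.
print(first == second)  # True: different wording, same symbolic skeleton
```

Two problems with different surface wording collapse to the same symbolic skeleton, which is the property the paradigm relies on for generalization.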

Comparative Analysis and Experimental Results

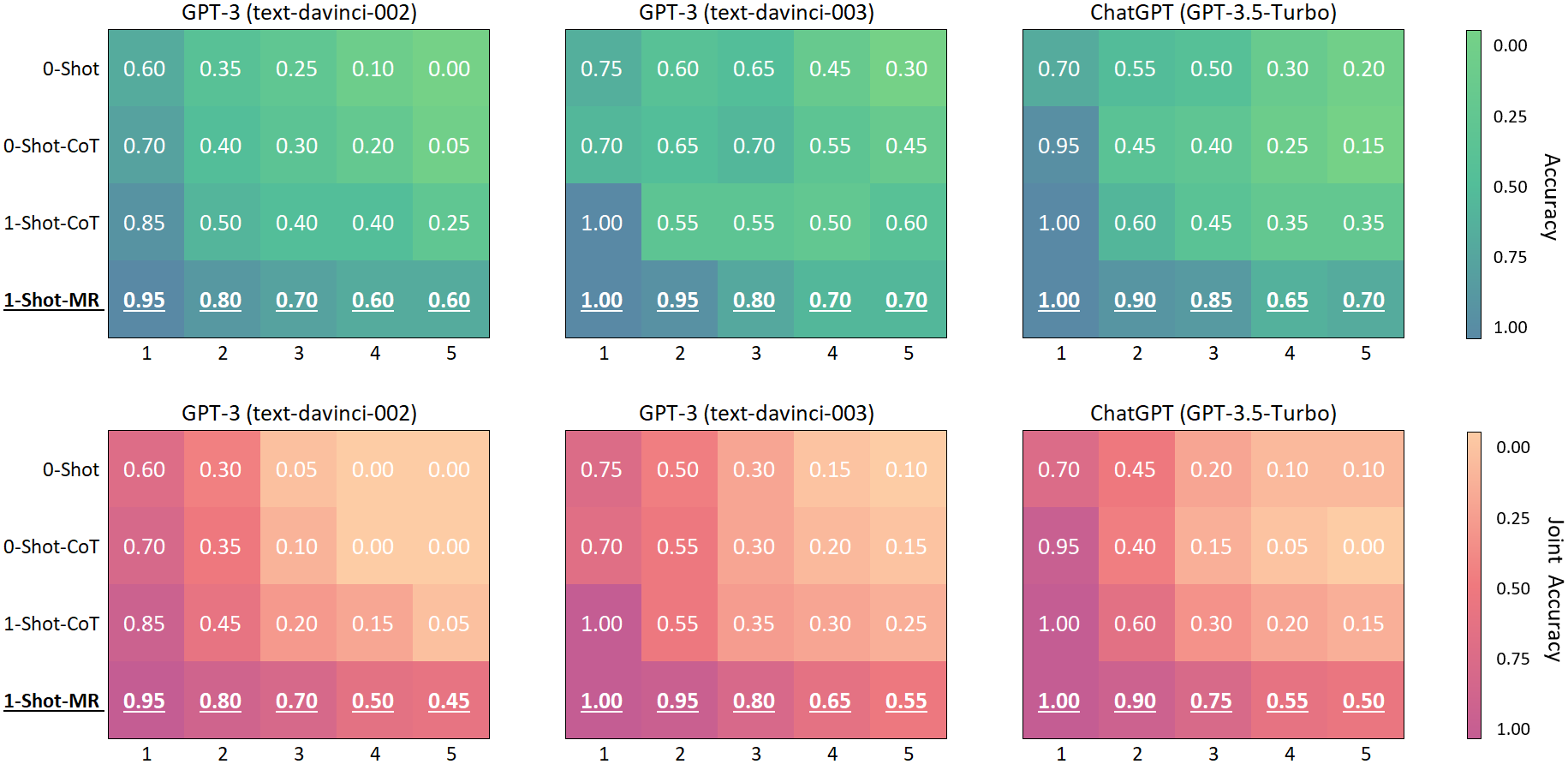

Empirical evaluations against the Chain-of-Thought (CoT) reasoning paradigm, using LLMs such as GPT-3 and ChatGPT, reveal significant improvements in reasoning capabilities. Meta-Reasoning achieves notable performance gains across datasets covering arithmetic, symbolic, and logical reasoning, as well as complex theory-of-mind reasoning scenarios.

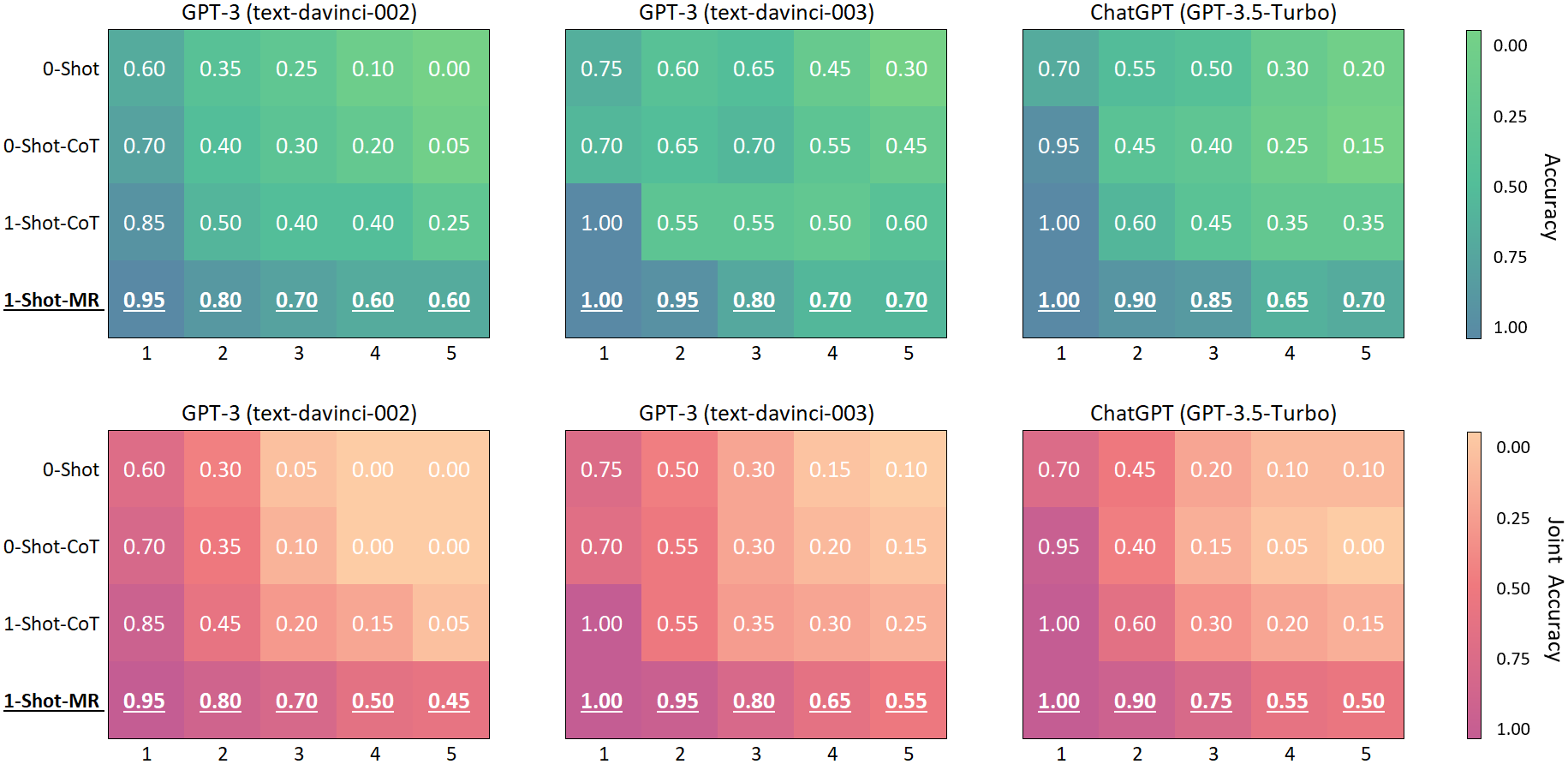

The experimental findings show that Meta-Reasoning significantly outperforms the Few-Shot-CoT method, with an average performance improvement of +20% across all conventional reasoning datasets while requiring fewer demonstrations. Furthermore, on interactive reasoning tasks, Meta-Reasoning maintains higher accuracy than CoT on higher-order theory-of-mind questions, and its performance remains stable even as problem complexity escalates (reasoning orders greater than three).

Figure 2: Interactive Reasoning Results: Accuracy and joint accuracy of GPT-3 (text-davinci-002 and -003) and ChatGPT on the Hi-ToM dataset.
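The two metrics named in the caption can be sketched as follows. The source does not define joint accuracy, so this example assumes that a story counts as correct only when every question asked about it is answered correctly. The record layout `(story_id, is_correct)` and the sample values are hypothetical and are not results from the paper.

```python
from collections import defaultdict


def accuracy(records):
    """Fraction of individual questions answered correctly."""
    return sum(correct for _, correct in records) / len(records)


def joint_accuracy(records):
    """Fraction of stories whose questions were all answered correctly.

    Assumption: 'joint' means all questions for a story must be right.
    """
    by_story = defaultdict(list)
    for story_id, correct in records:
        by_story[story_id].append(correct)
    return sum(all(answers) for answers in by_story.values()) / len(by_story)


# Hypothetical toy records: two stories, two questions each.
records = [("s1", True), ("s1", True), ("s2", True), ("s2", False)]
print(accuracy(records))        # 0.75
print(joint_accuracy(records))  # 0.5
```

Under this assumption joint accuracy can never exceed per-question accuracy, because a single wrong answer invalidates the whole story.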

Out-of-Domain Generalization and Output Stability

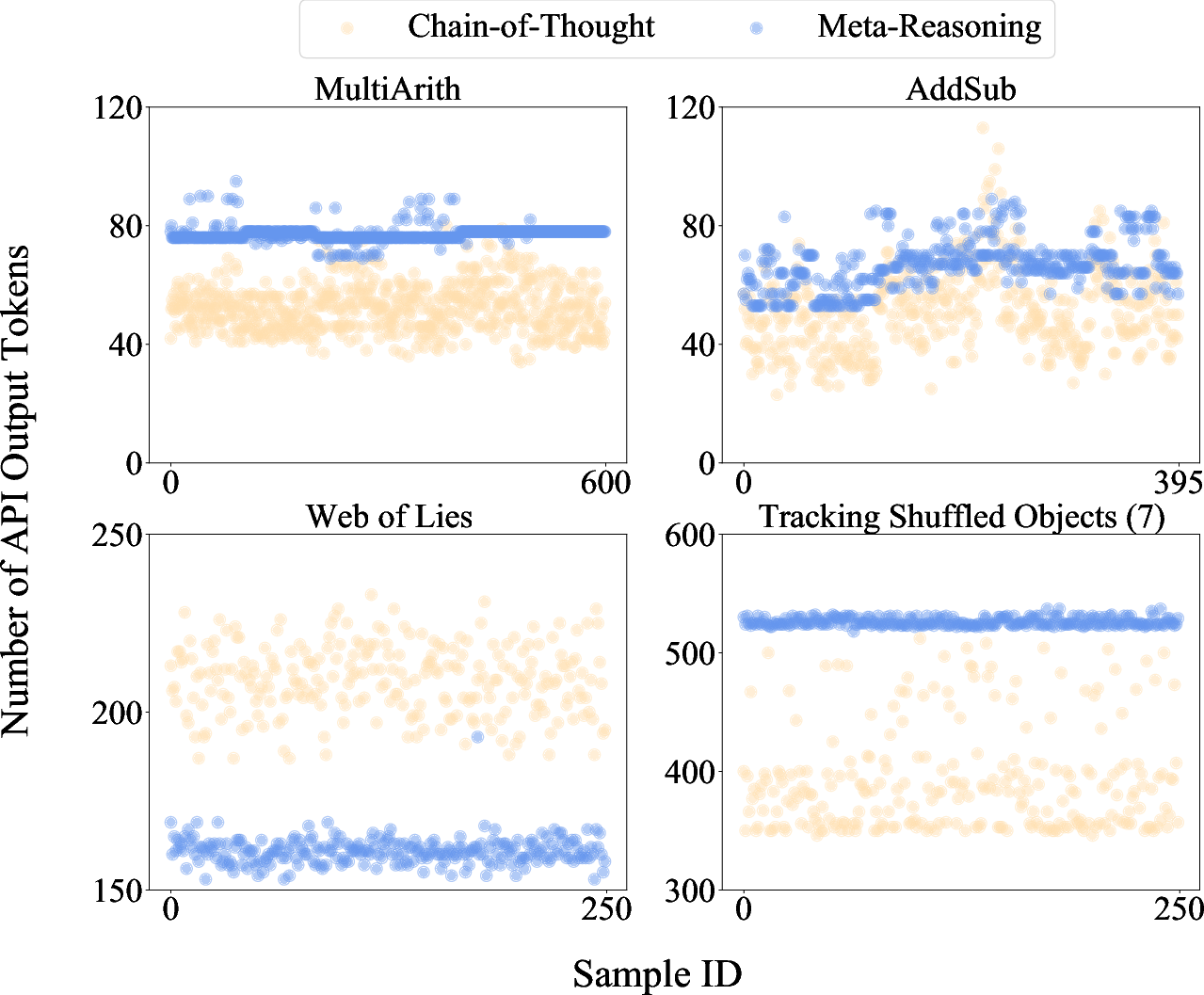

Meta-Reasoning exhibits strong out-of-domain (OOD) generalization, evaluated through boundary tests on several datasets. Whereas CoT performance drops sharply once reasoning tasks extend beyond domain boundaries, Meta-Reasoning remains stable and keeps high BRate scores, indicating robust adaptability to new challenges.

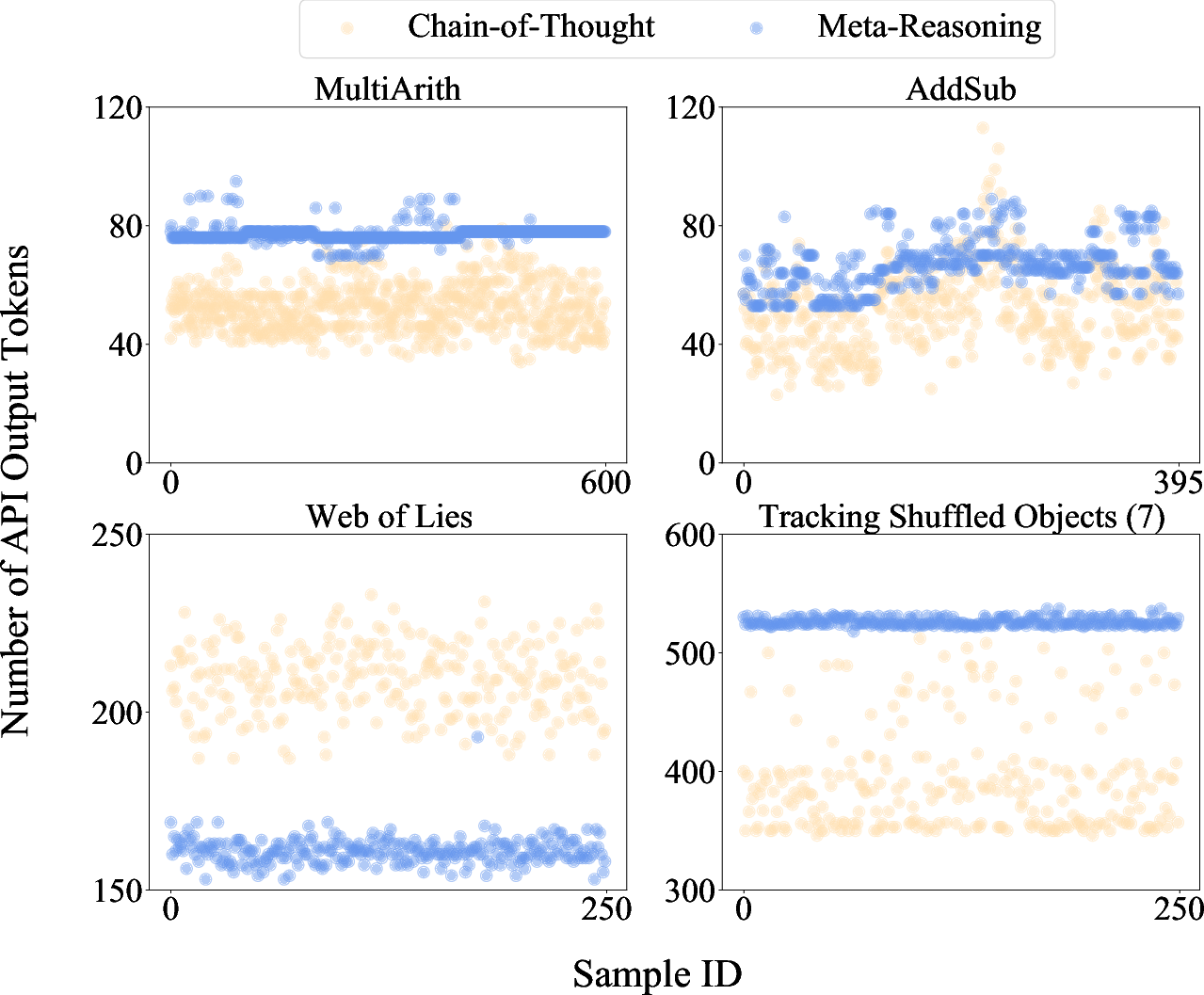

In addition to improving performance, Meta-Reasoning stabilizes output, reducing the likelihood of unpredictable or erratic responses, a common user-experience issue with current LLMs. It achieves this by standardizing the symbolic resolution of outputs, which limits the variability in the number of generated tokens.

Figure 3: The token number distributions of the text generated by GPT-3 text-davinci-002 when using the Chain-of-Thought and Meta-Reasoning paradigms.
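One way to quantify the kind of length variability shown in such a distribution is to compare the mean and standard deviation of token counts across outputs. The sketch below is not the paper's measurement procedure: it assumes whitespace splitting as a stand-in for the model's tokenizer, and the sample strings are hypothetical placeholders rather than real model outputs.

```python
from statistics import mean, pstdev


def length_stats(outputs):
    """Return (mean, population std dev) of whitespace-token counts.

    Assumption: whitespace splitting approximates the real tokenizer.
    """
    counts = [len(text.split()) for text in outputs]
    return mean(counts), pstdev(counts)


# Hypothetical outputs: free-form explanations vs. compact symbolic answers.
free_form = [
    "Tom starts with three apples and then he gets two more so he has five",
    "The answer is five",
]
symbolic = ["A = 3 B. A += 2 B. A = 5 B.", "A = 4 B. A += 1 B. A = 5 B."]

for name, outputs in [("free-form", free_form), ("symbolic", symbolic)]:
    avg, spread = length_stats(outputs)
    print(f"{name}: mean={avg:.1f} tokens, std={spread:.1f}")
```

A smaller standard deviation indicates more predictable output lengths, which is the property the stability claim refers to.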

Conclusions and Future Directions

Meta-Reasoning introduces a framework for reasoning that simplifies semantic complexity and broadens the generalization capabilities of LLMs. The paradigm not only improves reasoning accuracy but also makes learning more efficient when few demonstrations are available, expanding the utility of LLMs across diverse contexts.

Future work should refine the semantic resolution capabilities of Meta-Reasoning to cover tasks that demand world knowledge and commonsense reasoning. A deeper investigation of its applicability to real-world agent interactions would also help demonstrate its broader practical utility.

Meta-Reasoning is a promising step toward more capable, adaptable LLMs that can engage with complex, real-world problems without being distracted by superficial semantic variation.