- The paper introduces a novel distributed universal adaptive network protocol that allows each node to cooperate locally without degrading performance.

- It employs a dynamic convex combination strategy with periodic feedback to achieve rapid convergence and adapt to varying data conditions.

- Performance analysis shows efficient scalability and reduced mean-square error, outperforming traditional non-cooperative methods in diverse scenarios.

Distributed Universal Adaptive Networks

Introduction

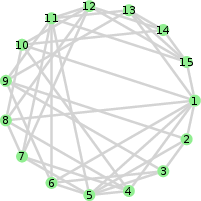

The paper introduces a novel approach to adaptive networks (ANs), specifically focusing on distributed universal estimation. The core objective is to ensure each node in a network benefits from cooperation without any performance degradation compared to non-cooperative scenarios. This approach is critical in environments like sensor networks and IoT applications, where distributed nodes collectively solve a global estimation problem. The work addresses key issues such as performance uniformity and the rejection of low-performance nodes, ensuring that exceptional node estimates are disseminated network-wide.

Key Concepts and Methodologies

Universal Estimation

Universal estimation traditionally involves a supervisor that consults a pool of experts (estimators), each with different parameter settings, and selects the best performer according to a predefined figure of merit such as the mean-square error (MSE). In a distributed setting, however, a single centralized supervisor is impractical. The paper therefore extends the concept to a network of supervisors, each coordinating locally with its neighboring nodes.
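The centralized supervisor described above can be illustrated with a minimal sketch. It is not taken from the paper: the experts are assumed to be scalar LMS filters that differ only in step size, the figure of merit is an exponentially averaged squared error, and the names `run_supervisor`, `step_sizes`, and `forget` are hypothetical.

```python
import random


def run_supervisor(step_sizes, w_true=1.5, noise_std=0.1,
                   steps=500, forget=0.98, seed=0):
    """Toy supervisor that picks the expert with the lowest running MSE.

    Assumptions (not from the paper): a scalar unknown w_true, Gaussian
    regressors, and one LMS expert per step size.
    """
    rng = random.Random(seed)
    experts = [0.0] * len(step_sizes)   # current estimate of each expert
    mse = [0.0] * len(step_sizes)       # running squared error per expert
    chosen = 0
    for _ in range(steps):
        u = rng.gauss(0.0, 1.0)                      # regressor
        d = w_true * u + rng.gauss(0.0, noise_std)   # noisy measurement
        for i, mu in enumerate(step_sizes):
            err = d - u * experts[i]                 # error before the update
            mse[i] = forget * mse[i] + (1.0 - forget) * err * err
            experts[i] += mu * u * err               # LMS update
        # The supervisor consults all experts and keeps the best performer.
        chosen = min(range(len(mse)), key=mse.__getitem__)
    return experts[chosen], chosen, mse


estimate, chosen, mse = run_supervisor([0.0005, 0.05, 0.2])
print(f"chosen expert: {chosen}, estimate: {estimate:.3f}")
```

In this toy run the expert with the smallest step size has not yet converged, so its running error stays large and the supervisor settles on one of the faster experts. A distributed version would place one such supervisor at every node rather than a single one for the whole network.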

Distributed Universal Protocol

The proposed protocol ensures that cooperation among nodes improves the network's overall performance, adhering to the principles of local, global, and non-cooperative universality. It is designed to be robust across network topologies and data conditions, including scenarios in which the data statistics vary strongly from node to node. A key component is a periodic feedback mechanism that adjusts local node estimates at regular intervals, which supports rapid convergence and robustness in rapidly changing environments.

Implementation Details

The protocol's implementation can be summarized in the following steps:

- Local Fusion: Each node generates a local estimate from both its own data and the estimates of its immediate neighbors.

- Supervisor Weights: Adaptive weights dynamically determine how much the local estimate and each neighboring estimate contribute.

- Feedback Mechanism: Local estimates are adjusted periodically, which accelerates their convergence to the global best estimate.

An essential aspect of the system's efficiency is the convex combination strategy used for adaptive weighting: the weights are nonnegative and sum to one, so each node can selectively listen to its more reliable peers, which enhances real-time adaptability and robustness.
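The three steps and the convex weighting can be sketched together in a small simulation. This is an illustration under stated assumptions, not the paper's update rules: each node runs a scalar LMS filter, the supervisor weights come from exponential weighting of a running squared error measured on the node's own data, and feedback simply overwrites each local estimate with the fused one every `period` steps. The names `convex_weights`, `simulate`, `eta`, `forget`, and `period` are hypothetical.

```python
import math
import random


def convex_weights(losses, eta):
    """Map running losses to nonnegative weights that sum to one."""
    best = min(losses)
    raw = [math.exp(-eta * (loss - best)) for loss in losses]
    total = sum(raw)
    return [r / total for r in raw]


def simulate(neighbors, noise_std, w_true=2.0, steps=2000, mu=0.05,
             eta=1.0, forget=0.95, period=10, seed=0):
    """Toy network: neighbors[k] lists node k itself plus its neighbors."""
    rng = random.Random(seed)
    n = len(neighbors)
    local = [0.0] * n                                  # per-node LMS estimates
    fused = [0.0] * n                                  # per-node fused estimates
    losses = [[0.0] * len(nb) for nb in neighbors]
    weights = [convex_weights(row, eta) for row in losses]
    for t in range(1, steps + 1):
        shared = list(local)                           # estimates sent to neighbors
        for k in range(n):
            u = rng.gauss(0.0, 1.0)
            d = w_true * u + rng.gauss(0.0, noise_std[k])
            # Supervisor weights: score every candidate on node k's own data.
            for i, j in enumerate(neighbors[k]):
                err = d - u * shared[j]
                losses[k][i] = forget * losses[k][i] + err * err
            weights[k] = convex_weights(losses[k], eta)
            # Node k adapts its own estimate with one LMS step.
            local[k] += mu * u * (d - u * shared[k])
        # Local fusion: convex combination of own and neighbors' estimates.
        fused = [sum(w * local[j] for w, j in zip(weights[k], neighbors[k]))
                 for k in range(n)]
        # Periodic feedback: replace local estimates with the fused ones.
        if t % period == 0:
            local = list(fused)
    return fused, weights


# Three nodes on a line; the last node has much noisier measurements.
fused, weights = simulate([[0, 1], [1, 0, 2], [2, 1]], [0.05, 0.05, 0.5])
print([round(w, 3) for w in fused])
```

Subtracting the smallest loss before exponentiating keeps the weights numerically stable without changing their values. The choice of `period` reflects the trade-off mentioned in the text: more frequent feedback spreads good estimates faster, at the cost of more frequent overwriting of each node's own adaptation.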

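The performance analysis reports efficient scalability and reduced mean-square error relative to traditional non-cooperative methods across diverse scenarios.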

Practical Implications and Future Directions

The implications of this research extend to the design of robust and efficient distributed systems for IoT and real-time sensor applications. The proposed distributed adaptive networks can improve performance reliability and reduce resource consumption in decentralized systems. Future research may further optimize the trade-off between convergence speed and resource utilization, and extend the protocol to non-linear models and more dynamic environments.

Conclusion

This work lays a foundation for distributed universal adaptive networks, emphasizing cooperation without degradation. It presents a scalable, efficient solution that outperforms traditional non-cooperative methods in both static and dynamic scenarios. The model's adaptability and reported performance illustrate its potential for wide application in distributed estimation systems.