ChatDev: Communicative Agents for Software Development

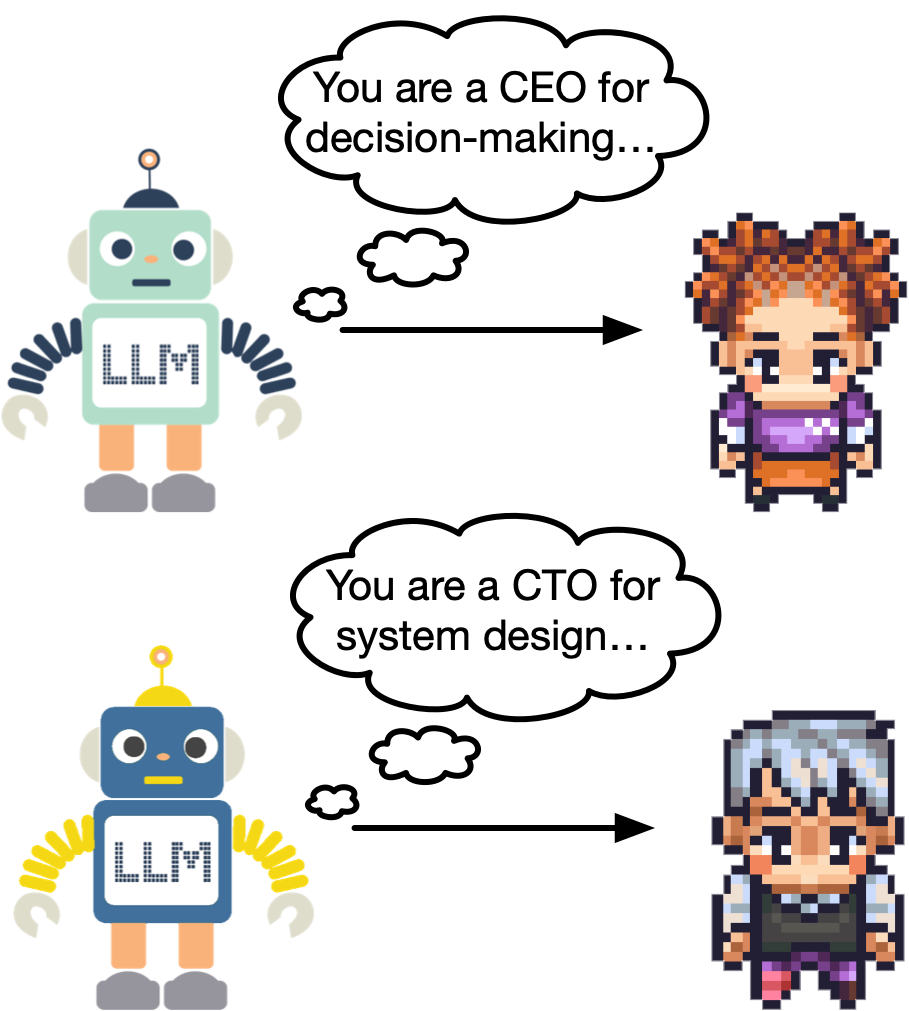

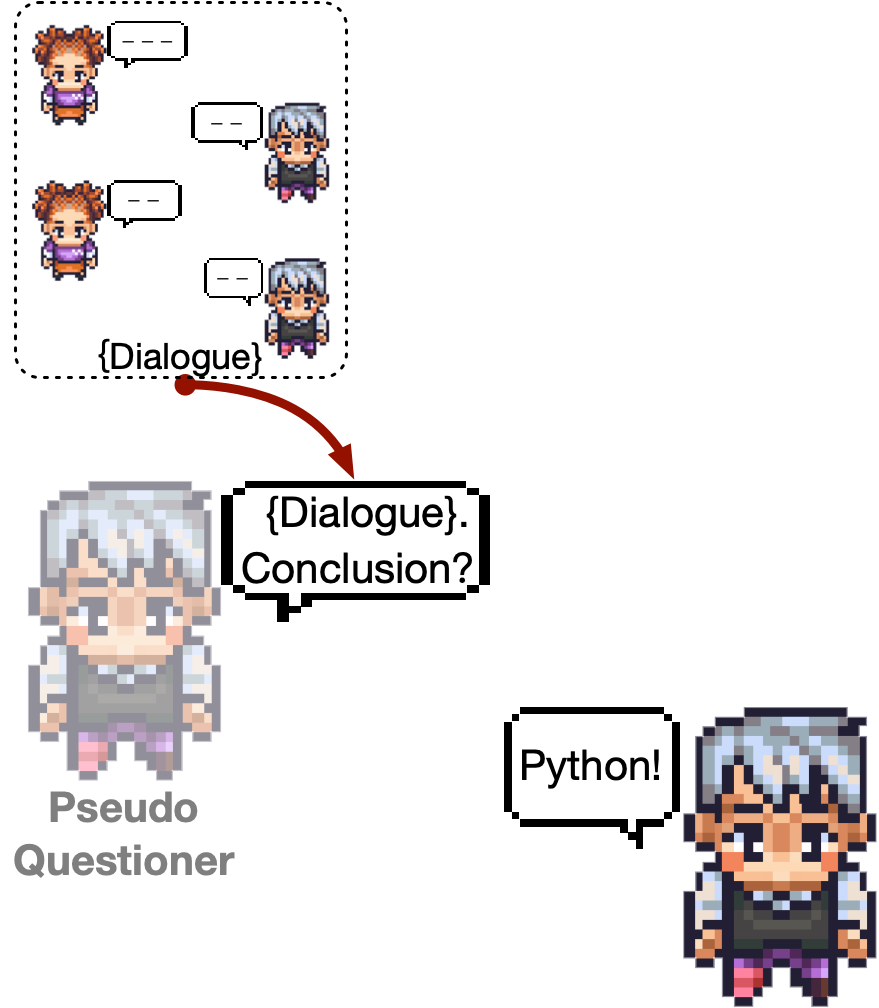

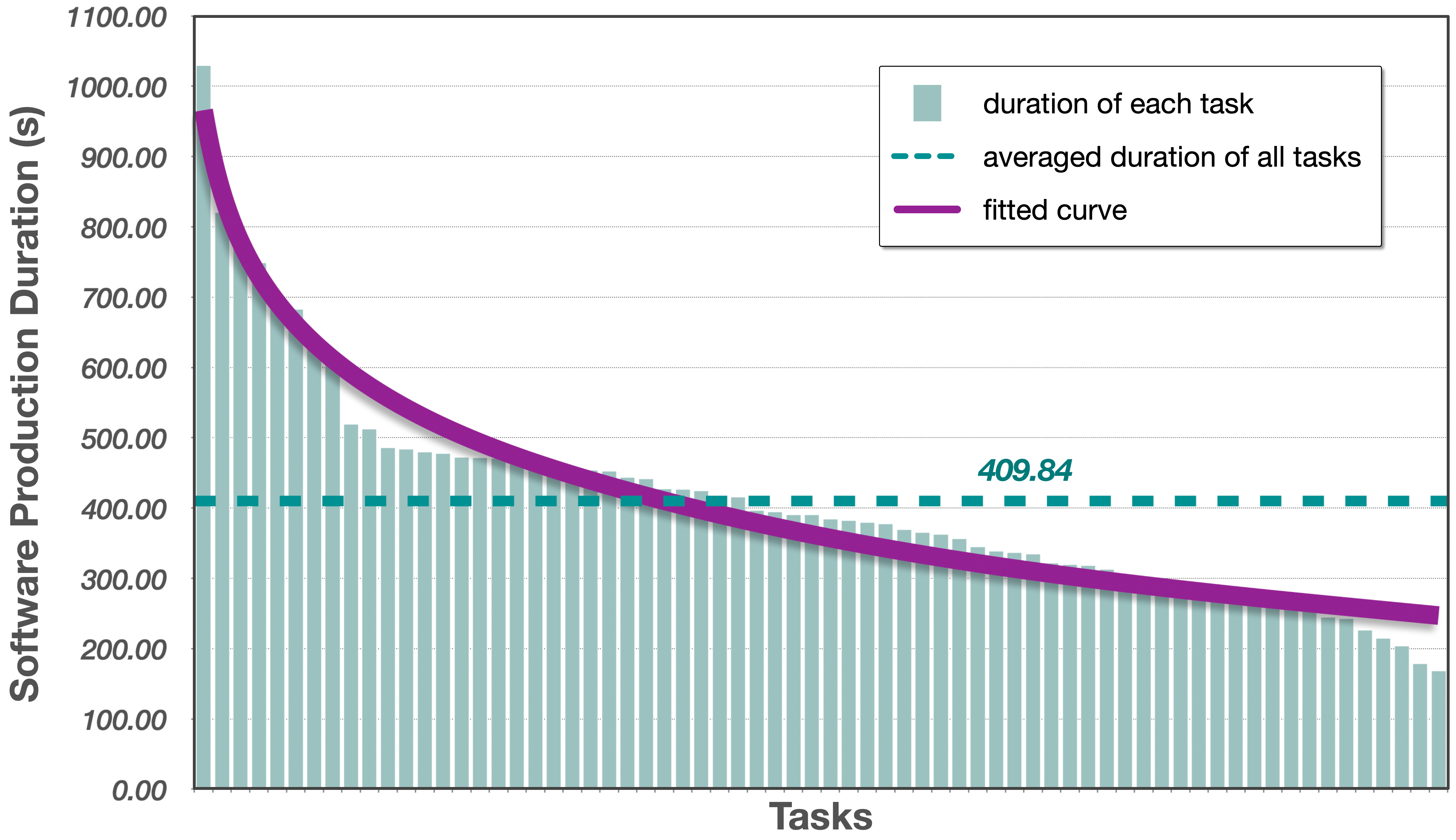

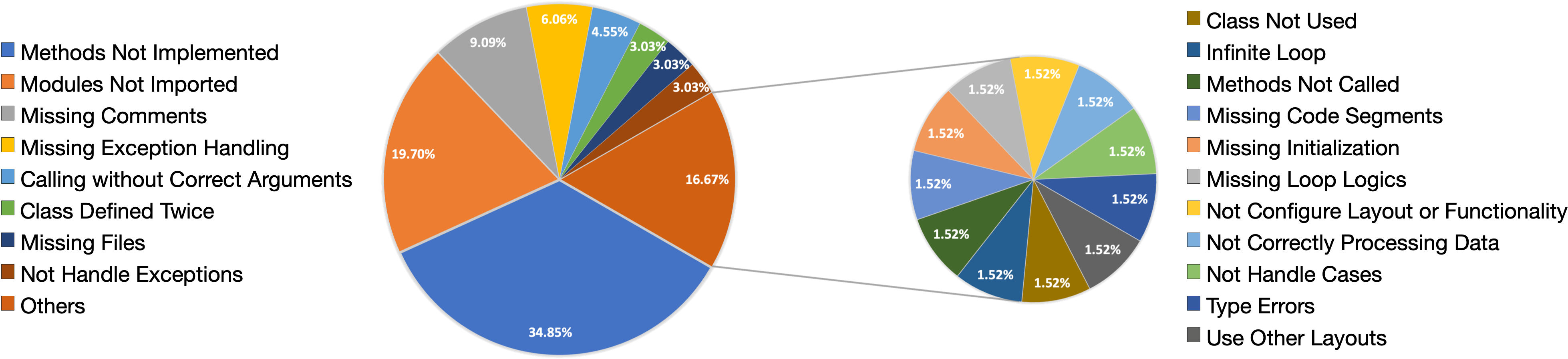

Abstract: Software development is a complex task that requires cooperation among multiple members with diverse skills. Numerous studies have used deep learning to improve specific phases of the waterfall model, such as design, coding, and testing. However, the deep learning model in each phase requires its own design, which leads to technical inconsistencies across phases and results in a fragmented and ineffective development process. In this paper, we introduce ChatDev, a chat-powered software development framework in which specialized agents driven by large language models (LLMs) are guided in what to communicate (via a chat chain) and how to communicate (via communicative dehallucination). These agents actively contribute to the design, coding, and testing phases through unified language-based communication, with solutions derived from their multi-turn dialogues. We found that communicating in natural language is advantageous for system design, and that communicating in programming language is helpful in debugging. This paradigm demonstrates how linguistic communication facilitates multi-agent collaboration, establishing language as a unifying bridge for autonomous task-solving among LLM agents. The code and data are available at https://github.com/OpenBMB/ChatDev.
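To make the chat chain idea more concrete, the following is a minimal Python sketch of one possible reading of the abstract: a fixed sequence of phases (design, coding, testing), each resolved by a multi-turn dialogue between two role-playing agents, with each phase's solution passed on as context for the next. It is not taken from the ChatDev codebase. The names `Phase`, `run_phase`, `run_chain`, and the `<DONE>` termination marker are all assumptions made for illustration, the agents are deterministic stubs rather than LLM calls, and communicative dehallucination is not modeled.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical model: an agent maps the dialogue history to its next message.
# A real system would wrap an LLM call here; these are deterministic stubs.
Agent = Callable[[List[str]], str]

DONE = "<DONE>"  # assumed marker an assistant uses to signal a final solution


@dataclass
class Phase:
    name: str
    instructor: Agent
    assistant: Agent
    max_turns: int = 3


def run_phase(phase: Phase, context: str) -> str:
    """Run one multi-turn dialogue and return the solution it converges on."""
    history = [context]
    solution = context
    for _ in range(phase.max_turns):
        history.append(phase.instructor(history))  # instructor speaks
        reply = phase.assistant(history)           # assistant answers
        history.append(reply)
        solution = reply
        if reply.startswith(DONE):
            break
    if solution.startswith(DONE):
        solution = solution[len(DONE):]
    return solution.strip()


def run_chain(phases: List[Phase], task: str) -> str:
    """Chain the phases: each phase's solution is the next phase's context."""
    context = task
    for phase in phases:
        context = run_phase(phase, context)
    return context


def make_instructor(text: str) -> Agent:
    return lambda history: text


def make_assistant(tag: str) -> Agent:
    # Wraps the phase context (the first history entry) to show the hand-off.
    return lambda history: f"{DONE} {tag}({history[0]})"


phases = [
    Phase("design", make_instructor("Propose a design."), make_assistant("design")),
    Phase("coding", make_instructor("Implement the design."), make_assistant("code")),
    Phase("testing", make_instructor("Test and fix the code."), make_assistant("tested")),
]

result = run_chain(phases, "todo app")
print(result)
```

With these stubs, the nesting in the printed result shows how each phase consumes the previous phase's output. Replacing the stub agents with LLM-backed callables would leave the chaining logic unchanged.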

