- The paper presents a comprehensive survey of deep learning methods in medical image registration, highlighting novel network architectures and loss functions.

- It introduces uncertainty estimation techniques using Bayesian approaches and evaluates performance with metrics like TRE and Dice coefficients.

- Practical applications such as atlas construction and 2D-3D registration demonstrate the clinical relevance of these advanced methods.

A Survey on Deep Learning in Medical Image Registration: New Technologies, Uncertainty, Evaluation Metrics, and Beyond

Deep learning has substantially changed medical image registration in recent years, improving both the accuracy and the speed with which medical images are aligned. This paper surveys these advances, focusing on novel network architectures, loss functions, uncertainty estimation techniques, evaluation metrics, and practical applications.

Fundamentals of Deep Learning-Based Registration

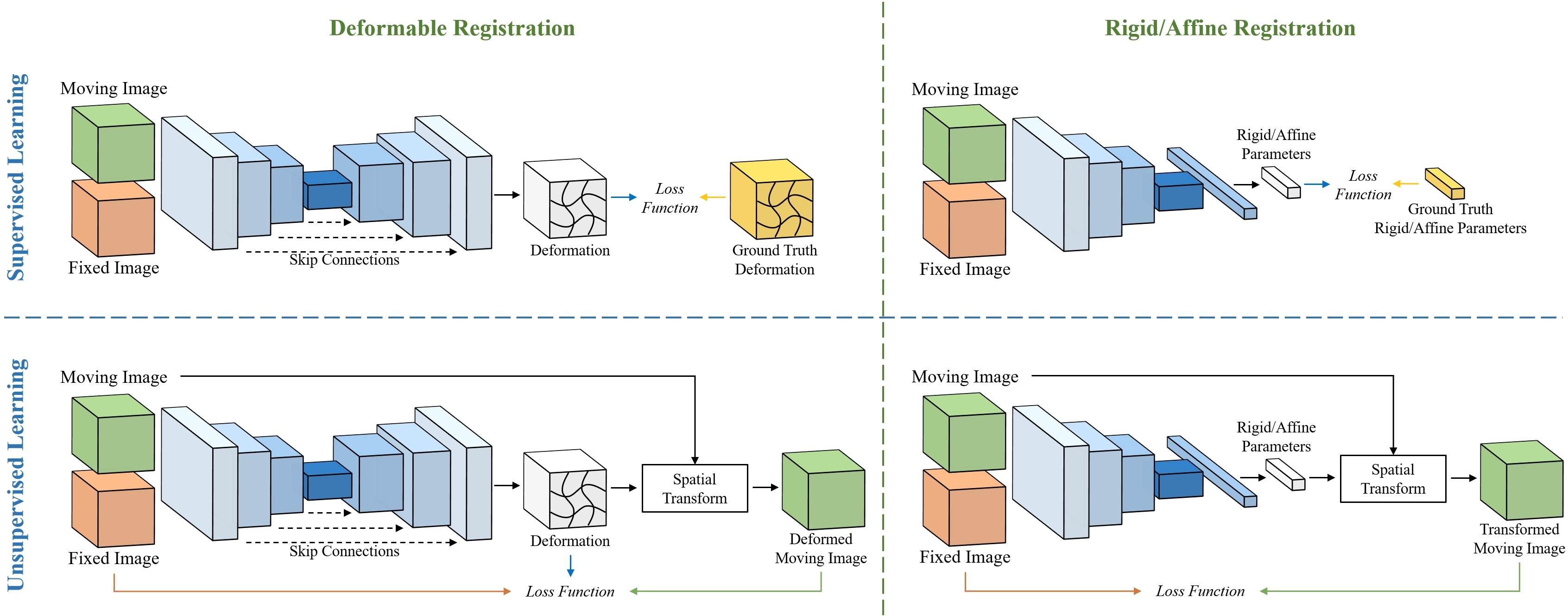

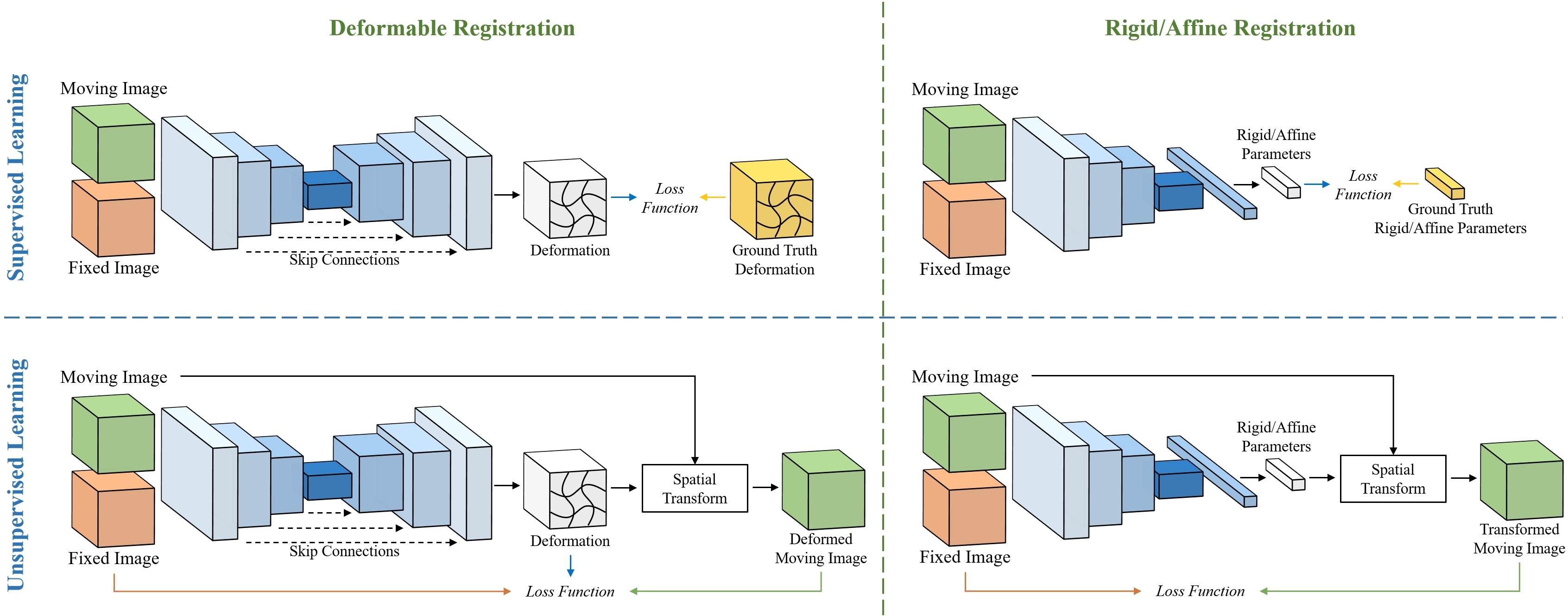

Deep learning approaches to medical image registration primarily leverage neural networks to estimate spatial transformations between fixed and moving images. These transformations can be either rigid/affine or deformable, depending on the nature of the task. Deformable image registration (DIR) is particularly vital for applications requiring detailed alignment of complex anatomical structures. Generally, learning-based registration methods can be divided into supervised, unsupervised, and semi-supervised approaches.
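The difference between the two transformation families can be illustrated with a minimal sketch. It assumes 2D points rather than full images, and the helper names `apply_affine` and `apply_displacement` are hypothetical, chosen only for this illustration. A rigid/affine transformation uses one matrix and one translation for every point, whereas a deformable transformation assigns each point its own displacement vector.

```python
import math

def apply_affine(points, matrix, translation):
    """Apply x' = A x + t to every 2D point: one global transform."""
    (a, b), (c, d) = matrix
    tx, ty = translation
    return [(a * x + b * y + tx, c * x + d * y + ty) for x, y in points]

def apply_displacement(points, displacements):
    """Apply x' = x + u(x): every point has its own displacement vector."""
    return [(x + ux, y + uy) for (x, y), (ux, uy) in zip(points, displacements)]

# Rigid example (illustrative values): rotate by 90 degrees, then shift by (2, 0).
theta = math.pi / 2
rotation = ((math.cos(theta), -math.sin(theta)),
            (math.sin(theta), math.cos(theta)))
points = [(1.0, 0.0), (0.0, 1.0)]

rigid = apply_affine(points, rotation, (2.0, 0.0))
deformed = apply_displacement(points, [(0.5, 0.0), (0.0, -0.25)])
```

In a learning-based setting, the network would predict either the few parameters of `matrix` and `translation` or the dense list of `displacements`; the sketch only shows how each kind of output is applied.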

Figure 1: Overview of learning-based image registration. The top panel depicts the common pipeline for supervised learning in medical image registration, which necessitates ground truth transformations. The bottom panel demonstrates the unsupervised learning pipeline, wherein the network learns to perform registration using only input images. The left panel presents the learning-based DIR pipeline, typically employing an encoder-decoder-style network architecture. The right panel exhibits the learning-based rigid/affine registration, which usually involves only an encoder.

Novel Network Architectures

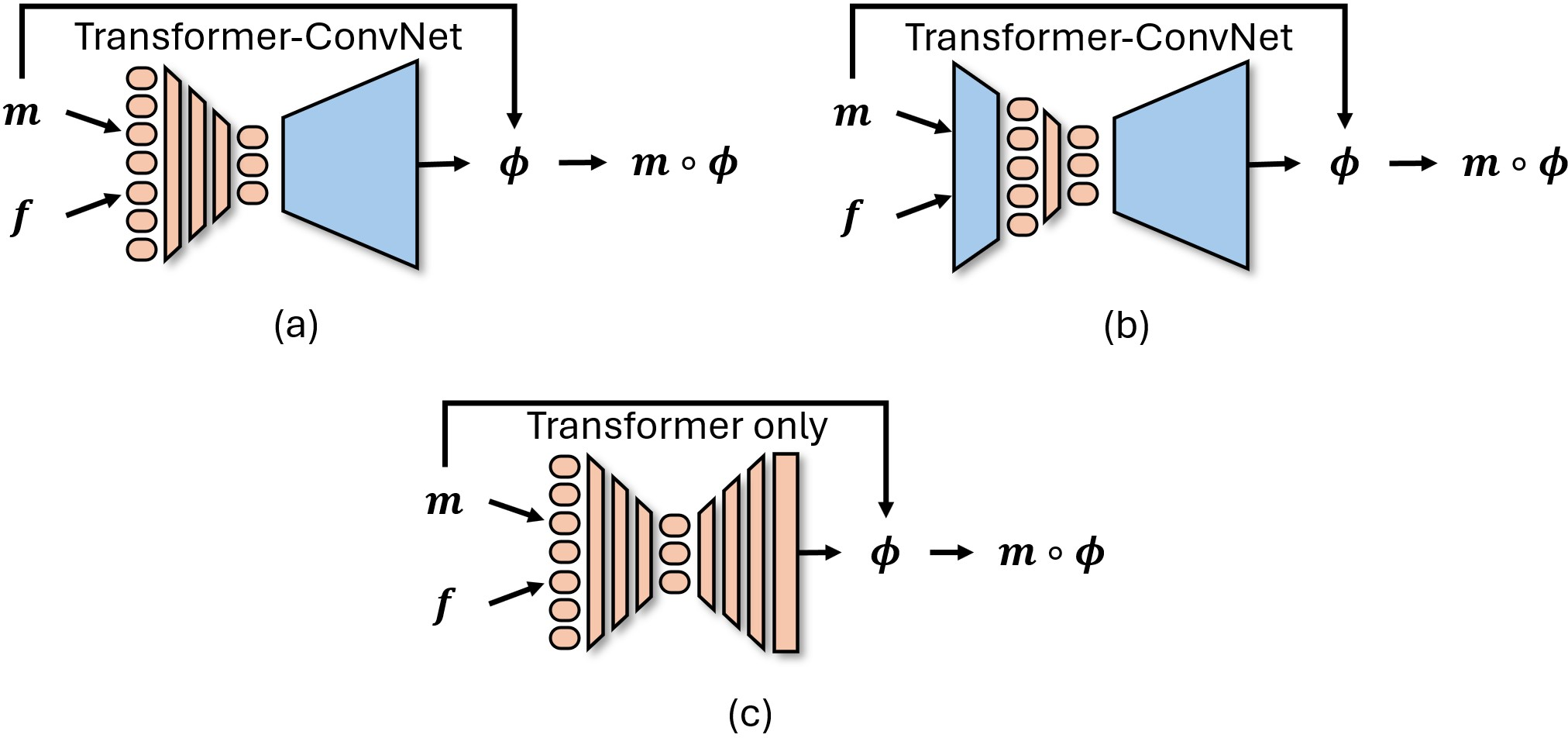

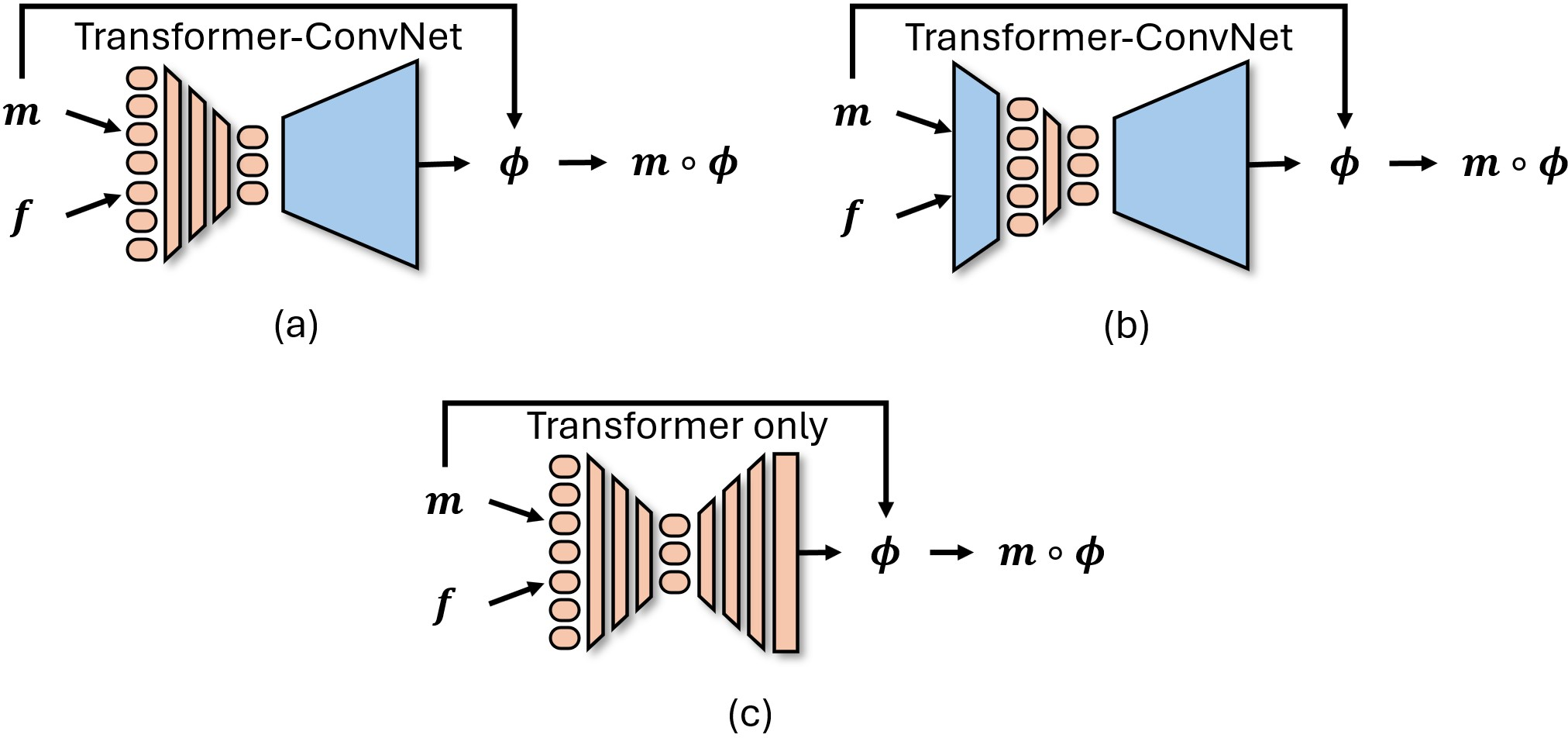

Recent advances in neural architectures have changed how registration tasks are approached. Convolutional neural networks (CNNs) have traditionally been the default choice, but architectures inspired by Transformers, diffusion models, and implicit neural representations are gaining traction due to their ability to capture spatial relationships more effectively. Transformers, for instance, use self-attention to relate image features across distant locations, which can improve registration performance.
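To make the self-attention idea concrete, the following is a minimal sketch of scaled dot-product attention in plain Python. It is a simplification under stated assumptions: the tokens stand in for image patch features, and the learned query, key, and value projections of a real Transformer are omitted, so the tokens are used directly in all three roles. The function names are illustrative.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: each query mixes all values,
    weighted by its similarity to every key, regardless of position."""
    scale = math.sqrt(len(keys[0]))
    dim = len(values[0])
    outputs, weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(w_j * v[d] for w_j, v in zip(w, values))
                        for d in range(dim)])
    return outputs, weights

# Three patch tokens (made-up features): the first and last are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = attention(tokens, tokens, tokens)
```

Here the first token attends to the last token as strongly as to itself, even though they are not neighbors, which is the long-distance behavior the paragraph describes.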

Figure 2: Graphical representation of Transformers used in medical image registration, with m and f indicating the moving and fixed images, respectively, and ϕ representing the deformation field.

Loss Functions and Regularization

The choice of loss function and regularization strategy is pivotal in learning-based medical image registration. Common similarity measures include mean squared error (MSE), normalized cross-correlation (NCC), and mutual information (MI). Regularization methods, including diffeomorphic constraints, encourage spatially smooth and topology-preserving transformations and discourage artifacts such as folding.
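A minimal sketch of how a similarity term and a smoothness regularizer combine into one objective is shown below. It makes several assumptions that are not from the paper: images are flattened to 1D lists, NCC is computed globally rather than over local windows, the regularizer is a simple squared forward difference of a 1D displacement field, and the weight `0.1` is arbitrary.

```python
import math

def mse(fixed, warped):
    """Mean squared intensity difference."""
    return sum((f - w) ** 2 for f, w in zip(fixed, warped)) / len(fixed)

def ncc(fixed, warped):
    """Global normalized cross-correlation in [-1, 1]."""
    n = len(fixed)
    mean_f, mean_w = sum(fixed) / n, sum(warped) / n
    cov = sum((f - mean_f) * (w - mean_w) for f, w in zip(fixed, warped))
    var_f = sum((f - mean_f) ** 2 for f in fixed)
    var_w = sum((w - mean_w) ** 2 for w in warped)
    return cov / math.sqrt(var_f * var_w)

def smoothness_penalty(displacement):
    """Mean squared forward difference of a 1D displacement field."""
    diffs = [b - a for a, b in zip(displacement, displacement[1:])]
    return sum(d * d for d in diffs) / len(diffs)

def registration_loss(fixed, warped, displacement, weight=0.1):
    """Negative similarity plus weighted regularizer (lower is better)."""
    return -ncc(fixed, warped) + weight * smoothness_penalty(displacement)

fixed = [0.0, 1.0, 2.0, 3.0]
warped = [1.0, 3.0, 5.0, 7.0]      # same structure, different intensity scale
smooth = [0.0, 0.0, 0.0, 0.0]
jagged = [0.0, 1.0, 0.0, 1.0]

loss_smooth = registration_loss(fixed, warped, smooth)
loss_jagged = registration_loss(fixed, warped, jagged)
```

In this example MSE is large because the intensities differ, while NCC treats the two signals as perfectly correlated, and the jagged displacement field receives the higher loss.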

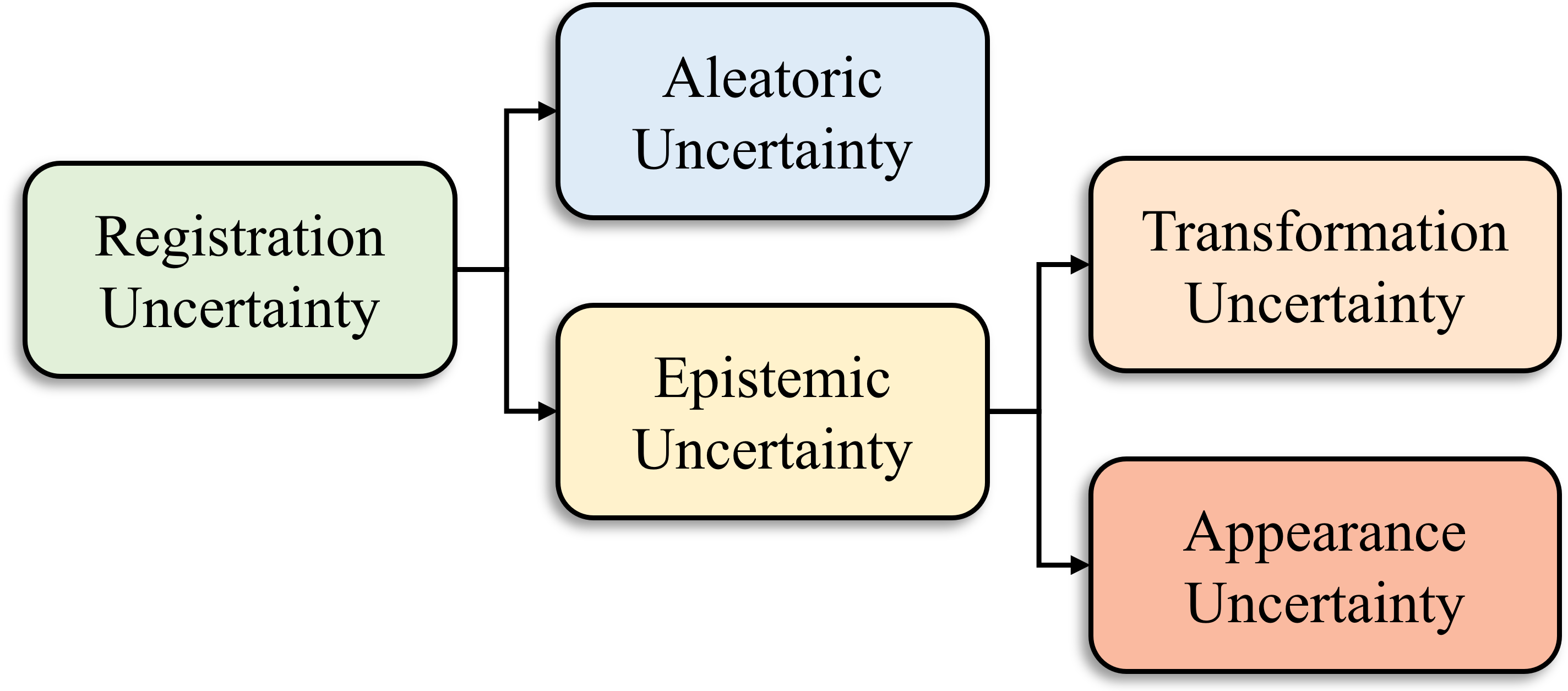

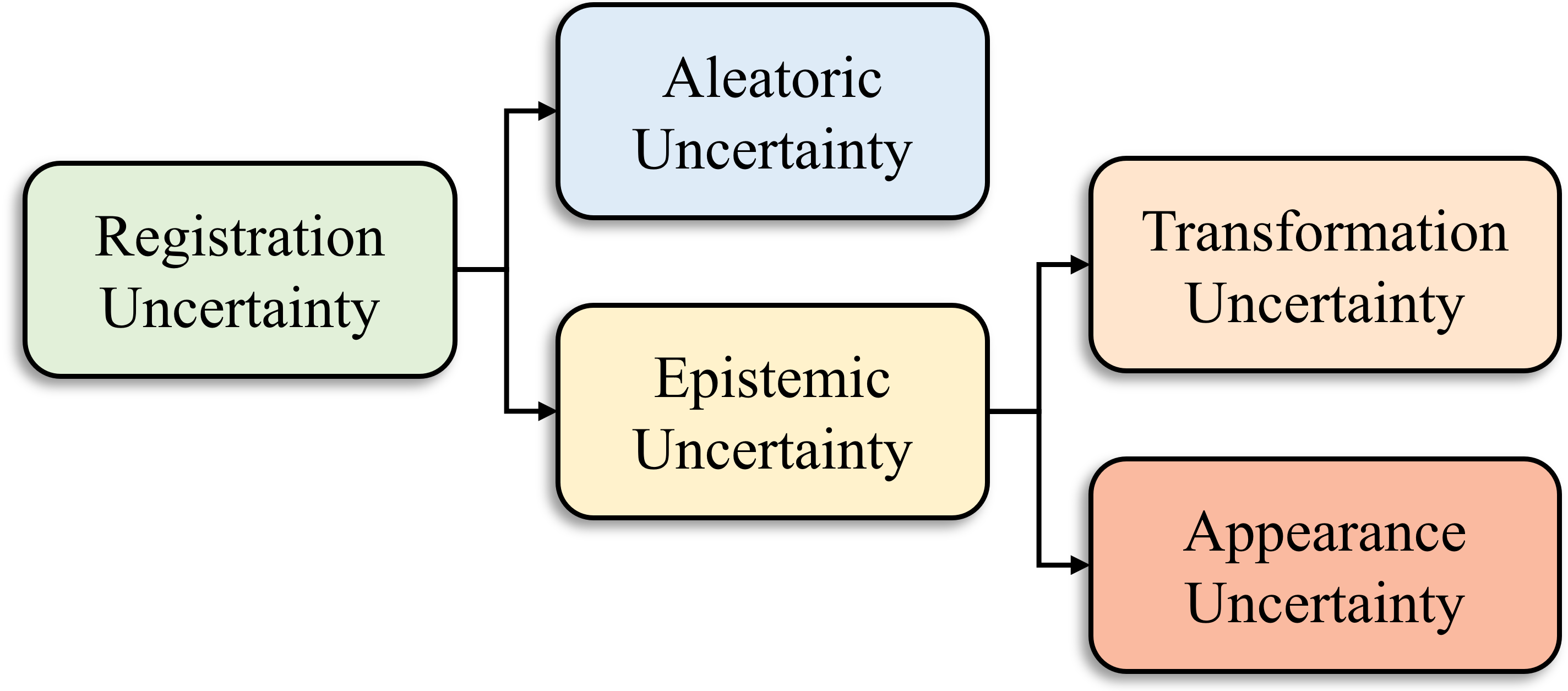

Estimation of Registration Uncertainty

Quantifying uncertainty in registration results is crucial for clinical decision-making. Current methods involve Bayesian deep learning techniques, including Monte Carlo dropout, to estimate both aleatoric and epistemic uncertainties. Understanding the sources of uncertainty helps practitioners evaluate the reliability of registration results in clinical settings.
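The Monte Carlo dropout idea can be sketched as follows. This is a toy illustration under loud assumptions: a single linear layer with made-up weights stands in for a registration network, and its scalar output stands in for one component of a displacement. Dropout stays active at inference, the forward pass is repeated, and the spread of the predictions serves as an approximate measure of model (epistemic) uncertainty.

```python
import random
import statistics

def predict_with_dropout(features, weights, drop_prob, rng):
    """One stochastic forward pass: randomly drop inputs (inverted dropout),
    then apply a linear layer."""
    keep = 1.0 - drop_prob
    total = 0.0
    for x, w in zip(features, weights):
        if rng.random() < keep:
            total += w * x / keep
    return total

def mc_dropout(features, weights, drop_prob=0.2, passes=200, seed=0):
    """Repeat stochastic passes; return the mean prediction and its variance."""
    rng = random.Random(seed)
    samples = [predict_with_dropout(features, weights, drop_prob, rng)
               for _ in range(passes)]
    return statistics.mean(samples), statistics.pvariance(samples)

features = [1.0, 2.0, 3.0]     # hypothetical input features
weights = [0.5, -0.25, 0.1]    # hypothetical trained weights

mean_pred, variance = mc_dropout(features, weights)
det_pred, det_variance = mc_dropout(features, weights, drop_prob=0.0)
```

With dropout disabled the variance collapses to zero, which shows that the estimated uncertainty comes entirely from the stochastic passes. Aleatoric uncertainty would require a separate mechanism, such as a network that predicts a variance alongside the displacement.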

Figure 3: Various types of registration uncertainty can be estimated using DNNs.

Evaluation Metrics

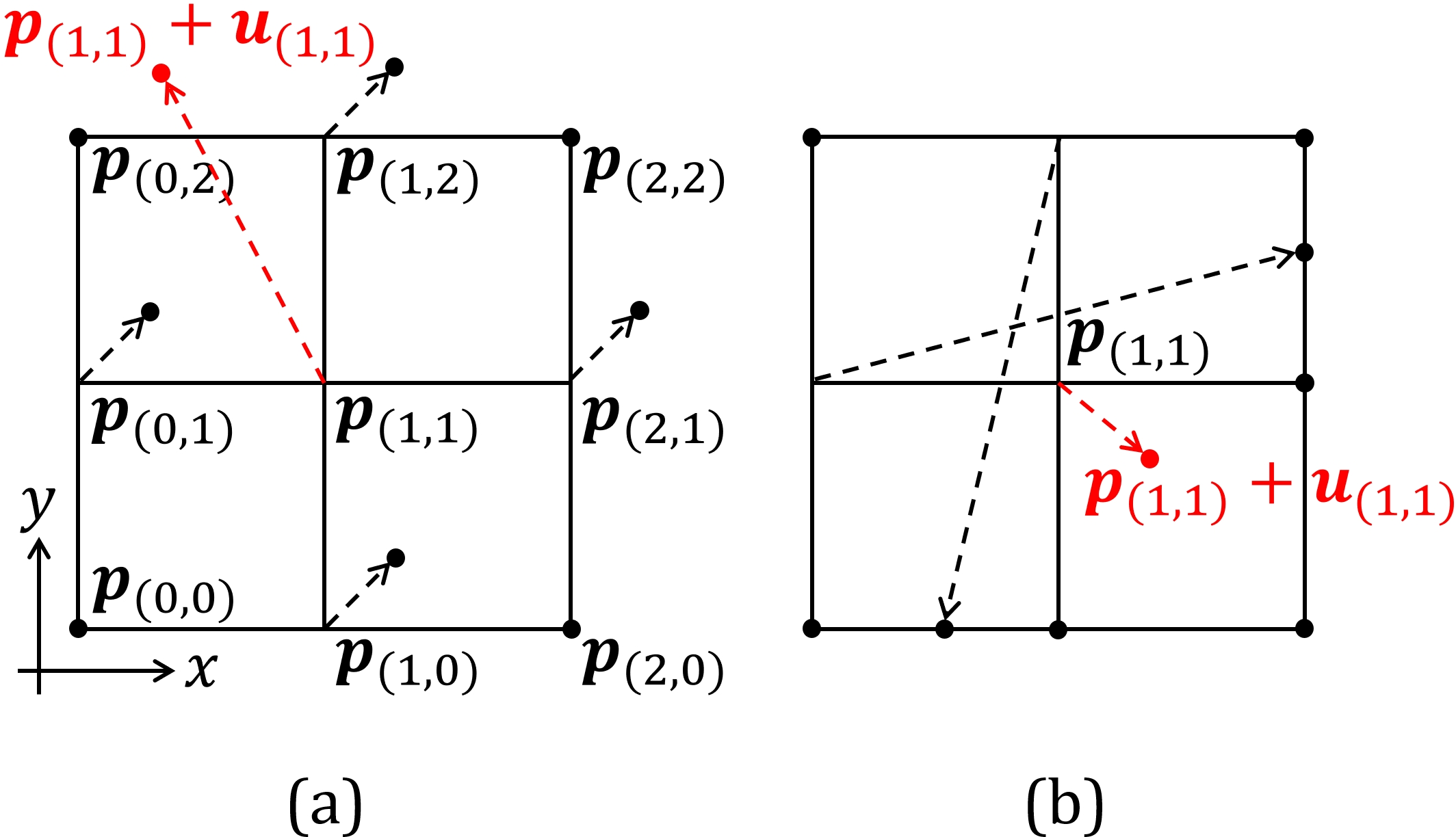

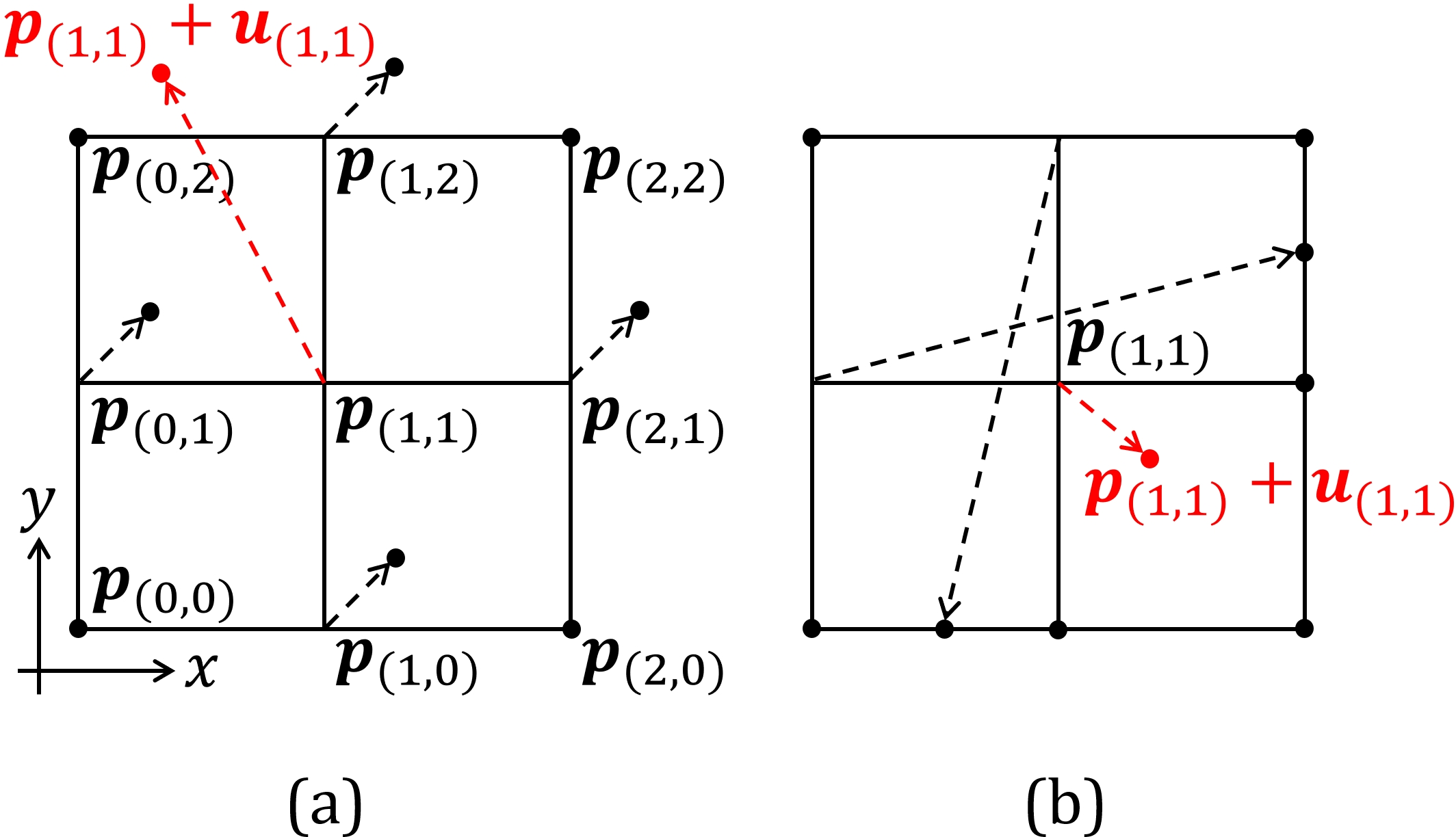

Registration performance is assessed with measures such as target registration error (TRE), the Dice similarity coefficient, and the Hausdorff distance. These metrics quantify alignment accuracy; the regularity of a transformation is typically checked separately, for example through the Jacobian determinant of the deformation. Together they guide the development of better registration techniques.
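The three accuracy metrics named above can be sketched in a few lines. The version below assumes flat binary masks for Dice, corresponding landmark lists for TRE (reported here as the mean Euclidean distance), and small point sets for the symmetric Hausdorff distance. It is a brute-force illustration, not an optimized implementation.

```python
import math

def dice(mask_a, mask_b):
    """Dice = 2|A and B| / (|A| + |B|) for flat binary masks."""
    overlap = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    size = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    return 2.0 * overlap / size if size else 1.0

def tre(fixed_landmarks, warped_landmarks):
    """Mean Euclidean distance between corresponding landmarks."""
    distances = [math.dist(p, q)
                 for p, q in zip(fixed_landmarks, warped_landmarks)]
    return sum(distances) / len(distances)

def hausdorff(points_a, points_b):
    """Symmetric Hausdorff distance between two point sets."""
    def directed(src, dst):
        return max(min(math.dist(p, q) for q in dst) for p in src)
    return max(directed(points_a, points_b), directed(points_b, points_a))

dice_score = dice([1, 1, 0, 0], [1, 0, 0, 0])                 # 2*1 / (2+1)
mean_tre = tre([(0, 0), (1, 1)], [(3, 4), (1, 1)])            # (5 + 0) / 2
hd = hausdorff([(0, 0), (1, 0)], [(0, 0), (4, 0)])            # 3.0
```

Dice rewards region overlap, TRE measures point-wise error at landmarks, and the Hausdorff distance reports the worst-case boundary mismatch, so the three are usually reported together.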

Figure 4: Examples of the checkerboard problem (a) and the self-intersection problem (b) for the central difference approximated Jacobian determinant |J| on a 3×3 grid. The transformations are visualized as a displacement field and the displacement of the center pixel is highlighted in red. In (a), the central difference approximated |J| for the center pixel equals one but the displacement of the center pixel is not involved in the computation. Even if the center pixel moves outside the field of view, the central difference approximated |J| still equals one. In (b), the transformation around the center pixel already introduced folding in space regardless of the displacement of the center pixel but the central difference approximated |J| is positive.
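The checkerboard problem in panel (a) can be reproduced numerically. The sketch below assumes a 2D transformation of the form φ(x) = x + u(x) with unit pixel spacing, stored as two 3×3 nested lists `ux` and `uy`; these names and the displacement value are illustrative. The forward-difference variant is included only for contrast and is not presented as a remedy taken from the paper.

```python
def central_jacobian_det(ux, uy, row, col):
    """|J| of phi(x) = x + u(x) at an interior pixel, central differences."""
    dux_dx = (ux[row][col + 1] - ux[row][col - 1]) / 2.0
    dux_dy = (ux[row + 1][col] - ux[row - 1][col]) / 2.0
    duy_dx = (uy[row][col + 1] - uy[row][col - 1]) / 2.0
    duy_dy = (uy[row + 1][col] - uy[row - 1][col]) / 2.0
    return (1.0 + dux_dx) * (1.0 + duy_dy) - dux_dy * duy_dx

def forward_jacobian_det(ux, uy, row, col):
    """Same determinant with forward differences, which do use the pixel itself."""
    dux_dx = ux[row][col + 1] - ux[row][col]
    dux_dy = ux[row + 1][col] - ux[row][col]
    duy_dx = uy[row][col + 1] - uy[row][col]
    duy_dy = uy[row + 1][col] - uy[row][col]
    return (1.0 + dux_dx) * (1.0 + duy_dy) - dux_dy * duy_dx

# Identity transformation on a 3x3 grid: all displacements are zero.
ux = [[0.0] * 3 for _ in range(3)]
uy = [[0.0] * 3 for _ in range(3)]
det_identity = central_jacobian_det(ux, uy, 1, 1)

# Push the center pixel 5 pixels to the right, outside the 3x3 field of view.
ux[1][1] = 5.0
det_central = central_jacobian_det(ux, uy, 1, 1)   # unchanged: center is skipped
det_forward = forward_jacobian_det(ux, uy, 1, 1)   # negative: folding detected
```

Because the central difference at the center pixel reads only its four neighbors, `det_central` stays at one no matter how far the center pixel moves, while the forward difference yields a negative determinant for the same field.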

Practical Applications and Future Directions

Learning-based medical image registration has a wide range of applications, including atlas construction, multi-atlas segmentation, motion estimation, and 2D-3D registration. Future research should focus on improving the generalizability and robustness of existing methods across diverse clinical scenarios. Integrating techniques such as contrastive learning and cross-attention into registration pipelines also holds promise for further improvements.

Conclusion

Deep learning is reshaping medical image registration through methods and architectures that improve accuracy and efficiency. By tackling the remaining challenges and exploring new applications, researchers can bring these technologies into clinical practice, with the aim of improving patient outcomes and operational efficiency.