Markerless human pose estimation for biomedical applications: a survey

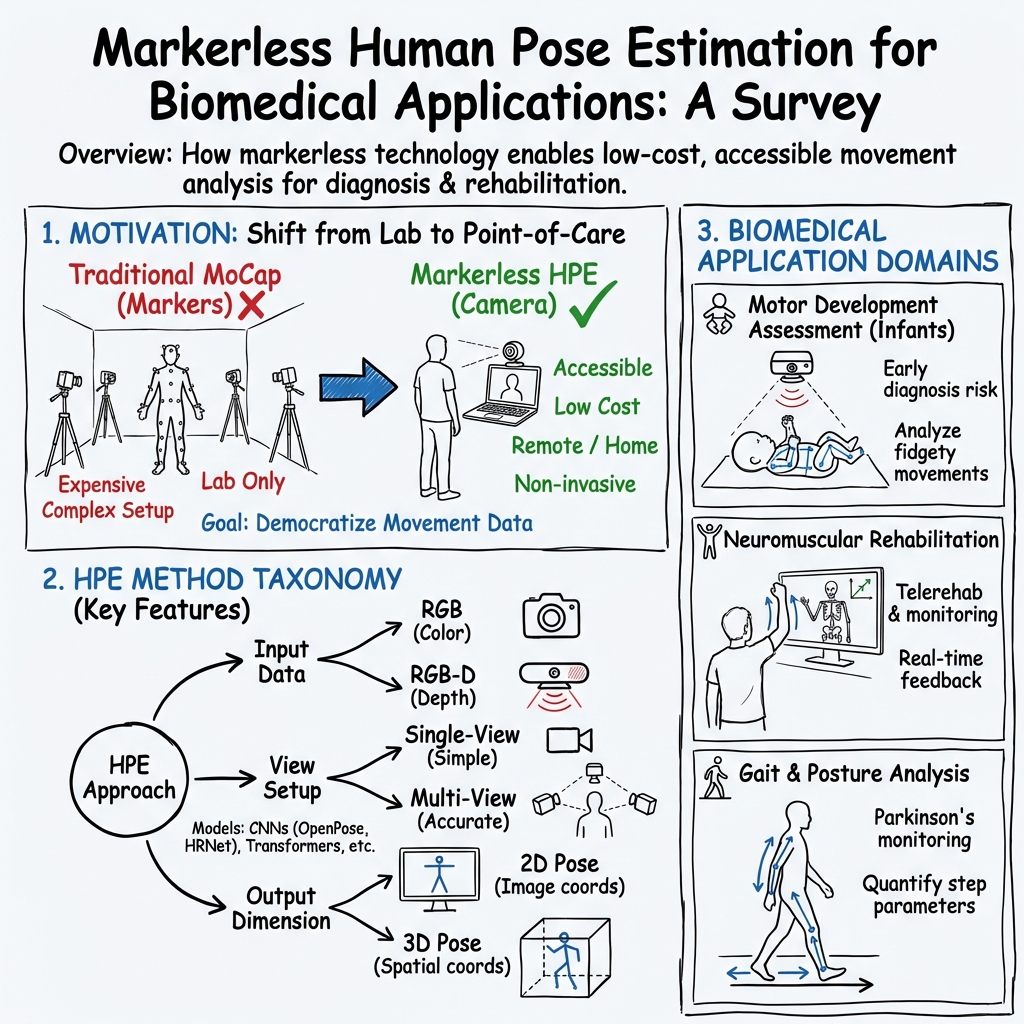

Abstract: Markerless Human Pose Estimation (HPE) has proved its potential to support decision making and assessment in many fields of application. HPE is often preferred to traditional marker-based Motion Capture systems due to the ease of setup, portability, and affordable cost of the technology. However, the exploitation of HPE in biomedical applications is still under investigation. This review aims to provide an overview of current biomedical applications of HPE. In this paper, we examine the main features of HPE approaches and discuss whether or not those features are of interest to biomedical applications. We also identify those areas where HPE is already in use and present the peculiarities and trends followed by researchers and practitioners. We include here 25 approaches to HPE and more than 40 studies of HPE applied to motor development assessment, neuromuscular rehabilitation, and gait & posture analysis. We conclude that markerless HPE offers great potential for extending diagnosis and rehabilitation outside hospitals and clinics, toward the paradigm of remote medical care.

Explain it Like I'm 14

Overview

This paper is a survey, which means it reviews and organizes lots of earlier studies instead of running one new experiment. Its topic is “markerless human pose estimation” (HPE) for health and medicine. That’s a way for computers to find where your body joints (like knees, elbows, and shoulders) are by looking at normal videos, without needing sticky markers on your skin. The authors look at how well this works today, where it’s already being used in healthcare, what the main tools and tricks are, and what still needs improvement.

What questions did the paper ask?

In simple terms, the authors wanted to know:

- What are the main kinds of markerless pose estimation methods, and how do they differ?

- Where in medicine are these methods already being used (for example, with babies, in rehabilitation, or in walking/posture analysis)?

- How good are they for real clinical use, and what trade-offs exist (like accuracy versus cost or ease of use)?

- What trends and future directions could make these tools more helpful in everyday healthcare, including at home?

How did they study it?

This is a review of the field:

- They examined 25 different pose-estimation approaches and over 40 biomedical studies that used them.

- They grouped and compared methods by important features and explained how those choices affect healthcare use.

To make the technical terms approachable, here are the main ideas they explain and compare:

Human pose estimation, in everyday words

- Think of the computer trying to draw a stick figure on a person in a video, putting dots on joints and connecting them.

- “Markerless” means it does this from regular cameras—no special reflective markers, no lab-only equipment.

Key design choices, explained with analogies

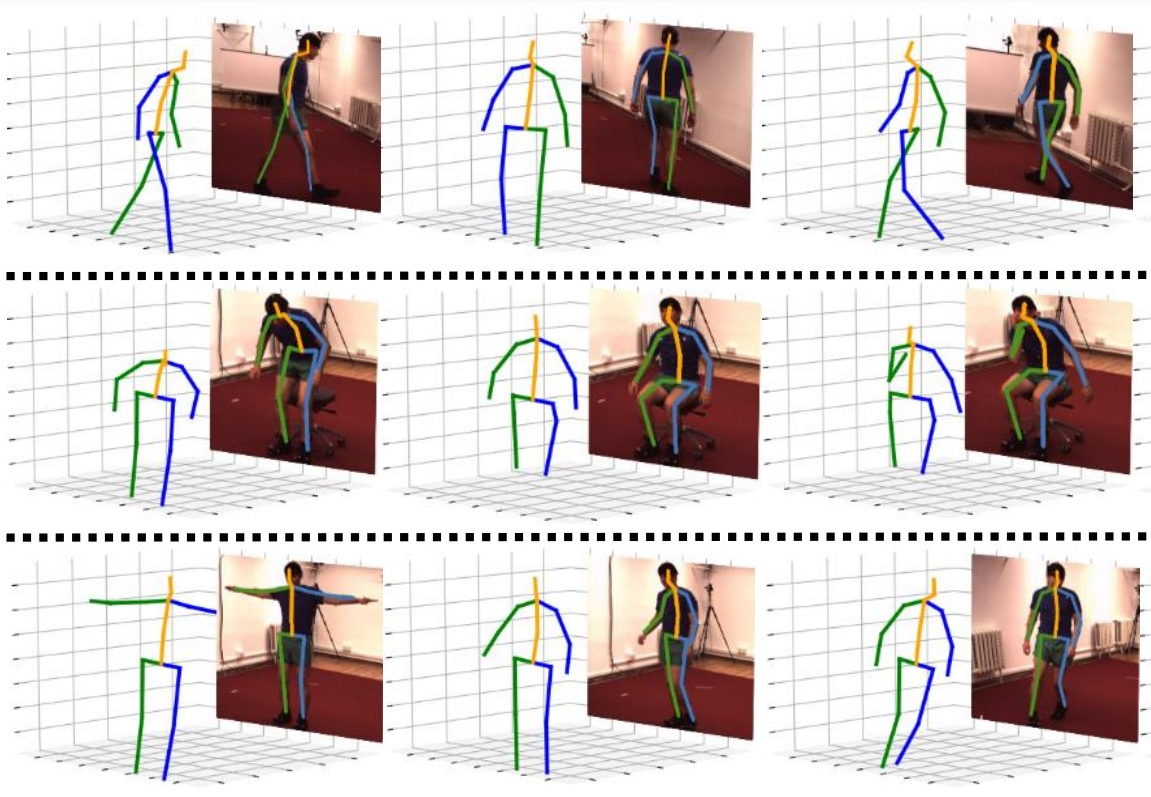

- 2D vs 3D:
  - 2D is like drawing the stick figure on a flat photo.
  - 3D is like placing the stick figure in real space, with depth. 3D is more useful clinically but harder to get right.

- Top-down vs bottom-up:
  - Top-down: first find each person in the image, then place the joints on that person. Like spotting people in a crowd, then labeling their body parts.
  - Bottom-up: first find all joints in the image, then group them into people. Like collecting puzzle pieces first, then assembling the people.

- Single-view vs multi-view:
  - Single-view uses one camera: cheaper and simpler, but depth is guessed.
  - Multi-view uses two or more cameras: by “crossing” lines from each camera (triangulation), it can locate joints in 3D more accurately.

- RGB vs RGB-D:
  - RGB is a normal color camera.
  - RGB-D adds a depth sensor that measures how far things are (like a built-in ruler). This can help with 3D but still struggles when body parts block each other.

- Temporal reasoning:
  - Videos can look “jittery” frame-by-frame. Methods use smoothing or look at several frames in a row to keep the stick figure steady over time.
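For readers curious how multi-view triangulation works, here is a minimal sketch of linear (DLT) triangulation in Python with NumPy. It assumes two already-calibrated cameras with known 3×4 projection matrices and undistorted pixel coordinates of the same joint in each view. The function names, camera parameters, and knee position are illustrative assumptions. They are not taken from any method in the survey.

```python
import numpy as np


def triangulate_dlt(projections, points_2d):
    """Triangulate one 3D point from N >= 2 calibrated views (linear DLT).

    projections: list of 3x4 camera projection matrices.
    points_2d:   list of (u, v) pixel coordinates of the same joint, one per view.
    Returns the 3D point in world coordinates.
    """
    rows = []
    for P, (u, v) in zip(projections, points_2d):
        # Each view gives two linear constraints on the homogeneous point X:
        # u * (P[2] @ X) - P[0] @ X = 0 and v * (P[2] @ X) - P[1] @ X = 0
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    # Least-squares solution: right singular vector for the smallest singular value
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]  # back from homogeneous coordinates


def project(P, X):
    """Project a 3D point with a 3x4 camera matrix to pixel coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Hypothetical setup: two identical cameras, the second 0.5 m to the right of the first
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.5], [0.0], [0.0]])])

knee = np.array([0.1, 0.3, 3.0])  # hypothetical knee position in metres
uv1, uv2 = project(P1, knee), project(P2, knee)
print(triangulate_dlt([P1, P2], [uv1, uv2]))  # approximately [0.1, 0.3, 3.0]
```

With noisy 2D detections, the DLT solution is only a least-squares estimate. Adding more views generally makes it more stable.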

How the review connects to healthcare

- The paper looks at how these choices play out in three areas:
  - Motor development in babies and toddlers
  - Neuromuscular rehabilitation (e.g., after injury or stroke)
  - Gait and posture analysis (e.g., walking patterns)

It also discusses practical realities: what works on smartphones, what needs more cameras, where accuracy is “good enough,” and where it isn’t yet.

What did they find, and why is it important?

Below is a brief, readable summary. Each topic includes what researchers tried and what it means for patients and clinicians.

1) Motor development assessment (especially newborns and infants)

- Goal: Spot early signs of movement problems (like risk of cerebral palsy) by analyzing how babies move.

- What’s hard: Babies are tiny, move unpredictably, and have different body proportions than adults—most AI models were trained on adults.

- What researchers did:
  - Used simple setups (often a camera above a bed) and sometimes depth cameras.
  - Used pose tools like OpenPose, HRNet, and newer infant-specific methods.
  - Focused on “fidgety” and “writhing” movements in early months, which are important clinical signals.
  - Explored at-home videos from phones to make screening more accessible.

- Why it matters: These systems can help flag babies who may need further evaluation, possibly earlier and outside the hospital. That can lead to faster interventions when they matter most.

- Key caution: Doctors need to understand why the AI makes certain calls. Newer work adds visual explanations to improve trust.

2) Neuromuscular rehabilitation

- Goal: Help patients do exercises correctly, track recovery, and even guide robot-assisted therapy.

- What’s hard: People may have unusual body shapes or movement limits (e.g., post-surgery), which can confuse generic pose models. Safety is crucial.

- What researchers did:
  - Built systems that run on phones, browsers, edge devices, or in the cloud to make home use realistic.
  - Mixed RGB and depth cameras when needed for better 3D accuracy.
  - Measured joint angles, detected mistakes, and provided feedback in real time or near-real time.
  - Compared different pose models and found some are fast and accurate enough for clinical angles (like elbows or shoulders).

- Why it matters: Patients could get quality guidance at home, reducing travel and costs. Clinicians can monitor progress more objectively.
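Measuring a joint angle from pose keypoints usually comes down to computing the angle between two limb segments. The sketch below assumes 2D or 3D keypoints for three adjacent joints (e.g., shoulder, elbow, wrist). The function names and the 80–100° target range are hypothetical. They are chosen only to illustrate the kind of feedback rule described above.

```python
import numpy as np


def joint_angle(proximal, joint, distal):
    """Interior angle (degrees) at `joint` between the segments to `proximal` and `distal`.

    Works for 2D or 3D keypoints, e.g. shoulder-elbow-wrist for the elbow.
    """
    a = np.asarray(proximal, dtype=float) - np.asarray(joint, dtype=float)
    b = np.asarray(distal, dtype=float) - np.asarray(joint, dtype=float)
    cos_theta = np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))
    # Clip to guard against rounding slightly outside [-1, 1]
    return float(np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0))))


def range_feedback(angle_deg, target_min, target_max):
    """Hypothetical feedback rule: compare a repetition's angle with a prescribed range."""
    if angle_deg < target_min:
        return "below target range"
    if angle_deg > target_max:
        return "above target range"
    return "ok"


# 2D keypoints in metres (hypothetical): upper arm vertical, forearm horizontal
shoulder, elbow, wrist = (0.0, 0.0), (0.0, -0.3), (0.3, -0.3)
angle = joint_angle(shoulder, elbow, wrist)
print(round(angle, 1), range_feedback(angle, 80, 100))  # 90.0 ok
```

The function returns the interior angle between segments, which is 180° when the arm is straight. Clinical goniometry usually reports elbow flexion as 180° minus that value. The angle convention therefore has to be fixed per joint before comparing results against clinical norms.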

3) Gait and posture analysis

- Goal: Measure how people walk and stand to spot problems and plan treatment.

- What’s hard: Self-occlusion (body parts hiding others) with a single camera; getting true 3D angles; precise force/torque estimates often need additional sensors.

- What researchers did:
  - Used one or two cameras, often placed to capture side and front views, and focused on key angles rather than the whole body.
  - Found that while markerless systems are less precise than lab-grade motion capture, many still perform well enough for practical clinical measures.
  - Explored ways to combine 2D video with models or depth to estimate gait parameters over time.

- Why it matters: Clinics and even small practices can assess gait more easily, and patients can be monitored outside the lab.
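Spatiotemporal gait parameters are often derived from gait events detected in keypoint trajectories. The sketch below uses a simple coordinate-based heuristic, which is an assumption here rather than a method from the survey: in a sagittal view, heel strike is approximated as the frame where the ankle is furthest ahead of the hip. It also assumes the person walks toward +x in the image and that the trajectories are already smoothed. The names and thresholds are hypothetical.

```python
import numpy as np


def heel_strikes(ankle_x, hip_x, fps, min_interval_s=0.4):
    """Hypothetical heel-strike detector for one foot from sagittal 2D keypoints.

    A heel strike is approximated as a local maximum of the ankle's forward
    position relative to the hip. Assumes walking toward +x in the image.
    """
    rel = np.asarray(ankle_x, dtype=float) - np.asarray(hip_x, dtype=float)
    min_gap = int(min_interval_s * fps)  # ignore peaks closer than this (frames)
    events = []
    for i in range(1, len(rel) - 1):
        is_peak = rel[i] > rel[i - 1] and rel[i] >= rel[i + 1]
        if is_peak and rel[i] > 0 and (not events or i - events[-1] >= min_gap):
            events.append(i)
    return np.array(events, dtype=int)


def cadence_steps_per_min(strike_frames, fps):
    """Cadence from one foot's heel strikes, assuming left/right steps are symmetric."""
    stride_time_s = np.mean(np.diff(strike_frames)) / fps
    return 2 * 60.0 / stride_time_s  # two steps per stride


# Synthetic example: 5 s at 100 fps, one stride per second
fps = 100
t = np.arange(500) / fps
hip_x = 1.0 * t                                # pelvis moving forward at 1 m/s
ankle_x = hip_x + 0.3 * np.sin(2 * np.pi * t)  # ankle swinging ahead of and behind the hip
strikes = heel_strikes(ankle_x, hip_x, fps)
print(strikes, cadence_steps_per_min(strikes, fps))  # [ 25 125 225 325 425] 120.0
```

Real recordings would need several additional steps before such cadence values are used clinically: noise filtering, handling of walking direction, and validation against a reference system.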

General lessons and trade-offs

- Accuracy vs accessibility: Multi-camera or lab systems are more accurate but cost more and require expertise. Single-camera, phone-based systems are cheaper and easier but less precise.

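- 2D-to-3D “lifting” from a single camera can work, but it infers depth from learned patterns rather than geometry, so it is only an estimate. Multi-view 3D, which triangulates joints from several cameras, is more reliable.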

- Depth cameras help but aren’t a magic fix—occlusions and noise still matter.

- For some tasks, “good enough” accuracy is already helpful, especially if the system assists a clinician rather than replacing one.

- For detailed physics (like forces and torques), current markerless methods often aren’t precise enough without extra sensors.
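The point about depth cameras can be made concrete with the standard pinhole back-projection, which turns a 2D keypoint plus a depth reading into a 3D point. The intrinsics and the synthetic depth map below are hypothetical. The example illustrates the self-occlusion problem. When the wrist is hidden behind the torso, the depth sensor only sees the torso, so the back-projected wrist lands on the torso surface (a “flat pose” error).

```python
import numpy as np


def backproject(u, v, depth_m, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) with metric depth into camera coordinates."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return np.array([x, y, depth_m])


def depth_at(depth_map, u, v, window=2):
    """Median depth around a keypoint, ignoring zeros (missing depth).

    Assumes (u, v) is at least `window` pixels away from the image border.
    """
    patch = depth_map[v - window:v + window + 1, u - window:u + window + 1]
    valid = patch[patch > 0]
    return float(np.median(valid)) if valid.size else float("nan")


fx = fy = 600.0
cx, cy = 320.0, 240.0                  # hypothetical intrinsics
depth_map = np.full((480, 640), 3.0)   # background wall at 3.0 m
depth_map[150:400, 250:390] = 2.0      # torso surface facing the camera

# The 2D detector places the hidden wrist at pixel (320, 300), on top of the torso.
# Its true depth might be ~2.2 m, but the sensor only sees the torso in front of it.
wrist_3d = backproject(320, 300, depth_at(depth_map, 320, 300), fx, fy, cx, cy)
print(wrist_3d)  # approximately [0.0, 0.2, 2.0]: the wrist is flattened onto the torso
```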

What’s the big picture?

- Markerless HPE can move parts of diagnosis and rehabilitation out of specialized labs and into homes or regular clinics. This supports remote care, which is more convenient and can reach more people.

- It’s cheaper, easier to set up, and less intrusive than traditional marker-based systems.

- It’s not perfect yet. Future work should:
  - Improve reliability for babies and for patients with atypical body shapes.
  - Make models faster and lighter for phones and low-cost devices.
  - Provide better explanations so clinicians trust and understand results.
  - Bridge the gap for biomechanics that need very precise 3D and force estimates.

In short, the survey shows that markerless pose estimation is already useful in several medical areas and has strong potential to make care more accessible—especially through remote monitoring and at-home assessments—while highlighting where more progress is needed to meet clinical standards fully.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

The following list distills what remains missing, uncertain, or unexplored in the surveyed literature on markerless HPE for biomedical applications, with concrete directions for future research.

- Clinical accuracy thresholds: Define task-specific error tolerances (e.g., allowable joint angle error, spatiotemporal gait parameter error) required for diagnosis, triage, or therapy decisions, rather than reporting only pose-estimation metrics.

- Benchmark datasets in clinical populations: Create standardized, publicly available datasets for infants, toddlers, and diverse patient groups (stroke, spinal cord injury, obesity, amputees) with synchronized MoCap/force plates and clinical labels to enable reproducible comparisons.

- Domain shift and generalization: Develop and validate methods that robustly transfer from adult-trained models to infants/toddlers, atypical body habitus, and patients using assistive devices (walkers, canes, prostheses, wheelchairs), including systematic evaluation protocols.

- Infant-specific modeling: Build and validate age-specific skeletal models and priors (e.g., for 0–12 months and 1–4 years), with 3D ground truth, to reduce reliance on adult skeletons and 2D-only proxies; quantify sim-to-real gaps for SMIL/SMPL-based approaches.

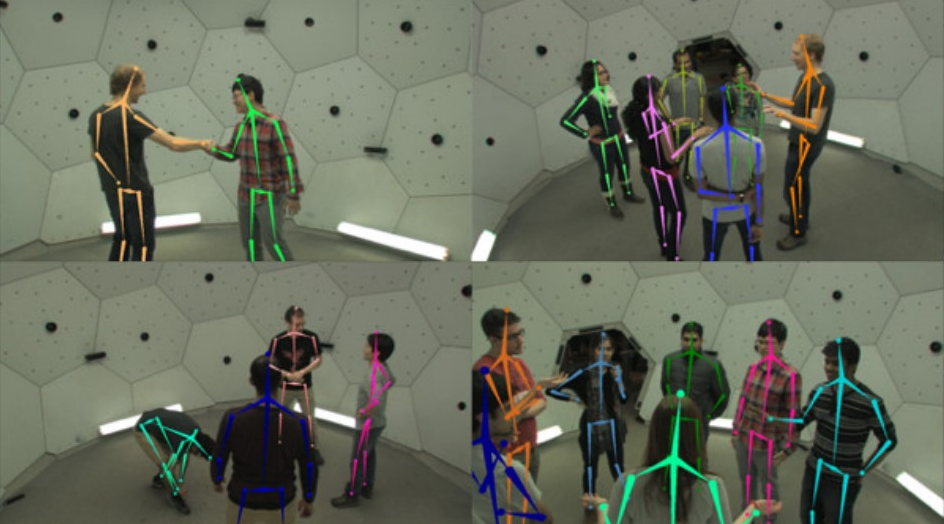

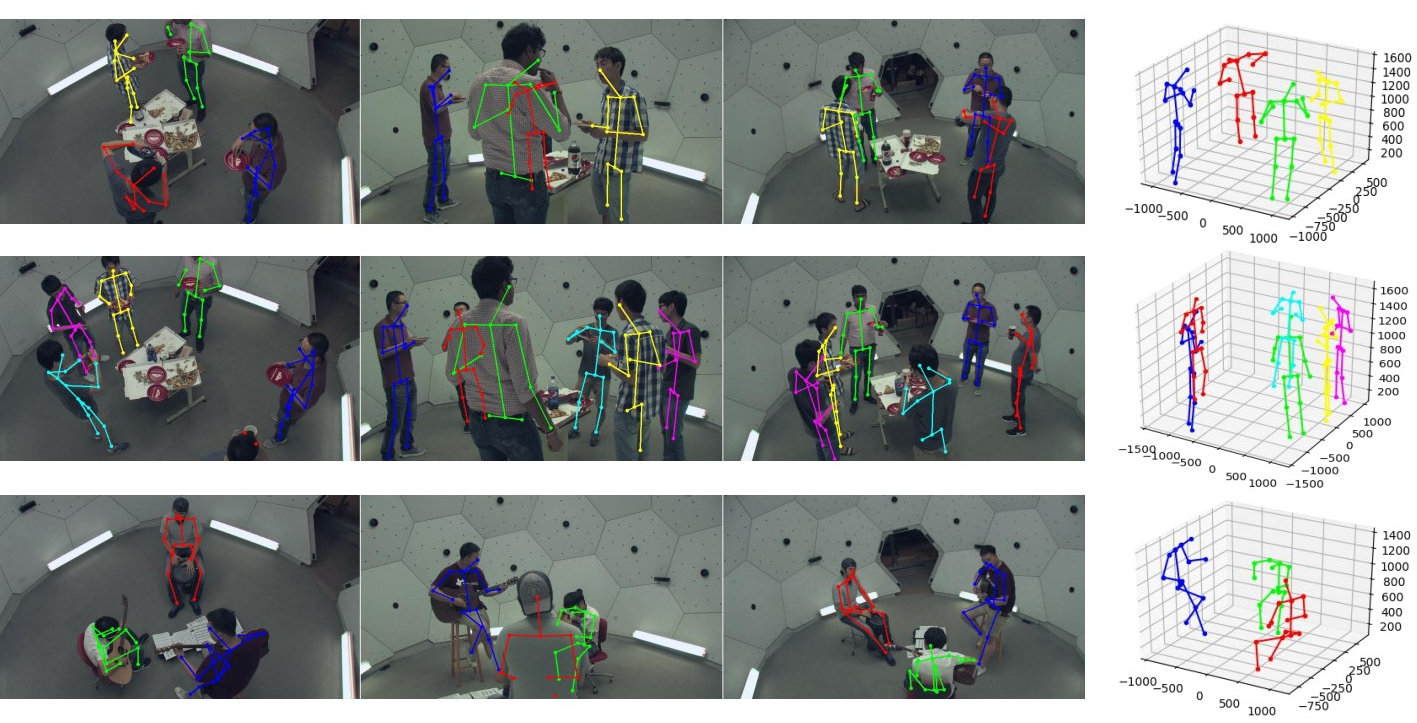

- Multi-view association in clinical scenes: Design calibration-light, robust cross-view matching that performs reliably under clutter, reflective surfaces, medical equipment, and partial views, given that optimal multi-person matching across views is NP-hard in general and must be approximated in practical clinical settings.

- Monocular 3D “lifting” reliability: Characterize failure modes and clinical impact of monocular 3D lifting (no geometric guarantees), including uncertainty-aware outputs and thresholds for when results are too unreliable for clinical use.

- Temporal consistency without latency: Develop online temporal models that reduce jitter and preserve range of motion without introducing clinically unacceptable latency, with explicit latency–smoothness trade-off benchmarks.

- RGB-D self-occlusion artifacts: Address “flat pose” errors when limbs are occluded in depth, via learned occlusion reasoning or physics/biomechanics priors; compare cost–benefit of multi-view RGB-D versus improved monocular RGB-D.

- Occlusion robustness in practice: Quantify performance under occlusions from therapists, robots, casts, hospital gowns, and cables; devise targeted training/augmentation and occlusion-aware inference strategies for these conditions.

- Explainability for clinicians: Standardize interpretable outputs (e.g., body-part attributions, salient motion segments) linked to clinical constructs (e.g., fidgety movements), and assess whether explanations improve trust and decision-making.

- Kinetics/inverse dynamics without force plates: Advance and validate methods to estimate ground reaction forces and joint torques from video-only or low-cost sensors, with rigorous comparisons to gold standards in patient cohorts.

- Home deployment and calibration: Provide auto-calibration, camera placement guidance, and cross-session normalization to support longitudinal monitoring despite variable home environments and camera repositioning.

- Uncertainty quantification and propagation: Calibrate joint-level confidence measures, propagate uncertainty to derived metrics (e.g., angles, gait events), and define decision rules for flagging unreliable sessions.

- Cross-device variability: Establish calibration and normalization procedures to ensure comparability across smartphones/cameras with different lenses, frame rates, and rolling shutter artifacts; quantify device-induced biases.

- Skeletal model standardization: Harmonize joint definitions (e.g., 14 vs 25+ joints) and provide mappings to biomechanical joint centers; quantify the effect of model choice on clinically relevant angles and spatiotemporal parameters.

- Clinically meaningful evaluation: Report test–retest reliability (ICC), minimal detectable change (MDC), and minimal clinically important difference (MCID) for derived measures, not only average pose errors.

- Prospective outcome studies: Conduct multi-center, prospective trials to assess whether HPE-enabled assessments improve patient outcomes, access, and cost-effectiveness versus standard care.

- Privacy-preserving pipelines: Develop on-device processing, federated learning, and secure data handling tailored to infant and home videos; evaluate performance–privacy trade-offs.

- Fairness and bias analyses: Measure and mitigate performance disparities across skin tones, clothing, cultural contexts, and socioeconomic settings; publish stratified performance metrics and bias mitigation strategies.

- Telerehabilitation feedback safety: Validate closed-loop exercise guidance (error detection/correction) with thresholds tied to injury risk; measure adherence and safety in unsupervised home use.

- Robot-assisted rehab safety: Formalize pose estimation error bounds compatible with safe robot control, including failover strategies when confidence drops; conduct hardware-in-the-loop safety tests.

- Real-time performance budgets: Quantify CPU/GPU/memory/power requirements and achievable FPS on commodity home devices; provide model compression/distillation strategies that preserve clinical fidelity.

- Workflow and EHR integration: Define data schemas, reporting formats, and audit trails for clinical documentation; create APIs and interoperability standards for integration with EHRs and clinical decision support.

- Top-down vs bottom-up in clinics: Provide empirical guidance on method choice under clinical trade-offs (occlusion, false positives from equipment, variable numbers of people), including hybrid strategies.

- Multimodal fusion: Systematically evaluate accuracy gains from combining HPE with IMUs, pressure insoles, or ambient sensors; develop principled fusion methods and ablation studies showing incremental benefit.

- Annotation protocols and tools: Establish labeling guidelines for clinical video (including difficult cases), training for annotators, and inter-rater reliability metrics; release tooling and annotated benchmarks.

- Assistive device handling: Create detectors and priors that explicitly model walkers, canes, orthoses, prosthetics, and wheelchairs, and quantify their effects on pose accuracy and derived metrics.

- Longitudinal personalization: Build adaptive models that learn a patient’s baseline and detect meaningful change over time, with drift detection and calibration for camera/session variability.

- Regulatory pathways: Map validation evidence to medical device regulations (e.g., FDA/CE, IEC 62304, ISO 14971); define performance documentation and risk management tailored to HPE software.

- Standardized smartphone acquisition for infants: Specify distance, angle, lighting, and minimal duration for home recordings; implement automated quality control to reject unusable videos.

- Validation of calibration-less multi-view: Test methods like FLEX/MetaPose in real clinical geometries (non-ideal baselines, rolling shutters, unsynchronized cameras) and quantify robustness.

- Beyond kinematics: Validate HPE-derived metrics for tremor (amplitude/frequency), bradykinesia, and other fine-motor signs against clinical scales (e.g., UPDRS), including sensitivity to change.

- Posture and spinal assessment: Determine accuracy of spinal curvature and alignment measures from external keypoints and define when additional sensing is necessary.

- Missing/occluded keypoint handling: Develop biomechanically constrained imputation and quantify the downstream impact on gait events, angles, and risk scores.

- Dataset diversity and attire: Curate datasets reflecting hospital attire, casts, bandages, and cultural clothing; evaluate and mitigate domain shifts due to garments and accessories.
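Several of the gaps above call for clinically oriented reliability statistics. As an illustration, the sketch below computes two of them. The first is ICC(2,1): two-way random effects, absolute agreement, single measurement, in the Shrout–Fleiss formulation. The second is the 95% minimal detectable change, MDC95 = 1.96 × √2 × SEM, with SEM = SD × √(1 − ICC). The choice of ICC form and of SEM estimator are assumptions. Other forms (e.g., ICC(3,1)) are also used in practice, and the knee-flexion data are made up.

```python
import numpy as np


def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores: array of shape (n_subjects, k_sessions), e.g. an angle per patient per session.
    """
    x = np.asarray(scores, dtype=float)
    n, k = x.shape
    grand = x.mean()
    ss_rows = k * np.sum((x.mean(axis=1) - grand) ** 2)  # between subjects
    ss_cols = n * np.sum((x.mean(axis=0) - grand) ** 2)  # between sessions
    ss_err = np.sum((x - grand) ** 2) - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


def mdc95(scores, icc):
    """MDC at 95% confidence, using SEM = SD * sqrt(1 - ICC) with SD over all scores."""
    sem = np.std(scores, ddof=1) * np.sqrt(1.0 - icc)
    return 1.96 * np.sqrt(2.0) * sem


# Hypothetical test-retest data: peak knee flexion (deg), 5 patients x 2 sessions
rom = np.array([[62.0, 60.5],
                [55.0, 56.0],
                [70.0, 71.5],
                [48.0, 47.0],
                [65.0, 66.0]])
icc = icc_2_1(rom)
print(f"ICC(2,1) = {icc:.3f}, MDC95 = {mdc95(rom, icc):.2f} deg")
```

ICC(2,1) measures absolute agreement. A constant offset between sessions (e.g., from a camera moved between visits) therefore lowers it even when patients keep their rank order.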

Practical Applications

Immediate Applications

The following items can be deployed today using existing markerless HPE methods and workflows described in the paper. Each item notes sector(s), indicative tools/workflows, and key assumptions/dependencies that may affect feasibility.

- Home-based infant general movement assessment (GMA) via smartphone video: healthcare, mobile software; OpenPose/EfficientPose/HRNet for 2D single-view infant pose (7–25 joints), pose-derived features (e.g., HOJO2D, HOJD2D), clinician dashboard for FM scoring; assumes standardized recording protocols (camera position, lighting), domain adaptation to infants (non-adult proportions), privacy/consent, sufficient video quality and parent guidance.

- NICU limb pose tracking for preterm infants (bed-mounted RGB-D): healthcare (neonatology), hospital IT; Kinect/RealSense, Mask R-CNN or JFC-based limb pose, RGB–depth alignment, periodic recordings; assumes sterile device placement, sensor calibration, limited occlusion, and workflow integration with NICU routines.

- Clinic gait and posture evaluation with two low-cost RGB cameras (sagittal/coronal): healthcare (physiotherapy/orthopedics); OpenPose or DeepLabCut for 2D single-view poses, planar constraints, knee/hip angle extraction; assumes controlled walkway, occlusion management (two-camera placement), tolerance for higher error vs. marker-based systems.

- Telerehabilitation exercise monitoring with lightweight models: healthcare (rehab), patient apps; HRNet variants (EESD), MobileNet/ESPNetv2 modules, real-time browser deployment (PoseNet via TensorFlow.js/WebGL) for feedback and error detection; assumes modern smartphones/laptops, stable connectivity, clear backgrounds, sufficient frame rate for temporal consistency.

- Edge-based rehab monitoring on dedicated devices: healthcare devices, IoT; DeepRehab-style fully convolutional 2D HPE (ResNet101 backbone) on Edge TPU with companion smartphone app; assumes procurement and maintenance of edge hardware, local power, on-device updates, environment free of heavy occlusions.

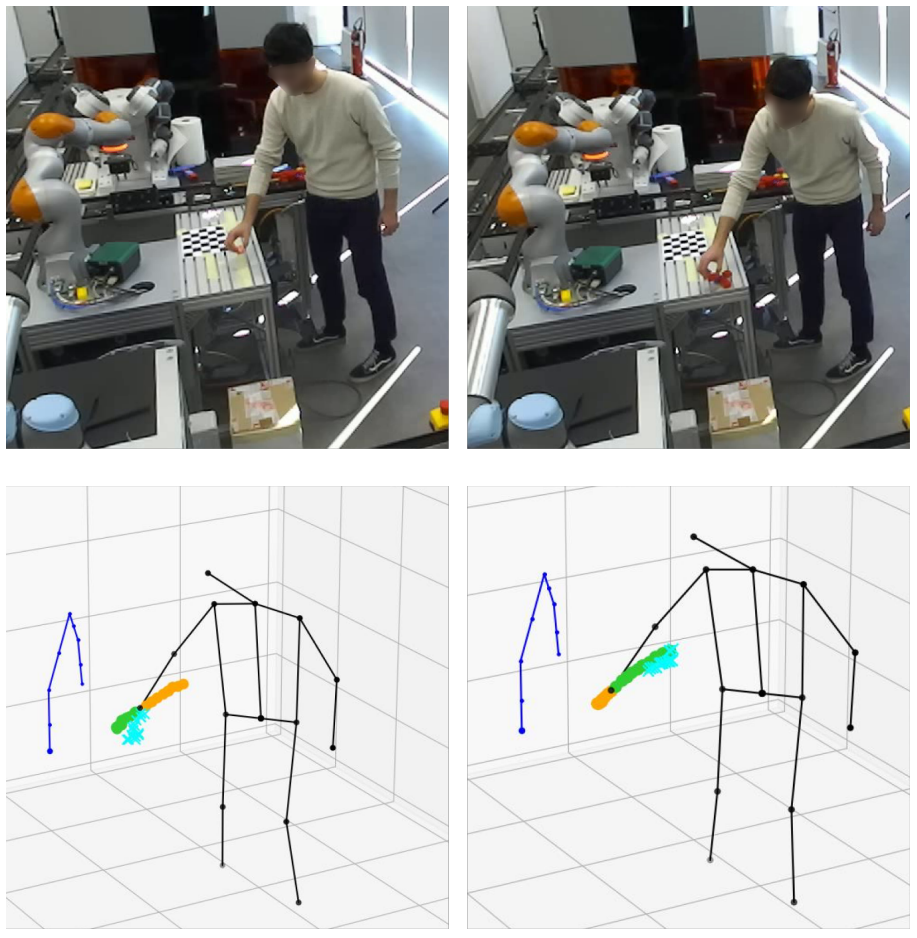

- Robot-assisted therapy safety monitoring (upper-limb): robotics in healthcare; OpenPose for upper-body joint angles at ~10 fps, depth channel mapping for 3D angles and safety thresholds; assumes accurate angle estimation in clinical range, depth sensing availability, clinical validation of thresholds, safety certification of the integrated system.

- Cloud-supported motion assessment pipeline: healthcare IT/cloud; smartphone capture → OpenPose → heuristic or learned 3D lifting (e.g., VideoPose3D) → joint angles → clinician-readable report; assumes secure data transfer, GDPR/HIPAA compliance, latency tolerances for non-real-time review, robust anonymization.

- Fall detection in home security using markerless HPE: consumer/home security; top-down person detection followed by pose-based fall classification; assumes permission and privacy controls, indoor lighting adequacy, tolerance to false positives/negatives, model robustness to household occlusions.

- Sports coaching for planar tasks (jumping, running) with sagittal 2D HPE: sports/fitness industry; DeepLabCut/OpenPose for 2D poses, optical-flow patch CNNs to derive gait/jump descriptors; assumes consistent camera placement, lighting, athlete compliance, acceptance of moderate error.

- Rapid video coding in developmental research: academia (developmental psychology/neuroscience); batch pose extraction (OpenPose/HRNet), semi-supervised infant body parsing (SiamParseNet + FVGAN) to reduce manual labeling burden; assumes IRB approvals, data governance, annotator validation workflows, computational resources.

- CP risk scoring from infant pose sequences: healthcare (pediatrics/neonatology); EfficientPose + GCN/CTR-GCN on infant joint trajectories for binary/graded risk prediction with visualization of salient movements; assumes representative training data, clinician-in-the-loop review, model interpretability aids, bias assessment.

- Workplace posture screening via RGB cameras: occupational health; 2D HPE for posture metrics, ergonomic risk flags and dashboards; assumes employee consent, privacy safeguards, standardized camera setups, policy-compliant data retention.
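Pose-derived features such as HOJO2D and HOJD2D summarize joint motion as histograms. The sketch below computes a simplified displacement histogram for one joint. Frame-to-frame movement directions are binned into 8 sectors and weighted by movement magnitude. It is an illustrative approximation, not the published HOJD2D definition. The bin count, weighting, and normalization are assumptions.

```python
import numpy as np


def displacement_histogram(track, n_bins=8):
    """Simplified, HOJD-style feature for one joint trajectory (not the published definition).

    track: array (T, 2) of 2D keypoint positions over T frames, in image
           coordinates (y axis pointing down).
    Returns an n_bins histogram of displacement directions, weighted by
    displacement magnitude and normalized to sum to 1 (all zeros if the joint never moves).
    """
    d = np.diff(np.asarray(track, dtype=float), axis=0)  # (T-1, 2) frame-to-frame motion
    angles = np.arctan2(d[:, 1], d[:, 0])                # direction in [-pi, pi]
    magnitudes = np.hypot(d[:, 0], d[:, 1])
    hist, _ = np.histogram(angles, bins=n_bins, range=(-np.pi, np.pi), weights=magnitudes)
    total = hist.sum()
    return hist / total if total > 0 else hist


# Hypothetical wrist track (pixels) over 5 frames
wrist = np.array([[100, 200], [102, 201], [104, 202], [104, 205], [103, 209]])
print(np.round(displacement_histogram(wrist), 3))
```

A per-video feature vector could then be formed by concatenating the histograms of all tracked joints before passing it to a classifier.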

Long-Term Applications

These opportunities require further research, scaling, validation, and/or policy frameworks before widespread deployment.

- Hospital-at-home multiview RGB-D motion labs for comprehensive remote diagnostics: healthcare; synchronized consumer-grade camera arrays (multiview RGB/RGB-D), PlaneSweepPose/FasterVoxelPose for robust 3D, clinician dashboards; depends on calibration wizards, affordable hardware bundles, standardized home protocols, service reimbursement, remote technical support.

- Plug-and-play generalizable 3D HPE in unconstrained environments: software/AI platforms; MetaPose/FLEX-style models with attention and meta-learning to reduce calibration and labeled-data needs; depends on larger, diverse training corpora (infants, elderly, post-surgery), robust domain adaptation, rigorous external validation.

- Clinically validated inverse dynamics (forces/torques) from markerless HPE: healthcare biomechanics; fusing 3D HPE with learned ground-reaction estimation or minimal sensor augmentation to replace/augment force plates; depends on multimodal fusion accuracy, longitudinal validation against gold standards, regulatory clearance.

- Closed-loop control of exoskeletons and assistive robots via markerless HPE: robotics/medical devices; low-latency multiview 3D estimation with temporal filtering (Kalman, VideoPose3D) feeding controller constraints; depends on sub-50 ms end-to-end latency, safety certs, fail-safes for occlusion, robust edge inference.

- Automated screening for neurodegenerative diseases from gait using smartphones: healthcare/public health; longitudinal gait feature extraction (stride variability, asymmetry) via HPE, population-scale risk models; depends on large, diverse cohorts, confounder control (footwear, flooring), clinical studies demonstrating predictive value.

- Standardized infant pose datasets and benchmarks for global neonatal screening: academia/healthcare consortia; shared labeled datasets (0–12 months) with pose, movement categories, outcomes; depends on multicenter collaborations, ethical approvals, de-identification infrastructure, annotation standards.

- Telehealth standards and reimbursement policy for markerless motion analysis: policy/healthcare payers; guidelines for camera setups, accuracy thresholds, clinician workflows, CPT codes; depends on cost-effectiveness evidence, stakeholder consensus, privacy frameworks, liability clarity.

- Privacy-preserving, on-device HPE with federated learning: software/edge AI; model training across clinics/devices without centralizing raw video, secure aggregation; depends on edge compute capability, federated optimization robustness, differential privacy guarantees, IT acceptance.

- Multi-modal fusion (RGB-D + IMU) to overcome occlusions and improve accuracy: medical devices/software; wearable IMUs paired with cameras, alignment algorithms for robust 3D reconstruction; depends on user compliance with wearables, synchronization protocols, fusion models.

- Patient-specific digital twins for personalized rehab planning: healthcare/digital health; biomechanical models parameterized by patient HPE data to simulate therapies and predict outcomes; depends on modeling fidelity, integration with EMR, clinical validation of benefits.

- Community-based fall detection networks with governance frameworks: public health/smart cities; ambient cameras in shared spaces with strict privacy/consent, pose-based risk alerts; depends on public acceptance, privacy-by-design architecture, clear governance and opt-out mechanisms.

- Automated clinical documentation and billing from motion metrics: healthcare operations; standardized motion summaries auto-attached to EMR, coding support for tele-rehab sessions; depends on policy alignment, auditability, interoperability (HL7/FHIR), clinician trust.

- Education and ergonomics programs leveraging low-cost HPE kits: education/occupational health; school PE assessment and workplace training using 2D HPE; depends on educator training, simple deployment guides, consent policies, curriculum integration.
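Temporal filtering such as the Kalman filter mentioned above can be sketched for a single keypoint coordinate with a constant-velocity model. The filter is causal (it only uses past and current frames), so it adds no look-ahead latency. Stronger smoothing still makes the estimate lag behind fast movements. The noise parameters and the synthetic wrist trajectory below are assumptions, to be tuned for a given detector and frame rate.

```python
import numpy as np


def kalman_smooth_1d(z, dt, process_var, meas_var):
    """Causal constant-velocity Kalman filter for one keypoint coordinate.

    z:           noisy per-frame measurements (e.g. the wrist's x pixel coordinate).
    dt:          time between frames in seconds.
    process_var: variance of the assumed random acceleration (units^2 / s^4);
                 larger values track fast motion with less lag but smooth less.
    meas_var:    variance of the keypoint detector noise (units^2);
                 larger values give smoother but laggier output.
    """
    F = np.array([[1.0, dt], [0.0, 1.0]])      # state: [position, velocity]
    H = np.array([[1.0, 0.0]])                 # only the position is observed
    Q = process_var * np.array([[dt**4 / 4, dt**3 / 2],
                                [dt**3 / 2, dt**2]])  # white-acceleration model
    R = np.array([[meas_var]])
    x = np.array([z[0], 0.0])                  # start at the first measurement, at rest
    P = np.eye(2) * 1e3                        # large initial uncertainty
    out = []
    for zk in z:
        # Predict the next state from the motion model
        x = F @ x
        P = F @ P @ F.T + Q
        # Correct with the current frame only (no future frames, so no look-ahead delay)
        y = zk - H @ x                         # innovation
        S = H @ P @ H.T + R
        K = P @ H.T / S                        # Kalman gain, shape (2, 1)
        x = x + (K * y).ravel()
        P = (np.eye(2) - K @ H) @ P
        out.append(x[0])
    return np.array(out)


# Hypothetical example: slow wrist motion with ~2 px detector jitter at 30 fps
rng = np.random.default_rng(0)
fps = 30
t = np.arange(600) / fps
truth = 320 + 20 * np.sin(2 * np.pi * 0.2 * t)
noisy = truth + rng.normal(0.0, 2.0, size=t.size)
smooth = kalman_smooth_1d(noisy, 1 / fps, process_var=200.0**2, meas_var=2.0**2)
```

Raising `process_var` lets the estimate follow fast movements with less lag, at the cost of passing through more jitter. Raising `meas_var` does the opposite. This is the latency–smoothness trade-off in miniature.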

Each long-term application will benefit from ongoing advances noted in the paper—better multiview triangulation (PlaneSweepPose/FasterVoxelPose), temporal consistency (VideoPose3D), transformer-based 2D HPE, and models designed for limited labels (weakly/semi-supervised)—as well as sector-specific validation, standards, and infrastructure.
