- The paper introduces Tree-Planner, a three-phase approach integrating plan sampling, action tree construction, and grounded decision-making for efficient task planning.

- It reduces token consumption by up to 92.2% and the number of error corrections by 40.5% compared to existing methods.

- The hierarchical action tree structure aggregates similar plan prefixes, enabling efficient exploration of alternative plans without redundant computations.

Tree-Planner: Efficient Close-loop Task Planning with LLMs

Introduction

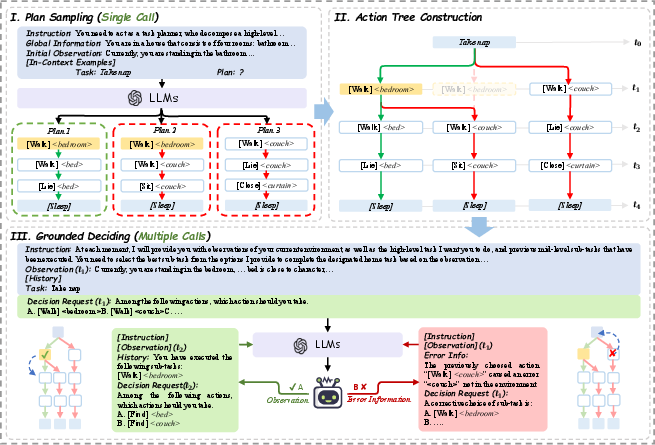

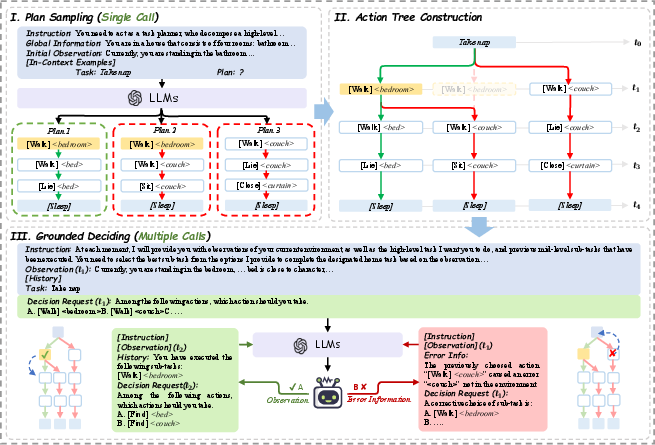

The paper "Tree-Planner: Efficient Close-loop Task Planning with LLMs" explores an innovative approach to optimize task planning using LLMs. Traditional LLM-based planning techniques suffer from high token consumption and inefficient error correction, which limit their performance in large-scale applications. Tree-Planner proposes a three-phase framework to mitigate these limitations by integrating plan sampling, action tree construction, and grounded decision-making into a cohesive system.

Methodology

Plan Sampling

Plan sampling is the first phase, in which the LLM generates several candidate task plans. Drawing on the commonsense reasoning of pre-trained LLMs, Tree-Planner elicits viable plans through prompts that combine four parts: instructions, global environmental information, initial observations, and in-context examples. The goal of sampling is a diverse set of plans from which the later phases can select the best one.
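The sampling step can be sketched as follows. This is a minimal illustration, not the authors' implementation: the prompt layout, the function names (`build_prompt`, `parse_plan`, `sample_plans`), and the `generate` callable standing in for an LLM are all assumptions, and the canned completions are made up for the demo.

```python
import itertools
from typing import Callable, List


def build_prompt(instruction: str, global_info: str,
                 observation: str, examples: List[str]) -> str:
    """Join the four prompt parts named in the text (layout is hypothetical)."""
    parts = [
        "Examples:\n" + "\n\n".join(examples),
        "Environment: " + global_info,
        "Initial observation: " + observation,
        "Task: " + instruction,
        "Plan:",
    ]
    return "\n".join(parts)


def parse_plan(text: str) -> List[str]:
    """Treat every non-empty line of a completion as one action."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def sample_plans(generate: Callable[[str], str],
                 prompt: str, n: int) -> List[List[str]]:
    """Query the (hypothetical) LLM n times and parse each completion."""
    return [parse_plan(generate(prompt)) for _ in range(n)]


# Stand-in for a real LLM: cycles through canned completions.
_canned = itertools.cycle([
    "walk to kitchen\ngrab cup\npour water",
    "walk to kitchen\ngrab cup\npour milk",
    "walk to kitchen\nopen fridge\ngrab milk",
])


def fake_llm(prompt: str) -> str:
    return next(_canned)


prompt = build_prompt(
    instruction="get a drink",
    global_info="rooms: kitchen, bedroom",
    observation="you are in the bedroom",
    examples=["Task: sit down\nPlan:\nwalk to chair\nsit on chair"],
)
plans = sample_plans(fake_llm, prompt, n=3)
```

With a real model, diversity would come from sampling at a non-zero temperature rather than from canned outputs.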

Action Tree Construction

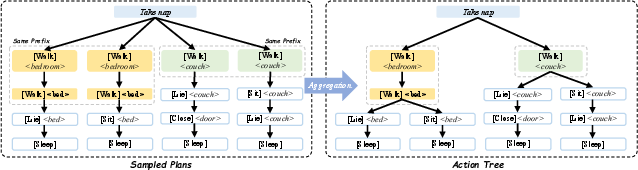

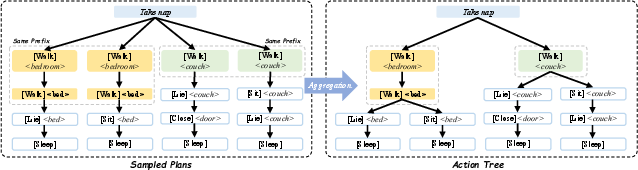

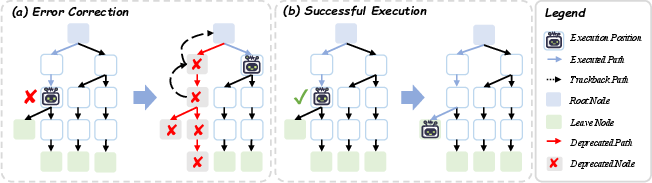

Action tree construction is the core of Tree-Planner. This phase organizes the sampled plans into a hierarchical tree: plans that share a prefix of actions share the corresponding path from the root, and the tree branches only where plans diverge. Moving from a linear to a tree-based representation lets the planner explore alternative plans without re-executing identical action sequences.

Figure 1: The process of constructing an action tree. Left: each path represents a sampled plan. Right: plans with similar prefixes are aggregated.
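The aggregation shown in Figure 1 behaves like building a prefix tree (trie) over action sequences. The sketch below assumes that two actions are merged only when their strings match exactly; the `ActionNode` class and helper names are illustrative and not taken from the paper.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ActionNode:
    action: str
    children: Dict[str, "ActionNode"] = field(default_factory=dict)


def build_action_tree(plans: List[List[str]]) -> ActionNode:
    """Merge plans that share a prefix into a single path from the root."""
    root = ActionNode("[ROOT]")
    for plan in plans:
        node = root
        for action in plan:
            # Reuse the child if this action already follows the same prefix.
            if action not in node.children:
                node.children[action] = ActionNode(action)
            node = node.children[action]
    return root


def count_nodes(node: ActionNode) -> int:
    """Count all nodes in the tree, including the root."""
    return 1 + sum(count_nodes(child) for child in node.children.values())


example_plans = [
    ["walk to kitchen", "grab cup", "pour water"],
    ["walk to kitchen", "grab cup", "pour milk"],
    ["walk to kitchen", "open fridge", "grab milk"],
]
tree = build_action_tree(example_plans)
```

In this example the three plans contain nine actions in total, but the tree stores only six action nodes plus the root, because the shared prefixes are stored once.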

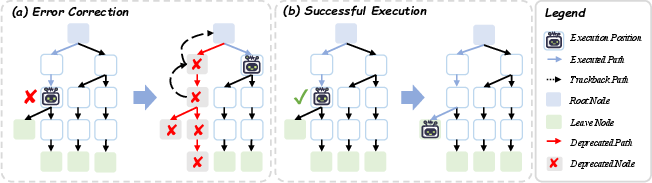

Grounded Deciding

The final phase, grounded deciding, navigates the action tree using real-time environmental feedback and the history of executed actions. At each node, the LLM chooses among the child nodes rather than generating a new plan. A built-in error correction mechanism backtracks within the tree to an earlier decision point when an action fails, so the planner can recover from execution errors without replanning from scratch.

Figure 2: An overview of the process of grounded deciding.
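A rough sketch of this traversal with backtracking is given below. It makes several simplifying assumptions that are not from the paper: the tree is a nested dict, `choose` stands in for the LLM's decision, `execute` stands in for the environment and returns only success or failure, and the environment state is not rolled back when the walk returns to an earlier node.

```python
from typing import Callable, Dict, List, Optional

Tree = Dict[str, dict]  # nested dict: action -> subtree of follow-up actions


def grounded_decide(
    tree: Tree,
    choose: Callable[[List[str], List[str]], str],
    execute: Callable[[str], bool],
    history: Optional[List[str]] = None,
) -> Optional[List[str]]:
    """Walk the tree; on failure, drop the branch and re-decide among siblings."""
    history = [] if history is None else history
    if not tree:  # leaf reached: the plan is complete
        return history
    remaining = dict(tree)  # shallow copy so the caller's tree is untouched
    while remaining:
        action = choose(history, list(remaining))
        subtree = remaining.pop(action)
        if not execute(action):
            continue  # action failed: branch is pruned, decide again here
        result = grounded_decide(subtree, choose, execute, history + [action])
        if result is not None:
            return result
        # Every deeper option failed: fall through and try a sibling.
    return None  # no valid child left; the caller backtracks further


demo_tree: Tree = {
    "walk to kitchen": {
        "grab cup": {"pour water": {}, "pour milk": {}},
        "open fridge": {"grab milk": {}},
    }
}


def pick_first(history: List[str], options: List[str]) -> str:
    return options[0]  # placeholder for the LLM's choice


def flaky_env(action: str) -> bool:
    return action != "pour water"  # pretend the tap is broken


path = grounded_decide(demo_tree, pick_first, flaky_env)
```

Here the failed "pour water" action is pruned and the planner re-decides at the same node, ending with the "pour milk" branch instead of restarting the whole plan.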

Experimental Evaluation

The method was evaluated in the VirtualHome environment on both correctness and efficiency. Tree-Planner achieved state-of-the-art performance, improving success rates while significantly reducing token consumption compared to dynamic and static replanning baselines.

- Token Efficiency: Tree-Planner reduced token consumption by up to 92.2%, addressing redundancy in prompt token usage.

- Error Correction Efficiency: The approach led to a 40.5% reduction in corrective adjustments during plan execution compared to existing methods.

Analysis and Discussion

Tree-Planner highlights the potential of an action-tree-based formalism for task planning. Because the tree is built once, each subsequent LLM query adds only a small number of tokens, in contrast to the linearly growing costs of existing approaches. Increasing the number of sampled plans (N) gives the decision phase more options, but it must be balanced against token cost: beyond certain limits the token savings diminish, as the boundary conditions derived in the paper show.
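The trade-off can be illustrated with a toy cost model. This is not the paper's derivation: the cost formulas, parameter names, and example numbers below are all assumptions chosen only to show the shape of the trade-off between paying a full prompt at every step and paying it once plus N sampled plans and short decision prompts.

```python
def replanning_cost(steps: int, full_prompt_tokens: int) -> int:
    """Toy model: every step pays for the full prompt again."""
    return steps * full_prompt_tokens


def tree_planner_cost(decisions: int, full_prompt_tokens: int, n_plans: int,
                      plan_tokens: int, decision_prompt_tokens: int) -> int:
    """Toy model: one full prompt, N sampled plans, then short decision prompts."""
    sampling = full_prompt_tokens + n_plans * plan_tokens
    deciding = decisions * decision_prompt_tokens
    return sampling + deciding


# Arbitrary illustrative numbers, not measurements from the paper.
baseline = replanning_cost(steps=10, full_prompt_tokens=3000)
tree_small_n = tree_planner_cost(10, 3000, n_plans=25, plan_tokens=60,
                                 decision_prompt_tokens=400)
tree_large_n = tree_planner_cost(10, 3000, n_plans=400, plan_tokens=60,
                                 decision_prompt_tokens=400)
```

Under these made-up inputs the small-N configuration is far cheaper than the baseline, while the large-N configuration costs more than it, which mirrors the point that N cannot be raised indefinitely without eroding the savings.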

Conclusion

Tree-Planner offers a promising direction for efficient and scalable task planning with LLMs, with potential benefits in applications where large-scale, dynamic tasks are prevalent. By splitting the planning problem into sampling, tree construction, and grounded deciding, it provides a framework that could inspire further work on LLM-based task planning systems.

Figure 3: An overview of the Tree-Planner pipeline.

The research opens avenues for future exploration, including adaptive resampling strategies and integrated error-handling mechanisms, which could further improve the robustness and applicability of LLMs in complex task environments.