- The paper presents a conceptual framework that integrates CBR with LLMs, providing persistent memory for improved contextual reasoning.

- It details a technical integration using vector databases and approximate nearest neighbor search to enhance memory storage scalability.

- The authors argue that the proposed approach could be a step toward AGI by enabling adaptive learning and long-term conversational memory in AI systems.

Integration of Case-Based Reasoning with LLMs

The paper "A Case-Based Persistent Memory for a LLM" (2310.08842) presents a conceptual framework advocating for the integration of Case-Based Reasoning (CBR) with LLMs. This framework proposes leveraging CBR as a methodology to enhance the memory and contextual capabilities of LLMs, aiming to bridge the gap toward AGI.

Theoretical Foundations and Synergies

CBR is a reasoning paradigm in which new problems are solved by referencing precedents, that is, past cases stored together with their solutions. Historically, CBR systems were constrained by limited computational power and small case bases. Recent LLMs, such as those built on GPT architectures, offer potential synergies with CBR because they can handle vast amounts of data and show emergent language-understanding abilities.
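To make the idea of solving by precedent concrete, here is a minimal sketch of retrieving the most similar past case and reusing its solution. It is not taken from the paper: the `Case` and `CaseBase` names, the Jaccard similarity over feature tokens, and the example cases are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Case:
    problem: set[str]  # problem description as a set of feature tokens
    solution: str      # the solution recorded for that problem


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two feature sets, in the range [0, 1]."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


class CaseBase:
    """Hypothetical case base: retains solved cases and retrieves the closest one."""

    def __init__(self) -> None:
        self.cases: list[Case] = []

    def retain(self, problem: set[str], solution: str) -> None:
        # Store a solved problem so it can serve as a precedent later.
        self.cases.append(Case(problem, solution))

    def retrieve(self, problem: set[str]) -> tuple[Case, float]:
        # Return the most similar stored case and its similarity score.
        if not self.cases:
            raise LookupError("case base is empty")
        best = max(self.cases, key=lambda c: jaccard(c.problem, problem))
        return best, jaccard(best.problem, problem)


case_base = CaseBase()
case_base.retain({"printer", "offline", "wifi"}, "Reconnect the printer to the network")
case_base.retain({"laptop", "battery", "drains"}, "Recalibrate the battery")

# A new problem is answered by reusing the solution of its nearest precedent.
match, score = case_base.retrieve({"printer", "wifi", "dropped"})
print(match.solution, score)
```

A full CBR system would also adapt the retrieved solution to the new problem before reusing it; this sketch stops at retrieval.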

The paper suggests that CBR can provide persistent, context-rich memory for LLMs. The two fit together conceptually because both are designed to cope with vague and variable inputs and outputs. The transformer-based architectures underlying LLMs such as GPT-3.5 are trained on enormous datasets, a leap in scale from which CBR techniques could benefit, while CBR in turn could supply the memory that LLMs lack.

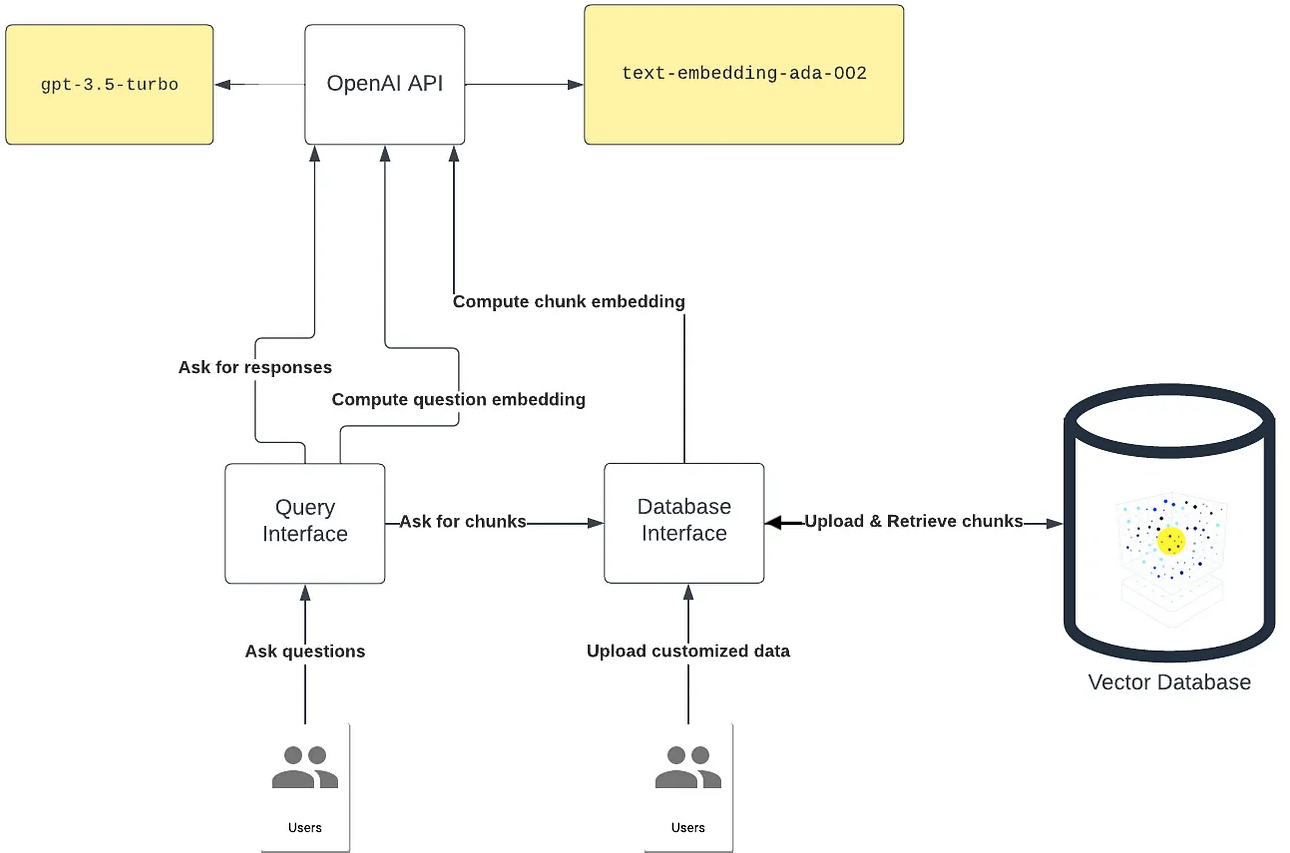

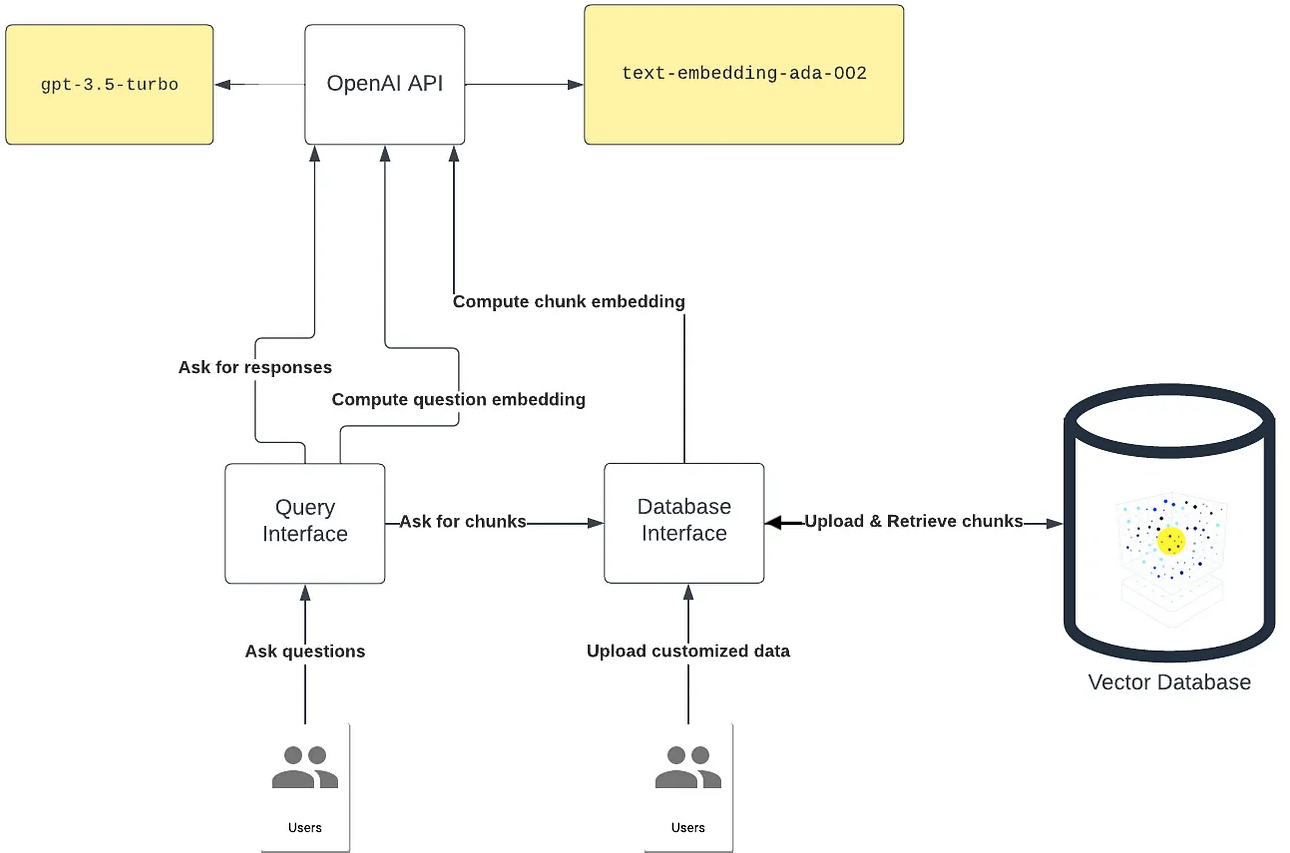

Figure 1: Architecture of GPT-3.5 With External Memory Using a Vector Database, after Jia [20].

Technical Integration and Implementation

Adding persistent memory to an LLM means building an architecture in which the model is linked to an external memory held in a vector database. This memory is queried with approximate nearest neighbor search, a technique already familiar to the CBR community, which suggests a practical alignment between the two approaches. In particular, FAISS, an efficient open-source similarity-search library, is presented as a viable way to scale this approach.
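The retrieval step described above can be sketched in pure Python. This is an illustrative assumption, not the paper's implementation and not FAISS's API: it uses random-hyperplane locality-sensitive hashing as one simple form of approximate nearest neighbor search, and a hashed bag-of-words `embed` function as a toy stand-in for a learned embedding model. The `VectorMemory` class and all parameter values are hypothetical.

```python
import math
import random
import zlib

DIM = 64       # embedding size (illustrative)
N_PLANES = 4   # random hyperplanes, giving up to 2**4 buckets


def embed(text):
    """Toy stand-in for a learned embedding: hashed bag of words, L2-normalised."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % DIM] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


class VectorMemory:
    """Hypothetical external memory using random-hyperplane LSH buckets."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(DIM)]
                       for _ in range(N_PLANES)]
        self.buckets = {}  # signature -> list of (text, vector)

    def _signature(self, vec):
        # Which side of each hyperplane the vector falls on.
        return tuple(dot(plane, vec) >= 0.0 for plane in self.planes)

    def add(self, text):
        vec = embed(text)
        self.buckets.setdefault(self._signature(vec), []).append((text, vec))

    def search(self, query, k=1):
        qvec = embed(query)
        # Approximate step: only compare against items in the query's bucket.
        candidates = self.buckets.get(self._signature(qvec), [])
        if not candidates:
            # Fall back to an exact scan when the bucket is empty.
            candidates = [item for b in self.buckets.values() for item in b]
        scored = [(text, dot(qvec, vec)) for text, vec in candidates]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:k]


memory = VectorMemory()
memory.add("user prefers metric units")
memory.add("user is planning a trip to Lisbon")
memory.add("user asked about vegetarian recipes")

# Retrieved memories would be prepended to the LLM prompt as context.
hits = memory.search("user is planning a trip to Lisbon", k=1)
print(hits)
```

Searching a single bucket trades recall for speed, which is the essence of approximate search; a production system would more likely rely on a dedicated library such as FAISS and on embeddings produced by a trained model.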

The authors reference contemporary work in which neural network explanations are augmented with CBR, indicating a path toward transparent and interpretable AI systems [14]. The proposal includes modeling similarity metrics with deep learning, which would allow CBR systems to handle multi-modal cases at scales similar to those of LLMs, potentially reaching petabytes of storage.

Implications and Future Directions

Integrating CBR as a persistent memory for LLMs has significant implications for the development of AGI. Such integration promises enhanced memory capabilities, allowing AI systems to recall past interactions in context, akin to human memory. It responds to critiques of LLMs' lack of persistent memory and addresses the challenge, identified by OpenAI’s ChatGPT, of lacking long-term conversational memory.

Furthermore, as AI systems advance, the ability to reason and adapt using historical data opens the way to improved personalization, adaptive learning, and application in AGI domains. The proposed architecture hints at a future in which the division between narrow AI applications and general reasoning systems progressively blurs.

Conclusion

The proposal to employ CBR as persistent memory for LLMs is a notable conceptual step toward more robust and versatile AI systems. By combining CBR's methodological approach with the extensive data-handling capabilities of LLMs, the research outlines a potential path for AI's evolution toward general intelligence. Continued exploration and experimentation are needed to realize this vision and to understand its implications for AI's long-term development and applications.