A Setwise Approach for Effective and Highly Efficient Zero-shot Ranking with Large Language Models

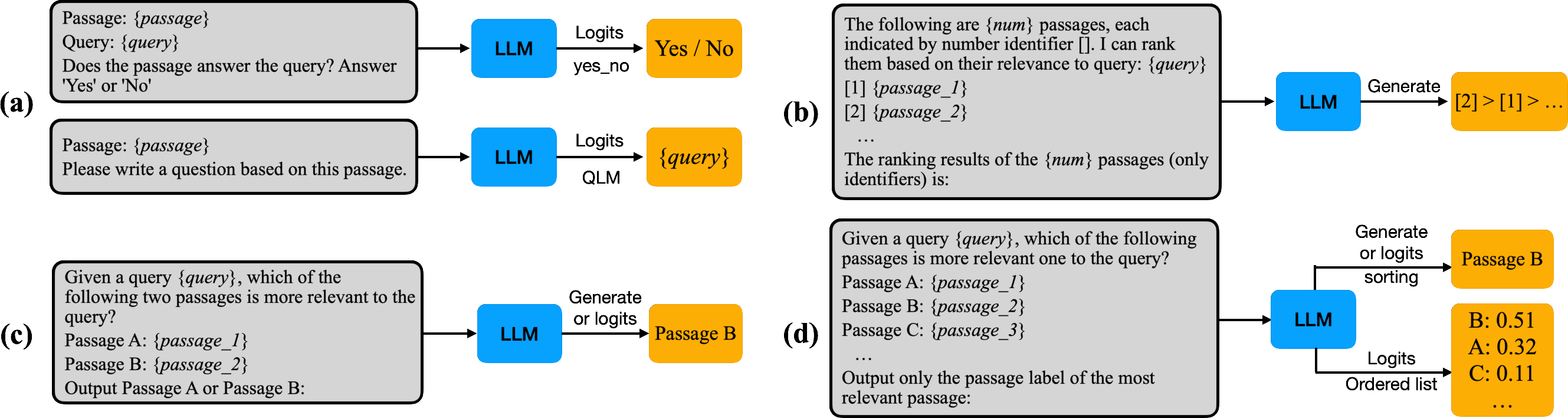

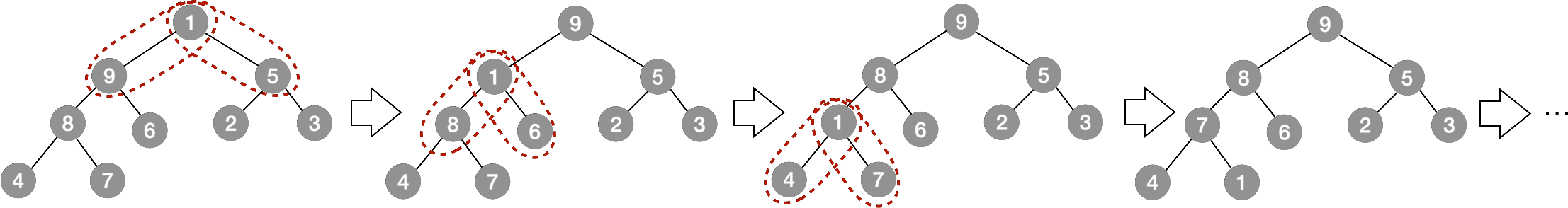

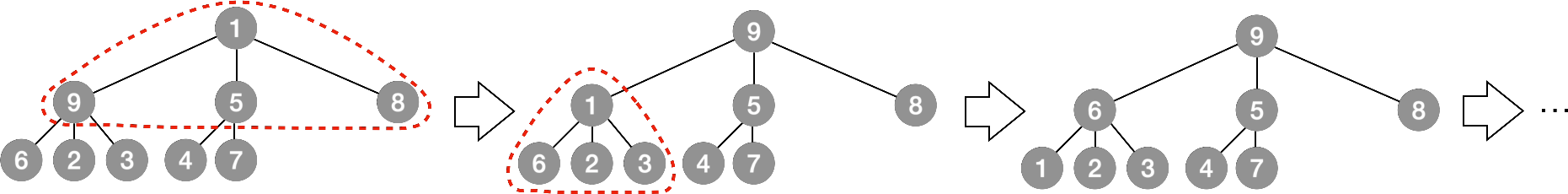

Abstract: We propose a novel zero-shot document ranking approach based on large language models (LLMs): the Setwise prompting approach, which asks the LLM to select the most relevant document from a set of candidate documents in a single inference. Our approach complements existing prompting approaches for LLM-based zero-shot ranking: Pointwise, Pairwise, and Listwise. Through the first comparative evaluation of its kind within a consistent experimental framework, considering factors such as model size, token consumption, and latency, among others, we show that existing approaches are inherently characterised by trade-offs between effectiveness and efficiency. We find that while Pointwise approaches score high on efficiency, they suffer from poor effectiveness. Conversely, Pairwise approaches demonstrate superior effectiveness but incur high computational overhead. Our Setwise approach, instead, reduces both the number of LLM inferences and the number of prompt tokens consumed during the ranking procedure, compared to previous methods. This significantly improves the efficiency of LLM-based zero-shot ranking while retaining high zero-shot ranking effectiveness. We make our code and results publicly available at https://github.com/ielab/LLM-rankers.
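Why does comparing a set of documents per call cut the number of LLM inferences? The sketch below illustrates this with a simple tournament-style top-k selection. It is a minimal, hypothetical illustration and not the paper's implementation (that lives in the linked repository). The names `select_best`, `topk_setwise`, `Picker`, and `mock_pick`, and the mock relevance scores, are all assumptions. A "picker" stands in for one LLM call: it sees a set of passages and returns the position of the one it judges most relevant. Each call therefore eliminates up to `set_size - 1` candidates. With `set_size=2`, the procedure reduces to ordinary pairwise comparison.

```python
from typing import Callable, List, Sequence, Tuple

# Hypothetical stand-in for one LLM inference: given a set of passages,
# return the position of the passage judged most relevant to the query.
Picker = Callable[[Sequence[str]], int]


def select_best(docs: Sequence[str], pick: Picker, set_size: int) -> Tuple[int, int]:
    """Return (index of the winning doc, number of picker calls).

    The current winner is shown together with up to set_size - 1 unseen
    challengers, so every call eliminates up to set_size - 1 candidates.
    set_size == 2 is plain pairwise comparison.
    """
    if set_size < 2:
        raise ValueError("set_size must be at least 2")
    if not docs:
        raise ValueError("docs must be non-empty")
    best, calls, i = 0, 0, 1
    while i < len(docs):
        # Candidate set: current winner plus the next batch of challengers.
        candidates = [best, *range(i, min(i + set_size - 1, len(docs)))]
        choice = pick([docs[j] for j in candidates])
        best = candidates[choice]
        calls += 1
        i += set_size - 1
    return best, calls


def topk_setwise(
    docs: Sequence[str], pick: Picker, set_size: int, k: int
) -> Tuple[List[str], int]:
    """Select the top-k docs one at a time; return (ranking, total picker calls)."""
    remaining = list(docs)
    ranking: List[str] = []
    total_calls = 0
    for _ in range(min(k, len(remaining))):
        idx, calls = select_best(remaining, pick, set_size)
        ranking.append(remaining.pop(idx))  # remove the winner, then repeat
        total_calls += calls
    return ranking, total_calls


if __name__ == "__main__":
    # Hypothetical mock "LLM": relevance grows with the numeric suffix of the id.
    docs = [f"d{i}" for i in range(100)]
    score = {d: i for i, d in enumerate(docs)}

    def mock_pick(group: Sequence[str]) -> int:
        return max(range(len(group)), key=lambda j: score[group[j]])

    for c in (2, 4):
        ranking, calls = topk_setwise(docs, mock_pick, set_size=c, k=10)
        print(f"set_size={c}: top-3={ranking[:3]} calls={calls}")
```

With a perfect mock picker, both set sizes return the same top-10 ranking. Finding the top 10 of 100 documents takes 945 calls with `set_size=2` but only 318 with `set_size=4`. Each setwise call carries a longer prompt, however, so the call count alone does not give the full efficiency picture.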
