SiDA-MoE: Sparsity-Inspired Data-Aware Serving for Efficient and Scalable Large Mixture-of-Experts Models

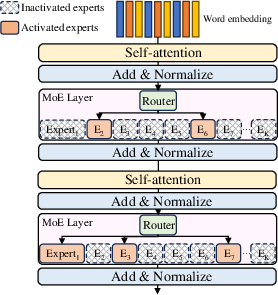

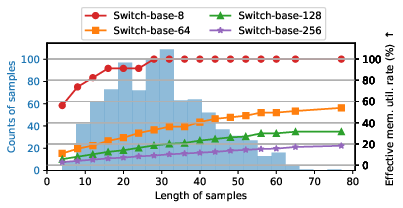

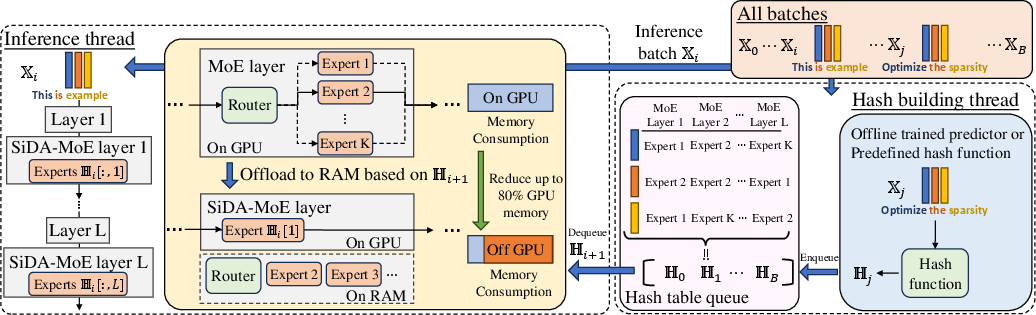

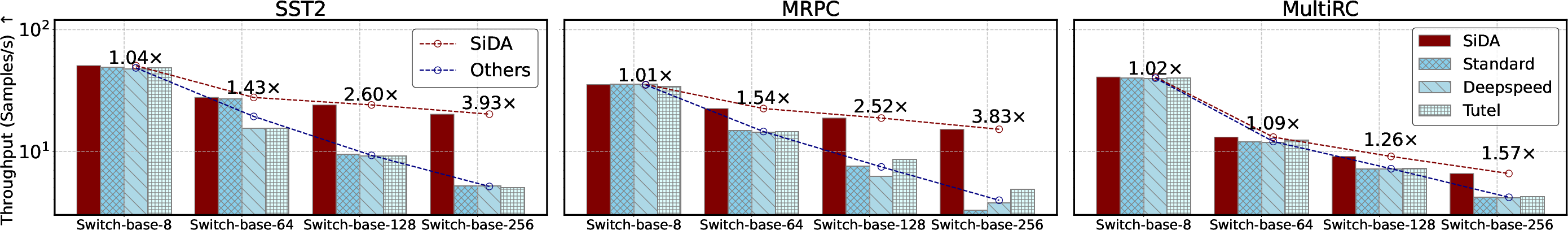

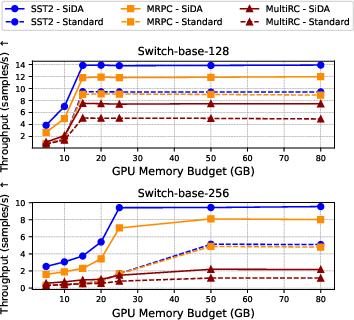

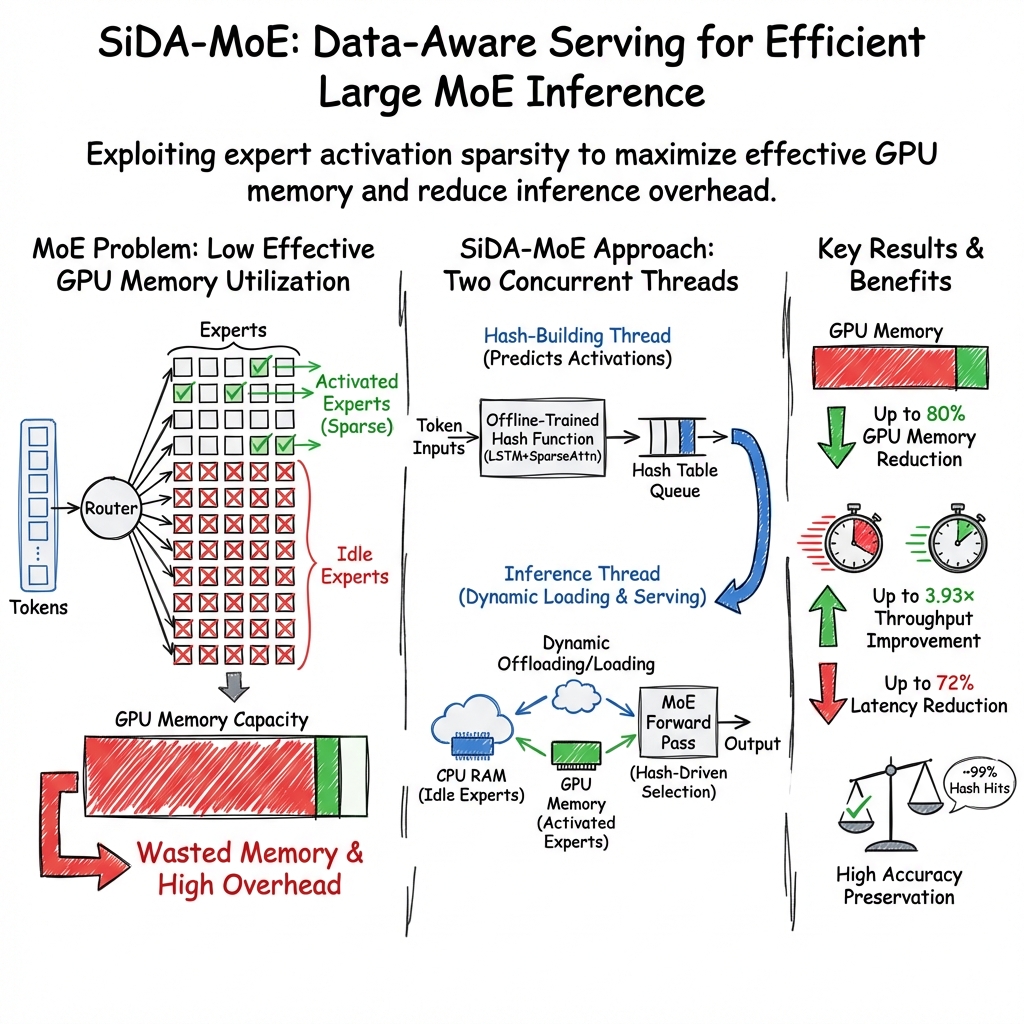

Abstract: Mixture-of-Experts (MoE) has emerged as a favorable architecture in the era of large models because it enlarges model capacity without incurring notable computational overhead. Yet realizing this benefit often leads to ineffective GPU memory utilization, as large portions of the model parameters remain dormant during inference. Moreover, the memory demands of large models consistently outpace the memory capacity of contemporary GPUs. To address this, we introduce SiDA-MoE ($\textbf{S}$parsity-$\textbf{i}$nspired $\textbf{D}$ata-$\textbf{A}$ware), an efficient inference approach tailored for large MoE models. SiDA-MoE judiciously exploits both the system's main memory, which is now abundant and readily scalable, and GPU memory by capitalizing on the inherent sparsity of expert activation in MoE models. By adopting a data-aware perspective, SiDA-MoE achieves enhanced model efficiency with a negligible performance drop. Specifically, SiDA-MoE speeds up MoE inference with up to a $3.93\times$ throughput increase, up to $72\%$ latency reduction, and up to $80\%$ GPU memory saving, with a performance drop as low as $1\%$. This work paves the way for scalable and efficient deployment of large MoE models, even with constrained resources. Code is available at: https://github.com/timlee0212/SiDA-MoE.
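To make the memory idea in the abstract concrete, here is a minimal toy sketch of keeping all experts in main memory and moving only the ones expected to be active into a small GPU-side pool. It is not the authors' implementation: the `ExpertCache` class, the `run_batch` helper, the LRU eviction policy, and the assumption that a list of predicted expert ids is already available are all hypothetical choices made for illustration, and plain Python dictionaries stand in for host and GPU memory.

```python
from collections import OrderedDict


class ExpertCache:
    """Toy stand-in for a small GPU-resident pool of experts.

    Assumptions (not from the paper): experts are opaque objects keyed by id,
    `host` models main memory, and eviction is least-recently-used.
    """

    def __init__(self, host_experts, gpu_slots):
        self.host = host_experts      # expert_id -> weights, always available
        self.gpu = OrderedDict()      # expert_id -> weights currently "on GPU"
        self.gpu_slots = gpu_slots    # how many experts fit on the "GPU"
        self.loads = 0                # count of host-to-GPU transfers

    def fetch(self, expert_id):
        """Return an expert, transferring it from host memory if needed."""
        if expert_id in self.gpu:
            self.gpu.move_to_end(expert_id)   # mark as most recently used
            return self.gpu[expert_id]
        if len(self.gpu) >= self.gpu_slots:
            self.gpu.popitem(last=False)      # evict least recently used
        self.gpu[expert_id] = self.host[expert_id]
        self.loads += 1
        return self.gpu[expert_id]


def run_batch(cache, predicted_experts):
    """Load only the experts predicted to be active for this batch.

    Returns the sorted ids of the experts resident on the "GPU" afterwards.
    """
    for expert_id in sorted(set(predicted_experts)):
        cache.fetch(expert_id)
    return sorted(cache.gpu)


# Eight experts live in host memory; only three fit on the "GPU".
host = {i: f"weights-{i}" for i in range(8)}
cache = ExpertCache(host, gpu_slots=3)

print(run_batch(cache, [1, 5, 1, 5, 2]))  # [1, 2, 5]
print(run_batch(cache, [5, 7]))           # [2, 5, 7]
print(cache.loads)                        # 4
```

In this toy run only four of the eight experts are ever transferred, which illustrates why sparse expert activation lets the GPU-resident footprint stay well below the full model size. How the active experts are actually predicted, and how transfers are scheduled, is left to the paper and its released code.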
