- The paper introduces 27 open problems in XAI, emphasizing novel explanation strategies for complex AI systems.

- It advocates a portfolio approach that combines several XAI techniques to offset the weaknesses of individual methods, such as sensitivity to hyper-parameters and high computational cost.

- It calls for standardized evaluation frameworks and human-centered explanations to boost trustworthiness and mitigate negative societal impacts.

Explainable Artificial Intelligence (XAI) 2.0: A Manifesto of Open Challenges and Interdisciplinary Research Directions

Introduction

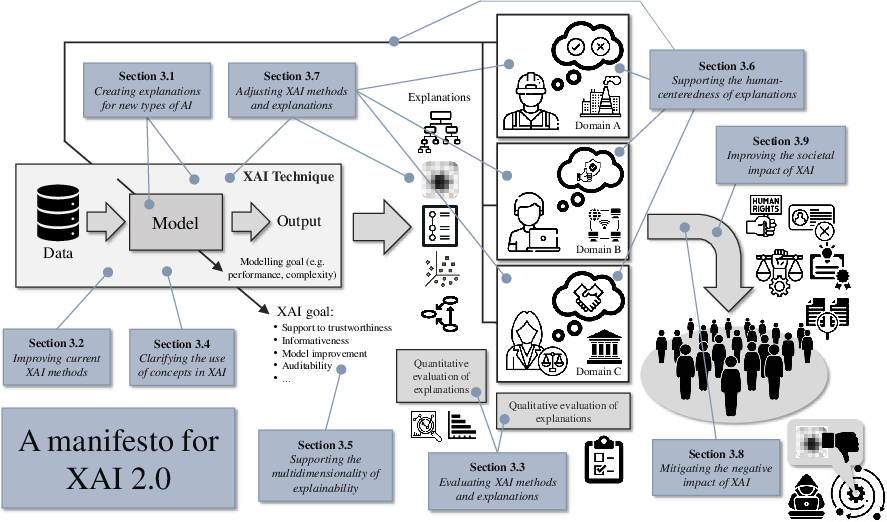

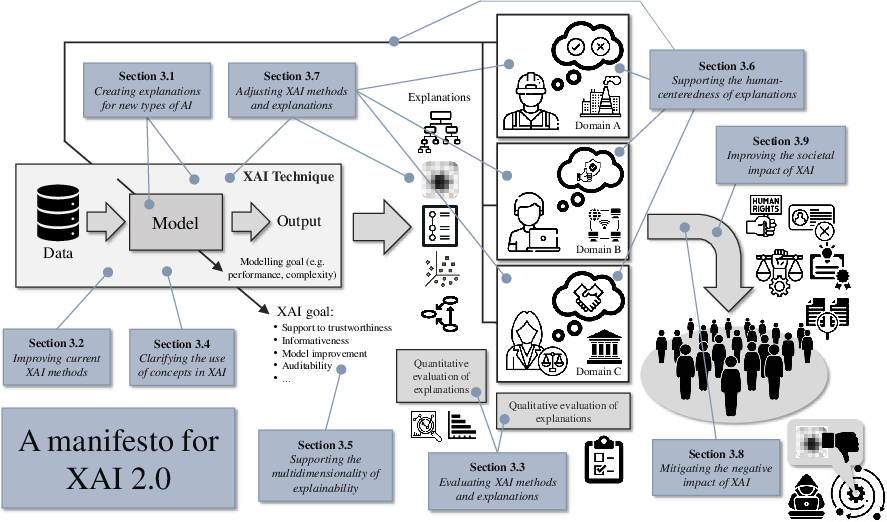

The paper "Explainable Artificial Intelligence (XAI) 2.0: A Manifesto of Open Challenges and Interdisciplinary Research Directions" sets out an agenda for advancing eXplainable Artificial Intelligence (XAI) by identifying key open challenges and proposing directions for resolving them. As AI systems grow more complex, the need for transparency and explainability has grown with them, prompting researchers from diverse fields to collaborate on solutions. The manifesto presents 27 open problems grouped into nine major themes, each covering a distinct set of challenges in XAI research and application. Ultimately, the paper seeks to foster collaboration across disciplines in order to move XAI forward for the benefit of society.

Figure 1: A manifesto for eXplainable Artificial Intelligence (XAI): High-level challenges

Advances and Applications of XAI Research

The paper reviews research and practical advances in XAI and stresses its growing importance across domains. It discusses progress in understanding AI models, particularly on interpretability and transparency, including improved methods for attribution and synthesis and actionable explanations grounded in causal models. XAI has become foundational in fields such as healthcare, finance, environmental science, and education. For instance, methods like LIME and SHAP are used in healthcare to support diagnostics by providing interpretable insights into a model's decisions.
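To make the attribution idea concrete, the following minimal sketch computes exact Shapley values, the quantity that SHAP approximates, for a tiny hand-written model. It does not use the SHAP or LIME libraries. `toy_model`, the input `x`, and the all-zero `baseline` are hypothetical choices made for illustration and are not taken from the paper.

```python
from itertools import combinations
from math import factorial


def toy_model(x):
    """Hypothetical scoring function with an interaction between features 0 and 1."""
    return 2.0 * x[0] + 1.0 * x[1] + 3.0 * x[0] * x[1] - 0.5 * x[2]


def shapley_values(model, x, baseline):
    """Exact Shapley values of model(x) relative to model(baseline).

    Features outside a coalition are replaced by their baseline value.
    The cost is exponential in the number of features, so this only
    suits very small inputs.
    """
    n = len(x)

    def value(coalition):
        masked = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(masked)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                phi[i] += weight * (value(coalition | {i}) - value(coalition))
    return phi


x = [1.0, 2.0, 4.0]          # assumed input to explain
baseline = [0.0, 0.0, 0.0]   # assumed reference input
phi = shapley_values(toy_model, x, baseline)
print(phi)
```

Because of the interaction term, features 0 and 1 share their joint contribution equally, and the attributions sum to `toy_model(x) - toy_model(baseline)`. Exact enumeration is exponential in the number of features, which is one reason practical tools rely on approximations.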

Challenges and Research Directions

Creating Explanations for New Types of AI

The growing complexity of AI systems calls for new explanation strategies. Generative models and concept-based learning algorithms in particular require new techniques for producing insightful explanations. The paper outlines challenges such as the polysemantic nature of neurons in large language models (LLMs), where a single neuron can respond to several unrelated concepts, and open questions in concept learning, and it urges a rethinking of explanation methods for these types of AI.

Improving Current XAI Methods

The paper highlights the limitations of existing XAI methods, particularly attribution techniques. Ongoing work aims to improve these methods by addressing their sensitivity to hyper-parameters, their computational cost, and their limited applicability outside specific contexts. The paper suggests a portfolio approach that combines several XAI methods so that the strengths of one can compensate for the weaknesses of another.
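One way to picture a portfolio of attribution methods is sketched below. The paper does not prescribe an aggregation rule, so the choices here are assumptions: two simple methods (occlusion and a finite-difference gradient times input), normalization by total absolute attribution, and the per-feature mean and spread across methods. `toy_model`, `x`, `baseline`, and the step size `eps` are likewise hypothetical.

```python
def toy_model(x):
    """Hypothetical model with a nonlinear term so that the methods disagree."""
    return x[0] ** 2 + 2.0 * x[1] - 0.5 * x[2]


def occlusion(model, x, baseline):
    """Attribution = drop in output when one feature is reset to its baseline."""
    full = model(x)
    scores = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = baseline[i]
        scores.append(full - model(occluded))
    return scores


def gradient_times_input(model, x, baseline, eps=1e-4):
    """Central finite-difference gradient times (x - baseline); eps is a hyper-parameter."""
    scores = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += eps
        down[i] -= eps
        grad = (model(up) - model(down)) / (2 * eps)
        scores.append(grad * (x[i] - baseline[i]))
    return scores


def normalize(scores):
    """Scale scores so their absolute values sum to 1, keeping signs."""
    total = sum(abs(s) for s in scores)
    return [s / total for s in scores] if total else list(scores)


def portfolio(model, x, baseline, methods):
    """Run every method, then report the per-feature mean and spread across methods."""
    per_method = {name: normalize(fn(model, x, baseline)) for name, fn in methods.items()}
    columns = list(zip(*per_method.values()))  # one tuple of scores per feature
    mean = [sum(col) / len(col) for col in columns]
    spread = [max(col) - min(col) for col in columns]
    return per_method, mean, spread


METHODS = {"occlusion": occlusion, "grad_x_input": gradient_times_input}
x = [3.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
per_method, mean_attr, spread = portfolio(toy_model, x, baseline, METHODS)
for name, scores in per_method.items():
    print(name, [round(s, 3) for s in scores])
print("mean  ", [round(s, 3) for s in mean_attr])
print("spread", [round(s, 3) for s in spread])
```

A large spread for a feature signals that the methods disagree about it, here because of the squared term, which is the kind of individual limitation a portfolio is meant to expose rather than hide.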

Evaluating XAI Methods and Explanations

The lack of standardized evaluation protocols makes it hard to assess how well XAI systems work. The paper advocates comprehensive methodologies that combine human evaluations, quantitative metrics, and standardized evaluation frameworks such as Quantus. This multidimensional approach aims to establish the validity and impact of explanations in real-world scenarios.
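As an illustration of what a quantitative metric can look like, the sketch below implements a simplified deletion-style faithfulness check: features are reset to a baseline in order of claimed importance, and a faithful explanation should make the model output fall quickly. This is not the Quantus API. `toy_model`, the inputs, and the `deletion_score` summary (the mean output along the deletion curve) are assumptions chosen for a model whose contributions are all non-negative.

```python
def toy_model(x):
    """Hypothetical model whose features all contribute non-negatively."""
    return 4.0 * x[0] + 1.0 * x[1] + 0.25 * x[2]


def deletion_curve(model, x, baseline, attributions):
    """Model outputs as features are reset to baseline, most important first."""
    order = sorted(range(len(x)), key=lambda i: abs(attributions[i]), reverse=True)
    current = list(x)
    outputs = [model(current)]
    for i in order:
        current[i] = baseline[i]
        outputs.append(model(current))
    return outputs


def deletion_score(model, x, baseline, attributions):
    """Mean output along the deletion curve; lower means the ranking is more faithful."""
    curve = deletion_curve(model, x, baseline, attributions)
    return sum(curve) / len(curve)


x = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]

faithful = [4.0, 1.0, 0.25]    # matches the true contributions
misleading = [0.25, 1.0, 4.0]  # ranks the features in the wrong order

faithful_score = deletion_score(toy_model, x, baseline, faithful)
misleading_score = deletion_score(toy_model, x, baseline, misleading)
print("faithful:  ", faithful_score)
print("misleading:", misleading_score)
```

The explanation that ranks features correctly receives the lower score. A single metric like this captures only one facet of quality, which is why it would be paired with other metrics and with human evaluation.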

Clarifying the Use of Concepts in XAI

Ambiguity in XAI terminology calls for clearer definitions. The paper urges sharper distinctions between terms such as explainability and interpretability, and a clearer account of how each relates to trustworthiness. Shared definitions of this kind are a prerequisite for consistent and effective interdisciplinary work.

Supporting the Multi-Dimensionality of Explainability

The paper emphasizes the need for multifaceted explanations that integrate several dimensions of trustworthiness. Such explanations should cover factors like safety, fairness, and robustness in order to provide comprehensive transparency. Interdisciplinary collaboration is also crucial for dealing with the complex nature of XAI.

Supporting the Human-Centeredness of Explanations

Human-understandable explanations require adaptations to stakeholder needs, domain specificity, and reality drift. Tailored explanations must accommodate different user requirements, balancing technical detail with user preferences. Concept-based explanations offer a promising avenue by aligning explanations with familiar human concepts.

Adjusting XAI Methods and Explanations

Adjusting explanations to the varying needs of stakeholders, domains, and goals is pivotal. This includes crafting explanations that factor in the background, expectations, and objectives of different users, ensuring that each explanation aligns with its intended purpose.

Mitigating the Negative Impact of XAI

The paper addresses the potential negative impacts of XAI, highlighting challenges such as making explanations falsifiable and keeping them secure. Proposed directions include methods for verifying explanations and for protecting them from abuse by malicious agents, whether human or superintelligent.

Improving the Societal Impact of XAI

Finally, the paper emphasizes societal implications, advocating for originality attribution in AI-generated data, supporting the right to be forgotten, and addressing power imbalances between individuals and companies. These facets underscore the importance of ethical considerations in deploying XAI systems.

Conclusion

The paper concludes with a manifesto for XAI, delineating key areas for future research and collaboration. By embracing an interdisciplinary approach and addressing the outlined challenges, XAI can further contribute to AI systems' transparency, trustworthiness, and societal benefit. The manifesto serves as a guiding framework to propel scientific inquiry and advance XAI in practical, ethical, and technical dimensions.