- The paper introduces a novel α-stable noise augmentation method that enhances neural network robustness against non-Gaussian impulsive noise.

- It demonstrates that networks trained with α-stable noise, particularly with Cauchy (α=1), outperform traditional Gaussian noise training across multiple datasets.

- Results across the MNIST, CIFAR10, ECG200, and LIBRAS benchmarks indicate broad applicability and improved performance under varying noise conditions.

Robustness Enhancement in Neural Networks with Alpha-Stable Training Noise

The paper "Robustness Enhancement in Neural Networks with Alpha-Stable Training Noise" proposes a data augmentation method for improving the robustness of neural networks, particularly in the presence of non-Gaussian impulsive noise. The method replaces the conventional Gaussian noise with α-stable noise during training, using the heavy tails of this noise model so that the trained network better tolerates abrupt, high-amplitude disturbances.

Background and Motivation

Importance of Robustness

Robustness refers to the ability of a neural network to maintain stable performance when subjected to corrupted or noisy data. Neural networks are increasingly deployed in real-world scenarios where data are often imperfect due to environmental noise or adversarial attacks, so enhancing robustness is critical. Traditional methods pursue robustness through Gaussian noise injection, since Gaussian noise is a convenient and mathematically tractable model justified by the Central Limit Theorem (CLT). However, real-world data are often subject to impulsive noise that deviates from Gaussian assumptions.

α-Stable Noise

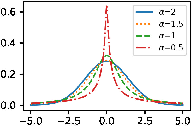

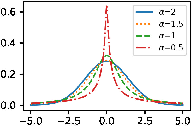

The α-stable distribution generalizes the Gaussian distribution and can model impulsive noise. Its characteristic exponent α, with 0<α≤2, controls tail heaviness: α=2 recovers the Gaussian case, and smaller α values give heavier tails and therefore greater impulsiveness. This matches the observation that impulsive noise, which is common in communications, radar, and medical data, exhibits frequent abrupt peaks.

Figure 1: Probability density function curves of symmetric α-stable distribution with α=2,1.5,1,0.5.
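For reference, a symmetric α-stable variable is usually defined through its characteristic function, because no closed-form density exists for general α. The form below uses a scale parameter σ. This is one common parameterization and an assumption here, since this summary does not state which convention the paper's dispersion parameter γ follows.

$$
\varphi(t) = \exp\left(-\sigma^{\alpha}\,|t|^{\alpha}\right), \qquad 0 < \alpha \le 2, \quad \sigma > 0
$$

Under this form, α=2 gives a Gaussian with variance 2σ², and α=1 gives a Cauchy distribution with scale σ. For any α<2 the variance is infinite, which is the formal sense in which the tails are heavy.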

Methodology

Dataset and Noise Augmentation

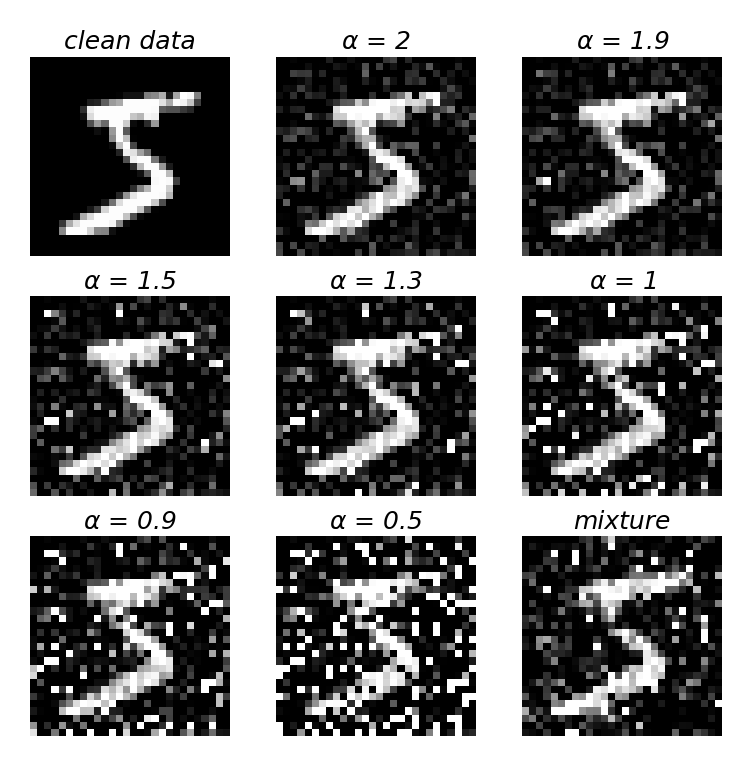

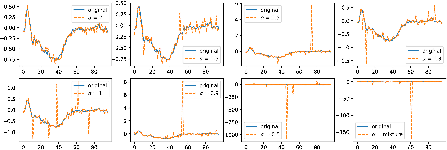

The experimental analysis covers four datasets, MNIST, CIFAR10, ECG200, and LIBRAS, spanning both image and time series data. The noise model generates samples from a symmetric α-stable distribution, with α varied to change the impulsiveness of the noise and the dispersion parameter γ setting its level. Each dataset is processed under several α values, with the Gaussian case included for comparison.

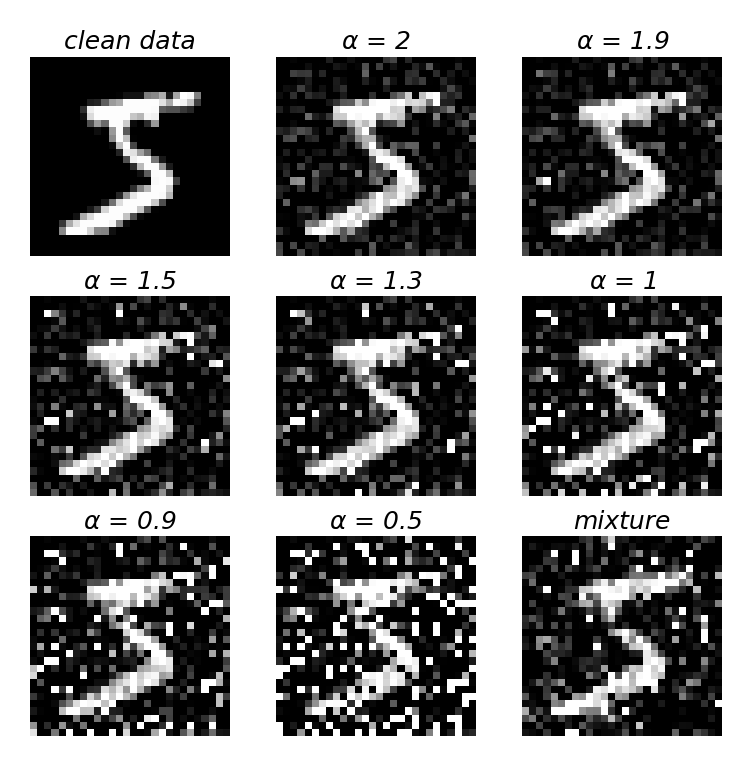

Figure 2: An example of MNIST dataset with noise of different α with γ=0.283.

Figure 3: An example of ECG200 dataset with noise of different α with γ=0.021, where the horizontal coordinates indicate the time and the vertical coordinates indicate the ECG voltage.

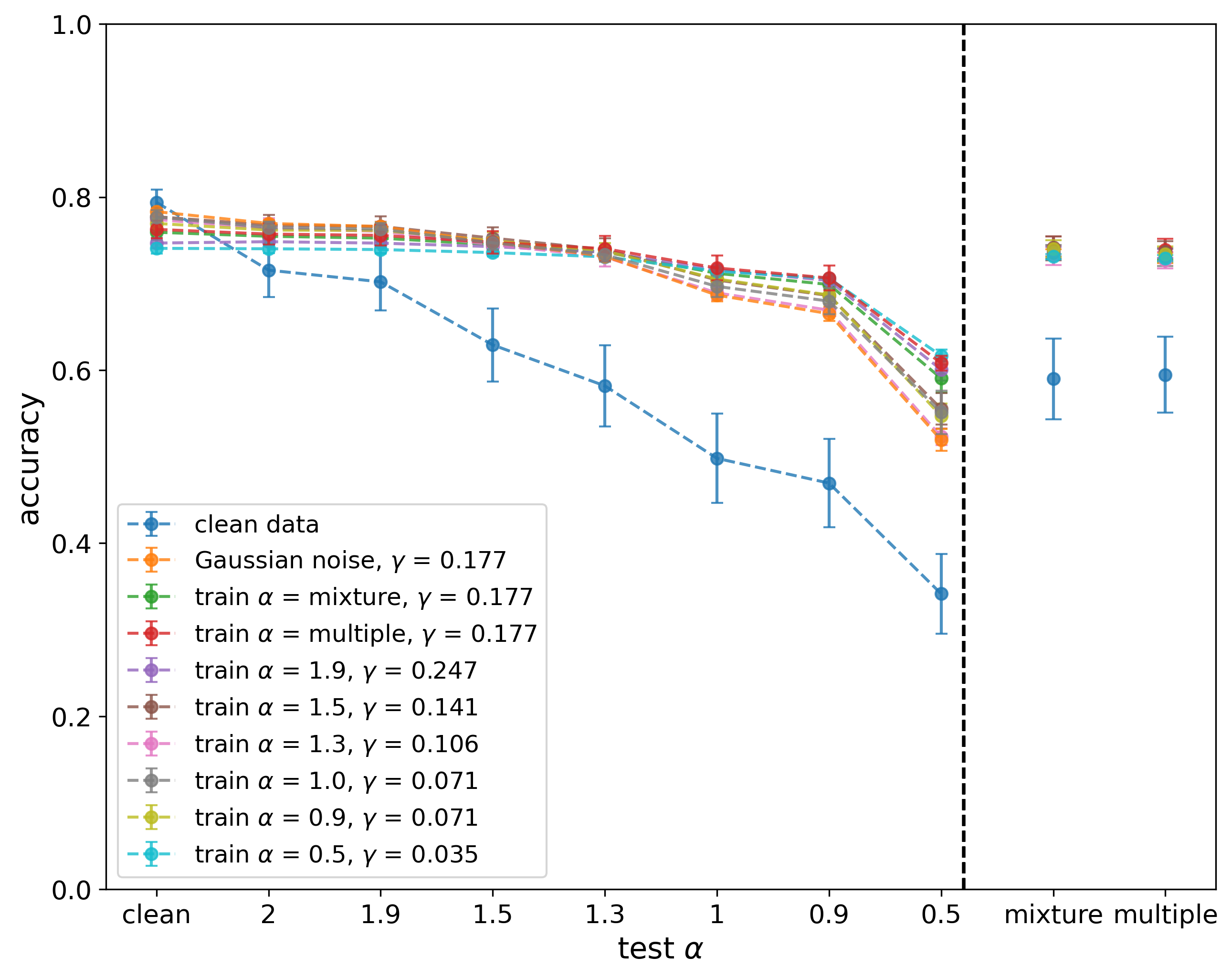
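The noise generation described in this subsection can be sketched with the Chambers-Mallows-Stuck transform, a standard way to draw symmetric α-stable samples. This is a minimal illustration rather than the paper's implementation: the function names `sample_sas` and `add_sas_noise` are hypothetical, and treating γ as a scale multiplier is an assumption about the paper's convention.

```python
import math
import random


def sample_sas(alpha, gamma, rng):
    """Draw one symmetric alpha-stable sample (zero skewness, zero location).

    Uses the Chambers-Mallows-Stuck transform. `gamma` is treated as a
    scale multiplier, which is an assumption about the paper's convention.
    """
    if not 0.0 < alpha <= 2.0:
        raise ValueError("alpha must lie in (0, 2]")
    u = rng.uniform(-math.pi / 2, math.pi / 2)  # uniform angle
    w = rng.expovariate(1.0)                    # unit-mean exponential
    if alpha == 1.0:
        return gamma * math.tan(u)              # Cauchy special case
    x = (math.sin(alpha * u) / math.cos(u) ** (1.0 / alpha)
         * (math.cos((1.0 - alpha) * u) / w) ** ((1.0 - alpha) / alpha))
    return gamma * x


def add_sas_noise(signal, alpha, gamma, rng):
    """Return a noisy copy of `signal`, a flat list of floats."""
    return [v + sample_sas(alpha, gamma, rng) for v in signal]


rng = random.Random(0)
clean = [0.0, 0.5, 1.0, 0.5, 0.0]  # toy stand-in for one time series
for alpha in (2.0, 1.5, 1.0, 0.5):
    noisy = add_sas_noise(clean, alpha, gamma=0.021, rng=rng)
    print(alpha, [round(v, 3) for v in noisy])
```

Smaller α values should occasionally produce very large deviations in the printed series, while α=2 stays close to the clean values. For full-size images, a vectorized array implementation would be the more practical choice; the scalar version here is kept only to make the transform easy to read.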

Training Scenarios

The study includes single-noise and combined-noise training scenarios. Models are trained using individual α-stable noises as well as combinations of multiple noise components, aiming to diversify the training exposure across noise types. Training architectures vary in depth and width, encompassing FCNs, ResNets, VGGs, and LSTMs, according to the dataset structure.
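The difference between the two scenarios can be illustrated with a small augmentation routine. The sketch below assumes that combined-noise training means drawing each sample's α at random from a fixed set; this summary does not specify how the paper combines noise components, so this is only one plausible reading. The names `sas_sample` and `augment_batch` are hypothetical, and γ is again treated as a scale multiplier.

```python
import math
import random


def sas_sample(alpha, gamma, rng):
    """Symmetric alpha-stable draw via the Chambers-Mallows-Stuck transform."""
    u = rng.uniform(-math.pi / 2, math.pi / 2)
    w = rng.expovariate(1.0)
    if alpha == 1.0:
        return gamma * math.tan(u)
    return gamma * (math.sin(alpha * u) / math.cos(u) ** (1.0 / alpha)
                    * (math.cos((1.0 - alpha) * u) / w) ** ((1.0 - alpha) / alpha))


def augment_batch(batch, alphas, gamma, rng):
    """Corrupt each sample with noise whose alpha is drawn from `alphas`.

    A one-element `alphas` gives single-noise training. Several elements give
    one possible form of combined-noise training (an assumption, not the
    paper's confirmed procedure). Returns the noisy batch and the alpha used
    for each sample.
    """
    noisy, used = [], []
    for sample in batch:
        alpha = rng.choice(alphas)
        noisy.append([v + sas_sample(alpha, gamma, rng) for v in sample])
        used.append(alpha)
    return noisy, used


rng = random.Random(0)
batch = [[0.0] * 4 for _ in range(6)]  # six toy samples of length four
_, single = augment_batch(batch, alphas=[1.0], gamma=0.1, rng=rng)
_, combined = augment_batch(batch, alphas=[2.0, 1.5, 1.0, 0.5], gamma=0.1, rng=rng)
print(single)    # every entry is 1.0
print(combined)  # each entry is one of the four alpha values
```

Drawing a fresh α per sample keeps the routine stateless, so the same function covers both scenarios by changing only the `alphas` argument.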

Experimental Results

Robustness Evaluation

Results indicate that models trained with α-stable noise, especially with α=1 (the Cauchy distribution), are more robust than those trained with Gaussian noise. This holds across testing scenarios with different noise corruptions, which suggests that the approach applies across data modalities.

Benchmarks on Corrupted Datasets

On the MNIST-C and CIFAR10-C benchmarks, models trained with α-stable noise outperform Gaussian-trained models on a range of corruption types, not only impulsive noise, which underscores the versatility and practicality of the approach.

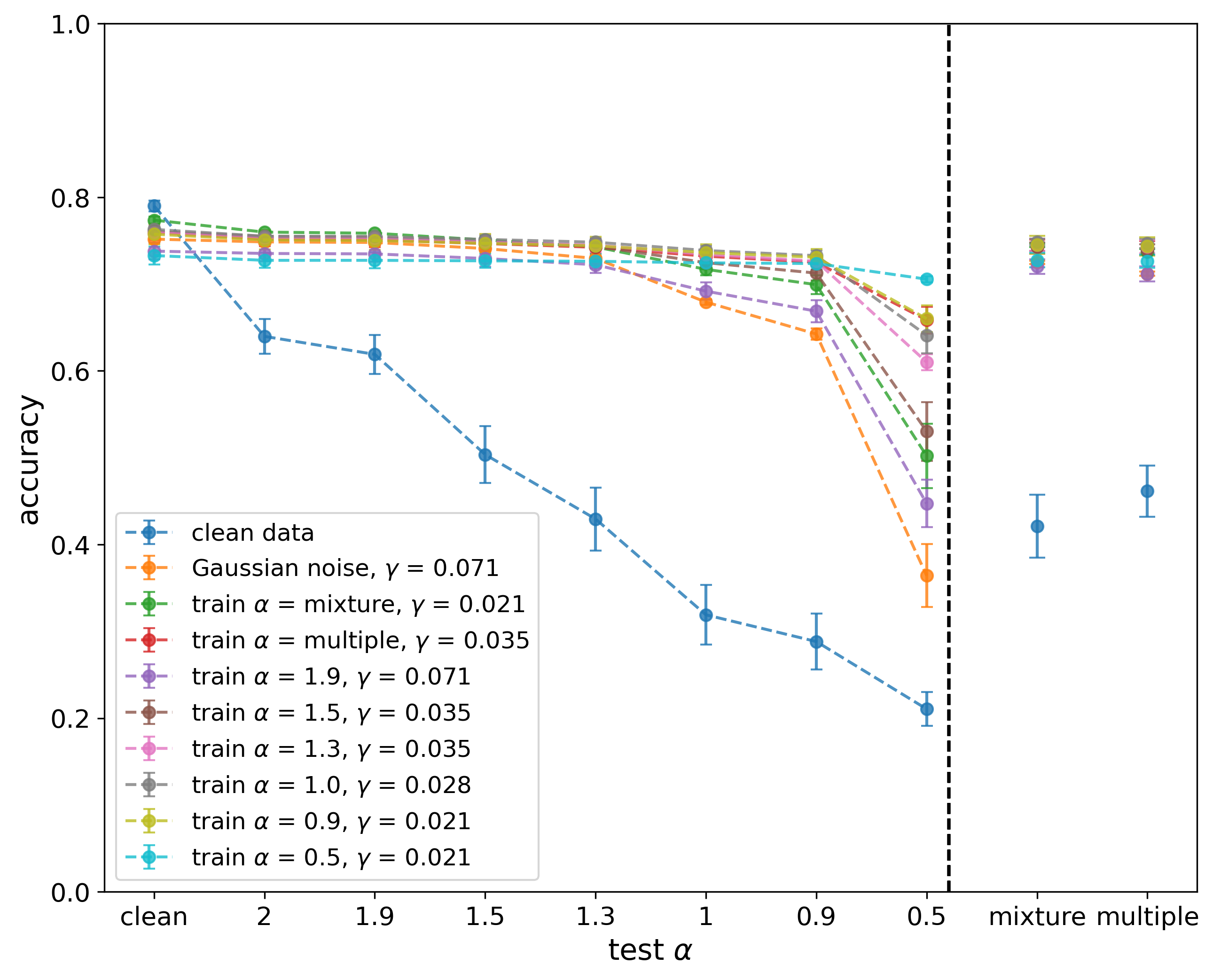

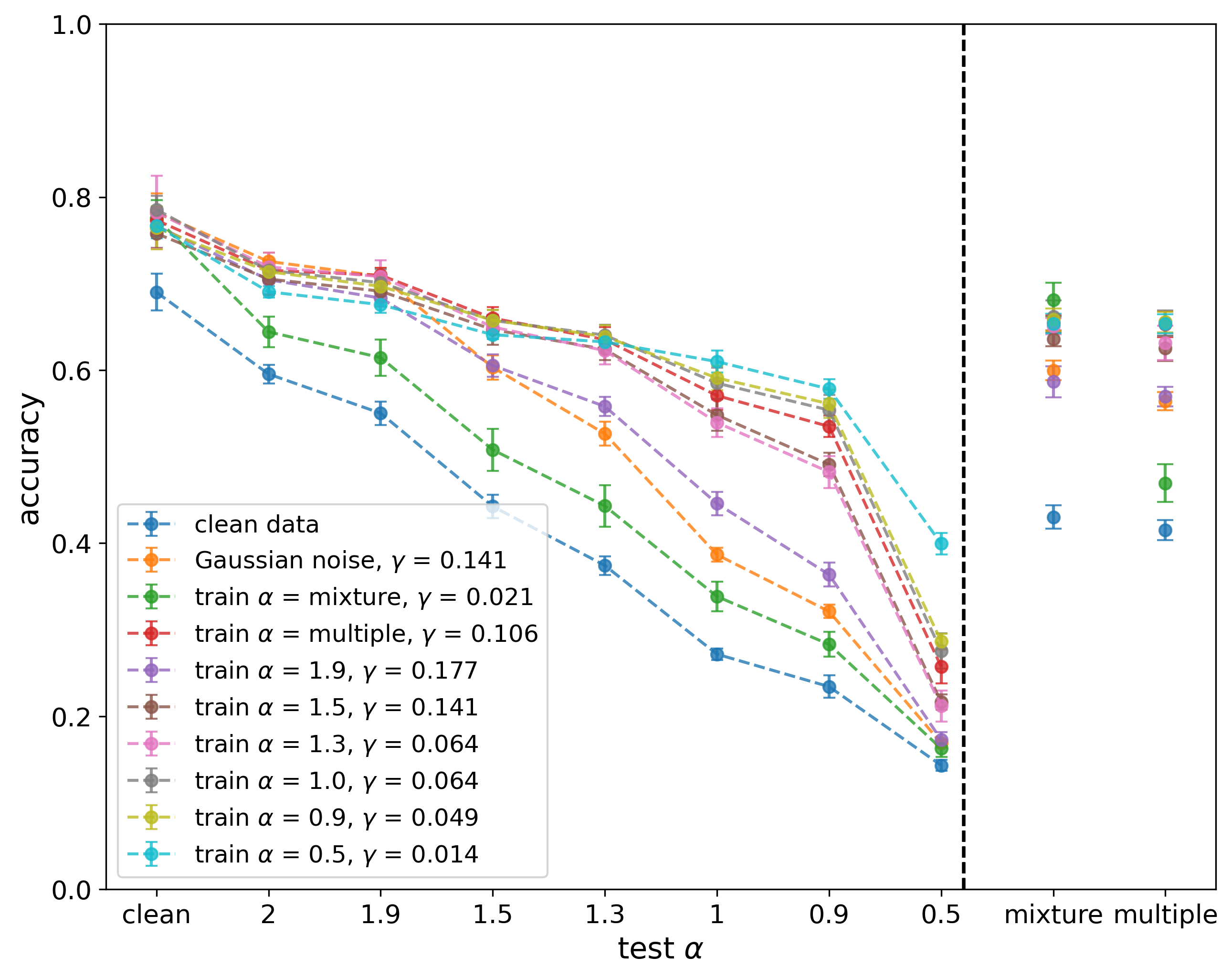

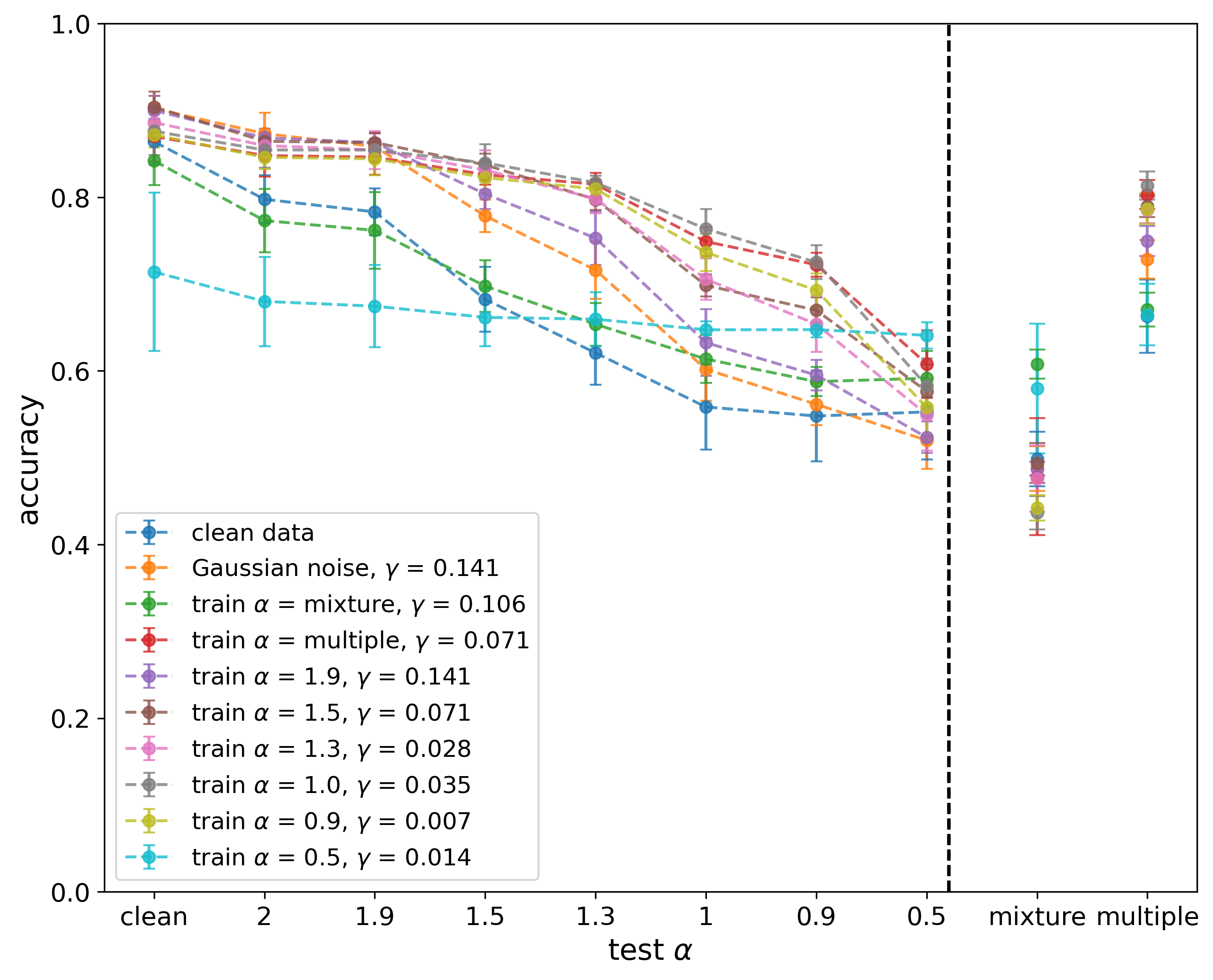

Figure 6: Results of VGG on ECG200 of single and combined training α.

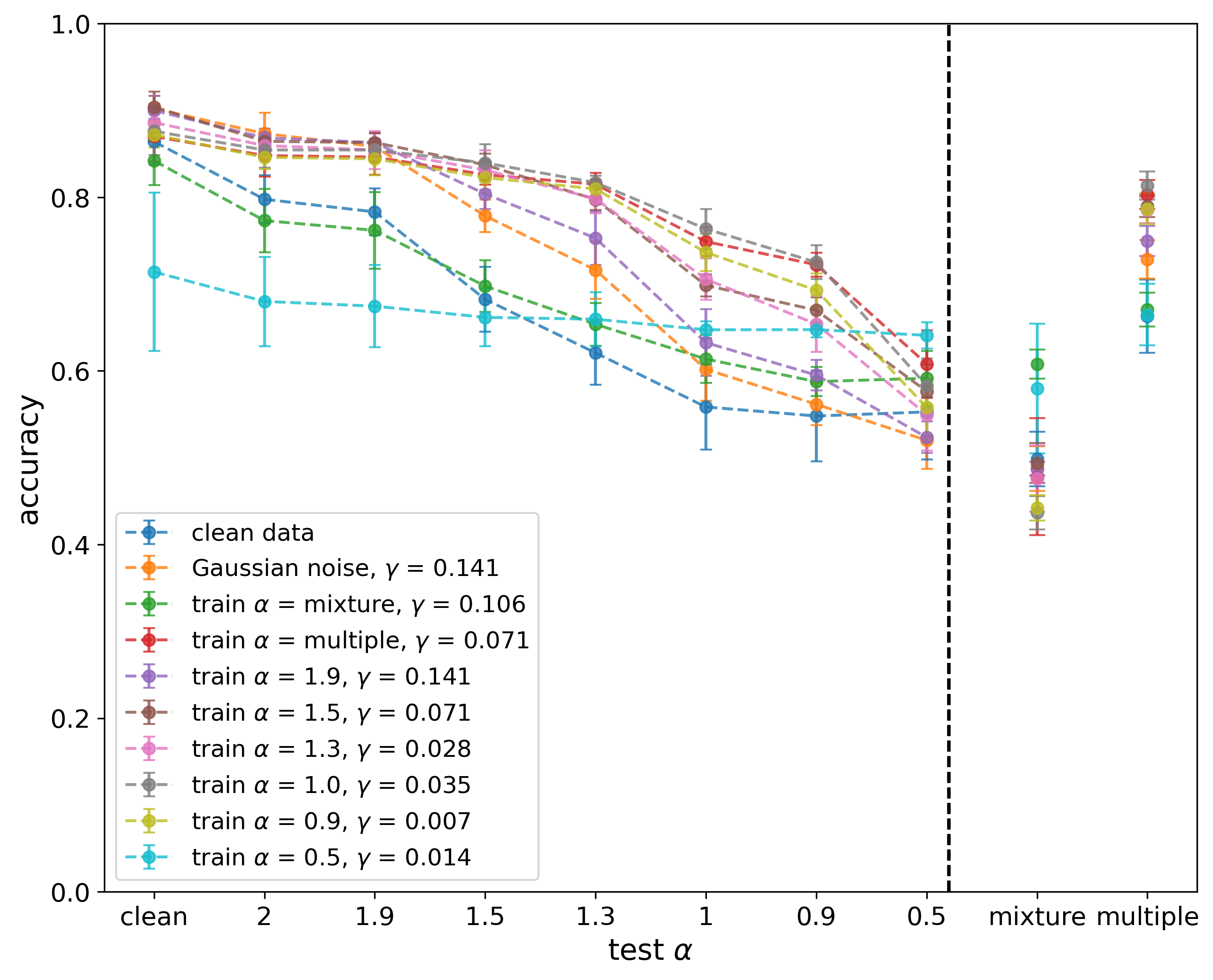

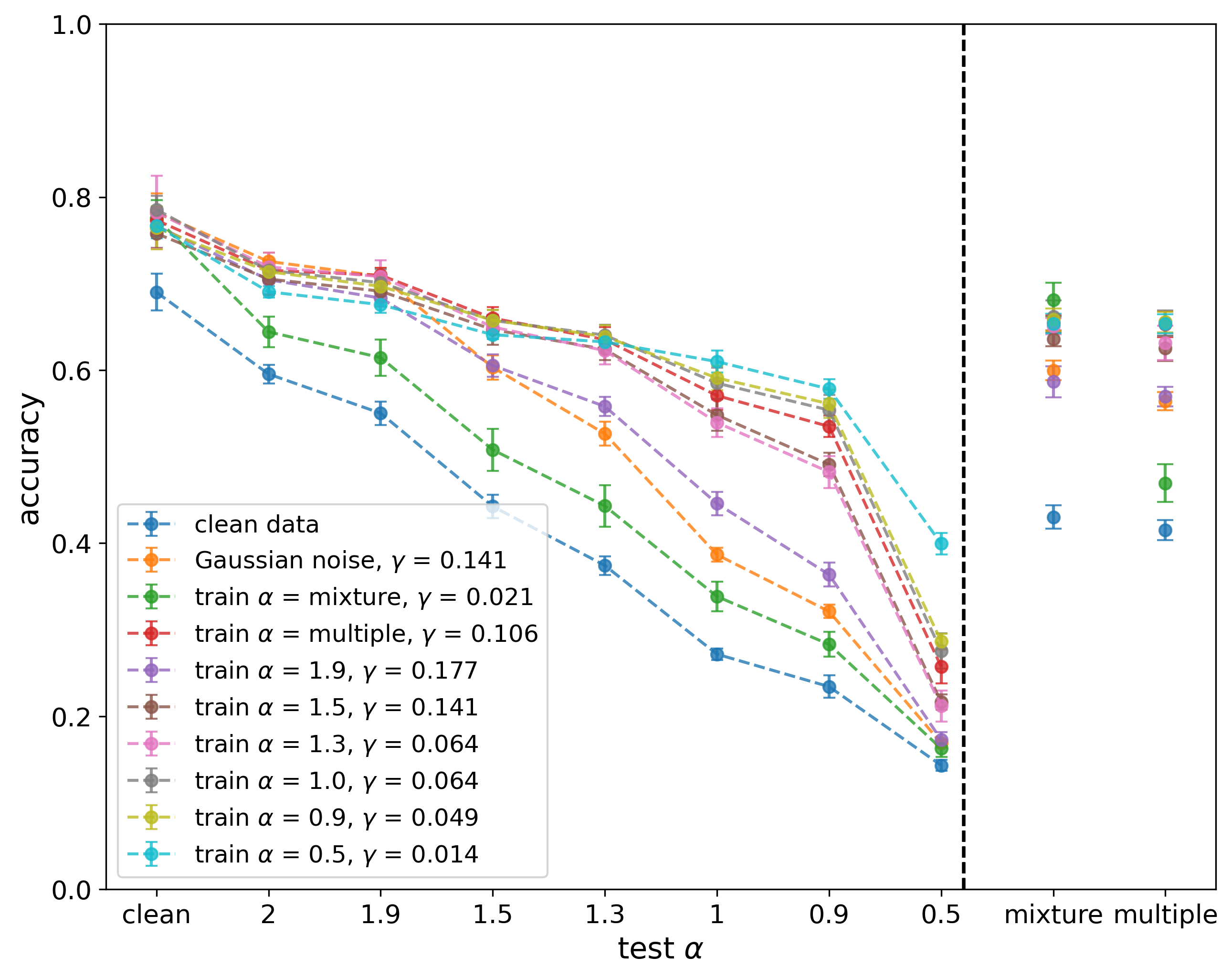

Figure 7: Results of LSTM on LIBRAS of single and combined training α.

Conclusions and Future Directions

Incorporating α-stable noise in training makes neural networks more robust, particularly to unexpected noise distortions. These findings suggest that α-stable noise can be a compelling alternative to Gaussian noise when the characteristics of the noise are not known in advance.

Future research could explore adaptive adjustment of the noise perturbation level, so that training responds dynamically to varying noise severities. Extending α-stable augmentation to regression tasks, object detection, or modalities beyond images and time series is another promising direction. Additionally, methods for optimizing the dispersion parameter γ in real-time scenarios would make the approach applicable in more practical contexts.