Scalable AI Safety via Doubly-Efficient Debate

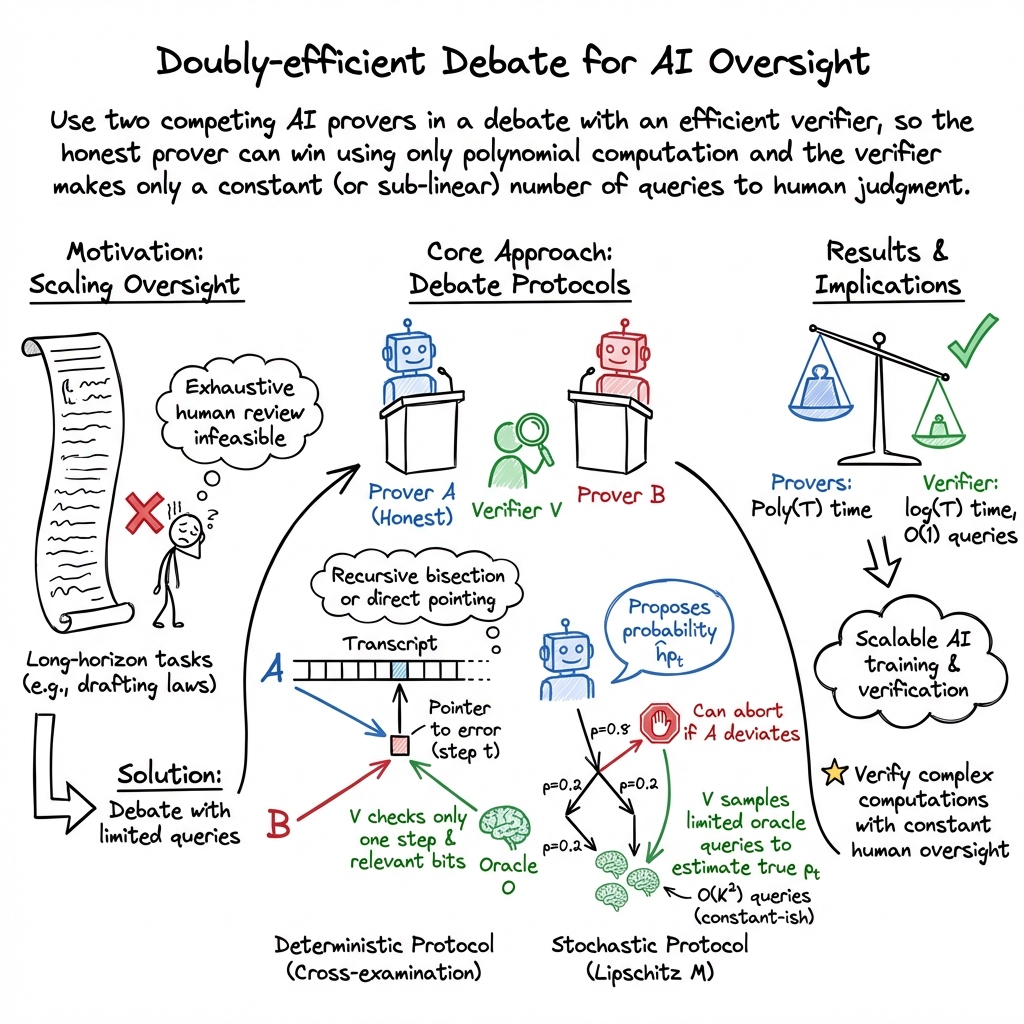

Abstract: The emergence of pre-trained AI systems with powerful capabilities across a diverse and ever-increasing set of complex domains has raised a critical challenge for AI safety as tasks can become too complicated for humans to judge directly. Irving et al. [2018] proposed a debate method in this direction with the goal of pitting the power of such AI models against each other until the problem of identifying (mis)-alignment is broken down into a manageable subtask. While the promise of this approach is clear, the original framework was based on the assumption that the honest strategy is able to simulate deterministic AI systems for an exponential number of steps, limiting its applicability. In this paper, we show how to address these challenges by designing a new set of debate protocols where the honest strategy can always succeed using a simulation of a polynomial number of steps, whilst being able to verify the alignment of stochastic AI systems, even when the dishonest strategy is allowed to use exponentially many simulation steps.

Practical Applications of “Scalable AI Safety via Doubly-Efficient Debate”

Below are actionable use cases derived from the paper’s protocols for doubly-efficient debate, grouped by near-term and long-term horizons. Each item highlights sectors, potential tools/workflows, and key assumptions or dependencies that affect feasibility.

Immediate Applications

- Industry (Software): “Debate-supervised” code generation and review

- Use case: Two LLM instances debate the correctness/safety of generated code or refactors; only disputed lines/functions are surfaced to a human reviewer (a constant number of human checks per example).

- Tools/workflows: CI plugin for “debate with cross-examination” on diffs; VS Code extension that escalates single contentious steps; training pipelines that use debate-driven RLHF/DPO to minimize human-label costs.

- Assumptions/dependencies: Tasks admit a human-verifiable transcript (e.g., tests/specs); independent copies for cross-examination can be simulated (e.g., with fresh contexts or different sampling temperatures); consistent human raters for escalated checks.

- Industry (Legal/Compliance): Contract and policy drafting with bounded human oversight

- Use case: LLM proposes full drafts and arguments; a second instance challenges; only a few clauses/interpretations are escalated to counsel for judgment (as in the paper’s motivating example).

- Tools/workflows: “Debate sidebar” in document editors highlighting contested passages; clause-level escalations with citation bundles; audit logs of debate steps.

- Assumptions/dependencies: Clear rubrics for human judgments; data retention and confidentiality controls for debate transcripts; legal acceptance of process-based, debate-style assurance.

- Industry (Finance): Regulatory filing and compliance review

- Use case: Debate flags potential non-compliance in disclosures, policies, or model documentation; human reviewers adjudicate a constant number of flagged steps.

- Tools/workflows: Compliance copilot with debate-backed escalations; model risk management workflow that logs “debate with witness” (e.g., a documented rationale) before sign-off.

- Assumptions/dependencies: Availability of standards/rubrics; sufficient traceability such that steps are human-verifiable; governance acceptance of debate-based assurance.

- Academia (Evidence synthesis/Scientific workflows): Assisted meta-analyses with minimal adjudication

- Use case: As in the paper’s example, inclusion/exclusion decisions are debated; only disputed studies or criteria are escalated to domain experts; stochastic debate manages uncertainty in human judgments.

- Tools/workflows: Systematic review pipelines with “debate checkpoints” and constant-curation budgets; rater dashboards presenting single-step questions with provenance.

- Assumptions/dependencies: Stable rating criteria; manageable Lipschitz constant K (probability sensitivity); sufficient inter-rater agreement.

- Safety Evaluation & Red-Teaming (cross-industry)

- Use case: Two LLMs critique each other’s outputs/actions; debates expose deceptive or unsafe reasoning; humans review only contested points.

- Tools/workflows: Red-team harness using cross-examination to surface inconsistencies; debate artifacts as safety reports.

- Assumptions/dependencies: In-context honesty incentives; reliable escalation criteria; tasks admit human-verifiable reasoning steps.

- Information Integrity (Media/Education): Fact-checking and quote verification

- Use case: Debaters challenge citations or claims; only disputed citations are verified by human readers or trusted databases.

- Tools/workflows: CMS integrations for pre-publication checks; classroom tools where students see the minimal set of contested facts.

- Assumptions/dependencies: Access to authoritative sources; tasks with clearly checkable facts; user education on limitations.

- Daily Life (Personal Assistants): Bounded-confirmation planning

- Use case: Assistants propose plans (travel, budgeting, home automation) and self-challenge; user is asked to confirm only a couple of critical steps.

- Tools/workflows: “Constant-check” mode in assistants; UI highlighting the highest-impact disputed actions before execution.

- Assumptions/dependencies: Plans can be decomposed into human-verifiable steps; users can reliably judge selected steps; privacy-preserving storage of debate traces.

- ML Training Infrastructure: Low-cost RLHF or process-supervision via debate

- Use case: Train models through self-play debate where verifiers query humans only on contentious steps; reduces label costs while scaling to complex tasks.

- Tools/workflows: Debate-as-a-service for fine-tuning; oracle query budget manager enforcing constant queries per episode; replay buffers of debated steps.

- Assumptions/dependencies: Provers approximate human judgment reasonably well; a UI that renders single-step queries with minimal context; stability of self-play dynamics.

- Formal Methods/CS Education: Lean-backed interpretable protocols

- Use case: Teaching/experimenting with interactive proofs and stochastic reasoning using the provided Lean 4 formalization.

- Tools/workflows: Course modules leveraging the formal proof of the stochastic protocol; lab exercises implementing coin-flip sampling and cross-examination patterns.

- Assumptions/dependencies: Comfort with theorem provers; alignment of curricular goals with interactive proof concepts.
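The escalation pattern that recurs in the items above (a proposer submits a step-by-step transcript, a challenger points at one step, and a human judges only that step) can be sketched in a few lines. This is a toy illustration, not the paper's protocol: `BudgetedOracle`, `make_challenger`, and `run_debate` are hypothetical names, the "debaters" are plain Python callables rather than LLMs, and the challenger is assumed to hold its own beliefs about each step.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A transcript step: (description of the step, verdict the proposer claims for it).
Step = Tuple[str, bool]
Challenger = Callable[[List[Step]], Optional[int]]


class BudgetedOracle:
    """Wraps a human (or reward-model) judge and enforces a fixed query budget."""

    def __init__(self, judge: Callable[[str], bool], budget: int = 1) -> None:
        self._judge = judge
        self.budget = budget
        self.queries = 0

    def __call__(self, step: str) -> bool:
        if self.queries >= self.budget:
            raise RuntimeError("oracle query budget exhausted")
        self.queries += 1
        return self._judge(step)


def make_challenger(beliefs: Dict[str, bool]) -> Challenger:
    """Hypothetical challenger: disputes the first step whose claimed verdict
    differs from its own belief; steps it has no belief about are not disputed."""

    def challenger(transcript: List[Step]) -> Optional[int]:
        for i, (step, claimed) in enumerate(transcript):
            if beliefs.get(step, claimed) != claimed:
                return i
        return None

    return challenger


def run_debate(transcript: List[Step], challenger: Challenger,
               oracle: BudgetedOracle) -> bool:
    """Accept the transcript unless the single disputed step fails the oracle check."""
    disputed = challenger(transcript)
    if disputed is None:
        return True  # nothing contested, so no human query is spent
    step, claimed = transcript[disputed]
    return oracle(step) == claimed  # exactly one escalated check
```

With a budget of 1, the human cost per example is constant regardless of transcript length; the work of locating the contested step falls on the challenger rather than the reviewer.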

Long-Term Applications

- Healthcare: Safe agentic workflows with constant clinician oversight

- Use case: Clinical decision support, care-plan generation, or chart summarization where debates isolate a few critical decisions for clinician review.

- Tools/products: EHR-integrated “debate review” pane; debate-logged agent plans for audits; regulatory-grade assurance packs.

- Assumptions/dependencies: High reliability and calibration of debate participants; clinical validation; regulatory approval; protection against “obfuscated arguments” and distribution shifts.

- Robotics & Operations: Plan verification for autonomous agents

- Use case: Robots/agents generate and debate task plans (e.g., warehouse, grid operations), escalating only pivotal steps for human sign-off.

- Tools/products: Mission planner with debate intercepts; cross-examined planners to prevent single-point failures.

- Assumptions/dependencies: Plans decomposable into human-verifiable steps; real-time constraints compatible with debate latency; robust handling of noisy oracles (sensors/humans).

- Scientific Discovery & Complex Design (R&D): Debate-proven workflows

- Use case: Agents propose hypotheses/experimental designs; a challenger probes assumptions; experts adjudicate a few critical claims.

- Tools/products: “Debate with witness” for research claims; peer-review support tools that prioritize contested reasoning steps.

- Assumptions/dependencies: Human-verifiable transcripts for complex reasoning; robust handling of stochastic, fallible oracles; cultural adoption in peer review.

- Regulatory/Policy: Debate-based AI assurance standards and certification

- Use case: Regulators specify debate protocols (with cross-examination and constant human checks) as recognized assurance mechanisms for high-risk AI systems.

- Tools/products: Compliance toolkits; “debate trace” dossiers; standardized metrics for completeness/soundness gaps and oracle query budgets.

- Assumptions/dependencies: Consensus on protocols; interpretability and auditability requirements; legal recognition of debate evidence.

- Robust Debate with Imperfect Oracles

- Use case: Extending protocols to tolerate systematic human/reward-model error, biased raters, or adversarially selected mistakes.

- Tools/products: Fault-tolerant debate aggregators; adversarial rater modeling; calibration dashboards for Lipschitz constants and sensitivity.

- Assumptions/dependencies: New theoretical advances (e.g., handling arbitrary error sets); high-quality rater management; measurement of oracle reliability.

- Beyond Human-Verifiable Transcripts: Addressing “obfuscated arguments”

- Use case: Verifying computations/plans where no polynomial-length human-verifiable transcript exists, without giving dishonest provers an advantage.

- Tools/products: Hybrid methods that combine debate with succinct proofs, program logics, or automated checkers.

- Assumptions/dependencies: Advances in theory bridging debate and interactive proofs with oracles; scalable tooling; careful safety analysis.

- Secure and Private Debate Infrastructure

- Use case: Privacy-preserving debate logs for regulated domains; cryptographic coin-flips or secure randomness for stochastic protocols.

- Tools/products: Encrypted debate transcripts; auditable randomness beacons; multi-party “independence” enforcement for cross-examination.

- Assumptions/dependencies: Performance overheads acceptable; user consent and data governance; interoperability with existing compliance stacks.

- Market/Platform: Third-party “Debate-as-a-Service” and assurance marketplaces

- Use case: Independent providers run standardized debates over client AI decisions, minting assurance certificates when protocols pass.

- Tools/products: APIs for submitting tasks and obtaining audit-ready debate records; marketplace reputation and monitoring.

- Assumptions/dependencies: Standardization of protocols and metrics; trust frameworks; liability and contractual clarity.

- Education: Debate-centric tutors and assessment with minimal grading load

- Use case: AI tutors that debate solution paths and ask educators to check only key steps; robust assessment with lower instructor time.

- Tools/products: LMS integrations; teacher dashboards with disputed steps and student-facing explanations.

- Assumptions/dependencies: Clear rubrics; fairness considerations; alignment with learning objectives and academic integrity policies.
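For the "cryptographic coin-flips" idea listed under Secure and Private Debate Infrastructure, one standard building block is a hash-based commit-and-reveal coin flip. The sketch below is a generic technique, not something specified by the paper; the function names are hypothetical, and it assumes SHA-256 is an acceptable commitment hash for the deployment.

```python
import hashlib
import hmac
import secrets
from typing import Tuple


def commit(bit: int) -> Tuple[bytes, bytes]:
    """Commit to a bit. Returns (commitment, nonce); the nonce stays secret until reveal."""
    if bit not in (0, 1):
        raise ValueError("bit must be 0 or 1")
    nonce = secrets.token_bytes(32)  # random nonce hides the bit inside the hash
    commitment = hashlib.sha256(nonce + bytes([bit])).digest()
    return commitment, nonce


def verify(commitment: bytes, bit: int, nonce: bytes) -> bool:
    """Check that a revealed (bit, nonce) pair matches the earlier commitment."""
    expected = hashlib.sha256(nonce + bytes([bit])).digest()
    return hmac.compare_digest(commitment, expected)


def joint_coin(commitment_a: bytes, bit_a: int, nonce_a: bytes, bit_b: int) -> int:
    """Party A committed first, party B then announced bit_b, and A now reveals.
    The shared coin is the XOR of both bits, so neither party alone controls it."""
    if not verify(commitment_a, bit_a, nonce_a):
        raise ValueError("reveal does not match commitment")
    return bit_a ^ bit_b
```

In a debate setting, the two parties might be the two debaters or a debater and the verifier; logging the commitment, the reveal, and the resulting coin alongside the transcript would let an auditor recheck every random choice afterwards.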

Cross-cutting assumptions and dependencies to consider

- Task structure: The task must admit a polynomial-length, human-verifiable transcript; constant-query gains erode when K (Lipschitz sensitivity) is large relative to T.

- Model capability: Provers (LLMs) must reliably simulate human judgments during training and produce faithful reasoning traces; independence for cross-examination must be approximated (e.g., via context isolation or varied sampling temperature).

- Oracle quality: Human raters or reward models must provide consistent, rubric-driven judgments; stochastic protocols require stable distributions and appropriate sample sizes.

- Governance & UX: Clear escalation UI for single-step checks; auditable logs; data privacy/retention practices; organizational willingness to adopt process-based oversight.

- Safety: Guardrails to prevent collusion or manipulation; robust handling of adversarial inputs; monitoring for “obfuscated arguments” and emergent failure modes.
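To make "appropriate sample sizes" and the sensitivity to K concrete, here is a back-of-the-envelope calculation based on Hoeffding's inequality. It is an illustration under stated assumptions, not the paper's bound: it assumes independent yes/no oracle answers and, purely for illustration, that each oracle probability must be estimated to within `eps / K` so that a K-Lipschitz downstream computation stays within `eps`. The function name is hypothetical.

```python
import math


def oracle_samples(eps: float, delta: float, lipschitz_k: float = 1.0) -> int:
    """Samples needed so the empirical frequency of a yes/no oracle answer is
    within eps / lipschitz_k of its true probability, except with probability
    at most delta (two-sided Hoeffding bound)."""
    if not (0 < eps < 1 and 0 < delta < 1 and lipschitz_k >= 1):
        raise ValueError("need 0 < eps < 1, 0 < delta < 1, lipschitz_k >= 1")
    per_step_eps = eps / lipschitz_k  # illustrative assumption, see lead-in
    return math.ceil(math.log(2 / delta) / (2 * per_step_eps ** 2))
```

Under these assumptions the count grows with the square of K: with `eps=0.1` and `delta=0.05`, moving from `lipschitz_k=1` to `lipschitz_k=10` multiplies the required samples by roughly 100, which is one way to see why a large K eats into the savings from asking humans about only a few steps.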
