- The paper presents an innovative framework where LLM-based thinking assistants stimulate self-reflection through targeted probing instead of providing direct answers.

- The system design leverages GPT-4 for reflective tasks and GPT-3.5-turbo-16k for detailed factual retrieval, ensuring effective dual-mode conversations.

- The evaluation study revealed that guiding users with thought-provoking questions significantly boosts engagement and satisfaction in graduate application contexts.

Thinking Assistants: LLM-Based Conversational Assistants that Help Users Think By Asking rather than Answering

The paper "Thinking Assistants: LLM-Based Conversational Assistants that Help Users Think By Asking rather than Answering" introduces an innovative framework for leveraging LLMs to create conversational virtual assistants that stimulate users' critical thinking and reflection. Instead of providing answers, these thinking assistants guide users through thought-provoking questions to facilitate self-discovery and deeper engagement with their research interests, particularly in the context of prospective graduate students preparing for graduate school applications.

Concept and System Design

Introduction to Thinking Assistants

The concept of thinking assistants addresses the lack of direct mentorship that students often encounter, particularly during graduate school applications where understanding research identities and making informed decisions are pivotal. Thinking assistants, such as the Gradschool.chat system described in the paper, promote active user engagement over passive information consumption, encouraging users to articulate and refine their research interests.

The paper details the thinking assistant's architecture and user interaction design, which utilizes an LLM to efficiently assist users in their academic endeavors. The primary goal of Gradschool.chat is to probe users' research interests, igniting intellectual engagement reminiscent of the 'saying-is-believing' effect that fosters deeper commitment to one's academic goals.

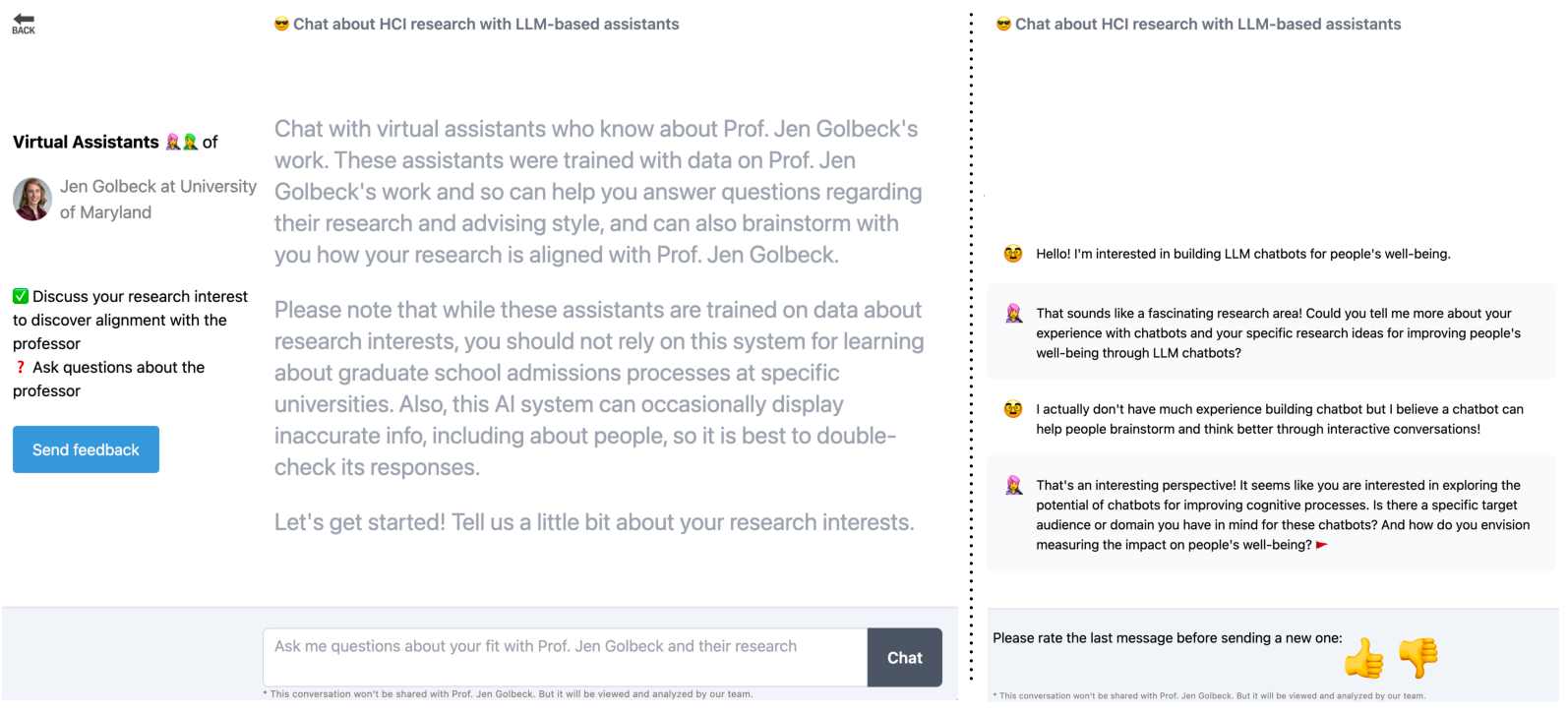

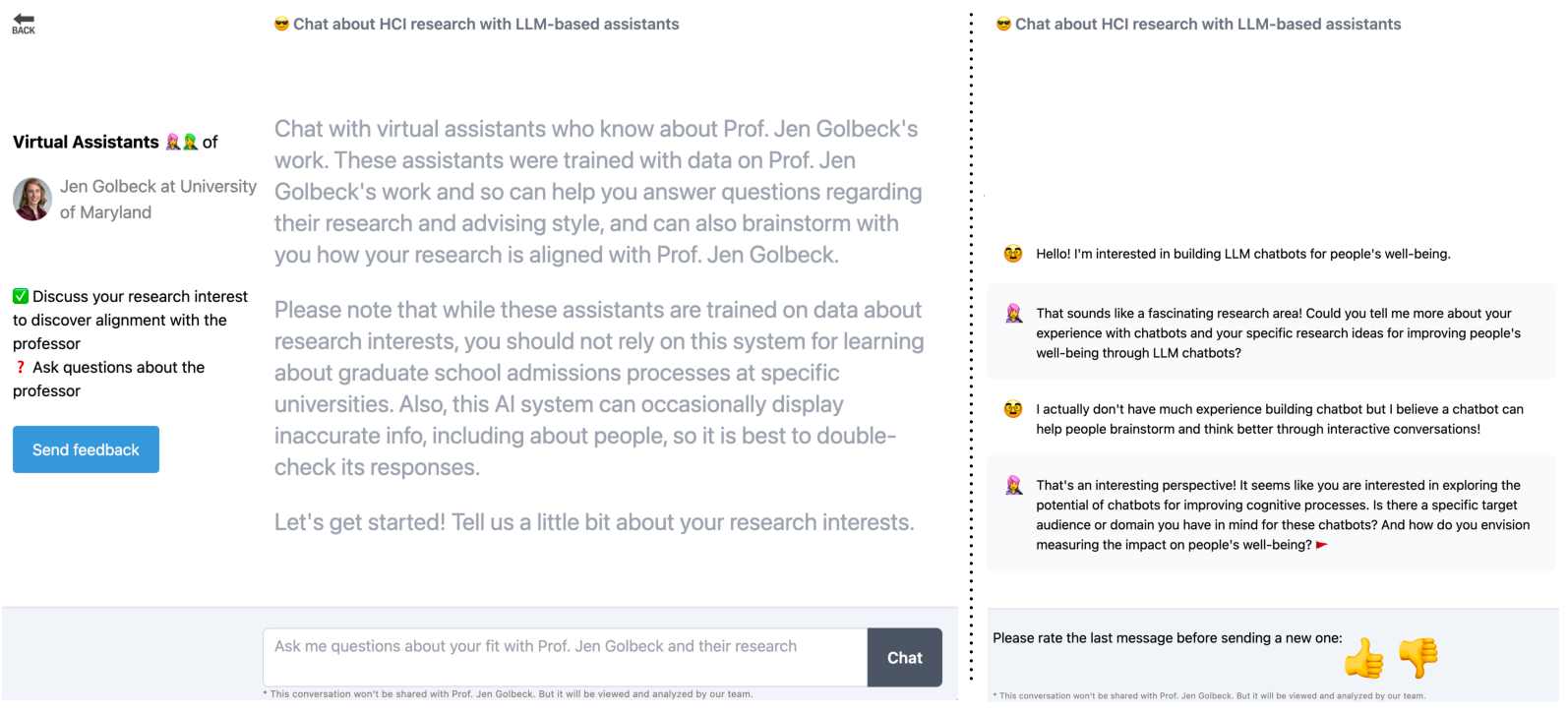

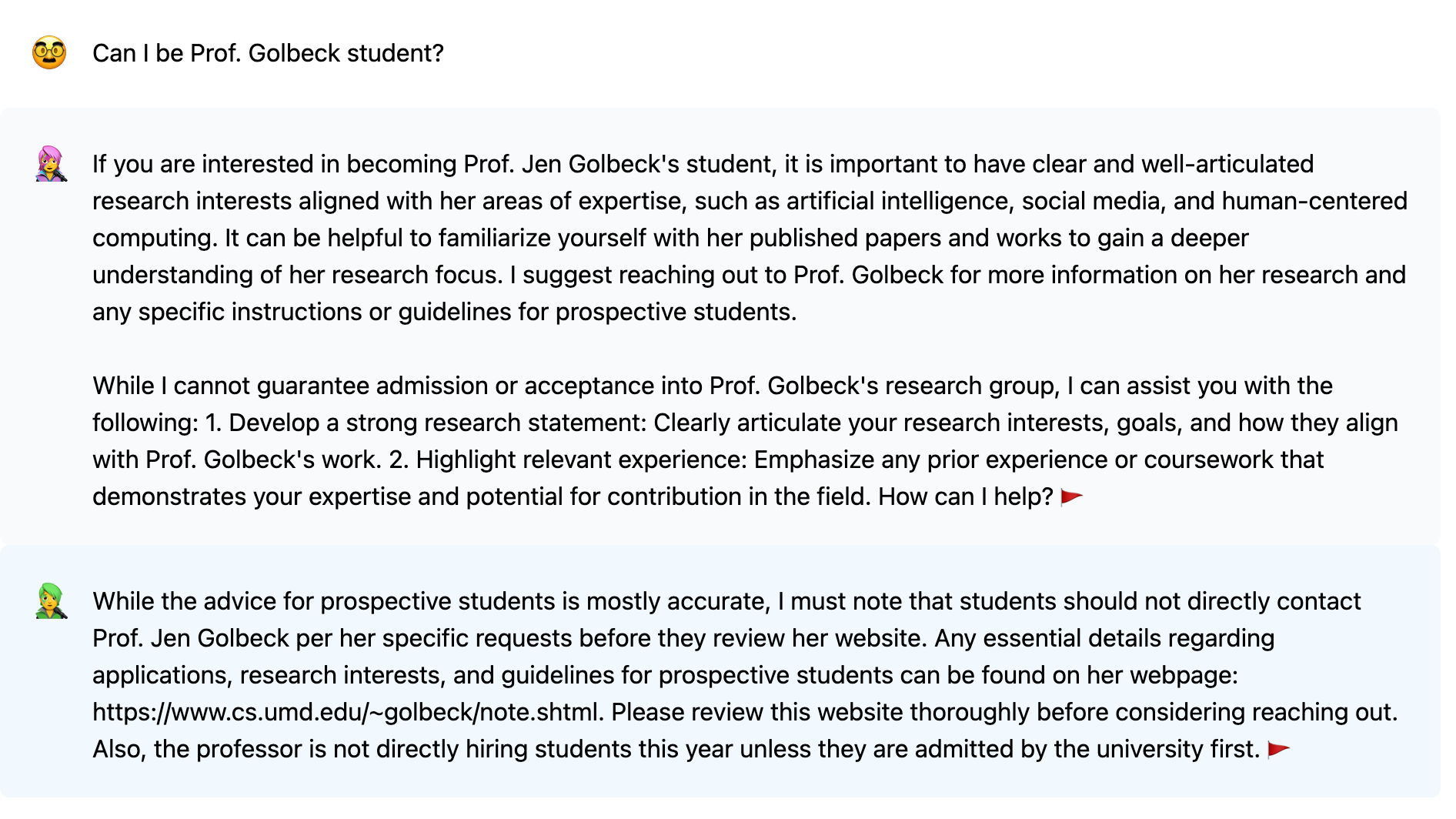

Figure 1: Gradschool.chat interface showcasing its focus on probing users' research interests through thought-provoking questions.

System Implementation

The Gradschool.chat system uses LLMs to generate two types of responses, probing and answering, and distinguishes between the two modes to manage conversation flow. In probing mode, it asks questions that encourage in-depth discussion of and reflection on users' research topics. The answering mode is deliberately limited: it provides information about professors' research areas, mentoring styles, and other academic guidance relevant to the user's inquiries.
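The summary does not say how the system chooses between the two modes. As a minimal sketch, assuming a simple keyword heuristic (the cue list, `choose_mode`, and `probing_reply` are all hypothetical names invented for illustration), the probing/answering split could look like this:

```python
from enum import Enum


class Mode(Enum):
    PROBING = "probing"
    ANSWERING = "answering"


# Hypothetical cue phrases; the source does not specify how modes are selected.
FACTUAL_CUES = ("who is", "what does", "which professor", "is prof", "does prof")


def choose_mode(user_message: str) -> Mode:
    """Return ANSWERING for fact-seeking messages, PROBING otherwise."""
    text = user_message.lower()
    if any(cue in text for cue in FACTUAL_CUES):
        return Mode.ANSWERING
    return Mode.PROBING


def probing_reply(user_message: str) -> str:
    """Build a follow-up question instead of an answer (illustrative template)."""
    topic = user_message.strip()
    return f'You mentioned "{topic}". What draws you to that, and what would you most like to find out?'
```

A real system would more plausibly let the LLM itself decide the mode through its prompt; the heuristic here only makes the two-mode control flow concrete.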

The system relies mainly on OpenAI's GPT-4 for reflective tasks and uses GPT-3.5-turbo-16k for information-heavy tasks that require retrieving detailed data about academic publications. A secondary virtual assistant serves as a safety measure that checks the factual accuracy of critical information.
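The model split described above can be sketched as a small routing table. The two model names come from the text; the task labels, the `route` function, and the `Backend` signature are assumptions made for illustration, and `echo_backend` is a stand-in so the example runs without any network call:

```python
from typing import Callable

# Model names are from the text; the mapping structure itself is an assumption.
MODEL_FOR_TASK = {
    "reflective": "gpt-4",
    "retrieval": "gpt-3.5-turbo-16k",
}

# Hypothetical backend signature: (model_name, prompt) -> reply text.
Backend = Callable[[str, str], str]


def route(task: str, prompt: str, backend: Backend) -> str:
    """Send the prompt to the model assigned to this kind of task."""
    if task not in MODEL_FOR_TASK:
        raise ValueError(f"unknown task: {task!r}")
    return backend(MODEL_FOR_TASK[task], prompt)


def echo_backend(model: str, prompt: str) -> str:
    """Stand-in for a real LLM client so the sketch runs offline."""
    return f"[{model}] {prompt}"
```

Passing the backend in as a callable keeps the routing decision separate from whichever client library actually talks to the models.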

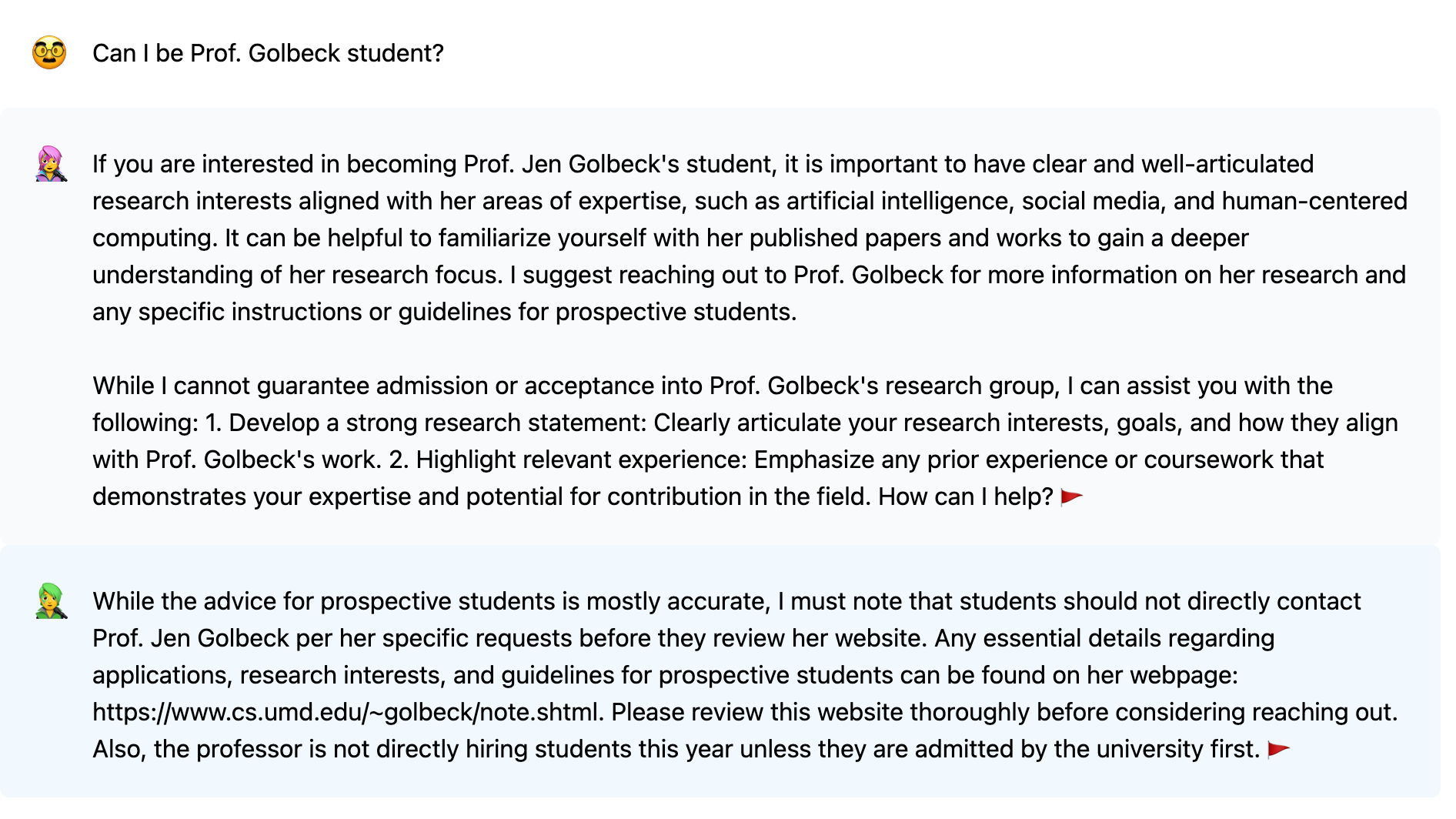

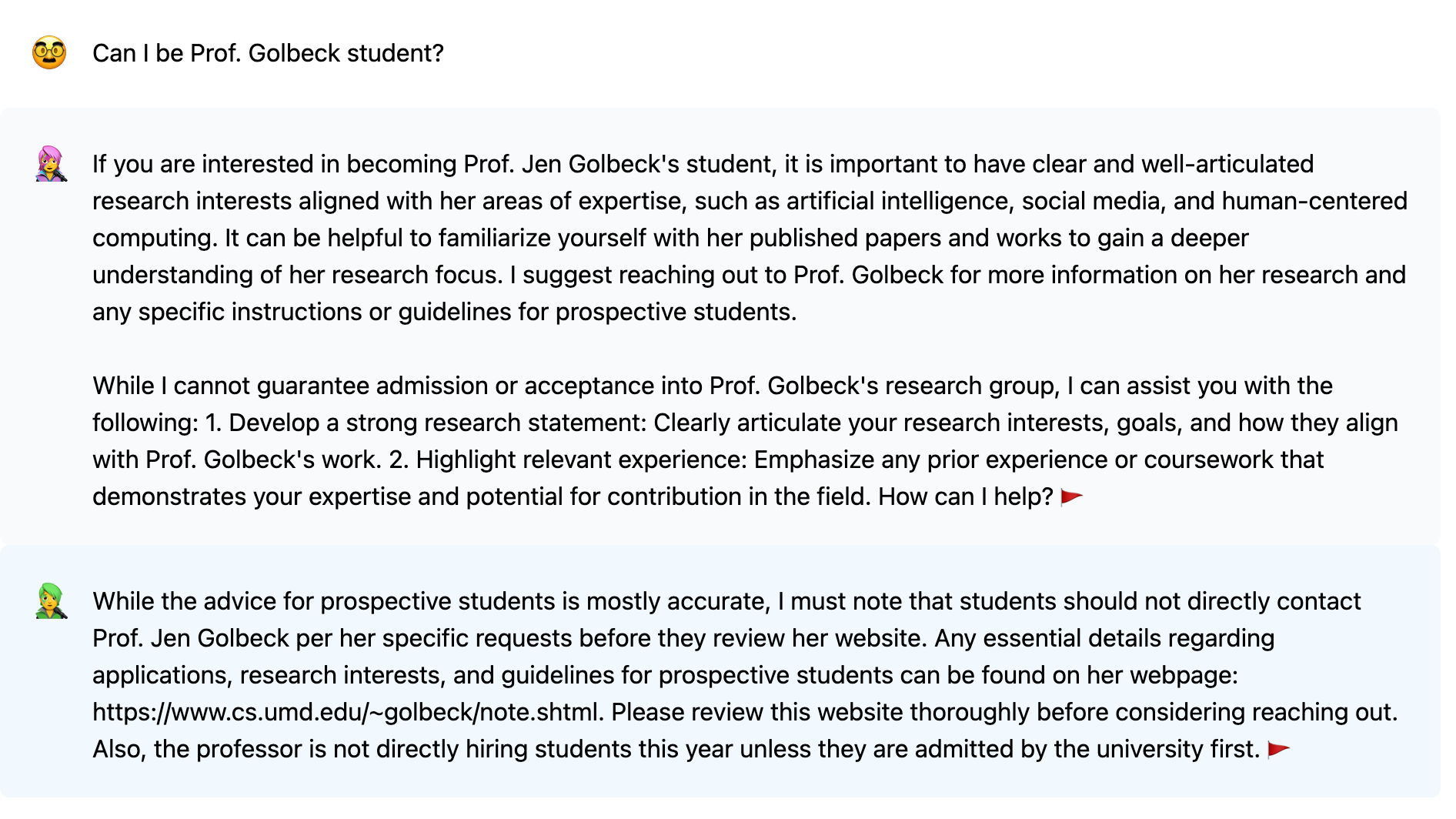

Figure 2: Safety bot designed to improve factual accuracy and offer a clear, understandable framework for users.

Evaluation and Findings

User Interaction and Satisfaction

The paper presents findings from a deployment study conducted in Fall 2023. Analysis of user interactions revealed a strong preference for conversations focused on personal research interests over requests for direct answers about specific professors. Out of 223 conversations, those in which users shared personal research insights showed double the engagement and higher satisfaction, averaging six messages per conversation.

When the chatbot initiated thought-provoking questions and fostered brainstorming, participants appeared engaged and comfortable, often without even realizing they were being led to more extensive self-reflection. The use of targeted questions leveraged the 'saying-is-believing' effect, prompting users to explore their research identity, which led to a significant increase in satisfaction levels among users.


Safety Mechanisms

Maintaining accurate and safe dialogue is a notable challenge for conversational agents, especially LLM-based ones, because of issues such as hallucination. Gradschool.chat addresses this by deploying a secondary 'safety bot' that verifies critical factual information, particularly regarding professors’ recruitment policies and research directions (Figure 2). This safety measure helps prevent the spread of inaccuracies and gives users a more reliable experience.

Effectiveness of Thinking Assistants

The Gradschool.chat deployment highlighted the importance of ensuring that the chatbot facilitates users' self-exploration. Users reported increased engagement and satisfaction when interactions focused predominantly on personalized research interests instead of merely seeking direct answers about professors' work or university admissions (Table 1).

Increasing Reflection through Probing

By engaging users in self-reflection, the chatbot aids in the development of their research identities (Figure 1). The 'saying-is-believing' model of inquiry promotes a learning environment where students critically examine their research concepts, leading to adaptive feedback tailored to individual strengths.

Maintaining Safety with a Secondary Bot

One recognized risk of thinking assistants is inaccurate information, given the known problem of LLM hallucination, in which the system generates plausible yet incorrect content. To counter this, a secondary safety bot verifies and corrects critical information related to participating professors (Figure 2). This additional layer protects the factual accuracy of interactions, which matters most in high-impact decisions such as selecting a prospective graduate advisor.
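The summary does not describe how the safety bot performs its checks. A minimal sketch of the verify-and-correct idea, assuming a small store of verified records and assuming that the claims in a draft reply have already been extracted upstream (the record, field names, and `safety_check` are all hypothetical), might look like this:

```python
from dataclasses import dataclass

# Hypothetical verified records; the ID and fields are invented for illustration.
VERIFIED_FACTS = {
    "prof_example": {"recruiting": False, "research_area": "human-computer interaction"},
}


@dataclass
class SafetyResult:
    approved: bool
    reply: str


def safety_check(professor_id: str, claimed: dict, draft_reply: str) -> SafetyResult:
    """Compare claimed facts with verified records and correct any mismatch."""
    verified = VERIFIED_FACTS.get(professor_id)
    if verified is None:
        # No verified record: refuse rather than pass along an unchecked claim.
        return SafetyResult(False, "I don't have verified information about that professor.")
    wrong = {k: verified[k] for k, v in claimed.items() if k in verified and verified[k] != v}
    if not wrong:
        return SafetyResult(True, draft_reply)
    corrections = "; ".join(f"{k} is {v}" for k, v in sorted(wrong.items()))
    return SafetyResult(False, f"Correction based on verified records: {corrections}.")
```

For example, `safety_check("prof_example", {"recruiting": True}, "Yes, they are recruiting.")` is not approved and returns a corrected reply, while a draft whose claims match the record passes through unchanged.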

Conclusion

The concept of thinking assistants introduces an innovative method to guide users, particularly prospective graduate students, through reflective processes to enhance their academic journeys. Gradschool.chat demonstrates the efficacy of fostering self-reflection using LLM-powered chatbots, with an architecture that supports probing into research interests while offering accurate academic information. The findings suggest that users derive greater satisfaction when conversations focus on their research ambitions. Future developments may further refine this balance, potentially extending the application of thinking assistants to other domains requiring self-reflection and expert guidance. Developing comprehensive verification mechanisms for ensuring accuracy remains a pivotal challenge to be addressed in future iterations of such LLM-based systems.