- The paper introduces a novel ER-MRL framework that integrates evolutionary algorithms, reservoir computing, and Meta-RL to optimize learning in POMDP and locomotion tasks.

- It leverages CMA-ES to fine-tune reservoir hyperparameters, enabling agents to reconstruct missing information and generate effective oscillatory dynamics.

- Results show enhanced generalization and faster adaptation across varied environments, highlighting the potential for advanced, adaptive AI systems.

Introduction

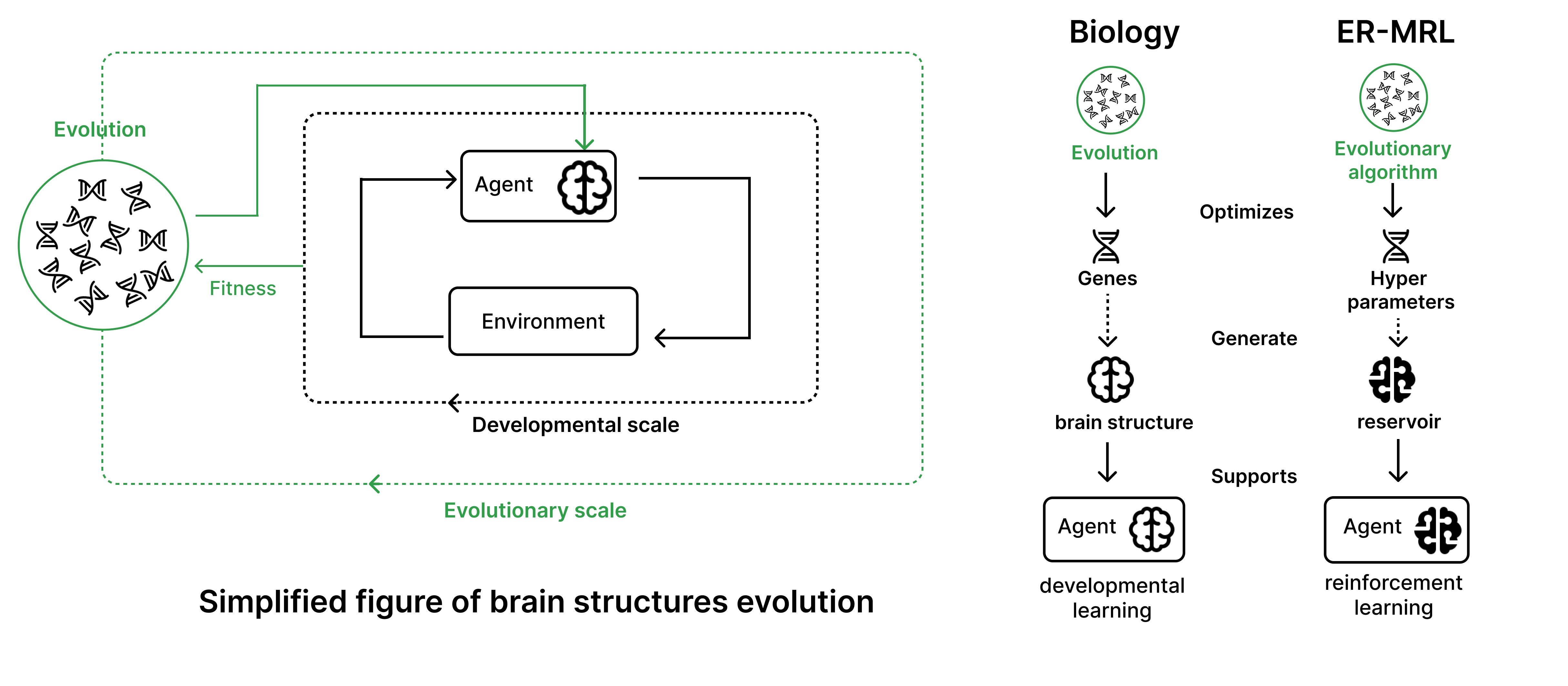

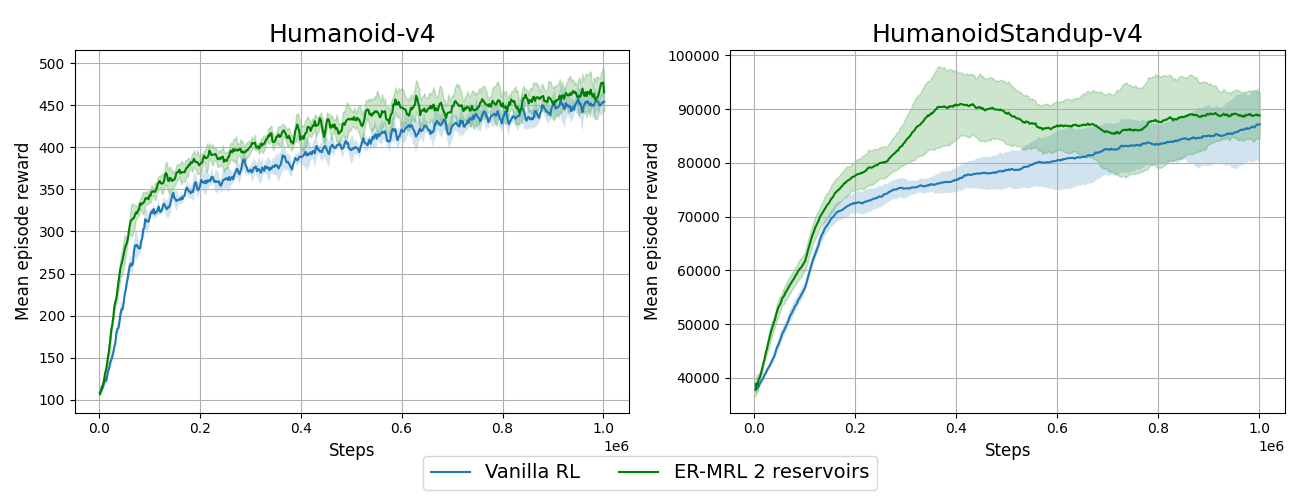

"Evolving Reservoirs for Meta Reinforcement Learning" (2312.06695) explores an integration of evolutionary computation and reservoir computing within Meta Reinforcement Learning (Meta-RL). The paper proposes a computational model inspired by how evolution shapes neural structures that in turn support learning during an agent's lifetime. Reservoirs, recurrent neural networks generated from macro-level hyperparameters (HPs), serve as the evolved structure: the framework optimizes the HPs so that the resulting networks facilitate reinforcement learning (RL). The study evaluates the approach across several simulated environments, assessing whether it helps solve tasks with partial observability, generates oscillatory dynamics useful for locomotion, and supports the generalization of learned behaviors to new environments.

Figure 1: A comparison between the evolutionary mechanisms of brain structures and the computational framework proposed for optimizing reservoirs and RL agents.

Methodology

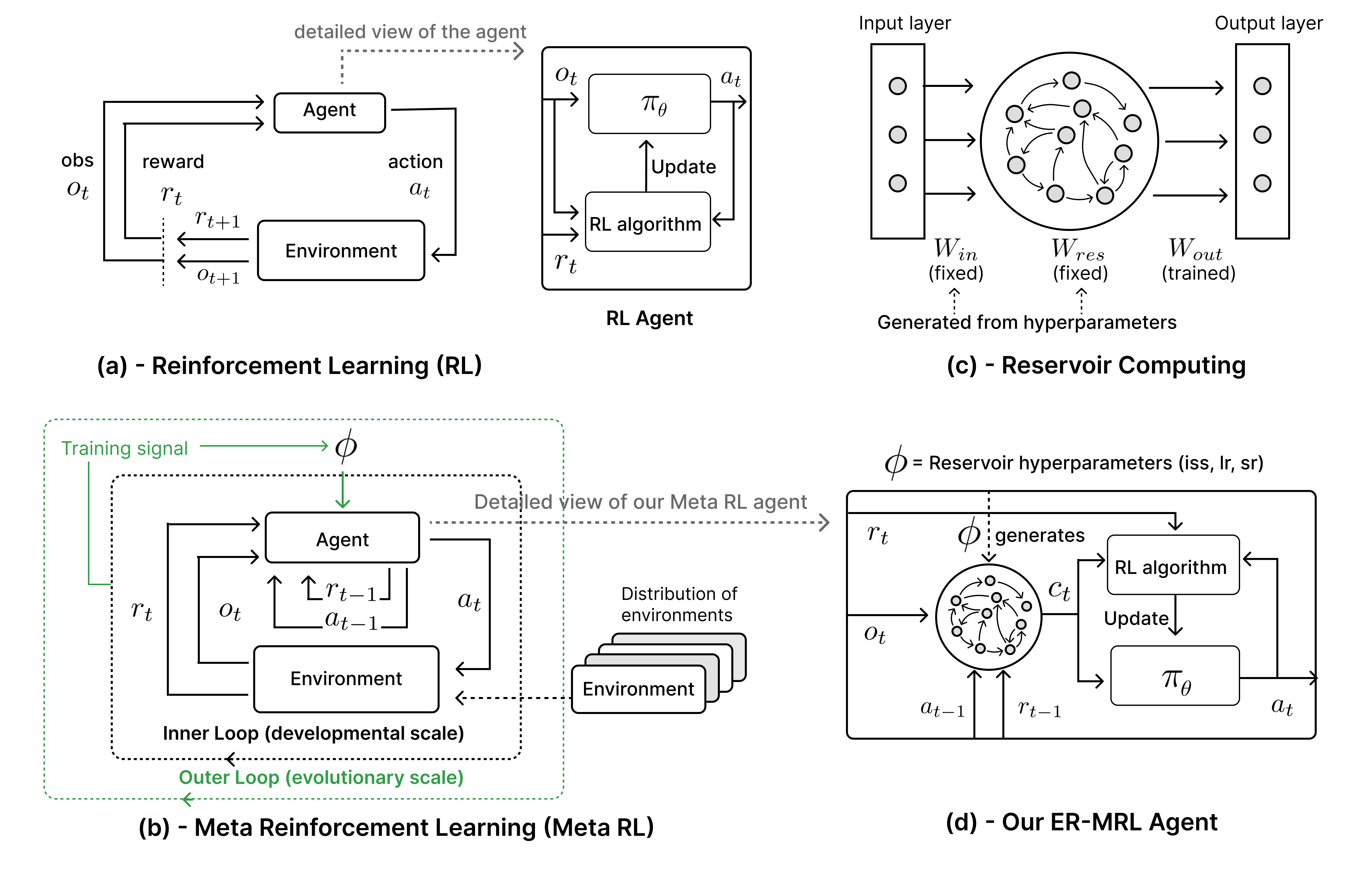

The paper introduces the Evolving Reservoirs for Meta Reinforcement Learning (ER-MRL) framework, comprising several integrated machine learning paradigms:

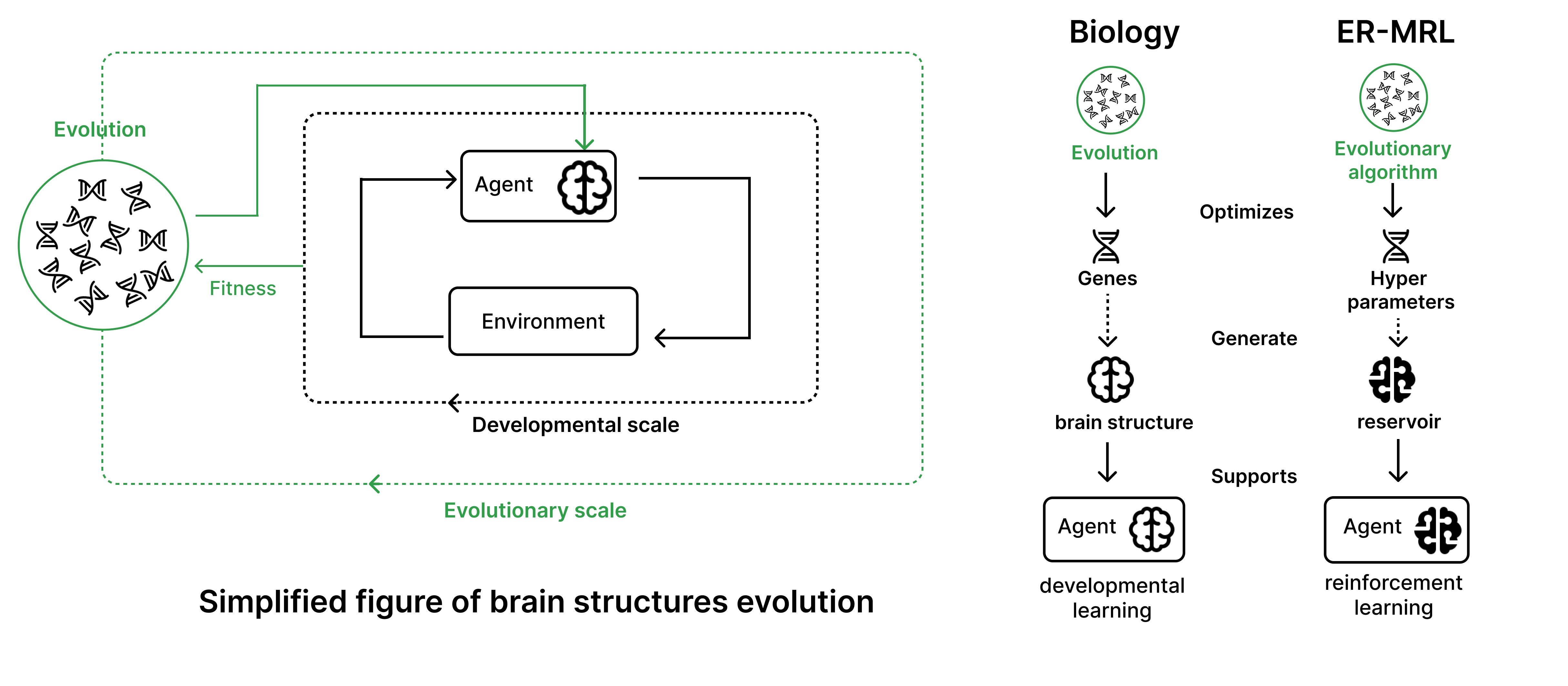

Reinforcement Learning (RL): Agents interact with environments through actions, observations, and rewards, and are trained to maximize cumulative reward within a Markov Decision Process (MDP) framework. Here, RL represents the developmental scale, at which agents learn behavioral policies during their lifetime (2491.01386).

Reservoir Computing (RC): RC represents the optimization of neural structure at the evolutionary scale. A reservoir is a recurrent neural network generated from macro-level hyperparameters rather than specified weight by weight. This indirect encoding aligns with the principle of the genomic bottleneck: evolution optimizes general neural structures shared across environments instead of individual synaptic weights (0912.33909).

Evolutionary Algorithms (EAs): The Covariance Matrix Adaptation Evolution Strategy (CMA-ES) is employed to optimize reservoir hyperparameters. EAs simulate natural selection, iteratively improving successive generations of reservoirs that facilitate learning in RL environments (Perugia et al., 2021).

Meta-Reinforcement Learning (Meta-RL): Meta-RL links the evolutionary and developmental scales by optimizing HPs for RL tasks across diverse environments. This involves two nested loops: an outer evolutionary loop that optimizes reservoir HPs and an inner RL loop that optimizes action policies (Fu et al., 2023).
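To make the reservoir computing component concrete, here is a minimal sketch of how a recurrent network can be generated from a few macro-level hyperparameters. It assumes a standard leaky echo state network and three commonly used HPs (spectral radius, input scaling, leak rate); this summary does not list the HPs the paper actually evolves, so these names, along with `make_reservoir` and `step`, are illustrative assumptions rather than the authors' implementation.

```python
import numpy as np

def make_reservoir(n_units, n_inputs, spectral_radius, input_scaling, seed=0):
    """Draw fixed random weights, then rescale them from the macro-level HPs."""
    rng = np.random.default_rng(seed)
    w = rng.normal(size=(n_units, n_units))
    # Rescale so the largest absolute eigenvalue equals the requested spectral radius.
    w *= spectral_radius / np.max(np.abs(np.linalg.eigvals(w)))
    w_in = input_scaling * rng.uniform(-1.0, 1.0, size=(n_units, n_inputs))
    return w, w_in

def step(state, u, w, w_in, leak_rate):
    """One leaky-integrator update: blend the old state with the new activation."""
    pre_activation = w @ state + w_in @ u
    return (1.0 - leak_rate) * state + leak_rate * np.tanh(pre_activation)

# Drive the reservoir with a toy two-dimensional input sequence.
w, w_in = make_reservoir(n_units=50, n_inputs=2, spectral_radius=0.9, input_scaling=0.5)
state = np.zeros(50)
for t in range(20):
    u = np.array([np.sin(0.3 * t), 1.0])
    state = step(state, u, w, w_in, leak_rate=0.3)
```

The recurrent and input weights are never trained here. Only the handful of scalars passed to `make_reservoir` and `step` would be exposed to the evolutionary search, which is the sense in which the encoding is indirect.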

Figure 2: The proposed ER-MRL architecture showing the nested adaptive loops and reservoir integration for RL policy optimization.
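The nested loops can be sketched as follows. This is a toy illustration under loud assumptions: a simple elitist evolution strategy stands in for CMA-ES, and `inner_loop_score` is a hypothetical placeholder that returns a synthetic score instead of training an RL agent. Only the control flow (an outer search over HPs, scored by averaging inner-loop results over several environments) reflects the description above.

```python
import random

def inner_loop_score(hps, env_seed):
    """Hypothetical stand-in for training an RL agent with a reservoir built from `hps`.

    Returns a synthetic score that peaks at an arbitrary target; not a real RL run.
    """
    target = (0.9, 0.3)  # arbitrary optimum, for illustration only
    noise = random.Random(env_seed).uniform(-0.01, 0.01)
    return -sum((h - t) ** 2 for h, t in zip(hps, target)) + noise

def fitness(hps, env_seeds):
    """Outer-loop fitness: mean inner-loop score across environments."""
    return sum(inner_loop_score(hps, s) for s in env_seeds) / len(env_seeds)

def evolve(generations=30, pop_size=16, n_elite=4, sigma=0.2, seed=0):
    """Simple elitist evolution strategy (a stand-in for CMA-ES, not CMA-ES itself)."""
    rng = random.Random(seed)
    mean = [0.5, 0.5]          # initial guess for two HPs
    env_seeds = [0, 1, 2]      # one seed per toy "environment"
    for _ in range(generations):
        # Sample candidate HP vectors around the current mean.
        population = [[m + sigma * rng.gauss(0, 1) for m in mean] for _ in range(pop_size)]
        # Rank candidates by their averaged inner-loop score.
        population.sort(key=lambda hps: fitness(hps, env_seeds), reverse=True)
        elite = population[:n_elite]
        # Move the search distribution toward the best candidates and narrow it.
        mean = [sum(column) / n_elite for column in zip(*elite)]
        sigma *= 0.9
    return mean

best_hps = evolve()
```

In a real setup each call to `inner_loop_score` would be a full RL training run, which is why the outer loop dominates the computational cost.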

Results

The study systematically evaluates ER-MRL under three main hypotheses:

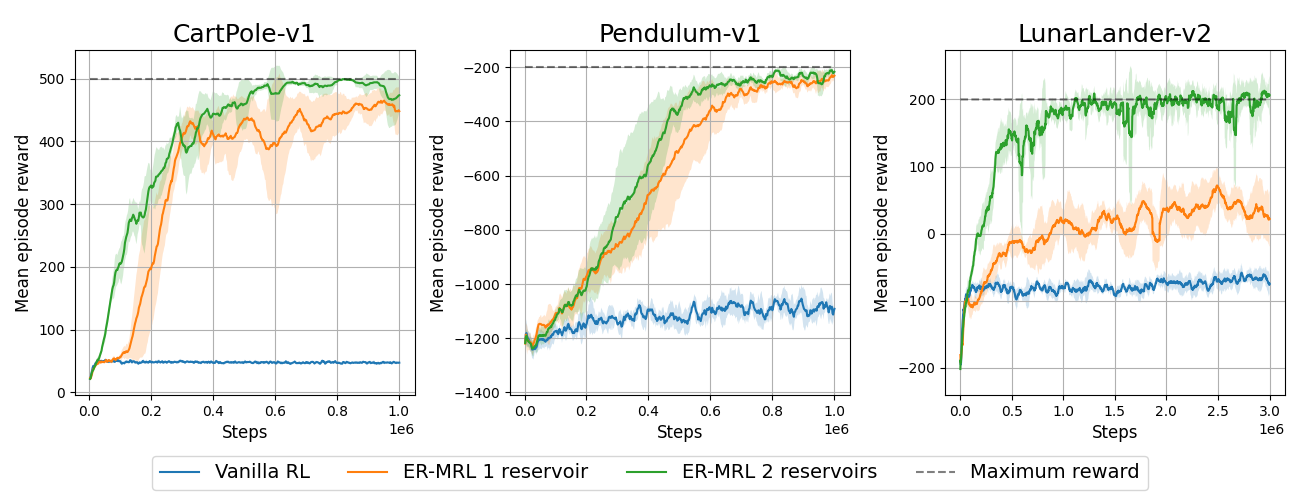

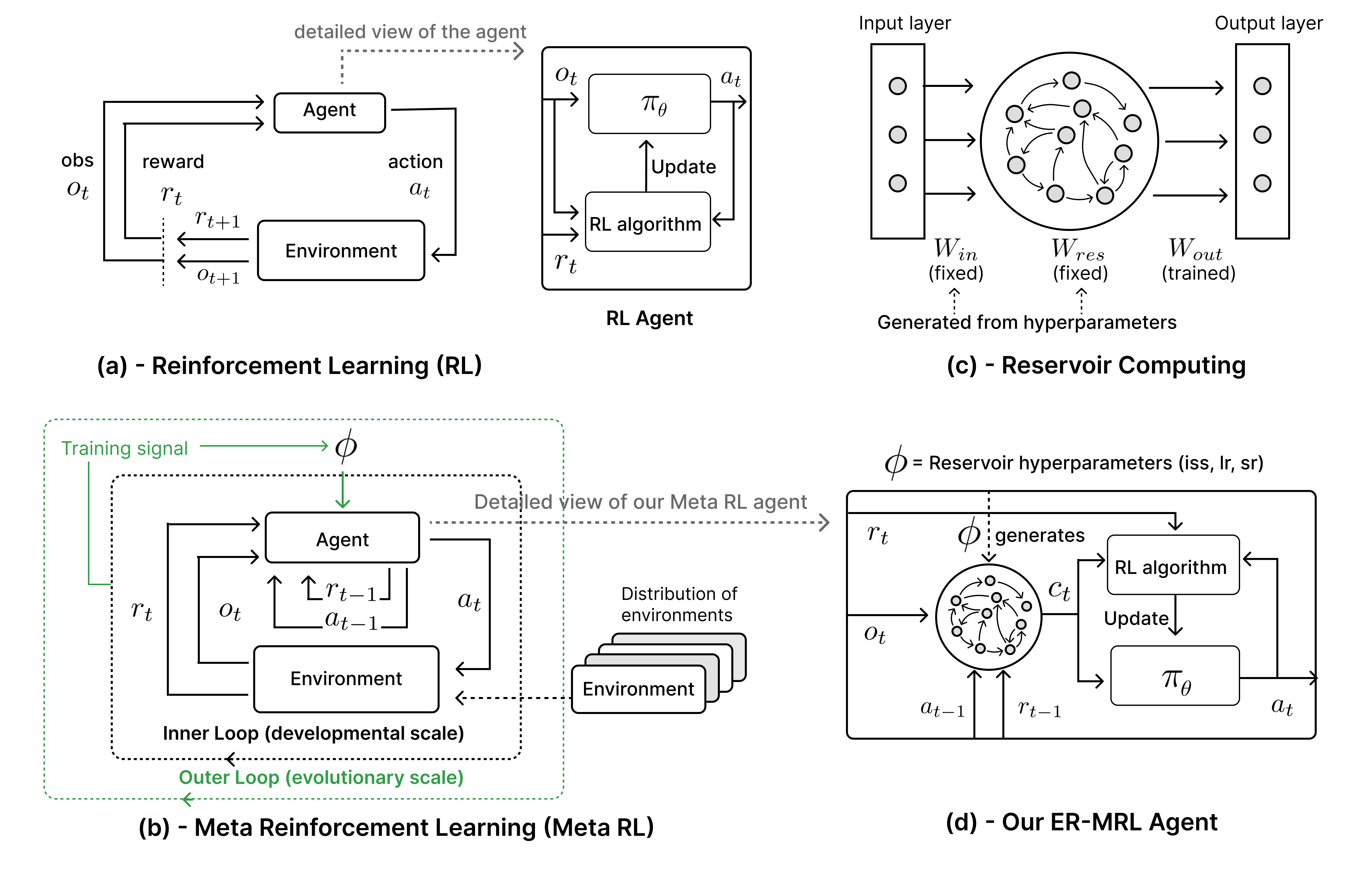

Partial Observability: ER-MRL agents were tested on modified environments like CartPole and LunarLander where velocity-related observations were removed. The evolved reservoirs facilitate the reconstruction of missing information, allowing agents to achieve near-optimal performance even under POMDP constraints.
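A small sketch shows what removing velocity-related observations means and why memory helps. It assumes the standard CartPole observation layout (cart position, cart velocity, pole angle, pole angular velocity); the helper names, the sample values, and the time step `dt` are hypothetical. The finite difference is only an illustration of the kind of information a recurrent reservoir could recover from past inputs, not the mechanism the paper reports.

```python
def mask_velocities(observation, keep_indices=(0, 2)):
    """Return only the position-like entries of a full observation."""
    return [observation[i] for i in keep_indices]

def estimate_velocity(previous_position, current_position, dt):
    """Approximate a velocity from two successive positions (finite difference)."""
    return (current_position - previous_position) / dt

# Layout: [cart position, cart velocity, pole angle, pole angular velocity]
full_obs = [0.02, -0.15, 0.03, 0.21]
partial_obs = mask_velocities(full_obs)  # the agent only sees the positions

# With a memory of the previous position, a velocity estimate becomes available again.
velocity_estimate = estimate_velocity(previous_position=0.02, current_position=0.03, dt=0.02)
```

A memoryless policy that sees only `partial_obs` cannot tell whether the pole is falling or recovering, which is what makes the masked task a POMDP.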

Figure 3: Learning curves for solving tasks with partial observability using ER-MRL.

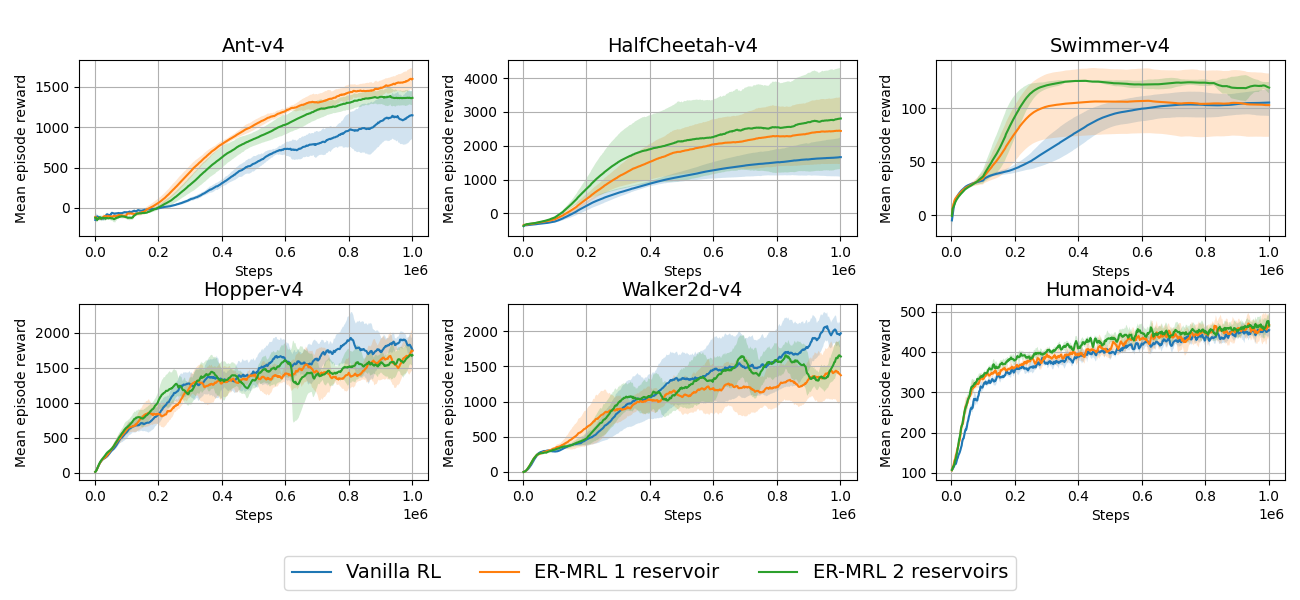

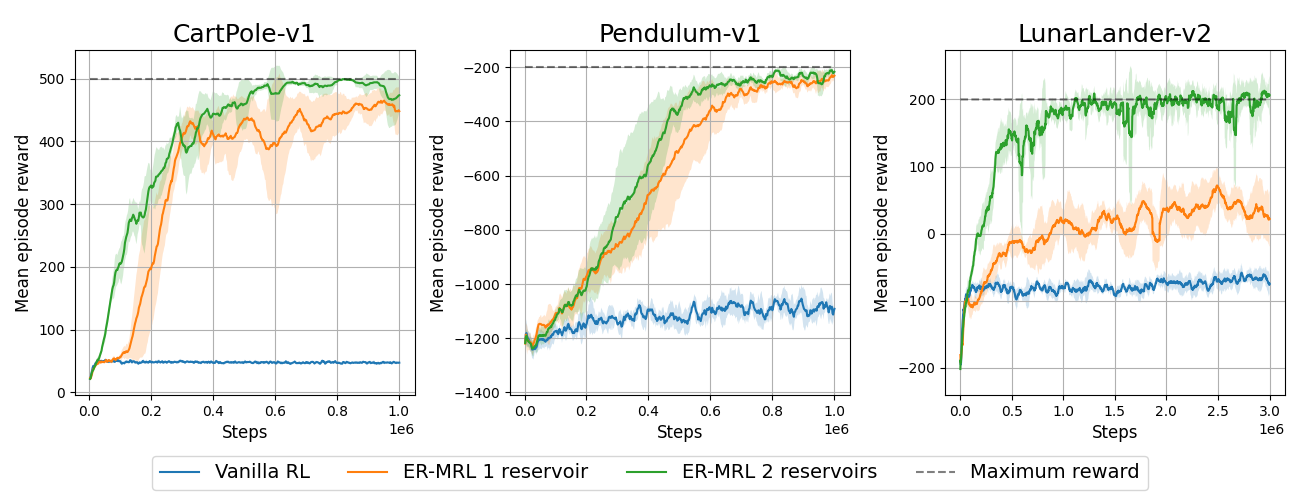

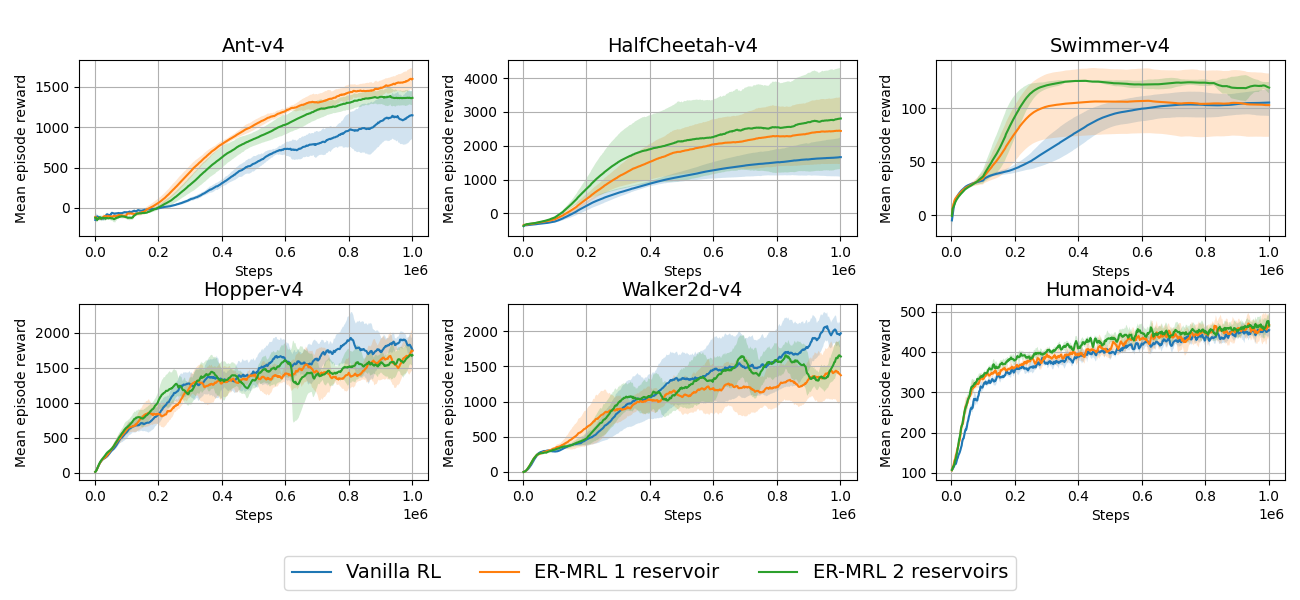

Oscillatory Dynamics in Locomotion: In MuJoCo locomotion tasks such as Ant and HalfCheetah, ER-MRL agents learn better with the help of reservoir-generated oscillatory patterns, akin to biological central pattern generators (CPGs). This rhythm assists in the coordination of complex motor tasks, leading to higher episodic rewards than standard RL agents.

Figure 4: Learning curves demonstrating improved performance in locomotion tasks with ER-MRL agents.

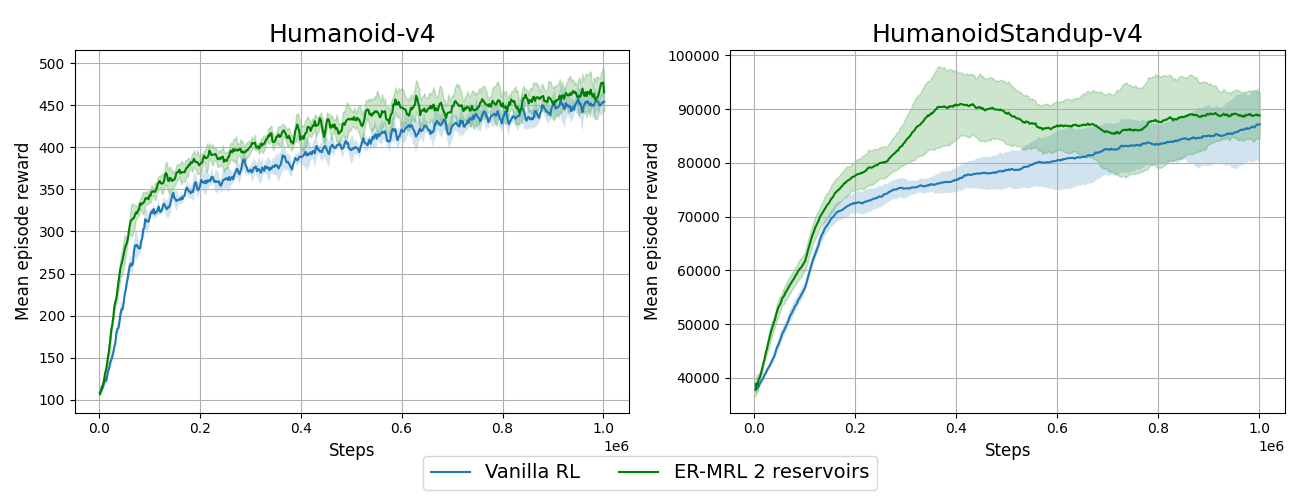

Generalization of Learned Behaviors: Evolved reservoirs significantly improve the generalization to new tasks, particularly when agents are trained on environments with similar morphologies and objectives. This capability is demonstrated in environments like Humanoid and Swimmer, underscoring the adaptability of the reservoir approach.

Figure 5: Generalization of learned behaviors across varied locomotion tasks with similar morphologies using ER-MRL.

Implications and Future Directions

This study highlights the potential of integrating evolutionary and reservoir computing methods within reinforcement learning frameworks to address complex adaptive challenges. The promise of ER-MRL lies in its ability to meta-optimize neural structures, offering insights into how neural and morphological traits can evolve to enhance behavioral learning. Future research could integrate more sophisticated Meta-RL algorithms, study the dynamic interactions between RL and RC, and extend the evaluation to more diverse and complex environments. Measuring and reducing the computational cost of ER-MRL also matters for practical use in AI systems.

Conclusion

"Evolving Reservoirs for Meta Reinforcement Learning" presents an approach that combines evolutionary algorithms, reservoir computing, and reinforcement learning to improve the learning capabilities of agents. This integration points toward computational models that mimic the processes of evolution and development observed in biological systems. Such interdisciplinary work holds promise for advancing AI and for deepening understanding of the mechanisms underpinning lifelong learning and adaptation.