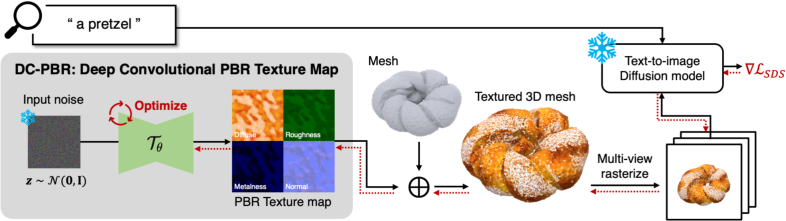

Paint-it: Text-to-Texture Synthesis via Deep Convolutional Texture Map Optimization and Physically-Based Rendering

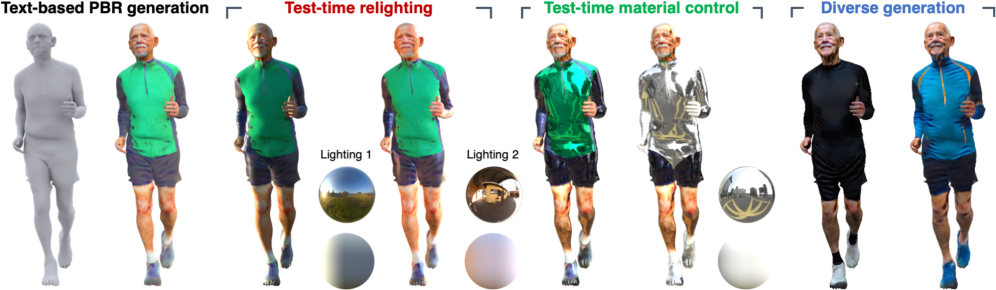

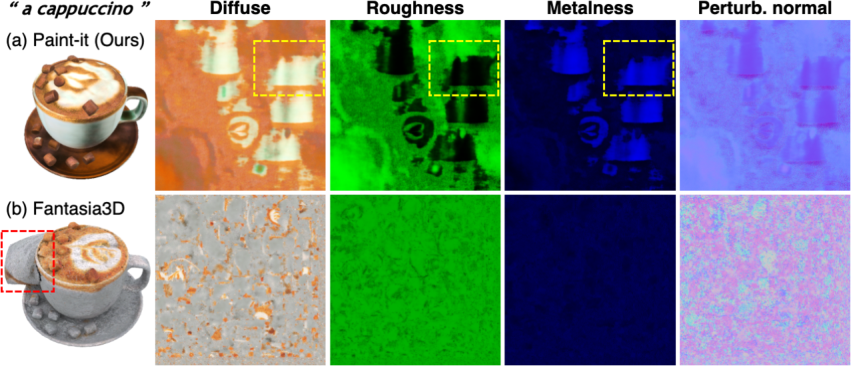

Abstract: We present Paint-it, a text-driven, high-fidelity texture map synthesis method for 3D meshes based on neural re-parameterized texture optimization. Paint-it synthesizes texture maps from a text description by synthesis-through-optimization, exploiting Score Distillation Sampling (SDS). We observe that directly applying SDS yields poor texture quality because of its noisy gradients, which reveals the importance of texture parameterization when using SDS. Specifically, we propose the Deep Convolutional Physically-Based Rendering (DC-PBR) parameterization, which re-parameterizes the physically-based rendering (PBR) texture maps with randomly initialized convolution-based neural kernels instead of a standard pixel-based parameterization. We show that DC-PBR inherently schedules the optimization curriculum according to texture frequency and naturally filters out the noisy signals from SDS. In experiments, Paint-it produces high-quality PBR texture maps within 15 minutes, given only a text description. We demonstrate the generalizability and practicality of Paint-it by synthesizing high-quality texture maps for large-scale mesh datasets and by showing test-time applications such as relighting and material control in a popular graphics engine. Project page: https://kim-youwang.github.io/paint-it
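The difference between a pixel-based and a convolution-based texture parameterization can be illustrated with a toy sketch. This is not the paper's implementation: it assumes a single 3x3 kernel in place of a deep convolutional network, a single-channel 8x8 map in place of PBR texture maps, and random noise in place of an actual SDS gradient. All names (`conv3x3`, `kernel_grad`, `latent`, `noisy_grad`) are hypothetical.

```python
import random


def conv3x3(image, kernel):
    """Apply a 3x3 convolution (zero padding) to a 2D list of floats."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        out[y][x] += kernel[dy + 1][dx + 1] * image[yy][xx]
    return out


def kernel_grad(latent, texel_grad):
    """Chain rule: pool a per-texel gradient into the 9 shared kernel weights."""
    h, w = len(latent), len(latent[0])
    grad = [[0.0] * 3 for _ in range(3)]
    for y in range(h):
        for x in range(w):
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        grad[dy + 1][dx + 1] += texel_grad[y][x] * latent[yy][xx]
    return grad


random.seed(0)
H = W = 8
lr = 0.1

# Fixed random input to the convolution (assumption); it is never optimized.
latent = [[random.gauss(0.0, 1.0) for _ in range(W)] for _ in range(H)]
# Randomly initialized kernel: the only trainable parameters in this variant.
kernel = [[random.gauss(0.0, 0.1) for _ in range(3)] for _ in range(3)]
# Stand-in for a noisy per-texel gradient (pure noise here, not real SDS).
noisy_grad = [[random.gauss(0.0, 1.0) for _ in range(W)] for _ in range(H)]

# Pixel-based parameterization: every texel is a free parameter and takes
# its own independent noisy step.
pixel_texture = [[0.5 - lr * noisy_grad[y][x] for x in range(W)] for y in range(H)]

# Convolutional parameterization: the same gradient is pooled into 9 shared
# weights, and the texture is re-generated from the updated kernel.
g = kernel_grad(latent, noisy_grad)
kernel = [[kernel[i][j] - lr * g[i][j] for j in range(3)] for i in range(3)]
conv_texture = conv3x3(latent, kernel)

n_pixel_params = H * W  # one parameter per texel
n_conv_params = 9       # one parameter per kernel weight
```

In the pixel-based variant each texel follows its own gradient sample, so noise passes straight into the texture. In the convolutional variant every update is a sum over all texels that share a weight, which is one intuition for why such a parameterization might damp per-texel noise; the sketch itself does not measure that effect.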

