Single-Microphone Speaker Separation and Voice Activity Detection in Noisy and Reverberant Environments

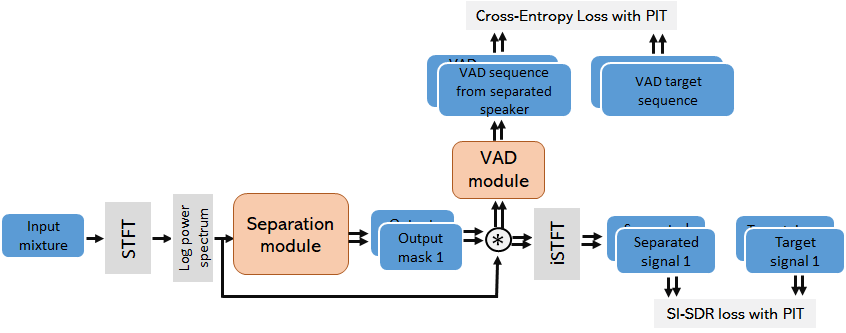

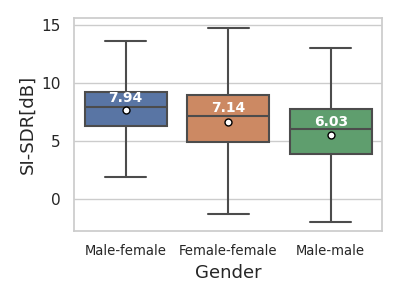

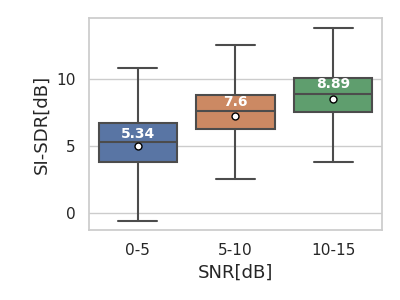

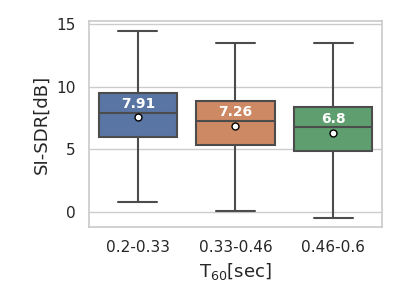

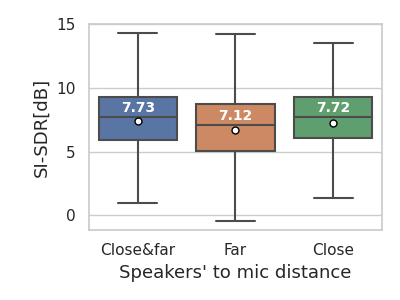

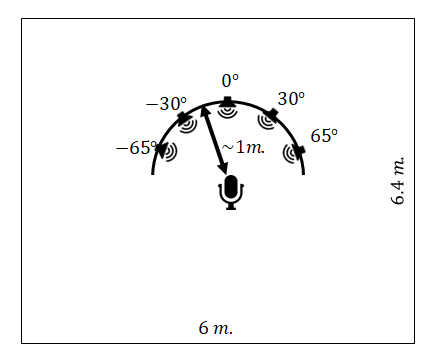

Abstract: Speech separation involves extracting an individual speaker's voice from a multi-speaker audio signal. The increasing complexity of real-world environments, where multiple speakers might converse simultaneously, underscores the importance of effective speech separation techniques. This work presents a single-microphone speaker separation network with TF attention aiming at noisy and reverberant environments. We dub this new architecture as Separation TF Attention Network (Sep-TFAnet). In addition, we present a variant of the separation network, dubbed $ \text{Sep-TFAnet}{\text{VAD}}$, which incorporates a voice activity detector (VAD) into the separation network. The separation module is based on a temporal convolutional network (TCN) backbone inspired by the Conv-Tasnet architecture with multiple modifications. Rather than a learned encoder and decoder, we use short-time Fourier transform (STFT) and inverse short-time Fourier transform (iSTFT) for the analysis and synthesis, respectively. Our system is specially developed for human-robotic interactions and should support online mode. The separation capabilities of $ \text{Sep-TFAnet}{\text{VAD}}$ and Sep-TFAnet were evaluated and extensively analyzed under several acoustic conditions, demonstrating their advantages over competing methods. Since separation networks trained on simulated data tend to perform poorly on real recordings, we also demonstrate the ability of the proposed scheme to better generalize to realistic examples recorded in our acoustic lab by a humanoid robot. Project page: https://Sep-TFAnet.github.io

- J. R. Hershey, Z. Chen, J. Le Roux, and S. Watanabe, “Deep clustering: Discriminative embeddings for segmentation and separation,” in IEEE international conference on acoustics, speech and signal processing (ICASSP), pp. 31–35, 2016.

- D. Yu, M. Kolbæk, Z.-H. Tan, and J. Jensen, “Permutation invariant training of deep models for speaker-independent multi-talker speech separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 241–245, 2017.

- Y. Luo and N. Mesgarani, “Conv-tasnet: Surpassing ideal time–frequency magnitude masking for speech separation,” IEEE/ACM transactions on audio, speech, and language processing, vol. 27, no. 8, pp. 1256–1266, 2019.

- Y. Luo, Z. Chen, and T. Yoshioka, “Dual-path RNN: efficient long sequence modeling for time-domain single-channel speech separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 46–50, 2020.

- N. Zeghidour and D. Grangier, “Wavesplit: End-to-end speech separation by speaker clustering,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 29, pp. 2840–2849, 2021.

- S. Zhao and B. Ma, “Mossformer: Pushing the performance limit of monaural speech separation using gated single-head transformer with convolution-augmented joint self-attentions,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023.

- E. Nachmani, Y. Adi, and L. Wolf, “Voice separation with an unknown number of multiple speakers,” in International Conference on Machine Learning (ICML), pp. 7164–7175, 2020.

- S. Lutati, E. Nachmani, and L. Wolf, “SepIt approaching a single channel speech separation bound,” arXiv preprint arXiv:2205.11801, 2022.

- J. Le Roux, S. Wisdom, H. Erdogan, and J. R. Hershey, “SDR–half-baked or well done?,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 626–630, 2019.

- C. Subakan, M. Ravanelli, S. Cornell, M. Bronzi, and J. Zhong, “Attention is all you need in speech separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 21–25, 2021.

- C. Subakan, M. Ravanelli, S. Cornell, F. Grondin, and M. Bronzi, “On using transformers for speech-separation,” arXiv preprint arXiv:2202.02884, 2022.

- E. Tzinis, Z. Wang, X. Jiang, and P. Smaragdis, “Compute and memory efficient universal sound source separation,” Journal of Signal Processing Systems, vol. 94, no. 2, pp. 245–259, 2022.

- G. Wichern, J. Antognini, M. Flynn, L. R. Zhu, E. McQuinn, D. Crow, E. Manilow, and J. L. Roux, “WHAM!: Extending Speech Separation to Noisy Environments,” in Proc. Interspeech, pp. 1368–1372, 2019.

- T. Cord-Landwehr, C. Boeddeker, T. Von Neumann, C. Zorilă, R. Doddipatla, and R. Haeb-Umbach, “Monaural source separation: From anechoic to reverberant environments,” in International Workshop on Acoustic Signal Enhancement (IWAENC), 2022.

- J. Heitkaemper, D. Jakobeit, C. Boeddeker, L. Drude, and R. Haeb-Umbach, “Demystifying TasNet: A dissecting approach,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 6359–6363, 2020.

- D. Wang, T. Yoshioka, Z. Chen, X. Wang, T. Zhou, and Z. Meng, “Continuous speech separation with ad hoc microphone arrays,” in 2021 29th European Signal Processing Conference (EUSIPCO), pp. 1100–1104, IEEE, 2021.

- Q. Lin, L. Yang, X. Wang, L. Xie, C. Jia, and J. Wang, “Sparsely overlapped speech training in the time domain: Joint learning of target speech separation and personal vad benefits,” in 2021 Asia-Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC), pp. 689–693, IEEE, 2021.

- M. Maciejewski, G. Wichern, E. McQuinn, and J. Le Roux, “WHAMR!: Noisy and reverberant single-channel speech separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 696–700, 2020.

- C. Lea, R. Vidal, A. Reiter, and G. D. Hager, “Temporal convolutional networks: A unified approach to action segmentation,” in European Conference on Computer Vision (ECCV), (Amsterdam, The Netherlands), pp. 47–54, Springer, Oct. 2016.

- Z.-Q. Wang, G. Wichern, S. Watanabe, and J. Le Roux, “STFT-domain neural speech enhancement with very low algorithmic latency,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 31, pp. 397–410, 2022.

- W. Ravenscroft, S. Goetze, and T. Hain, “Deformable temporal convolutional networks for monaural noisy reverberant speech separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023.

- Q. Zhang, X. Qian, Z. Ni, A. Nicolson, E. Ambikairajah, and H. Li, “A time-frequency attention module for neural speech enhancement,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 31, pp. 462–475, 2023.

- F. Liu, X. Ren, Z. Zhang, X. Sun, and Y. Zou, “Rethinking skip connection with layer normalization,” in Proceedings of the 28th international conference on computational linguistics, pp. 3586–3598, 2020.

- M. Kolbæk, D. Yu, Z.-H. Tan, and J. Jensen, “Multitalker speech separation with utterance-level permutation invariant training of deep recurrent neural networks,” IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 25, no. 10, pp. 1901–1913, 2017.

- Y. Yemini, E. Fetaya, H. Maron, and S. Gannot, “Scene-agnostic multi-microphone speech dereverberation,” in Proc. of Interspeech, (Brno, The Czech Republic), 2021.

- M. Delcroix, T. Yoshioka, A. Ogawa, Y. Kubo, M. Fujimoto, N. Ito, K. Kinoshita, M. Espi, T. Hori, T. Nakatani, et al., “Linear prediction-based dereverberation with advanced speech enhancement and recognition technologies for the reverb challenge,” in Reverb workshop, 2014.

- V. Panayotov, G. Chen, D. Povey, and S. Khudanpur, “Librispeech: an asr corpus based on public domain audio books,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pp. 5206–5210, 2015.

- J. B. Allen and D. A. Berkley, “Image method for efficiently simulating small-room acoustics,” The Journal of the Acoustical Society of America, vol. 65, no. 4, pp. 943–950, 1979.

- E. A. Habets, “Room impulse response generator,” tech. rep., Friedrich-Alexander-Universität Erlangen-Nürnberg, 2014.

- E. Tzinis, Z. Wang, and P. Smaragdis, “Sudo rm-rf: Efficient networks for universal audio source separation,” in IEEE International Workshop on Machine Learning for Signal Processing (MLSP), 2020.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.