- The paper introduces Keyframer, a tool that uses large language models to generate and edit animation code from natural language prompts.

- It employs both decomposed and holistic prompting strategies to facilitate iterative design and precise refinement for users at all skill levels.

- A user study with 13 participants found an average response time of 17.4 seconds and minor syntax errors that did not impede the overall creative workflow.

Keyframer: Empowering Animation Design using LLMs

This essay discusses the paper "Keyframer: Empowering Animation Design using LLMs" (arXiv:2402.06071), which introduces an AI tool designed to simplify animation design by using large language models (LLMs). Keyframer lets designers generate and refine animations through natural language prompts combined with direct code editing, bridging the gap between artistic vision and technical execution.

Introduction to Keyframer

Keyframer is positioned at the intersection of AI-driven code generation and animation design, aimed at reducing the complexity associated with creating 2D animations. It employs LLMs to generate animation code from user-provided natural language prompts, enabling iterative design processes that accommodate both novices and experts in animation.

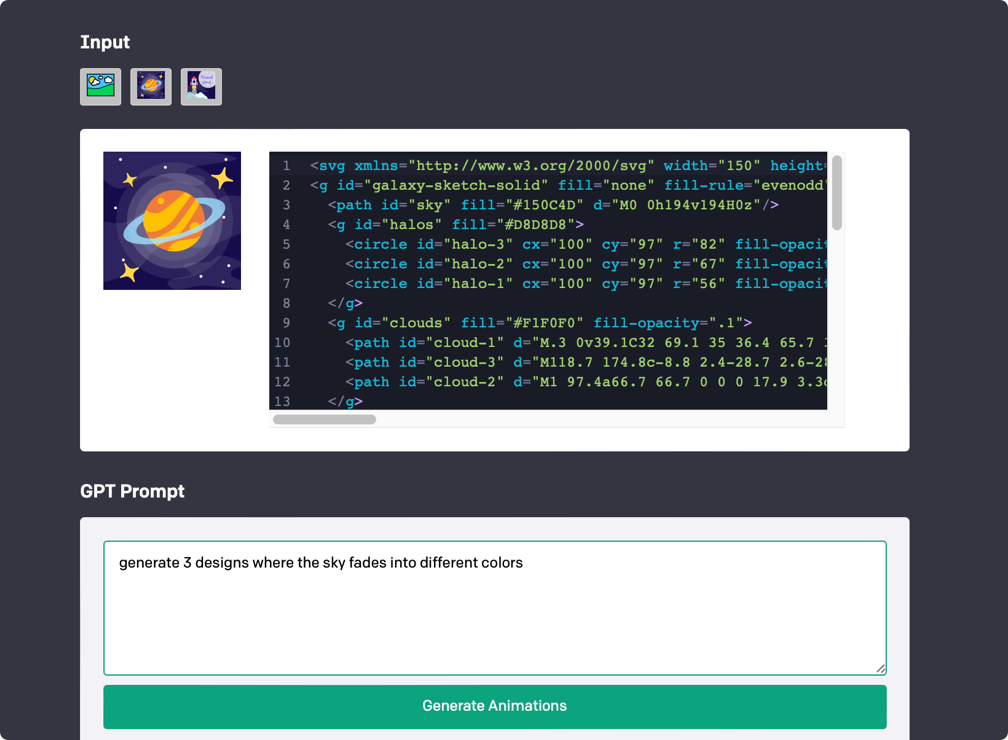

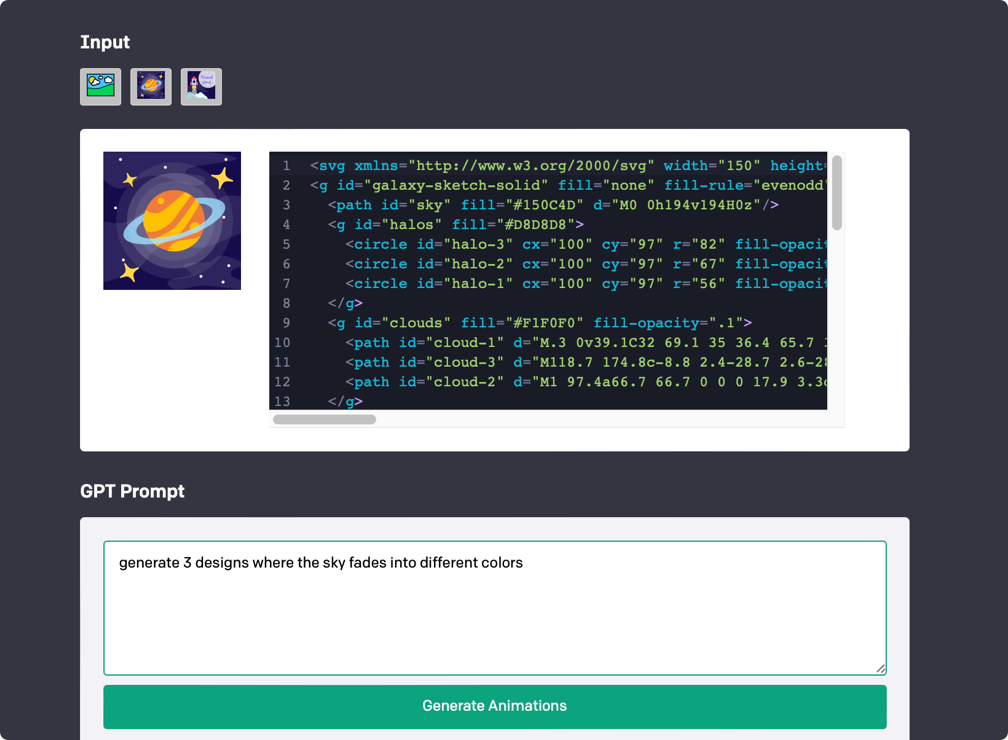

Figure 1: Image input field for adding SVG code and previewing image; GPT Prompt section for entering a natural language prompt.

The tool supports interactive and exploratory design by allowing users to input an SVG image and specify animation requests in plain language. This approach lowers the steep learning curve associated with traditional animation coding, making the tool accessible to users with varying levels of expertise.
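To make the input flow concrete, here is a minimal sketch of how SVG markup and a plain-language request might be combined into a single LLM prompt. The function name `build_animation_prompt` and the instruction wording are assumptions for illustration; the paper's actual prompt is not reproduced here.

```python
from textwrap import dedent


def build_animation_prompt(svg_code: str, request: str) -> str:
    """Combine SVG markup and a plain-language request into one LLM prompt.

    The instruction wording is hypothetical, not taken from the paper.
    """
    instructions = dedent(
        """\
        You are given an SVG image. Write CSS that animates it as requested.
        Refer to elements only by the ids that appear in the SVG.
        Return the CSS alone, with no explanation."""
    )
    return f"{instructions}\n\nSVG:\n{svg_code.strip()}\n\nRequest: {request.strip()}"


svg = '<svg viewBox="0 0 100 100"><circle id="sun" cx="50" cy="50" r="20"/></svg>'
prompt = build_animation_prompt(svg, "Make the sun pulse slowly.")
```

Including the raw SVG in the prompt gives the model the element ids it needs to target, which is one plausible reason a tool of this kind would ask for SVG rather than a raster image.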

Semantic Prompting and Iterative Editing

Keyframer distinguishes itself by supporting two main user prompting strategies: decomposed and holistic prompting.

- Decomposed Prompting: This strategy allows users to animate elements one by one, focusing on individual components before considering the whole scene. This approach suits iterative refinement and supports designers in progressively developing their animation concepts.

- Holistic Prompting: In contrast, this strategy involves specifying the animation behavior for multiple elements in a single prompt, enabling quick prototyping of complex scenes but requiring comprehensive initial planning.
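The difference between the two strategies can be sketched as a difference in how many requests are made and what each one carries. In the sketch below, `fake_generator` is a hypothetical stand-in for an LLM call so the example runs offline; it does not reflect how Keyframer itself talks to a model.

```python
from typing import Callable, List

# A generator takes (current_css, prompt) and returns new CSS. In a real tool
# this would call an LLM; here it is a stand-in so the sketch runs offline.
Generator = Callable[[str, str], str]


def fake_generator(current_css: str, prompt: str) -> str:
    """Hypothetical stand-in for an LLM call: appends one comment per prompt."""
    return current_css + f"/* css for: {prompt} */\n"


def decomposed(prompts: List[str], generate: Generator) -> str:
    """Issue one prompt per element, feeding each result into the next request."""
    css = ""
    for prompt in prompts:
        css = generate(css, prompt)
    return css


def holistic(prompts: List[str], generate: Generator) -> str:
    """Describe every element in a single request."""
    return generate("", " ".join(prompts))


steps = ["Make the sun pulse.", "Drift the clouds left to right."]
decomposed_css = decomposed(steps, fake_generator)
holistic_css = holistic(steps, fake_generator)
```

With decomposed prompting the user can inspect and correct the output after every step, at the cost of more round trips; holistic prompting makes one round trip but leaves no checkpoint in between.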

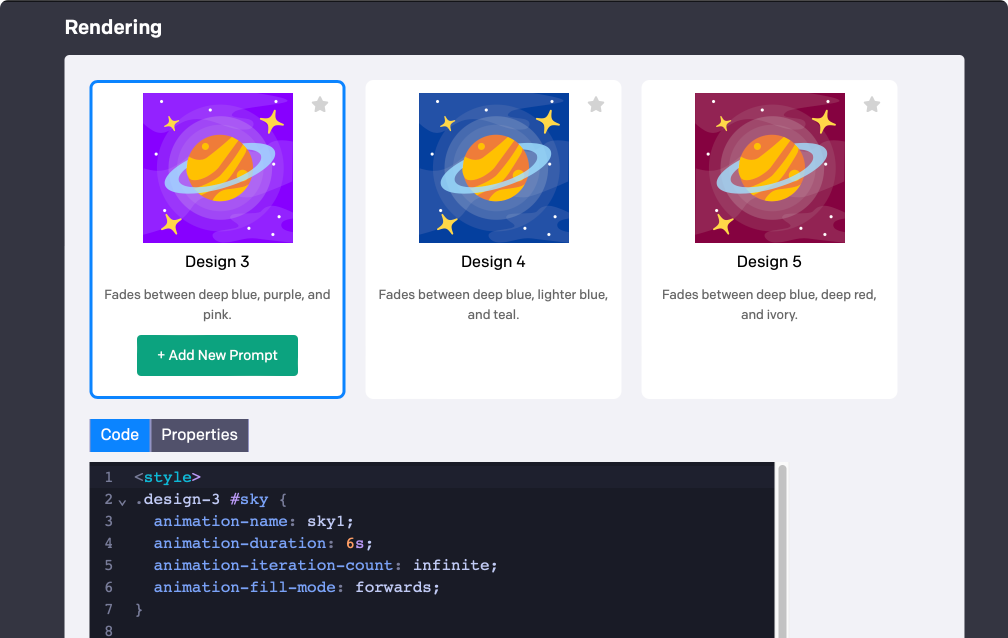

These strategies are complemented by Keyframer’s dual editing capabilities: the Properties Editor and the Code Editor. These editors enable fine-grained control over animation parameters, facilitating precise adjustments and encouraging experimentation.

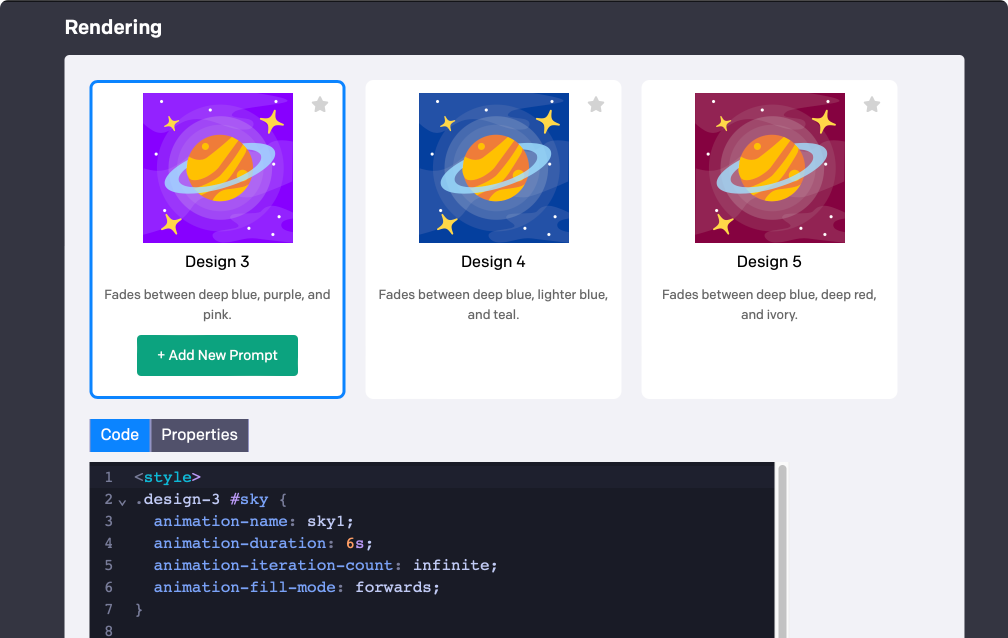

Figure 2: Rendering section for viewing generated designs side by side and editing output code in the Code or Properties editors.
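One way to picture a properties-style editor is as a round trip: parse the generated CSS declarations into named fields, let the user change a value, and write the rule back out. The sketch below assumes simple declarations with no semicolons inside values; the helper names are hypothetical and this is not Keyframer's implementation.

```python
import re
from typing import Dict

# Matches declarations such as "animation-duration: 2s;" inside one rule body.
DECLARATION = re.compile(r"([\w-]+)\s*:\s*([^;]+);")


def parse_declarations(rule_body: str) -> Dict[str, str]:
    """Turn a CSS rule body into an ordered property -> value mapping."""
    return {name: value.strip() for name, value in DECLARATION.findall(rule_body)}


def render_rule(selector: str, declarations: Dict[str, str]) -> str:
    """Write the mapping back out as a CSS rule."""
    lines = [f"  {name}: {value};" for name, value in declarations.items()]
    return f"{selector} {{\n" + "\n".join(lines) + "\n}"


body = "animation-name: pulse; animation-duration: 2s; animation-timing-function: ease-in;"
props = parse_declarations(body)
props["animation-duration"] = "4s"  # the kind of change a form field would make
css = render_rule("#sun", props)
```

Because the edited values are written straight back into CSS, a form-based editor and a code editor can stay in sync over the same underlying text.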

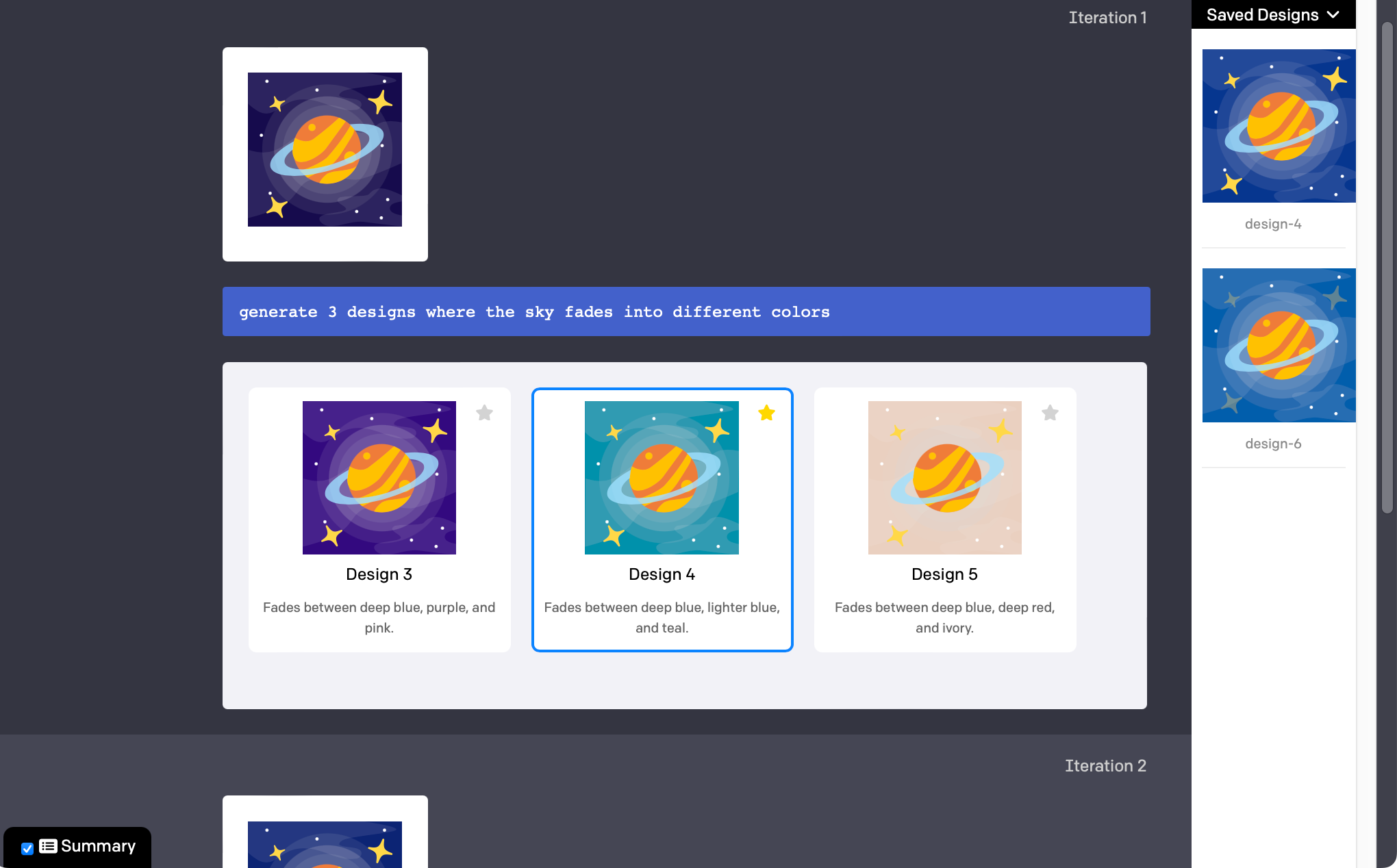

A user study involving 13 participants evaluated Keyframer's effectiveness in supporting animation design. The study revealed that the majority of users effectively employed decomposed prompting, iterating on their designs through sequential prompts and improvements based on generated outputs. Notably, participants appreciated the flexibility to start with a broad concept and refine it by directly modifying the generated code.

Keyframer generated CSS animations with an average response time of 17.4 seconds. Some participants encountered syntax errors in the generated code, but these were minor and did not significantly hinder the overall design process.
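As an illustration of how a tool might surface minor syntax slips and measure response time, the sketch below checks generated CSS for unbalanced braces and times a generation call. Both helpers are assumptions for illustration; the paper does not describe such a check, and the brace counter ignores braces inside strings or comments.

```python
import time
from typing import Callable, List, Tuple


def find_css_problems(css: str) -> List[str]:
    """Return human-readable notes on obvious structural slips in a CSS string."""
    problems = []
    depth = 0
    for line_no, line in enumerate(css.splitlines(), start=1):
        for char in line:
            if char == "{":
                depth += 1
            elif char == "}":
                depth -= 1
                if depth < 0:
                    problems.append(f"line {line_no}: unexpected '}}'")
                    depth = 0
    if depth > 0:
        problems.append(f"{depth} unclosed '{{'")
    return problems


def timed(generate: Callable[[], str]) -> Tuple[str, float]:
    """Run a generation call and report how long it took, in seconds."""
    start = time.perf_counter()
    result = generate()
    return result, time.perf_counter() - start


broken = "@keyframes pulse {\n  from { opacity: 1; }\n  to { opacity: 0.5; }\n"
css, seconds = timed(lambda: broken)  # the lambda stands in for an LLM request
issues = find_css_problems(css)
```

A lightweight check like this would let a user spot a truncated rule before opening the code editor, rather than discovering it from an animation that silently fails to play.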

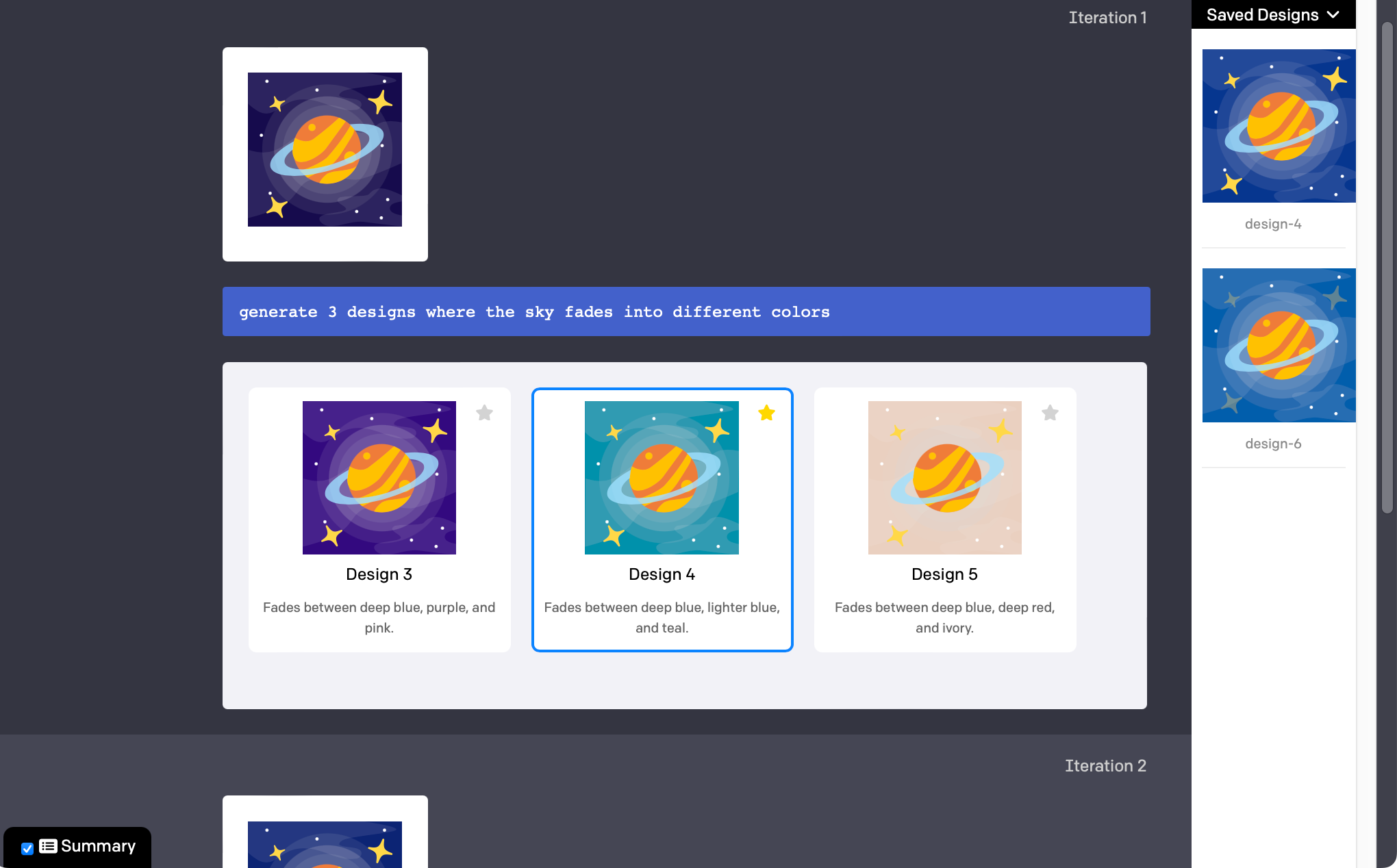

Figure 3: A summary view that hides all text editors and displays the user prompt and generated designs at each iteration.

Implications and Future Work

Keyframer illustrates the potential of integrating LLMs into creative workflows, particularly in helping users explore and refine animation designs with little coding effort. The tool's support for decomposed prompting and direct editing of generated output offers a compelling prototype for future AI-driven creative tools, suggesting a pathway towards more intelligent and intuitive design software.

However, there remain opportunities for enhancement. Future iterations of tools like Keyframer could explore the integration of interactive previews, support for a broader range of graphic formats, and expanded capabilities for editing the underlying illustrations. Additionally, applying multi-modal LLMs may help ease token limitations and improve system responsiveness.

Conclusion

By leveraging the generative capabilities of LLMs, Keyframer streamlines the animation design process, making it accessible to a broader audience while maintaining the depth required for professional work. Its innovative approach to prompting and code editing represents a significant step forward in the democratization of digital design tools, bridging the gap between natural language and creative expression.