- The paper introduces a Mixed Gaussian Flow (MGF) model that transforms a mixed Gaussian prior into trajectory predictions to capture diverse motion patterns.

- It combines continuously-indexed flows (CIFs) with a tailored training process that improves the alignment between predictions and ground truth and enables controllable trajectory generation.

- Experimental results on ETH/UCY and SDD datasets demonstrate state-of-the-art performance with superior diversity and controllability compared to existing models.

MGF: Mixed Gaussian Flow for Diverse Trajectory Prediction

This paper introduces a novel approach to trajectory prediction using normalizing flows, specifically addressing the limitations of existing methods in capturing diverse and controllable trajectory patterns. The core innovation is the Mixed Gaussian Flow (MGF) model, which transforms a mixed Gaussian prior, constructed from training data statistics, into the future trajectory manifold, enhancing both diversity and controllability.

Addressing Diversity Limitations in Trajectory Prediction

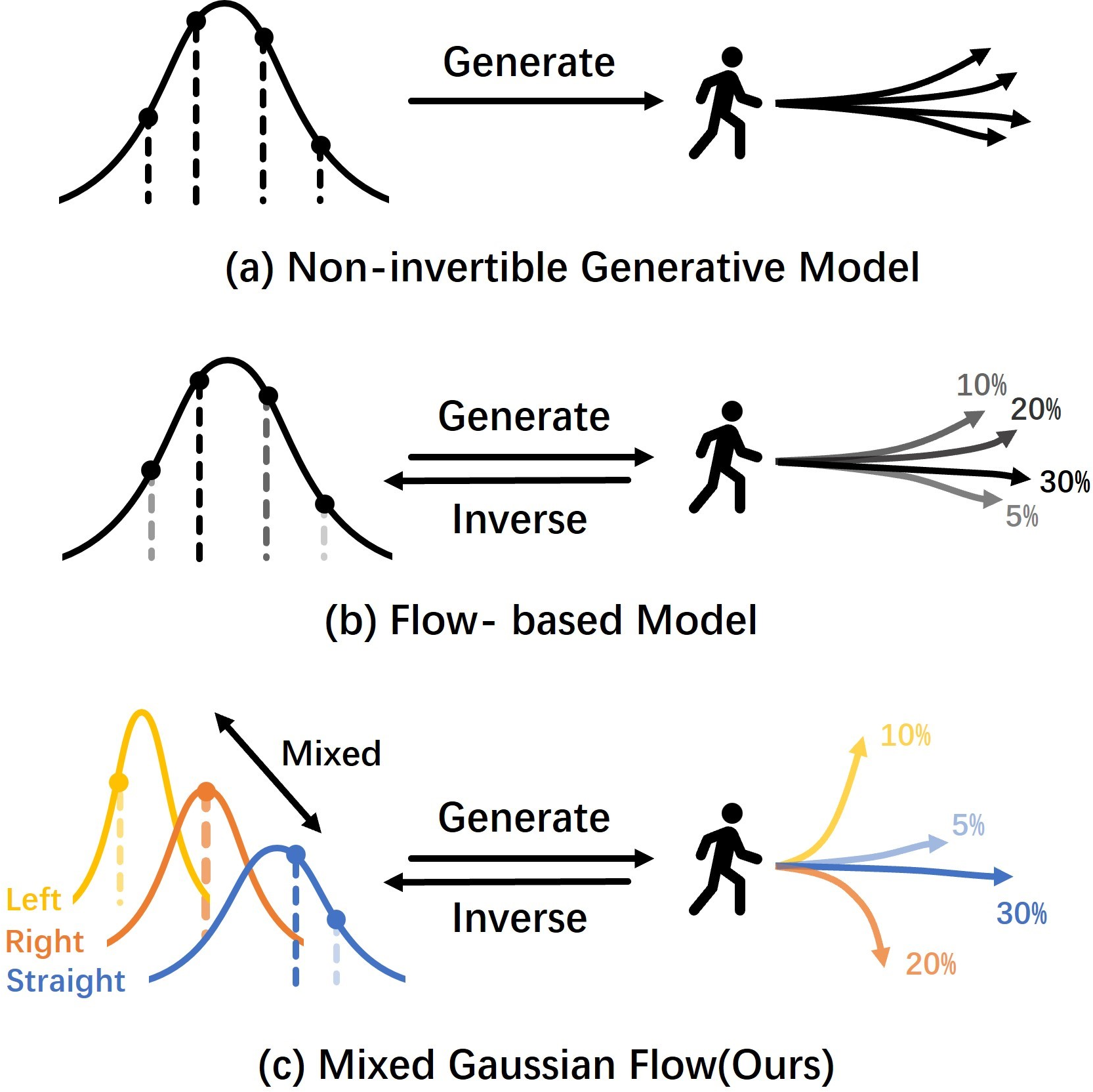

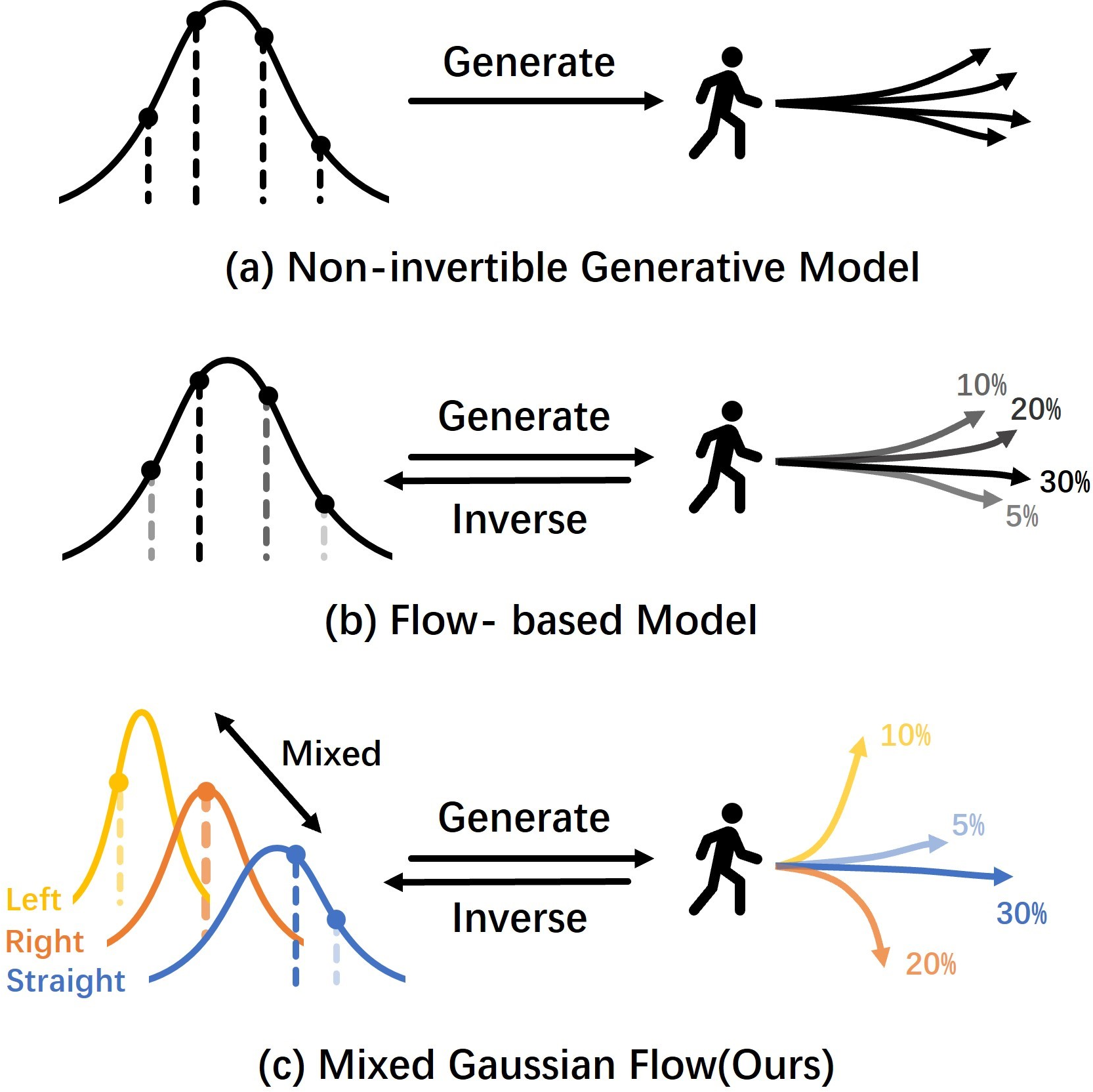

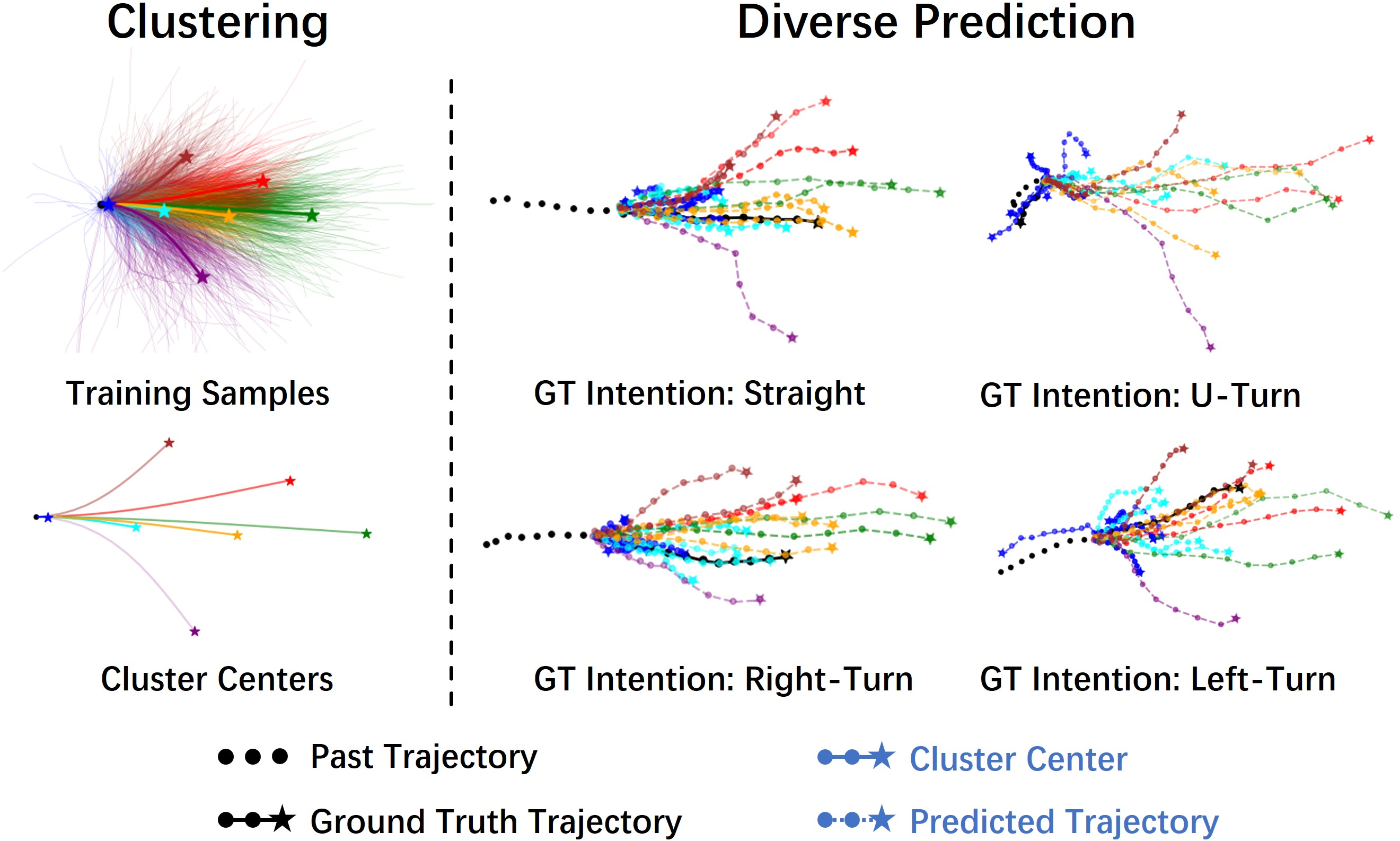

The paper identifies several factors that limit the diversity of existing trajectory prediction methods, including imbalanced datasets, transformations from simple distributions such as the standard Gaussian, and evaluation metrics that favor the most likely outcome. To address these issues, MGF uses a mixed Gaussian prior that better represents under-represented motion patterns and makes it easier to learn a transformation onto a broader final distribution (Figure 1). This contrasts with methods that rely on non-invertible generative models or a standard Gaussian prior, which may lack diversity and controllability.

Figure 1: A comparison of generative model architectures, highlighting MGF's mixed Gaussian prior for enhanced diversity and controllability.
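For reference, the two standard formulas behind this idea are written out below: a K-component Gaussian mixture as the prior over the latent z, and the change-of-variables rule that gives the density of a trajectory x produced by an invertible map f. The diagonal covariance and the symbols (π_k, μ_k, σ_k, f) are our own notation and an assumption, not necessarily the paper's; the formula also describes an ordinary flow and leaves out the continuous index that CIFs add.

$$
p_Z(\mathbf{z}) = \sum_{k=1}^{K} \pi_k \, \mathcal{N}\!\left(\mathbf{z};\, \boldsymbol{\mu}_k,\, \operatorname{diag}(\boldsymbol{\sigma}_k^{2})\right), \qquad \sum_{k=1}^{K} \pi_k = 1
$$

$$
\log p_X(\mathbf{x}) = \log p_Z\!\left(f^{-1}(\mathbf{x})\right) + \log \left| \det \frac{\partial f^{-1}(\mathbf{x})}{\partial \mathbf{x}} \right|
$$

With K = 1, μ_1 = 0 and σ_1 = 1, this reduces to the usual standard-Gaussian flow. The mixture lets separate motion intentions occupy separate regions of the latent space from the start.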

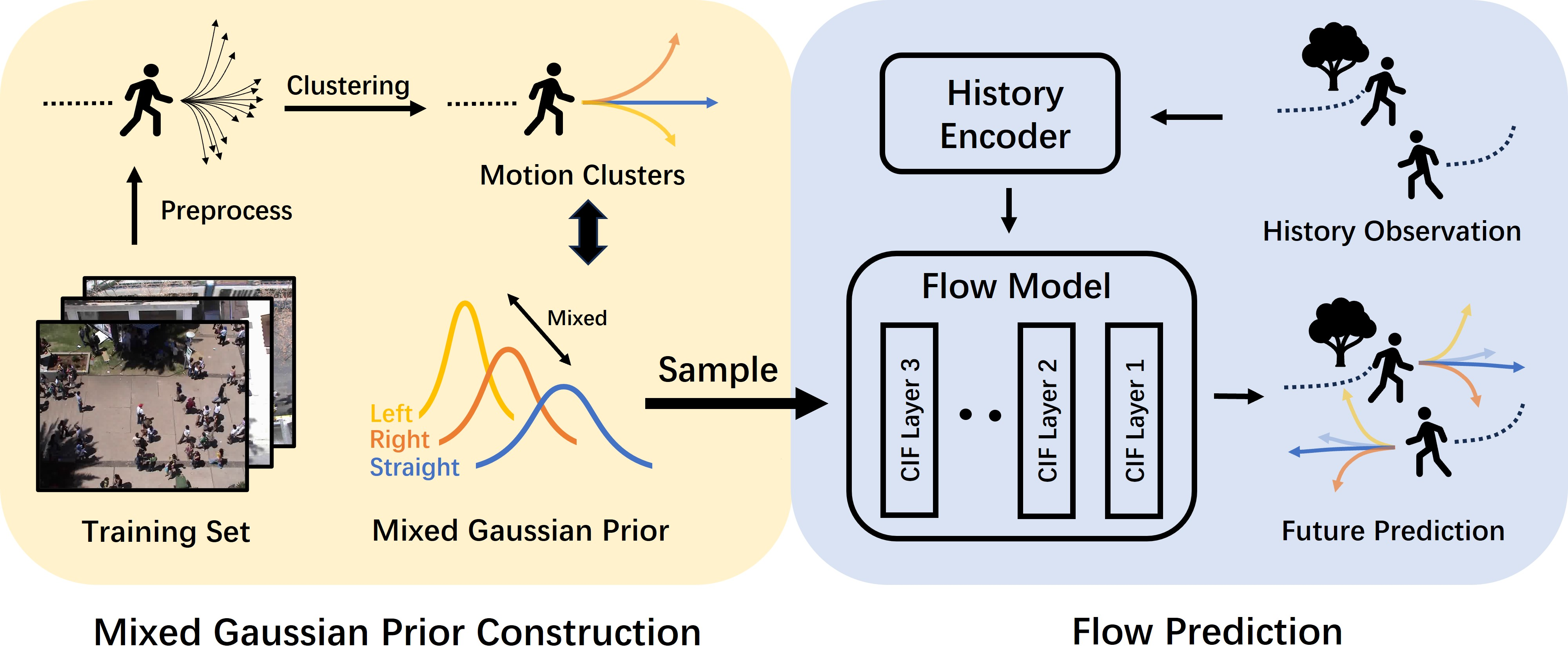

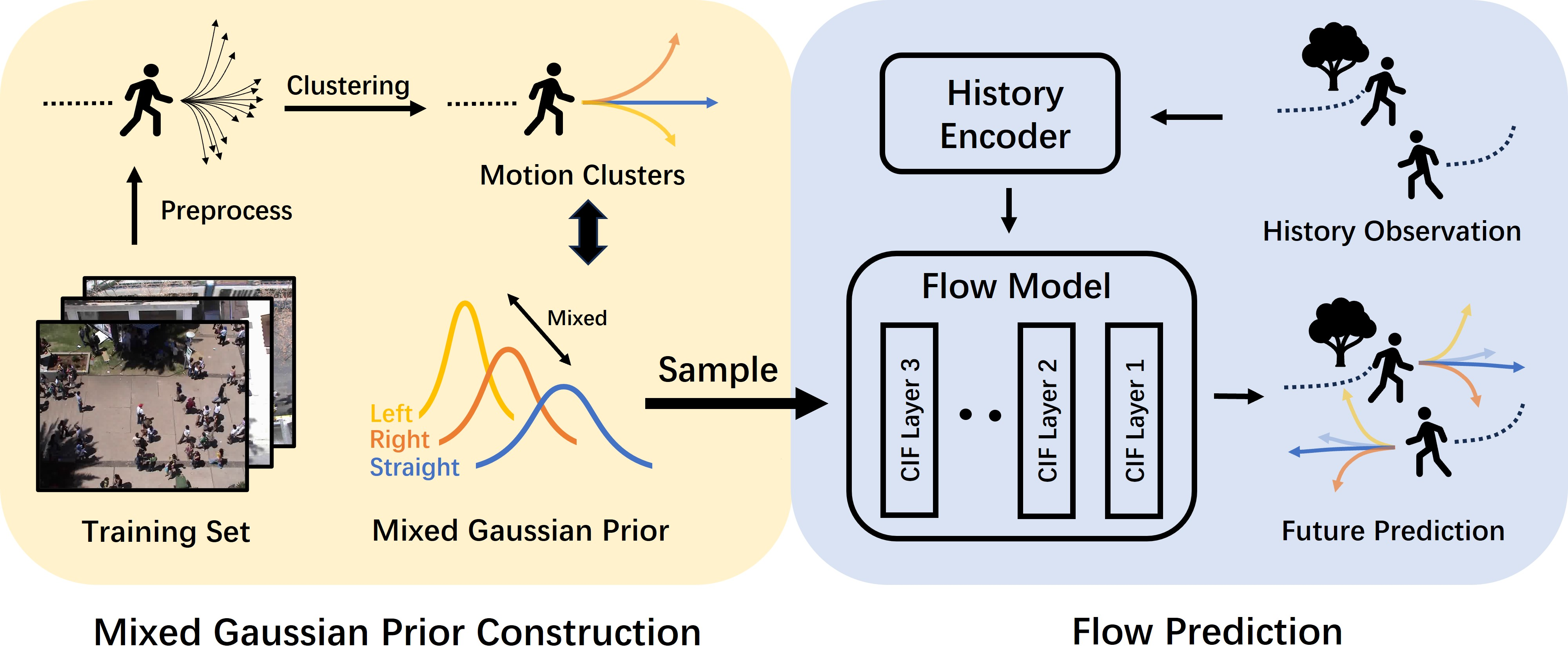

The MGF model has two stages: offline training and inference (Figure 2). During offline training, a mixed Gaussian prior is constructed by summarizing the motion patterns in the training samples. The prior is expressed in a known parametric form, which keeps its density tractable and the overall mapping invertible. At inference, initial noise points are sampled from this mixed Gaussian prior instead of a single standard Gaussian, which better captures diverse motion intentions. Continuously-indexed Flows (CIFs) further increase the flexibility of the generation process.

Figure 2: Illustration of the Mixed Gaussian Flow (MGF) model, showing the offline training stage for constructing the mixed Gaussian prior and the inference stage for trajectory prediction.
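The offline stage can be sketched as clustering training futures and turning each cluster into one Gaussian component. This is a minimal sketch under stated assumptions, not the paper's implementation: it assumes futures are flattened to (T*2)-dimensional vectors, uses plain k-means for the summarization, gives each component a diagonal standard deviation, and weights components by cluster size. All function names (`kmeans`, `build_mixed_gaussian_prior`, `sample_prior`) and the `min_std` floor are hypothetical.

```python
import numpy as np


def kmeans(x, k, iters=50, seed=0):
    """Plain Lloyd's k-means on rows of x; returns (centers, labels)."""
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)].copy()
    for _ in range(iters):
        # Squared distance from every sample to every center: shape (N, K).
        d2 = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = d2.argmin(1)
        for j in range(k):
            members = x[labels == j]
            if len(members) > 0:  # keep the old center if a cluster empties
                centers[j] = members.mean(0)
    d2 = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
    return centers, d2.argmin(1)


def build_mixed_gaussian_prior(futures, k, min_std=1e-2):
    """futures: (N, T, 2) training futures -> (weights, means, stds)."""
    x = futures.reshape(len(futures), -1)  # flatten to (N, T*2)
    means, labels = kmeans(x, k)
    # Mixture weight = fraction of training samples in each cluster.
    weights = np.bincount(labels, minlength=k) / len(x)
    stds = np.stack([
        x[labels == j].std(0) if (labels == j).any() else np.ones(x.shape[1])
        for j in range(k)
    ])
    stds = np.maximum(stds, min_std)  # avoid degenerate components
    return weights, means, stds


def sample_prior(weights, means, stds, m, seed=0):
    """Draw m latent points: pick a component by weight, then add Gaussian noise."""
    rng = np.random.default_rng(seed)
    comp = rng.choice(len(weights), size=m, p=weights)
    z = means[comp] + stds[comp] * rng.standard_normal((m, means.shape[1]))
    return z, comp
```

At inference, the `z` returned by `sample_prior` would replace the standard-Gaussian noise fed into the flow. Because each sample carries its component index, it is also easy to see which motion pattern produced which prediction.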

Implementation Details and Training Strategy

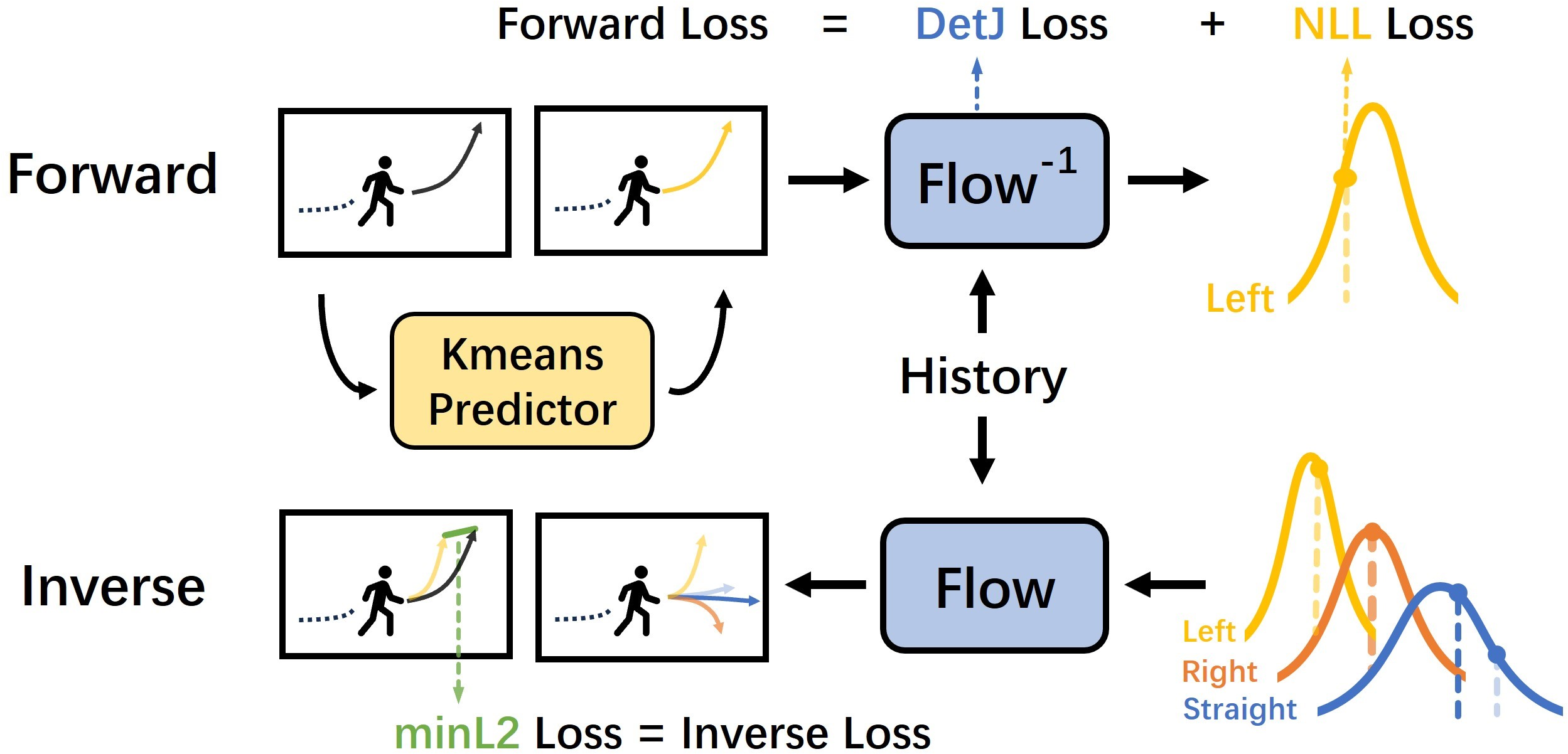

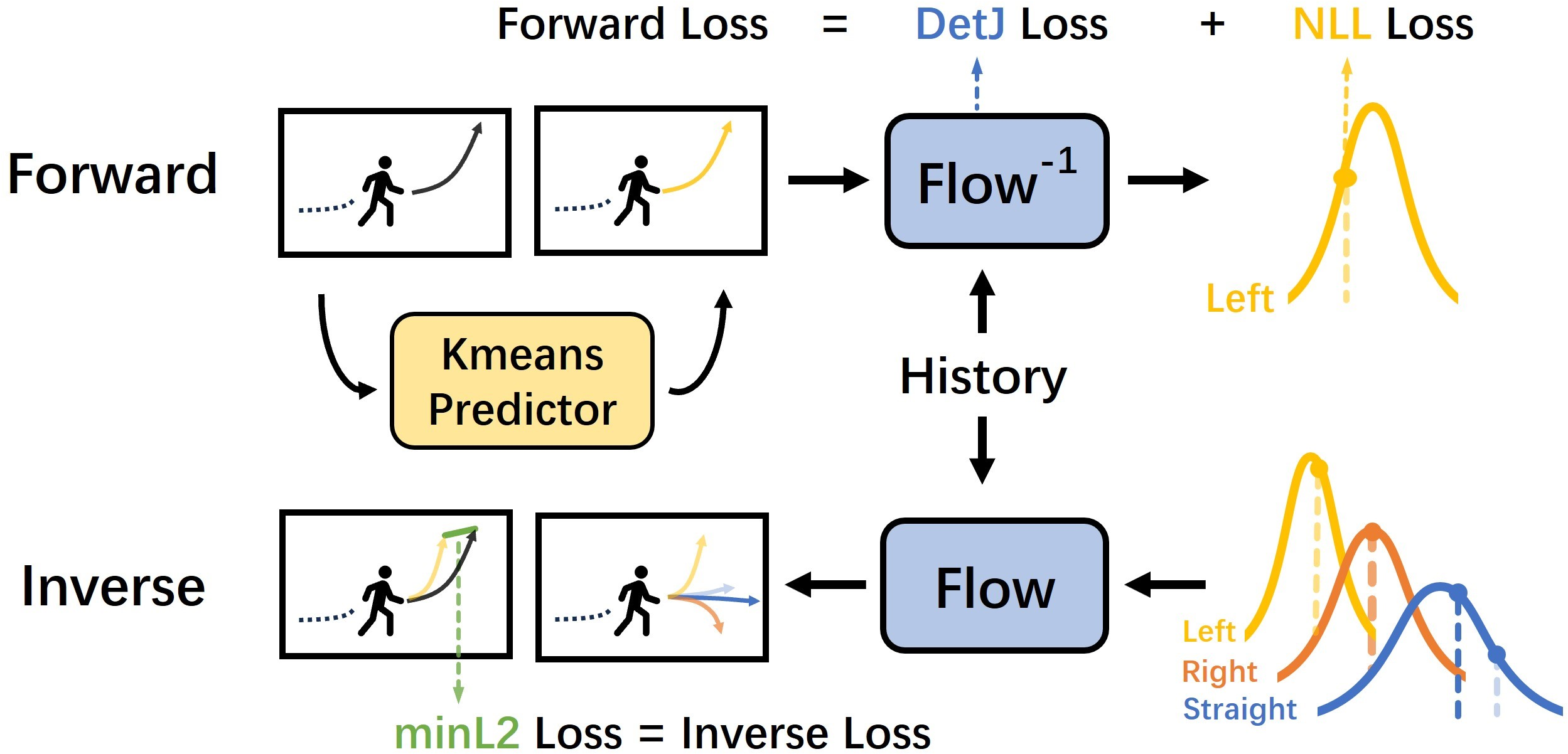

Training uses both the forward and the inverse direction of the normalizing flow (Figure 3). The forward process computes a mixed flow loss: each ground-truth trajectory is assigned to a cluster of the mixed Gaussian prior and transformed into its corresponding latent representation. The inverse process generates trajectories by mapping samples from the mixed Gaussian prior onto the target manifold, and a minimum ℓ2 loss is computed between these generated trajectories and the ground truth. The model is trained with a Symmetric Cross-Entropy loss.

Figure 3: The training process of the MGF model, illustrating both the forward and inverse processes of the normalizing flow.
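The two loss directions can be illustrated with a toy flow. This is a hedged sketch: `AffineFlow` is a hypothetical elementwise affine bijection standing in for MGF's CIF-based network, cluster assignment is assumed to be the nearest mean in latent space, the ℓ2 distance is taken over the flattened trajectory, and the plain weighted sum in `total_loss` (with a hypothetical `lam`) is an assumption about how the terms are combined, not the paper's exact symmetric cross-entropy formulation. Only loss values are computed; no optimizer is shown.

```python
import numpy as np

LOG_2PI = np.log(2.0 * np.pi)


class AffineFlow:
    """Toy invertible map x = exp(log_scale) * z + shift (elementwise).

    Hypothetical stand-in for the real flow; used only to show the losses.
    """

    def __init__(self, dim, seed=0):
        rng = np.random.default_rng(seed)
        self.log_scale = 0.1 * rng.standard_normal(dim)
        self.shift = 0.1 * rng.standard_normal(dim)

    def forward(self, z):  # latent -> trajectory (generation direction)
        return np.exp(self.log_scale) * z + self.shift

    def inverse(self, x):  # trajectory -> latent, plus log|det dz/dx|
        z = (x - self.shift) * np.exp(-self.log_scale)
        log_det = -self.log_scale.sum() * np.ones(len(x))
        return z, log_det


def forward_mixed_flow_nll(flow, x, means, stds):
    """Mean NLL of ground truth x (B, D) under its assigned mixture component."""
    z, log_det = flow.inverse(x)
    d2 = ((z[:, None, :] - means[None]) ** 2).sum(-1)  # (B, K)
    k = d2.argmin(1)  # hard assignment to the nearest cluster mean
    mu, sd = means[k], stds[k]
    log_pz = -0.5 * (((z - mu) / sd) ** 2 + 2 * np.log(sd) + LOG_2PI).sum(-1)
    return -(log_pz + log_det).mean()


def inverse_min_l2(flow, x, weights, means, stds, m=20, seed=0):
    """Best-of-m l2 distance between generated samples and each ground truth."""
    rng = np.random.default_rng(seed)
    b, d = x.shape
    comp = rng.choice(len(weights), size=(b, m), p=weights)
    z = means[comp] + stds[comp] * rng.standard_normal((b, m, d))
    y = flow.forward(z)  # (B, m, D) generated trajectories
    err = np.linalg.norm(y - x[:, None, :], axis=-1)  # (B, m)
    return err.min(1).mean()


def total_loss(flow, x, weights, means, stds, lam=1.0):
    # Assumed combination: forward NLL plus lam times the inverse min-l2 term.
    nll = forward_mixed_flow_nll(flow, x, means, stds)
    return nll + lam * inverse_min_l2(flow, x, weights, means, stds)
```

The forward term alone rewards covering every ground truth with some latent density; the inverse term alone only checks that at least one generated sample lands close to the ground truth. Using both directions pushes the flow toward predictions that are both likely and well aligned.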

Diversity Metrics and Experimental Results

To measure the diversity of generated trajectories explicitly, the paper introduces two metrics: Average Pairwise Displacement (APD) and Final Pairwise Displacement (FPD). Both quantify how far apart the samples in a batch of predictions are from one another, so diversity is measured directly instead of through the "best-of-M" evaluation protocol, which only rewards the sample closest to the ground truth.
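The contrast between the two kinds of metrics can be sketched in a few lines. This sketch assumes APD averages the per-timestep ℓ2 distance over all timesteps and all ordered pairs of distinct samples, and FPD does the same at the last timestep only; the paper's exact normalization may differ. The best-of-M minADE/minFDE are included for comparison. Function names are hypothetical.

```python
import numpy as np


def pairwise_displacement(pred):
    """pred: (M, T, 2) samples for one agent -> (M, M, T) per-step distances."""
    diff = pred[:, None, :, :] - pred[None, :, :, :]
    return np.linalg.norm(diff, axis=-1)


def apd_fpd(pred):
    """Average / final pairwise displacement over ordered pairs i != j."""
    m = len(pred)
    d = pairwise_displacement(pred)
    off_diag = ~np.eye(m, dtype=bool)  # exclude each sample paired with itself
    apd = d.mean(-1)[off_diag].mean()  # average over time, then over pairs
    fpd = d[..., -1][off_diag].mean()  # last timestep only
    return apd, fpd


def min_ade_fde(pred, gt):
    """Best-of-M ADE/FDE: only the sample closest to the ground truth counts."""
    err = np.linalg.norm(pred - gt[None], axis=-1)  # (M, T)
    return err.mean(-1).min(), err[:, -1].min()
```

If all M samples collapse onto the ground truth, minADE/minFDE are perfect while APD and FPD are zero, which is exactly the blind spot the pairwise metrics are meant to expose.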

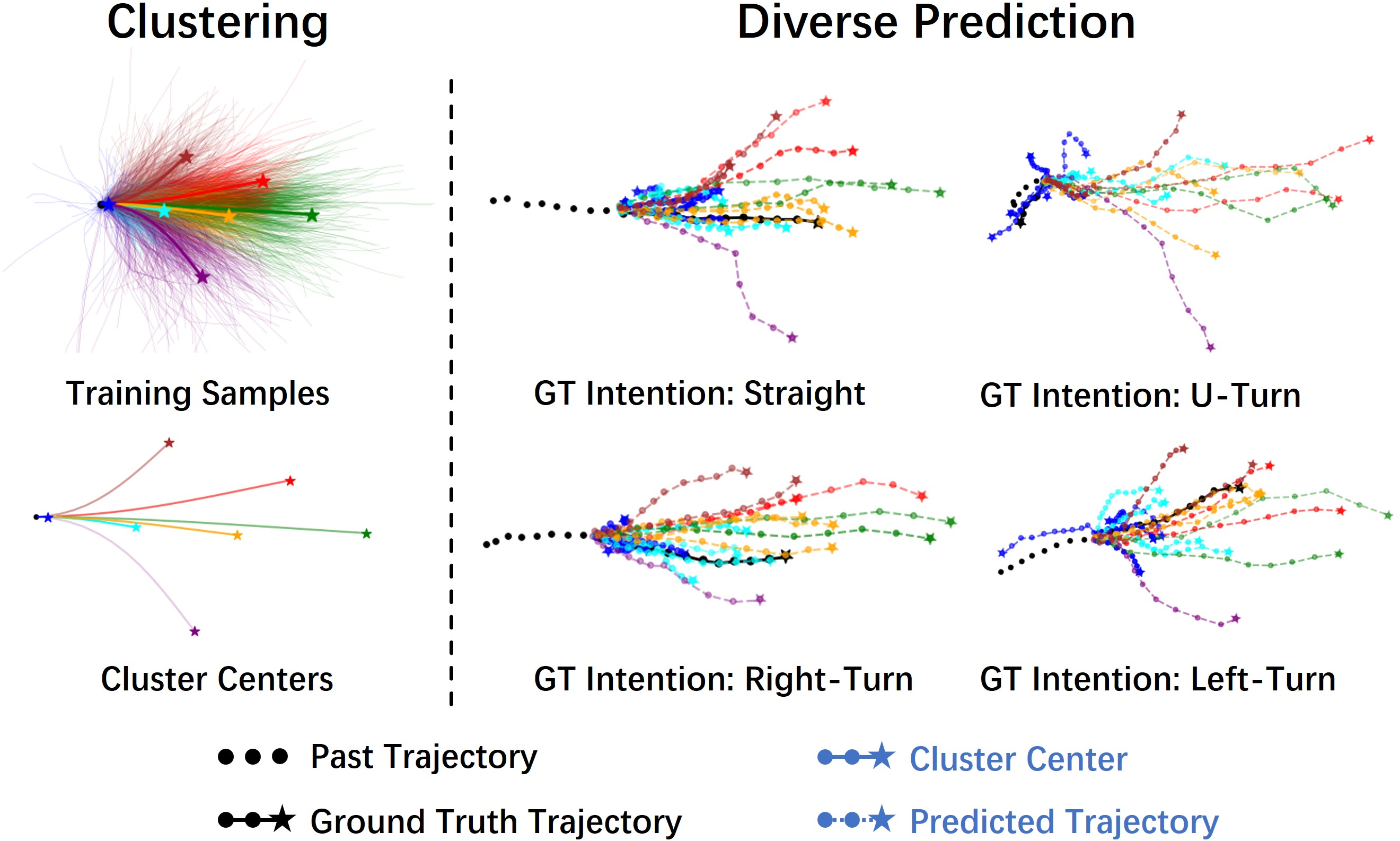

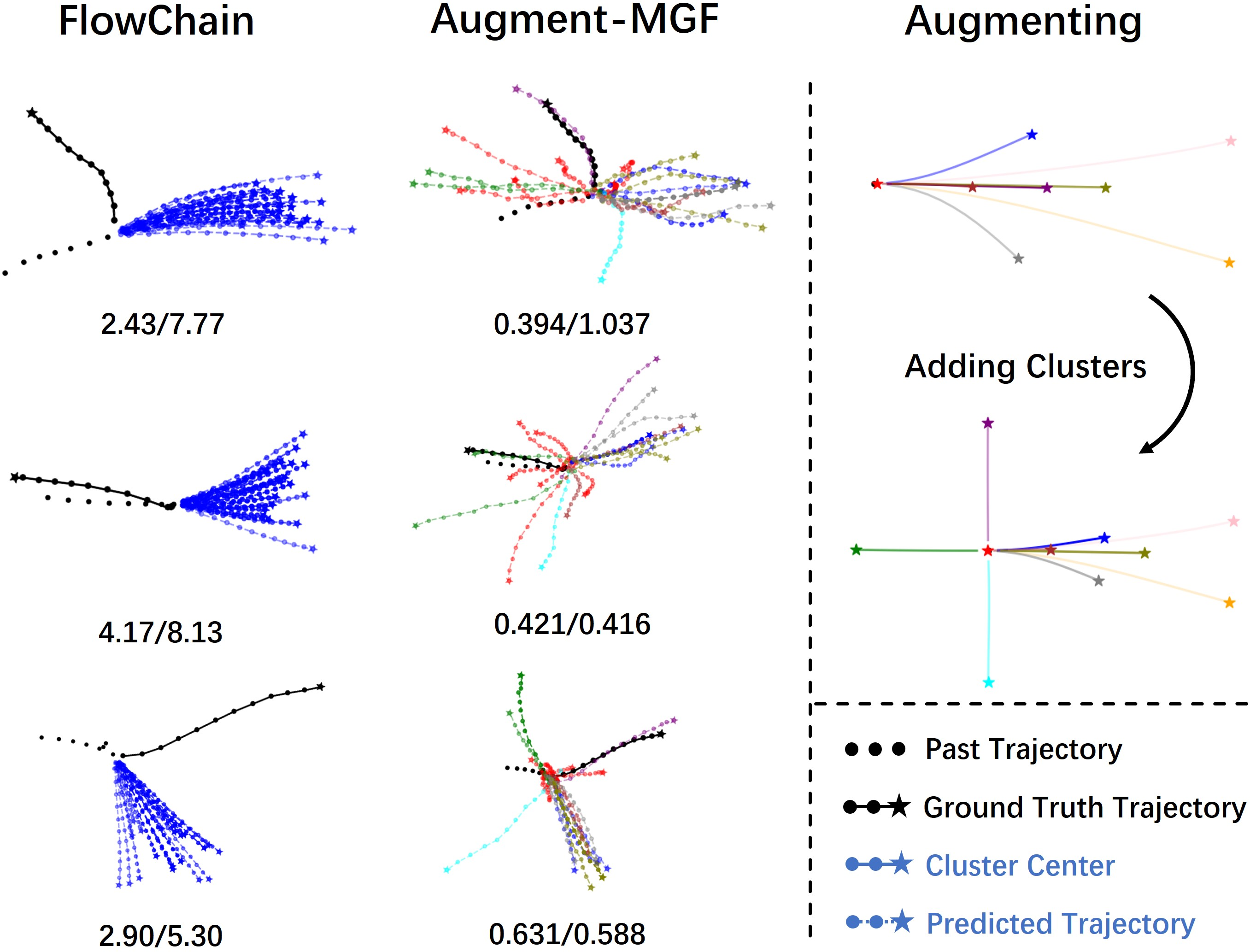

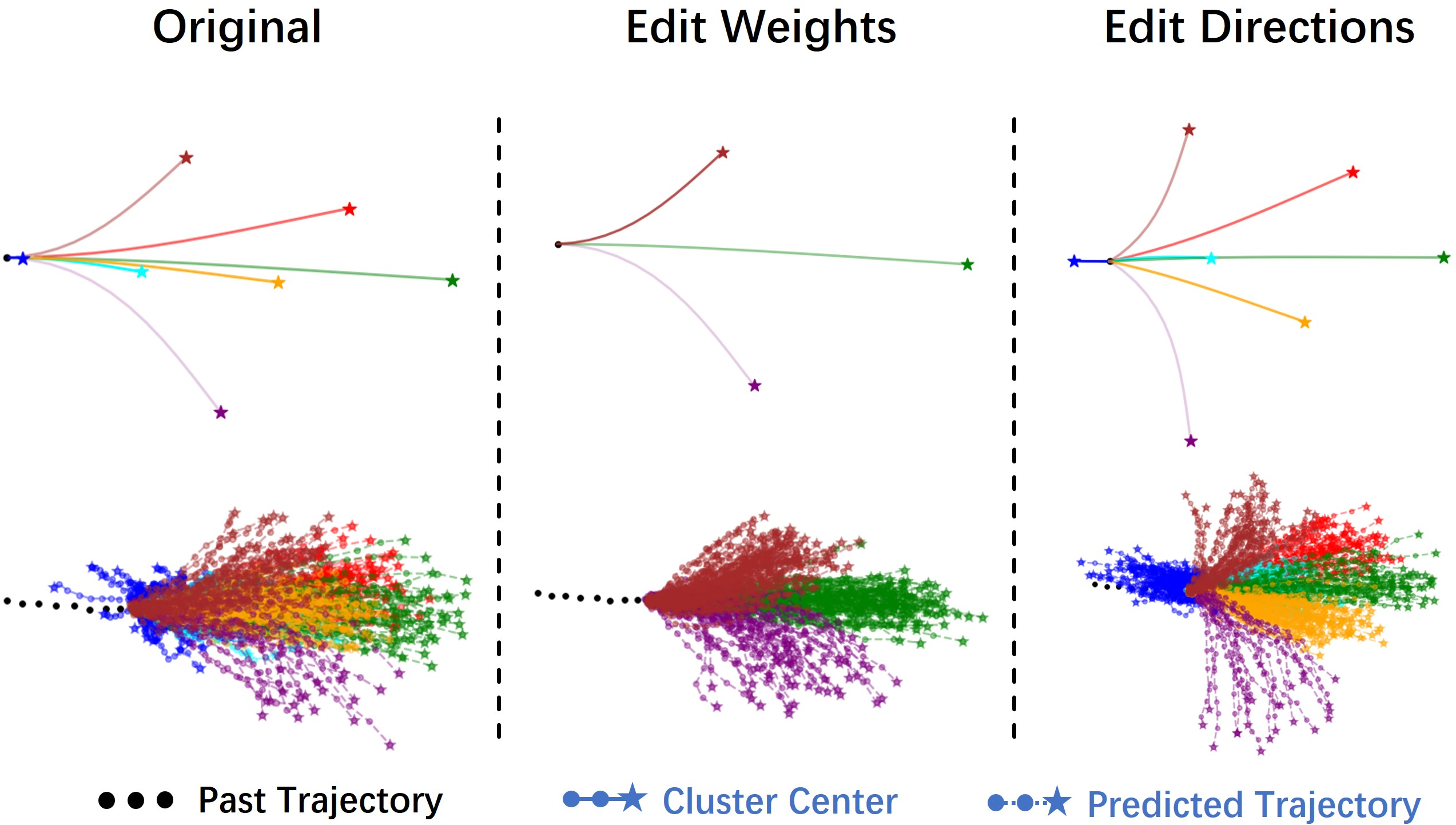

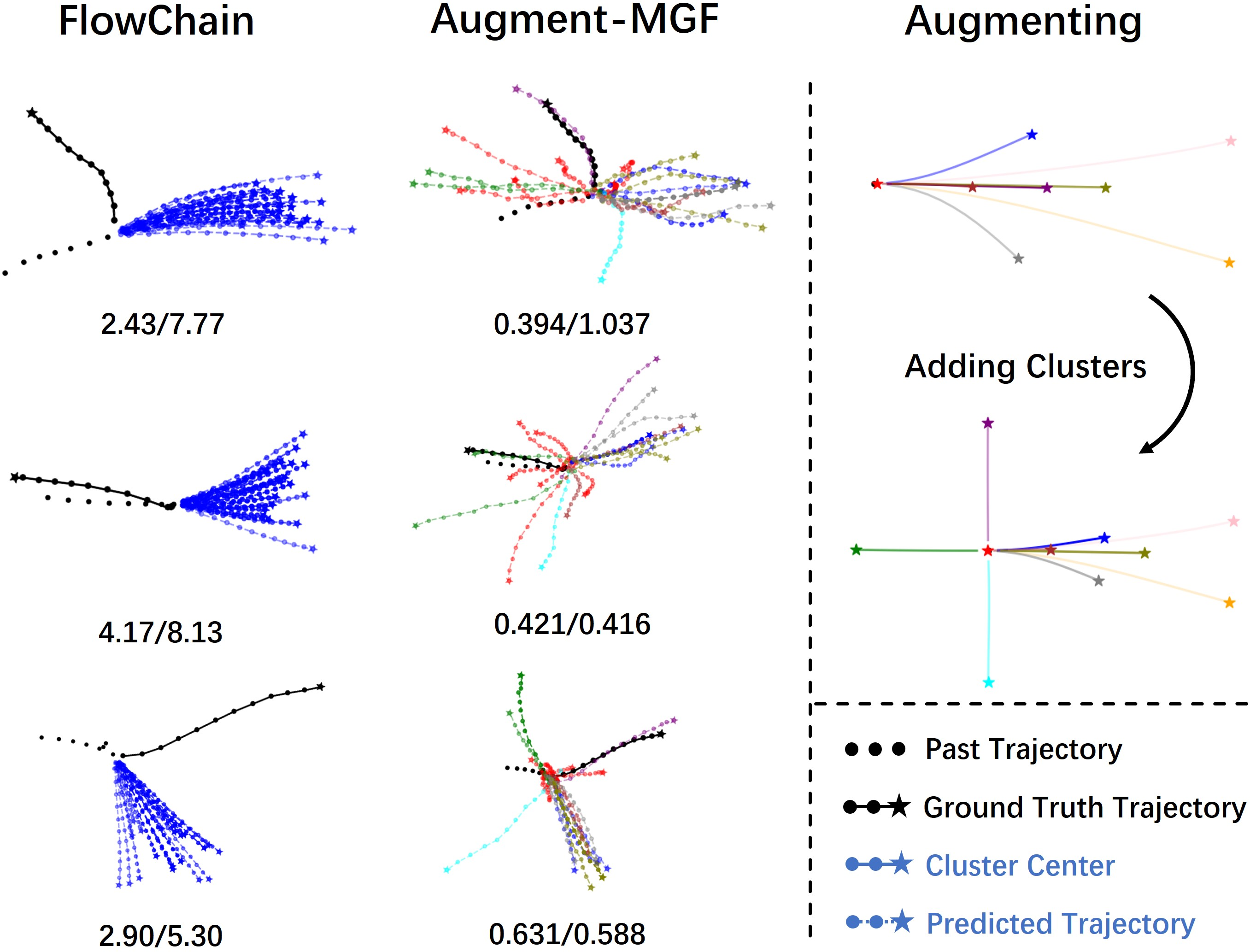

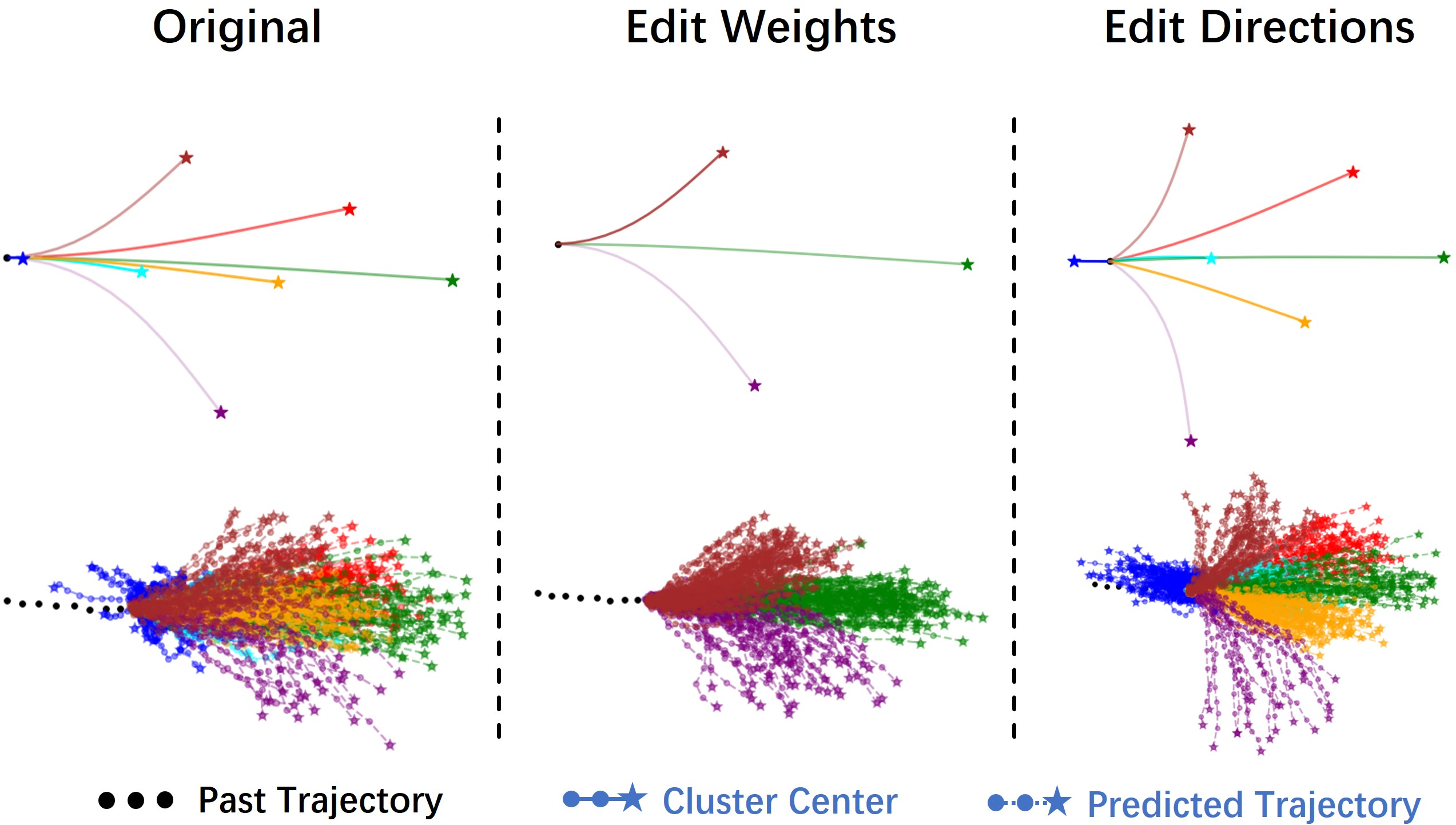

Experiments on the ETH/UCY and SDD datasets show that MGF achieves state-of-the-art performance in both trajectory alignment and diversity. MGF outperforms existing methods on ADE/FDE while also achieving higher APD/FPD scores, indicating more diverse predictions (Tables 1 and 2). Because the prior is explicit, the model also supports controllable generation: editing the mixed Gaussian prior steers the generated trajectories.

Figure 4: MGF predictions on the ETH dataset, showcasing diverse trajectory patterns corresponding to cluster centers from the mixed Gaussian prior.

Figure 5: Predictions on UNIV dataset corner cases, showing how MGF with augmented priors generates sharp turns and U-turns that FlowChain fails to predict.

Figure 6: Controllable trajectory generation on the ETH dataset, achieved by editing the weights or intentions of cluster centers.
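Editing the prior, as in Figure 6, can be sketched as changing the mixture weights or moving a cluster center before sampling. This is an illustrative sketch only: `edit_prior`, `sample_components` and their `keep`, `boost` and `shift` arguments are hypothetical, and the edited prior would still have to be passed through a trained flow (not shown) to produce trajectories.

```python
import numpy as np


def edit_prior(weights, means, keep=None, boost=None, shift=None):
    """Return an edited copy of the mixture weights and cluster means.

    keep:  indices of clusters allowed to generate (others get weight 0).
    boost: dict {cluster_index: multiplier} to up- or down-weight an intention.
    shift: dict {cluster_index: offset vector} to move a cluster center.
    """
    w = np.asarray(weights, dtype=float).copy()
    mu = np.asarray(means, dtype=float).copy()
    if keep is not None:
        mask = np.zeros_like(w, dtype=bool)
        mask[list(keep)] = True
        w[~mask] = 0.0
    for j, factor in (boost or {}).items():
        w[j] *= factor
    for j, offset in (shift or {}).items():
        mu[j] = mu[j] + offset
    if w.sum() <= 0:
        raise ValueError("edited prior has no remaining probability mass")
    return w / w.sum(), mu  # renormalize so the weights form a distribution


def sample_components(weights, m, seed=0):
    """Pick which cluster (motion intention) each of the m samples comes from."""
    rng = np.random.default_rng(seed)
    return rng.choice(len(weights), size=m, p=weights)
```

Zeroing a cluster's weight removes that intention from every generated batch, while shifting its center changes what that intention looks like; neither edit requires retraining, which is what an explicit, invertible prior makes possible.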

Ablation Studies and Analysis

Ablation studies validate the contribution of key components of the MGF model, including the inverse loss, mixed Gaussian prior, learnable variance, and prediction clustering. The results demonstrate that each component contributes to improved prediction alignment (Table 3).

Conclusion

The MGF model advances the field of trajectory prediction by addressing the limitations of existing methods in generating diverse and controllable trajectories. The use of a mixed Gaussian prior, combined with CIFs and a tailored training strategy, enables the model to capture a wide range of motion patterns and achieve state-of-the-art performance. The introduction of diversity metrics provides a valuable tool for evaluating trajectory prediction models, and the experimental results demonstrate the effectiveness of MGF in generating diverse, controllable, and well-aligned trajectories.