Snap Video: Scaled Spatiotemporal Transformers for Text-to-Video Synthesis

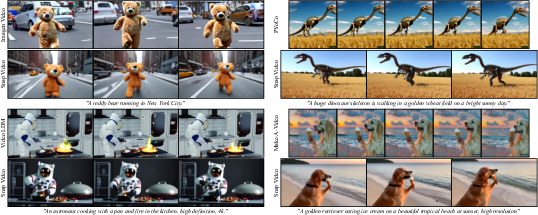

Abstract: Contemporary models for generating images show remarkable quality and versatility. Swayed by these advantages, the research community repurposes them to generate videos. Since video content is highly redundant, we argue that naively bringing advances of image models to the video generation domain reduces motion fidelity and visual quality, and impairs scalability. In this work, we build Snap Video, a video-first model that systematically addresses these challenges. To do that, we first extend the EDM framework to take into account spatially and temporally redundant pixels and naturally support video generation. Second, we show that a U-Net, a workhorse behind image generation, scales poorly when generating videos, requiring significant computational overhead. Hence, we propose a new transformer-based architecture that trains 3.31 times faster than U-Nets (and is ~4.5 times faster at inference). This allows us to efficiently train a text-to-video model with billions of parameters for the first time, reach state-of-the-art results on a number of benchmarks, and generate videos with substantially higher quality, temporal consistency, and motion complexity. User studies showed that our model was favored by a large margin over the most recent methods. See our website at https://snap-research.github.io/snapvideo/.
