ToMBench: Benchmarking Theory of Mind in Large Language Models

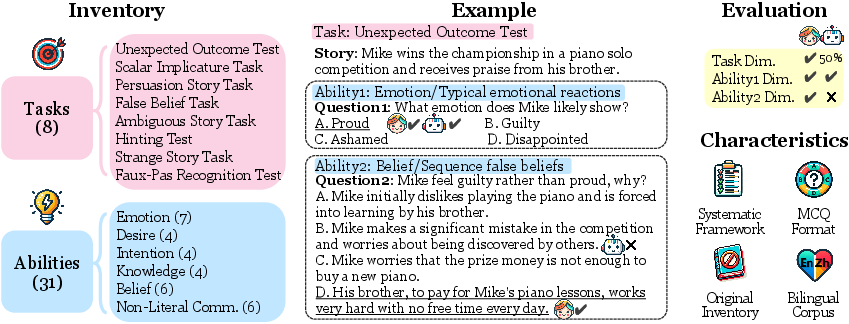

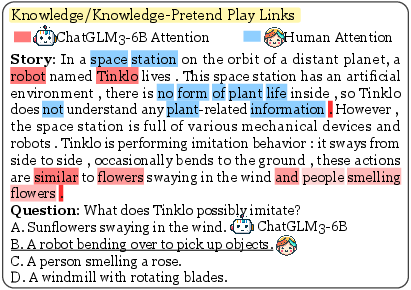

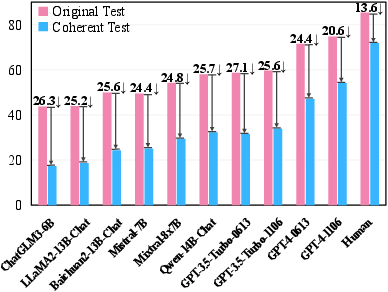

Abstract: Theory of Mind (ToM) is the cognitive capability to perceive and ascribe mental states to oneself and others. Recent research has sparked a debate over whether LLMs exhibit a form of ToM. However, existing ToM evaluations are hindered by challenges such as constrained scope, subjective judgment, and unintended contamination, yielding inadequate assessments. To address this gap, we introduce ToMBench with three key characteristics: a systematic evaluation framework encompassing 8 tasks and 31 abilities in social cognition, a multiple-choice question format to support automated and unbiased evaluation, and a build-from-scratch bilingual inventory to strictly avoid data leakage. Based on ToMBench, we conduct extensive experiments to evaluate the ToM performance of 10 popular LLMs across tasks and abilities. We find that even the most advanced LLMs like GPT-4 lag behind human performance by over 10% points, indicating that LLMs have not achieved a human-level theory of mind yet. Our aim with ToMBench is to enable an efficient and effective evaluation of LLMs' ToM capabilities, thereby facilitating the development of LLMs with inherent social intelligence.

- Gpt-4 technical report. arXiv preprint arXiv:2303.08774.

- KOLMOGOROV AN. 1933. Sulla determinazione empirica di una legge didistribuzione. Giorn Dell’inst Ital Degli Att, 4:89–91.

- James N Aronson and Claire Golomb. 1999. Preschoolers’ understanding of pretense and presumption of congruity between action and representation. Developmental Psychology, 35(6):1414.

- Qwen technical report. arXiv preprint arXiv:2309.16609.

- Baichuan-Inc. 2023. Baichuan 2. Online.

- Does the autistic child have a “theory of mind”? Cognition, 21(1):37–46.

- Recognition of faux pas by normally developing children and children with asperger syndrome or high-functioning autism. Journal of autism and developmental disorders, 29:407–418.

- Systematic review and inventory of theory of mind measures for young children. Frontiers in psychology, 10:2905.

- Mark Bennett and Linda Galpert. 1993. Children’s understanding of multiple desires. International Journal of Behavioral Development, 16(1):15–33.

- Helene Borke. 1971. Interpersonal perception of young children: Egocentrism or empathy? Developmental psychology, 5(2):263.

- Sandra Bosacki and Janet Wilde Astington. 1999. Theory of mind in preadolescence: Relations between social understanding and social competence. Social development, 8(2):237–255.

- Cross-cultural differences in adult theory of mind abilities: a comparison of native-english speakers and native-chinese speakers on the self/other differentiation task. Quarterly Journal of Experimental Psychology, 71(12):2665–2676.

- Michael Brambring and Doreen Asbrock. 2010. Validity of false belief tasks in blind children. Journal of Autism and Developmental Disorders, 40:1471–1484.

- Sparks of artificial general intelligence: Early experiments with gpt-4. arXiv preprint arXiv:2303.12712.

- Longitudinal effects of theory of mind on later peer relations: the role of prosocial behavior. Developmental psychology, 48(1):257.

- Stephanie M Carlson and Louis J Moses. 2001. Individual differences in inhibitory control and children’s theory of mind. Child development, 72(4):1032–1053.

- Precursors of a theory of mind: A longitudinal study. British Journal of Developmental Psychology, 26(4):561–577.

- Schizophrenia, symptomatology and social inference: investigating “theory of mind” in people with schizophrenia. Schizophrenia research, 17(1):5–13.

- Jean Decety and Philip L Jackson. 2004. The functional architecture of human empathy. Behavioral and cognitive neuroscience reviews, 3(2):71–100.

- Susanne A Denham. 1986. Social cognition, prosocial behavior, and emotion in preschoolers: Contextual validation. Child development, pages 194–201.

- Do autism spectrum disorders differ from each other and from non-spectrum disorders on emotion recognition tests? European child & adolescent psychiatry, 10:105–116.

- Development of knowledge about the appearance-reality distinction. Monographs of the society for research in child development, pages i–87.

- Shahriar Golchin and Mihai Surdeanu. 2023. Time travel in llms: Tracing data contamination in large language models. arXiv preprint arXiv:2308.08493.

- Noah D Goodman and Andreas Stuhlmüller. 2013. Knowledge and implicature: Modeling language understanding as social cognition. Topics in cognitive science, 5(1):173–184.

- Felice W Gordis et al. 1989. Young children’s understanding of simultaneous conflicting emotions.

- Francesca GE Happé. 1994. An advanced test of theory of mind: Understanding of story characters’ thoughts and feelings by able autistic, mentally handicapped, and normal children and adults. Journal of autism and Developmental disorders, 24(2):129–154.

- Children’s understanding of the distinction between real and apparent emotion. Child development, pages 895–909.

- Ignorance versus false belief: A developmental lag in attribution of epistemic states. Child development, pages 567–582.

- Mistral 7b. arXiv preprint arXiv:2310.06825.

- Epitome: Experimental protocol inventory for theory of mind evaluation. In First Workshop on Theory of Mind in Communicating Agents.

- The accidental transgressor: Morally-relevant theory of mind. Cognition, 119(2):197–215.

- Fantom: A benchmark for stress-testing machine theory of mind in interactions. In EMNLP, pages 14397–14413.

- Theory-of-mind deficits and causal attributions. British journal of Psychology, 89(2):191–204.

- Annotation error detection: Analyzing the past and present for a more coherent future. Computational Linguistics, 49(1):157–198.

- Empathy in early childhood: Genetic, environmental, and affective contributions. Annals of the New York Academy of Sciences, 1167(1):103–114.

- Anna M Kołodziejczyk and Sandra L Bosacki. 2016. Young-school-aged children’s use of direct and indirect persuasion: role of intentionality understanding. Psychology of Language and Communication, 20(3):292–315.

- Michal Kosinski. 2023. Theory of mind may have spontaneously emerged in large language models. arXiv preprint arXiv:2302.02083.

- Unmasking clever hans predictors and assessing what machines really learn. Nature communications, 10(1):1096.

- Revisiting the evaluation of theory of mind through question answering. In EMNLP.

- Changmao Li and Jeffrey Flanigan. 2023. Task contamination: Language models may not be few-shot anymore. arXiv preprint arXiv:2312.16337.

- Tomchallenges: A principle-guided dataset and diverse evaluation tasks for exploring theory of mind. arXiv preprint arXiv:2305.15068.

- Towards a holistic landscape of situated theory of mind in large language models. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 1011–1031.

- Andrew N Meltzoff. 1995. Understanding the intentions of others: Re-enactment of intended acts by 18-month-old children. Developmental psychology, 31(5):838.

- Mistral AI. 2023. Mixtral of experts: A high quality sparse mixture-of-experts. Online.

- Infants determine others’ focus of attention by pragmatics and exclusion. Journal of Cognition and Development, 7(3):411–430.

- OpenAI. 2023a. Gpt-3.5-turbo-0613: Function calling, 16k context window, and lower prices. Online.

- OpenAI. 2023b. New models and developer products announced at devday. Online.

- Josef Perner and Heinz Wimmer. 1985. “john thinks that mary thinks that…” attribution of second-order beliefs by 5-to 10-year-old children. Journal of experimental child psychology, 39(3):437–471.

- Keeping the reader’s mind in mind: development of perspective-taking in children’s dictations. Journal of applied developmental psychology, 35(1):35–43.

- Infants’ ability to connect gaze and emotional expression to intentional action. Cognition, 85(1):53–78.

- Bradford H Pillow. 1989. Early understanding of perception as a source of knowledge. Journal of experimental child psychology, 47(1):116–129.

- Francisco Pons and Paul Harris. 2000. Test of emotion comprehension: TEC. University of Oxford.

- David Premack and Guy Woodruff. 1978. Does the chimpanzee have a theory of mind? Behavioral and brain sciences, pages 515–526.

- François Quesque and Yves Rossetti. 2020. What do theory-of-mind tasks actually measure? theory and practice. Perspectives on Psychological Science, 15(2):384–396.

- Betty M Repacholi and Alison Gopnik. 1997. Early reasoning about desires: evidence from 14-and 18-month-olds. Developmental psychology, 33(1):12.

- Neural theory-of-mind? on the limits of social intelligence in large lms. ArXiv.

- Socialiqa: Commonsense reasoning about social interactions. arXiv preprint arXiv:1904.09728.

- Clever hans or neural theory of mind? stress testing social reasoning in large language models. arXiv preprint arXiv:2305.14763.

- Herbert A Simon and Herbert A Simon. 1977. Spurious correlation: A causal interpretation. Springer.

- Theory of mind and peer acceptance in preschool children. British journal of developmental psychology, 20(4):545–564.

- Patricia A Smiley. 2001. Intention understanding and partner-sensitive behaviors in young children’s peer interactions. Social Development, 10(3):330–354.

- How children tell a lie from a joke: The role of second-order mental state attributions. British journal of developmental psychology, 13(2):191–204.

- J Swettenham. 1996. Can children be taught to understand false belief using computers? child psychology & psychiatry & allied disciplines, 37 (2), 157–165.

- THUDM. 2023. Chatglm3. Online.

- Llama 2: Open foundation and fine-tuned chat models. arXiv preprint arXiv:2307.09288.

- Tomer Ullman. 2023. Large language models fail on trivial alterations to theory-of-mind tasks. arXiv preprint arXiv:2302.08399.

- Theory of mind in large language models: Examining performance of 11 state-of-the-art models vs. children aged 7-10 on advanced tests. arXiv preprint arXiv:2310.20320.

- Henry M Wellman and Karen Bartsch. 1988. Young children’s reasoning about beliefs. Cognition, 30(3):239–277.

- Frank Wilcoxon. 1947. Individual comparisons of grouped data by ranking methods.

- Think twice: Perspective-taking improves large language models’ theory-of-mind capabilities. arXiv preprint arXiv:2311.10227.

- Heinz Wimmer and Josef Perner. 1983. Beliefs about beliefs: Representation and constraining function of wrong beliefs in young children’s understanding of deception. Cognition, 13(1):103–128.

- Hi-tom: A benchmark for evaluating higher-order theory of mind reasoning in large language models. In Findings of the Association for Computational Linguistics: EMNLP 2023, pages 10691–10706.

- On large language models’ selection bias in multi-choice questions. arXiv preprint arXiv:2309.03882.

- How far are large language models from agents with theory-of-mind? arXiv preprint arXiv:2310.03051.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.