Learning and Leveraging World Models in Visual Representation Learning

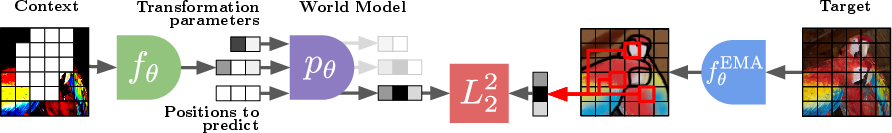

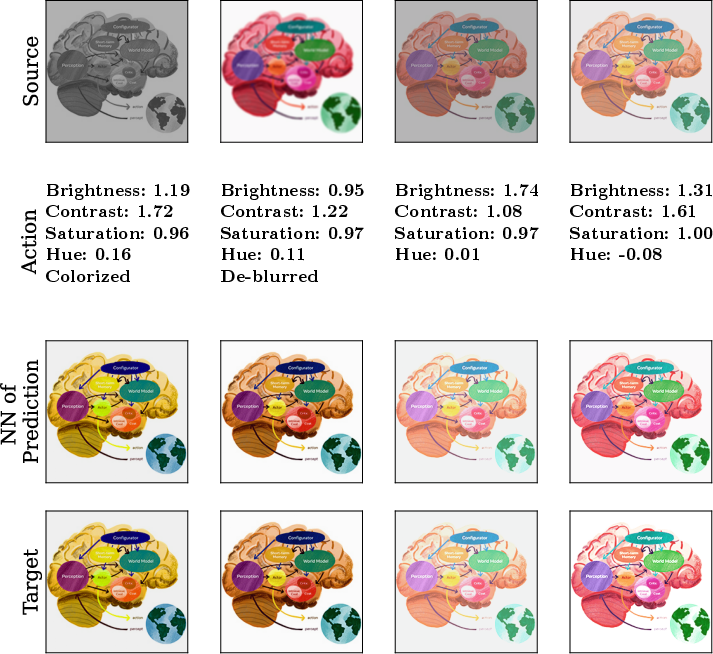

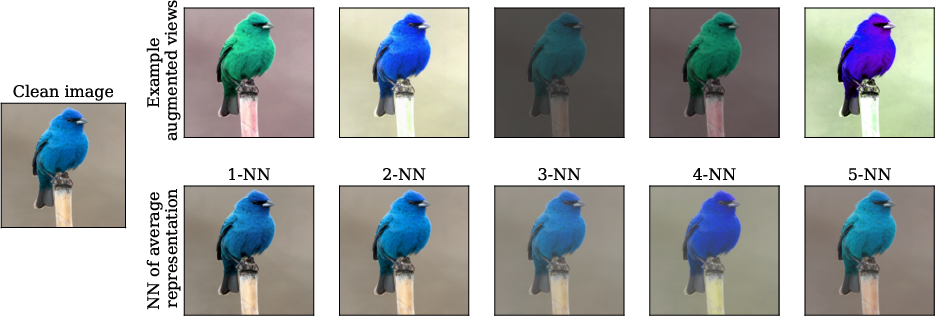

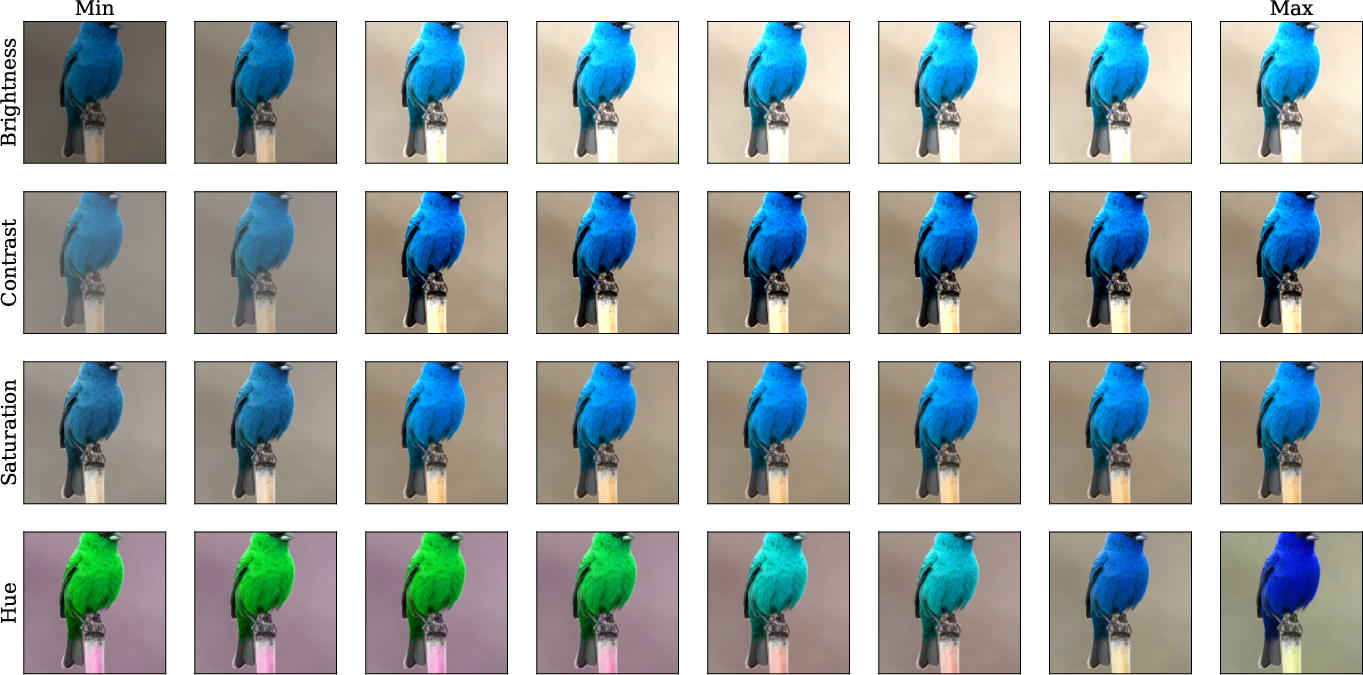

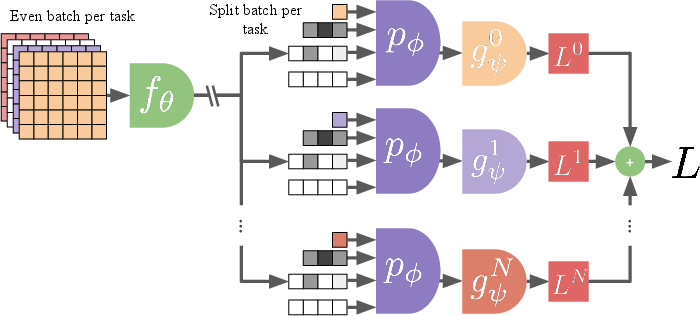

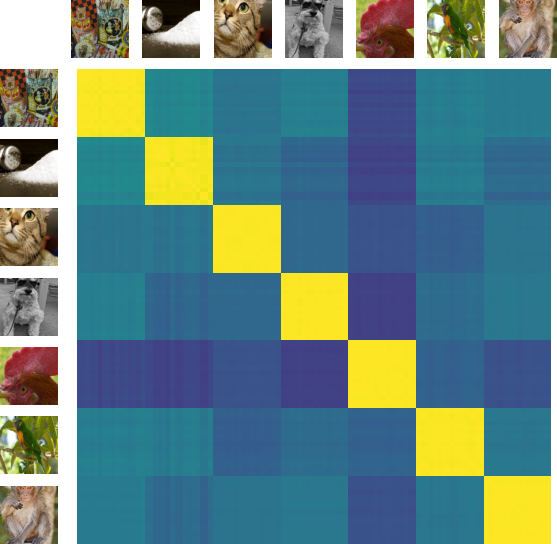

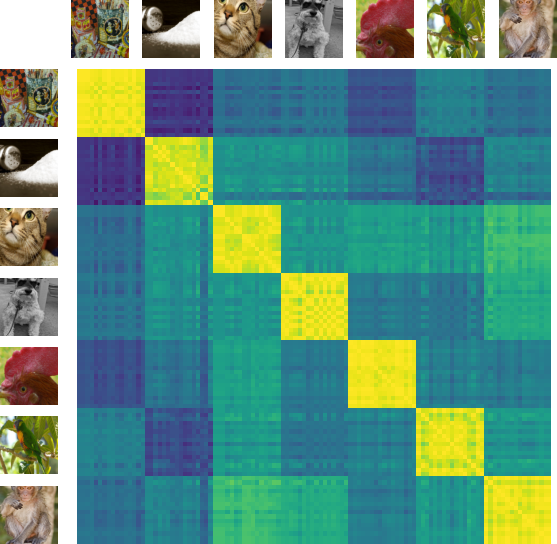

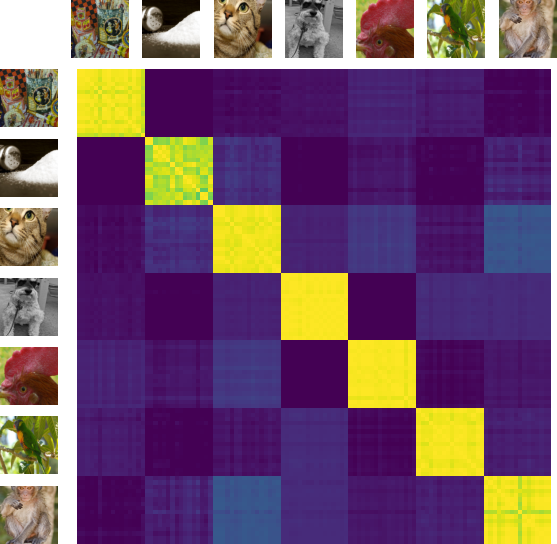

Abstract: Joint-Embedding Predictive Architecture (JEPA) has emerged as a promising self-supervised approach that learns by leveraging a world model. While previously limited to predicting missing parts of an input, we explore how to generalize the JEPA prediction task to a broader set of corruptions. We introduce Image World Models, an approach that goes beyond masked image modeling and learns to predict the effect of global photometric transformations in latent space. We study the recipe of learning performant IWMs and show that it relies on three key aspects: conditioning, prediction difficulty, and capacity. Additionally, we show that the predictive world model learned by IWM can be adapted through finetuning to solve diverse tasks; a fine-tuned IWM world model matches or surpasses the performance of previous self-supervised methods. Finally, we show that learning with an IWM allows one to control the abstraction level of the learned representations, learning invariant representations such as contrastive methods, or equivariant representations such as masked image modelling.

- Self-supervised learning from images with a joint-embedding predictive architecture. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 15619–15629, 2023.

- Data2vec: A general framework for self-supervised learning in speech, vision and language. In International Conference on Machine Learning, pages 1298–1312. PMLR, 2022.

- Beit: Bert pre-training of image transformers. arXiv preprint arXiv:2106.08254, 2021.

- Vicreg: Variance-invariance-covariance regularization for self-supervised learning. arXiv preprint arXiv:2105.04906, 2021.

- VICRegl: Self-supervised learning of local visual features. In Alice H. Oh, Alekh Agarwal, Danielle Belgrave, and Kyunghyun Cho, editors, Advances in Neural Information Processing Systems, 2022. https://openreview.net/forum?id=ePZsWeGJXyp.

- Emerging properties in self-supervised vision transformers. In ICCV, 2021.

- Quality diversity for visual pre-training. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), pages 5384–5394, October 2023a.

- Amortised invariance learning for contrastive self-supervision. In The Eleventh International Conference on Learning Representations, 2023b. https://openreview.net/forum?id=nXOhmfFu5n.

- A simple framework for contrastive learning of visual representations. In ICML, pages 1597–1607. PMLR, 2020a.

- Context autoencoder for self-supervised representation learning. International Journal of Computer Vision, pages 1–16, 2023.

- Exploring simple siamese representation learning. In CVPR, 2020.

- Improved baselines with momentum contrastive learning. arXiv preprint arXiv:2003.04297, 2020b.

- An empirical study of training self-supervised vision transformers. In ICCV, 2021.

- Deconstructing denoising diffusion models for self-supervised learning, 2024.

- Text-to-image diffusion models are zero-shot classifiers. arXiv preprint arXiv:2303.15233, 2023.

- MMSegmentation Contributors. MMSegmentation: Openmmlab semantic segmentation toolbox and benchmark. https://github.com/open-mmlab/mmsegmentation, 2020.

- Randaugment: Practical automated data augmentation with a reduced search space. In H. Larochelle, M. Ranzato, R. Hadsell, M.F. Balcan, and H. Lin, editors, Advances in Neural Information Processing Systems, volume 33, pages 18613–18624. Curran Associates, Inc., 2020. https://proceedings.neurips.cc/paper_files/paper/2020/file/d85b63ef0ccb114d0a3bb7b7d808028f-Paper.pdf.

- Equivariant contrastive learning. arXiv preprint arXiv:2111.00899, 2021.

- Imagenet: A large-scale hierarchical image database. In CVPR, 2009.

- Equimod: An equivariance module to improve self-supervised learning. arXiv preprint arXiv:2211.01244, 2022.

- An image is worth 16x16 words: Transformers for image recognition at scale. In ICLR, 2021.

- Dytox: Transformers for continual learning with dynamic token expansion. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2022.

- Scalable pre-training of large autoregressive image models. arXiv preprint arXiv:2401.08541, 2024.

- Whitening for self-supervised representation learning, 2021.

- On the duality between contrastive and non-contrastive self-supervised learning. In The Eleventh International Conference on Learning Representations, 2023a. https://openreview.net/forum?id=kDEL91Dufpa.

- Self-supervised learning of split invariant equivariant representations. In Proceedings of the 40th International Conference on Machine Learning, volume 202 of Proceedings of Machine Learning Research, pages 10975–10996. PMLR, 23–29 Jul 2023b. https://proceedings.mlr.press/v202/garrido23b.html.

- Bootstrap your own latent: A new approach to self-supervised learning. In NeurIPS, 2020.

- Structuring representation geometry with rotationally equivariant contrastive learning, 2023.

- Recurrent world models facilitate policy evolution. In Advances in Neural Information Processing Systems 31, pages 2451–2463. 2018.

- Dream to control: Learning behaviors by latent imagination. arXiv preprint arXiv:1912.01603, 2019.

- Mastering diverse domains through world models. arXiv preprint arXiv:2301.04104, 2023.

- Modem: Accelerating visual model-based reinforcement learning with demonstrations. arXiv preprint arXiv:2212.05698, 2022.

- Provable guarantees for self-supervised deep learning with spectral contrastive loss. NeurIPS, 34, 2021.

- Momentum contrast for unsupervised visual representation learning. In CVPR, 2020.

- Masked autoencoders are scalable vision learners. arXiv preprint arXiv:2111.06377, 2021.

- The inaturalist species classification and detection dataset. In CVPR, 2018.

- Gaia-1: A generative world model for autonomous driving, 2023.

- Soda: Bottleneck diffusion models for representation learning, 2023.

- Contrastive learning of structured world models. arXiv preprint arXiv:1911.12247, 2019.

- Yann LeCun. A path towards autonomous machine intelligence version 0.9. 2, 2022-06-27. Open Review, 62(1), 2022.

- Prefix-tuning: Optimizing continuous prompts for generation, 2021.

- Neural manifold clustering and embedding. arXiv preprint arXiv:2201.10000, 2022.

- Decoupled weight decay regularization. In International Conference on Learning Representations, 2019. https://openreview.net/forum?id=Bkg6RiCqY7.

- Dinov2: Learning robust visual features without supervision. arXiv preprint arXiv:2304.07193, 2023.

- Learning Symmetric Embeddings for Equivariant World Models, June 2022. http://arxiv.org/abs/2204.11371. arXiv:2204.11371 [cs].

- Finetuned language models are zero-shot learners, 2022.

- Sun database: Large-scale scene recognition from abbey to zoo. In 2010 IEEE computer society conference on computer vision and pattern recognition, pages 3485–3492. IEEE, 2010.

- Unified perceptual parsing for scene understanding. In ECCV, 2018.

- Simmim: A simple framework for masked image modeling, 2022.

- Learning interactive real-world simulators. arXiv preprint arXiv:2310.06114, 2023.

- Decoupled contrastive learning. arXiv preprint arXiv:2110.06848, 2021.

- Large batch training of convolutional networks. arXiv preprint arXiv:1708.03888, 2017.

- Cutmix: Regularization strategy to train strong classifiers with localizable features. In Proceedings of the IEEE/CVF international conference on computer vision, pages 6023–6032, 2019.

- Barlow twins: Self-supervised learning via redundancy reduction. In ICML, pages 12310–12320. PMLR, 2021.

- mixup: Beyond empirical risk minimization. In International Conference on Learning Representations, 2018. https://openreview.net/forum?id=r1Ddp1-Rb.

- Instruction tuning for large language models: A survey, 2023.

- Learning deep features for scene recognition using places database. In NeurIPS, 2014.

- Semantic understanding of scenes through the ade20k dataset. IJCV, 2019.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.