Exact Enforcement of Temporal Continuity in Sequential Physics-Informed Neural Networks

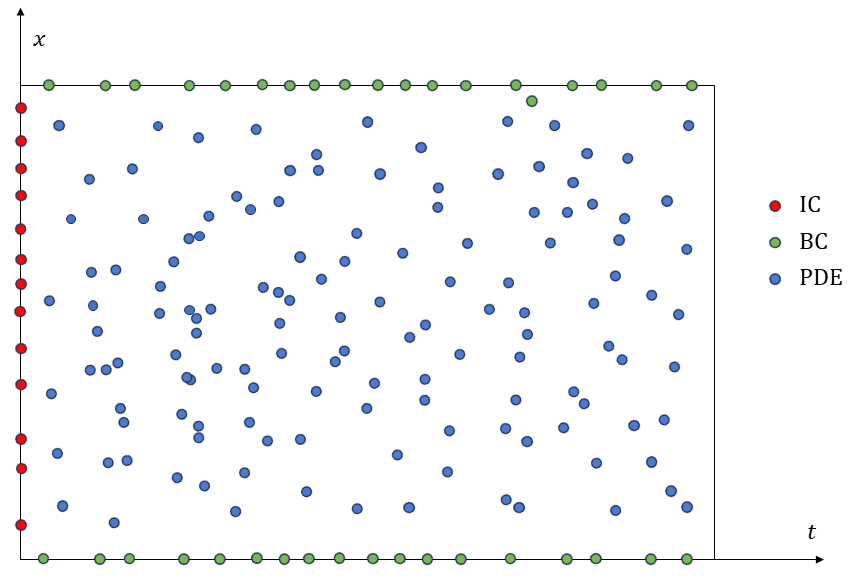

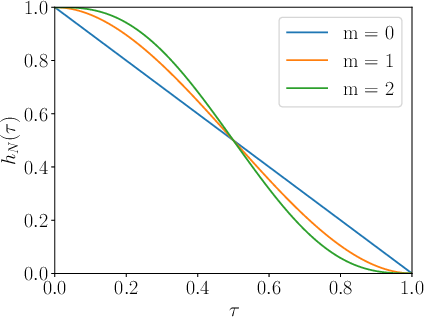

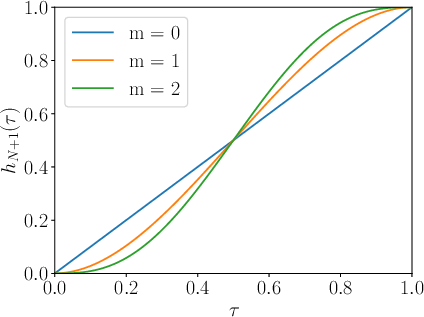

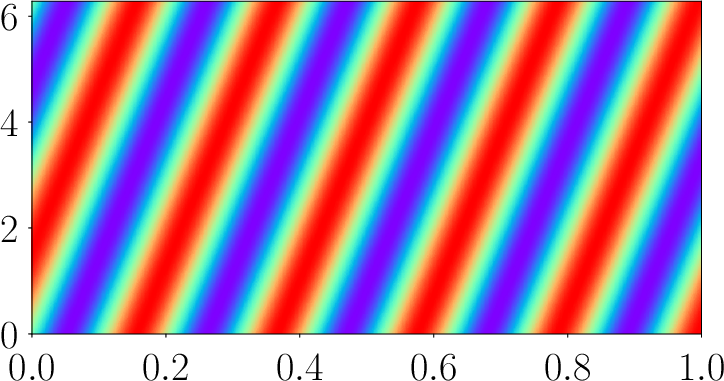

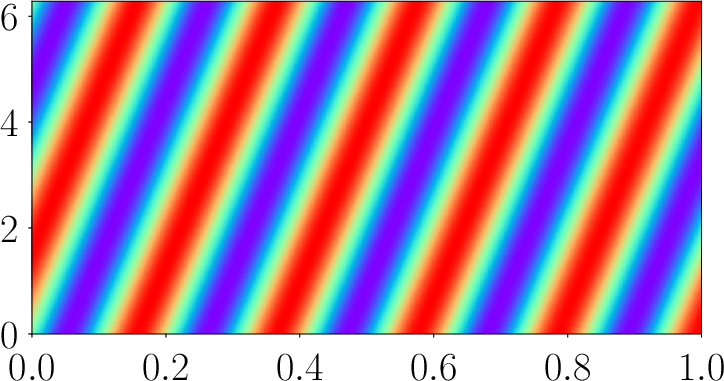

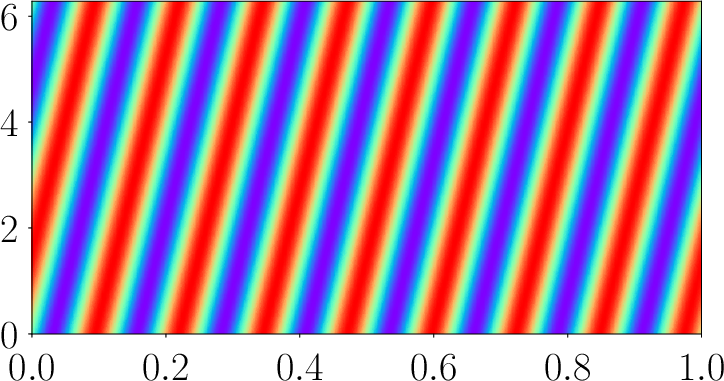

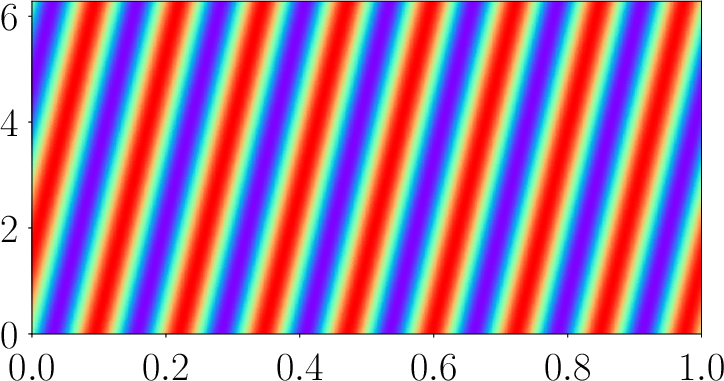

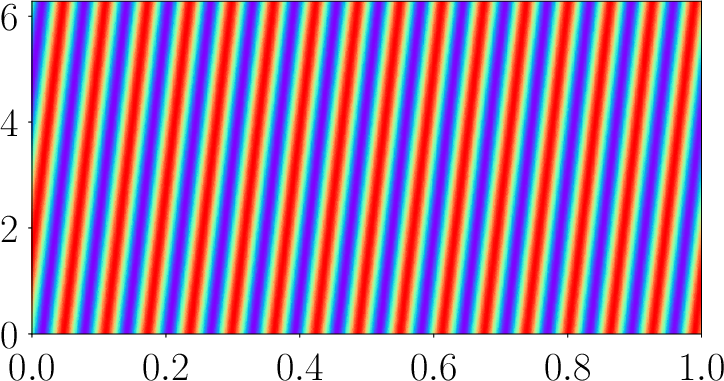

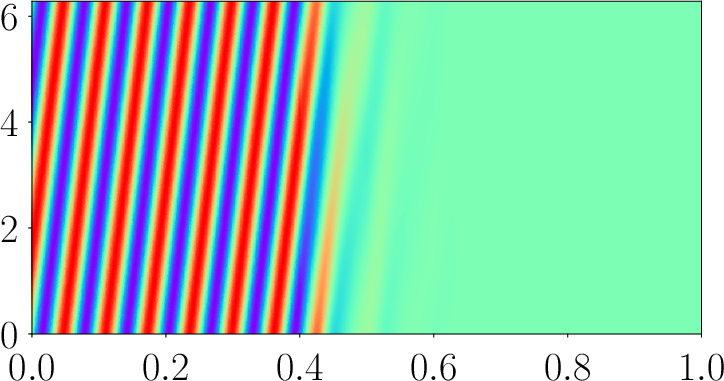

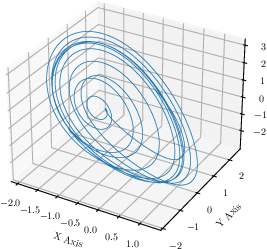

Abstract: The use of deep learning methods in scientific computing represents a potential paradigm shift in engineering problem solving. One of the most prominent developments is Physics-Informed Neural Networks (PINNs), in which neural networks are trained to satisfy partial differential equations (PDEs). While promising, the standard formulation has been shown to struggle to accurately predict the dynamic behavior of time-dependent problems. To address this, methods have been proposed that decompose the time domain into multiple segments, employ a distinct neural network in each segment, and enforce continuity between segments through penalty terms in the loss function. In this work we introduce a method that enforces continuity between successive time segments exactly, via a solution ansatz. This hard-constrained sequential PINN (HCS-PINN) method is simple to implement and eliminates the need for any loss terms associated with temporal continuity. The method is tested on a number of benchmark problems involving both linear and nonlinear PDEs. These include several first-order time-dependent problems on which traditional PINNs struggle, namely the advection, Allen-Cahn, and Korteweg-de Vries equations. Second- and third-order time-dependent problems are further demonstrated through wave and jerky dynamics examples, respectively. Notably, the jerky dynamics problem is chaotic, which makes it especially sensitive to temporal accuracy. In the numerical experiments, the proposed method demonstrated superior convergence and accuracy compared with both traditional PINNs and their soft-constrained sequential counterparts.
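The idea of exactly enforcing continuity through a solution ansatz can be illustrated with a short sketch. The form used here is an assumed one, not necessarily the authors' formulation. On segment k, starting at time t_start, the solution is written as a Taylor-type polynomial built from the previous segment's value at t_start (and, for second- and third-order problems, its first m-1 time derivatives there), plus the segment network multiplied by (t - t_start)^m. The helper name `hard_constrained_segment` and the representation of networks as plain Python callables are hypothetical. In a real PINN, `net` would be a trainable network, and the interface derivatives would come from automatic differentiation of the frozen previous-segment network.

```python
import math
from typing import Callable, Sequence

# A field is any callable mapping (x, t) -> scalar value.
Field = Callable[[float, float], float]
# An interface trace is a callable mapping x -> scalar value at the fixed time t_start.
Trace = Callable[[float], float]


def hard_constrained_segment(
    interface_derivs: Sequence[Trace],
    net: Field,
    t_start: float,
) -> Field:
    """Wrap a segment network `net` so the result matches the previous segment
    exactly at t = t_start (hypothetical helper, assumed ansatz).

    interface_derivs[j](x) is the j-th time derivative of the previous segment's
    solution evaluated at (x, t_start); m = len(interface_derivs) is the temporal
    order of the problem (1 for advection/Allen-Cahn/KdV, 2 for the wave
    equation, 3 for a jerk equation, where x can simply be ignored).

        u_k(x, t) = sum_{j<m} (t - t_start)^j / j! * interface_derivs[j](x)
                    + (t - t_start)^m * net(x, t)

    The correction term and its first m-1 time derivatives vanish at t_start,
    so u_k and its first m-1 time derivatives agree with the previous segment there.
    """
    m = len(interface_derivs)
    if m < 1:
        raise ValueError("need at least the previous segment's value at t_start")

    def u(x: float, t: float) -> float:
        tau = t - t_start
        # Taylor-type polynomial that carries the interface data forward in time.
        carried = sum(
            tau**j / math.factorial(j) * g(x) for j, g in enumerate(interface_derivs)
        )
        # Trainable correction, scaled by tau^m so it cannot disturb continuity.
        return carried + tau**m * net(x, t)

    return u
```

For the first segment, the interface data are simply the initial conditions, for example `[u0]` for a first-order problem or `[u0, v0]` (initial displacement and velocity) for the wave equation. Because continuity holds by construction, the loss for each segment needs only PDE residual and boundary terms, with no temporal-continuity penalty.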
