Considering Nonstationary within Multivariate Time Series with Variational Hierarchical Transformer for Forecasting

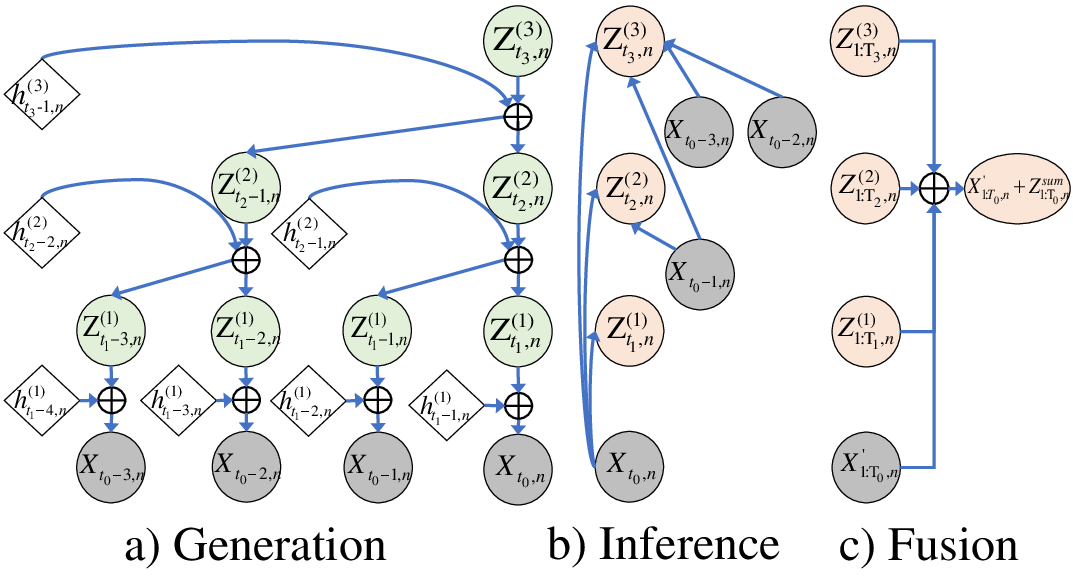

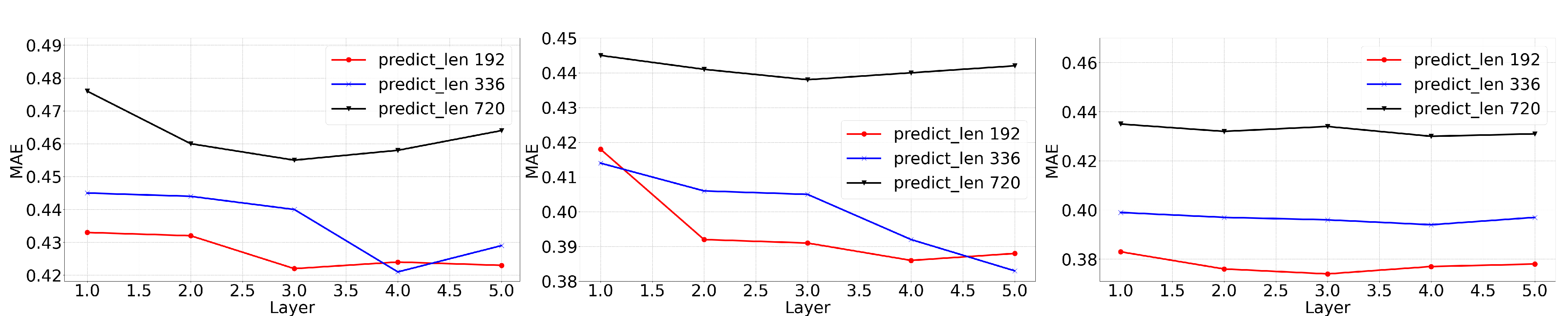

Abstract: Forecasting Multivariate Time Series (MTS) has long been an important but challenging task. Because MTS are non-stationary across long-distance time steps, previous studies primarily adopt stationarization methods to attenuate the non-stationarity of the original series for better predictability. However, existing methods always operate on the stationarized series, which ignores the inherent non-stationarity, and they have difficulty modeling MTS with complex distributions due to the lack of stochasticity. To tackle these problems, we first develop a powerful hierarchical probabilistic generative module that captures the non-stationarity and stochastic characteristics within MTS, and then combine it with a transformer to form a well-defined variational generative dynamic model named Hierarchical Time series Variational Transformer (HTV-Trans), which recovers the intrinsic non-stationary information into temporal dependencies. Being a powerful probabilistic model, HTV-Trans is utilized to learn expressive representations of MTS and applied to forecasting tasks. Extensive experiments on diverse datasets show the efficiency of HTV-Trans on MTS forecasting tasks.
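
To make the stationarization step concrete, the following is a minimal sketch of per-window normalization, one common way to stationarize a series. It is an illustration under stated assumptions, not the HTV-Trans implementation: the function names `stationarize` and `destationarize`, the `eps` parameter, and the toy windows are all hypothetical.

```python
from statistics import fmean, pstdev


def stationarize(window, eps=1e-8):
    """Normalize one variable's window to zero mean and unit variance.

    Returns the normalized values plus the (mean, std) that were removed.
    Assumption: this is generic per-window normalization, used here only
    to illustrate what "stationarized series" can mean.
    """
    mu = fmean(window)
    sigma = pstdev(window) + eps  # eps guards against constant windows
    return [(x - mu) / sigma for x in window], (mu, sigma)


def destationarize(values, stats):
    """Restore the original level and scale from the saved statistics."""
    mu, sigma = stats
    return [v * sigma + mu for v in values]


# Two hypothetical windows from one series whose level has drifted.
early = [1.0, 2.0, 3.0, 4.0]
late = [101.0, 102.0, 103.0, 104.0]

early_norm, early_stats = stationarize(early)
late_norm, late_stats = stationarize(late)

# After normalization the two windows are numerically indistinguishable,
# so a model that sees only the normalized values cannot tell them apart.
same_shape = all(abs(a - b) < 1e-9 for a, b in zip(early_norm, late_norm))

# The level shift survives only in the saved statistics.
level_shift = late_stats[0] - early_stats[0]

# Round trip: the saved statistics are enough to recover the raw window.
restored = destationarize(late_norm, late_stats)
```

In this toy case the normalized windows coincide even though the raw levels differ by 100, which is the kind of information loss the abstract attributes to working only on the stationarized series. The removed statistics are what a model would have to reintroduce to account for the original non-stationarity.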

