- The paper reviews 3D Gaussian Splatting, an explicit scene representation that enables real-time rendering and improved scene reconstruction compared to implicit methods.

- The paper covers quality enhancement techniques such as Mip-Splatting, which mitigates aliasing, and VDGS, which improves view-dependent rendering.

- The paper examines compression and dynamic reconstruction strategies that reduce data size and model smooth temporal deformations.

Recent Advances in 3D Gaussian Splatting

Introduction to 3D Gaussian Splatting

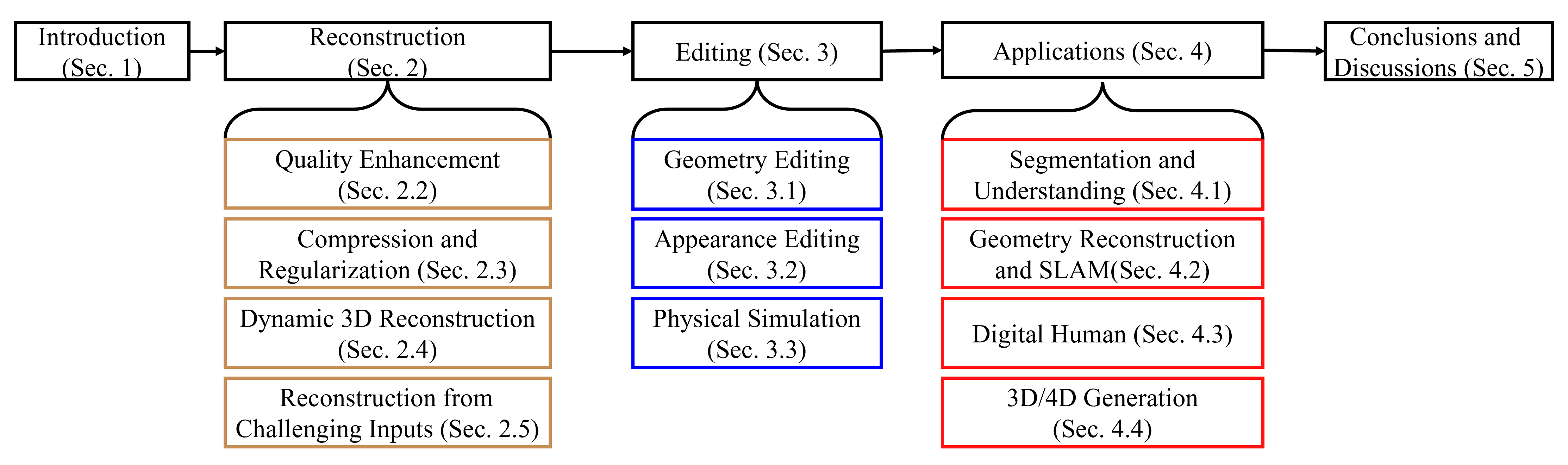

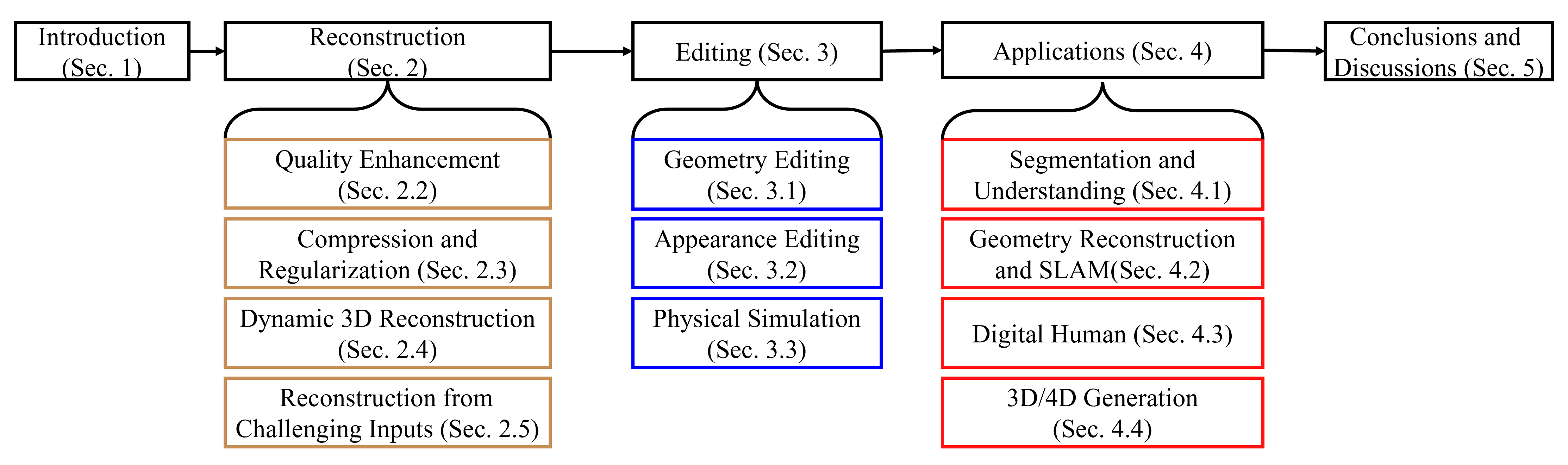

3D Gaussian Splatting (3DGS) represents a significant evolution in 3D graphics and rendering. Unlike Neural Radiance Fields (NeRF), which rely on implicit neural network representations for novel view synthesis, 3DGS models a scene with Gaussian ellipsoids and renders them by rasterization, which is considerably more efficient. Because the representation is explicit, it offers fast rendering and also opens new possibilities in dynamic reconstruction, geometry editing, and physical simulation. The paper provides a comprehensive review of recent 3DGS methods, categorizing them by their use in 3D reconstruction, editing, and other application domains.

Figure 1: Structure of the literature review and taxonomy of current 3D Gaussian Splatting methods.

3DGS for 3D Reconstruction

Point-based Rendering

3DGS builds on point-based rendering, in which discrete geometric primitives are rendered to produce realistic images. Early methods, such as that of Grossman and Dally, render each point as a single pixel. Zwicker et al. extended this to splat-based rendering, in which each point is drawn as a splat (for example, an ellipsoid) that covers multiple pixels, so the rendered image has no gaps. This line of work laid the foundation for 3DGS. Unlike NeRF, which requires dense sampling and neural network evaluation, 3DGS optimizes an explicit set of Gaussian ellipsoids. Each Gaussian carries geometric parameters (position, rotation, and scale) together with appearance attributes such as color and opacity. Because rendering does not require evaluating a neural network, 3DGS achieves real-time rendering at 1080p resolution while maintaining high quality, which makes it attractive for devices with limited computational power.
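To make the parameterization concrete, here is a minimal sketch in plain Python. It assumes the common convention that a Gaussian's covariance is assembled from its rotation and scale as Σ = R S Sᵀ Rᵀ, and that sorted splats are blended front to back. The function names (`quat_to_rotmat`, `covariance`, `composite`) are hypothetical and chosen for illustration; this is not the reference implementation.

```python
import math

def quat_to_rotmat(q):
    """Convert a quaternion (w, x, y, z) to a 3x3 rotation matrix."""
    w, x, y, z = q
    n = math.sqrt(w * w + x * x + y * y + z * z)  # normalize defensively
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]

def covariance(q, scale):
    """Build Sigma = R S S^T R^T, where S = diag(scale)."""
    R = quat_to_rotmat(q)
    # M = R S: scale column j of R by scale[j]
    M = [[R[i][j] * scale[j] for j in range(3)] for i in range(3)]
    # Sigma = M M^T, symmetric and positive semi-definite by construction
    return [[sum(M[i][k] * M[j][k] for k in range(3)) for j in range(3)]
            for i in range(3)]

def composite(splats):
    """Front-to-back alpha blending of (rgb, alpha) pairs sorted near to far.

    Returns the blended color and the remaining transmittance.
    """
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0
    for rgb, alpha in splats:
        weight = alpha * transmittance
        color = [acc + weight * ch for acc, ch in zip(color, rgb)]
        transmittance *= 1.0 - alpha
    return color, transmittance
```

Optimizing a rotation and a scale rather than the covariance entries directly keeps the matrix valid throughout optimization, since any choice of `q` and `scale` yields a symmetric positive semi-definite result.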

Quality Enhancement

Current enhancement strategies for 3DGS focus on removing aliasing artifacts and improving view-dependent appearance. For instance, Mip-Splatting introduces smoothing filters to suppress high-frequency artifacts, while MS3DGS addresses aliasing by selecting Gaussians at different resolution levels. To handle view dependency, VDGS uses a neural network to predict attributes, as in NeRF, while retaining the efficient rendering of 3DGS. Innovations such as GaussianPro improve how Gaussians are grown during optimization, and other work addresses popping artifacts to keep views consistent, giving smoother transitions across viewpoints.
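The filtering idea can be illustrated with a small sketch. It is not the Mip-Splatting implementation. It shows only a generic screen-space low-pass step: convolving a projected 2D Gaussian with an isotropic Gaussian of variance `s` adds `s` to the diagonal of its covariance, and the opacity is rescaled so that the splat's integrated contribution stays the same. The function name `lowpass_2d` and the default `s=0.1` are assumptions made for illustration.

```python
import math

def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def lowpass_2d(cov, opacity, s=0.1):
    """Low-pass filter a screen-space Gaussian.

    cov:     2x2 covariance of the projected Gaussian (in pixels^2)
    opacity: peak opacity of the splat
    s:       variance of the isotropic filter (illustrative value)
    """
    # Convolving two Gaussians adds their covariances.
    filtered = [
        [cov[0][0] + s, cov[0][1]],
        [cov[1][0], cov[1][1] + s],
    ]
    # Rescale the peak so the integral over the image plane is preserved:
    # the integral of a 2D Gaussian is proportional to sqrt(det(cov)).
    scale = math.sqrt(det2(cov) / det2(filtered))
    return filtered, opacity * scale
```

With this normalization, a Gaussian much smaller than a pixel is spread out and dimmed rather than simply enlarged at full opacity, which is the behavior that suppresses aliasing when the sampling rate changes.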

Compression and Regularization

To reduce the storage and computational cost of 3DGS, several compression techniques have been explored. EAGLES uses vector quantization for Gaussian attributes, while methods such as Compact3D and LightGaussian shrink the data while preserving the most important features. These methods substantially reduce storage requirements and can improve rendering speed with little loss in quality, enabling resource-efficient 3DGS applications.
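As a rough illustration of vector quantization applied to per-Gaussian attributes, the sketch below learns a small codebook with plain k-means and stores one integer index per Gaussian instead of the full attribute vector. This is a generic scheme under simplifying assumptions (naive initialization from the first `k` vectors, squared Euclidean distance), not the specific procedure used by EAGLES, Compact3D, or LightGaussian; all function names are hypothetical.

```python
def nearest(codebook, v):
    """Index of the codeword closest to v in squared Euclidean distance."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((a - b) ** 2 for a, b in zip(codebook[i], v)),
    )

def kmeans(vectors, k, iters=10):
    """Learn a k-entry codebook with Lloyd's algorithm."""
    codebook = [list(v) for v in vectors[:k]]  # naive init: first k vectors
    for _ in range(iters):
        buckets = [[] for _ in range(k)]
        for v in vectors:
            buckets[nearest(codebook, v)].append(v)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the old codeword if no vector was assigned
                codebook[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return codebook

def quantize(vectors, codebook):
    """Replace each attribute vector by the index of its nearest codeword."""
    return [nearest(codebook, v) for v in vectors]

def dequantize(indices, codebook):
    """Recover approximate attribute vectors from codebook indices."""
    return [codebook[i] for i in indices]
```

With N Gaussians, d-dimensional attributes, and a codebook of k entries, storage drops from N·d floats to N indices plus k·d floats, at the cost of the approximation error introduced by sharing codewords.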

Dynamic 3D Reconstruction

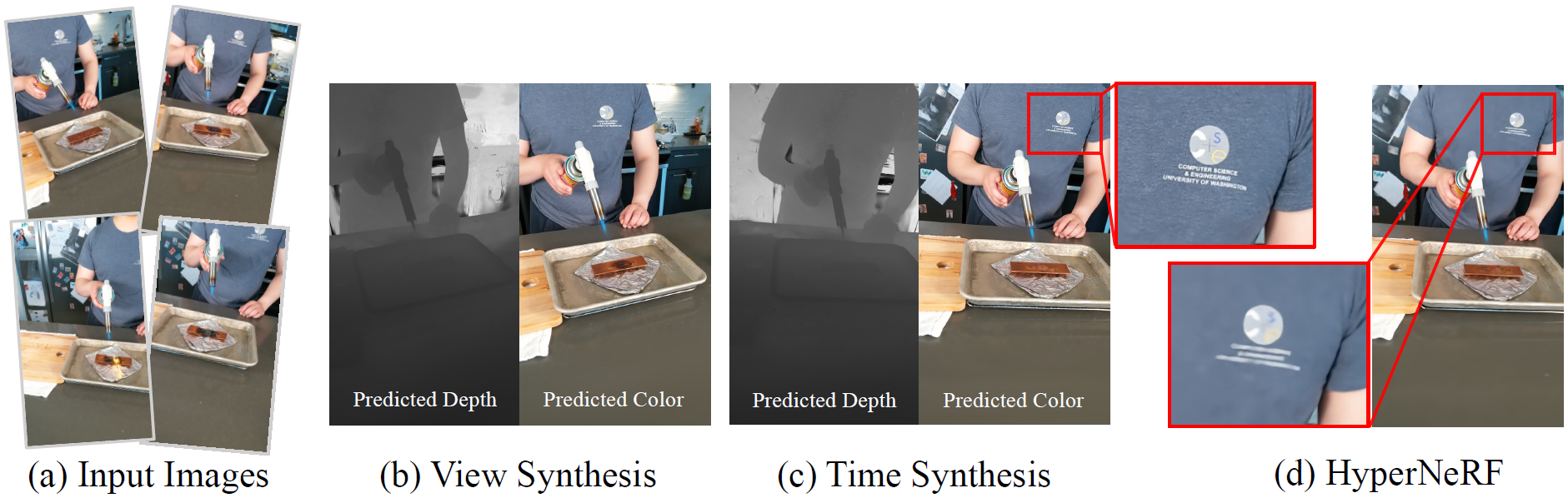

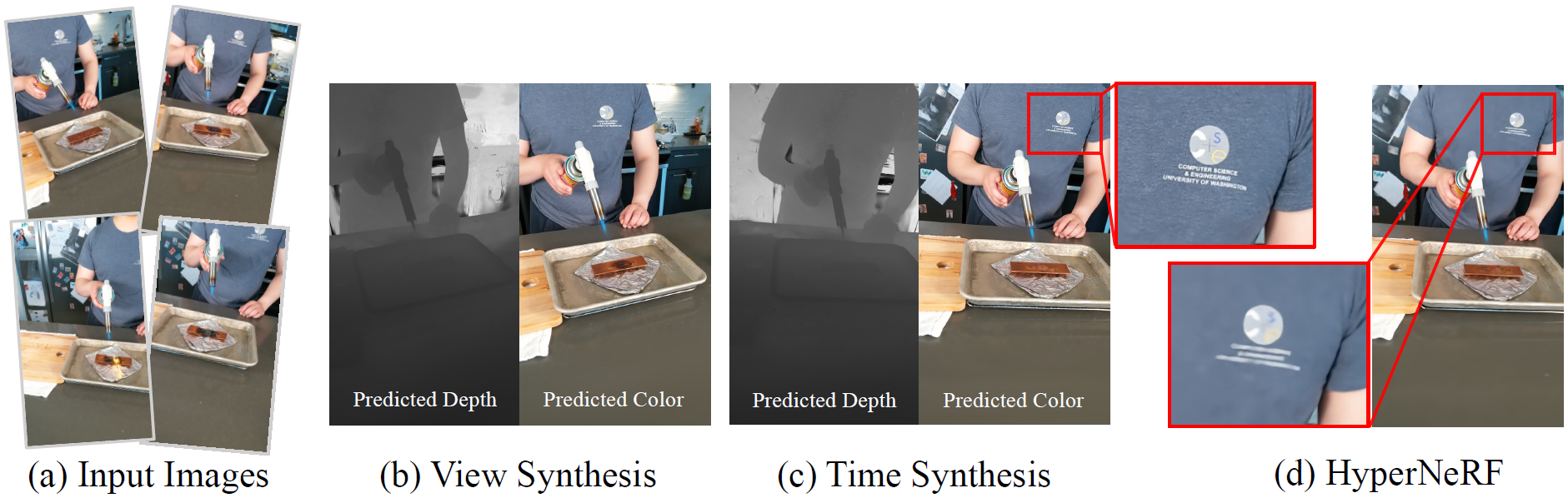

Dynamic scene reconstruction extends 3DGS by giving Gaussians time-varying attributes to model motion. Approaches range from assigning attributes frame by frame, as in the work of Luiten et al., to using deformation fields for temporal continuity, as in the work of Yang et al. Combining a canonical space with a neural deformation field models temporal deformations effectively, which makes 3DGS applicable to complex dynamic scenes, improves temporal coherence, and reduces rendering artifacts.

Figure 2: The results of Deformable3DGS showcase novel view synthesis and better time synthesis quality compared to prior methods.
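The canonical-space idea described above can be sketched as follows. Gaussians are stored once in a canonical configuration, and a deformation field maps each canonical position and a time value to an offset. In methods of this kind the field is a learned neural network; here a fixed analytic function stands in for it, so `toy_field` and `positions_at_time` are hypothetical names and the motion is made up purely for illustration.

```python
import math

def toy_field(position, t):
    """Stand-in for a learned deformation field (assumption, not a real model).

    Returns a 3D offset for a canonical position at time t in [0, 1].
    """
    x, _, _ = position
    return (0.0, 0.1 * math.sin(2.0 * math.pi * t + x), 0.0)

def positions_at_time(canonical_positions, t, field=toy_field):
    """Deform canonical Gaussian centers to their positions at time t."""
    deformed = []
    for p in canonical_positions:
        offset = field(p, t)
        deformed.append(tuple(pi + di for pi, di in zip(p, offset)))
    return deformed
```

Because every frame is derived from the same canonical set through a function that is continuous in `t`, nearby time steps produce nearby configurations, which is the source of the temporal coherence noted above. A full method would typically deform rotation and scale as well, not only position.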

Challenges and Future Directions

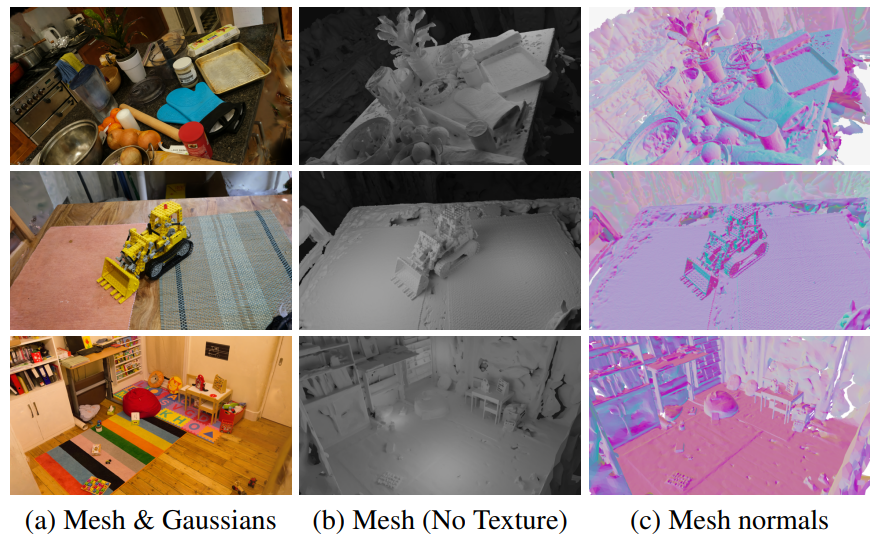

Despite these advances, 3DGS still faces challenges in generality and quality. For instance, sparse-view reconstruction remains difficult and is only partially addressed by methods that rely on depth augmentation. The explicit representation is efficient, but alias-free rendering and geometric accuracy need to improve before 3DGS is comparable with traditional mesh-based or implicit methods in detailed scene modeling.

Looking forward, the integration of more robust inverse rendering techniques, potentially leveraging machine learning advancements and 3D data priors, could further elevate the quality and applicability of 3DGS. Enhancements in memory and computational efficiency will also be crucial in enabling scalable applications across varied devices and platforms.

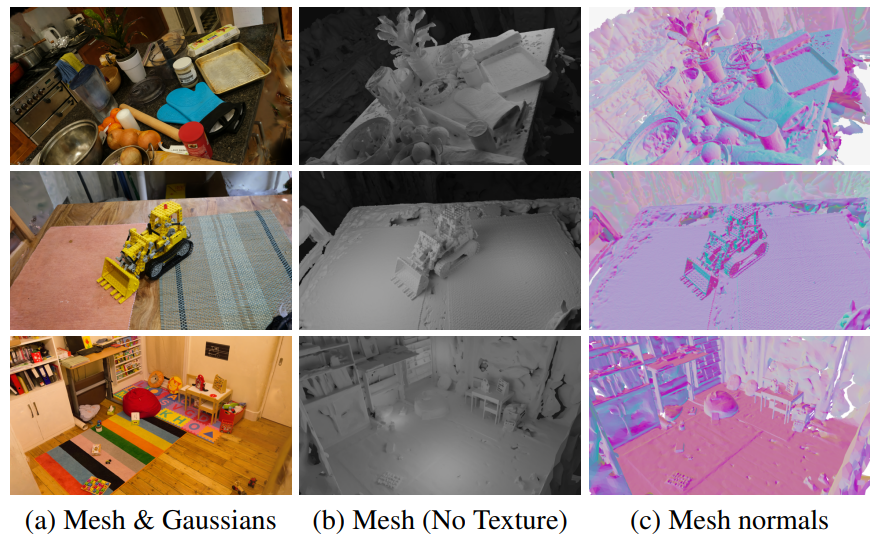

Figure 3: Geometry reconstruction results by SuGaR demonstrate the integration of 3D Gaussian splatting into geometry reconstruction pipelines.

Conclusion

The progression of 3D Gaussian Splatting from traditional point-based methods to a versatile tool for real-time rendering shows its potential across applications. If its current limitations are overcome and it is combined effectively with neural networks and advanced computational techniques, 3DGS could become a pivotal technology in graphics and beyond. As research continues, the push toward higher fidelity and efficiency will likely make 3DGS an integral part of the visualization and simulation toolkit.