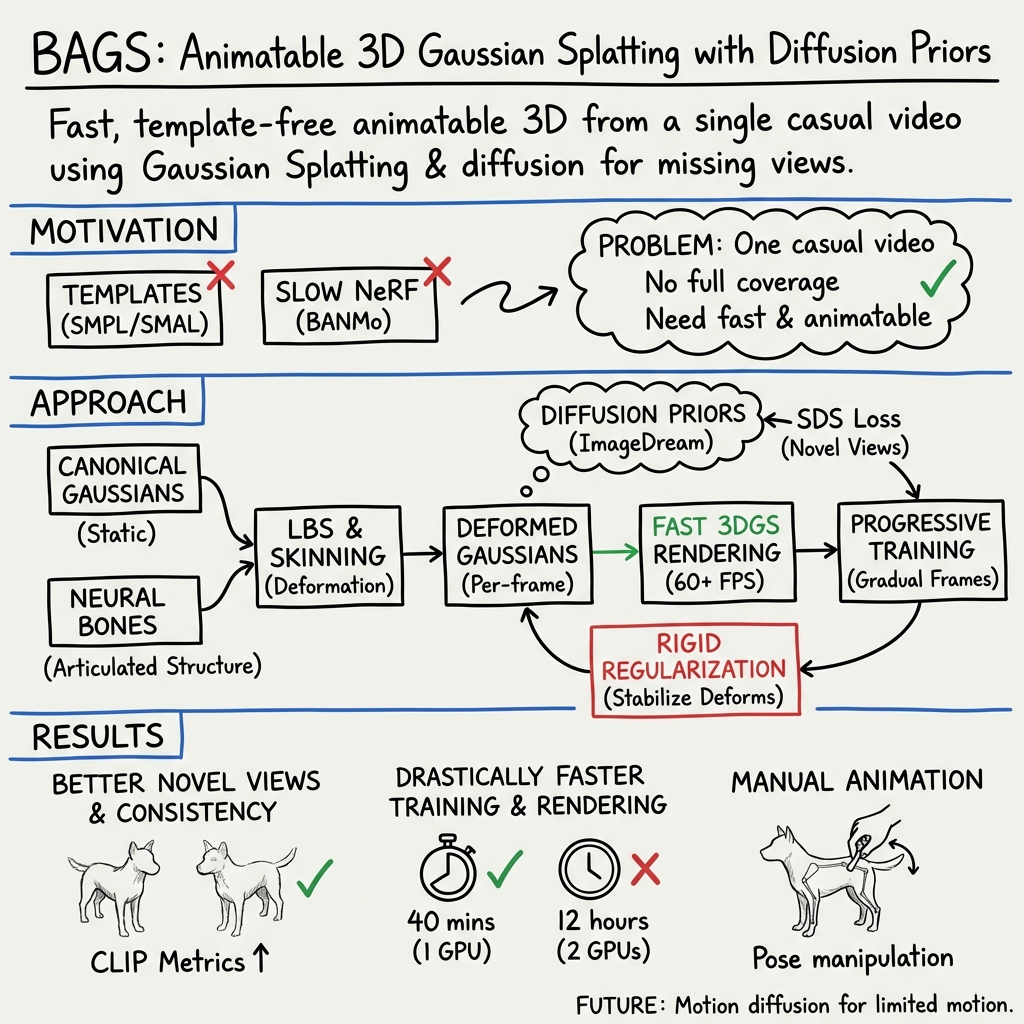

- The paper presents a method to build animatable 3D models from monocular video using 3D Gaussian splatting reinforced by diffusion priors.

- It employs neural bones and Mahalanobis-based skinning weights to animate models without relying on extensive 3D scan datasets.

- Experiments show better geometry and shorter optimization time than state-of-the-art methods, with real-time rendering on a single 40 GB A100 GPU.

BAGS: An Approach for Constructing Animatable 3D Models using Gaussian Splatting and Diffusion Priors

Introduction

Constructing animatable 3D models is central to applications ranging from augmented reality to digital content creation. Traditional acquisition methods are labor-intensive, demand considerable expert knowledge, and can still yield models that lack realism. Recent methods construct animatable 3D models from monocular videos, which eases some of these challenges, but they often fall short because they depend on extensive view coverage in the input and are computationally expensive to train and render. To address these limitations, the paper proposes BAGS (Building Animatable Gaussian Splatting from a Monocular Video with Diffusion Priors), which significantly accelerates training and rendering. It adopts a 3D Gaussian representation and leverages diffusion priors to learn 3D models from limited viewpoints.

Methodology

Neural Bone and Skinning Weight

A core component of BAGS is its approach to animating 3D Gaussian Splatting. The method represents neural bones as Gaussian ellipsoids instead of relying on the parameters of a parametric model, which removes the need for extensive 3D scan datasets covering each object category. Multi-Layer Perceptrons (MLPs) predict the bone parameters from a positional embedding, giving a representation flexible enough for varied objects and actions. Skinning weights are computed from the Mahalanobis distance to each bone, and Linear Blend Skinning (LBS) then deforms the 3D Gaussian model to the target pose, so the animation adapts to the motion observed in the input video.
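The skinning step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: each bone is assumed to be an ellipsoid with a center, a rotation matrix, and per-axis scales; weights are assumed to be a softmax over negative squared Mahalanobis distances (the `temperature` parameter is hypothetical); and each bone carries a rigid transform `(R, t)`. All function and field names are illustrative, and only the Python standard library is used.

```python
import math

def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-vector."""
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]

def mahalanobis_sq(x, bone):
    """Squared Mahalanobis distance from point x to an ellipsoidal bone."""
    offset = [x[i] - bone["center"][i] for i in range(3)]
    local = mat_vec(bone["rotation"], offset)  # express the offset in the bone frame
    return sum((local[i] / bone["scales"][i]) ** 2 for i in range(3))

def skinning_weights(x, bones, temperature=1.0):
    """Softmax over negative squared distances: nearer bones get larger weights."""
    logits = [-mahalanobis_sq(x, b) / temperature for b in bones]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def linear_blend_skinning(x, weights, transforms):
    """Blend the per-bone rigid transforms (R, t) applied to x, using the weights."""
    out = [0.0, 0.0, 0.0]
    for w, (rot, trans) in zip(weights, transforms):
        moved = mat_vec(rot, x)
        for i in range(3):
            out[i] += w * (moved[i] + trans[i])
    return out

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Two hypothetical bones placed symmetrically about the origin.
bones = [
    {"center": [-1.0, 0.0, 0.0], "rotation": IDENTITY, "scales": [0.5, 0.5, 0.5]},
    {"center": [1.0, 0.0, 0.0], "rotation": IDENTITY, "scales": [0.5, 0.5, 0.5]},
]
# Bone 0 stays put; bone 1 translates by 2 units along y.
transforms = [(IDENTITY, [0.0, 0.0, 0.0]), (IDENTITY, [0.0, 2.0, 0.0])]

point = [0.0, 0.0, 0.0]
weights = skinning_weights(point, bones)
posed = linear_blend_skinning(point, weights, transforms)
```

A point midway between the two bones receives equal weights, so it moves halfway along bone 1's translation. A point located at a bone's center is dominated almost entirely by that bone.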

Integration of Diffusion Priors

Diffusion priors address the insufficient view coverage typical of casual monocular videos. By synthesizing novel-view images conditioned on the input image, BAGS compensates for the views of the object that the video does not cover. Applying diffusion priors naively, however, may introduce inconsistencies. To counteract this, the method introduces a rigid regularization that keeps transformations as close to rigid as possible, which improves model fidelity.
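One generic way to encourage near-rigid motion is to penalize changes in distance between neighboring points before and after deformation. The sketch below illustrates that idea only. The exact form of the paper's regularization term is not given here, so this should not be read as the authors' loss; `rigidity_loss`, `neighbor_pairs`, and the sample points are all hypothetical.

```python
import math

def rigidity_loss(rest_points, deformed_points, neighbor_pairs):
    """Mean squared change in distance over the given pairs of point indices.

    A rigid motion (rotation plus translation) preserves every pairwise
    distance, so it yields zero; stretching or shearing yields a positive value.
    """
    if not neighbor_pairs:
        return 0.0
    total = 0.0
    for i, j in neighbor_pairs:
        d_rest = math.dist(rest_points[i], rest_points[j])
        d_deformed = math.dist(deformed_points[i], deformed_points[j])
        total += (d_deformed - d_rest) ** 2
    return total / len(neighbor_pairs)

# Three hypothetical Gaussian centers and the pairs treated as neighbors.
rest = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
pairs = [(0, 1), (0, 2), (1, 2)]

# A pure translation is rigid; scaling x by 2 is not.
translated = [(x + 1.0, y + 2.0, z + 3.0) for x, y, z in rest]
stretched = [(2.0 * x, y, z) for x, y, z in rest]

loss_rigid = rigidity_loss(rest, translated, pairs)
loss_stretched = rigidity_loss(rest, stretched, pairs)
```

In an optimization loop, a term of this kind would be added to the reconstruction objective with a weighting factor, trading off fidelity to the synthesized views against deviation from rigid motion.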

Experimental Evaluation

BAGS was evaluated extensively on a collected dataset of real-world videos featuring a diverse range of animal species. It outperformed current state-of-the-art methods in geometry, appearance, and animation quality, notably BANMo, a closely related baseline. BAGS achieved higher similarity scores to reference images and better consistency across novel-view images, while drastically reducing optimization time and enabling real-time rendering on a single 40 GB A100 GPU.

Conclusion and Future Work

The BAGS framework represents a significant step forward in constructing animatable 3D models from monocular videos. By leveraging 3D Gaussian Splatting and diffusion priors, it addresses critical challenges that have hindered previous methods and offers a more efficient and robust solution. Future work could integrate motion diffusion models to further improve how well the constructed models animate, especially when the input video contains limited motion. Mitigating the artifacts introduced by diffusion models will also be essential for advancing 3D reconstruction from monocular video.