Evolutionary Optimization of Model Merging Recipes

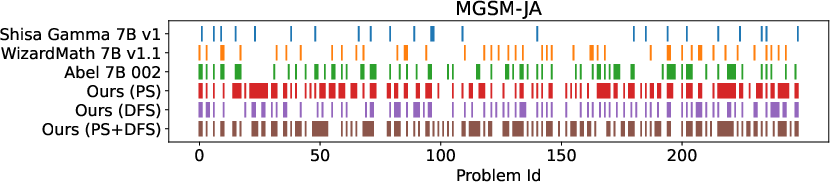

Abstract: Large language models (LLMs) have become increasingly capable, but their development often requires substantial computational resources. While model merging has emerged as a promising, cost-effective approach for creating new models by combining existing ones, it currently relies on human intuition and domain knowledge, which limits its potential. Here, we propose an evolutionary approach that overcomes this limitation by automatically discovering effective combinations of diverse open-source models, harnessing their collective intelligence without requiring extensive additional training data or compute. Our approach operates in both parameter space and data flow space, allowing for optimization beyond just the weights of the individual models. This approach even facilitates cross-domain merging, generating models such as a Japanese LLM with math reasoning capabilities. Surprisingly, our Japanese Math LLM achieved state-of-the-art performance on a variety of established Japanese LLM benchmarks, even surpassing models with significantly more parameters, despite not being explicitly trained for such tasks. Furthermore, a culturally aware Japanese vision-language model (VLM) generated through our approach is effective at describing Japanese culture-specific content, outperforming previous Japanese VLMs. This work not only contributes new state-of-the-art models back to the open-source community, but also introduces a new paradigm for automated model composition, paving the way for exploring alternative, efficient approaches to foundation model development.
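To make the idea of evolutionary search in parameter space concrete, the following is a minimal toy sketch, not the method used in this work. It assumes two tiny "models" stored as dictionaries of per-layer weight lists, merges them by per-layer linear interpolation, and searches for the interpolation coefficients with a simple elitist (1+λ) evolution strategy. The names `merge`, `evolve`, and `toy_fitness`, as well as the hidden target used as a stand-in for a benchmark score, are all hypothetical and chosen only for illustration.

```python
import random

def merge(model_a, model_b, alphas):
    """Per-layer linear interpolation: alpha * A + (1 - alpha) * B."""
    return {
        name: [a * wa + (1.0 - a) * wb
               for wa, wb in zip(model_a[name], model_b[name])]
        for name, a in zip(model_a, alphas)
    }

def evolve(model_a, model_b, fitness, generations=60, children=16,
           sigma=0.2, seed=0):
    """Elitist (1+lambda) evolution strategy over per-layer coefficients."""
    rng = random.Random(seed)
    parent = [0.5] * len(model_a)          # start from a plain average
    parent_score = fitness(merge(model_a, model_b, parent))
    for _ in range(generations):
        best_child, best_child_score = None, float("-inf")
        for _ in range(children):
            # Gaussian mutation, clipped so coefficients stay in [0, 1].
            child = [min(1.0, max(0.0, a + rng.gauss(0.0, sigma)))
                     for a in parent]
            score = fitness(merge(model_a, model_b, child))
            if score > best_child_score:
                best_child, best_child_score = child, score
        # Elitism: the parent is replaced only by a strictly better child.
        if best_child_score > parent_score:
            parent, parent_score = best_child, best_child_score
    return parent, parent_score

# Toy source "models" (assumption: two layers with two weights each).
model_a = {"layer0": [1.0, 0.0], "layer1": [0.0, 2.0]}
model_b = {"layer0": [0.0, 1.0], "layer1": [2.0, 0.0]}

# Hidden target standing in for "weights that score well on a benchmark".
target = merge(model_a, model_b, [0.8, 0.3])

def toy_fitness(merged):
    """Negative squared error to the hidden target (higher is better)."""
    return -sum((w - t) ** 2
                for name in merged
                for w, t in zip(merged[name], target[name]))

baseline_score = toy_fitness(merge(model_a, model_b, [0.5, 0.5]))
best_alphas, best_score = evolve(model_a, model_b, toy_fitness)
print("baseline:", baseline_score)
print("evolved coefficients:", best_alphas, "score:", best_score)
```

In a realistic setting the fitness function would be an evaluation on held-out task data rather than a distance to known weights, and the search would typically use a stronger optimizer than this hand-rolled strategy. The sketch covers only parameter-space merging; it does not illustrate merging in data flow space.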

