Emergent World Models and Latent Variable Estimation in Chess-Playing Language Models

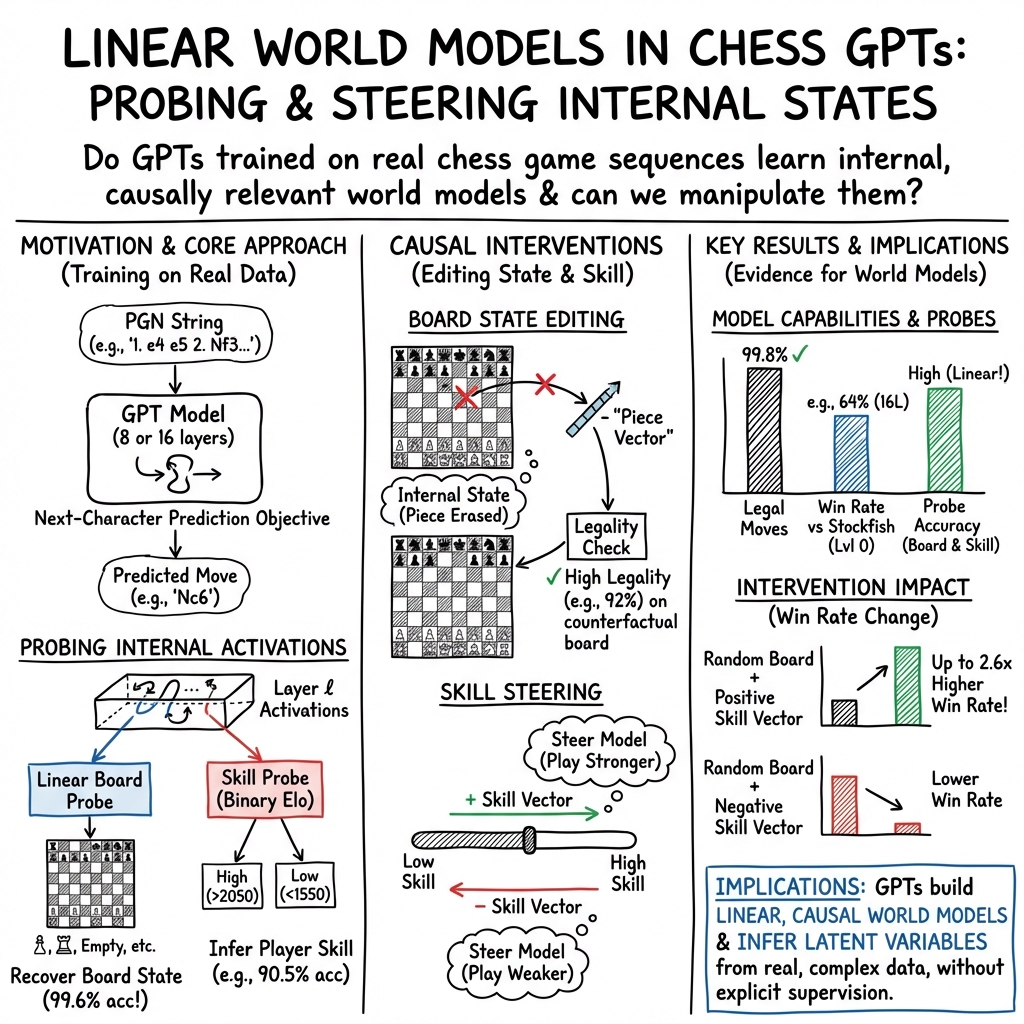

Abstract: LLMs have shown unprecedented capabilities, sparking debate over the source of their performance. Is it merely the outcome of learning syntactic patterns and surface-level statistics, or do they extract semantics and a world model from the text? Prior work by Li et al. investigated this by training a GPT model on synthetic, randomly generated Othello games and found that the model learned an internal representation of the board state. We extend this work into the more complex domain of chess, training on real games and investigating our model's internal representations using linear probes and contrastive activations. The model is given no a priori knowledge of the game and is solely trained on next character prediction, yet we find evidence of internal representations of board state. We validate these internal representations by using them to make interventions on the model's activations and edit its internal board state. Unlike Li et al.'s prior synthetic dataset approach, our analysis finds that the model also learns to estimate latent variables like player skill to better predict the next character. We derive a player skill vector and add it to the model, improving the model's win rate by up to 2.6 times.

Explain it Like I'm 14

What is this paper about?

This paper explores a big question about AI language models like GPT: do they just copy patterns from text, or do they build an inner “world model” that helps them understand what’s going on? To test this, the authors trained a small GPT-like model only to guess the next character in chess game transcripts. Even without being taught chess rules, the model learned to play legal, sensible moves. More importantly, it seemed to keep track of the chessboard in its “mind.”
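To make "guess the next character" concrete, here is a minimal sketch of how a game transcript could be cut into training examples. The function name `next_char_pairs` and the `context_len` parameter are made up for illustration; the paper's actual data pipeline may look quite different.

```python
def next_char_pairs(pgn_text, context_len):
    """Build (context, target) pairs for next-character prediction.

    Hypothetical helper: each target is a single character, and the
    context is the (up to) `context_len` characters that precede it.
    """
    pairs = []
    for i in range(1, len(pgn_text)):
        context = pgn_text[max(0, i - context_len):i]
        pairs.append((context, pgn_text[i]))
    return pairs


game = "1.e4 e5 2.Nf3"
pairs = next_char_pairs(game, context_len=8)

# Show the first few examples the model would be asked to solve.
for context, target in pairs[:4]:
    print(repr(context), "->", repr(target))
```

Nothing in these pairs says what a knight is or which moves are legal. Whatever the model learns about chess, it has to work out from strings like these.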

What questions did the researchers ask?

To make this simple, think of the model as a student who only reads game notes and tries to guess the next symbol.

They asked:

- Does the model form an internal picture of the chessboard as the game goes on?

- Can we “read” that internal picture from the model’s hidden layers?

- Does the model also pick up hidden facts about the game, like the players’ skill level, to help it predict moves?

- If we nudge the model’s internal state, can we change how it plays—like erasing a piece from its memory or making it play stronger or weaker?

How did they study this?

Imagine peeking inside the model’s “brain” while it’s reading a chess game.

- Training:

  - They trained two small GPTs (8-layer and 16-layer; much smaller than modern chatbots) on 16 million real chess games from Lichess.

  - The model saw chess in PGN format (the text version of moves like “1.e4 e5 2.Nf3…”), one character at a time, and learned to predict the next character. It was not told any rules.

- Reading the model’s mind (linear probes):

  - A “linear probe” is a very simple tool, like a basic meter, that looks at a model’s hidden activations and tries to tell what’s on each square of the board (empty, white knight, black queen, etc.).

  - If a simple probe can accurately tell the board state, that suggests the model has a clean, readable internal representation of the chessboard.

- Hidden traits (latent variables):

  - They also tried to see if the model estimated “latent” or hidden features, like the players’ Elo ratings (skill levels), because knowing skill can help predict the kinds of moves players make.

- Interventions (steering the model):

  - “Interventions” are like tiny edits to the model’s internal activity while it’s thinking.

  - They tried two kinds:

    1. Board-state edits: delete a specific piece from the model’s internal board, then see if it adjusts its moves accordingly.

    2. Skill edits: add a “skill vector” to make the model play better, or subtract it to make it play worse.
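The linear probe idea from the list above can be sketched in a few lines. This is only an illustration under loud assumptions: the "activations" are synthetic random vectors, not real hidden states from a chess model, and `train_linear_probe` and `probe_accuracy` are hypothetical helpers, not the authors' code. The probe is a plain softmax classifier that guesses one of 13 square states (empty, six white piece types, six black piece types) for a single square.

```python
import numpy as np


def train_linear_probe(acts, labels, n_classes, lr=0.1, steps=300):
    """Fit a softmax linear classifier (the probe) by gradient descent."""
    n, d = acts.shape
    W = np.zeros((d, n_classes))
    b = np.zeros(n_classes)
    onehot = np.eye(n_classes)[labels]
    for _ in range(steps):
        logits = acts @ W + b
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n  # gradient of mean cross-entropy
        W -= lr * (acts.T @ grad)
        b -= lr * grad.sum(axis=0)
    return W, b


def probe_accuracy(W, b, acts, labels):
    """Fraction of examples where the probe's top guess is correct."""
    return float(((acts @ W + b).argmax(axis=1) == labels).mean())


# ASSUMPTION: synthetic stand-in for hidden activations. Each square state
# gets its own random direction, and noise is added on top.
rng = np.random.default_rng(0)
d_model, n_classes, n = 64, 13, 2000
class_dirs = rng.normal(size=(n_classes, d_model))
labels = rng.integers(0, n_classes, size=n)
acts = class_dirs[labels] + 0.5 * rng.normal(size=(n, d_model))

W, b = train_linear_probe(acts[:1500], labels[:1500], n_classes)
acc = probe_accuracy(W, b, acts[1500:], labels[1500:])
print(f"held-out probe accuracy: {acc:.3f}")
```

The probe has no hidden layers, so it can only succeed if the information is already laid out in a simple, linear way. That is why high probe accuracy counts as evidence about the model rather than about the probe.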

Analogy: Think of the model’s activations as a sketchbook. Probes are like simple rulers reading the drawing. Interventions are like erasing or darkening parts of the sketch to see how the final picture changes.
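The "darkening" kind of edit (the skill edit) can be sketched as follows. The abstract mentions contrastive activations; one common way to build such a vector, assumed here, is to subtract the average low-skill activation from the average high-skill activation. The data below is synthetic, and `skill_vector`, `steer`, and `skill_score` are hypothetical names rather than the authors' implementation.

```python
import numpy as np


def skill_vector(high_acts, low_acts):
    """Contrastive direction: mean high-skill minus mean low-skill activation."""
    return high_acts.mean(axis=0) - low_acts.mean(axis=0)


def steer(activation, vector, scale):
    """Add the vector (scale > 0) or subtract it (scale < 0)."""
    return activation + scale * vector


# ASSUMPTION: synthetic activations in which "skill" lives along one
# hidden direction, true_dir, that the sketch pretends not to know.
rng = np.random.default_rng(1)
d_model = 32
true_dir = rng.normal(size=d_model)
true_dir /= np.linalg.norm(true_dir)
high = rng.normal(size=(500, d_model)) + 2.0 * true_dir
low = rng.normal(size=(500, d_model)) - 2.0 * true_dir

v = skill_vector(high, low)
cosine = float(v @ true_dir / np.linalg.norm(v))

x = rng.normal(size=d_model)  # one activation to edit
stronger = steer(x, v, scale=1.0)
weaker = steer(x, v, scale=-1.0)


def skill_score(a):
    """Position of an activation along the hidden skill direction."""
    return float(a @ true_dir)


print(f"cosine(v, true_dir) = {cosine:.3f}")
print(skill_score(weaker), skill_score(x), skill_score(stronger))
```

In a real model the edit would be applied to hidden activations during generation, and the `scale` would need tuning: too small does nothing, too large can break the model's play.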

What did they find?

Here are the main takeaways:

- The model learned chess from scratch:

  - It played legal moves almost all the time (about 99.6–99.8%).

  - The larger model (16 layers) beat a beginner-level setting of the Stockfish engine about 64% of the time; the smaller one about 46%.

  - It wasn’t just memorizing: its games diverged from the training data early (by move 10, all tested games were unique).

- The model kept an internal board:

  - Simple linear probes could read the board state from the model’s hidden layers with very high accuracy (up to 99.6%).

  - This means the model “knows” where pieces are, in a neat, readable way inside its activations.

- The model estimated player skill:

  - Probes could classify whether players were low or high Elo at much better-than-chance levels (around 90% vs ~70% for a random baseline).

  - The model likely uses this hidden skill estimate to choose more realistic next moves.

- Interventions worked:

  - Board edits: When the researchers “erased” a piece from the model’s internal state and from the actual board, the model adjusted and made moves that were much more likely to be legal for the new, edited position. Legal move rate jumped to about 92% versus ~40% without the internal edit.

  - Skill edits: Adding a “high-skill” vector made the model play better; subtracting it made it play worse. On messy, randomly started positions, the 16-layer model’s win rate rose from ~16.7% to ~43.2% (about 2.6× better) with the positive skill edit.
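A board edit like the one described above can be pictured as removing a "piece direction" from an activation. The sketch below shows one plausible way to do that, not necessarily the authors' exact procedure: it assumes a probe has already given us one direction for "white knight on this square" and one for "empty square", strips out the knight component, and nudges the activation toward "empty". All vectors are synthetic, and `erase_piece` is a hypothetical helper.

```python
import numpy as np


def erase_piece(activation, piece_dir, empty_dir, scale=1.0):
    """Remove the component along piece_dir, then push toward empty_dir."""
    unit = piece_dir / np.linalg.norm(piece_dir)
    cleaned = activation - (activation @ unit) * unit
    return cleaned + scale * empty_dir


# ASSUMPTION: two orthonormal directions stand in for probe weights
# for a single square.
rng = np.random.default_rng(2)
d_model = 16
q, _ = np.linalg.qr(rng.normal(size=(d_model, 2)))
empty_dir, knight_dir = q[:, 0], q[:, 1]
probe = np.stack([empty_dir, knight_dir])  # row 0 = empty, row 1 = white knight
names = ["empty", "white knight"]

# An activation that strongly encodes "white knight", plus a little noise.
activation = 3.0 * knight_dir + 0.1 * rng.normal(size=d_model)

before = int((probe @ activation).argmax())
edited = erase_piece(activation, knight_dir, empty_dir)
after = int((probe @ edited).argmax())

print("probe reads before edit:", names[before])
print("probe reads after edit: ", names[after])
```

The real test in the study is harder than this toy check: after the edit, the model itself has to choose moves that are legal on the edited board, which is where the jump from ~40% to about 92% legal moves comes from.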

Why is this important?

- It shows LLMs can build internal “world models” even from plain text in complex settings like chess—without being told the rules.

- The model didn’t just track the board; it also inferred player skill, a hidden trait that helps with better predictions.

- Because researchers could read and edit these internal representations, it suggests we can make models more understandable and steerable, not just mysterious black boxes.

What could this mean for the future?

- Better transparency: If we can detect and edit what a model “knows,” we can make AI more reliable and safer—like reducing mistakes or “hallucinations” in other tasks.

- Practical steering: The same techniques might help tune models’ behavior in language, not just games—e.g., making them more careful, truthful, or detailed on demand.

- Limits still exist: Chess is structured and rule-based; real-world language is messier. But this is a promising step toward understanding and controlling what large models learn internally.
