Creating a Digital Twin of Spinal Surgery: A Proof of Concept

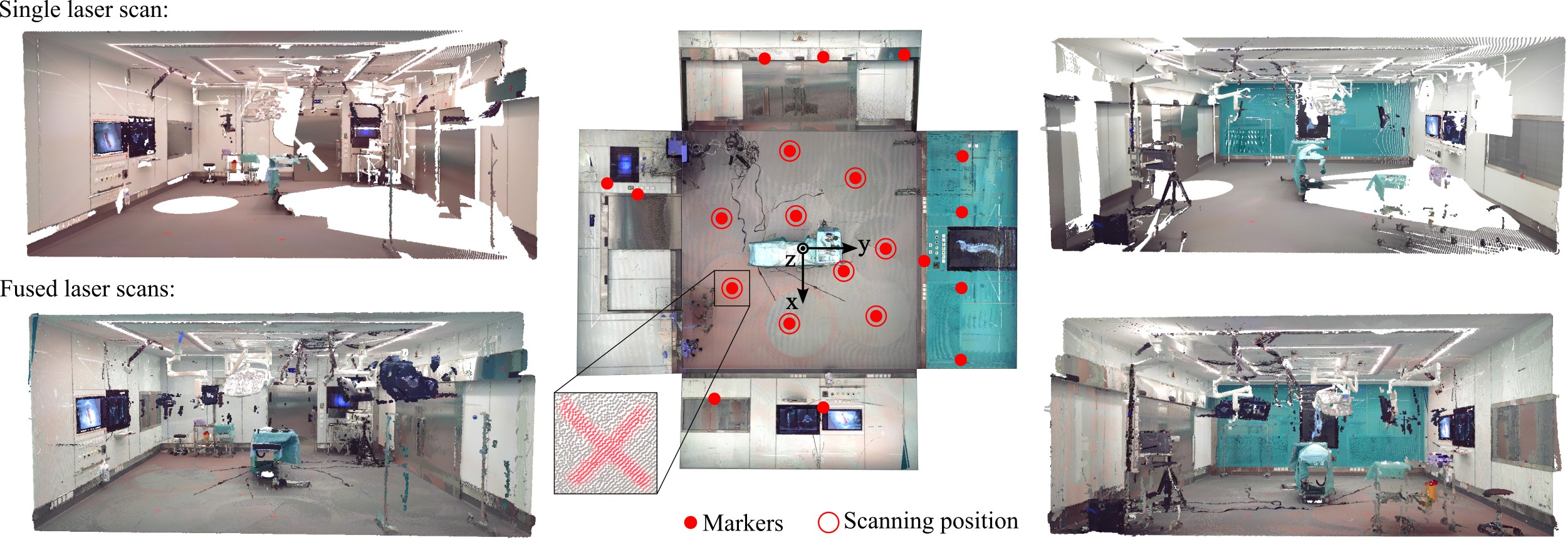

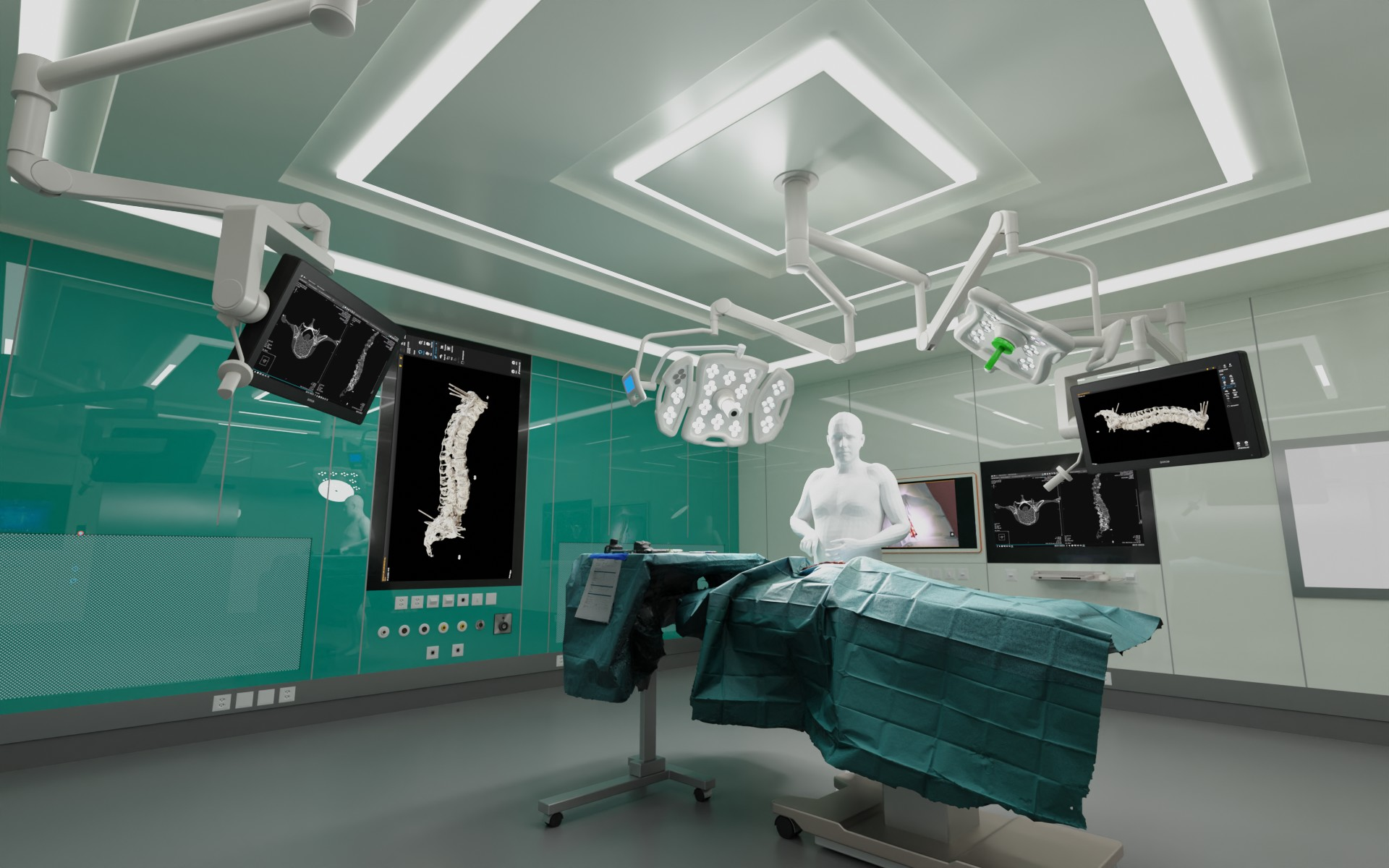

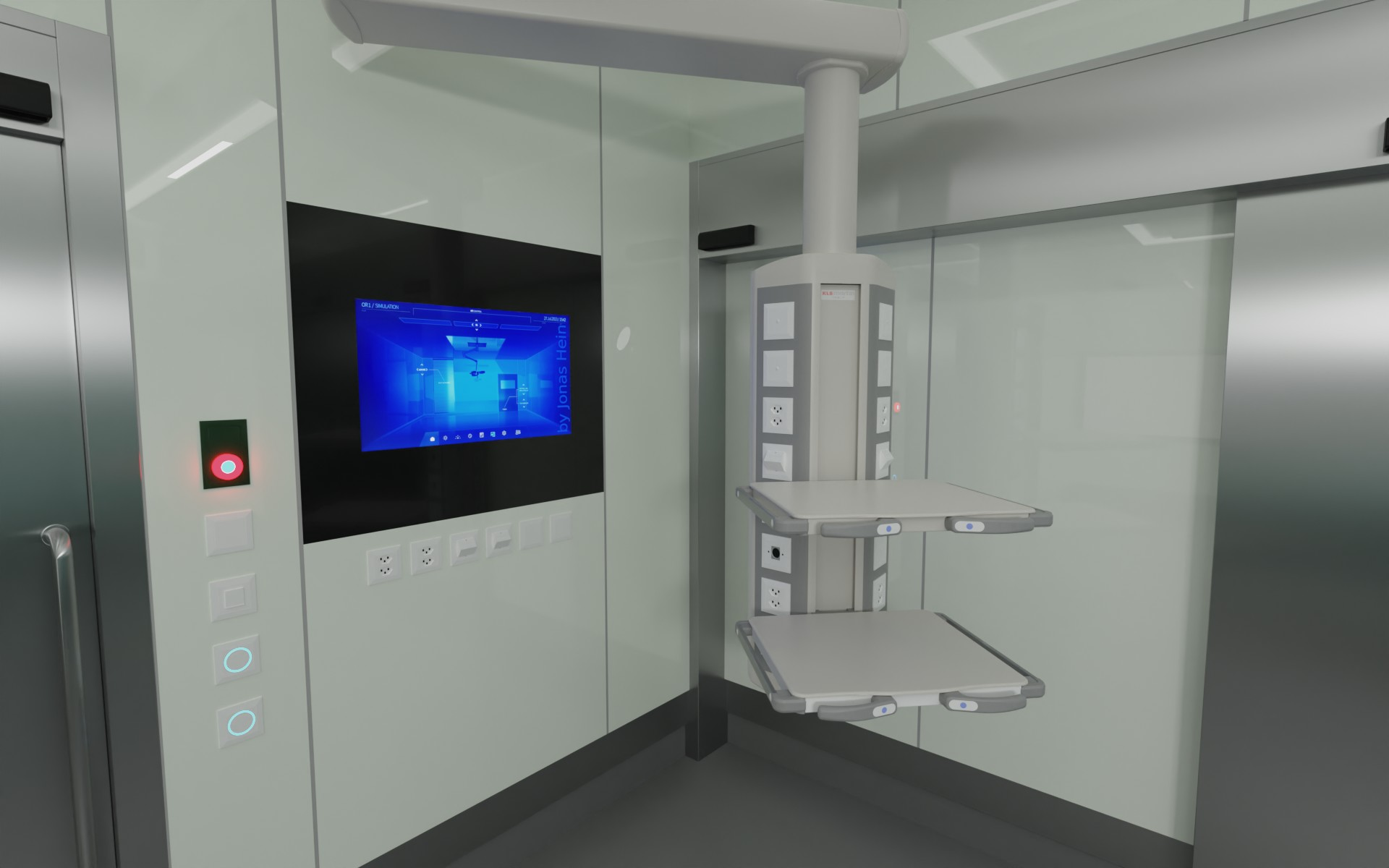

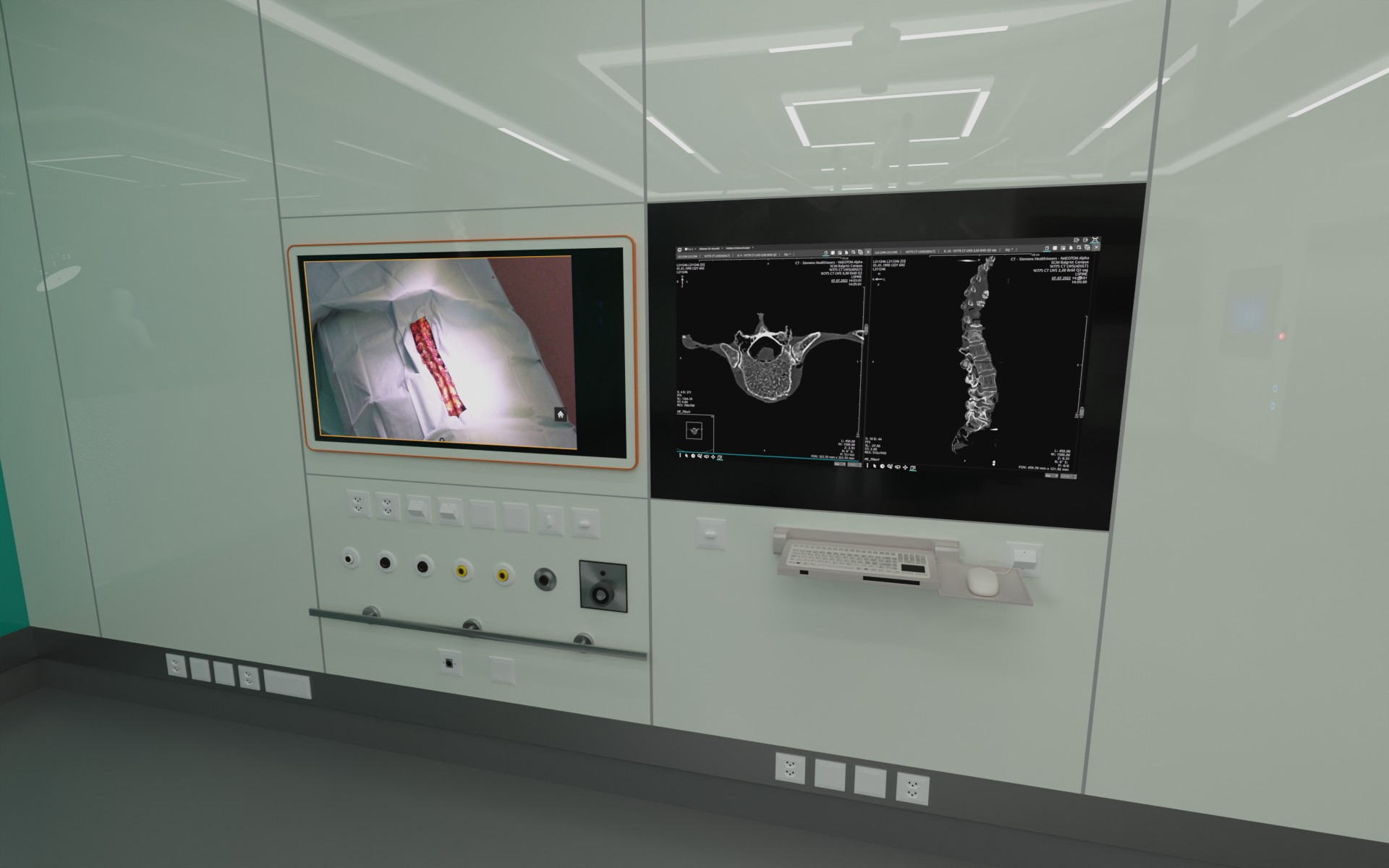

Abstract: Surgery digitalization is the process of creating a virtual replica of a real-world surgery, also referred to as a surgical digital twin (SDT). SDTs have significant applications in fields such as education and training, surgical planning, and the automation of surgical tasks. In addition, SDTs are an ideal foundation for machine learning methods because they enable the automatic generation of training data. In this paper, we present a proof of concept (PoC) for surgery digitalization applied to an ex-vivo spinal surgery. The proposed digitalization focuses on acquiring and modelling the geometry and appearance of the entire surgical scene. We employ five RGB-D cameras for dynamic 3D reconstruction of the surgeon, a high-end camera for 3D reconstruction of the anatomy, an infrared stereo camera for surgical instrument tracking, and a laser scanner for 3D reconstruction of the operating room and for data fusion. We justify the proposed methodology, discuss the challenges we faced, and outline further extensions of our prototype. Although our PoC partially relies on manual data curation, the high quality of the result and its potential motivate the development of automated methods for creating SDTs.
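The abstract does not state how the multi-camera data are fused into the laser-scanned operating-room frame. Purely as an illustration, a common approach is to back-project each RGB-D depth map through pinhole intrinsics and then map the resulting points into one shared frame with a per-camera 4x4 rigid transform (for example, one estimated by registering each camera to the laser scan). This is a minimal sketch, not the authors' pipeline: the function names, the distortion-free pinhole model, metric depth in metres, and the convention that zero depth marks an invalid pixel are all assumptions.

```python
import numpy as np


def backproject_depth(depth, fx, fy, cx, cy):
    """Lift an H x W depth image (assumed metres) to an N x 3 point cloud
    in the camera frame using a distortion-free pinhole model.
    Pixels with depth <= 0 are treated as invalid and dropped (assumption)."""
    h, w = depth.shape
    # u = column index, v = row index; both arrays have shape (h, w)
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    z = depth.astype(np.float64)
    valid = z > 0
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    # Boolean masking flattens in row-major order (row by row)
    return np.stack([x[valid], y[valid], z[valid]], axis=-1)


def to_common_frame(points, T_world_cam):
    """Apply a 4x4 rigid transform (camera -> shared frame) to N x 3 points."""
    R = T_world_cam[:3, :3]
    t = T_world_cam[:3, 3]
    return points @ R.T + t


def fuse_views(views):
    """Merge several RGB-D views into one cloud in the shared frame.

    views: list of (depth, (fx, fy, cx, cy), T_world_cam) tuples
    (a hypothetical input layout chosen for this sketch)."""
    clouds = [to_common_frame(backproject_depth(d, *K), T) for d, K, T in views]
    return np.concatenate(clouds, axis=0)


if __name__ == "__main__":
    depth = np.full((2, 2), 2.0)              # toy 2 x 2 depth map, 2 m everywhere
    K = (1.0, 1.0, 0.0, 0.0)                  # toy intrinsics fx, fy, cx, cy
    T_shift = np.eye(4)
    T_shift[0, 3] = 1.0                       # second camera offset by 1 m along x
    cloud = fuse_views([(depth, K, np.eye(4)), (depth, K, T_shift)])
    print(cloud.shape)
```

In a real setup with five cameras, each extrinsic would come from calibration or registration, and the plain concatenation shown here would typically be followed by outlier removal or surface reconstruction.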
