Empowering Biomedical Discovery with AI Agents

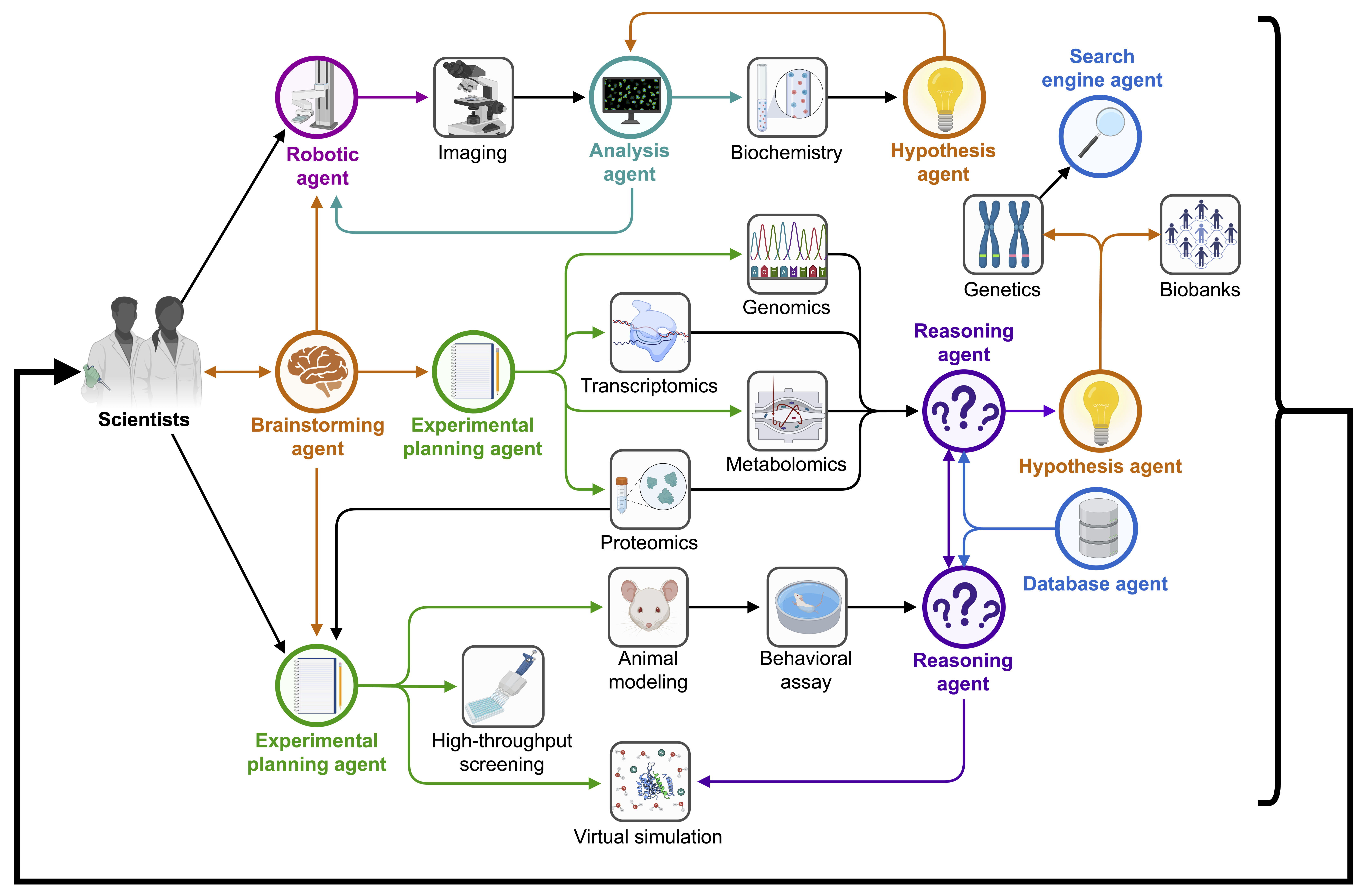

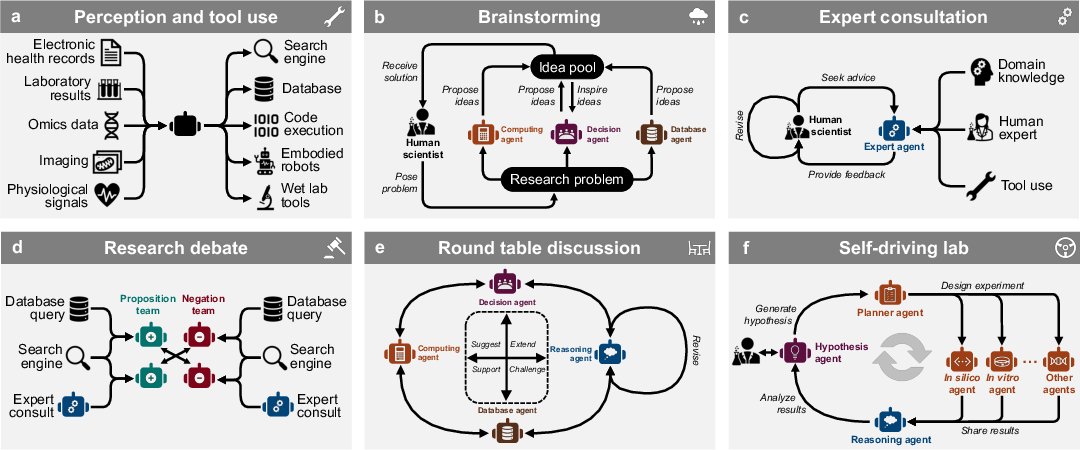

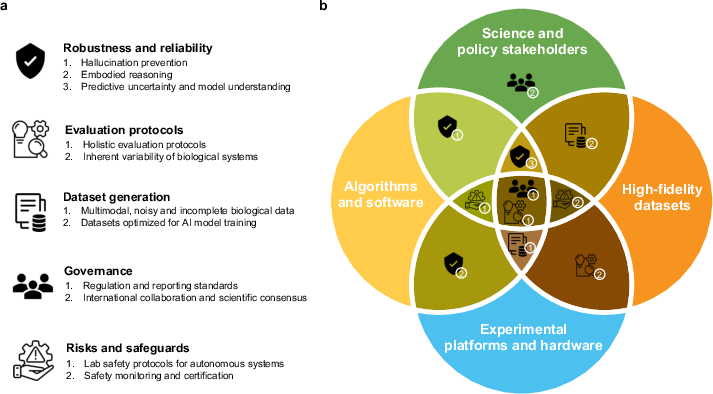

Abstract: We envision "AI scientists" as systems capable of skeptical learning and reasoning that empower biomedical research through collaborative agents, which integrate AI models and biomedical tools with experimental platforms. Rather than taking humans out of the discovery process, biomedical AI agents combine human creativity and expertise with AI's ability to analyze large datasets, navigate hypothesis spaces, and execute repetitive tasks. AI agents are poised to become proficient in a variety of tasks, including planning discovery workflows and performing self-assessment to identify and mitigate gaps in their knowledge. These agents use large language models (LLMs) and generative models, feature structured memory for continual learning, and use machine learning tools to incorporate scientific knowledge, biological principles, and theories. AI agents can impact areas ranging from virtual cell simulation, programmable control of phenotypes, and the design of cellular circuits to the development of new therapies.

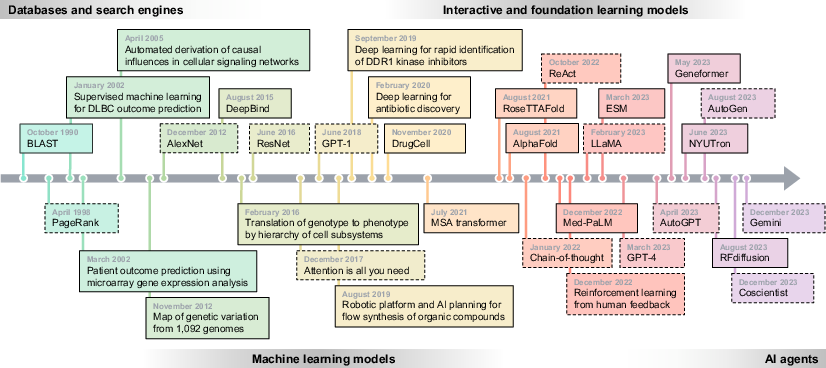

