Graph Reinforcement Learning for Combinatorial Optimization: A Survey and Unifying Perspective

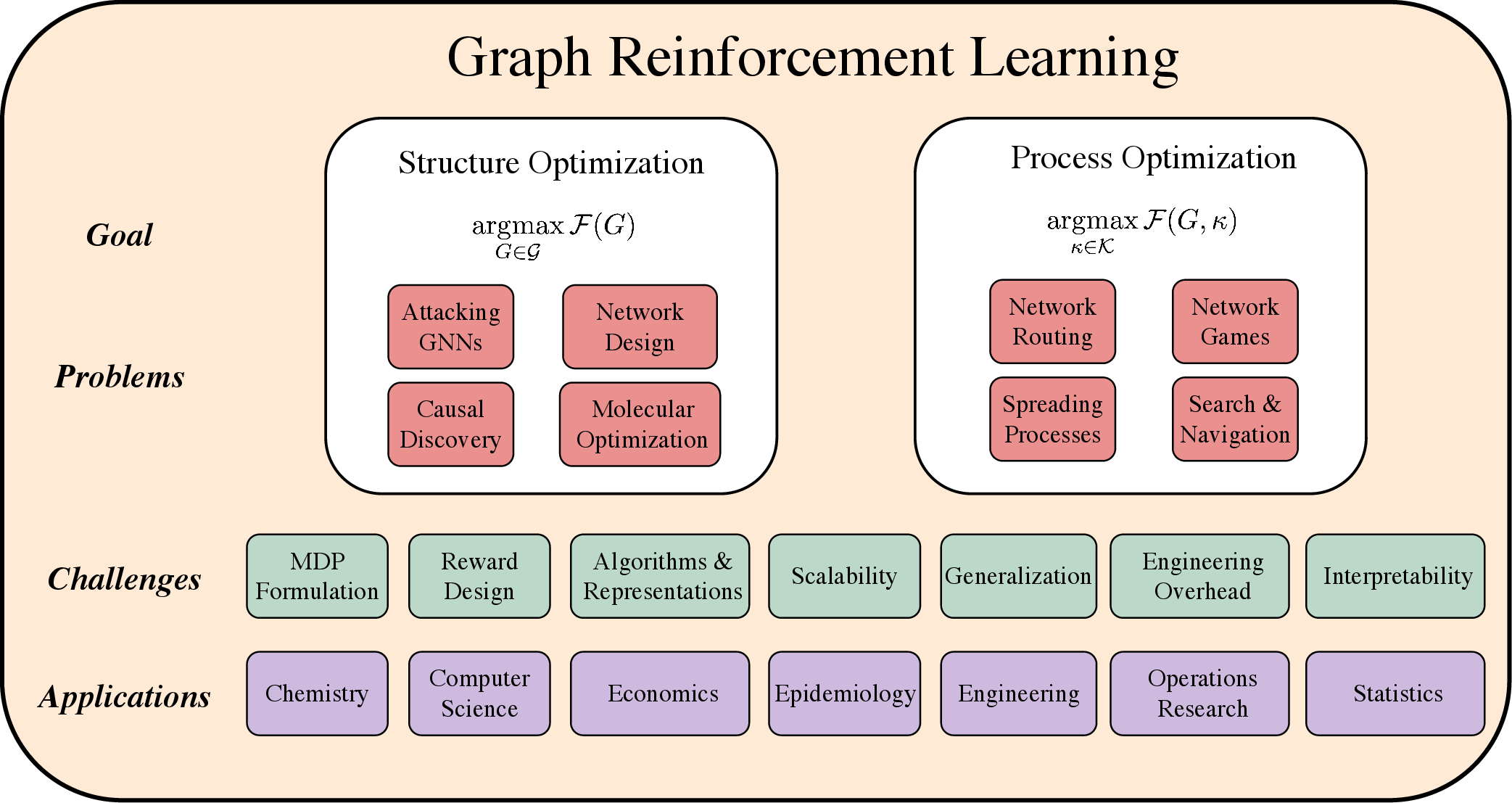

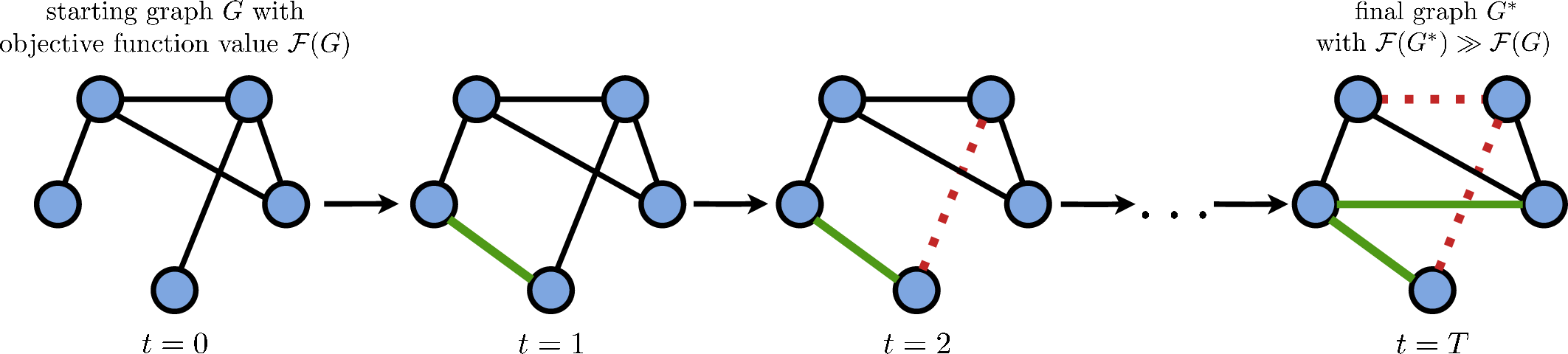

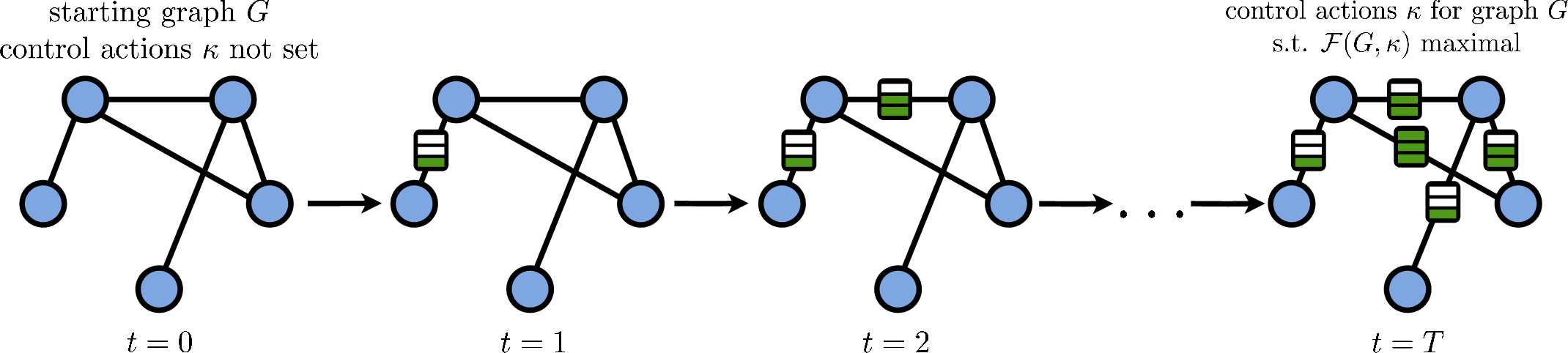

Abstract: Graphs are a natural representation for systems based on relations between connected entities. Combinatorial optimization problems, which arise when considering an objective function related to a process of interest on discrete structures, are often challenging due to the rapid growth of the solution space. The trial-and-error paradigm of Reinforcement Learning has recently emerged as a promising alternative to traditional methods, such as exact algorithms and (meta)heuristics, for discovering better decision-making strategies in a variety of disciplines including chemistry, computer science, and statistics. Despite the fact that they arose in markedly different fields, these techniques share significant commonalities. Therefore, we set out to synthesize this work in a unifying perspective that we term Graph Reinforcement Learning, interpreting it as a constructive decision-making method for graph problems. After covering the relevant technical background, we review works along the dividing line of whether the goal is to optimize graph structure given a process of interest, or to optimize the outcome of the process itself under fixed graph structure. Finally, we discuss the common challenges facing the field and open research questions. In contrast with other surveys, the present work focuses on non-canonical graph problems for which performant algorithms are typically not known and Reinforcement Learning is able to provide efficient and effective solutions.

