- The paper presents a comprehensive review of memory mechanisms, highlighting writing, management, and reading operations in LLM-based agents.

- It details memory's role in self-evolution and context maintenance across applications like personal assistants, role-playing, and simulation tasks.

- The survey evaluates memory implementations through direct and indirect methods and outlines future research directions for enhanced memory modules.

A Survey on the Memory Mechanism of LLM-Based Agents

Introduction

The paper "A Survey on the Memory Mechanism of LLM-Based Agents" presents a comprehensive overview of the memory module utilized in LLM-based agents. Memory is a critical component distinguishing LLM-based agents from standard LLMs by enabling self-evolution in dynamic environments and supporting complex agent-environment interactions.

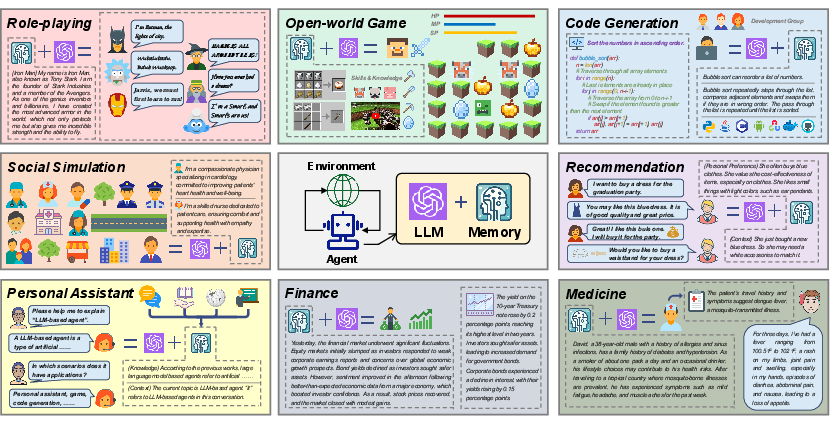

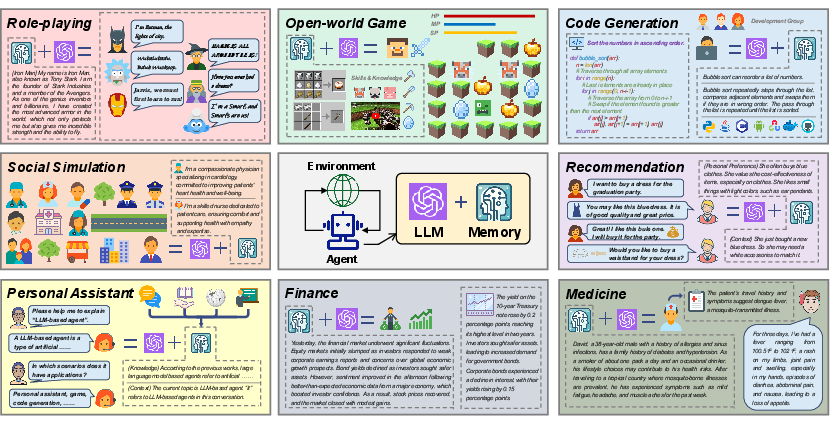

Role-playing agents, personal assistants, open-world game explorers, code generators, and expert systems in domains like medicine and finance are exemplars demonstrating the crucial role memory plays in various LLM-based applications.

Figure 1: The importance of the memory module in LLM-based agents.

What is Memory in LLM-Based Agents

Basic Definitions

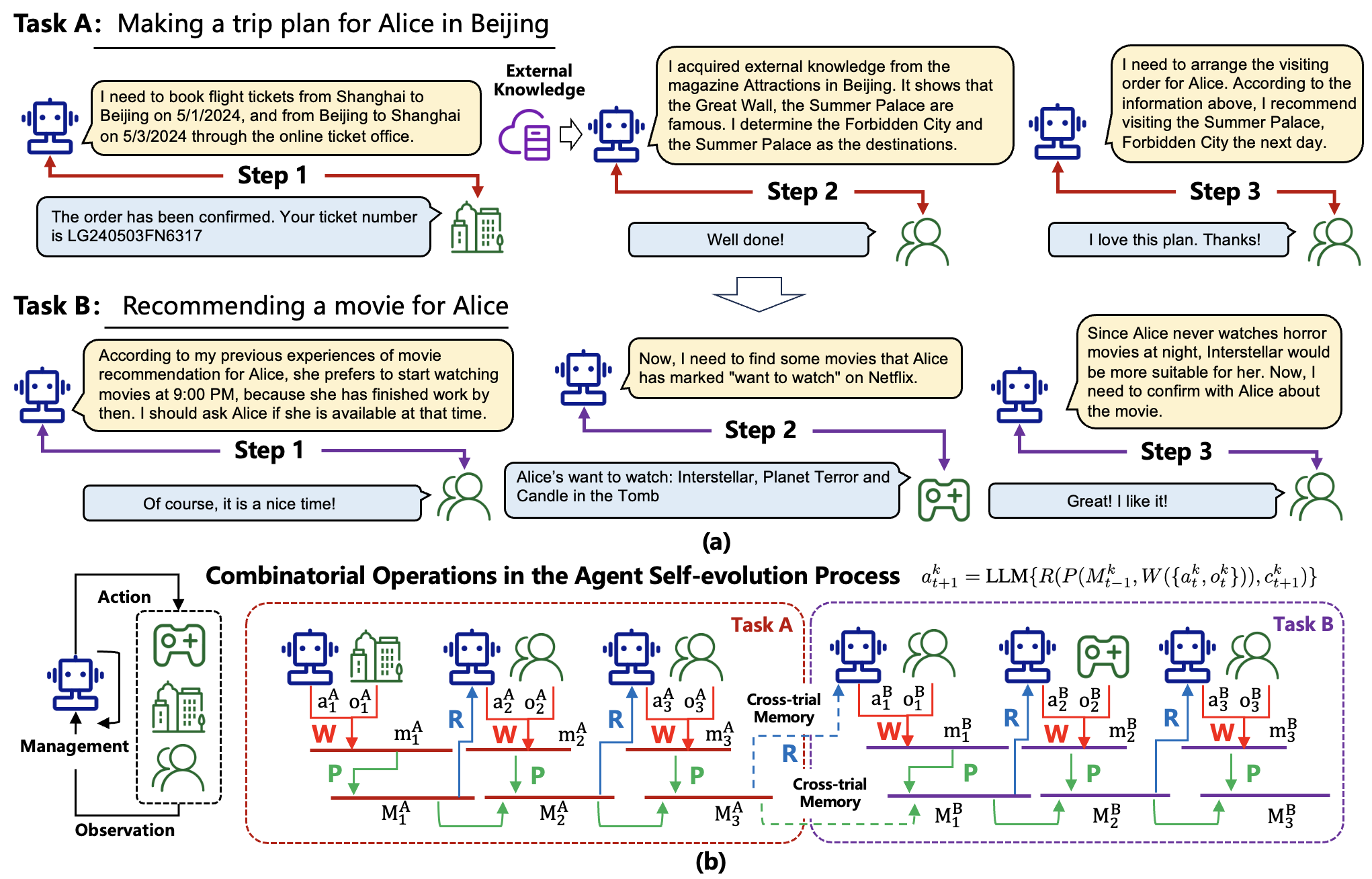

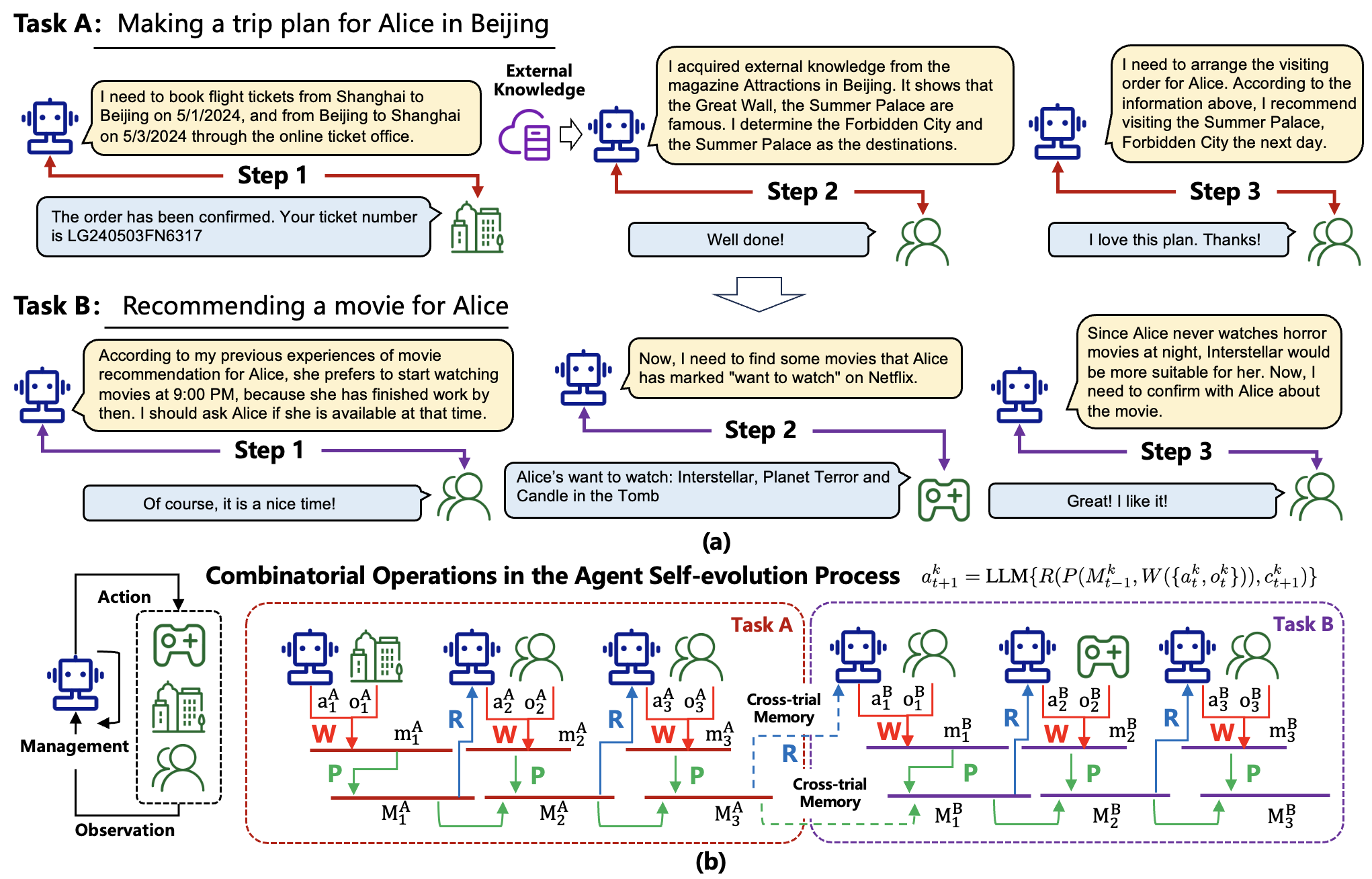

Memory in LLM-based agents can be defined from a narrow or a broad perspective. In the narrow sense, memory covers only the historical information within a single trial. In the broad sense, it also includes cross-trial information and external knowledge.

A trial is a complete agent-environment interaction process consisting of multiple steps. Each step involves an action from the agent and a response from the environment. Over various trials, the agent accumulates knowledge, enhancing its ability to interact intelligently in complex scenarios.
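The definitions above can be sketched as plain data structures. This is only an illustration: the names `Step`, `Trial`, `narrow_memory`, and `broad_memory` are hypothetical and do not come from the survey.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Step:
    action: str       # what the agent did
    observation: str  # how the environment responded


@dataclass
class Trial:
    task: str
    steps: List[Step] = field(default_factory=list)

    def add_step(self, action: str, observation: str) -> None:
        self.steps.append(Step(action, observation))


def narrow_memory(current: Trial) -> List[Step]:
    """Narrow sense: only the history of the current trial."""
    return list(current.steps)


def broad_memory(current: Trial, past_trials: List[Trial],
                 external: List[str]) -> Dict[str, list]:
    """Broad sense: current history plus cross-trial and external information."""
    return {
        "inside_trial": list(current.steps),
        "cross_trial": [s for t in past_trials for s in t.steps],
        "external": list(external),
    }
```

Keeping the three sources under separate keys makes it easy to apply different retention or retrieval policies to each one later.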

Memory Operations

Three primary operations govern the memory mechanism.

- Memory Writing: This operation projects raw observations into stored memory contents, often summarizing or abstracting relevant information.

- Memory Management: It processes stored information to optimize its usage, including summarizing or merging redundant memory entries and forgetting irrelevant information.

- Memory Reading: This operation retrieves informative memory content based on the current task context to guide the agent's next actions.
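The three operations can be sketched as a toy memory store. This is a minimal illustration under simplifying assumptions, not the survey's method: whitespace normalization stands in for summarization on write, exact-duplicate merging plus a capacity limit stands in for management, and word overlap stands in for a real relevance score on read. The class `SimpleMemory` and all of its parameters are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class MemoryEntry:
    text: str
    step: int  # index of the write that produced this entry


class SimpleMemory:
    def __init__(self, capacity: int = 100):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self.entries: List[MemoryEntry] = []
        self._step = 0

    def write(self, observation: str) -> None:
        # Writing: collapse whitespace as a stand-in for summarization.
        text = " ".join(observation.split())
        self.entries.append(MemoryEntry(text, self._step))
        self._step += 1

    def manage(self) -> None:
        # Management: merge exact duplicates (keep the latest copy),
        # then forget the oldest entries beyond the capacity.
        latest: Dict[str, MemoryEntry] = {}
        for entry in self.entries:
            latest[entry.text] = entry
        merged = sorted(latest.values(), key=lambda e: e.step)
        self.entries = merged[-self.capacity:]

    def read(self, query: str, k: int = 3) -> List[str]:
        # Reading: rank entries by word overlap with the query,
        # breaking ties in favor of more recent entries.
        query_words = set(query.lower().split())
        scored = []
        for entry in self.entries:
            overlap = len(query_words & set(entry.text.lower().split()))
            if overlap > 0:
                scored.append((overlap, entry.step, entry.text))
        scored.sort(reverse=True)
        return [text for _, _, text in scored[:k]]
```

A practical system would likely replace the overlap score with embedding similarity and the whitespace normalization with an LLM-generated summary, but the write, manage, and read interface would stay the same.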

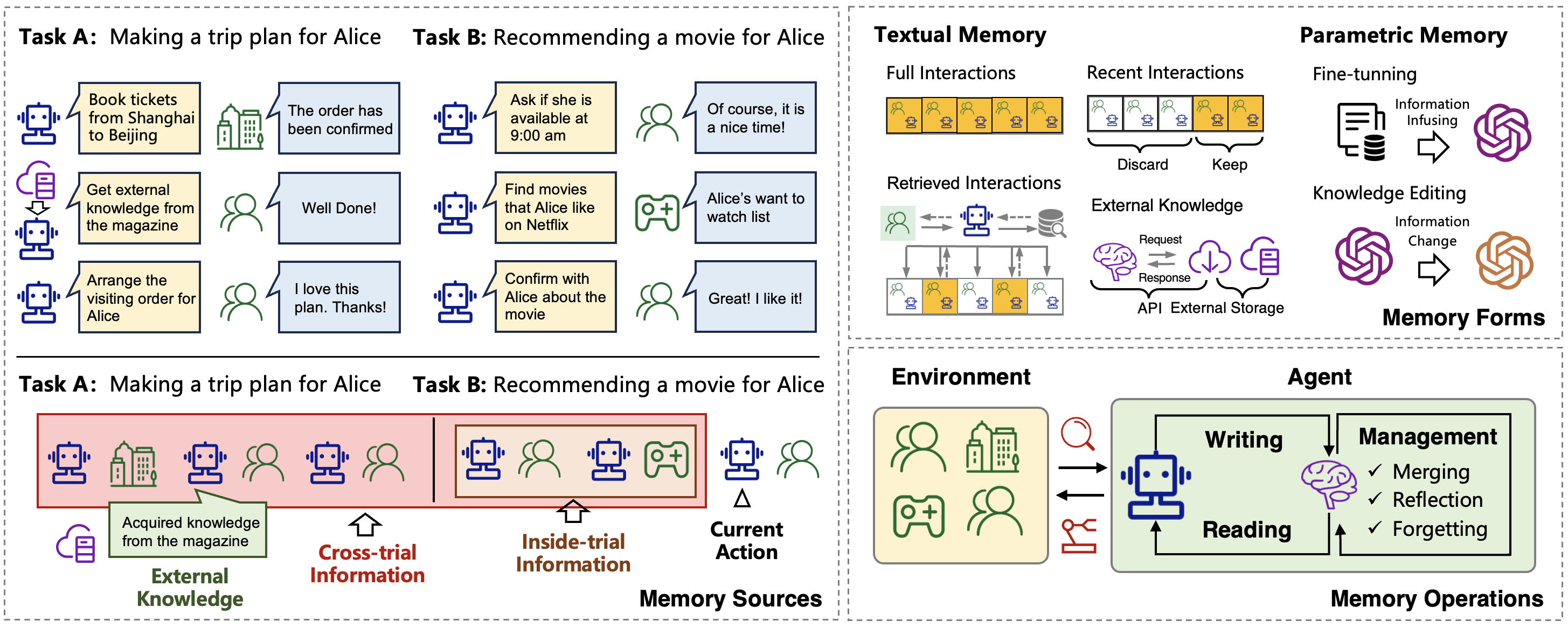

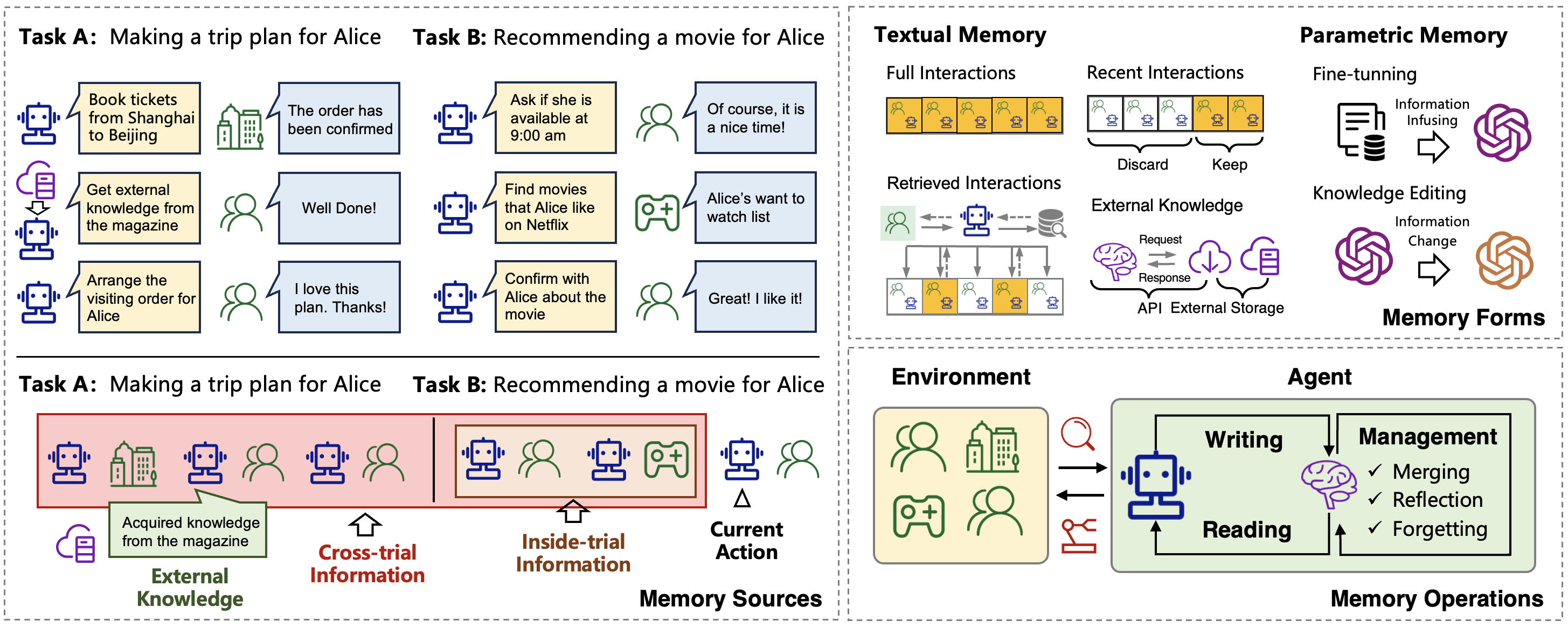

Figure 2: An overview of the sources, forms, and operations of the memory in LLM-based agents.

Why Memory is Essential

Cognitive Psychology

Memory is foundational in human cognitive processes such as knowledge accumulation, social norm formation, and reasoning. For LLM-based agents aiming to emulate human-like intelligence, a robust memory mechanism is essential.

Self-Evolution

To autonomously evolve, agents must accumulate experiences, explore environments, and summarize knowledge effectively. Memory facilitates these processes, playing a fundamental role in agents' self-evolution.

Agent Applications

In various applications, memory is indispensable. In conversational agents, it ensures continuity and context-awareness. In simulation roles, memory helps maintain consistent behaviors that align with predefined profiles.

Memory Implementation

Memory Sources

Memory can derive from three predominant sources:

- Inside-trial Information: Historical steps within a single trial are the most immediate source of informative signals.

- Cross-trial Information: Facilitates experience accumulation by storing knowledge across multiple trials.

- External Knowledge: Integrates external data sources, expanding agents' knowledge beyond internal environments.

Memory can be represented in two forms:

- Textual Form: Utilizes natural languages, balancing interpretability with efficiency.

- Parametric Form: Encodes memory into the model's parameters, offering dense information representation and higher efficiency.
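For the textual form, one way to picture the interpretability and efficiency trade-off is prompt assembly under a length budget: memory stays human-readable, but every entry consumes context. The sketch below is an assumption-laden illustration; the function `build_prompt`, the character budget, and the prompt layout are all hypothetical.

```python
from typing import List


def build_prompt(task: str, memories: List[str], max_chars: int = 500) -> str:
    """Prepend as many of the most recent memories as fit in a character budget."""
    selected: List[str] = []
    used = 0
    for memory in reversed(memories):  # walk from most recent to oldest
        cost = len(memory) + 1         # +1 for the newline separator
        if used + cost > max_chars:
            break
        selected.append(memory)
        used += cost
    selected.reverse()                 # restore chronological order
    lines = ["Relevant memory:"]
    lines += [f"- {memory}" for memory in selected]
    lines += ["", f"Task: {task}"]
    return "\n".join(lines)
```

A parametric memory would avoid this budget entirely, since the information lives in the model's weights rather than in the prompt, at the cost of being harder to inspect.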

Figure 3: Illustration of the memory reading, writing, and management processes.

Memory Operations

Effective memory operations involve coordinated writing, management, and reading strategies, aiming to optimize information storage, retrieval, and utilization.

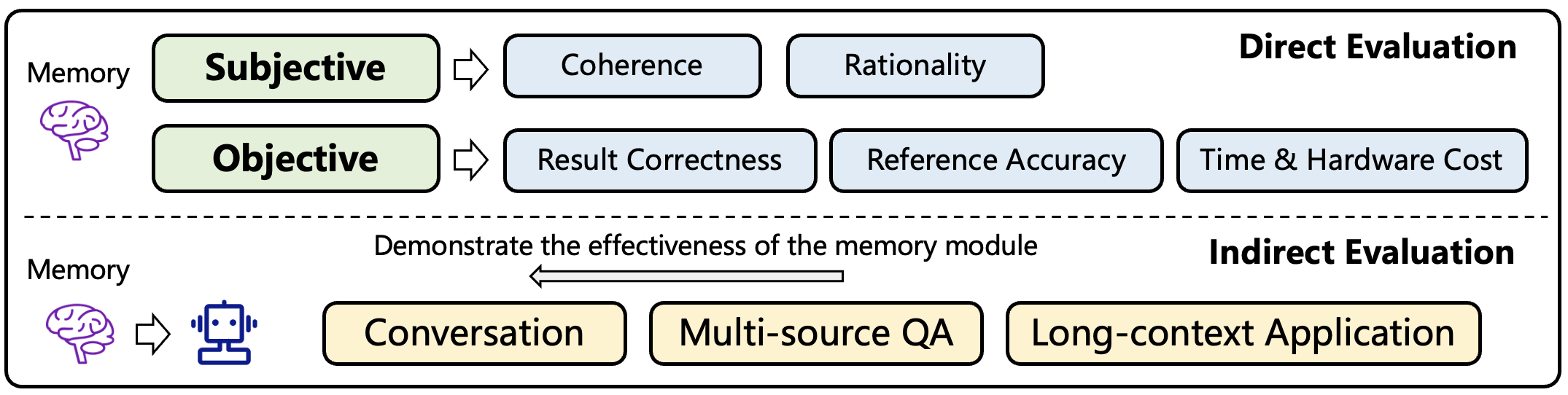

Evaluation Methods

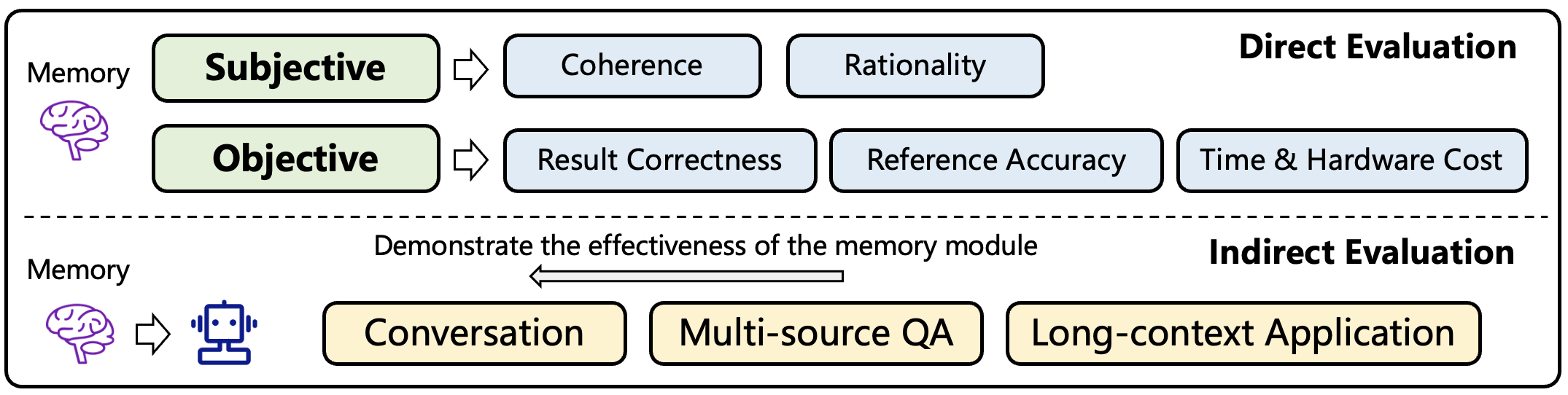

Direct Evaluation

Direct assessment of memory modules involves subjective and objective measurement of coherence, rationality, correctness, and accuracy.
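As one possible objective measure, retrieved memory entries can be compared against a hand-labeled set of relevant entries using precision, recall, and F1. The sketch below assumes entries are identified by string IDs; the function name `retrieval_prf` and the ID scheme are hypothetical.

```python
from typing import Iterable, Tuple


def retrieval_prf(retrieved: Iterable[str],
                  relevant: Iterable[str]) -> Tuple[float, float, float]:
    """Return (precision, recall, F1) of retrieved memory IDs against relevant IDs."""
    retrieved_set, relevant_set = set(retrieved), set(relevant)
    hits = len(retrieved_set & relevant_set)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant_set) if relevant_set else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1
```

For example, retrieving four entries of which two are the only relevant ones gives a precision of 0.5 and a recall of 1.0.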

Indirect Evaluation

Tasks such as conversation, multi-source question-answering, and long-context applications serve as indirect assessment tools for memory efficacy. Task-level metrics such as success rate and exploration scope further gauge memory performance.

Figure 4: An overview of the evaluation methods of the memory module.

Memory-enhanced Applications

In applications like role-playing, social simulation, personal assistant tasks, and more, memory is critical for maintaining context, personalizing user experiences, and providing specialized domain knowledge through external data integration.

Future Directions

Further research avenues include parametric memory advancements, memory in multi-agent systems, lifelong learning, and humanoid agents. These developments hold the promise of optimizing LLM-based agents' abilities across diverse scenarios.

Conclusion

This survey systematically explores the memory mechanisms pivotal to transitioning LLMs to self-evolving, intelligent agents. The comprehensive review offers insights and foundational knowledge, aiming to inspire future research while tackling memory-centric challenges in AI development.