Towards Incremental Learning in Large Language Models: A Critical Review

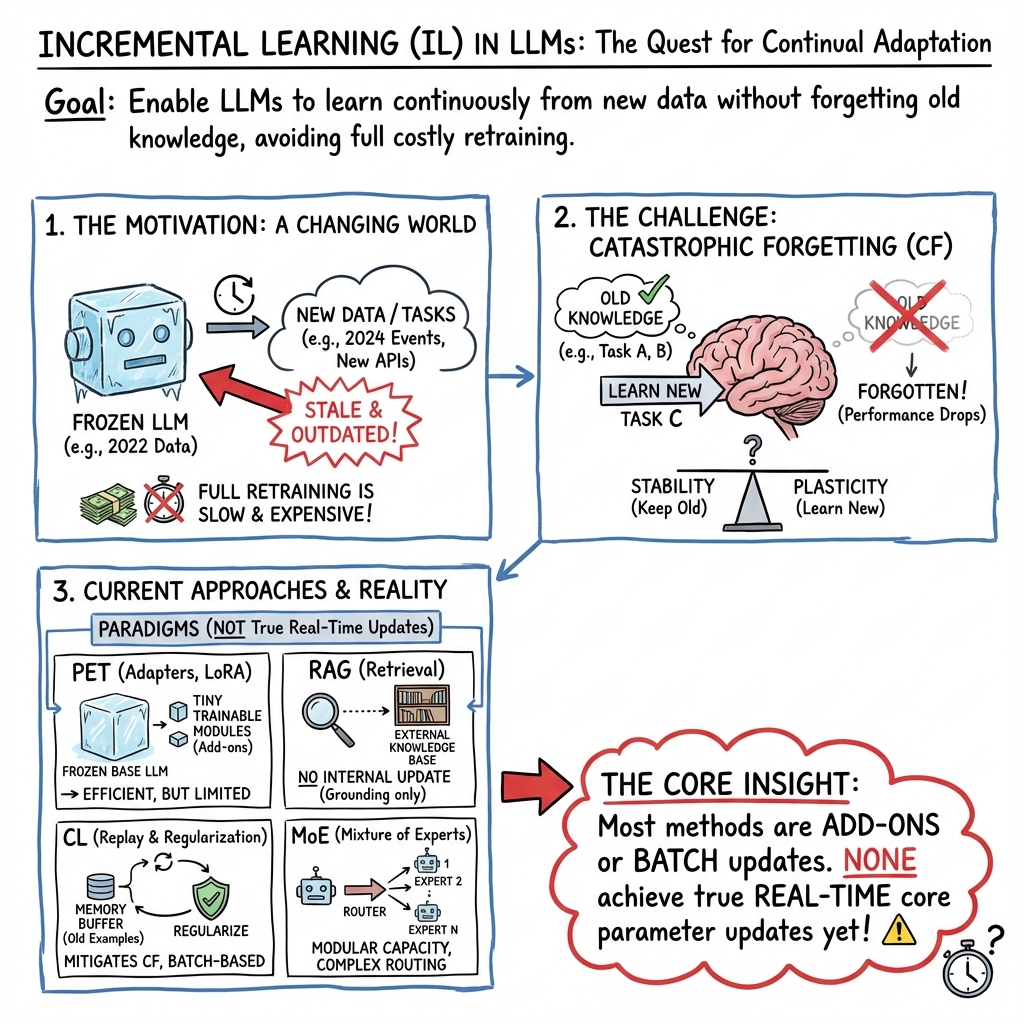

Abstract: Incremental learning is the ability of systems to acquire knowledge over time, enabling their adaptation and generalization to novel tasks. It is a critical ability for intelligent, real-world systems, especially when data changes frequently or is limited. This review provides a comprehensive analysis of incremental learning in LLMs. It synthesizes the state-of-the-art incremental learning paradigms, including continual learning, meta-learning, parameter-efficient learning, and mixture-of-experts learning. We demonstrate their utility for incremental learning by describing specific achievements from these related topics and their critical factors. An important finding is that many of these approaches do not update the core model, and none of them update incrementally in real-time. The paper highlights current problems and challenges for future research in the field. By consolidating the latest relevant research developments, this review offers a comprehensive understanding of incremental learning and its implications for designing and developing LLM-based learning systems.

Explain it Like I'm 14

What this paper is about (in plain words)

This paper reviews how to help big AI text generators—called LLMs—keep learning new things over time without forgetting what they already know. This ability is called incremental learning. Think of it like teaching a smart assistant new skills day by day, instead of rebuilding its whole brain every few months.

The authors look at many recent ideas that try to make LLMs learn continuously. They explain what works, what doesn’t, and what’s still missing, especially for real-time learning.

The main questions the paper asks

- What does “incremental learning” mean for LLMs in practice?

- Which current techniques help LLMs pick up new tasks while keeping old skills?

- Do any methods let LLMs update themselves instantly (in real time)?

- What common problems show up, like “catastrophic forgetting” (losing old knowledge)?

- How should we design future LLM systems that learn safely, quickly, and reliably?

How the authors approached the review

This is a critical review, not a single experiment. The authors collected and compared many studies (from research libraries like Scopus, ACM, IEEE), focusing on methods that were actually tested. They grouped the ideas into a few big families and explained them with a unified view.

Here are the main families, explained with simple analogies:

- Continual learning: ways to keep new learning from wiping out old learning (to fight “catastrophic forgetting”).

  - Consolidation methods: add “guard rails” so important parts don’t change too much. This can be done by gently steering updates (regularization) or by having a “teacher model” guide a “student model” (distillation).

  - Dynamic-architecture methods: “add a new drawer for each new task.” The model grows by adding small task-specific parts (modules/adapters), or by using a team structure (mixture-of-experts) so different “specialists” handle different tasks.

  - Memory-based methods: keep a “flashcard box” of old examples (or generate similar ones) and replay them so the model doesn’t forget.

- Parameter-efficient tuning (PET): instead of updating the whole huge model, add small “knobs” like LoRA adapters or soft prompts. These are lightweight add-ons that teach new skills without rewriting the entire brain.

- Meta-learning: “learn how to learn.” The model tries to get better at picking up new tasks quickly from small amounts of information.

- Mixture-of-experts (MoE): a “team of specialists” where a gate picks which expert to use for a given input. This can help scale learning without changing everything at once.

- Related system strategies:

  - RAG (Retrieval-Augmented Generation): “look it up.” When the model answers, it searches an external library or database for facts, which reduces made-up answers. This improves accuracy but doesn’t change the model’s memory.

  - In-context learning (few-shot prompting): “learn from examples you see right now,” inside the prompt. This helps on the spot but is temporary; the model doesn’t truly remember later.

  - Knowledge editing: quick “surgery” to fix or add facts in a model. Handy, but side effects are not fully understood.

  - Multimodal learning: teach models to handle text plus images (and sometimes audio/video). This is harder and can lead to more “hallucinations” if not done carefully.
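To make the “small knobs” idea behind LoRA more concrete, here is a minimal sketch in plain Python. It assumes the standard LoRA formulation, in which a frozen weight matrix W is combined with a low-rank product as W + (alpha / r) * B * A. The matrices, the scaling value, and the helper names (`matmul`, `lora_effective_weight`) are illustrative assumptions, not taken from the paper.

```python
# LoRA-style low-rank update, sketched without any ML framework.
# All shapes and numbers below are hypothetical, chosen for readability.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def lora_effective_weight(w_frozen, lora_b, lora_a, alpha, rank):
    """Return W + (alpha / rank) * B @ A without modifying W."""
    delta = matmul(lora_b, lora_a)
    scale = alpha / rank
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(w_frozen, delta)]


# Frozen 3x3 base weight (the "core brain"); it is never updated.
W = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0],
     [0.0, 0.0, 1.0]]

# Rank-1 adapter: B is 3x1 and A is 1x3, so only 6 numbers would be trained.
B = [[0.5], [0.0], [-0.5]]
A = [[1.0, 2.0, 0.0]]

W_task = lora_effective_weight(W, B, A, alpha=2.0, rank=1)

trainable = len(B) * len(B[0]) + len(A) * len(A[0])  # adapter parameters
full = len(W) * len(W[0])                            # base parameters
print(W_task)
print(f"trainable adapter params: {trainable} vs. base params: {full}")
```

On a 3x3 matrix the saving is small, but for a d x d matrix with rank r the adapter holds 2 * d * r values instead of d * d, which is where the efficiency comes from. Dropping the adapter restores the original behavior, since W itself is untouched.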

What the review found (and why it matters)

Here are the key takeaways the authors emphasize:

- No true real-time updating of the core model yet:

  - Many methods avoid changing the model’s main “brain” (its core parameters) during use. They freeze it and add adapters, prompts, or use external tools like search. That helps, but it’s not the same as permanently learning a new skill on the fly.

  - None of the approaches truly update the core model incrementally in real time while it’s interacting with users.

- Catastrophic forgetting is still the central challenge:

  - If you teach new tasks carelessly, older skills can fade. The different strategies fight this, but each has trade-offs:

    - Dynamic methods often grow the model’s size and training cost as tasks increase.

    - Memory/replay methods require storing or recreating old data (which may be private, unavailable, or expensive).

    - Parameter-efficient methods are lighter, but as you add more adapters/prompts for more tasks, the system can still bloat and get harder to manage.

- Balancing three “continual” goals is tricky:

  - Continual pretraining (general knowledge), continual instruction tuning (following task instructions), and continual alignment (matching human values) can conflict. For example, updating general knowledge later might weaken earlier alignment or instruction-following unless done very carefully.

- External help is useful but not “true memory”:

  - RAG and in-context learning can boost answers quickly, but they don’t store new knowledge inside the model. Once the prompt or retrieval is gone, the model hasn’t truly learned.

- Pretraining helps, but not enough:

  - Very large pretraining can make models more robust to new tasks, yet they still struggle with out-of-distribution data (surprising or unusual examples) and can overfit if fine-tuned poorly.

- Multimodal learning adds extra hurdles:

  - Connecting images and text helps models do more, but can increase hallucinations and overfitting. Careful, moderate fine-tuning (like LoRA) helps; aggressive, repeated fine-tuning can hurt.

- Knowledge editing is promising but not fully understood:

  - Small, targeted changes can fix facts fast, but we don’t fully know the ripple effects in giant models.

Overall, today’s solutions often rely on add-ons, careful scheduling, and smart prompts. They help, but we still lack a clean, safe way to let the core LLM learn permanently and instantly from new experiences.
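The memory/replay trade-off noted in the takeaways can be sketched with a small “flashcard box” in plain Python. This is a minimal illustration that assumes reservoir sampling as the rule for deciding which old examples to keep; the paper does not prescribe this rule, and the class name `ReplayBuffer`, its methods, and the task labels are hypothetical.

```python
import random


class ReplayBuffer:
    """Fixed-size memory of past examples, filled by reservoir sampling."""

    def __init__(self, capacity, seed=0):
        self.capacity = capacity
        self.items = []
        self.seen = 0                    # total examples offered so far
        self.rng = random.Random(seed)   # seeded for repeatable runs

    def add(self, example):
        """Keep each example seen so far with equal probability."""
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(example)
        else:
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = example

    def mixed_batch(self, new_examples, n_replay):
        """Return the new examples followed by a sample of old ones."""
        n = min(n_replay, len(self.items))
        return list(new_examples) + self.rng.sample(self.items, n)


# Old task: 100 examples pass through, but only 4 are stored.
buffer = ReplayBuffer(capacity=4)
for i in range(100):
    buffer.add(("task_A", i))

# New task: each training batch mixes fresh data with replayed old data.
batch = buffer.mixed_batch([("task_B", 0), ("task_B", 1)], n_replay=2)
print(batch)
```

The buffer stays at a fixed size no matter how much data passes through, which bounds the storage cost. It still keeps raw old examples, so the privacy and availability concerns mentioned above apply unchanged.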

Why this matters and what’s next

If LLMs could truly learn incrementally, they could:

- Stay up to date with world events without full retraining.

- Adapt to each user’s preferences safely and privately.

- Handle new tools, rules, and languages as they appear.

- Work better in the real world, where data and needs change constantly.

To get there, future research needs to:

- Develop safe, efficient ways to update core model knowledge in real time without forgetting or breaking alignment.

- Combine multiple strategies (e.g., parameter-efficient updates plus smart memory and retrieval) in a coordinated system.

- Create stronger tests and benchmarks for continual and multimodal learning (including safety, truthfulness, and privacy).

- Reduce compute, data, and environmental costs while improving reliability.

- Better understand how and where knowledge is stored in LLMs, so edits and updates don’t have bad side effects.

In short: this review shows that we’ve made progress with clever add-ons and training tricks, but truly “learning on the fly” like a person—fast, safe, and without forgetting—remains an open challenge for LLMs.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps the paper leaves unresolved and that future research could directly address:

- Real-time incremental updates: No demonstrated algorithms or systems that update core LLM weights continuously during interaction (streaming/online IL) with stability-plasticity control, safety guarantees, and bounded compute.

- Operational IL taxonomy: Lack of a unified, LLM-specific definition and taxonomy that spans task-, domain-, and class-incremental scenarios across modalities, with clear assumptions (labels, task identity, drift types) and evaluation protocols.

- Standard IL benchmarks for LLMs: Absence of comprehensive, streaming benchmarks and datasets for text and multimodal IL (including OOD drift, low-resource languages, privacy constraints, and long task sequences), plus standardized metrics for catastrophic forgetting, sample efficiency, alignment drift, reliability, and compute/carbon cost.

- Multi-objective training conflicts: Unresolved interference between continual pretraining (CPT), continual instruction tuning (CIT), and continual alignment (CA); need methods to jointly optimize (or schedule) these objectives without degrading previously learned capabilities.

- Persistence of retrieved knowledge: Online in-context learning and RAG do not retain learned information; missing mechanisms to distill retrieved facts into persistent, auditable, and reversible model/memory updates without full finetuning.

- Knowledge editing side-effects: Unclear mapping between edited model components (layers, FFNs, adapters) and downstream behavioral changes; need edit compositionality analysis, conflict resolution for many concurrent edits, and longitudinal stability tests.

- Privacy-preserving IL: Replay/rehearsal methods assume access to old data; lacking differentially private IL, efficient federated IL for LLMs (communication/memory-light), and exemplar-free alternatives with formal privacy guarantees.

- Prompt/adapter scalability: Progressive prompts and adapters cause linear growth in parameters; need lifecycle management (merge, compress, prune, distill) and adaptive selection under strict parameter budgets.

- Concept drift handling: Missing integrated drift detectors and targeted update strategies for LLMs that adapt to drift without triggering catastrophic forgetting or misalignment.

- Multimodal IL robustness: Instruction tuning for MLLMs suffers from hallucinations, overfitting, and high compute; it remains open how to design robust alignment losses, curricula, and data pipelines that enable multi-image/multi-modal reasoning with lower compute.

- Knowledge localization in transformers: Insufficient understanding of where and how facts/concepts are encoded; need principled attribution methods to enable targeted edits and continual updates with minimal collateral changes.

- Continual alignment beyond RLHF: RLHF is costly and error-prone; open avenues for automated feedback (programmatic rewards, synthetic oversight), off-policy learning, and constraint-based training that ensure ongoing safety/alignment.

- Cross-lingual IL at scale: Limited coverage of low-resource languages, tasks, and models; need methods that persistently transfer and retain cross-lingual knowledge (beyond in-context) while measuring fairness and bias drift.

- Exemplar selection and generative replay for text: No reliable, privacy-preserving selection/compression strategies; need text-specific generative replay with auditability and controls to avoid bias/amplification.

- Environmental impact: Missing quantification and optimization of compute/carbon footprints for IL pipelines (CPT/CIT/CA, multimodal tuning); need budget-aware algorithms and energy-efficient scheduling.

- Safety and security in IL: Unaddressed risks of data poisoning and adversarial updates in streaming IL; require robust validation gates, rollback mechanisms, and anomaly detection for incremental changes.

- Production orchestration: Lack of practical frameworks for online update orchestration (versioning, A/B testing, rollback, continuous evaluation/monitoring) for LLMs deployed at scale.

- Stability–plasticity metrics: No standard, model-agnostic measures to quantify and trade off plasticity (learning new tasks) vs. stability (retaining old tasks) alongside reliability metrics (truthfulness, toxicity, bias) under IL.

- Limited empirical breadth: Several claims are based on small task sets or narrow scenarios (e.g., two tasks in alignment experiments); need large-scale, systematic meta-analyses with ablations, statistical significance, and cross-dataset generalization tests.

- Mixture-of-experts (MoE) in IL: Insufficient analysis of dynamic expert addition/retirement, routing drift, and inter-expert interference under continual updates; need scalable gating and capacity balancing strategies.

- Parameter-efficient IL limits: LoRA/adapters reduce trainable parameters but still incur long finetuning times and cumulative overhead; it remains open how to accelerate updates and cap total overhead across many tasks/classes.

- Edge/on-device IL: Feasibility of continual updates under tight memory/compute/privacy constraints on edge devices remains unexplored.

- Token-level adaptation and decoding: It is open whether per-token or per-utterance gradient updates can mitigate repetition and improve robustness without destabilizing generation, and how such updates interact with decoding strategies (greedy, sampling, nucleus).

- Explicit non-parametric knowledge: Missing architectures that integrate symbolic/external knowledge stores with persistent, query-time reasoning and principled, incremental updates linked to provenance and governance.

- CF quantification in MLLMs: EMT-style evaluation is early-stage; need broader CF measurement frameworks across diverse multimodal tasks, progressive finetuning protocols, and validated prevention strategies.
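As a point of reference for the stability–plasticity gap listed above, here is a minimal sketch of two measures often used in continual-learning experiments: average final accuracy and average forgetting. It assumes an accuracy matrix in which `acc[i][j]` is the accuracy on task `j` after training through task `i`. The numbers and function names are made up for illustration and are not results from the paper.

```python
def average_final_accuracy(acc):
    """Mean accuracy over all tasks after the last task has been learned."""
    final = acc[-1]
    return sum(final) / len(final)


def average_forgetting(acc):
    """Mean drop from each earlier task's best past accuracy to its final one."""
    n_tasks = len(acc)
    final = acc[-1]
    drops = []
    for j in range(n_tasks - 1):  # the last task cannot be forgotten yet
        best_earlier = max(acc[i][j] for i in range(j, n_tasks - 1))
        drops.append(best_earlier - final[j])
    return sum(drops) / len(drops) if drops else 0.0


# Hypothetical results for three tasks learned in sequence.
# Row i: evaluation after training on task i; column j: accuracy on task j.
# Entries above the diagonal are placeholders (task not trained yet).
acc = [
    [0.90, 0.00, 0.00],
    [0.70, 0.85, 0.00],
    [0.60, 0.80, 0.88],
]

print("average final accuracy:", round(average_final_accuracy(acc), 3))
print("average forgetting:", round(average_forgetting(acc), 3))
```

High final accuracy on the newest task reflects plasticity, and low forgetting reflects stability. Neither number covers the reliability aspects (truthfulness, toxicity, bias) that the gap also calls for.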

Glossary

- Additive decomposition: A technique that breaks model parameters into additive components to separate shared and task-specific parts. "additive decomposition, singular value decomposition, and low-rank factorization [36]."

- AI alignment: The practice of aligning model outputs with human values, preferences, and ethical norms. "aligns the model's outputs with human values, preferences, and ethical guidelines (AI alignment)."

- Attention map: A matrix representing how tokens attend to each other within the attention mechanism. "The MSA clusters each token by an attention map obtained from two linear mappings of the input tokens (as their dot product)."

- Attention-aware Self-adaptive Prompt (ASP): A framework that inserts prompts into attention blocks to separate task-invariant and task-specific information for FSCIL. "The Attention-aware Self-adaptive Prompt (ASP) framework [58] adds specific prompts between attention blocks as attention-aware task-invariant prompts (TIP) and self-adaptive task-specific prompts (TSP)."

- Autoregressive pattern: A generation process where each token is produced conditioned on previously generated tokens. "First, they exhibit the autoregressive pattern, performing the generation task in iterations."

- Bootstrapping Language-Image Pre-training (BLIP): A vision-language pretraining approach that leverages frozen unimodal models to learn cross-modal representations. "Bootstrapping Language-Image Pre-training with frozen unimodal models (BLIP) [63]"

- Catastrophic forgetting (CF): The tendency of neural networks to lose previously learned knowledge when trained on new tasks. "catastrophic forgetting (CF), a limitation that prevents them from learning a sequence of tasks."

- Chain-of-thought: A prompting technique that elicits stepwise reasoning in model outputs. "chain-of-thought (stepwise instructions with logical context to simulate reasoning)"

- Class-Incremental Learning (CIL): Learning that adds new classes over time while retaining performance on previously seen classes. "and learn unknown classes (class-incremental learning) [20]."

- Class-Knowledge-Task Multi-Head Attention (CKT-MHA): An attention module that uses class and task features to enable continual fine-tuning in ViT. "The Class-Knowledge-Task Multi-Head Attention (CKT-MHA) module enables continuous ViT finetuning"

- Complementary Learning System theory: A cognitive theory inspiring architectures that combine fast and slow learning systems. "inspired by the Complementary Learning System theory [68]."

- Concept drift: A change in the underlying data distribution over time that can degrade model performance. "CF may occur when compensating for concept drift [21]."

- Consolidation-based methods: Approaches that protect important parameters during new learning via regularization or distillation. "Consolidation-based methods, such as regularization [27] or distillation [28], protect important parameters from significant shifts."

- Continual Alignment (CA): Ongoing adjustment of models to adhere to human values and preferences. "3) Continual Alignment (CA) ensures the model's outputs adhere to human values, preferences, and ethical and societal norms [45]."

- Continual Instruction Tuning (CIT): Successive supervised tuning using instruction-formatted data to improve task-following. "2) Continual Instruction Tuning (CIT) improves the model's response to specific user inputs [44]."

- Continual Learning (CL): Methods for learning new tasks over time while mitigating forgetting. "Continual learning (CL) learns new, emerging tasks efficiently while mitigating CF in LLMs."

- Continual Pre-training (CPT): Extended self-supervised pretraining to expand general language understanding and generation capabilities. "1) Continual Pre-training (CPT) expands the model's general NL understanding and generation capabilities [43]."

- Cross-lingual In-Context learning (X-ICL): An in-context method that aligns labels and retrieves cross-lingual exemplars to improve performance in low-resource languages. "Cross-lingual In-Context learning (X-ICL) method"

- Diffusion Models (DMs): Generative models that denoise data progressively to produce synthetic samples. "Diffusion Models (DMs) [51], generative models that produce synthetic samples, to improve the classifier performance in CIL tasks."

- Domain-incremental learning: Learning the same task across changing input distributions without explicit task labels. "Domain-incremental learning learns to solve the same problem in different contexts (input distribution changes)."

- Downstream tasks: Task-specific applications trained after general pretraining. "aka downstream tasks"

- ExpAndable Subspace Ensemble (EASE): A class-incremental method that adds adapters and synthesizes prototypes to prevent forgetting. "ExpAndable Subspace Ensemble (EASE) is an approach to PTM-based CIL"

- Experience replay: Reusing stored samples from previous tasks during training on new tasks to reduce forgetting. "Experience replay records a small amount of old training examples [39]."

- Evaluating MulTimodality (EMT): A framework to measure catastrophic forgetting in multimodal LLMs via image classification. "introduced Evaluating MulTimodality (EMT) for measuring CF in MLLMs."

- Federated Class-Incremental Learning (FCIL): A federated method to learn new classes continually while mitigating forgetting across clients and servers. "to learn new classes in Federated Class-Incremental Learning (FCIL)."

- Federated Learning (FL): Distributed training across multiple data owners without centralizing raw data. "It is based on a distributed ML paradigm called Federated Learning (FL), where multiple data owners (or local models) collaborate to train a shared or server model."

- Feedforward Network (FFN): A neural module composed of linear layers and activations that process attention outputs in transformers. "The decoder comprises Multi-head Self-Attention (MSA) and Feedforward Network (FFN) modules."

- Few-Shot Class-Incremental Learning (FSCIL): Learning new classes with limited data while retaining prior class knowledge. "Few-Shot Class-Incremental Learning (FSCIL) [57] trains a base model with sufficient data."

- Function regularization: Constraints on the model’s outputs or intermediate representations to preserve prior behavior. "Function regularization is a robust approach targeting the model's in-between results or the prediction."

- Generative Multi-modal Model (GMM): A model that generates image descriptions to aid class-incremental classification using multimodal inputs. "The Generative Multi-modal Model (GMM) framework for CIL [62] generates detailed image descriptions"

- Generative replay: Using a generative model to synthesize past task examples for rehearsal. "Generative replay employs a generative model to produce synthetic examples for training [40]."

- In-context learning (ICL): Learning to perform a task at inference time from examples provided in the prompt, without weight updates. "In-context learning (ICL) is sometimes used to refer to few-shot prompting"

- INCremental Prompting (INCPrompt): A prompting approach that generates attention-based prompts to mitigate forgetting across tasks. "The INCremental Prompting (INCPrompt) [64] utilizes flexible task-aware prompts"

- Interactive Continual Learning (ICL): A framework where fast and slow systems interact to continually learn, inspired by cognitive theory. "The study in [67] presents an Interactive Continual Learning (ICL) framework"

- Instruction Information Metric (InsInfo): A metric to assess instruction quality and diversity to guide replay selection. "it introduces the Instruction Information Metric (InsInfo) to measure the instructions' quality and diversity"

- Instruction tuning: Supervised fine-tuning on instruction-formatted data to teach models to follow natural language instructions. "Instruction tuning involves obtaining a new, instruction-finetuned model capable of following instructions."

- Incremental learning (IL): The capability to acquire and use knowledge from continuous input streams without full retraining. "Incremental learning (IL) is the ability of a learning system to make decisions based on continuously incoming inputs"

- Incremental Vision-Language Object Detection (IVLOD): Continual adaptation of vision-language detectors to new domains while preserving capabilities. "Incremental Vision-Language Object Detection (IVLOD) [70] adapts pre-trained Vision-Language Object Detection Models (VLODMs)"

- Knowledge Distillation (KD): Training a smaller student model to match outputs of a larger teacher model, preserving knowledge. "It typically implements Knowledge Distillation (KD) [30] by learning a small student model from a large teacher model"

- Knowledge Editing (KE): Post-training modifications to model parameters or components to insert, change, or erase specific knowledge. "The study on Knowledge Editing (KE) for LLMs presents practical and efficient methods for modifying and evaluating LLMs post-training"

- LLMs: Transformer-based models that generate natural language text at scale. "LLMs paved the way for many other FMs"

- LoRA: A parameter-efficient fine-tuning method that injects low-rank adapters to adjust weights with few parameters. "finetunes it using LoRA with a small number of parameters"

- Low-rank factorization: Decomposition of matrices into low-rank components to reduce parameter count or specialize features. "additive decomposition, singular value decomposition, and low-rank factorization [36]."

- Masked language modeling: A pretraining objective where masked tokens are predicted from context. "Masked language modeling"

- Meta-learning: Learning strategies that adjust how models learn new tasks (e.g., via learned inductive biases). "meta-learning of arriving tasks [32]"

- Mixture-of-Experts (MoE): Architectures that route inputs to specialized expert sub-networks to handle diverse tasks. "Mixture-of-Experts (MOEs) architectures are examples of this method."

- MiniGPT-4: A system that connects a visual encoder with an LLM via a projection layer for vision-language tasks. "MiniGPT-4 [59] connects a pre-trained visual encoder and a pre-trained LLM."

- Model decomposition: Separating model parameters into shared and task-specific components for continual adaptation. "Model decomposition separates a model into shared and task-specific elements."

- Model Tailor: A post-training method that sparsely replaces critical fine-tuned parameters to balance current and past task performance. "The Model Tailor retains the pre-trained parameters and replaces fewer finetuned parameters (up to 10%)."

- Modular network: A design with separate modules per task to avoid interference during incremental learning. "A modular network includes modules or sub-networks learning incremental tasks simultaneously"

- Multimodal Chain of Thought (MCoT): Prompting that elicits reasoning across modalities (e.g., text and images). "Multimodal Chain of Thought (MCoT)."

- Multimodal In-Context Learning (MI-CL): In-context learning extended to multimodal inputs. "Multimodal In-Context Learning (MI-CL)"

- Multimodal Instruction Tuning (MIT): Instruction tuning applied to multimodal models, often increasing data and compute demands. "Multimodal Instruction Tuning (MIT), Multimodal In-Context Learning (MI-CL), and Multimodal Chain of Thought (MCoT)."

- Multi-Distribution Matching (MDM): Models that balance sample quality and diversity when generating synthetic data across distributions. "Multi-Distribution Matching (MDM) models"

- Multi-head Self-Attention (MSA): An attention mechanism with multiple heads that allows tokens to attend to different subspaces. "The decoder comprises Multi-head Self-Attention (MSA) and Feedforward Network (FFN) modules."

- Natural language generation (NLG): Tasks focused on producing text from features or prompts. "Natural language generation (NLG)"

- Natural language understanding (NLU): Tasks focused on extracting and classifying information from text. "Natural language understanding (NLU)"

- Out-of-distribution (OOD): Data that differs from the distribution seen during training, challenging generalization. "out-of-distribution (OOD) data in CL scenarios."

- Parameter expansion: Increasing model size by adding task-specific parameters during continual learning. "also known as parameter expansion"

- Parameter-efficient tuning (PET): Methods that adapt models by training a small number of additional parameters (e.g., adapters, prompts). "Some approaches integrate PET with CL."

- Positional encodings: Numeric representations that encode token positions for transformer processing. "words' positional encodings (embedding vectors)"

- Prefix-tuning: A parameter-efficient method that prepends learnable vectors (prefixes) to guide attention without changing base weights. "prefix-tuning optimizes a small continuous task-specific vector (aka prefix)"

- Pretraining-finetuning: A two-stage training scheme with general unsupervised pretraining followed by supervised domain-specific fine-tuning. "Pretraining-finetuning includes unsupervised training with a massive amount of data"

- Prototype (module): A representation vector of a class used for aggregation and bias mitigation in federated continual learning. "PLoRA introduces a shared prototype module for the global server"

- Q-Former: A lightweight transformer module that bridges vision and language by querying visual features. "Q-Former [61]"

- Retrieval-augmented generation (RAG): Combining LLMs with external retrieval to ground outputs and reduce hallucinations. "Retrieval-augmented generation (RAG) [13] is a framework for increasing the quality of LLMs' outputs"

- Reparameterizable Dual Branch (RDB): A dual-branch adaptation module added in parallel to preserve and extend knowledge without overwriting. "adds a parallel branch component (Reparameterizable Dual Branch - RDB) for tuning on downstream tasks"

- Reward hacking: Exploiting the reward model to maximize rewards in unintended ways. "reward hacking, and evaluation"

- Reinforcement Learning from Human Feedback (RLHF): Refining models via human preference rankings and a learned reward model. "Reinforcement Learning from Human Feedback (RLHF) represents an alternative"

- Selective Synthetic Image Augmentation (SSIA): Augmentation strategy to expand distributions and increase plasticity before synthetic sample generation. "Before generating samples, Selective Synthetic Image Augmentation (SSIA) enlarges the training data distribution"

- Sequence-to-sequence modeling: Modeling that maps input sequences to output sequences, often with encoder-decoder architectures. "Sequence-to-sequence modeling"

- Singular value decomposition: A matrix factorization used in model decomposition to separate parameter components. "additive decomposition, singular value decomposition, and low-rank factorization [36]."

- Soft prompt tuning: Training learnable input embeddings (prompts) while freezing the base model. "Soft prompt tuning is a case in which the model is frozen"

- Swin Transformer: A hierarchical vision transformer architecture used as a backbone in detection tasks. "The IVLOD uses a Swin Transformer [71] as a backbone VLM."

- Task-incremental learning: Learning multiple distinct tasks where task identity is known at test time. "Task-incremental learning learns to solve several distinct tasks."

- Tree-of-thought: A prompting approach that explores multiple solution paths during reasoning. "tree-of-thought (guidance to select among multiple solutions)"

- Upstream tasks: General-purpose tasks targeted during unsupervised pretraining. "also known as upstream tasks"

- Visual instruction tuning: Finetuning multimodal models with visual instruction-following data to connect vision and language. "Visual instruction tuning uses machine-generated instruction-following data to improve LLMs in the language-to-image tasks."

- Visual Prompt Tuning (VPT): A parameter-efficient method that prepends learnable visual prompts to a pre-trained model. "Visual Prompt Tuning (VPT) [37] combines previous techniques"

- Vision-Language Object Detection Models (VLODMs): Detection models that align vision and language features for object localization and classification. "pre-trained Vision-Language Object Detection Models (VLODMs)"

- Vision Transformer (ViT): A transformer architecture applied to images using patch embeddings and self-attention. "VIT-B/16-IN21K [53] (a vision transformer encoder model with 86.4 million parameters pre-trained on ImageNet-21k"

- von Mises-Fisher Outlier Detection and Interaction (vMF-ODI): A mechanism to estimate sample complexity and coordinate interactions between systems. "The von Mises-Fisher Outlier Detection and Interaction (vMF-ODI) mechanism estimates sample complexity"

- Wasserstein Distance (WD): A metric between probability distributions used to measure task similarity for replay. "based on their similarity calculated as Wasserstein Distance (WD)."

- Weight regularization: Penalizing parameter changes based on their importance to prior tasks to protect knowledge. "Weight regularization selectively regularizes variation of NN's parameters."

- Zero-shot: Prompting without task-specific examples, relying solely on instructions and formatting. "zero-shot (instructions with task-specific formatting but without task-specific examples)"

- Zero-interference Loss (ZiL): A loss that penalizes interference between new and learned knowledge in dual-branch adaptation. "Zero-interference Loss (ZiL)"

- Zero-interference Reparameterizable Adaptation (ZiRa): An adaptation method adding a dual branch and loss to learn new tasks without overwriting knowledge. "Zero-interference Reparameterizable Adaptation (ZiRa) with Zero-interference Loss (ZiL)"
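The glossary entry on weight regularization can be illustrated with a short sketch. It assumes the quadratic penalty form used by methods such as Elastic Weight Consolidation, (strength / 2) * sum_i importance_i * (theta_i - theta_old_i)^2, where the importance values would normally be estimated from earlier tasks. Here the importance values, parameters, and function name are hypothetical.

```python
def weight_regularization_penalty(params, old_params, importance, strength):
    """Quadratic penalty on parameter drift, weighted by per-parameter importance."""
    return 0.5 * strength * sum(
        f * (p - q) ** 2
        for p, q, f in zip(params, old_params, importance)
    )


# Parameters after the old task, with made-up importance scores:
# the first parameter matters a lot for the old task, the last not at all.
old = [1.0, -2.0, 0.5]
importance = [10.0, 0.1, 0.0]

# Two candidate updates of the same size (0.5) on different parameters.
move_important = [1.5, -2.0, 0.5]
move_unimportant = [1.0, -2.0, 1.0]

penalty_important = weight_regularization_penalty(
    move_important, old, importance, strength=1.0)
penalty_unimportant = weight_regularization_penalty(
    move_unimportant, old, importance, strength=1.0)

print(penalty_important, penalty_unimportant)
```

During training this penalty would be added to the new task's loss, so updates are steered toward parameters that mattered little for earlier tasks. That is the “guard rails” behavior described for consolidation-based methods.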

Practical Applications

Below are actionable, real-world applications that leverage the paper’s findings on incremental learning (IL) for LLMs—including continual learning (CL), instruction tuning, parameter-efficient methods (LoRA, adapters, prompts), retrieval-augmented generation (RAG), knowledge editing, and multimodal extensions. Applications are grouped into immediate and long-term, with sector links, potential tools/workflows, and assumptions or dependencies noted.

Immediate Applications

These can be deployed now using existing methods and tooling, typically via batch-incremental or parameter-efficient updates rather than real-time core model changes.
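To make "parameter-efficient updates rather than real-time core model changes" concrete, here is a minimal sketch of the arithmetic behind a LoRA-style update: the base weight matrix stays frozen and only two small low-rank factors are trained. The layer size, rank, and helper names are illustrative assumptions, not values taken from the paper.

```python
def lora_param_counts(d_out, d_in, rank):
    """Return (full fine-tuning params, LoRA trainable params) for one weight matrix."""
    return d_out * d_in, rank * (d_out + d_in)


def matmul(left, right):
    """Plain-Python matrix product for small illustrative matrices."""
    return [[sum(l * r for l, r in zip(row, col)) for col in zip(*right)]
            for row in left]


def merge_lora(base, b, a, alpha):
    """Return base + (alpha / rank) * (b @ a); the base matrix is not modified."""
    rank = len(a)
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


# Hypothetical 4096 x 4096 projection with rank-8 factors.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(full, lora, full // lora)  # 16777216 65536 256

# Tiny worked example: rank-1 update applied to a 2 x 2 identity matrix.
base = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]   # shape (d_out, rank)
a = [[0.5, -1.0]]    # shape (rank, d_in)
merged = merge_lora(base, b, a, alpha=2.0)
```

Because the factors are stored separately from the frozen base weights, several task-specific modules can share one core model, which is the pattern the adapter-based applications below depend on.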

- Incremental domain updates for enterprise chatbots (customer support, e-commerce)

- Workflow: Continual Pre-training (CPT) for new domain corpora + Continual Instruction Tuning (CIT) for task formats + RAG for grounding; scheduled batch updates to avoid catastrophic forgetting (CF).

- Tools: LoRA/adapters/prefix-tuning, vector databases (e.g., FAISS, Milvus), prompt templates.

- Assumptions: High-quality domain data; governance for knowledge sources; monitoring for CF and format drift.

- Safety and policy alignment refresh cycles (content moderation, social platforms)

- Workflow: Continual Alignment (CA) via targeted RLHF or preference learning, using parameter-efficient finetuning to minimize interference with prior capabilities.

- Tools: LoRA; alignment datasets; red-teaming/evaluation suites (toxicity, bias, truthfulness).

- Assumptions: Human oversight quality; clear reward model specifications; compute budget for periodic updates.

- Knowledge editing for fast corrections in regulated environments (finance, legal, compliance)

- Workflow: Apply knowledge editing frameworks (insertion/modification/erasure) to update facts or policies without full retraining; validate with regression tests.

- Tools: EasyEdit, MEND-style editors; change logs; A/B evaluation.

- Assumptions: Limited scope edits; potential side effects due to unclear internal knowledge localization; strict evaluation gates.

- Parameter-efficient task specialization (healthcare triage, legal drafting, customer analytics)

- Workflow: Add LoRA/adapters/prefix/soft prompts per task; freeze core model; deploy multiple adapters for different subdomains.

- Tools: LoRA, adapters, prefix/soft prompt libraries; adapter routing.

- Assumptions: Enough labeled samples; careful adapter management to avoid parameter creep; prompt hygiene and consistency.

- Federated class-incremental collaboration (healthcare networks, banking consortia)

- Workflow: Federated learning with PLoRA: clients learn local class prototypes and send only trainable parameters and prototype summaries to a central server.

- Tools: PLoRA; ViT-based backbones; secure aggregation protocols.

- Assumptions: Data governance and privacy guarantees; network bandwidth; higher training and coordination cost.

- Code-AI assistants that adapt to new APIs (software engineering, DevOps)

- Workflow: CL with replay/regularization for out-of-distribution (OOD) API usage streams; periodic updates with exemplar storage or synthetic generation.

- Tools: GPT-2/RoBERTa-based code models; experience replay; generative replay; curated GitHub data streams.

- Assumptions: Licensed data use; reproducible pipelines; limited generalizability beyond the curated in-distribution (ID) and OOD setup.

- Multimodal assistants in visual workflows (manufacturing QA, retail cataloging, design)

- Workflow: LLaVA/MiniGPT-4 style MLLMs with visual instruction tuning; ZiRa for domain adaptations that preserve base capabilities; moderate LoRA finetuning to reduce hallucinations.

- Tools: LLaVA, MiniGPT-4, BLIP/Q-Former; ZiRa; LoRA; cross-modal connectors.

- Assumptions: High-quality image-text instruction datasets; known risk of hallucinations; increased model size with adaptation branches.

- Low-resource language support via cross-lingual prompting (education, NGOs, public services)

- Workflow: X-ICL to align labels across languages; cross-lingual retrieval for in-context exemplars; prompt format standardization.

- Tools: BLOOM/XGLM; multilingual retrieval; label aligners in prompts.

- Assumptions: Availability of labeled source exemplars; prompting alone does little to upgrade the model's inherent capabilities; scope restricted to covered languages and tasks.

- CF monitoring and finetuning QA in MLOps (industry labs, academia)

- Workflow: EMT-like evaluation for multimodal/classification tasks; track performance across task sequences; detect overfitting and hallucinations during progressive finetuning.

- Tools: EMT frameworks, standard benchmarks (CIFAR/ImageNet), truthfulness/toxicity/bias tests.

- Assumptions: Representative benchmarks; dedicated evaluation compute; continuous reporting.

- Incremental object detection domain adaptation (robotics, smart cities, surveillance)

- Workflow: IVLOD with ZiRa—add reparameterizable branches to preserve pretraining knowledge while adapting to new domains; penalize interference via ZiL.

- Tools: Swin Transformer backbones; dual-branch adapters; loss regularization.

- Assumptions: Increased model size; domain-specific datasets; integration with existing detection pipelines.

- Sequential task operations using Progressive Prompts (BPO, contact centers, help desks)

- Workflow: Maintain soft prompts per task; concatenate progressively for transfer without touching base model; manage prompt lifecycle.

- Tools: Prompt registries; BERT/T5 backbones; prompt orchestration.

- Assumptions: Memory footprint grows with tasks; need for prompt governance; occasional revalidation after updates.

- Instruction-based continual learning for enterprise task libraries (knowledge management)

- Workflow: InsCL calculates task similarity (Wasserstein Distance) to select replay sets; balances plasticity and stability across evolving instruction sets.

- Tools: Instruction embedding pipelines; replay selection; InsInfo metrics.

- Assumptions: High-quality, well-formatted instructions; vague instructions degrade the similarity estimates; replay storage policies.

- RAG as a safety layer for daily assistants (productivity, research)

- Workflow: Retrieval-augmented grounding to mitigate outdated knowledge and hallucinations; regular corpus refresh; citation tracking.

- Tools: Vector DBs; document parsing; citation/traceability modules.

- Assumptions: Timely data ingestion; curation of sources; context length constraints.
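The CF monitoring item above calls for tracking performance across task sequences. One common way to summarize such a log is an average-forgetting score: for each earlier task, the drop from its best earlier accuracy to its accuracy after the final update. The sketch below assumes a hypothetical triangular accuracy log and made-up numbers; it is not the EMT procedure from the paper.

```python
def forgetting_per_task(acc):
    """Per-task forgetting from a triangular accuracy log.

    acc[i][j] is the accuracy on task j measured after training through
    task i, so row i holds i + 1 entries. The last task is excluded because
    it has no earlier measurement to compare against.
    """
    n = len(acc)
    final = acc[-1]
    drops = []
    for j in range(n - 1):
        best_earlier = max(acc[i][j] for i in range(j, n - 1))
        drops.append(best_earlier - final[j])
    return drops


def average_forgetting(acc):
    """Mean of the per-task drops; 0.0 when fewer than two tasks were logged."""
    drops = forgetting_per_task(acc)
    return sum(drops) / len(drops) if drops else 0.0


# Hypothetical log for three sequential finetuning stages.
accuracy_log = [
    [0.90],
    [0.80, 0.85],
    [0.70, 0.80, 0.88],
]
drops = forgetting_per_task(accuracy_log)
score = average_forgetting(accuracy_log)
print(drops, score)
```

A monitoring job could recompute this score after every scheduled update and raise an alert when any per-task drop exceeds a chosen tolerance.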

Long-Term Applications

These require advances in algorithms, scalable infrastructure, governance, or new evaluation standards; many hinge on overcoming CF and enabling real-time, on-device, privacy-preserving incremental learning.

- True real-time, on-the-fly core model updating (software, robotics, personalized assistants)

- Vision: Continuous, low-latency updates to core weights without CF; instantaneous adaptation to user, environment, or task drift.

- Dependencies: Novel CF-resistant learning rules; efficient memory architectures; robust safety guards; hardware acceleration.

- Scalable dynamic architectures and MoE that grow without linear cost (cloud AI providers)

- Vision: Task-specific experts added or pruned online; efficient routing and shared representation preservation.

- Dependencies: Cost-aware expansion; online gating; lifelong capacity planning; policies for expert lifecycle.

- Conflict-aware, large-scale knowledge editing (publishing, law, enterprise data stewardship)

- Vision: Batch and streaming edits with auto-conflict detection, audit trails, and rollback; enterprise “model change management.”

- Dependencies: Reliable localization of knowledge within networks; edit impact prediction; standardized edit logs and governance.

- Privacy-preserving continuous alignment beyond expensive RLHF (policy, healthcare, finance)

- Vision: Lightweight alignment from implicit signals (user feedback, usage telemetry) with provable privacy and fairness guarantees.

- Dependencies: Differential privacy; robust preference modeling; regulator-approved protocols; human oversight pipelines.

- Edge/on-device lifelong multimodal assistants (IoT, wearables, vehicles)

- Vision: Federated IL with PLoRA/adapters; local prototypes and sparse patch updates; low energy footprint.

- Dependencies: Efficient edge hardware; intermittent connectivity; secure aggregation; model patch management.

- Sector-wide CF benchmarks and monitoring standards (academia, standards bodies, regulators)

- Vision: Common IL/CF dashboards and test suites across text, code, vision, and multimodal tasks; longitudinal performance tracking.

- Dependencies: Community datasets; agreed metrics for CF, hallucination, reliability; reproducible test harnesses (EMT-like).

- Automated concept drift detection with safe, adaptive rollbacks (finance risk, cybersecurity)

- Vision: Continuous drift monitoring; guarded adaptation; automated rollback if reliability drops; provenance-aware updates.

- Dependencies: Streaming anomaly detection; policy rules; audit trails; human-in-the-loop signoff.

- Low-hallucination multimodal instruction tuning at scale (healthcare imaging, manufacturing)

- Vision: Robust cross-modal alignment with minimal finetuning; compositional reasoning; multi-image contexts.

- Dependencies: Large, high-quality multimodal instruction datasets; improved connectors; scalable context windows.

- Human-in-the-loop governance for incremental learning (enterprise AI safety, policy)

- Vision: Structured review boards for IL changes; bias/drift assessments; impact statements for model updates.

- Dependencies: Organizational processes; tooling for explorable diffs; traceable performance changes; training for reviewers.

- Patch-based model tailoring integrated into MLOps (software tooling)

- Vision: “Model Tailor” workflows to extract critical parameter subsets for new tasks; patch registries; automatic patch decoration for backward compatibility.

- Dependencies: Loss- and parameter-change analytics; inverse Hessian approximations; versioned patch management.

- Registries and marketplaces for adapters/prompts/experts (software ecosystem, education)

- Vision: Shareable, auditable parameter-efficient modules and prompt packs; discovery and governance of incremental components.

- Dependencies: Metadata standards; security scanning; license compliance; compatibility testing across base models.
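The drift-detection item above pairs guarded adaptation with automated rollback when reliability drops. A minimal sketch of that control loop follows; the `GuardedRegistry` class, its `max_drop` threshold, and the scores are all hypothetical, and a production system would add the audit trails and human signoff listed among the dependencies.

```python
from dataclasses import dataclass, field


@dataclass
class GuardedRegistry:
    """Tracks accepted model versions and rolls back when reliability drops."""
    max_drop: float = 0.02                       # tolerated score decrease
    history: list = field(default_factory=list)  # accepted (name, score) pairs

    @property
    def active(self):
        """Currently deployed (name, score) pair, or None if nothing is deployed."""
        return self.history[-1] if self.history else None

    def propose(self, name, eval_score):
        """Accept a candidate only if it stays within max_drop of the active version."""
        if self.history and eval_score < self.history[-1][1] - self.max_drop:
            return False
        self.history.append((name, eval_score))
        return True

    def check_live(self, live_score):
        """Roll back one version if live reliability falls too far below the recorded score."""
        if len(self.history) > 1 and live_score < self.history[-1][1] - self.max_drop:
            self.history.pop()
            return True
        return False


registry = GuardedRegistry(max_drop=0.02)
accepted_v1 = registry.propose("v1", 0.90)  # first version is always accepted
accepted_v2 = registry.propose("v2", 0.85)  # rejected: below 0.90 - 0.02
accepted_v3 = registry.propose("v3", 0.91)  # accepted
rolled_back = registry.check_live(0.80)     # live score collapsed, so v3 is dropped
print(registry.active)
```

Keeping the previously accepted version in the registry is what makes the rollback cheap; the same pattern would apply to adapter or patch versions rather than whole models.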
