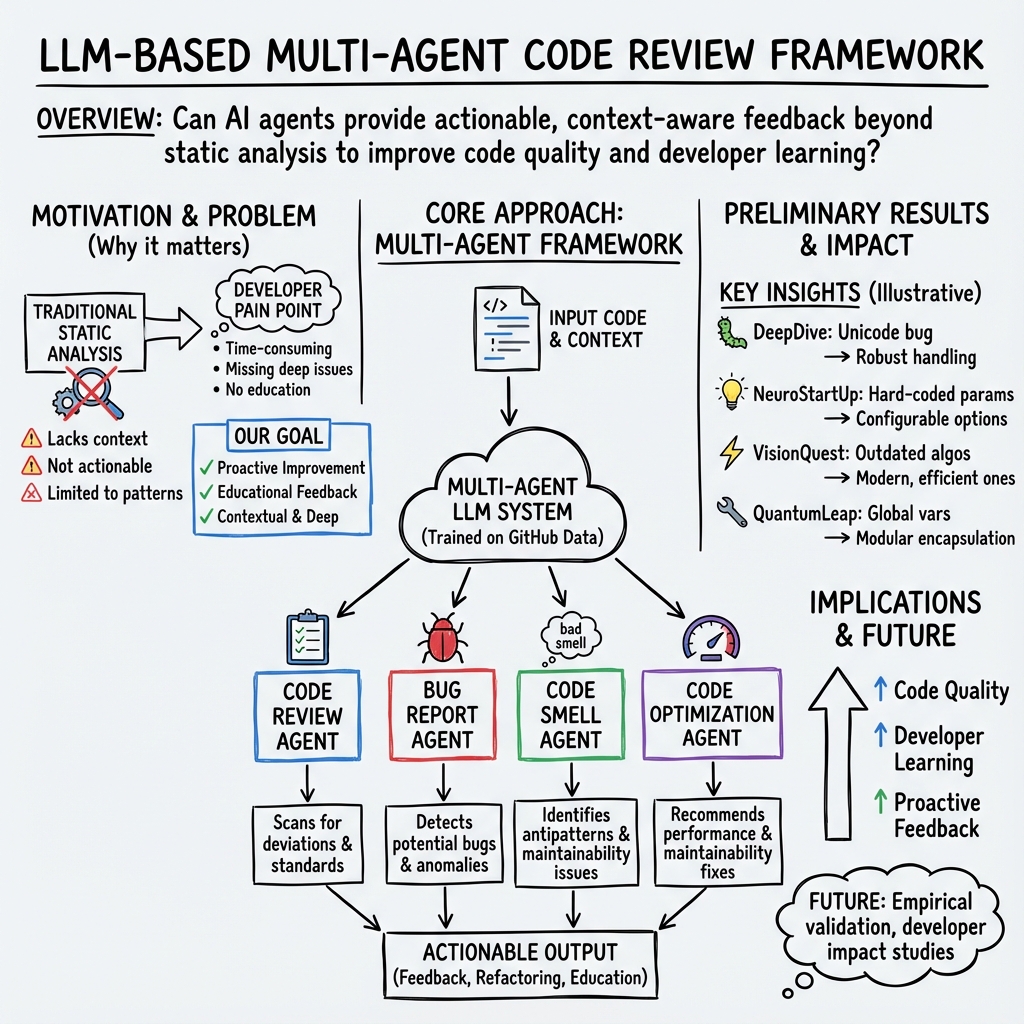

- The paper presents a novel LLM-based model for automating code reviews that identifies bugs, code smells, and refactoring opportunities.

- The methodology uses specialized agents trained on diverse GitHub repositories to deliver context-aware feedback and actionable recommendations.

- Results across ten AI projects show significant improvements in code quality and processing efficiency, highlighting the potential for software optimization and developer education.

AI-powered Code Review with LLMs: Early Results

This paper presents a novel approach that uses LLMs to conduct code reviews, with the explicit aim of improving software quality and supporting developer education. The proposed model is trained on extensive datasets, including code repositories, bug reports, and best-practices documentation. This essay explores the methods, outcomes, and implications of the research.

Introduction

The integration of LLMs into software development is a significant step toward automating code-related processes. The paper explores using an LLM-based model to review software code autonomously: the model identifies code smells, potential bugs, and deviations from coding standards, and it suggests actionable improvements that contribute to both code optimization and the educational growth of developers.

Methodology

The methodology involves developing an LLM-based AI agent model composed of multiple agents with distinct specializations: Code Review Agent, Bug Report Agent, Code Smell Agent, and Code Optimization Agent. Each agent is fine-tuned on diverse GitHub repositories accessed via GitHub's REST API. The agents are trained to handle the following tasks:

- Code Review Agent: Focuses on identifying code issues and suggesting amendments.

- Bug Report Agent: Specializes in detecting anomalies that are indicative of bugs.

- Code Smell Agent: Identifies anti-patterns and proposes refactoring suggestions.

- Code Optimization Agent: Delivers recommendations and automatic enhancements for efficient code execution.

Training these agents on real-world projects equips them to provide precise, context-aware feedback.
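The division of labor among the agents described above can be illustrated with a minimal sketch. It assumes each agent is a function from source text to a list of findings, with simple string heuristics standing in for the fine-tuned LLM calls. The names `Finding`, `AGENTS`, `review`, and both agent functions are hypothetical and do not come from the paper.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Finding:
    agent: str    # name of the agent that produced the finding
    line: int     # 1-based line number in the reviewed source
    message: str  # human-readable feedback for the developer


# Hypothetical stand-in for a fine-tuned LLM call: source text in, findings out.
Agent = Callable[[str], List[Finding]]


def code_smell_agent(source: str) -> List[Finding]:
    """Toy heuristic (assumption): flag very long lines as a readability smell."""
    return [
        Finding("code_smell", i, "line exceeds 100 characters")
        for i, text in enumerate(source.splitlines(), start=1)
        if len(text) > 100
    ]


def bug_report_agent(source: str) -> List[Finding]:
    """Toy heuristic (assumption): flag bare 'except:' clauses that can hide bugs."""
    return [
        Finding("bug_report", i, "bare except clause may swallow errors")
        for i, text in enumerate(source.splitlines(), start=1)
        if text.strip() == "except:"
    ]


# Registry of specialized agents; a fuller system would add review and optimization agents.
AGENTS: Dict[str, Agent] = {
    "code_smell": code_smell_agent,
    "bug_report": bug_report_agent,
}


def review(source: str) -> List[Finding]:
    """Run every registered agent and merge the findings, ordered by line number."""
    findings = [f for agent in AGENTS.values() for f in agent(source)]
    return sorted(findings, key=lambda f: (f.line, f.agent))


if __name__ == "__main__":
    sample = "try:\n    run()\nexcept:\n    pass\n"
    for finding in review(sample):
        print(f"{finding.agent}:{finding.line}: {finding.message}")
```

Keeping each specialization behind the same function signature means a heuristic could be swapped for a model-backed agent without changing `review`.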

Results

Empirical analyses on ten varied AI projects from GitHub demonstrated the model's efficacy. Noteworthy outcomes include:

- DeepDive: Detected a unicode parsing flaw, suggesting improved handling mechanisms.

- NeuroStartUp: Addressed hard-coded parameters, recommending that they be abstracted into configuration options.

- VisionQuest: Enhanced processing speed by adopting more efficient algorithms.

- LinguaKit: Improved throughput by employing parallel processing.

- AIFriendly: Implemented error reporting systems for better user guidance.

- QuantumLeap: Suggested refactoring to improve scalability and readability.

These insights indicate the model's capability to deliver deep, actionable analysis and recommendations, fostering enhanced software quality.
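To make one of these recommendations concrete, the sketch below shows what replacing hard-coded parameters with configuration options might look like. It is an illustration only, not code from NeuroStartUp or from the paper. The names `TrainingConfig`, `from_env`, `steps_per_epoch`, and the `LEARNING_RATE` and `BATCH_SIZE` variables are all assumptions.

```python
import math
import os
from dataclasses import dataclass
from typing import Mapping, Optional

# Defaults that would previously have been scattered through the code as literals.
DEFAULT_LEARNING_RATE = 0.001
DEFAULT_BATCH_SIZE = 32


@dataclass(frozen=True)
class TrainingConfig:
    """Hypothetical configuration object gathering tunable parameters in one place."""
    learning_rate: float = DEFAULT_LEARNING_RATE
    batch_size: int = DEFAULT_BATCH_SIZE

    @classmethod
    def from_env(cls, env: Optional[Mapping[str, str]] = None) -> "TrainingConfig":
        """Build a config from environment-style variables, falling back to defaults."""
        env = os.environ if env is None else env
        return cls(
            learning_rate=float(env.get("LEARNING_RATE", DEFAULT_LEARNING_RATE)),
            batch_size=int(env.get("BATCH_SIZE", DEFAULT_BATCH_SIZE)),
        )


def steps_per_epoch(num_samples: int, config: TrainingConfig) -> int:
    """Number of batches needed to cover the dataset once, rounding up."""
    return math.ceil(num_samples / config.batch_size)


if __name__ == "__main__":
    config = TrainingConfig.from_env({"BATCH_SIZE": "64"})
    print(config, steps_per_epoch(1000, config))
```

Passing the config object explicitly, rather than reading constants inside each function, lets the values be changed per run or per test without editing the code.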

Future Work

Future research will focus on evaluating the accuracy of LLM-generated documentation against manually written documentation. Empirical studies will gauge how effective the model's output is when shown to developers during peer reviews, with the goal of understanding how the generated insights affect software quality and developer education.

Conclusions

The paper establishes the potential of LLM-based AI agents to significantly supplement traditional code review processes. By pinpointing specific issues and offering contextual suggestions, these agents serve as a dual-purpose tool that enhances software quality while fostering developer growth. The study suggests a promising trajectory for future AI-driven innovation in software development, aiming for more efficient, quality-driven, and automated software engineering processes.